Single-Cell RNA Sequencing Analysis: A Comprehensive Guide from Fundamentals to Clinical Applications

Abstract

This article provides a comprehensive overview of single-cell RNA sequencing (scRNA-seq) analysis, addressing the complete workflow from experimental design to clinical translation. It covers foundational concepts and the technological evolution that enabled high-resolution cellular profiling, detailed methodological pipelines for data processing and interpretation, best practices for troubleshooting and optimizing study designs, and validation approaches for translating discoveries into biomedical applications. Tailored for researchers, scientists, and drug development professionals, this guide synthesizes current best practices and emerging trends to empower robust scRNA-seq implementation across basic research and therapeutic development.

Understanding scRNA-seq: Core Principles and Revolutionary Potential

The transition from bulk RNA sequencing (RNA-seq) to single-cell RNA sequencing (scRNA-seq) represents a paradigm shift in genomic science, moving from population-level averages to single-cell resolution. This technological advancement has fundamentally transformed our understanding of cellular heterogeneity, revealing complex cellular ecosystems within tissues that were previously obscured. Framed within broader single-cell research, this whitepaper examines the technical foundations, methodological considerations, and practical applications of scRNA-seq, with particular relevance for researchers, scientists, and drug development professionals seeking to leverage these technologies for biological discovery and therapeutic development.

Traditional bulk RNA sequencing has provided invaluable insights into gene expression patterns for decades, but with an inherent limitation: it measures the average expression profile across thousands to millions of cells, effectively masking cellular diversity [1]. The emergence of scRNA-seq technologies has enabled the characterization of gene expression at the individual cell level, revealing the remarkable heterogeneity within seemingly homogeneous cell populations and opening new frontiers in understanding development, disease mechanisms, and therapeutic responses [2].

This resolution shift has been particularly transformative for studying complex biological systems where cellular diversity is fundamental to function—such as the nervous system, immune system, and tumor microenvironments. Where bulk RNA-seq could identify differentially expressed genes between healthy and diseased tissue, scRNA-seq can pinpoint which specific cell types drive these differences, identify rare but functionally critical populations, and reveal continuous transitional states between cell types [1] [2].

Technological Foundations: Comparing Bulk and Single-Cell RNA Sequencing

Fundamental Methodological Differences

The core distinction between these approaches lies in their initial sample processing. In bulk RNA-seq, the biological sample is homogenized, and RNA is extracted from the entire cell population, producing a composite expression profile [1] [2]. In contrast, scRNA-seq begins with physically separating individual cells before RNA capture, barcoding, and sequencing, preserving cell-of-origin information [2].

This methodological divergence creates a trade-off: bulk RNA-seq provides greater sensitivity for detecting low-abundance transcripts through deep sequencing of the pooled RNA, while scRNA-seq sacrifices some sensitivity to gain critical information about cell-to-cell variation [1]. The following table summarizes the key technical and practical differences between these approaches:

Table 1: Comprehensive Comparison of Bulk vs. Single-Cell RNA Sequencing

| Feature | Bulk RNA Sequencing | Single-Cell RNA Sequencing |
|---|---|---|
| Resolution | Population average [1] | Individual cell level [1] |
| Cost per Sample | Lower (~$300/sample) [1] | Higher (~$500-$2000/sample) [1] |
| Cell Heterogeneity Detection | Limited [1] | High [1] |
| Rare Cell Type Detection | Limited [1] | Possible [1] |
| Gene Detection Sensitivity | Higher (median 13,378 genes) [1] | Lower (median 3,361 genes) [1] |
| Sample Input Requirement | Higher [1] | Lower (can work with picograms of RNA) [1] |
| Data Complexity | Lower, simpler analysis [1] | Higher, requires specialized computational methods [1] |
| Splicing Analysis | More comprehensive [1] | Limited [1] |
| Ideal Application | Homogeneous samples, differential expression [2] | Heterogeneous tissues, cell type discovery [2] |

Experimental Workflows and Technical Considerations

The scRNA-seq workflow incorporates several critical steps not present in bulk protocols. After tissue dissociation and single-cell suspension preparation, individual cells are partitioned into nanoliter-scale reactions using microfluidic devices [2]. Within these partitions, cells are lysed, and mRNA transcripts are captured and barcoded with cell-specific identifiers (cellular barcodes) and molecular identifiers (UMIs) that enable attribution of sequencing reads to their original cell and molecule, respectively [3].
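As a minimal sketch of how this attribution works, the snippet below splits a barcode read into its cell barcode and UMI by fixed offsets. The 16-nt barcode and 12-nt UMI lengths, the function name `split_barcode_read`, and the example sequence are assumptions chosen for illustration; real layouts depend on the platform and chemistry version.

```python
from typing import NamedTuple

# Assumed read layout, for illustration only: cell barcode first, then UMI.
CELL_BARCODE_LEN = 16
UMI_LEN = 12


class ReadTag(NamedTuple):
    cell_barcode: str
    umi: str


def split_barcode_read(seq: str) -> ReadTag:
    """Split a barcode read into (cell barcode, UMI) using fixed offsets."""
    needed = CELL_BARCODE_LEN + UMI_LEN
    if len(seq) < needed:
        raise ValueError(f"read too short: {len(seq)} < {needed}")
    return ReadTag(seq[:CELL_BARCODE_LEN], seq[CELL_BARCODE_LEN:needed])


# Hypothetical read: 16-nt barcode + 12-nt UMI + trailing poly(T) stretch.
tag = split_barcode_read("ACGTACGTACGTACGT" + "TTGCAAGGCCTA" + "TTTTTT")
print(tag.cell_barcode, tag.umi)
```

Every read carrying the same cell barcode is assigned to the same cell, and reads that also share a UMI (and map to the same gene) are treated as copies of one original molecule.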

Diagram: scRNA-seq Experimental Workflow, illustrating the core experimental steps and highlighting key differences from bulk approaches.

This instrument-enabled partitioning is a critical advancement, with platforms like the 10x Genomics Chromium system automating this process to ensure high reproducibility and reduced technical variation [2]. The subsequent library preparation and sequencing steps share similarities with bulk approaches but must accommodate the unique barcoding structure and amplification requirements of single-cell protocols.

Single-Cell RNA Sequencing Analysis Framework

Quality Control and Preprocessing

The initial analysis of scRNA-seq data requires rigorous quality control to distinguish high-quality cells from artifacts. Three key metrics guide this process: the number of counts per barcode (count depth), the number of genes detected per barcode, and the fraction of counts from mitochondrial genes [3] [4]. Cells with low counts, few detected genes, and high mitochondrial content typically indicate broken membranes or dying cells and should be filtered out [4].

However, these thresholds must be applied judiciously, as they can reflect genuine biological variation rather than technical artifacts. For instance, metabolically active cells may naturally exhibit higher mitochondrial content, and small cell types (like platelets) may have lower RNA content [4]. Automated thresholding approaches using median absolute deviations (MAD) provide a robust statistical framework for this filtering, typically flagging as outliers cells that differ by more than 5 MADs from the median [4].
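The MAD rule described above can be sketched in a few lines. This is an illustrative implementation using only the Python standard library; the function name `mad_outliers` and the example mitochondrial fractions are hypothetical, and in practice the same rule is often applied to log-transformed counts and gene numbers as well.

```python
from statistics import median


def mad_outliers(values, n_mads=5.0):
    """Flag values lying more than n_mads median absolute deviations
    (MADs) from the median. Returns one boolean per input value."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    # If mad is 0 (most values identical), any deviating value is flagged.
    return [abs(v - med) > n_mads * mad for v in values]


# Hypothetical per-cell mitochondrial count fractions; the last cell is suspect.
mito_fraction = [0.02, 0.03, 0.04, 0.03, 0.05, 0.02, 0.04, 0.60]
flags = mad_outliers(mito_fraction, n_mads=5.0)
kept = [f for f, is_outlier in zip(mito_fraction, flags) if not is_outlier]
print(kept)
```

Because the threshold is derived from the data rather than fixed in advance, it adapts to each dataset, but flagged cells should still be reviewed against the biological caveats noted above before removal.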

Table 2: Essential Research Reagent Solutions for scRNA-seq

| Reagent/Category | Function | Technical Considerations |
|---|---|---|
| Cellular Barcodes | Unique oligonucleotides that label all mRNAs from individual cells | Enable multiplexing and cell-of-origin identification [3] |
| Unique Molecular Identifiers (UMIs) | Random nucleotide sequences that tag individual mRNA molecules | Distinguish biological duplicates from PCR amplification artifacts [3] |
| Cell Partitioning Systems | Microfluidic devices that isolate individual cells | Platforms like 10x Genomics Chromium enable high-throughput partitioning [2] |
| Viability Dyes | Identify and exclude dead cells | Critical for assessing suspension quality pre-sequencing |
| Enzymatic Mixes | Cell lysis and reverse transcription | Must work efficiently in partition volumes |
| Library Preparation Kits | Prepare barcoded cDNA for sequencing | Optimized for low-input single-cell libraries |

Data Analysis Workflow

After quality control, scRNA-seq data undergoes multiple preprocessing steps, including normalization to account for varying count depths between cells, feature selection to identify highly variable genes, and dimensionality reduction to visualize and explore the high-dimensional data [3]. Principal component analysis (PCA) is typically followed by nonlinear methods like t-distributed stochastic neighbor embedding (t-SNE) or uniform manifold approximation and projection (UMAP) for visualization [3].
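To make the first two preprocessing steps concrete, here is a minimal standard-library sketch of depth normalization with a log transform, followed by a simple variance-based feature selection. The function names, the `target_sum` of 10,000, and the toy matrix are assumptions for illustration. Ranking genes by raw variance is a deliberate simplification of how highly variable genes are usually chosen, and dimensionality reduction is not shown.

```python
import math
from statistics import pvariance


def normalize_log1p(counts, target_sum=10_000):
    """Scale each cell (row) to target_sum total counts, then apply log(1 + x)."""
    normalized = []
    for cell in counts:
        total = sum(cell)
        if total == 0:
            raise ValueError("cell with zero counts; filter it during QC first")
        normalized.append([math.log1p(c * target_sum / total) for c in cell])
    return normalized


def top_variable_genes(matrix, n_top):
    """Return the column indices of the n_top genes with the largest variance."""
    variances = [pvariance(column) for column in zip(*matrix)]
    ranked = sorted(range(len(variances)), key=lambda g: variances[g], reverse=True)
    return ranked[:n_top]


# Toy cells-by-genes count matrix (hypothetical values).
counts = [
    [10, 0, 5],
    [20, 0, 10],  # same composition as the first cell, sequenced twice as deep
    [0, 30, 15],
]
norm = normalize_log1p(counts)
print(top_variable_genes(norm, n_top=2))
```

After normalization the first two cells have identical profiles despite their different count depths, which is the effect the normalization step is meant to achieve.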

Clustering analysis then groups cells based on transcriptional similarity, enabling the identification of distinct cell types and states [3]. Differential expression analysis between clusters reveals marker genes that define each population, facilitating cell type annotation through comparison with existing databases [5].
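As a rough illustration of marker detection, the sketch below ranks genes by the difference in mean log-normalized expression between one cluster and all other cells. The function name, cluster labels, and expression values are hypothetical. A mean difference alone is not a statistical test, and real analyses typically add one (for example, a rank-based test) together with multiple-testing correction.

```python
from statistics import mean


def marker_log_fold_changes(expr, labels, cluster):
    """For each gene, mean log-expression inside `cluster` minus the mean
    log-expression in all remaining cells."""
    inside = [row for row, lab in zip(expr, labels) if lab == cluster]
    outside = [row for row, lab in zip(expr, labels) if lab != cluster]
    if not inside or not outside:
        raise ValueError("need at least one cell inside and outside the cluster")
    return [
        mean(gene_in) - mean(gene_out)
        for gene_in, gene_out in zip(zip(*inside), zip(*outside))
    ]


# Toy log-normalized matrix: 4 cells x 2 genes, with hypothetical cluster labels.
expr = [[2.0, 0.0], [2.2, 0.1], [0.1, 1.9], [0.0, 2.1]]
labels = ["A", "A", "B", "B"]
lfc = marker_log_fold_changes(expr, labels, cluster="A")
ranked = sorted(range(len(lfc)), key=lambda g: lfc[g], reverse=True)
print(ranked, lfc)
```

Genes at the top of the ranking are candidate markers for the cluster and can then be compared against reference marker lists for annotation.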

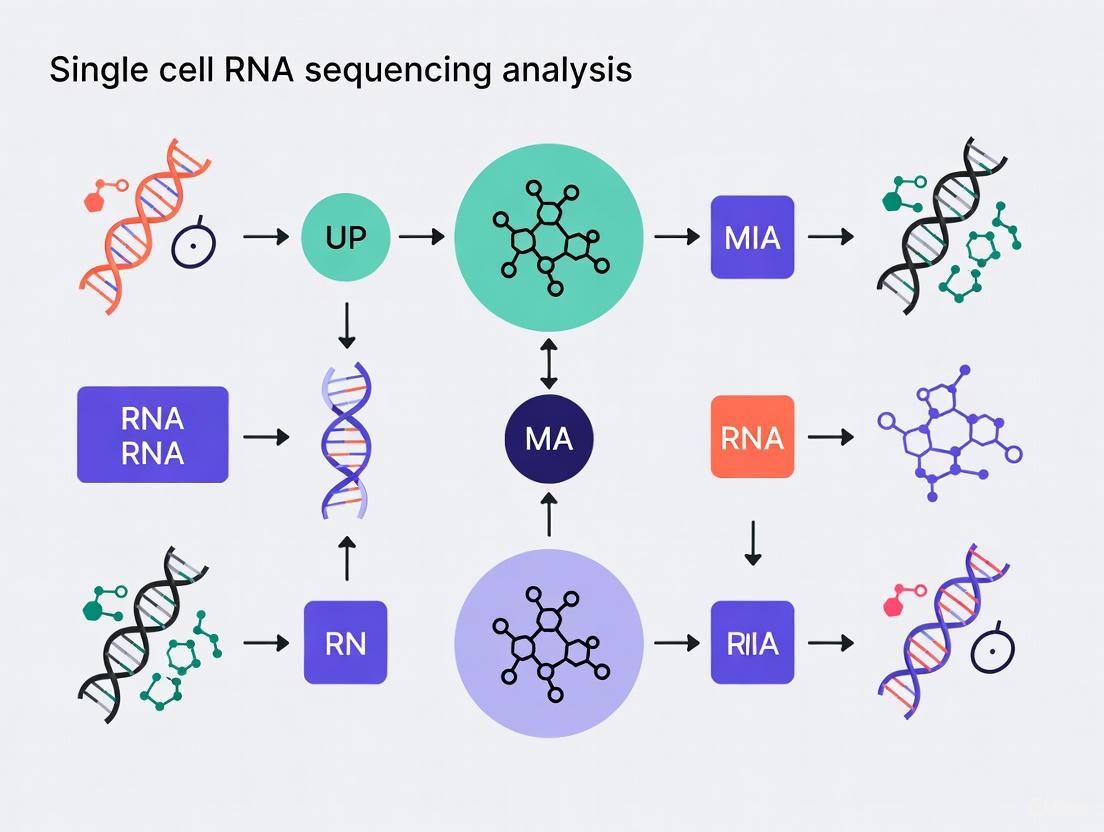

Diagram: scRNA-seq Computational Workflow, outlining the standard computational analysis steps for scRNA-seq data.

Applications in Drug Discovery and Development

The pharmaceutical industry has increasingly adopted scRNA-seq technologies to address key challenges in the drug development pipeline. By providing unprecedented resolution into cellular heterogeneity and disease mechanisms, scRNA-seq enables more precise target identification, better preclinical model selection, and enhanced biomarker discovery [6] [7].

Target Identification and Prioritization

ScRNA-seq enables the identification of novel therapeutic targets by resolving cell type-specific disease mechanisms. By comparing healthy and diseased tissues at single-cell resolution, researchers can pinpoint dysregulated genes and pathways in specific cell populations, leading to more targeted therapeutic interventions with potentially fewer off-target effects [6] [7]. For example, in oncology, scRNA-seq has revealed distinct cancer subclones within tumors, identifying potential targets for precision medicine approaches [1].

Functional Genomics Screens

The integration of scRNA-seq with CRISPR-based screening technologies (Perturb-seq) represents a powerful approach for target validation and mechanism of action studies [6]. By introducing genetic perturbations and measuring their transcriptomic consequences at single-cell resolution, researchers can systematically map gene regulatory networks and identify key drivers of disease phenotypes [6] [8]. This approach provides direct functional evidence for target involvement in disease-relevant pathways.

Biomarker Discovery and Patient Stratification

ScRNA-seq facilitates the identification of cell type-specific biomarkers for patient stratification and treatment response monitoring [6] [7]. By characterizing the cellular composition of patient samples and identifying rare cell populations associated with disease progression or treatment resistance, clinicians can develop more precise diagnostic and prognostic tools [6]. For instance, in cancer immunotherapy, scRNA-seq has identified T cell states predictive of response to checkpoint inhibitors [1].

The rise of single-cell resolution technologies represents a fundamental transformation in transcriptomics, enabling researchers to move beyond population averages and explore the full complexity of cellular ecosystems. While bulk RNA-seq remains valuable for hypothesis generation and studies of homogeneous populations, scRNA-seq provides unprecedented insights into cellular heterogeneity, rare cell populations, and dynamic biological processes.

As both technologies continue to evolve, we are witnessing not a replacement of one method by another, but rather a strategic integration where each approach addresses complementary questions. The future of transcriptomic research lies in selecting the appropriate tool for the biological question at hand—whether that requires the deep sensitivity of bulk sequencing or the resolution of single-cell analysis—and in combining these approaches to gain both macroscopic and microscopic views of biological systems.

For drug discovery professionals and researchers, understanding the capabilities, limitations, and appropriate applications of these technologies is essential for designing impactful studies that advance our understanding of biology and disease mechanisms. As scRNA-seq technologies become more accessible and cost-effective, their integration into standard research pipelines will continue to drive discoveries across biological and medical disciplines.

The development of single-cell RNA sequencing (scRNA-seq) represents a paradigm shift in biological research, transitioning scientific inquiry from population-averaged transcriptomic measurements to high-resolution analysis of individual cells. This technological revolution has revealed the profound cellular heterogeneity inherent in biological systems—from embryonic development to disease pathogenesis—enabling researchers to discover novel cell types, characterize rare cell populations, and decipher developmental trajectories with unprecedented precision [9]. While bulk RNA sequencing provides an average gene expression profile across thousands of cells, scRNA-seq captures the unique transcriptional identity of each individual cell, exposing the complex cellular diversity that underlies biological function and dysfunction [10].

The fundamental breakthrough came in 2009 when Tang et al. published the first scRNA-seq method, sequencing the transcriptome of single blastomeres and oocytes [9]. This conceptual and technical achievement established the foundational approach that would eventually scale to analyze millions of cells in a single experiment. The technology has since evolved through iterative improvements in cell capture, barcoding strategies, molecular biology, and sequencing platforms, leading to the sophisticated high-throughput systems available today [9]. This whitepaper traces the key technological breakthroughs along this developmental trajectory, providing researchers with a comprehensive technical guide to the evolution of scRNA-seq platforms and their applications in biomedical research.

The Foundational Breakthrough: Tang et al. 2009

The pioneering work by Tang et al. in 2009 established the fundamental methodology for single-cell transcriptome analysis, demonstrating for the first time that the complete mRNA complement of an individual cell could be amplified and sequenced [9]. Their approach overcame the critical challenge of working with the minimal RNA quantities present in a single cell (approximately 10⁵–10⁶ mRNA molecules in vertebrate cells) through sophisticated amplification strategies [10].

Core Methodology and Technical Approach

The original protocol employed poly(T) priming to selectively reverse-transcribe mRNA molecules, then added a poly(A) tail to the first-strand cDNA so that a second anchored poly(T) primer could prime second-strand synthesis, leaving universal anchor sequences at both ends. This design enabled cDNA amplification via polymerase chain reaction (PCR), increasing the nucleic acid material to quantities sufficient for sequencing library construction [9]. While revolutionary, this initial method was low-throughput, technically demanding, and limited in sensitivity, analyzing only a few cells per experiment. Nevertheless, it laid the groundwork for the ideas that would drive the field forward: template switching for adapter incorporation, cell-specific barcodes to label transcripts from individual cells, and unique molecular identifiers (UMIs) to quantitatively track and count individual mRNA molecules while controlling for amplification biases [9]. These features were introduced by later methods rather than by the 2009 protocol itself.

Evolution of High-Throughput scRNA-seq Platforms

The decade following Tang's breakthrough witnessed rapid innovation focused on scaling throughput from handfuls of cells to hundreds of thousands of cells per experiment. This scaling was achieved through parallel developments in cell capture technologies, molecular barcoding strategies, and microfluidic implementations.

Key Technological Developments

Table 1: Evolution of High-Throughput scRNA-seq Platform Technologies

| Time Period | Key Technologies | Throughput Range | Primary Innovations |
|---|---|---|---|
| 2009 (Foundational) | Single-cell RT-PCR, Tang et al. method | 1-10 cells | First full-transcript scRNA-seq; poly(T) priming; poly(A) tailing with PCR amplification |
| 2014-2016 (Early High-Throughput) | Drop-seq, inDrop-seq, CEL-Seq2 | 1,000-10,000 cells | Droplet microfluidics; combinatorial barcoding; UMIs for quantification |
| 2017-Present (Commercial Platforms) | 10× Chromium, BD Rhapsody, Smart-seq3 | 10,000-1,000,000+ cells | Commercial standardization; integrated workflows; optimized reagents |
| 2020-Present (Advanced Applications) | SCAN-seq2, Single-Nucleus RNA-seq | 1,000-100,000 cells | Third-generation sequencing; full-length isoforms; difficult tissues |

Platform Architecture: Droplet vs. Microwell-Based Technologies

Modern high-throughput scRNA-seq platforms primarily utilize two distinct architectural approaches for partitioning individual cells:

Droplet-Based Systems (10× Chromium)

The 10× Chromium system employs microfluidic chips to co-encapsulate individual cells with barcoded beads in nanoliter-scale water-in-oil droplets. Each bead contains millions of oligonucleotides with identical cell barcodes but diverse UMIs. Within each droplet, cell lysis occurs, and mRNA molecules bind to the bead-conjugated oligonucleotides via poly(T) tails, labeling each transcript with its cell of origin [11] [9].

Microwell-Based Systems (BD Rhapsody)

The BD Rhapsody system uses microwell arrays where cells are randomly deposited by gravity into picoliter-size wells. Like droplet-based systems, it employs barcoded beads for mRNA capture but achieves partitioning through physical confinement rather than fluidic encapsulation [11]. Both systems ultimately rely on barcoded reverse transcription, producing cDNA molecules that retain information about their cellular origin. This enables pooled sequencing of thousands of cells while retaining the ability to attribute sequences to individual cells during computational analysis [9].

Figure 1: Workflow comparison of droplet-based versus microwell-based scRNA-seq platforms. Both approaches begin with tissue dissociation and progress through single-cell suspension, but diverge in their cell partitioning mechanisms before converging again for library preparation and sequencing.

Advanced Methodological Variations

Single-Nucleus RNA Sequencing (snRNA-seq)

Single-nucleus RNA sequencing emerged as a powerful alternative to conventional scRNA-seq, particularly for tissues that are difficult to dissociate or incompatible with droplet-based platforms. snRNA-seq sequences nuclear transcripts rather than cytoplasmic mRNA, offering several distinct advantages [12]:

- Compatibility with frozen specimens: Enables utilization of biobank samples and complex study designs [12]

- Minimized dissociation artifacts: Avoids artificial transcriptional stress responses induced by enzymatic digestion at 37°C [9]

- Access to difficult cell types: Permits analysis of cells resistant to dissociation, including adipocytes, neurons, and cardiomyocytes [12]

Recent methodological refinements have significantly improved snRNA-seq data quality. So et al. developed an optimized nucleus isolation protocol incorporating vanadyl ribonucleoside complex (VRC) that dramatically reduces nuclear RNA degradation, particularly in challenging tissues like visceral adipose tissue where complete RNA degradation previously occurred within 2 hours post-homogenization [12]. This advancement has enabled high-resolution analysis of previously inaccessible cell populations, such as mature adipocytes in obesity research, revealing distinct hypertrophic adipocyte subpopulations with pathological gene expression signatures [12].

Full-Length Transcript scRNA-seq with Third-Generation Sequencing

While 3'-end counting methods (10× Chromium, BD Rhapsody) dominate large-scale cell atlas projects, full-length transcript methods have evolved significantly. The recently developed SCAN-seq2 platform represents a major advancement in third-generation sequencing-based scRNA-seq, enabling high-throughput, high-sensitivity full-length transcriptome analysis [13].

SCAN-seq2 incorporates a dual barcoding strategy—employing both 3' and 5' barcodes—that enables pooling of up to 3,072 single cells per sequencing run while maintaining accurate cell identity assignment [13]. This approach detects over 4,000 genes and 4,500 well-assembled RNA isoforms per cell, facilitating:

- Comprehensive isoform characterization: Distinguishing between different RNA isoforms from the same gene, such as the distinct PTPRC (CD45) isoforms expressed in different immune cell lines [13]

- Pseudogene expression analysis: Unambiguously distinguishing transcripts of pseudogenes from their parent genes, identifying 1,444 expressed pseudogenes with cell-type-specific expression patterns [13]

- V(D)J recombination analysis: Accurately determining highly polymorphic T-cell receptor (TCR) and B-cell receptor (BCR) rearrangement events at single-cell resolution [13]

Spatial Transcriptomics Integration

A key limitation of conventional scRNA-seq is the loss of spatial context during tissue dissociation. Spatial transcriptomics technologies have emerged to address this gap, enabling transcriptome profiling while preserving the two-dimensional organization of RNA molecules within tissue sections [10] [14]. These methods utilize specialized slides with position-barcoded capture probes or in situ sequencing approaches to correlate gene expression data with histological location. In bladder cancer research, the integration of scRNA-seq with spatial transcriptomics has revealed complex cellular ecosystems within the tumor microenvironment, identifying molecular subtypes within individual tumors and elucidating mechanisms of treatment resistance [14].

Comparative Performance Analysis

Platform-Specific Performance Metrics

Table 2: Performance Comparison of Modern High-Throughput scRNA-seq Platforms

| Platform | Technology Type | Genes/Cell | Cell Throughput | Key Strengths | Documented Biases |
|---|---|---|---|---|---|
| 10× Chromium | Droplet-based | 1,000-5,000 | 10,000-100,000+ | High cell throughput; standardized workflows | Lower gene sensitivity in granulocytes [11] |
| BD Rhapsody | Microwell-based | 1,000-5,000 | 1,000-100,000 | Flexible sample loading; higher mitochondrial content [11] | Lower proportion of endothelial/myofibroblast cells [11] |
| SCAN-seq2 | TGS full-length | >4,000 (plus ~4,500 isoforms) [13] | Up to 3,072 per sequencing run [13] | Isoform resolution; V(D)J analysis | Lower throughput than leading NGS platforms [13] |
| snRNA-seq | Nuclear transcript | Varies by protocol | 1,000-100,000 | Frozen sample compatibility; difficult tissues | Nuclear transcript bias; missing cytoplasmic regulation [12] |

Direct comparative studies reveal that while modern platforms generally exhibit similar gene sensitivity, they display distinct performance characteristics and cell-type-specific biases. A systematic comparison of 10× Chromium and BD Rhapsody using complex mammary gland tumors found both platforms had comparable gene sensitivity, but differed in mitochondrial content and specific cell type detection [11]. BD Rhapsody demonstrated higher mitochondrial content, while 10× Chromium showed lower gene sensitivity specifically in granulocytes [11]. Additionally, each platform exhibited distinct cell type representation biases—BD Rhapsody captured lower proportions of endothelial and myofibroblast cells, suggesting platform-specific capture efficiencies that may influence biological interpretations [11].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for scRNA-seq Experiments

| Reagent/Material | Function | Technical Considerations |
|---|---|---|
| Barcoded Beads | Cell barcoding & mRNA capture | Oligo design determines capture efficiency; UMI complexity affects quantification accuracy [9] |
| Tissue Dissociation Enzymes | Single-cell suspension preparation | Optimization required for different tissues; temperature control critical to minimize stress responses [9] |
| RNase Inhibitors | RNA integrity preservation | Vanadyl ribonucleoside complex (VRC) particularly effective in challenging tissues like adipose [12] |
| Unique Molecular Identifiers (UMIs) | Molecular counting & amplification bias correction | Essential for accurate quantification; sequence diversity reduces collision errors [9] [13] |
| Template-Switching Oligos | cDNA amplification | Enables full-length transcript capture; critical for SMART-based methods [9] |
| Microfluidic Chips/Microwell Cartridges | Single-cell partitioning | Platform-specific designs determine maximum throughput and capture efficiency [11] |
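The table's note that UMI sequence diversity reduces collision errors can be quantified with a simple occupancy calculation. The sketch below assumes that each molecule receives a UMI drawn uniformly at random from all 4^L sequences of length L and that sequencing errors are ignored; both are simplifying assumptions, and the function names are hypothetical. Under these assumptions, n molecules are expected to show K(1 − (1 − 1/K)^n) distinct UMIs, where K = 4^L.

```python
import math


def expected_distinct_umis(n_molecules: int, umi_length: int) -> float:
    """Expected number of distinct UMIs observed when n_molecules each draw
    one UMI uniformly at random from the 4**umi_length possible sequences."""
    if umi_length < 1:
        raise ValueError("umi_length must be at least 1")
    pool = 4 ** umi_length
    # pool * (1 - (1 - 1/pool)**n), written with log1p/expm1 for numerical stability
    return -pool * math.expm1(n_molecules * math.log1p(-1.0 / pool))


def expected_collisions(n_molecules: int, umi_length: int) -> float:
    """Expected number of molecules that would be undercounted because they
    share a UMI with another molecule of the same gene in the same cell."""
    return n_molecules - expected_distinct_umis(n_molecules, umi_length)


# Compare hypothetical UMI lengths for 1,000 molecules of one gene in one cell.
for length in (8, 10, 12):
    print(length, round(expected_collisions(1000, length), 3))
```

Here `n_molecules` refers to transcripts of the same gene captured in the same cell, since only those can be confused with one another during UMI counting.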

Experimental Protocols and Methodological Considerations

Standardized Workflow for High-Throughput scRNA-seq

The typical scRNA-seq experimental workflow consists of several critical stages, each requiring careful optimization to ensure data quality:

Sample Preparation and Cell Isolation

- Tissue dissociation using optimized enzyme cocktails (collagenase/hyaluronidase for tumors [11])

- Viability preservation through temperature control (4°C dissociation minimizes stress responses [9])

- Dead cell removal using magnetic bead-based separation (Annexin-specific MACS beads [11])

- Quality assessment via flow cytometry or microscopy (≥85% viability recommended [11])

Single-Cell Partitioning and mRNA Capture

- Platform-specific encapsulation (droplet-based or microwell-based)

- Cell lysis within partitions

- mRNA binding to barcoded oligo-dT primers

Reverse Transcription and Library Construction

- Barcoded cDNA synthesis using reverse transcriptase

- cDNA amplification via PCR or in vitro transcription (IVT)

- Library preparation with platform-specific adapters

Sequencing and Data Analysis

- Illumina sequencing (typically 150 bp paired-end)

- Demultiplexing based on cell barcodes

- UMI counting and gene expression matrix generation
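The demultiplexing and UMI counting steps listed above can be sketched as follows. This is a minimal illustration that assumes reads have already been aligned and annotated as (cell barcode, gene, UMI) records; the record format, function name, and example values are hypothetical. It collapses exact duplicates only, whereas production pipelines typically also correct sequencing errors in barcodes and UMIs.

```python
from collections import defaultdict


def count_umis(records):
    """Collapse (cell_barcode, gene, umi) records into per-cell, per-gene
    counts of distinct UMIs, so PCR duplicates are counted only once."""
    seen = defaultdict(set)
    for cell_barcode, gene, umi in records:
        seen[(cell_barcode, gene)].add(umi)

    matrix = defaultdict(dict)
    for (cell_barcode, gene), umis in seen.items():
        matrix[cell_barcode][gene] = len(umis)
    return dict(matrix)


# Hypothetical annotated reads; the first two are PCR duplicates of one molecule.
records = [
    ("AAAC", "GENE1", "ACGT"),
    ("AAAC", "GENE1", "ACGT"),
    ("AAAC", "GENE1", "TTGA"),
    ("CCGT", "GENE2", "GGCA"),
]
print(count_umis(records))
```

The nested dictionary is a sparse form of the gene expression matrix: most cell and gene combinations have no entry, which reflects the low per-cell gene detection typical of scRNA-seq data.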

Specialized Protocol: Single-Nucleus RNA-seq with VRC Enhancement

For tissues prone to RNA degradation or difficult to dissociate, the optimized snRNA-seq protocol with VRC treatment provides superior results [12]:

Nucleus Isolation with RNase Protection

- Fresh or frozen tissue homogenization in hypotonic lysis buffer

- Addition of vanadyl ribonucleoside complex (VRC) at optimized concentration

- Optional combination with recombinant RNase inhibitors

- Sucrose gradient centrifugation for nucleus purification

Quality Assessment and Normalization

- RNA quality analysis via bioanalyzer

- Nucleus counting and integrity verification by microscopy

- Loading concentration optimization (typically 1,000-10,000 nuclei per reaction)

Partitioning and Library Preparation

- Standard scRNA-seq workflow compatible with multiple platforms

- Modified lysis conditions to preserve nuclear membrane integrity

This optimized approach maintains RNA integrity for extended periods (up to 24 hours at 4°C in adipose tissue, versus complete degradation within 2 hours using standard protocols), enabling flexible experimental designs and improved data quality from challenging samples [12].

Applications and Impact on Biomedical Research

The technological advancements in scRNA-seq have driven transformative applications across diverse fields of biomedical research:

In cancer biology, scRNA-seq has revealed unprecedented tumor heterogeneity, identifying rare cell populations with distinct functional properties and therapeutic vulnerabilities. In bladder cancer, integrated scRNA-seq and spatial transcriptomics have uncovered molecular subtypes within individual tumors and elucidated mechanisms of treatment resistance [14]. Similar approaches in mammary gland tumors have delineated the complex cellular ecosystem of the tumor microenvironment, revealing intricate interactions between malignant cells and diverse stromal components [11].

In neurobiology, snRNA-seq has enabled the characterization of cellular diversity in the human brain, identifying neural stem cells, neuroblasts, and immature neurons in the adult subependymal zone neurogenic niche [15]. These findings demonstrate ongoing neurogenesis in adult humans and reveal age-associated transcriptional changes, with decreased oligodendrocyte progenitor abundance in middle-aged adults compared to youth [15].

In metabolic disease research, optimized snRNA-seq protocols have enabled comprehensive characterization of adipose tissue remodeling during obesity, identifying distinct adipocyte subpopulations following divergent adaptive and pathological trajectories [12]. These findings provide mechanistic insights into how different fat depots contribute variably to metabolic health.

In immunology, scRNA-seq has revolutionized our understanding of immune cell diversity and function. Studies of sepsis patients using scRNA-seq have identified key immune cell populations and telomere-related biomarkers, revealing CD16+ and CD14+ monocytes as central players in the dysregulated host response [16].

In drug development, scRNA-seq enables high-resolution assessment of therapeutic responses and resistance mechanisms, facilitating the identification of novel targets and biomarkers. The technology provides unprecedented resolution for tracking cellular responses to therapeutic interventions, as demonstrated in studies of spliceosome inhibitor treatments where full-length scRNA-seq revealed extensive differential transcript usage that would be missed by conventional gene-level analysis [13].

The journey from Tang et al.'s pioneering 2009 method to today's sophisticated high-throughput platforms represents one of the most transformative technological evolutions in modern biology. Key breakthroughs in cell partitioning, molecular barcoding, and sequencing chemistry have collectively enabled the routine generation of cellular atlases with unprecedented resolution and scale. The current landscape offers researchers a diverse toolkit of platform options, each with distinct strengths optimized for specific biological questions and sample types.

Despite remarkable progress, scRNA-seq technologies continue to evolve. Current challenges include further reducing costs, improving integration with other omics modalities, enhancing spatial resolution, and developing more sophisticated computational methods for extracting biological insights from the complex high-dimensional data generated. The recent emergence of third-generation sequencing platforms for full-length single-cell transcriptomics represents an exciting frontier, promising to reveal the full complexity of isoform-level regulation alongside cellular heterogeneity [13].

As these technologies become increasingly accessible and standardized, their impact on basic research and translational applications will continue to expand. The ongoing refinement of both experimental and computational approaches will further solidify scRNA-seq's position as an indispensable tool for deciphering cellular complexity in health and disease, ultimately accelerating the development of novel therapeutic strategies across the biomedical spectrum.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling transcriptome-wide profiling of individual cells, uncovering complex and rare cell populations, and revealing regulatory relationships between genes that are often masked in bulk population analyses [17]. This transformative technology allows researchers to track the trajectories of distinct cell lineages in development and disease, providing unprecedented insights into cellular heterogeneity [17]. The fundamental unit of analysis in this technology is the cell itself, the basic unit of life that maintains homeostasis, has a metabolism, grows, adapts to its environment, and reproduces [18]. While next-generation sequencing (NGS) technologies have advanced rapidly in recent years, single-cell analyses present unique technical challenges in both wet-lab procedures and computational analysis that must be carefully addressed throughout the experimental workflow [17].

The complete scRNA-seq workflow encompasses multiple critical stages, from single-cell isolation through library preparation to sequencing and data analysis. Each step introduces specific technical considerations that can significantly impact data quality and biological interpretation. This technical guide provides an in-depth examination of the core experimental workflow, with particular emphasis on the transition from single-cell isolation to library preparation—a phase that determines the success of all downstream analyses. Within the broader context of single-cell RNA sequencing analysis research, understanding these foundational wet-lab procedures is essential for designing robust experiments and accurately interpreting the resulting data in both basic research and drug development applications.

Single-Cell Technologies and Sequencing Platforms

Evolution of Sequencing Technologies

The development of scRNA-seq builds upon decades of sequencing technology evolution. First-generation sequencing, pioneered by Sanger in 1977, utilized the chain termination method with radiolabeled fragments and was later automated with fluorescent dyes [18]. While accurate, this approach was limited by short read lengths (300-1000 bp), high cost per base, and inability to scale for single-cell applications [18]. Second-generation sequencing (next-generation sequencing) emerged with pyrosequencing in 1996 and later Solexa technology (now Illumina), which dominates the current market [18]. These platforms use sequencing-by-synthesis with fluorescent dyes, offering high sensitivity, comprehensive coverage, and the ability to sequence thousands of genes simultaneously—making them ideal for scRNA-seq [18]. Third-generation sequencing introduced long-read technologies from PacBio (2010) and Oxford Nanopore (2012), enabling real-time sequencing of longer fragments without amplification bias and direct detection of epigenetic modifications [18].

scRNA-seq Technology Comparison

Table 1: Comparison of Single-Cell RNA Sequencing Technologies

| Technology Type | Read Length | Key Advantages | Limitations | Common Applications |
|---|---|---|---|---|
| Full-length transcript (Smart-seq2) | Full-length mRNA | Complete transcript coverage, detects isoforms | Lower throughput, higher cost per cell | Alternative splicing analysis, mutation detection |
| 3' end-counting (10X Genomics, Illumina) | 75-300 bp | High throughput, cost-effective, cell barcoding | Limited to 3' end, loses isoform information | Large-scale cell atlas projects, heterogeneity studies |
| Direct RNA (Nanopore) | Variable, long reads | No RT or PCR bias, detects RNA modifications | Higher error rates, specialized equipment | Native RNA analysis, modification studies |

The choice between these technologies involves trade-offs between throughput, sensitivity, transcript coverage, and cost. For most large-scale applications, 3' end-counting methods like 10X Genomics and Illumina's Single Cell 3' RNA Prep have become predominant due to their scalability and cost-effectiveness [19]. These methods utilize microfluidic partitioning to isolate individual cells, where reverse transcription occurs with barcoded primers that label all mRNAs from the same cell with a unique cellular barcode [20]. Full-length methods like SMART-Seq2 remain valuable for applications requiring complete transcript information, while emerging direct RNA sequencing approaches offer unique capabilities for studying native RNA molecules without reverse transcription or amplification biases [21] [22].

Comprehensive Experimental Workflow

Single-Cell Isolation and Quality Control

The initial phase of any scRNA-seq experiment involves the isolation of viable single cells from complex tissues or cell cultures. This process requires careful optimization to preserve cell viability, minimize stress responses, and maintain representative cellular diversity. Common isolation methods include fluorescence-activated cell sorting (FACS), microfluidic capture, and dilution-based techniques, each with specific advantages depending on cell type and experimental goals. Following isolation, cell quality must be rigorously assessed through viability staining, visual inspection, and quantification to ensure high-quality input material.

Critical to this stage is the preparation of high-quality starting material. Input requirements are protocol-specific. Single-cell 3' workflows such as the Illumina Single Cell 3' RNA Prep start from a suspension of intact cells, whereas the direct RNA sequencing protocol cited here requires either 300 ng of poly(A) tailed RNA or 1 µg of total RNA in 8 µl as input [21]. The quality checks performed during this stage are essential for experimental success, as using too little or too much RNA, or RNA of poor quality (e.g., fragmented or containing chemical contaminants), can severely compromise library preparation [21]. Where purified RNA is the input, researchers should use quality control metrics such as the RNA Integrity Number (RIN) or similar assessments to verify RNA quality before proceeding to library construction.

Library Preparation Workflow

The library preparation process converts captured single-cell transcripts into sequencing-ready libraries through a series of molecular biology steps. The following diagram illustrates the complete workflow from single-cell isolation to sequencing:

Diagram 1: Single-Cell RNA Sequencing Workflow

Reverse Transcription and Barcoding

The first biochemical step in library preparation involves reverse transcription to synthesize complementary DNA (cDNA) from captured mRNA transcripts [21]. This process typically utilizes a reverse transcriptase with terminal transferase activity, which, when combined with a template-switch primer, constructs cDNAs containing two universal priming sequences [22]. For 3' end-counting methods, this reaction incorporates unique molecular identifiers (UMIs) and cellular barcodes that enable multiplexing and digital counting of transcripts during downstream analysis. In the protocol described in [21], the reverse transcription step takes approximately 85 minutes and is the only recommended pause point: the reverse-transcribed RNA (RT-RNA) can be stored at -80°C for later use.

cDNA Amplification and Adapter Ligation

Following reverse transcription, the cDNA undergoes preamplification from universal priming sequences to generate sufficient material for library construction [22]. The amplified cDNA then proceeds to adapter ligation, where sequencing adapters are attached to the RNA-cDNA hybrid ends in a process requiring approximately 45 minutes [21]. It is strongly recommended to sequence the library immediately after adapter preparation to maintain optimal sample quality. For Illumina platforms, the resulting libraries are composed of standard paired-end constructs that begin with P5 and end with P7, with Read 1 containing barcode information (>45 bases) and Read 2 containing gene expression information (>72 bases) [19].

Library Quality Control and Sequencing Specifications

Following library preparation, rigorous quality control is essential to ensure sequencing success. This includes quantification using fluorometric methods (e.g., Qubit assays) and assessment of size distribution. Libraries must then be diluted to appropriate concentrations for sequencing, with specific requirements varying by platform:

Table 2: Sequencing Specifications for Illumina Single Cell 3' RNA Prep

| Parameter | T2 Kit (5,000 cells) | T10 Kit (17,000 cells) | T20 Kit (40,000 cells) | T100 Kit (200,000 cells) |
|---|---|---|---|---|
| Recommended Reads per Cell | 20,000 | 20,000 | 20,000 | 20,000 |
| Total Reads Required | 100 million | 340 million | 800 million | 4 billion |
| NextSeq 500/550 Loading | 1.6 pM + ≥1% PhiX | 1.6 pM + ≥1% PhiX | 1.6 pM + ≥1% PhiX | 1.6 pM + ≥1% PhiX |
| NovaSeq 6000 Loading | 210 pM + ≥1% PhiX | 210 pM + ≥1% PhiX | 210 pM + ≥1% PhiX | 210 pM + ≥1% PhiX |
| NovaSeq X Series Loading | 190-200 pM + ≥2% PhiX | 190-200 pM + ≥2% PhiX | 190-200 pM + ≥2% PhiX | 190-200 pM + ≥2% PhiX |

A critical consideration is that libraries from all experimental conditions should be pooled together before single-cell sequencing to minimize batch effects and assist with index color balancing [19]. Additionally, a minimum of 1% PhiX (2% for NovaSeq X Series) must be included in the final library loading pool for proper calibration and quality control [19].
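The "Total Reads Required" row of Table 2 is simply the cell capacity of each kit multiplied by the recommended reads per cell. The following minimal sketch reproduces that arithmetic; the function name and the dictionary of kit capacities are illustrative helpers introduced here, not part of any vendor software.

```python
def total_reads_required(cells: int, reads_per_cell: int = 20_000) -> int:
    """Return the total number of reads needed to sequence `cells` at a given depth."""
    if cells < 0 or reads_per_cell < 0:
        raise ValueError("cells and reads_per_cell must be non-negative")
    return cells * reads_per_cell


# Kit capacities as listed in Table 2 (hypothetical dictionary for illustration).
KIT_CELLS = {"T2": 5_000, "T10": 17_000, "T20": 40_000, "T100": 200_000}

for kit, cells in KIT_CELLS.items():
    print(f"{kit}: {total_reads_required(cells):,} reads")
```

The same helper can be used to budget a run at a different depth, for example `total_reads_required(17_000, reads_per_cell=50_000)` for a deeper T10 experiment.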

Library Preparation Methodologies

Comparative Analysis of Library Prep Methods

Several library preparation methodologies have been developed for scRNA-seq applications, each with distinct advantages and limitations. The following diagram illustrates the key decision points in selecting an appropriate library preparation strategy:

Diagram 2: Library Preparation Method Selection

3' End-Counting Methods

The 3' end-counting approaches employed by 10X Genomics and Illumina Single Cell 3' RNA Prep utilize microfluidic partitioning to isolate individual cells, followed by reverse transcription with barcoded primers [20] [19]. The choice of library preparation method determines whether sequences are captured from transcript ends (e.g., 10X Genomics, Drop-seq) or from full-length transcripts (e.g., Smart-seq), which in turn shapes downstream analysis and the biological insights available [20]. The 3' methods capture only the ends of transcripts but offer high throughput and cost-effectiveness, making them well suited to large-scale cell atlas projects and studies focusing on cellular heterogeneity rather than isoform-level analysis.

Full-Length Methods

Full-length transcript methods such as SMART-Seq2 employ a different strategy using reverse transcriptase with terminal transferase activity combined with a template-switch mechanism to construct cDNAs with universal priming sequences on both ends [22]. This approach enables complete transcript coverage from 5' to 3' ends, allowing for detection of alternative splicing variants, single nucleotide polymorphisms, and complete transcript isoforms. While offering more comprehensive transcript information, these methods generally have lower throughput and higher cost per cell compared to 3' end-counting approaches.

Direct RNA Sequencing

Direct RNA sequencing methodologies, such as Oxford Nanopore's SQK-RNA004 kit, sequence native RNA molecules without reverse transcription or PCR amplification of the sequenced strand [21]. This approach removes RT and PCR biases and allows direct detection of RNA base modifications. The protocol requires either poly(A) tailed RNA or total RNA as starting material and involves synthesis of a complementary DNA (cDNA) strand for stability before adapter ligation and sequencing; the cDNA strand itself is not sequenced [21]. Unlike DNA, RNA is translocated through the nanopore in the 3'-5' direction, though basecalling algorithms automatically flip the data to display reads 5'-3' [21].

Essential Research Reagents and Materials

Successful scRNA-seq library preparation requires carefully selected reagents and materials optimized for single-cell applications. The following toolkit represents essential components for the experimental workflow:

Table 3: Research Reagent Solutions for scRNA-seq Library Preparation

| Reagent/Material | Function | Example Products | Quality Control Considerations |
|---|---|---|---|
| Cell Viability Stain | Distinguish live/dead cells during isolation | Propidium iodide, Trypan blue | >90% viability typically required |
| Lysis Buffer | Release RNA while preserving integrity | Various commercial kits | Must inactivate RNases immediately |
| Oligo-dT Primers with Barcodes | mRNA capture and cellular indexing | 10X Barcoded beads, Illumina RT Primer | UMI design critical for counting |
| Reverse Transcriptase | cDNA synthesis from mRNA | Induro Reverse Transcriptase | High processivity and fidelity |
| Template Switching Oligo | Add universal primer site to cDNA | SMART-Seq2 oligonucleotides | Efficiency affects library complexity |
| PCR Master Mix | cDNA preamplification | Various high-fidelity polymerases | Minimize amplification bias |
| Sequencing Adapters | Platform-specific library tagging | Illumina P5/P7, Nanopore adapters | Ligation efficiency impacts yield |
| Solid Phase Reversible Immobilization Beads | Size selection and clean-up | Agencourt RNAClean XP beads | Ratio optimization critical |
| Library Quantification Kits | Accurate concentration measurement | Qubit RNA HS, Qubit dsDNA HS | Fluorometric methods preferred |
| Sequencing Spike-in Controls | Monitor technical performance | PhiX, ERCC RNA Spike-In mixes | Essential for quality monitoring |

Additional specialized reagents may be required for specific protocols, such as the RNA Flush Tether and Flow Cell Flush for nanopore-based direct RNA sequencing [21] or Murine RNase Inhibitor to maintain RNA integrity during library preparation [21]. For all third-party reagents, it is recommended to follow the manufacturer's instructions for preparation and use, as alternatives may not have been validated for specific scRNA-seq applications [21].

The experimental workflow from single-cell isolation to library preparation represents a critical foundation for all subsequent analysis in scRNA-seq studies. Technical decisions made during these initial stages—from cell quality control through reverse transcription to final library construction—profoundly impact data quality, reliability, and biological interpretability. As the field continues to evolve with emerging methods including Methyl-Seq, CRISPR-Cas9 screening, ATAC-Seq, and multiomics approaches, the fundamental principles of careful experimental design and rigorous quality control remain paramount [23]. By understanding the comprehensive workflow, technical requirements, and reagent considerations outlined in this guide, researchers can design and execute robust scRNA-seq experiments that generate meaningful biological insights and advance our understanding of cellular heterogeneity in health and disease.

Single-cell RNA sequencing (scRNA-seq) has revolutionized our ability to study biological systems at unprecedented resolution, enabling the characterization of transcriptomes for individual cells within complex tissues [24]. This technology has become the leading technique for compiling cell atlases of tissues, organs, and organisms, providing powerful insights into cellular heterogeneity [24] [3]. As researchers construct comprehensive cell atlases and seek to identify rare cell populations, they face significant technical and computational challenges related to protocol selection, data quality, and analytical methods.

The fundamental unit of life—the cell—serves as the building block for all living organisms, and understanding cellular diversity is essential for unraveling complex biological processes [18]. Single-cell technologies now allow researchers to profile thousands to millions of cells simultaneously, creating unprecedented opportunities to discover novel cell types and states, particularly rare populations that may play critical roles in development, disease, and therapeutic responses [25] [26]. This technical guide examines current best practices and methodologies for addressing cellular complexity through scRNA-seq, with specific focus on cell atlas construction and rare cell identification within the broader context of single-cell RNA sequencing analysis research.

Experimental Design and Protocol Selection

Comparative Performance of scRNA-seq Protocols

Selecting appropriate scRNA-seq protocols is crucial for generating high-quality data capable of addressing specific research questions. A comprehensive multicenter benchmarking study evaluating 13 commonly used scRNA-seq and single-nucleus RNA-seq protocols revealed marked differences in performance characteristics [24]. These protocols differed substantially in their RNA capture efficiency, technical bias, scalability, and costs, directly impacting their predictive value and suitability for integration into reference cell atlases.

Table 1: Performance Characteristics of Major scRNA-seq Protocol Categories

| Protocol Type | Capture Efficiency | Library Complexity | Cell Throughput | Cost per Cell | Best Application |
|---|---|---|---|---|---|
| Plate-based (Smart-seq2) | High | High | Low | High | Detailed transcriptome characterization |
| Droplet-based (10X Genomics) | Medium | Medium | High | Low | Large-scale cell atlas projects |
| Single-nucleus RNA-seq | Lower | Lower | High | Medium | Frozen or hard-to-dissociate tissues |
| In situ sequencing | Low | Low | Medium | High | Spatial context preservation |

The benchmarking results demonstrated that no single protocol excels across all applications, highlighting the importance of matching protocol capabilities to specific research goals [24]. For large-scale cell atlas projects, droplet-based methods often provide the optimal balance of throughput, cost, and data quality, while plate-based methods with full-length transcript coverage remain valuable for characterizing splice variants or detecting low-abundance transcripts.

Sample Preparation and Library Construction

Proper sample preparation is fundamental to successful scRNA-seq experiments. The initial steps involve creating a single-cell suspension through tissue dissociation, which must be optimized to maximize cell viability while preserving transcriptomic integrity [3]. Following dissociation, cells are isolated using either plate-based or droplet-based methods, each with distinct advantages and limitations:

- Plate-based techniques isolate individual cells into separate wells, typically providing higher sequencing depth per cell but at lower throughput [3]

- Droplet-based methods encapsulate cells in nanoliter-scale droplets, enabling profiling of thousands to tens of thousands of cells in a single experiment [3]

During library construction, cellular mRNA is captured, reverse-transcribed to cDNA, and amplified [3]. Critical to this process is the incorporation of cellular barcodes (to tag mRNA from individual cells) and unique molecular identifiers (UMIs, to distinguish biological duplicates from amplification artifacts) [3]. The quality of library preparation directly influences downstream data quality, emphasizing the need for rigorous optimization and quality control at this stage.
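The role of cellular barcodes and UMIs in digital counting can be illustrated with a minimal sketch. It assumes reads have already been aligned and reduced to (cell barcode, UMI, gene) tuples; the function name and the toy reads are hypothetical. Reads that share all three fields are treated as amplification duplicates of one captured molecule. Production pipelines additionally correct sequencing errors in barcodes and UMIs, which this sketch deliberately omits.

```python
from collections import defaultdict


def count_umis(reads):
    """Collapse reads into UMI counts per (cell barcode, gene).

    `reads` is an iterable of (cell_barcode, umi, gene) tuples, one per
    aligned read. Reads sharing all three fields are counted once.
    """
    seen = defaultdict(set)
    for cell_barcode, umi, gene in reads:
        seen[(cell_barcode, gene)].add(umi)
    return {key: len(umis) for key, umis in seen.items()}


reads = [
    ("AAAC", "TTGCA", "GAPDH"),
    ("AAAC", "TTGCA", "GAPDH"),  # same barcode, UMI and gene: amplification duplicate
    ("AAAC", "CCGTA", "GAPDH"),  # different UMI: a second captured molecule
    ("GGTT", "TTGCA", "GAPDH"),  # different cell barcode: counted for another cell
    ("AAAC", "AGGTC", "ACTB"),
]
counts = count_umis(reads)
print(counts[("AAAC", "GAPDH")])  # 2
```

Five reads collapse to four molecules here, which is why UMI counts rather than raw read counts are normally used as the expression measure in 3' end-counting data.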

Quality Control and Preprocessing Framework

Quality Control Metrics and Thresholding

Robust quality control (QC) is essential for ensuring that subsequent analyses reflect biological reality rather than technical artifacts. scRNA-seq data requires careful evaluation using three key QC covariates [3] [4]:

- Count depth: The total number of counts per barcode

- Gene detection: The number of genes detected per barcode

- Mitochondrial fraction: The fraction of counts originating from mitochondrial genes

These metrics must be considered jointly rather than in isolation, as each has biological interpretations that could be mistakenly filtered out if thresholds are set too stringently [3]. For example, cells with high mitochondrial fractions may represent stressed or dying cells but could also reflect metabolically active populations involved in respiratory processes [4].
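The three covariates can be computed directly from a count matrix. The sketch below is a plain-Python illustration under stated assumptions: the matrix is a small dense list of rows (one per barcode), and mitochondrial genes are recognized by the "MT-" symbol prefix, which is the convention for human gene symbols (mouse symbols use "mt-", handled here by upper-casing). The function name is hypothetical; established toolkits provide equivalent, far more efficient routines.

```python
def qc_metrics(counts, gene_names, mito_prefix="MT-"):
    """Compute per-barcode QC covariates from a dense count matrix.

    counts: list of rows, one per barcode, each a list of counts per gene
    gene_names: gene symbols in column order
    """
    mito_cols = [i for i, g in enumerate(gene_names) if g.upper().startswith(mito_prefix)]
    metrics = []
    for row in counts:
        total = sum(row)
        mito = sum(row[i] for i in mito_cols)
        metrics.append({
            "count_depth": total,                              # total counts per barcode
            "genes_detected": sum(1 for c in row if c > 0),   # genes with at least one count
            "mito_fraction": mito / total if total else 0.0,  # share of mitochondrial counts
        })
    return metrics


genes = ["GAPDH", "ACTB", "MT-CO1", "MT-ND1"]
matrix = [
    [10, 5, 3, 2],  # healthy-looking barcode
    [0, 1, 8, 1],   # mostly mitochondrial counts
    [0, 0, 0, 0],   # empty barcode
]
for m in qc_metrics(matrix, genes):
    print(m)
```

Returning all three values per barcode in one record makes it natural to inspect them jointly, for example by plotting count depth against genes detected and coloring by mitochondrial fraction.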

Table 2: Quality Control Thresholds and Interpretations

| QC Metric | Typical Threshold | Below Threshold Interpretation | Above Threshold Interpretation |
|---|---|---|---|
| Count depth | 500-1,000 counts (lower bound) | Low-quality cell, empty droplet | Retained; unusually high values suggest a potential doublet |
| Genes detected | 200-500 genes (lower bound) | Poor mRNA capture | Retained; unusually high values suggest a potential doublet |
| Mitochondrial fraction | 10-20% (upper bound) | Normal variation | Stressed/dying cell |
| Ribosomal fraction | 5-15% | Normal variation | Possible technical bias |

Advanced QC approaches utilize median absolute deviation (MAD) for automated thresholding, identifying outliers that differ by more than 5 MADs from the median as potential low-quality cells [4]. This statistical approach provides a more robust filtering strategy than fixed thresholds, especially when working with heterogeneous cell populations with naturally varying RNA content.
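MAD-based thresholding can be sketched in a few lines. This is a minimal illustration, not the implementation of any specific package: the function name is hypothetical, and the rule is applied to raw values here, whereas in practice it is often applied to log-transformed count depth and gene counts.

```python
from statistics import median


def mad_outliers(values, n_mads=5.0):
    """Flag values lying more than `n_mads` median absolute deviations from the median."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    return [abs(v - med) > n_mads * mad for v in values]


# Count depth for eight barcodes; one barcode has very few counts.
depths = [1000, 1100, 950, 1050, 1020, 980, 40, 1200]
print(mad_outliers(depths))
# [False, False, False, False, False, False, True, False]
```

In this toy example the median is 1010 and the MAD is 50, so only values more than 250 counts away from the median are flagged. Note that when more than half of the values are identical the MAD is zero and every differing value is flagged, so the rule should be checked against the actual distribution before filtering.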

Doublet Detection and Ambient RNA Correction

Two significant technical challenges in scRNA-seq analysis are doublets (two or more cells captured under a single cell barcode) and ambient RNA (background RNA released from lysed or dead cells and captured alongside intact cells). Doublets can create misleading intermediate cell states that complicate downstream analysis, while ambient RNA can blur distinct cell type boundaries [3].

Specialized computational tools have been developed to address these challenges:

- Doublet detection: Scrublet, DoubletFinder, and DoubletDecon identify likely doublets based on their expression profiles representing a mixture of multiple cell types [3]

- Ambient RNA correction: Methods like CellBender and DecontX model and subtract background RNA signals, improving cluster resolution [4]

These correction methods are particularly important for rare cell identification, as technical artifacts can either obscure true rare populations or create artificial ones.
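The general idea behind simulation-based doublet detection can be sketched as follows: synthetic doublets are created by summing pairs of observed profiles, and each observed cell is scored by the fraction of its nearest neighbors that are synthetic. This is a deliberately simplified toy, not the implementation of Scrublet, DoubletFinder, or DoubletDecon; all function names, the normalization, and the distance measure are assumptions made for illustration.

```python
import random


def simulate_doublets(profiles, n_doublets, seed=0):
    """Create synthetic doublets by summing randomly chosen pairs of observed profiles."""
    rng = random.Random(seed)
    doublets = []
    for _ in range(n_doublets):
        a, b = rng.sample(range(len(profiles)), 2)
        doublets.append([x + y for x, y in zip(profiles[a], profiles[b])])
    return doublets


def normalize(profile):
    """Scale a profile to proportions so that library size does not drive distances."""
    total = sum(profile)
    return [x / total for x in profile] if total else list(profile)


def squared_distance(u, v):
    return sum((x - y) ** 2 for x, y in zip(u, v))


def doublet_scores(profiles, k=5, seed=0):
    """Score each observed cell by the share of its k nearest neighbors that are synthetic."""
    synthetic = simulate_doublets(profiles, len(profiles), seed)
    observed = [normalize(p) for p in profiles]
    # Observed cells come first; each pool entry is (profile, is_synthetic).
    pool = [(p, False) for p in observed] + [(normalize(d), True) for d in synthetic]
    scores = []
    for i, cell in enumerate(observed):
        ranked = sorted(
            ((squared_distance(cell, other), is_syn)
             for j, (other, is_syn) in enumerate(pool) if j != i),
            key=lambda pair: pair[0],
        )
        scores.append(sum(is_syn for _, is_syn in ranked[:k]) / k)
    return scores


# Ten cells of type A, ten of type B, and one cell with a mixed A/B profile.
cells = [[20, 0]] * 10 + [[0, 20]] * 10 + [[10, 10]]
scores = doublet_scores(cells)
print(scores[0], scores[-1])
```

The mixed cell sits among the synthetic A/B doublets and receives a positive score, while the pure cells are surrounded by other observed cells. The pure cells score zero in this toy only because identical observed profiles sort ahead of synthetic ones on ties; in real data, doublets formed from two cells of the same type are much harder to separate from singlets.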

Computational Methods for Rare Cell Identification

Algorithmic Approaches for Rare Cell Detection

The identification of rare cell populations presents unique computational challenges, as these populations often represent less than 1% of total cells and may not form distinct clusters in conventional dimensionality reduction space [25] [26]. Several algorithmic strategies have been developed specifically for rare cell detection:

Similarity-based methods like scSID (single-cell similarity division algorithm) leverage the observation that cells of the same type exhibit higher intercellular similarity than cells from different types [25]. scSID employs a two-step approach: (1) cell division based on individual similarity through K-nearest neighbor analysis in gene expression space, and (2) rare cell detection based on population similarity to address potential noise and outlier effects [25].

Gene expression-based methods include CellSIUS (Cell Subtype Identification from Upregulated gene Sets), which identifies rare populations based on bimodal distribution patterns of marker genes within initially identified major clusters [26]. This approach first performs coarse clustering to define major cell populations, then searches for subpopulations exhibiting strong upregulation of specific gene sets that show bimodal distributions within the major cluster [26].

Other specialized algorithms include:

- RaceID: Uses k-means clustering and count probabilities to identify outlier cells [25]

- GiniClust: Employs Gini coefficients for gene selection prior to density-based clustering [25]

- FiRE: Assigns rarity scores based on sketching techniques and hash codes [25]
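To make the Gini-based gene selection idea concrete, the sketch below computes the Gini coefficient of a gene's expression across cells. A gene expressed in only a few cells has a coefficient close to 1, while a uniformly expressed gene has a coefficient of 0. This is a generic Gini calculation offered as an assumption-laden illustration; GiniClust applies its own normalization and selection procedure, which is not reproduced here.

```python
def gini(values):
    """Gini coefficient of non-negative values (0 = uniform, near 1 = concentrated)."""
    xs = sorted(values)
    n = len(xs)
    total = sum(xs)
    if n == 0 or total == 0:
        return 0.0
    # Rank-weighted sum over the sorted values (ranks start at 1).
    weighted = sum((rank + 1) * x for rank, x in enumerate(xs))
    return (2 * weighted) / (n * total) - (n + 1) / n


housekeeping = [5] * 10       # expressed evenly in every cell
rare_marker = [0] * 9 + [50]  # expressed in a single cell
print(round(gini(housekeeping), 3))  # 0.0
print(round(gini(rare_marker), 3))   # 0.9
```

Ranking genes by this value and keeping the highest-scoring ones favors markers of small populations, which is the intuition behind using it before clustering.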

Benchmarking Rare Cell Detection Performance

A comprehensive evaluation of rare cell identification methods using a controlled dataset of ~12,000 single-cell transcriptomes from eight human cell lines revealed significant differences in algorithm performance [26]. When tested on datasets containing cell populations representing as little as 0.08-0.15% of total cells, most standard clustering methods (including SC3, Seurat, and DBSCAN) failed to identify these rare populations, instead merging them with more abundant cell types [26].

Table 3: Performance Comparison of Rare Cell Identification Methods

| Method | Sensitivity | Specificity | Scalability | Signature Gene Detection | Memory Efficiency |
|---|---|---|---|---|---|
| CellSIUS | High | High | Medium | Yes | Medium |
| scSID | High | High | High | Limited | High |
| RaceID3 | Medium | Medium | Low | Yes | Low |
| GiniClust2 | Medium | Medium | Medium | Yes | Low |
| FiRE | High | Medium | High | No | Medium |

CellSIUS consistently demonstrated high sensitivity and specificity for rare cell identification across multiple benchmark datasets, simultaneously providing transcriptomic signatures indicative of rare cell function [26]. Meanwhile, scSID showed exceptional scalability and memory efficiency when applied to large datasets (e.g., 68K PBMC cells), making it particularly suitable for atlas-scale projects [25].

Cell Atlas Construction and Integration

Multi-Batch Integration and Comparative Analysis

Cell atlas projects frequently involve data generated across multiple batches, platforms, or experimental conditions, introducing technical variations that can confound biological comparisons [27]. Batch effects—systematic technical differences between datasets—can obscure true biological signals and complicate the identification of consistent cell types across samples [27].

Advanced computational methods like CODAL (COvariate Disentangling Augmented Loss) have been developed to explicitly disentangle technical effects from biological variation [27]. CODAL uses a variational autoencoder-based statistical model with mutual information regularization to separate factors related to technical artifacts from genuine biological signals, enabling more accurate comparative analysis of perturbation effects across batches [27].

Reference Atlas Construction and Annotation

Constructing comprehensive reference atlases requires integrating multiple datasets while maintaining consistent cell type annotations. The process typically involves:

- Individual dataset processing: Quality control, normalization, and preliminary clustering performed on each dataset separately

- Batch correction: Application of integration methods to remove technical variations while preserving biological differences

- Reference building: Creation of a unified reference framework incorporating all datasets

- Cell type annotation: Labeling of cell populations using marker genes, reference datasets, and automated annotation tools

The iterative nature of atlas construction necessitates careful validation at each step, with particular attention to the potential introduction of artifacts during integration and the biological plausibility of identified cell states.

Visualization and Interpretation Strategies

Optimized Visualization for Complex Cell Atlases

Effective visualization is crucial for interpreting scRNA-seq data, particularly when dealing with complex atlases containing dozens of cell populations. Standard visualization methods often assign visually similar colors to spatially neighboring clusters in dimensionality reduction plots (e.g., UMAP, t-SNE), making distinct cell populations difficult to differentiate [28].

Spatially-aware color optimization tools like Palo address this challenge by calculating spatial overlap scores between cluster pairs and assigning visually distinct colors to clusters that appear close in reduced dimension space [28]. The Palo algorithm:

- Fits 2-D kernel density functions for each cluster

- Identifies "hot grid points" representing cluster cores

- Calculates pairwise Jaccard similarity indices between clusters

- Optimizes color assignments to maximize perceptual differences between neighboring clusters

This approach significantly improves the interpretability of complex single-cell and spatial transcriptomics visualizations, enabling researchers to more accurately identify cluster boundaries and relationships [28].
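The overlap calculation at the heart of this procedure can be sketched in simplified form. The example below replaces the 2-D kernel density fit with plain histogram counts on a square grid, which is a swapped-in simplification rather than the Palo method itself, and it stops at the pairwise Jaccard scores without performing the color optimization. Function names, grid size, and the count threshold are all hypothetical.

```python
def hot_grid_points(points, cell_size=1.0, min_count=2):
    """Return the grid cells that hold at least `min_count` points of one cluster."""
    counts = {}
    for x, y in points:
        key = (int(x // cell_size), int(y // cell_size))
        counts[key] = counts.get(key, 0) + 1
    return {key for key, c in counts.items() if c >= min_count}


def jaccard(a, b):
    """Jaccard index of two sets (0.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def overlap_scores(clusters, **grid_kwargs):
    """Pairwise Jaccard index between the hot grid points of each cluster."""
    hot = {name: hot_grid_points(pts, **grid_kwargs) for name, pts in clusters.items()}
    names = sorted(hot)
    return {(p, q): jaccard(hot[p], hot[q])
            for i, p in enumerate(names) for q in names[i + 1:]}


# Toy 2-D embedding coordinates for three clusters.
clusters = {
    "A": [(0.1, 0.1), (0.2, 0.3), (1.1, 0.2), (1.5, 0.4)],
    "B": [(1.2, 0.6), (1.8, 0.9), (2.2, 0.1), (2.4, 0.5)],
    "C": [(5.1, 5.2), (5.3, 5.8)],
}
scores = overlap_scores(clusters)
print(round(scores[("A", "B")], 3))  # 0.333
print(scores[("A", "C")])            # 0.0
```

Clusters A and B share one of three hot grid cells and would therefore be the pair most in need of contrasting colors, whereas C is spatially isolated and can reuse a similar hue without ambiguity.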

Interpretable Latent Space Representations

Beyond conventional dimensionality reduction methods, approaches like topic modeling (e.g., MIRA, CODAL) decompose scRNA-seq data into interpretable modules of co-regulated genes or co-accessible chromatin regions [27]. These modules often correspond to biologically meaningful gene programs activated in specific cell states or during particular processes like differentiation or activation.

By representing cells as mixtures of these latent topics, researchers can gain insights into the regulatory programs underlying cell states and their alterations in response to perturbations [27]. This representation is particularly valuable for comparative atlas analysis, where topics provide a stable framework for comparing cells across different conditions or batches.

Research Reagent Solutions and Experimental Tools

Successful single-cell research requires appropriate selection of reagents and computational tools throughout the experimental workflow. Key components include:

Table 4: Essential Research Reagents and Computational Tools

| Category | Specific Tools/Reagents | Function | Considerations |
|---|---|---|---|
| Library Preparation | 10X Chromium, SMART-seq, CEL-seq2 | Single-cell RNA library generation | Throughput, sensitivity, cost per cell |
| Quality Control | Seurat, Scater, Scanpy | QC metric calculation and visualization | Integration with downstream analysis |
| Doublet Detection | Scrublet, DoubletFinder | Identification of multiple cells mislabeled as single | Threshold optimization for specific datasets |
| Rare Cell Detection | CellSIUS, scSID, RaceID | Identification of low-abundance cell populations | Sensitivity/specificity trade-offs |
| Batch Correction | CODAL, Harmony, Seurat CCA | Removal of technical batch effects | Handling of batch-confounded cell types |
| Visualization | Palo, ggplot2, SCUBI | Data visualization and interpretation | Color palette optimization for clarity |

Advanced Applications and Future Directions

Multimodal Single-Cell Analysis

The integration of scRNA-seq with other single-cell modalities (e.g., ATAC-seq, protein abundance, spatial information) represents the cutting edge of single-cell genomics [27] [29]. Methods like CODAL and Seqtometry enable simultaneous analysis of multiple data types from the same cells, providing complementary insights into gene regulation and cellular function [27] [29].

Seqtometry, for example, uses direct profiling of gene expression and chromatin accessibility through advanced signature scoring to generate biologically interpretable dimensions for cell identification and characterization [29]. This approach combines cell grouping and specific characterization into a single step based on enrichment values for predefined gene signatures [29].

Perturbation Modeling and Comparative Analysis

Single-cell technologies are increasingly applied to study the effects of genetic and chemical perturbations on complex cell systems [27]. Comparative analysis of perturbation atlases can reveal profound insights into cell state and trajectory alterations, but requires specialized analytical approaches to distinguish true biological effects from technical artifacts [27].

The CODAL framework has demonstrated particular utility in perturbation analysis, enabling identification of batch-confounded cell states in embryonic development atlases with gene knockouts [27]. By explicitly modeling technical effects, CODAL facilitates direct comparison of perturbation effects across different experimental batches, revealing altered cell states and differentiation trajectories that might otherwise remain obscured [27].

Addressing cellular complexity through single-cell RNA sequencing requires careful consideration of experimental design, computational methods, and interpretation frameworks. Cell atlas construction and rare cell identification represent complementary approaches to mapping cellular heterogeneity, each with distinct methodological requirements. As single-cell technologies continue to evolve, integrating multimodal data, improving batch correction, and enhancing visualization will further advance our ability to decipher complex biological systems at single-cell resolution. The methodologies and best practices outlined in this technical guide provide a foundation for researchers embarking on single-cell studies aimed at comprehensive cellular characterization and rare population identification.

Single-cell RNA sequencing (scRNA-seq) has revolutionized transcriptomic studies by enabling the dissection of gene expression at the resolution of individual cells, moving beyond the population-averaged data provided by bulk RNA-seq [10] [30]. This technological advancement has created unprecedented opportunities for exploring cell-to-cell heterogeneity, identifying rare cell populations, and understanding complex biological systems [17]. More recently, single-nucleus RNA sequencing (snRNA-seq) has emerged as a complementary approach that addresses some of the key limitations of scRNA-seq, particularly for specific tissues and experimental conditions [31]. The choice between these two approaches represents a critical strategic decision for researchers designing experiments across diverse fields including developmental biology, cancer research, neuroscience, and drug development. This technical guide provides a comprehensive comparison of scRNA-seq and snRNA-seq technologies, drawing on current experimental evidence to outline their respective advantages, limitations, and appropriate use cases within the broader context of single-cell genomics research.

Single-Cell RNA Sequencing (scRNA-seq) Technologies

scRNA-seq technologies have evolved significantly since their inception, with current methods falling into two main categories based on transcript coverage: full-length transcript sequencing and 3'- or 5'-end counting protocols [30]. Full-length approaches such as Smart-seq2 [32] and MATQ-seq provide comprehensive transcript coverage, enabling isoform usage analysis, allelic expression detection, and RNA editing identification. These methods generally detect more expressed genes per cell [30]. In contrast, droplet-based technologies such as Drop-seq [33], InDrop, and 10X Chromium [31] capture only the 3' or 5' ends of transcripts but offer significantly higher throughput at a lower cost per cell, making them well suited to large-scale cell mapping efforts [30]. The fundamental workflow involves isolating individual cells through methods such as fluorescence-activated cell sorting (FACS) or microfluidics, followed by cell lysis, reverse transcription, cDNA amplification, and library preparation for sequencing [17] [30].

Single-Nucleus RNA Sequencing (snRNA-seq) Methodologies

snRNA-seq methodologies, including sNuc-DropSeq, DroNc-seq [31], and 10X Chromium for nuclei [32], have been developed to overcome specific challenges associated with whole-cell sequencing. These approaches sequence RNA primarily from the nuclear compartment, fundamentally changing the biological material being analyzed. The standard protocol involves tissue homogenization followed by nuclei isolation through density gradient centrifugation or filtration, eliminating the need for enzymatic dissociation that can damage cell integrity [33]. Early concerns about the sensitivity of snRNA-seq have been addressed by studies demonstrating comparable gene detection sensitivity between single-cell and single-nucleus platforms [31], with both methods capturing similar numbers of genes per cell or nucleus when optimized. This has expanded the range of samples accessible to single-cell transcriptomics, particularly archived tissues and difficult-to-dissociate cell types.

Comparative Analysis: scRNA-seq vs snRNA-seq

Direct Experimental Comparisons

Several systematic studies have directly compared the performance of scRNA-seq and snRNA-seq across different tissue types. A comprehensive benchmark analysis compared seven methods for single-cell and/or single-nucleus profiling across cell lines, peripheral blood mononuclear cells, and brain tissue, generating 36 libraries in six separate experiments [32]. This study developed a unified computational pipeline (scumi) to enable fair cross-method comparisons, evaluating both basic performance metrics and the ability to recover known biological information.

A targeted comparison in adult mouse kidney tissue revealed striking differences between the approaches [31]. While scRNA-seq using the DropSeq platform identified ten cell clusters, it failed to capture glomerular cell types, and one cluster was defined primarily by artifactual, dissociation-induced stress response genes. In contrast, snRNA-seq on all three platforms (sNuc-DropSeq, DroNc-seq, and 10X Chromium) captured a diverse array of kidney cell types not represented in the scRNA-seq dataset, including glomerular podocytes, mesangial cells, and endothelial cells. Notably, the snRNA-seq protocol yielded a 20-fold increase in podocyte representation compared to published scRNA-seq datasets (2.4% versus 0.12%, respectively) [31] [34].

Table 1: Performance Comparison of scRNA-seq vs snRNA-seq in Adult Mouse Kidney

| Parameter | scRNA-seq | snRNA-seq | Significance |
|---|---|---|---|
| Cell types captured | 10 clusters, missing glomerular types | Diverse types including podocytes, mesangial, endothelial | snRNA-seq reduces dissociation bias |
| Stress response genes | Present in one cluster | Not detected | snRNA-seq eliminates dissociation-induced stress |
| Podocyte yield | 0.12% | 2.4% | 20-fold improvement with snRNA-seq |
| Gene detection sensitivity | Equivalent | Equivalent | Comparable sensitivity |
| Compatibility with frozen tissue | Limited | Excellent | snRNA-seq works with archived samples |

Advantages and Limitations of Each Approach

scRNA-seq Strengths and Limitations

scRNA-seq provides a comprehensive view of the cellular transcriptome by capturing both cytoplasmic and nuclear RNA, making it particularly suitable for:

- Analysis of highly expressed genes where cytoplasmic RNA contributes significantly to the signal

- Cell types that dissociate easily and remain viable after processing

- Studies requiring immediate processing of fresh tissues

- Experiments where cytoplasmic transcripts are of primary interest

- Research questions benefiting from full-length transcript information [30]

However, scRNA-seq faces several limitations:

- Dissociation bias: Enzymatic and mechanical dissociation required for single-cell suspension preferentially selects certain cell types while damaging others, particularly fragile cells like neurons [33]

- Transcriptional stress responses: Dissociation procedures can induce artifactual stress response genes that confound biological interpretations [31]

- Limited sample compatibility: Generally requires fresh tissue processing, limiting use with archived samples [33]

- Underrepresentation of rare cell types: Specific populations may be lost during dissociation protocols [31]

snRNA-seq Strengths and Limitations

snRNA-seq offers distinct advantages for specific applications:

- Reduced dissociation bias: Nuclei are more resistant to physical stresses than whole cells, preserving fragile cell types [33]

- No stress response artifacts: Eliminates dissociation-induced transcriptional stress responses [31]

- Compatibility with frozen tissues: Enables analysis of biobanked and archived samples [31] [33]

- Access to difficult tissues: Particularly advantageous for brain, kidney, fat, and other tough-to-dissociate tissues [31] [33]

- Representation of rare cell types: Better captures vulnerable populations such as podocytes in kidney [34]

The limitations of snRNA-seq include:

- Loss of cytoplasmic RNA, potentially missing important transcripts

- Possible differences in RNA composition compared to whole cells

- Currently less established protocols and computational methods

- Potential underrepresentation of very small nuclei [31]

Table 2: Appropriate Use Cases for scRNA-seq vs snRNA-seq

| Research Scenario | Recommended Approach | Rationale |
|---|---|---|
| Fresh, easily dissociated tissues | scRNA-seq | Optimal for standard tissues with good dissociation characteristics |
| Frozen or archived samples | snRNA-seq | Only practical option for biobanked tissues |
| Brain tissue studies | snRNA-seq | Avoids neuronal damage during dissociation [33] |
| Rare cell type identification | snRNA-seq | Better representation of fragile populations [31] |
| Full-length transcript analysis | scRNA-seq (full-length protocols) | Requires protocols such as Smart-seq2 [30] |
| High-throughput cell mapping | Either (droplet-based) | Both approaches work with high-throughput platforms |
| Clinical samples with limited availability | snRNA-seq | Enables banking and batch processing |
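
The recommendations in Table 2 can be read as a short sequence of checks. The sketch below is one possible encoding, not a validated decision tool: the `SampleProfile` fields, the `recommend_approach` function, and the order in which the checks are applied are illustrative assumptions rather than part of any published protocol.

```python
from dataclasses import dataclass


@dataclass
class SampleProfile:
    """Hypothetical description of a sample; field names are illustrative."""
    frozen_or_archived: bool = False
    hard_to_dissociate: bool = False   # e.g. brain, kidney, fat
    fragile_rare_cells: bool = False   # e.g. podocytes, neurons
    needs_full_length: bool = False    # isoform or allelic analysis


def recommend_approach(sample: SampleProfile) -> str:
    """Suggest an approach using the scenarios listed in Table 2."""
    # Table 2 lists snRNA-seq as the only practical option for
    # frozen or archived tissue, so this check is applied first.
    if sample.frozen_or_archived:
        return "snRNA-seq"
    # Tissues or populations prone to dissociation bias favor nuclei.
    if sample.hard_to_dissociate or sample.fragile_rare_cells:
        return "snRNA-seq"
    # Full-length coverage requires a whole-cell, full-length protocol.
    if sample.needs_full_length:
        return "scRNA-seq (full-length protocol such as Smart-seq2)"
    # Fresh, easily dissociated tissue is the default scRNA-seq case.
    return "scRNA-seq"


print(recommend_approach(SampleProfile(frozen_or_archived=True)))
print(recommend_approach(SampleProfile(needs_full_length=True)))
```

Placing the sample constraints ahead of the analytical goal is a design choice of this sketch: a study that needs full-length transcripts from a hard-to-dissociate tissue still receives the snRNA-seq suggestion, and the trade-off has to be resolved by the investigator.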

Experimental Design and Protocol Considerations

Sample Preparation Methodologies

The critical differences between scRNA-seq and snRNA-seq begin at the sample preparation stage. For scRNA-seq, tissues undergo enzymatic dissociation using cocktails such as collagenase, trypsin, or tissue-specific enzymes, combined with mechanical disruption to create single-cell suspensions [30]. This process must be carefully optimized for each tissue type to balance yield against cellular stress and viability. Cells are then typically resuspended in appropriate buffers with viability often assessed using dye exclusion methods.

For snRNA-seq, the protocol involves mechanical homogenization of fresh or frozen tissue in hypotonic lysis buffers to release nuclei while preserving nuclear membrane integrity [31] [33]. Nuclei purification typically employs density gradient centrifugation (e.g., using sucrose or iodixanol gradients) or fluorescence-activated nucleus sorting (FANS) to remove cellular debris. The elimination of enzymatic treatment and the mechanical robustness of nuclei significantly reduce selection bias during sample preparation.

Single-Cell and Single-Nucleus Isolation Protocols

Cell and nucleus isolation strategies share some common platforms but require different optimization parameters. Droplet-based microfluidics systems such as 10X Genomics Chromium can be adapted for both applications, with specific reagent kits optimized for either whole cells or nuclei [31]. Plate-based methods using FACS also work for both, though nozzle sizes and sorting parameters differ. For nuclei, larger nozzle sizes (100-130 μm) are typically used to accommodate nuclear aggregates and avoid damage.

A key consideration is the assessment of input quality. For scRNA-seq, cell viability exceeding 80-90% is generally recommended, while for snRNA-seq, nuclei integrity and the absence of cytoplasmic contamination are crucial quality metrics. The optimal input concentration also varies, with nuclei often requiring higher loading concentrations than whole cells to achieve similar capture rates in droplet-based systems.
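
As a small worked example of the viability criterion, the sketch below computes percent viability from live and dead counts, as would be obtained from a dye exclusion assay, and compares it to a threshold. The function names and the default threshold of 80% (the lower end of the range quoted above) are assumptions made for illustration.

```python
def viability_percent(live_cells: int, dead_cells: int) -> float:
    """Percentage of counted cells that excluded the dye (live cells)."""
    total = live_cells + dead_cells
    if total == 0:
        raise ValueError("no cells counted")
    return 100.0 * live_cells / total


def passes_viability(live_cells: int, dead_cells: int,
                     min_percent: float = 80.0) -> bool:
    """Check a suspension against a minimum viability threshold."""
    return viability_percent(live_cells, dead_cells) >= min_percent


# 180 live and 20 dead cells counted in a hemocytometer field
print(viability_percent(180, 20))   # 90.0
print(passes_viability(150, 50))    # False: 75% is below the 80% default
```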

Decision Workflow for scRNA-seq vs snRNA-seq

Computational Analysis Considerations

Specialized Bioinformatics Pipelines

The computational analysis of scRNA-seq and snRNA-seq data shares many common steps but requires specific considerations for each data type. Standard analysis workflows include quality control, read mapping, gene expression quantification, normalization, dimensionality reduction, cell clustering, and differential expression analysis [17] [30]. However, snRNA-seq data typically exhibits a higher proportion of intronic reads compared to scRNA-seq, necessitating alignment strategies that properly assign these reads [32].
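
To make the intronic-read point concrete, the following sketch computes, for each barcode, the fraction of gene-assigned reads that fall in introns. The input format, a sequence of `(barcode, region)` pairs with the labels `"exonic"` and `"intronic"`, is a hypothetical simplification; in a real pipeline this information would be derived from the aligner's output.

```python
from collections import defaultdict


def intronic_fraction(read_assignments):
    """Map each barcode to the fraction of its gene-assigned reads that are intronic.

    `read_assignments` is an iterable of (barcode, region) pairs, where region
    is "exonic" or "intronic"; reads with any other label are ignored.
    """
    counts = defaultdict(lambda: {"exonic": 0, "intronic": 0})
    for barcode, region in read_assignments:
        if region in ("exonic", "intronic"):
            counts[barcode][region] += 1
    # Every barcode in `counts` has at least one read, so the
    # denominator is never zero.
    return {
        barcode: c["intronic"] / (c["exonic"] + c["intronic"])
        for barcode, c in counts.items()
    }


reads = [
    ("AAAC", "exonic"), ("AAAC", "intronic"),
    ("AAAC", "intronic"), ("AAAC", "intronic"),
    ("TTTG", "exonic"), ("TTTG", "intergenic"),
]
print(intronic_fraction(reads))  # {'AAAC': 0.75, 'TTTG': 0.0}
```

A quantification step that counts only exonic reads would discard three of the four reads from the first barcode in this toy input, which illustrates why the assignment strategy matters for nuclear data.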

Quality control metrics differ between the two approaches. For scRNA-seq, common QC filters remove cells with low unique molecular identifier (UMI) counts, few detected genes, or high mitochondrial read percentages (indicating apoptosis or broken cells) [35]. For snRNA-seq, mitochondrial reads are less informative, while measures of nuclear integrity and the ratio of intronic to exonic reads provide better quality assessment.
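
The scRNA-seq filters described above can be sketched as a simple per-barcode predicate. The `BarcodeStats` structure and the default thresholds are illustrative assumptions, not recommended values; appropriate cutoffs depend on tissue, platform, and sequencing depth and are usually chosen by inspecting the distributions in each dataset.

```python
from dataclasses import dataclass


@dataclass
class BarcodeStats:
    """Hypothetical per-barcode summary; field names are illustrative."""
    barcode: str
    umi_count: int
    genes_detected: int
    mito_umi_count: int


def passes_qc(stats: BarcodeStats,
              min_umis: int = 500,
              min_genes: int = 200,
              max_mito_fraction: float = 0.2) -> bool:
    """Apply the three filters from the text; thresholds are placeholders."""
    if stats.umi_count == 0:
        return False  # empty barcode; also avoids division by zero below
    if stats.umi_count < min_umis or stats.genes_detected < min_genes:
        return False
    mito_fraction = stats.mito_umi_count / stats.umi_count
    return mito_fraction <= max_mito_fraction


cells = [
    BarcodeStats("A", umi_count=5000, genes_detected=1800, mito_umi_count=250),
    BarcodeStats("B", umi_count=300, genes_detected=150, mito_umi_count=10),
    BarcodeStats("C", umi_count=4000, genes_detected=1500, mito_umi_count=1600),
]
kept = [c.barcode for c in cells if passes_qc(c)]
print(kept)  # ['A']
```

For snRNA-seq data, where the text notes that mitochondrial reads are less informative, the mitochondrial criterion in this sketch would be a natural place to substitute a metric based on the ratio of intronic to exonic reads.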

Advanced Analytical Approaches

Machine learning approaches, particularly autoencoders, have shown promise in addressing the high dimensionality, sparsity, and technical noise inherent in both scRNA-seq and snRNA-seq data [36]. Autoencoder-based tools such as scAEspy provide effective dimensionality reduction that captures non-linear gene-gene relationships, improving downstream clustering and visualization. These approaches can be particularly valuable for integrating multiple datasets and batch effect correction, which is essential when combining data from different experiments or platforms [36].

For trajectory inference and developmental studies, both scRNA-seq and snRNA-seq data can be analyzed using pseudotime algorithms, though the biological interpretations may differ due to the distinct RNA pools being sequenced. Studies such as the analysis of iPSC-derived cardiomyocytes demonstrate the power of these approaches for reconstructing differentiation trajectories [35].

Computational Analysis Workflow

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Essential Research Reagents and Platforms for Single-Cell/Nucleus RNA-seq

| Category | Specific Examples | Function and Application |
|---|---|---|
| Dissociation Reagents | Collagenase, Trypsin, Accutase, Tissue-specific enzyme cocktails | Enzymatic digestion of extracellular matrix for scRNA-seq sample preparation |
| Nuclei Isolation Kits | Sucrose gradient solutions, Iodixanol-based media, Commercial nuclei isolation kits | Purification of intact nuclei from fresh or frozen tissues for snRNA-seq |
| Microfluidic Platforms | 10X Genomics Chromium, Drop-seq, Seq-Well | High-throughput single-cell/nucleus capture and barcoding |
| Library Prep Kits | 10X 3' Gene Expression, SMART-Seq v4, Commercial snRNA-seq kits | Conversion of RNA to sequenceable libraries with cell/nucleus barcodes |
| Viability Assays | Trypan blue, Propidium iodide, Calcein AM | Assessment of cell viability prior to scRNA-seq |
| Nuclear Stains | DAPI, Hoechst, SYTOX compounds | Visualization and quantification of nuclei for quality assessment |