Statistical Fundamentals of Comparability Studies: A Comprehensive Guide for Pharmaceutical Researchers

Abstract

This article provides a comprehensive guide to the statistical fundamentals underpinning successful comparability studies in biopharmaceutical development. Written for researchers, scientists, and drug development professionals, it covers foundational concepts, appropriate methodological approaches, troubleshooting of common challenges, and validation of study outcomes. The content bridges regulatory guidance and practical application, covering statistical frameworks from hypothesis formulation and equivalence testing to regression methods and tiered, risk-based approaches, so that teams can design robust studies that demonstrate product comparability throughout the manufacturing lifecycle.

Laying the Groundwork: Core Principles and Regulatory Expectations for Comparability

Within pharmaceutical development and manufacturing, demonstrating comparability following process changes is a regulatory requirement critical for ensuring continuous supply of biological products. This technical guide elaborates on the core principle that comparability does not signify that pre-change and post-change products are identical, but rather that they are highly similar and that any differences have no adverse impact on the product's safety, identity, purity, or efficacy [1] [2]. Framed within a broader thesis on the statistical fundamentals of comparability research, this document provides researchers and drug development professionals with an in-depth examination of the regulatory framework, statistical methodologies, and experimental protocols that underpin a successful comparability exercise.

Regulatory agencies acknowledge that changes to biopharmaceutical manufacturing processes are inevitable for reasons of scaling, cost optimization, and enhancing product safety and efficacy [2]. The manufacturer is responsible for demonstrating that the product's critical quality attributes (CQAs) remain highly similar after such a change. This demonstration relies on a totality-of-evidence approach, which combines data from analytical testing and, where needed, non-clinical and clinical studies to provide assurance of product quality [1] [2].

The foundational statistical question in a comparability study is: "Are products manufactured in the post-change environment comparable to those in the pre-change environment?" [2] The answer is not a simple "yes" or "no," but a statistically rigorous evaluation that determines if the existing knowledge is sufficiently predictive to ensure that any differences in CQAs have no adverse impact upon the drug product's safety or efficacy [2].

The Regulatory and Statistical Framework

The "Highly Similar" Paradigm

The principle of comparability is well-established in major regulatory guidances. The U.S. Food and Drug Administration (FDA) has shown growing confidence in advanced analytical methods. In a significant shift, a 2025 draft guidance proposes that for well-characterized therapeutic protein products, comparative efficacy studies (CES) may no longer be routinely required if sufficient evidence of biosimilarity can be provided by comparative analytical assessments (CAA) and human pharmacokinetic (PK) studies [1]. This evolution underscores that a CAA is generally more sensitive than a CES to detect differences between two products, should any exist [1].

Similarly, the European Pharmacopoeia (Ph. Eur.) chapter 5.27, "Comparability of alternative analytical procedures," describes how the comparability of an analytical procedure may be demonstrated through equivalence testing, generating comparable data for the analytical procedure performance characteristics (APPCs) of the two procedures [3].

Formulating the Hypotheses and the Role of Confidence Intervals

Statistically, comparability is formally evaluated using a structured approach involving hypothesis testing [2]. For Tier 1 CQAs (those with the highest potential impact on clinical outcomes), the most widely used procedure is equivalence testing, which is advocated by the U.S. FDA [2].

The hypotheses for an equivalence test are formulated as:

- Null Hypothesis (H₀): The absolute difference between the pre-change (reference) and post-change (test) group means is greater than or equal to a pre-defined equivalence margin (δ). |μᵣ − μₜ| ≥ δ

- Alternative Hypothesis (H₁): The absolute difference between the means is less than the equivalence margin. |μᵣ − μₜ| < δ

The goal of the statistical test is to reject the null hypothesis in favor of the alternative, thereby concluding equivalence [2]. This evaluation can be done algebraically or visually through the relationship of confidence intervals to the equivalence margins [2]. A common approach is to use a two-sided 90% confidence interval for the difference between means, which corresponds to the Two One-Sided Tests (TOST) procedure at a 5% significance level [2]. If the entire confidence interval falls within the pre-specified equivalence margins, comparability is demonstrated for that attribute.

A Structured, Risk-Based Approach to Comparability

A successful comparability study requires a systematic, risk-based strategy that prioritizes attributes based on their potential impact on product quality and clinical outcomes.

The Tiered Approach to Critical Quality Attributes (CQAs)

CQAs should be categorized into tiers that determine the appropriate statistical approach and acceptance criteria for each [2]. The table below summarizes this tiered approach.

Table 1: Tiered Approach for Critical Quality Attributes in Comparability Studies

| Tier | Potential Impact on Quality & Clinical Outcome | Objective of Comparison | Recommended Statistical Approach |
|---|---|---|---|
| Tier 1 | High | To conclude equivalence with high confidence | Equivalence testing (e.g., TOST) using a pre-defined equivalence margin (δ) based on clinical and analytical knowledge. |
| Tier 2 | Medium | To ensure the two products are sufficiently similar | Quality range approach (e.g., ± 3 standard deviations) or statistical process control (SPC) charts. |
| Tier 3 | Low | To display profiles and show they are comparable | Visual comparison of graphical displays (e.g., chromatographic profiles, glycan maps). |
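A minimal sketch of the Tier 2 quality range approach follows, assuming the range is the reference-lot mean ± k·SD with k = 3 (the table's example) and that post-change lots are summarized by the share falling inside it. The acceptance rule itself (for example, all lots or a pre-specified proportion) must be defined in advance and is not given here; the lot values are hypothetical.

```python
from statistics import mean, stdev


def quality_range(ref_lots, k=3.0):
    """Quality range from pre-change (reference) lots: mean +/- k * sample SD."""
    m, s = mean(ref_lots), stdev(ref_lots)
    return m - k * s, m + k * s


def fraction_within(test_lots, qrange):
    """Share of post-change (test) lots whose value falls inside the quality range."""
    lo, hi = qrange
    return sum(lo <= v <= hi for v in test_lots) / len(test_lots)


ref_lots = [98.0, 100.0, 102.0]    # hypothetical pre-change lot results
test_lots = [99.0, 101.0, 107.0]   # hypothetical post-change lot results
qr = quality_range(ref_lots)       # (94.0, 106.0)
print(qr, fraction_within(test_lots, qr))
```

With only a handful of reference lots the SD, and therefore the range, is estimated imprecisely, which is one reason this approach is reserved for lower-risk attributes.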

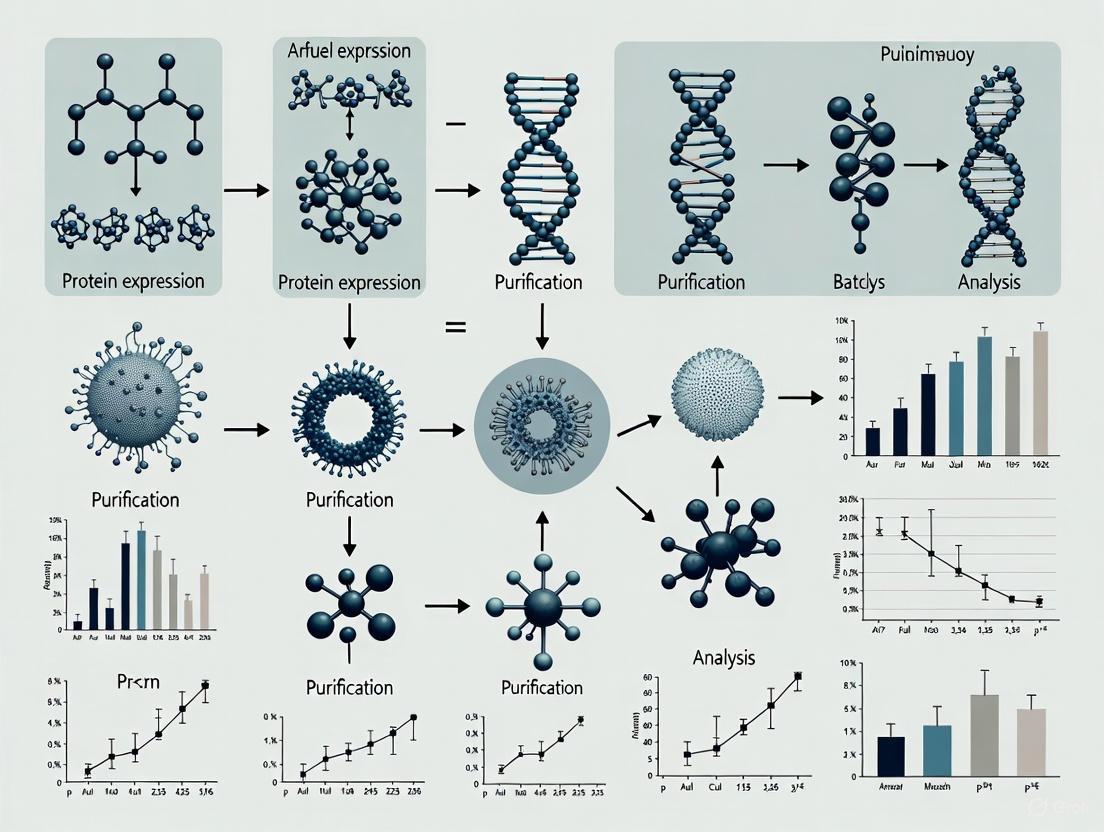

Experimental Workflow for a Comparability Study

The journey from a process change to a successful comparability conclusion follows a logical sequence of activities. The following diagram outlines the key stages in this workflow, from initial problem definition through the iterative process of data collection and analysis.

Key Statistical Methodologies and Protocols

Tier 1 Protocol: Equivalence Testing with Two One-Sided Tests (TOST)

For Tier 1 CQAs, equivalence is typically demonstrated using the TOST procedure [2]. This method effectively tests the composite null hypothesis by performing two separate one-sided tests.

- Assumptions: The measurements for both the Reference (pre-change) and Test (post-change) products are independent and follow a normal distribution. The variances of the two groups may be equal or unequal.

- Equivalence Margin (δ): This is a critical parameter that must be defined a priori based on process and product knowledge, and clinical relevance. It represents the largest difference that is considered clinically and analytically unimportant.

- Procedure:

- Calculate the 100(1−2α)% confidence interval for the difference in means (μᵣ − μₜ). With a significance level α = 0.05, this is a 90% confidence interval.

- Compare this confidence interval to the equivalence margin [-δ, +δ].

- Decision Rule: If the 100(1−2α)% confidence interval lies entirely within the equivalence margin [−δ, +δ], the null hypothesis is rejected and equivalence is concluded for that CQA.

Table 2: Visual Interpretation of TOST Confidence Intervals

| Confidence Interval Scenario (difference μᵣ − μₜ) | Statistical Conclusion | Practical Interpretation |
|---|---|---|
| CI lies entirely within [−δ, +δ] | Equivalence demonstrated | The entire confidence interval (CI) is within the equivalence margins. The difference is not practically significant. |
| CI extends below −δ only | Equivalence not demonstrated | The Test mean may exceed the Reference mean by more than δ. |
| CI extends above +δ only | Equivalence not demonstrated | The Test mean may fall below the Reference mean by more than δ. |
| CI extends beyond both −δ and +δ | Equivalence not demonstrated | The CI is too wide to support a conclusion. The result is inconclusive. |

The following diagram illustrates the statistical logic and decision-making process of the TOST procedure.

Method Comparability Protocol: Passing-Bablok Regression

When comparing two analytical methods as part of a comparability study (e.g., when implementing an alternative procedure), Passing-Bablok regression is a robust non-parametric method preferred for its insensitivity to outliers and because it does not assume measurement errors are normally distributed [2].

- Assumptions: The two measurement methods are positively correlated and exhibit a linear relationship.

- Objective: To estimate the intercept (constant bias) and the slope (proportional bias) between the two methods.

- Procedure:

- Measure a sufficient number of samples covering the assay range using both the reference and the alternative method.

- Calculate the slope and intercept using the Passing-Bablok algorithm: the slope is a shifted median of all pairwise slopes, and the intercept is the median of the values yᵢ − b·xᵢ.

- Construct confidence intervals for both the slope and intercept.

- Decision Rule for Comparability: The two methods are considered comparable if the confidence interval for the intercept contains 0 (indicating no constant bias) and the confidence interval for the slope contains 1 (indicating no proportional bias) [2]. For example, a regression line of y = −3.0 + 1.00x with 95% CIs of [−3.8, −2.1] for the intercept and [0.98, 1.01] for the slope shows no proportional bias, because the slope CI contains 1, but a constant bias of about −3 units, because the intercept CI does not contain 0 [2]. Under this decision rule, the two methods would therefore not be judged to agree.
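The procedure and decision rule above can be sketched in Python as follows. This is a simplified illustration, not a validated implementation: pairs with tied reference values are skipped rather than handled as in the full published algorithm, and the slope confidence limits use the rank-based normal approximation. The function names are hypothetical.

```python
import math
from statistics import NormalDist, median


def passing_bablok(x, y, alpha=0.05):
    """Simplified Passing-Bablok fit of y (alternative method) on x (reference method).

    Returns slope, intercept, slope CI, intercept CI. Pairs with tied x values are
    skipped (a simplification of the full algorithm).
    """
    n = len(x)
    slopes = []
    for i in range(n):
        for j in range(i + 1, n):
            dx = x[j] - x[i]
            if dx == 0:
                continue
            s = (y[j] - y[i]) / dx
            if s != -1:                    # slopes of exactly -1 are excluded
                slopes.append(s)
    slopes.sort()
    N = len(slopes)
    K = sum(1 for s in slopes if s < -1)   # offset that "shifts" the median
    if N % 2:
        b = slopes[(N + 1) // 2 + K - 1]
    else:
        b = 0.5 * (slopes[N // 2 + K - 1] + slopes[N // 2 + K])
    a = median([yi - b * xi for xi, yi in zip(x, y)])

    # Rank-based confidence limits for the slope (normal approximation)
    C = NormalDist().inv_cdf(1 - alpha / 2) * math.sqrt(n * (n - 1) * (2 * n + 5) / 18)
    M1 = round((N - C) / 2)
    M2 = N - M1 + 1
    if M1 + K - 1 < 0 or M2 + K - 1 >= N:
        raise ValueError("too few samples for a slope confidence interval")
    b_lo, b_hi = slopes[M1 + K - 1], slopes[M2 + K - 1]
    a_lo = median([yi - b_hi * xi for xi, yi in zip(x, y)])
    a_hi = median([yi - b_lo * xi for xi, yi in zip(x, y)])
    return b, a, (b_lo, b_hi), (a_lo, a_hi)


def methods_comparable(slope_ci, intercept_ci):
    """Decision rule from the text: intercept CI contains 0 and slope CI contains 1."""
    return intercept_ci[0] <= 0 <= intercept_ci[1] and slope_ci[0] <= 1 <= slope_ci[1]


x = [float(v) for v in range(1, 11)]   # hypothetical reference-method results
y = [2.0 + 1.5 * v for v in x]         # hypothetical alternative method with both biases
slope, intercept, slope_ci, intercept_ci = passing_bablok(x, y)
print(slope, intercept, methods_comparable(slope_ci, intercept_ci))
```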

The Scientist's Toolkit: Essential Reagents and Materials

A robust comparability study relies on high-quality, well-characterized materials and analytical tools. The following table details key research reagent solutions essential for generating reliable data.

Table 3: Essential Research Reagent Solutions for Comparability Studies

| Item / Solution | Function in Comparability Studies |
|---|---|
| Reference Standard | A well-characterized material (e.g., drug substance or product) from the pre-change process that serves as the benchmark for all comparative testing. Its quality attributes are the reference values. |
| Test Article | The material produced by the post-change manufacturing process. Its quality attributes are directly compared to those of the Reference Standard. |
| Cell Bank System | For biologics, a qualified Master Cell Bank and Working Cell Bank ensure that any observed differences are due to the process change and not to genetic drift or instability of the production cell line. |
| Critical Reagents | These include antibodies, enzymes, substrates, and ligands used in identity, potency, and impurity assays (e.g., ELISA, cell-based bioassays). Their quality and consistency are vital for assay performance. |
| Reference Standards for Analytical Procedures | Separate from the product reference standard, these are qualified standards used to calibrate and control the performance of the analytical methods themselves (e.g., a standard for size exclusion chromatography). |
| Process-Specific Resins & Buffers | The specific chromatography resins, filtration membranes, and cell culture media components used in the manufacturing process. Consistency in these materials is crucial for a valid comparison. |

Defining comparability as "highly similar" rather than "identical" is a nuanced but powerful concept that enables biopharmaceutical innovation and improvement while safeguarding public health. This guide has detailed the statistical fundamentals—from the risk-based tiered system and the formulation of equivalence hypotheses to the application of TOST and Passing-Bablok regression—that provide the rigorous evidence base required for this demonstration. The consistent thread is a totality-of-evidence approach, built on a foundation of robust experimental design, appropriate statistical analysis, and transparent reporting. As regulatory science evolves, with increasing reliance on advanced analytical characterization [1], the statistical principles of comparability will remain the bedrock upon which process changes are justified, ensuring that patients continue to receive safe and efficacious medicines.

In the biopharmaceutical industry, manufacturing changes are inevitable due to the need for production scaling, cost optimization, and evolving regulatory requirements. The central research question—"Are products manufactured in the post-change environment comparable to those in the pre-change environment?"—forms the cornerstone of a rigorous scientific and statistical demonstration required by regulatory agencies worldwide [2]. Demonstrating comparability does not mean the products must be identical, but rather that they are highly similar and that any differences in quality attributes have no adverse impact upon safety or efficacy of the drug product [2] [4]. This assessment is founded on a totality-of-evidence strategy that integrates analytical testing, bioassays, and sometimes preclinical or clinical studies [5].

The statistical fundamentals of comparability provide the framework for this demonstration, moving beyond simple "yes" or "no" conclusions to a more nuanced evaluation of whether the evidence is sufficiently strong to claim comparability within a defined confidence level [2]. Properly executed, a comparability study ensures that process improvements and changes can be implemented without compromising product quality, thereby enabling manufacturers to innovate and improve processes while maintaining consistent product quality for patients.

Regulatory Framework and Guidance

Regulatory agencies acknowledge that product and process changes are necessary for the biotech industry to evolve, placing the responsibility on manufacturers to demonstrate that the safety, identity, purity, and potency of the biological product remain unaffected by manufacturing changes [2] [5]. The FDA guidance outlines a systematic approach where determinations of product comparability may be based on chemical, physical, and biological assays, and in some cases, other non-clinical data [5]. If a sponsor can demonstrate comparability through these assessments, additional clinical safety and/or efficacy trials with the new product will generally not be needed [5].

The ICH Q5E guideline specifically addresses comparability for biotechnological/biological products and emphasizes that the existing knowledge must be "sufficiently predictive to ensure that any differences in quality attributes have no adverse impact upon safety or efficacy of the drug product" [4]. This principle is applied throughout the product lifecycle, from early development through commercial manufacturing, with a phase-appropriate approach that recognizes the evolving understanding of the product and its critical quality attributes [4].

Risk-Based Approach to Acceptance Criteria

Setting appropriate acceptance criteria is considered one of the most crucial steps in equivalence testing [6] [7]. A risk-based approach should be employed where higher risks allow only small practical differences, and lower risks allow larger practical differences [6]. Scientific knowledge, product experience, and clinical relevance should be evaluated when justifying the risk, with consideration for the potential impact on process capability and out-of-specification (OOS) rates [6].

Table 1: Risk-Based Acceptance Criteria for Equivalence Testing

| Risk Level | Acceptable Difference Range | Considerations |
|---|---|---|
| High | 5-10% | Small practical differences allowed; potential high impact on safety/efficacy |
| Medium | 11-25% | Moderate differences acceptable with proper justification |
| Low | 26-50% | Larger differences acceptable for lower risk attributes |

The United States Pharmacopeia (USP) <1033> emphasizes that acceptance criteria should be chosen to "minimize the risks inherent in making decisions from bioassay measurements and to be reasonable in terms of the capability of the art" [6]. When existing product specifications are available, acceptance criteria can be justified based on the risk that measurements may fall outside of these specifications.
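To make the link between an acceptance criterion and out-of-specification risk concrete, the sketch below computes the expected OOS rate of a normally distributed attribute before and after a mean shift equal to the margin. It assumes normality and a known process SD and, purely as an illustration, reads the 10% "High" entry of the risk-based acceptance criteria table as 10% of the specification range (USL − LSL); the specification limits, mean, and SD are hypothetical.

```python
from statistics import NormalDist


def oos_rate(mean, sd, lsl, usl):
    """Probability that a normally distributed result falls outside [lsl, usl]."""
    dist = NormalDist(mean, sd)
    return dist.cdf(lsl) + (1.0 - dist.cdf(usl))


lsl, usl = 90.0, 110.0        # hypothetical specification limits
sd = 2.0                      # hypothetical process standard deviation
shift = 0.10 * (usl - lsl)    # assumption: margin = 10% of the specification range
print(oos_rate(100.0, sd, lsl, usl))          # process centered in the specification
print(oos_rate(100.0 + shift, sd, lsl, usl))  # process shifted by the full margin
```

Under these assumptions the OOS rate rises from about 6 in 10 million to about 3 in 100,000, the kind of impact a risk-based justification of δ would weigh.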

Statistical Hypothesis Formulation

The statistical evaluation of comparability formally answers the research question through a structured hypothesis-testing approach [2]. This involves formulating a null hypothesis (H₀), which proposes that the pre- and post-change products differ by a practically meaningful amount, and an alternative hypothesis (H₁ or Hₐ), which posits that the products are comparable [2].

Equivalence Testing Framework

For critical quality attributes (CQAs), the most widely used procedure for statistically evaluating equivalence is the Two One-Sided Tests (TOST) approach, which is advocated by the United States FDA [2] [6]. The TOST approach establishes a practical equivalence margin (δ) within which differences are considered not clinically meaningful.

For a given equivalence margin, δ (>0), the equivalence hypotheses can be stated as follows:

- H₀: |μᵣ - μₜ| ≥ δ (The groups differ by more than a tolerably small amount)

- H₁: |μᵣ - μₜ| < δ (The groups differ by less than that amount, i.e., they are practically similar) [2]

The null hypothesis (H₀) is decomposed into two separate sub-null hypotheses:

- H₀₁: μᵣ - μₜ ≥ δ

- H₀₂: μᵣ - μₜ ≤ -δ

These two components give rise to the 'two one-sided tests' that form the basis of the TOST procedure [2]. The following diagram illustrates the TOST approach and decision framework:

Contrast with Traditional Significance Testing

It is crucial to distinguish equivalence testing from traditional significance testing [6]. Significance tests, such as t-tests, seek to establish a difference from some target value and are not appropriate for demonstrating comparability [6] [8]. A significance test with a p-value > 0.05 indicates there is insufficient evidence to conclude the parameter is different from the target value, but this is not the same as concluding the parameter conforms to its target value [6]. Equivalence testing specifically tests whether differences are within a pre-defined acceptable margin, making it the statistically appropriate approach for comparability studies [6].
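The distinction can be illustrated with two hypothetical summary results. For brevity this sketch uses a large-sample normal (z) approximation rather than the t distribution: an imprecise estimate gives a non-significant difference test yet fails equivalence, while a precise estimate gives a statistically significant difference that is nonetheless within the margin.

```python
from statistics import NormalDist


def difference_and_equivalence(diff, se, delta, alpha=0.05):
    """Two-sided z-test of 'no difference' versus the CI form of TOST (normal approximation)."""
    z = NormalDist()
    p_difference = 2 * (1 - z.cdf(abs(diff) / se))   # traditional significance test
    q = z.inv_cdf(1 - alpha)                          # 90% CI when alpha = 0.05
    lower, upper = diff - q * se, diff + q * se
    return p_difference, (lower, upper), (-delta < lower and upper < delta)


# Imprecise estimate: p > 0.05 (no significant difference), yet the CI exceeds +delta.
print(difference_and_equivalence(diff=0.8, se=0.6, delta=1.0))
# Precise estimate: p < 0.05 (statistically different), yet the CI lies inside +/-delta.
print(difference_and_equivalence(diff=0.1, se=0.05, delta=1.0))
```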

Study Design and Planning Considerations

A well-designed comparability study is essential for generating meaningful, defensible results. The quality of the method comparison study determines the quality of the results and the validity of the conclusions [8]. The key to a successful method comparison is therefore a well-designed and carefully planned experiment [8].

Sample Selection and Sizing

Proper sample selection is critical for a meaningful comparability assessment. The following considerations should be addressed:

- Sample Size: At least 40 and preferably 100 patient samples should be used to compare two methods [8]. Larger sample sizes are preferable to identify unexpected errors due to interferences or sample matrix effects.

- Measurement Range: Samples should cover the entire clinically meaningful measurement range [8].

- Replication: Whenever possible, perform duplicate measurements with both the current and the new method to minimize the effect of random variation [8].

- Timing: Analyze samples within their stability period (preferably within 2 hours) and on the day of blood sampling [8].

- Study Duration: Measure samples over several days (at least 5) and multiple runs to mimic real-world situations [8].

For early phase development, when representative batches are limited, it is acceptable to use single batches of pre- and post-change material to establish biophysical characteristics using platform methods [4]. As development continues into Phase 3, extended characterization increases in complexity to include more molecule-specific methods and head-to-head testing of multiple pre- and post-change batches, ideally following the gold standard format: 3 pre-change vs. 3 post-change [4].

Analytical Testing Strategies

A comprehensive comparability package typically comprises several complementary studies:

- Extended Characterization: Provides orthogonal assessment with finer level of detail beyond release methods [4]

- Forced Degradation Studies: Reveals degradation pathways not observed in real-time stability studies [4]

- Stability Studies: Both real-time and accelerated to compare degradation profiles [4]

- Statistical Analysis: Analysis of historical release data [4]

Table 2: Example Extended Characterization Testing Panel for Monoclonal Antibodies

| Attribute Category | Specific Analytical Methods | Purpose |
|---|---|---|
| Structural Characterization | LC-MS, ESI-TOF MS, SEC-MALS, CD, AUC | Confirm primary structure, higher order structure, and molecular weight |
| Charge Variants | IEC, cIEF, CE-SDS | Assess charge heterogeneity and post-translational modifications |
| Purity and Impurities | SEC, rCE-SDS, HP-RPC | Quantify product-related substances and impurities |
| Potency | Cell-based assays, binding assays (SPR) | Demonstrate biological activity and mechanism of action |

Table 3: Types of Forced Degradation Stress Conditions

| Stress Condition | Typical Parameters | Assessment Focus |
|---|---|---|
| Thermal Stress | Temperatures above the storage condition (e.g., 25°C and 40°C, compared with 5°C) | Structural stability and degradation products |
| pH Variation | Various pH conditions (e.g., pH 3-9) | Acid/base-induced degradation |
| Oxidative Stress | Exposure to oxidizing agents (e.g., hydrogen peroxide) | Oxidation-sensitive residues |
| Light Exposure | Specific light conditions per ICH guidelines | Photodegradation products |
| Mechanical Stress | Shaking, agitation, freezing/thawing | Aggregation and particle formation |

Statistical Methodologies and Data Analysis

Method Comparison Approaches

Three key statistical methods are widely used for method comparison in comparability studies:

- Passing-Bablok Regression: A nonparametric method robust against outliers that does not assume measurement error is normally distributed [2] [8]

- Deming Regression: Accounts for measurement error in both variables [8]

- Bland-Altman Analysis: Plots differences between methods against their averages to assess agreement [8]

Passing-Bablok regression is particularly valuable for comparing analytical methods expected to produce the same measurement values [2]. The intercept represents the constant bias between the two methods, while the slope indicates the proportional bias [2]. The method assumes that the measurements are positively correlated and linearly related, so both assumptions should be checked before it is applied [2].
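Deming regression, listed above, has a closed-form slope once the ratio of the two methods' measurement-error variances is fixed. The sketch below follows the standard textbook formula; the ratio `lam` (error variance of y over error variance of x) is an assumption the analyst must justify, for example from replicate measurements, and `lam = 1` gives orthogonal regression. The data are hypothetical.

```python
import math
from statistics import mean


def deming(x, y, lam=1.0):
    """Deming regression of y (comparison method) on x (reference method).

    lam is the assumed ratio var(error in y) / var(error in x).
    Returns (intercept, slope).
    """
    n = len(x)
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x) / (n - 1)
    syy = sum((yi - my) ** 2 for yi in y) / (n - 1)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / (n - 1)
    slope = (syy - lam * sxx + math.sqrt((syy - lam * sxx) ** 2 + 4 * lam * sxy ** 2)) / (2 * sxy)
    return my - slope * mx, slope


x = [1.0, 2.0, 3.0, 4.0, 5.0]
print(deming(x, [3.0, 5.0, 7.0, 9.0, 11.0]))   # exact line y = 1 + 2x
```

Unlike ordinary least squares, which attributes all error to y, this fit lets both methods carry measurement error, which is why it is preferred when neither method is error-free.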

Graphical Data Analysis

Visual presentation of data is an essential first step in data analysis to ensure outliers and extreme values are detected [8]. Two primary graphical methods are employed:

- Scatter Plots: Describe variability in paired measurements throughout the range of measured values, with each pair represented by a point defined by the reference method (x-axis) against the comparison method (y-axis) [8]

- Difference Plots (Bland-Altman Plots): Describe agreement between two measurement methods by plotting differences, ratios, or percentages between methods on the y-axis against the average of methods on the x-axis [8]
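A difference plot is usually summarized by the mean difference (bias) and the 95% limits of agreement, conventionally bias ± 1.96 SD of the differences, which assumes roughly normal differences. The sketch below computes the plotted points and these summaries; it takes differences as comparison minus reference, matching the axis convention of the scatter plot described above, and the paired values are hypothetical.

```python
from statistics import mean, stdev


def bland_altman(reference, comparison):
    """Return averages (x-axis), differences (y-axis), bias, and 95% limits of agreement."""
    averages = [(r + c) / 2 for r, c in zip(reference, comparison)]
    diffs = [c - r for r, c in zip(reference, comparison)]
    bias, sd = mean(diffs), stdev(diffs)
    return averages, diffs, bias, (bias - 1.96 * sd, bias + 1.96 * sd)


reference = [10.0, 20.0, 30.0, 40.0]    # hypothetical reference-method results
comparison = [11.0, 19.0, 32.0, 41.0]   # hypothetical comparison-method results
_, _, bias, limits = bland_altman(reference, comparison)
print(bias, limits)
```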

The following workflow outlines the key stages in designing and executing a comprehensive comparability study:

Essential Research Reagents and Materials

Successful comparability studies require carefully selected reagents and materials to ensure reliable, reproducible results. The following table outlines key research reagent solutions and their functions in comparability assessments:

Table 4: Essential Research Reagent Solutions for Comparability Studies

| Reagent/Material | Function/Purpose | Key Considerations |
|---|---|---|
| Reference Standard | Serves as benchmark for quality attribute assessment | Should be fully characterized and representative of product [5] |
| Qualified Cell Banks | Ensure consistent production of biopharmaceuticals | Comprehensive characterization and stability data required |
| Characterization Assays | Orthogonal methods for structural and functional assessment | LC-MS, SEC-MALS, CD, SPR provide complementary information [4] |
| Biological Activity Assays | Measure pharmacological activity and potency | Cell-based assays, binding assays reflect mechanism of action [4] [5] |
| Forced Degradation Reagents | Indicate stability and degradation pathways | Hydrogen peroxide (oxidation), buffers (pH stress) [4] |
| Process-Related Impurity Assays | Detect residuals from manufacturing process | Host cell proteins, DNA, chromatography ligands, antibiotics |

Interpretation and Decision-Making

The final comparability assessment requires integration of all data sources through a totality-of-evidence approach [2] [5]. The conclusion is not necessarily a simple "yes" or "no" but may fall into an uncomfortable "don't know" region where the information isn't strong enough, given the level of confidence, to definitively claim comparability [2].

When unexpected results emerge from extended characterization and forced degradation studies, learning and communicating as much as possible about the molecular characterization and degradation patterns can help teams prepare for regulatory scrutiny [4]. A strong comparability package will leave regulators with confidence in the product and the company, paving the way for new drug approvals and future endeavors [4].

The ultimate goal of comparability assessment is to demonstrate that control is maintained in each version of the process, ensuring consistent delivery of high-quality product to patients throughout the product lifecycle [4].

In the biopharmaceutical industry, process changes are inevitable throughout a product's lifecycle. Regulatory agencies require evidence that products manufactured post-change are comparable to pre-change products in terms of quality, safety, and efficacy [2] [9]. Hypothesis testing provides the formal statistical framework for this demonstration, moving beyond simple "yes" or "no" conclusions to a more nuanced understanding that includes a "don't know" zone of uncertainty [2]. This formal procedure allows researchers to investigate ideas about the world using statistics by weighing evidence between competing claims [10]. In comparability studies, this structured approach to hypotheses transforms subjective assessment into an objective, statistically-defensible conclusion that meets regulatory standards while managing risk appropriately.

Fundamental Concepts of Null and Alternative Hypotheses

Definitions and Roles

The foundation of hypothesis testing rests on two complementary statements about a population parameter:

Null Hypothesis (H₀): This represents a presumption of status quo, no effect, or no difference [10] [11]. In the context of comparability, it asserts that pre-change and post-change processes are not comparable. It often includes an equality symbol (=, ≤, or ≥) and is never "proven" – it can only be rejected or not rejected based on evidence [10] [12].

Alternative Hypothesis (H₁ or Hₐ): This is the researcher's claim, typically representing an effect, difference, or relationship [10] [13]. For comparability studies, this generally states that the processes are comparable. It contains an inequality symbol (≠, <, or >) and is what researchers aim to support [11] [14].

These hypotheses are mutually exclusive and exhaustive – one must be true, and they cover all possible outcomes [10]. The alternative hypothesis can take different forms depending on the research question, leading to different types of tests as shown in Table 1.

Table 1: Types of Alternative Hypotheses and Corresponding Tests

| Research Question | Null Hypothesis (H₀) | Alternative Hypothesis (H₁) | Test Type |
|---|---|---|---|
| Difference | μ₁ = μ₂ | μ₁ ≠ μ₂ | Two-tailed |
| Non-inferiority (lower values better, margin δ) | μ₁ − μ₂ ≥ δ | μ₁ − μ₂ < δ | One-tailed |
| Equivalence | \|μ₁ − μ₂\| ≥ δ | \|μ₁ − μ₂\| < δ | TOST |

Mathematical Symbolization

The mathematical representation of hypotheses follows specific conventions. H₀ always contains an equality symbol (=, ≥, or ≤), while H₁ never contains an equality symbol (≠, <, or >) [11] [14]. For example:

- Two-tailed test: H₀: μ = 100 vs. H₁: μ ≠ 100

- Left-tailed test: H₀: μ ≥ 100 vs. H₁: μ < 100

- Right-tailed test: H₀: μ ≤ 100 vs. H₁: μ > 100

Some researchers write H₀ with = even when H₁ uses > or <. This practice is statistically acceptable because the decision is always about rejecting or not rejecting H₀ [11].

The Statistical Testing Framework and the "Don't Know" Zone

The Decision Matrix and Statistical Errors

In hypothesis testing, sample data is evaluated to make a decision about the population. Since this inference is based on probability, two types of errors can occur as shown in Table 2.

Table 2: Types of Statistical Errors in Hypothesis Testing

| Reality | Decision: Fail to Reject H₀ | Decision: Reject H₀ |
|---|---|---|
| H₀ is True | Correct Decision | Type I Error (False Positive) |
| H₀ is False | Type II Error (False Negative) | Correct Decision |

A Type I error (α) occurs when a true null hypothesis is incorrectly rejected, while a Type II error (β) occurs when a false null hypothesis is not rejected [15] [12]. The power of a test (1 − β) is the probability of correctly rejecting a false null hypothesis [12]. Conventionally, α is set at 0.05 (5%) and β at 0.10-0.20 (10-20%), giving power of 80-90% [15]. In an equivalence test, where H₀ states that the products differ by at least δ, a Type I error means concluding comparability when the products are in fact not comparable.
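How α, power, and sample size interact in a comparability setting can be sketched for TOST with two equal-sized groups. The sketch assumes the true mean difference is zero, a known common SD σ, and a normal approximation (a t-based calculation gives somewhat larger sample sizes). Under these assumptions, power = 2Φ(δ/SE − z₁₋α) − 1 with SE = σ√(2/n), floored at zero. The function names are hypothetical.

```python
import math
from statistics import NormalDist


def tost_power(n_per_group, sigma, delta, alpha=0.05):
    """Approximate TOST power when the true difference is 0 (normal approximation)."""
    z = NormalDist()
    se = sigma * math.sqrt(2 / n_per_group)
    return max(0.0, 2 * z.cdf(delta / se - z.inv_cdf(1 - alpha)) - 1)


def n_for_power(target, sigma, delta, alpha=0.05):
    """Smallest per-group n whose approximate power reaches the target."""
    n = 2
    while tost_power(n, sigma, delta, alpha) < target:
        n += 1
    return n


print(tost_power(3, sigma=1.0, delta=1.0))      # 0.0: the 90% CI is always wider than +/-delta
print(n_for_power(0.80, sigma=1.0, delta=1.0))  # 18 per group under these assumptions
```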

Figure 1: Hypothesis Testing Decision Pathways and Error Types

The "Don't Know" Zone Explained

The "don't know" zone represents the uncomfortable middle ground where the evidence is insufficient to conclude either that the products are comparable or that they are not [2]. In this zone no conclusion can be drawn, and more data or a better study design is needed. For comparability studies, this means that when the answer isn't definitively "yes" to comparability, it doesn't automatically mean "no"; it may mean "we don't know based on current evidence" [2]. This occurs when:

- Sample sizes are too small to detect meaningful differences

- Measurement variability is too high to draw precise conclusions

- Confidence intervals are too wide to determine if equivalence margins are met

- Statistical power is insufficient to distinguish chance from real effects

This concept is particularly relevant for comparability studies where the consequence of a false conclusion can have significant regulatory and safety implications.
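The "don't know" zone can be made explicit with a three-way reading of the 100(1−2α)% confidence interval for μᵣ − μₜ against the margin δ. This is a sketch of one possible convention, not a rule taken from the cited guidance: an interval entirely inside (−δ, δ) supports comparability, an interval entirely at or beyond a margin indicates a difference of at least δ, and an interval straddling a margin is inconclusive.

```python
def classify_ci(lower, upper, delta):
    """Three-way reading of a CI for mu_R - mu_T against the margin (-delta, +delta)."""
    if lower > upper:
        raise ValueError("lower must not exceed upper")
    if -delta < lower and upper < delta:
        return "comparable"        # H0 rejected: |difference| < delta with confidence
    if upper <= -delta or lower >= delta:
        return "not comparable"    # whole interval at or beyond a margin
    return "don't know"            # interval straddles a margin: more data or less noise needed


print(classify_ci(-0.4, 0.6, 1.0))   # comparable
print(classify_ci(-1.5, 0.5, 1.0))   # don't know
print(classify_ci(1.2, 2.0, 1.0))    # not comparable
```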

Application to Comparability Studies

Formulating Comparability Hypotheses

In comparability studies for biopharmaceuticals, the research question is: "Are products manufactured in the post-change environment comparable to those in the pre-change environment?" [2]. The hypotheses are formulated specifically to test this:

For equivalence testing using the Two One-Sided Tests (TOST) approach, which is widely used for Tier 1 Critical Quality Attributes (CQAs) [2]:

H₀: |μᵣ - μₜ| ≥ δ (the groups differ by at least the tolerable amount δ)

H₁: |μᵣ - μₜ| < δ (the groups differ by less than δ, i.e., they are practically equivalent)

Here, μᵣ represents the mean of the reference (pre-change) product, μₜ represents the mean of the test (post-change) product, and δ is the equivalence margin [2]. The null hypothesis posits that the absolute difference between means is at least as large as the equivalence margin, while the alternative states that it is smaller, i.e., the products are equivalent within the margin.

Experimental Protocols for Comparability Testing

Tier 1 CQAs: Equivalence Testing (TOST)

For Critical Quality Attributes with potential impact on safety and efficacy, the US FDA recommends the Two One-Sided Tests (TOST) approach [2]:

- Define equivalence margin (δ): Based on scientific justification, clinical experience, and regulatory input

- Decompose null hypothesis: H₀₁: μᵣ - μₜ ≥ δ and H₀₂: μᵣ - μₜ ≤ -δ

- Perform two one-sided t-tests: Test H₀₁ against H₁₁: μᵣ - μₜ < δ and H₀₂ against H₁₂: μᵣ - μₜ > -δ

- Establish equivalence: If both tests reject their respective null hypotheses at significance level α, conclude equivalence

- Use confidence intervals: A 100(1-2α)% confidence interval for the difference should lie entirely within (-δ, δ)
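
The steps above can be sketched in Python as follows. This is a minimal illustration, assuming independent, normally distributed batch results with equal variances in both groups (pooled-variance t statistic); the function name `tost_two_sample` is hypothetical and SciPy is assumed to be available. A Welch-type standard error may be preferable when the two variances clearly differ.

```python
import math
import statistics

from scipy import stats


def tost_two_sample(ref, test, delta, alpha=0.05):
    """TOST for mu_R - mu_T using a pooled-variance t statistic (equal variances assumed)."""
    n_r, n_t = len(ref), len(test)
    diff = statistics.mean(ref) - statistics.mean(test)  # estimate of mu_R - mu_T
    df = n_r + n_t - 2
    pooled_var = ((n_r - 1) * statistics.variance(ref)
                  + (n_t - 1) * statistics.variance(test)) / df
    se = math.sqrt(pooled_var * (1 / n_r + 1 / n_t))

    # H01: mu_R - mu_T >= delta, rejected when the difference is well below +delta
    p_upper = stats.t.cdf((diff - delta) / se, df)
    # H02: mu_R - mu_T <= -delta, rejected when the difference is well above -delta
    p_lower = stats.t.sf((diff + delta) / se, df)

    # The 100(1 - 2*alpha)% confidence interval leads to the same decision
    t_crit = stats.t.ppf(1 - alpha, df)
    ci = (diff - t_crit * se, diff + t_crit * se)
    return {
        "diff": diff,
        "p_values": (float(p_upper), float(p_lower)),
        "ci": ci,
        "equivalent": bool(max(p_upper, p_lower) < alpha),
    }
```

Equivalence is concluded only if both one-sided p-values fall below α, which is the same as the 90% interval (for α = 0.05) lying inside (-δ, δ).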

Figure 2: TOST Experimental Protocol for Comparability Testing

Method Comparison Studies

For analytical method comparison, Passing-Bablok regression is often used because it doesn't assume measurement error is normally distributed and is robust against outliers [2]:

- Assumption checking: Verify that measurements are positively correlated and exhibit a linear relationship

- Regression analysis: Fit the robust regression model to method comparison data

- Parameter estimation: Estimate intercept (bias) and slope (proportional bias) with confidence intervals

- Equivalence assessment: Check if confidence intervals for intercept contain 0 and for slope contain 1

- Interpretation: Determine if the two methods are comparable based on pre-defined criteria
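
The regression and equivalence-assessment steps can be sketched in plain Python following the classical Passing-Bablok construction (shifted median of all pairwise slopes, rank-based confidence limits). This is an illustrative implementation, not a validated one: the function name is hypothetical, it assumes a reasonably large sample (rank-based limits become unreliable for small n), and it applies the interval check listed above as its comparability criterion.

```python
import math
import statistics
from statistics import NormalDist


def passing_bablok(x, y, alpha=0.05):
    """Passing-Bablok slope and intercept with approximate (1 - alpha) confidence limits."""
    n = len(x)
    slopes = []
    for i in range(n - 1):
        for j in range(i + 1, n):
            dx, dy = x[j] - x[i], y[j] - y[i]
            if dx == 0 and dy == 0:
                continue  # coincident points carry no slope information
            s = math.copysign(math.inf, dy) if dx == 0 else dy / dx
            if s == -1:
                continue  # slopes of exactly -1 are discarded by the method
            slopes.append(s)
    slopes.sort()
    big_n = len(slopes)
    k = sum(1 for s in slopes if s < -1)  # offset correcting for slopes below -1

    # Rank-based confidence limits for the slope (1-based ranks m1 + k and m2 + k)
    c = NormalDist().inv_cdf(1 - alpha / 2) * math.sqrt(n * (n - 1) * (2 * n + 5) / 18)
    m1 = round((big_n - c) / 2)
    m2 = big_n - m1 + 1
    if m1 + k < 1 or m2 + k > big_n:
        raise ValueError("too few points for rank-based confidence limits")

    # Shifted median of the sorted slopes (0-based indexing below)
    if big_n % 2:
        b = slopes[(big_n + 1) // 2 + k - 1]
    else:
        b = 0.5 * (slopes[big_n // 2 + k - 1] + slopes[big_n // 2 + k])
    b_lo, b_hi = slopes[m1 + k - 1], slopes[m2 + k - 1]

    # Intercepts are medians of y - b*x; for positive x the upper slope
    # limit yields the lower intercept limit and vice versa
    a = statistics.median(yi - b * xi for xi, yi in zip(x, y))
    a_lo = statistics.median(yi - b_hi * xi for xi, yi in zip(x, y))
    a_hi = statistics.median(yi - b_lo * xi for xi, yi in zip(x, y))
    return {
        "slope": b,
        "slope_ci": (b_lo, b_hi),
        "intercept": a,
        "intercept_ci": (a_lo, a_hi),
        # Criterion from the text: intercept CI contains 0 and slope CI contains 1
        "comparable": a_lo <= 0 <= a_hi and b_lo <= 1 <= b_hi,
    }
```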

The Researcher's Toolkit: Essential Materials and Methods

Table 3: Research Reagent Solutions for Comparability Studies

| Tool Category | Specific Methods/Techniques | Function in Comparability Assessment |
|---|---|---|
| Statistical Tests | Two One-Sided Tests (TOST) | Establishes equivalence for Tier 1 CQAs |
| | Passing-Bablok Regression | Compares analytical methods robustly |
| | Deming Regression | Method comparison when both variables have error |
| | Bland-Altman Analysis | Assesses agreement between two measurement methods |
| Statistical Intervals | Tolerance Intervals | Covers a specified proportion of individual values with a stated confidence |
| | Prediction Intervals | Estimates range for future observations |
| | Process Capability Intervals | Determines if process meets specifications |
| Analytical Techniques | Size Exclusion Chromatography (SEC) | Quantifies aggregates and fragments |
| | Ion-Exchange Chromatography (IEC) | Measures charge variants |
| | Liquid Chromatography-Mass Spectrometry (LC-MS) | Identifies chemical modifications |
| | Cell-Based Bioassays | Determines biological potency |

Implementing the Framework: A Case Study

Consider a scenario where a manufacturing process for a recombinant monoclonal antibody is changed to improve yield [9]. The comparability study must demonstrate that CQAs remain equivalent.

For a critical attribute like potency (measured via IC₅₀), the hypotheses would be:

H₀: |μᵣ - μₜ| ≥ 0.2 (the difference in potency is at least 0.2 log units)

H₁: |μᵣ - μₜ| < 0.2 (the difference in potency is less than 0.2 log units)

Here, the equivalence margin of 0.2 log units is justified based on historical variability and clinical relevance. If the 90% confidence interval for the difference in means is (-0.15, 0.18), which falls entirely within the equivalence margin (-0.2, 0.2), we reject H₀ and conclude equivalence. If the interval is (-0.25, 0.05), its lower limit extends beyond -0.2, placing us in the "don't know" zone: we can neither conclude equivalence nor definitively claim non-equivalence without additional data.

Proper hypothesis formulation is the cornerstone of valid comparability conclusions. The framework of null and alternative hypotheses, coupled with recognition of the "don't know" zone, provides a statistically rigorous approach for demonstrating comparability in biopharmaceutical development. By implementing appropriate experimental protocols like TOST for equivalence testing and understanding the implications of statistical errors, researchers can make defensible decisions that satisfy regulatory requirements while advancing manufacturing improvements. This approach transforms subjective assessment into objective, evidence-based conclusions that protect patient safety while enabling necessary process evolution.

The Role of Critical Quality Attributes (CQAs) and Tiered Risk Assessment

In the pharmaceutical industry, a Critical Quality Attribute (CQA) is defined as a physical, chemical, biological, or microbiological property or characteristic that must be controlled within predefined limits, ranges, or distributions to ensure the desired product quality [16] [17]. These attributes form the foundation of the Quality by Design (QbD) paradigm, a systematic approach to development that emphasizes product and process understanding based on sound science and quality risk management [16] [18]. CQAs are directly linked to the Quality Target Product Profile (QTPP), which outlines the desired quality characteristics of the final drug product, ensuring that patient-focused quality metrics are built into the product from the earliest development stages [19].

The identification and control of CQAs are mandatory requirements from regulatory agencies worldwide, including the FDA and EMA, throughout the product lifecycle [16]. For complex biotherapeutics, CQAs are particularly crucial due to the molecular complexity and the potential for numerous product variants that may impact safety and efficacy [18]. Proper identification and control of CQAs ensure that biopharmaceutical products maintain their safety, identity, purity, and potency despite inevitable manufacturing process changes, forming the scientific basis for comparability assessments [4].

Identification and Categorization of CQAs

CQA Identification Process

The process of identifying CQAs begins with creating a comprehensive list of potential quality attributes derived from the QTPP [19]. This list typically includes all relevant product attributes that might impact product quality. Each attribute then undergoes a systematic risk assessment evaluating its potential impact on safety and efficacy, without considering the capability of the manufacturing process to control it [18]. According to ICH Q8(R2), a CQA is specifically a property or characteristic that should be within an appropriate limit, range, or distribution to ensure the desired product quality [17]. Attributes that pose no potential for harm to patients are classified as non-critical and may not require stringent control strategies [19].

Examples of Common CQAs

CQAs vary significantly depending on the dosage form, route of administration, and therapeutic indication [16]. Common CQAs for pharmaceutical products include:

- Purity and Impurity Profiles: Including degradation products and residual solvents [16]

- Potency: The strength of the active pharmaceutical ingredient (API) [16]

- Dissolution Rate: Particularly critical for oral solid dosage forms [16]

- Particle Size Distribution: Affects bioavailability and stability, especially in inhaled and injectable products [16]

- Biological Activity: Critical for biotherapeutics, encompassing mechanism of action and efficacy [18]

- Charge Variants: Including post-translational modifications that may impact stability and function [18]

- Aggregation: Particularly crucial for biologics due to potential immunogenicity concerns [18]

CQA Categorization Framework

For effective risk management, CQAs are typically categorized into tiers based on their potential impact on product quality and clinical outcomes [2] [18]:

- Tier 1 CQAs: Attributes with highest criticality, requiring stringent statistical equivalence testing using methods like Two One-Sided Tests (TOST) [2]

- Tier 2 CQAs: Attributes with moderate criticality, often evaluated using quality ranges or other statistical approaches

- Tier 3 CQAs: Attributes with lower criticality, typically monitored through general quality control measures

Table 1: CQA Categorization Framework Based on Risk Criticality

| Tier | Impact Level | Statistical Approach | Examples |
|---|---|---|---|
| Tier 1 | High | TOST equivalence testing | Potency, purity, aggregation |
| Tier 2 | Medium | Quality ranges, trending analysis | Charge variants, glycan profiles |
| Tier 3 | Low | General quality monitoring | Appearance, osmolality |

Tiered Risk Assessment Methodology

Fundamental Principles

The tiered risk assessment approach provides a structured framework for evaluating CQAs based on their potential impact on safety and efficacy and the uncertainty associated with that impact [18]. This methodology enables manufacturers to focus resources on the most critical attributes while implementing appropriate control strategies for each risk level [18]. The approach follows the principles outlined in ICH Q9 Quality Risk Management, utilizing a systematic process for assessment, control, communication, and review of risks [18].

Risk Scoring and Prioritization

A standardized scoring system is employed to prioritize CQAs based on two primary factors: impact and uncertainty [18]. The impact factor evaluates the potential severity of harm to patient safety or efficacy, while the uncertainty factor assesses the level of confidence in the available data [18]. These factors are scored independently using scales typically consisting of three to five levels, with higher weighting assigned to the impact factor reflecting its greater importance [18]. The multiplied scores create a risk priority ranking that guides subsequent control strategies.

Table 2: Risk Scoring Matrix for CQA Criticality Assessment

| Impact Score | Uncertainty Score | Risk Priority | Recommended Action |
|---|---|---|---|
| High (5) | High (5) | 25 (Critical) | Immediate mitigation required |
| High (5) | Medium (3) | 15 (High) | Additional studies needed |
| Medium (3) | Medium (3) | 9 (Medium) | Monitor with control strategy |
| Low (1) | Low (1) | 1 (Low) | Routine monitoring sufficient |
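
The multiplicative scoring in Table 2 can be sketched as below, assuming the 1/3/5 scale shown in the table. The band thresholds (20, 12, 5) are hypothetical, chosen only so that the four example rows reproduce the table's labels; an organization would set its own bands, and no additional weighting of the impact factor is applied here.

```python
# Hypothetical priority bands (lower bound of the risk score, label); highest first.
RISK_BANDS = [(20, "Critical"), (12, "High"), (5, "Medium"), (0, "Low")]
ALLOWED_SCORES = (1, 3, 5)  # assumed Low / Medium / High scale from Table 2


def risk_priority(impact, uncertainty):
    """Return (impact x uncertainty, priority label) for one quality attribute."""
    for name, score in (("impact", impact), ("uncertainty", uncertainty)):
        if score not in ALLOWED_SCORES:
            raise ValueError(f"{name} must be one of {ALLOWED_SCORES}")
    score = impact * uncertainty
    label = next(lbl for threshold, lbl in RISK_BANDS if score >= threshold)
    return score, label
```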

Tiered Assessment Workflow

The tiered risk assessment follows a sequential workflow that increases in complexity and data requirements at each stage [20]:

Tier 1: Initial Screening involves gathering bioactivity data and establishing bioactivity indicators through high-throughput screening methods such as ToxCast assays [20]. This tier focuses on hazard identification and preliminary ranking of attributes based on their potential biological activity.

Tier 2: Combined Risk Assessment explores the possibility of combined effects from multiple attributes or process parameters, examining interactions and potential cumulative impacts [20]. This stage often involves hypothesis testing regarding shared modes of action and evaluates correlations between different risk indicators.

Tier 3: Margin of Exposure Analysis applies more sophisticated tools such as toxicokinetic modeling to compare estimated exposure levels with bioactivity thresholds, identifying critical risk drivers and tissue-specific pathways [20].

Tier 4: Bioactivity Refinement utilizes advanced modeling approaches to improve effect assessments, including in vitro to in vivo extrapolations and more precise intracellular concentration estimations [20].

Tier 5: Comprehensive Risk Characterization integrates all available data to reach a definitive risk conclusion, considering both dietary and non-dietary exposure routes and establishing appropriate safety margins [20].

Statistical Fundamentals for Comparability Assessment

Statistical Framework for Comparability

Within comparability studies for biologics, statistical methods provide the objective foundation for determining whether pre-change and post-change products are highly similar [2]. The fundamental research question is: "Are products manufactured in the post-change environment comparable to those in the pre-change environment?" [2] This question is addressed through formal statistical hypothesis testing, with the null hypothesis (H0) stating that the products differ by at least the predefined equivalence margin, and the alternative hypothesis (H1) stating that they are equivalent within that margin [2].

Equivalence Testing Using TOST

For Tier 1 CQAs, the most widely accepted statistical approach for demonstrating comparability is the Two One-Sided Tests (TOST) procedure, which is explicitly advocated by regulatory agencies including the FDA [2]. The TOST approach establishes equivalence by testing whether the true difference between population means is within a specified equivalence margin (δ) [2].

The hypotheses for TOST are formulated as:

- H01: μR - μT ≥ δ (the reference mean exceeds the test mean by at least the margin)

- H02: μR - μT ≤ -δ (the test mean exceeds the reference mean by at least the margin)

- H1: |μR - μT| < δ (test and reference products are equivalent within the margin)

The TOST procedure can be visualized as a confidence interval approach: at α = 0.05, equivalence is demonstrated when the 90% (i.e., 100(1-2α)%) confidence interval for the difference between means falls entirely within the equivalence interval (-δ, +δ) [2].

Method Comparison Approaches

For analytical method comparison during comparability studies, several statistical approaches are employed depending on the data characteristics and testing requirements [2]:

Passing-Bablok Regression is a non-parametric method particularly valuable when comparing analytical methods as it does not assume normally distributed measurement errors and is robust against outliers [2]. This method evaluates both constant bias (through the intercept) and proportional bias (through the slope) between measurement methods.

Deming Regression accounts for measurement errors in both variables. It assumes normally distributed errors and requires the ratio of the two methods' error variances to be known or estimated.
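
A closed-form Deming fit is sketched below, assuming the ratio of error variances (variance of the error in the y-method divided by that in the x-method) is known. The name `deming_regression` and the parameter `error_ratio` are hypothetical; setting `error_ratio=1` gives orthogonal regression. Confidence intervals, often obtained by jackknife, are omitted for brevity.

```python
import statistics


def deming_regression(x, y, error_ratio=1.0):
    """Deming slope and intercept; error_ratio = var(error in y) / var(error in x)."""
    n = len(x)
    mx, my = statistics.mean(x), statistics.mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x) / (n - 1)
    syy = sum((yi - my) ** 2 for yi in y) / (n - 1)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / (n - 1)
    if sxy == 0:
        raise ValueError("x and y are uncorrelated; slope is undefined")
    lam = error_ratio
    slope = (syy - lam * sxx + ((syy - lam * sxx) ** 2 + 4 * lam * sxy ** 2) ** 0.5) / (2 * sxy)
    intercept = my - slope * mx
    return slope, intercept
```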

Bland-Altman Analysis assesses agreement between two measurement methods by plotting differences against averages, helping identify systematic biases or trends in the differences.
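
The Bland-Altman quantities can be computed as follows. This minimal sketch assumes the between-method differences are roughly normally distributed, so that bias ± 1.96 SD approximates the 95% limits of agreement; the function name is hypothetical. Plotting `means` against `diffs` gives the Bland-Altman plot.

```python
import statistics


def bland_altman(method_a, method_b, z=1.96):
    """Bias and limits of agreement for paired measurements from two methods."""
    diffs = [a - b for a, b in zip(method_a, method_b)]
    means = [(a + b) / 2 for a, b in zip(method_a, method_b)]
    bias = statistics.mean(diffs)  # systematic difference between methods
    sd = statistics.stdev(diffs)   # spread of the differences
    return {"means": means, "diffs": diffs, "bias": bias,
            "loa": (bias - z * sd, bias + z * sd)}
```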

Experimental Protocols and Analytical Approaches

Extended Characterization Testing

For biologics comparability, extended characterization provides orthogonal methods to thoroughly understand molecule-specific attributes [4]. A comprehensive testing panel typically includes:

Table 3: Extended Characterization Testing Panel for Monoclonal Antibodies

| Test Category | Specific Methods | Critical Attributes Assessed |
|---|---|---|
| Structural Characterization | LC-MS, Peptide Mapping, CD, FTIR | Primary structure, higher order structure, post-translational modifications |
| Charge Variant Analysis | icIEF, CEX-HPLC | Charge heterogeneity, deamidation, sialylation |
| Size Variant Analysis | SEC-MALS, CE-SDS, SV-AUC | Aggregation, fragmentation, clipping |
| Purity and Impurity | HCP ELISA, Residual DNA, Host Cell Protein analysis | Process-related impurities, product-related substances |
| Functional Assays | Binding assays (SPR, BLI), cell-based bioassays | Potency, mechanism of action, Fc functionality |

Forced Degradation Studies

Forced degradation studies are critical for understanding the inherent stability of the molecule and identifying potential degradation pathways that might not be evident under normal storage conditions [4]. These studies apply controlled stress conditions to both pre-change and post-change materials to compare their degradation profiles [4].

Standard forced degradation protocols include [4]:

- Oxidative Stress: Exposure to hydrogen peroxide or other oxidizers

- Thermal Stress: Elevated temperatures to accelerate degradation

- pH Variation: Exposure to acidic and basic conditions

- Light Exposure: Photostability testing per ICH guidelines

- Mechanical Stress: Agitation and shear studies

The results are analyzed by comparing trendline slopes, bands, and peak patterns between pre-change and post-change materials, with similar degradation profiles supporting comparability [4].
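
Comparing trendline slopes might be sketched as follows, assuming a response such as percent main peak measured at common stress time points for both materials and a linear degradation trend. The function names are hypothetical, and the normal-approximation interval for the slope difference (and any acceptance rule applied to it) is an illustrative assumption rather than the method used in the cited source; with few time points a t quantile would be more appropriate than 1.96.

```python
import math
import statistics


def ols_slope(t, y):
    """Least-squares slope and its standard error (needs at least 3 time points)."""
    n = len(t)
    mt, my = statistics.mean(t), statistics.mean(y)
    stt = sum((ti - mt) ** 2 for ti in t)
    slope = sum((ti - mt) * (yi - my) for ti, yi in zip(t, y)) / stt
    intercept = my - slope * mt
    rss = sum((yi - intercept - slope * ti) ** 2 for ti, yi in zip(t, y))
    se = math.sqrt(rss / (n - 2) / stt)
    return slope, se


def compare_degradation_slopes(t, pre, post, z=1.96):
    """Difference in degradation rate (post - pre) with an approximate interval."""
    b_pre, se_pre = ols_slope(t, pre)
    b_post, se_post = ols_slope(t, post)
    diff = b_post - b_pre
    se_diff = math.hypot(se_pre, se_post)  # independent fits assumed
    return {"slope_pre": b_pre, "slope_post": b_post, "diff": diff,
            "ci": (diff - z * se_diff, diff + z * se_diff)}
```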

Research Reagent Solutions and Essential Materials

Successful CQA assessment requires specific research tools and materials:

Table 4: Essential Research Materials for CQA Assessment

| Material/Reagent | Function | Application Examples |
|---|---|---|
| ToxCast Bioactivity Assays | High-throughput screening for bioactivity indicators | Tier 1 risk assessment, initial hazard identification [20] |
| OECD QSAR Toolbox | In silico predictions of toxicity based on chemical structure | DART risk assessment, Tier 0 screening [21] |
| Zebrafish (Danio rerio) Model | Vertebrate model for ecotoxicity and developmental toxicity testing | Environmental Risk Assessment, developmental toxicity studies [22] |
| Reporter Gene Assays (CALUX) | Screening for endocrine disruption and receptor-mediated toxicity | DART NAM toolbox, Tier 1 bioactivity assessment [21] |
| High-Resolution Mass Spectrometry | Precise characterization of molecular structure and modifications | Extended characterization, identification of post-translational modifications [4] |

Implementation in Control Strategy and Lifecycle Management

Control Strategy Development

The ultimate output of CQA identification and risk assessment is the development of a comprehensive control strategy that ensures consistent product quality throughout the product lifecycle [18]. This strategy integrates material attributes, process parameters, and procedural controls linked to CQAs [18]. The level of control rigor is commensurate with the criticality ranking of each attribute, with higher-risk CQAs warranting more stringent controls [18]. A well-designed control strategy may reduce reliance on end-product testing for attributes that are well-controlled through process parameter management and demonstrated to be stable throughout the product shelf-life [18].

Lifecycle Management and Knowledge Continuity

CQAs are not static; they evolve as additional product knowledge is gained through nonclinical studies, clinical experience, and manufacturing history [16] [18]. The iterative refinement of CQAs and their acceptable ranges continues throughout the product lifecycle, with periodic risk assessments incorporating new knowledge [18]. This approach aligns with the regulatory expectation of continued process verification and lifecycle management [16]. Proper documentation of the scientific rationale supporting CQA criticality assessments is essential for regulatory submissions and for maintaining knowledge continuity within organizations [18].

Comparability Demonstration

When manufacturing changes occur, a well-defined CQA framework facilitates structured comparability assessments [4]. The comparability exercise focuses on demonstrating that pre-change and post-change products are highly similar and that any differences in CQAs have no adverse impact on safety or efficacy [4]. The strength of the comparability data enables manufacturers to implement necessary process changes while maintaining consistent product quality, ultimately supporting the availability of medicines to patients through an efficient and adaptable manufacturing lifecycle [4].

The ICH Q5E guideline, titled "Comparability of Biotechnological/Biological Products Subject to Changes in Their Manufacturing Process," provides the foundational framework for assessing the impact of manufacturing changes on biologic products [23] [24]. Published in June 2005, this guidance assists manufacturers in collecting relevant technical information to demonstrate that process changes will not adversely affect the quality, safety, and efficacy of drug products [23]. The document emphasizes that the demonstration of comparability does not necessarily mean that the quality attributes of the pre-change and post-change products are identical, but rather that they are highly similar and that any differences have no adverse impact on safety or efficacy [25] [24].

The totality-of-evidence approach is a systematic strategy that integrates data from multiple studies to provide comprehensive evidence of product comparability. This approach, built upon the principles outlined in ICH Q5E, requires a rigorous, head-to-head comparison between the reference and changed product based on a stepwise evaluation comprising (i) analytical studies as the cornerstone, (ii) comparative nonclinical studies, and (iii) comparative clinical studies [26]. This holistic assessment strategy has become the gold standard for evaluating manufacturing changes throughout the product lifecycle, from early development through post-approval modifications [4] [27].

The Regulatory Framework: ICH Q5E Principles and Implementation

Core Principles of ICH Q5E

ICH Q5E establishes several fundamental principles for comparability exercises. The primary objective is to ensure that manufacturing process changes do not adversely impact the quality, safety, and efficacy of biological products [23]. The guideline acknowledges that while biotechnological products are expected to exhibit some degree of variability due to their complex nature, manufacturers must demonstrate thorough understanding and control of this variability [24]. The scope of ICH Q5E encompasses changes to both drug substance and drug product manufacturing processes, providing a flexible framework that can be adapted to various types of changes, from minor adjustments to major process modifications [23] [25].

The guideline operates on the principle that the extent of the comparability exercise should be commensurate with the level of risk associated with the specific manufacturing change [25]. This risk-based approach requires manufacturers to conduct a thorough assessment of how each change might potentially affect critical quality attributes (CQAs) and, consequently, product safety and efficacy. The demonstration of comparability provides the scientific justification for leveraging existing safety and efficacy data to the product manufactured with the changed process, potentially eliminating the need for additional nonclinical or clinical studies [25] [27].

Global Regulatory Adoption and Evolution

Since its publication, ICH Q5E has been adopted by regulatory authorities worldwide, including the U.S. Food and Drug Administration (FDA), the European Medicines Agency (EMA), and other major agencies [23] [24]. While the fundamental scientific principles for establishing comparability show notable alignment among advanced regulatory authorities, divergent requirements across regions have been reported to complicate the development pathway, potentially resulting in duplicative processes and unnecessary testing [26].

Recent research indicates a global trend toward regulatory convergence to streamline biosimilar development and evaluation. A 2023 study employing the Nominal Group Technique with an international panel of regulators, academics, and industry representatives identified enhancing stakeholder education on science-based biosimilarity principles and promoting regulatory convergence through reliance as the highest-rated recommendations, both achieving mean scores of 4.65/5 [26]. This movement toward harmonization aims to reduce development costs and timelines while maintaining rigorous standards for product quality, safety, and efficacy.

The Totality-of-Evidence Strategy: A Multidisciplinary Approach

Framework Components and Integration

The totality-of-evidence strategy employs a comprehensive, weight-of-evidence approach that integrates data from multiple analytical techniques and study types to form a complete picture of product comparability [2]. This multifaceted assessment includes extended characterization using orthogonal analytical methods, forced degradation studies to understand degradation pathways, stability testing under various conditions, and statistical analysis of historical data [4]. The integration of these diverse data sources provides a robust foundation for demonstrating that any differences observed between pre-change and post-change products are within acceptable limits and do not impact clinical performance.

The strategy follows a stepwise implementation process that begins with extensive analytical characterization, progresses through nonclinical assessments when warranted, and culminates in clinical evaluations only when previous steps have raised unresolved concerns [26] [27]. This tiered approach ensures that resources are allocated efficiently, with each step informing the scope and design of subsequent investigations. The hierarchical nature of the assessment is illustrated in Figure 1, demonstrating how evidence gathering progresses from foundational analytical studies to targeted clinical evaluations only when necessary.

Analytical Studies as the Cornerstone

Analytical studies form the foundation of the totality-of-evidence approach, providing the most sensitive and informative assessment of product quality attributes [26] [4]. According to recent research, there is growing consensus among stakeholders that advances in analytical technologies have strengthened the ability to detect clinically relevant differences, potentially reducing the need for certain comparative clinical studies [26]. The analytical comparability exercise typically includes three tiers of testing: routine release testing, extended characterization, and forced degradation studies [4].

Extended characterization provides a deeper understanding of molecular attributes through sophisticated analytical techniques that offer greater resolution and specificity than routine methods. For monoclonal antibodies, this typically includes comprehensive assessments of primary structure (amino acid sequence, post-translational modifications), higher-order structure (secondary and tertiary structure), charge variants, glycosylation patterns, and biological activity [4]. These orthogonal methods collectively provide a detailed fingerprint of the molecule, enabling detection of subtle differences that might not be apparent through standard testing alone.

Table 1: Example Extended Characterization Testing Panel for Monoclonal Antibodies

| Attribute Category | Specific Test Methods | Key Information Provided |
|---|---|---|
| Primary Structure | Peptide mapping with LC-MS, Intact mass analysis (ESI-TOF MS), Sequence variant analysis (SVA) | Confirmation of amino acid sequence, identification of post-translational modifications |
| Higher-Order Structure | Circular dichroism, Analytical ultracentrifugation, SEC-MALS | Secondary and tertiary structure confirmation, aggregation analysis |
| Charge Variants | icIEF, CEX-HPLC | Charge heterogeneity assessment, acidic and basic variant quantification |
| Glycosylation | Released glycan analysis, LC-MS | Glycan profile characterization, major glycoform quantification |
| Purity & Impurities | SEC-HPLC, CE-SDS (reduced and non-reduced), HCP ELISA, Residual Protein A ELISA | Product-related substance quantification, process-related impurity detection |
| Potency | Cell-based bioassay, Binding affinity assays | Biological activity measurement, mechanism of action assessment |

Forced degradation studies subject the product to stress conditions beyond typical stability challenges to deliberately induce degradation and elucidate potential degradation pathways [4]. These studies typically include exposure to elevated temperatures, light exposure, oxidative stress, acidic/basic conditions, and mechanical stress [4]. By comparing the degradation profiles of pre-change and post-change products, manufacturers can demonstrate that the molecular integrity and degradation pathways remain comparable despite process changes.

Statistical Fundamentals in Comparability Studies

Statistical Framework and Hypothesis Testing

The statistical evaluation of comparability studies employs a structured hypothesis-testing framework specifically designed for equivalence testing rather than traditional difference detection [2] [28]. The fundamental statistical question in comparability studies is whether the difference between pre-change and post-change products is sufficiently small to be considered practically insignificant [28]. This is formalized through equivalence hypotheses where the null hypothesis (H0) states that the groups differ by more than a tolerably small amount, while the alternative hypothesis (H1) states that the groups differ by less than that amount [2].

The most widely adopted statistical approach for Tier 1 CQAs (those with the highest potential impact on safety and efficacy) is the Two One-Sided Tests (TOST) procedure, which is advocated by the FDA and other regulatory agencies [2] [28]. The TOST approach decomposes the null hypothesis into two separate sub-null hypotheses: H01: μR - μT ≥ δ and H02: μR - μT ≤ -δ, where μR and μT represent the means of the reference (pre-change) and test (post-change) products, respectively, and δ represents the pre-specified equivalence margin [2]. In effect, the procedure tests whether the true difference between products is significantly greater than the lower margin (-δ) and, at the same time, significantly less than the upper margin (+δ).

Advanced Statistical Methods for Different Data Structures

The appropriate statistical methods for comparability assessment vary depending on the data structure and analytical methodology employed. For unpaired quality attributes (e.g., HPLC-generated data where non-paired observations are produced from both pre-change and post-change products), formal statistical approaches such as TOST are traditionally used to assess equivalence of means with pre-specified acceptance criteria [28]. More advanced methods incorporate tolerance intervals and plausibility intervals to define comparability criteria that account for both process and analytical variability [28].
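
As an illustration of the tolerance-interval idea, the sketch below computes a two-sided normal tolerance interval using Howe's approximation for the k factor. It assumes normally distributed batch results and that SciPy is available; the function names and the 99% coverage / 95% confidence defaults are illustrative assumptions, not values taken from the text. Such an interval computed from pre-change batches could, for example, serve as a range against which post-change results are compared.

```python
import math
import statistics

from scipy import stats


def howe_k_factor(n, coverage=0.99, confidence=0.95):
    """Howe's approximate k factor for a two-sided normal tolerance interval."""
    z = stats.norm.ppf((1 + coverage) / 2)
    chi2_lower = stats.chi2.ppf(1 - confidence, n - 1)  # lower-tail chi-square quantile
    return z * math.sqrt((n - 1) * (1 + 1 / n) / chi2_lower)


def tolerance_interval(values, coverage=0.99, confidence=0.95):
    """Mean +/- k*SD interval intended to cover `coverage` of the population."""
    k = howe_k_factor(len(values), coverage, confidence)
    m, s = statistics.mean(values), statistics.stdev(values)
    return m - k * s, m + k * s
```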

For paired data structures (e.g., relative potency assays where both pre-change and post-change products are tested against a common reference standard), more complex statistical models are required. These may include linear structural measurement error models that account for variability in both the independent and dependent variables [28]. Method comparison studies often employ specialized regression techniques such as Passing-Bablok regression and Deming regression, which are more appropriate than ordinary least squares regression when both measurement systems contain error [2]. These methods are particularly valuable for assessing the comparability of analytical methods themselves, which is often a prerequisite for meaningful product comparability assessment.

Table 2: Statistical Methods for Different Comparability Study Scenarios

| Data Structure | Recommended Methods | Key Considerations |
|---|---|---|
| Unpaired Data (e.g., HPLC) | Two One-Sided Tests (TOST), Tolerance Intervals, Plausibility Intervals | Account for both process and analytical variability; number of batches depends on between-batch variability |
| Paired Data (e.g., potency) | Linear structural measurement error models, Paired t-tests | Requires appropriate modeling of measurement errors in both test systems |
| Method Comparison | Passing-Bablok regression, Deming regression, Bland-Altman analysis | Passing-Bablok does not assume normally distributed measurement error and is robust against outliers; Deming regression assumes normally distributed errors in both methods |
| Process Performance | Process capability indices, Statistical process control charts | Focuses on demonstrating process robustness and consistency between batches |
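
For the process-performance row, a capability index can be sketched as follows. This minimal example assumes approximately normal data and two-sided specification limits; it uses the overall sample standard deviation, so strictly it computes the performance index Ppk rather than a within-subgroup Cpk. The function name is hypothetical.

```python
import statistics


def capability_index(values, lsl, usl):
    """Distance from the mean to the nearest spec limit, in units of 3 SD (Ppk-style)."""
    mu = statistics.mean(values)
    sigma = statistics.stdev(values)  # overall SD, not a within-subgroup estimate
    return min(usl - mu, mu - lsl) / (3 * sigma)
```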

Equivalence Margin Setting and Risk Assessment

The determination of appropriate equivalence margins represents one of the most critical aspects of comparability study design [2]. The equivalence margin (δ) defines the boundary within which differences between pre-change and post-change products are considered practically insignificant. Excessively wide margins make equivalence easier to establish, but they risk declaring products comparable when they differ meaningfully and will invite regulatory scrutiny unless scientifically justified. Excessively narrow margins may cause products that are in fact comparable to fail the assessment [2]. The equivalence margin should be based on a comprehensive risk assessment that considers the potential impact of attribute differences on safety and efficacy, analytical method capability, and historical manufacturing experience [28] [27].
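The trade-off between wide and narrow margins can be illustrated by tying δ to the variability of the pre-change (reference) batches, δ = k·σ_R, and checking how the equivalence decision changes with k. A multiplier of 1.5 has been used in tiered analytical-similarity practice, but here k is purely an illustrative assumption. The observed difference, standard error, degrees of freedom, and batch values below are hypothetical.

```python
import numpy as np
from scipy import stats


def margin_from_reference(ref_values, k=1.5):
    """Equivalence margin delta = k * sigma_R (k is an illustrative assumption)."""
    return k * float(np.std(np.asarray(ref_values, dtype=float), ddof=1))


def ci_within_margin(diff, se, df, delta, alpha=0.05):
    """True if the 100(1 - 2*alpha)% CI for the mean difference lies inside (-delta, delta)."""
    t_crit = stats.t.ppf(1 - alpha, df)
    return (diff - t_crit * se > -delta) and (diff + t_crit * se < delta)


# Hypothetical reference batches and a fixed observed result; only k varies
ref = [98.1, 98.4, 97.9, 98.3, 98.0, 98.2]
for k in (0.5, 1.0, 1.5, 3.0):
    delta = margin_from_reference(ref, k)
    decision = ci_within_margin(diff=0.15, se=0.10, df=7, delta=delta)
    print(f"k={k}: delta={delta:.3f}, equivalent={decision}")
```

Because the same data pass or fail depending only on k, the multiplier needs a documented scientific and risk-based justification in the protocol rather than being tuned after the data are seen.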

The risk assessment process for comparability studies typically follows the principles outlined in ICH Q9, focusing on the product and its characteristics [25]. This assessment helps determine the scope of comparability studies, appropriate batch selection, analytical methods, and specific studies needed (e.g., extended characterization, forced degradation) [25]. The level of risk associated with a manufacturing change directly influences the extent of comparability testing required, with higher-risk changes necessitating more comprehensive assessment.

Experimental Design and Protocol Development

Batch Selection Strategy

The selection of appropriate batches for comparability assessment is a critical consideration that significantly impacts study validity. The number of batches included in a comparability study should be justified based on the product development stage, type of changes implemented, and the level of process and product understanding [25]. For major changes, regulatory guidance generally recommends testing ≥3 batches of commercial-scale samples representing the post-change process, while minor changes may be adequately assessed with fewer batches (generally ≥1 batch) [25].
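Justifying the number of batches is partly a power question: with few batches and high between-batch variability, even truly equivalent products may fail TOST. The following stdlib-only sketch uses a normal approximation to TOST power. It assumes equal numbers of batches per group, a known between-batch SD σ, and a margin ±δ. It ignores t-distribution degrees of freedom, so it is optimistic for very small batch counts. Function names and numbers are hypothetical.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()


def tost_power_approx(n_per_group, sigma, delta, true_diff=0.0, alpha=0.05):
    """Normal-approximation power of TOST for two groups of n batches each."""
    se = sigma * math.sqrt(2.0 / n_per_group)
    z = _STD_NORMAL.inv_cdf(1 - alpha)
    # P(-delta + z*se < observed diff < delta - z*se); zero if the window is empty
    power = (_STD_NORMAL.cdf((delta - true_diff) / se - z)
             + _STD_NORMAL.cdf((delta + true_diff) / se - z) - 1.0)
    return max(0.0, power)


def batches_needed(sigma, delta, target=0.8, true_diff=0.0, alpha=0.05, max_n=100):
    """Smallest batches-per-group reaching the target power, or None."""
    for n in range(2, max_n + 1):
        if tost_power_approx(n, sigma, delta, true_diff, alpha) >= target:
            return n
    return None


for n in (3, 6, 10):
    print(n, round(tost_power_approx(n, sigma=1.0, delta=1.5), 3))
print(batches_needed(sigma=1.0, delta=1.5))
```

In practice the pre-change group often contains more batches than the post-change group. Replacing `math.sqrt(2.0 / n_per_group)` with `math.sqrt(1 / n_pre + 1 / n_post)` would handle unequal groups under the same assumptions.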

The batch selection strategy should ensure that batches are representative of the pre- and post-change processes or sites [4]. Pre- and post-change batches should be manufactured as close together as possible to minimize age-related differences that could confound results [4]. Additionally, it is recommended to use the latest available batches that have passed release criteria to avoid the appearance of "cherry-picking" favorable results [4]. For products with significant batch-to-batch variability, a larger number of batches may be required to adequately characterize the inherent variability and establish appropriate comparability margins.
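The "latest released batches" rule can be written down as an explicit, pre-specified selection procedure, which makes it harder to be accused of cherry-picking. This Python sketch assumes a simple batch record with hypothetical field names (`process`, `manufactured`, `released`). It takes the k most recent released batches for each process and reports the manufacturing-date gap between the two sets as a check on age-related confounding.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Batch:
    batch_id: str
    process: str        # hypothetical labels: "pre" or "post"
    manufactured: date
    released: bool      # True if the batch passed release testing


def latest_released(batches, process, k):
    """Return the k most recently manufactured released batches for one process."""
    eligible = [b for b in batches if b.process == process and b.released]
    eligible.sort(key=lambda b: b.manufactured, reverse=True)
    if len(eligible) < k:
        raise ValueError(f"only {len(eligible)} released {process}-change batches; need {k}")
    return eligible[:k]


def manufacture_gap_days(pre, post):
    """Days between the newest selected pre-change batch and the oldest post-change batch."""
    return (min(b.manufactured for b in post) - max(b.manufactured for b in pre)).days
```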

Acceptance Criteria Establishment

The establishment of scientifically justified acceptance criteria represents one of the most challenging aspects of comparability protocol development [27]. According to ICH Q5E, prospective acceptance criteria should be established before testing post-change batches [23] [27]. These criteria should be based on historical data from process and product quality characterization, with sufficient justification provided for excluding any data [25]. The acceptance criteria should not be less stringent than the product's quality standard unless scientifically justified [25].

Acceptance criteria can be categorized as quantitative criteria (numerical limits or ranges within which results must fall) or qualitative criteria (based on the comparison of patterns or profiles) [25]. For quantitative attributes, acceptance criteria are often based on statistical limits derived from historical batch data that encompass a specified proportion of the expected variability (e.g., the mean ±3 standard deviations, which covers about 99.7% of a normal distribution) [28]. For qualitative attributes, acceptance criteria should clearly define what constitutes comparable patterns or profiles, often through side-by-side visual comparison with predefined similarity standards.
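A plain mean ±3 SD range estimated from a handful of batches does not guarantee its nominal coverage, because the mean and SD are themselves uncertain. A normal tolerance interval widens the multiplier to account for sample size. This sketch computes both: a quality range with a chosen k, and a two-sided tolerance interval using Howe's approximation to the tolerance factor. It then reports the share of post-change results inside a given range. It assumes approximately normal, independent batch results. The function names and data are hypothetical.

```python
import numpy as np
from scipy import stats


def quality_range(historical, k=3.0):
    """Mean +/- k*SD of historical (pre-change) batch results."""
    x = np.asarray(historical, dtype=float)
    m, s = x.mean(), x.std(ddof=1)
    return float(m - k * s), float(m + k * s)


def howe_k_factor(n, coverage=0.99, confidence=0.95):
    """Howe's approximation to the two-sided normal tolerance factor."""
    nu = n - 1
    z = stats.norm.ppf((1 + coverage) / 2)
    chi2_low = stats.chi2.ppf(1 - confidence, nu)  # lower-tail chi-square quantile
    return float(z * np.sqrt(nu * (1 + 1 / n) / chi2_low))


def tolerance_interval(historical, coverage=0.99, confidence=0.95):
    """Interval expected to contain `coverage` of batches, with `confidence`."""
    x = np.asarray(historical, dtype=float)
    k = howe_k_factor(x.size, coverage, confidence)
    m, s = x.mean(), x.std(ddof=1)
    return float(m - k * s), float(m + k * s)


def fraction_within(values, limits):
    """Share of post-change results falling inside the (low, high) limits."""
    lo, hi = limits
    v = np.asarray(values, dtype=float)
    return float(np.mean((v >= lo) & (v <= hi)))


# Hypothetical main-peak purity (%) for ten pre-change and three post-change batches
pre = [98.1, 98.4, 97.9, 98.3, 98.0, 98.2, 98.5, 97.8, 98.1, 98.3]
post = [98.2, 98.0, 98.6]
print(quality_range(pre), tolerance_interval(pre), fraction_within(post, quality_range(pre)))
```

With ten batches, the 99%/95% tolerance factor is roughly 4.4 rather than 3, which shows how much wider limits become once estimation uncertainty is acknowledged.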

Table 3: Example Acceptance Criteria for Different Analytical Methods

| Test Type | Specific Analysis | Acceptable Standards |
|---|---|---|
| Routine Release | Peptide Map | Comparable peak shapes based on retention time and relative intensity; no new or lost peaks |
| | SEC-HPLC | Percentage of main peak within acceptance criteria based on statistical analysis; aggregates, monomers, and fragments with same retention time |
| | Charge Variants (CEX, cIEF) | Percentage of major peaks within acceptance criteria based on statistical analysis; no new peaks |
| | Cell-based Assays | Potency within acceptance criteria based on statistical analysis |
| Extended Characterization | Molecular Weight (LC-MS) | Mass error within instrument accuracy range; same species |
| | Peptide Mapping (LC-MS) | Confirmation of primary structure; percentage of post-translational modifications within acceptable range |
| | Circular Dichroism | No significant difference in spectra and conformational ratios |
| Stability | Real-time and Accelerated | Equivalent or slower degradation rate; same degradation pathway |

Practical Implementation and Case Studies

Stepwise Implementation Process

Successful implementation of a comparability study requires a systematic, stepwise approach that begins well before the manufacture of post-change batches. The process typically starts with comprehensive preparatory activities, including gathering all relevant information on previously manufactured batches, preparing a list of product quality attributes (PQAs), and conducting a criticality assessment to identify CQAs potentially affected by the manufacturing change [27]. This foundational work provides the basis for developing a scientifically rigorous comparability protocol that addresses all potential quality impacts.
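The criticality assessment can feed directly into the choice of statistical method. This sketch follows the tiered idea mentioned in the abstract: the most critical quantitative attributes get equivalence testing, less critical ones get a quality-range comparison, and qualitative attributes get a graphical or visual comparison. The 1 to 5 impact and uncertainty scales, the product-based score, and the tier thresholds are all hypothetical assumptions for illustration. A real protocol would define and justify its own scoring scheme.

```python
from dataclasses import dataclass

# Hypothetical mapping from tier to the type of statistical comparison
TIER_METHOD = {
    1: "equivalence test on means (e.g., TOST)",
    2: "quality range (e.g., mean +/- k*SD of pre-change batches)",
    3: "qualitative or graphical comparison",
}


@dataclass
class QualityAttribute:
    name: str
    impact: int        # hypothetical 1-5 score: impact on safety/efficacy
    uncertainty: int   # hypothetical 1-5 score: uncertainty about that impact
    quantitative: bool # False for pattern/profile-based methods


def criticality_score(attr):
    """Hypothetical criticality score: impact x uncertainty (range 1-25)."""
    return attr.impact * attr.uncertainty


def assign_tier(attr, tier1_min=15, tier2_min=6):
    """Map an attribute to a statistical tier using hypothetical thresholds."""
    if not attr.quantitative:
        return 3
    score = criticality_score(attr)
    if score >= tier1_min:
        return 1
    if score >= tier2_min:
        return 2
    return 3


for qa in (QualityAttribute("potency", 5, 4, True), QualityAttribute("peptide map", 5, 5, False)):
    tier = assign_tier(qa)
    print(qa.name, tier, TIER_METHOD[tier])
```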

The subsequent phase involves experimental design and protocol development, including selection of appropriate analytical methods, determination of sample size and batch selection strategy, and establishment of predefined acceptance criteria [27]. The comparability protocol should be formally released before manufacturing post-change batches to ensure objectivity in assessment [27]. A well-constructed protocol typically includes detailed descriptions of all process changes, assessment of their potential effects on the product, comprehensive testing plans with predefined acceptance criteria, and plans for stability studies when applicable [27].

Common Challenges and Mitigation Strategies

Implementing successful comparability studies presents several common challenges that require proactive mitigation strategies. Unexpected results from extended characterization and forced degradation studies can open test methods and/or processes to intense scrutiny and further questions [4]. Facing these challenges early in development can save time and energy by enabling internal teams to identify and mitigate risks before initiating expensive, later phases of development [4]. Maintaining open communication with regulatory authorities through pre-submission meetings can help align on strategy and prevent unforeseen objections during formal review.

Another significant challenge involves managing subjectivity in the interpretation of complex analytical data, particularly for qualitative methods or methods with inherent variability [4]. Pre-defining both quantitative and qualitative acceptance criteria in the comparability study protocol can alleviate pressure to interpret complicated, subjective results as "comparable" or "not comparable" [4]. Including detailed evaluation criteria and, when possible, leveraging orthogonal methods for critical attributes can provide additional objectivity to the assessment.

Future Directions and Emerging Trends

Regulatory Convergence and Harmonization

The regulatory landscape for comparability assessment is evolving toward greater international harmonization to streamline biologic development globally. A recent study employing a modified Nominal Group Technique with international experts identified key priorities for regulatory convergence, with the highest-rated recommendations including enhancing stakeholder education on science-based biosimilarity principles, promoting regulatory convergence through reliance, aligning regulatory requirements based on current scientific knowledge, and reconsidering the requirement for comparative clinical efficacy studies [26]. These initiatives aim to reduce duplicative testing requirements while maintaining rigorous standards for product quality, safety, and efficacy.

There is growing consensus among stakeholders that certain traditional requirements for demonstrating comparability may no longer be justified based on advances in analytical capabilities and scientific understanding [26]. Specifically, recent research indicates strong expert support for eliminating in vivo animal studies (mean score: 4.50/5) and accepting clinical studies conducted for global submissions (mean score: 4.50/5) to reduce unnecessary duplication [26]. This evolution in regulatory thinking reflects increased confidence in the ability of sophisticated analytical methods to detect clinically relevant differences, potentially reducing the need for certain comparative clinical studies.

Advanced Analytical and Statistical Approaches

The future of comparability assessment will likely see increased adoption of advanced analytical technologies and sophisticated statistical methods to provide even more sensitive and comprehensive assessment of product quality attributes. Emerging technologies such as mass spectrometry with higher resolution and sensitivity, nuclear magnetic resonance (NMR) spectroscopy for detailed structural analysis, and microfluidic approaches for high-throughput characterization are expanding the capabilities for detecting subtle product differences [4]. These technological advances are complemented by development of more sophisticated statistical models that better account for the complex relationship between quality attributes and clinical outcomes.

There is also growing interest in the development of multivariate statistical approaches that can simultaneously evaluate multiple quality attributes and their potential interactions [28]. These methods may provide a more holistic assessment of comparability than traditional univariate approaches, particularly for complex biologics with numerous interdependent critical quality attributes. As the industry's understanding of the relationship between specific quality attributes and clinical performance deepens, there is potential for more targeted, risk-based comparability assessments that focus on the attributes most likely to impact safety and efficacy.
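As one simple illustration of a multivariate view, this sketch checks whether each post-change batch falls inside a prediction ellipse built from the pre-change batches. It uses the Mahalanobis distance with an F-based limit for a single new observation from a multivariate normal population. This is a consistency screen, not an equivalence test. It does not separate analytical from process variability, and it needs more pre-change batches than attributes (ideally many more). The function name and simulated data are hypothetical.

```python
import numpy as np
from scipy import stats


def prediction_region_check(pre, post, alpha=0.01):
    """Flag post-change batches outside the pre-change 100(1-alpha)% prediction ellipse.

    pre: (n, p) array of pre-change batches x attributes; post: (m, p) array.
    Returns (squared Mahalanobis distances, limit, inside flags).
    """
    pre = np.asarray(pre, dtype=float)
    post = np.atleast_2d(np.asarray(post, dtype=float))
    n, p = pre.shape
    if n <= p:
        raise ValueError("need more pre-change batches than attributes")
    mean = pre.mean(axis=0)
    inv_cov = np.linalg.inv(np.cov(pre, rowvar=False))
    diffs = post - mean
    d2 = np.einsum("ij,jk,ik->i", diffs, inv_cov, diffs)
    # Limit for one new observation: (n+1)/n * p(n-1)/(n-p) * F(1-alpha; p, n-p)
    limit = (n + 1) / n * p * (n - 1) / (n - p) * stats.f.ppf(1 - alpha, p, n - p)
    return d2, float(limit), d2 <= limit


# Simulated pre-change batches with two correlated attributes
rng = np.random.default_rng(0)
pre_batches = rng.multivariate_normal([100.0, 5.0], [[4.0, 1.0], [1.0, 1.0]], size=20)
print(prediction_region_check(pre_batches, [[101.0, 5.5], [110.0, 9.0]]))
```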

Figure 1: Totality-of-Evidence Assessment Flow

Essential Research Reagent Solutions

Table 4: Key Research Reagents for Comparability Studies

| Reagent Category | Specific Examples | Function in Comparability Assessment |
|---|---|---|
| Reference Standards | Pre-change reference standard, Pharmacopeial standards | Provides benchmark for quality attribute comparison, ensures assay performance qualification |
| Cell-Based Assay Reagents | Cell lines, Reporter gene systems, Ligands/receptors | Measures biological activity and mechanism of action for potency assessment |
| Chromatography Materials | HPLC/SEC columns, Ion-exchange resins, Binding buffers | Separates and quantifies product variants, impurities, and related substances |
| Mass Spectrometry Reagents | Trypsin/Lys-C enzymes, Digestion buffers, Calibration standards | Enables detailed structural characterization including sequence and modifications |
| Electrophoresis Supplies | cIEF reagents, CE-SDS capillaries, Gel matrices | Analyzes charge heterogeneity, size variants, and purity |
| Binding Assay Components | ELISA plates, Detection antibodies, Substrates | Quantifies process-related impurities and binding activity |
| Stability Study Reagents | Oxidation reagents, Light exposure systems, Proteolytic enzymes | Facilitates forced degradation studies to elucidate degradation pathways |

In the biopharmaceutical industry, demonstrating comparability between pre-change and post-change products is a critical regulatory requirement. This in-depth technical guide establishes confidence intervals as the fundamental statistical tool for visualizing and testing hypotheses of equivalence within a totality-of-evidence strategy. Framed within the broader topic of statistical fundamentals for comparability studies, this whitepaper provides drug development professionals with detailed methodologies for implementing two one-sided tests (TOST), analytical comparison techniques, and visual decision frameworks that form the bedrock of modern comparability assessment.

Regulatory agencies acknowledge that product and process changes are necessary for the biotech industry to evolve, placing the responsibility on manufacturers to demonstrate that products manufactured in post-change environments remain comparable to their pre-change counterparts in terms of safety, identity, purity, and potency [2]. The demonstration of comparability does not necessarily mean that the quality attributes of reference and test products are identical, but rather that they are highly similar, with existing knowledge sufficiently predictive to ensure any differences have no adverse impact upon safety or efficacy [2].