SVM vs Logistic Regression for Single-Cell Classification: A Comprehensive Benchmark and Practical Guide


Abstract

Accurate cell type classification is a cornerstone of single-cell RNA sequencing (scRNA-seq) analysis, enabling discoveries in cellular heterogeneity, disease mechanisms, and drug development. This article provides a systematic comparison of two fundamental machine learning algorithms—Support Vector Machine (SVM) and Logistic Regression (LR)—for automated cell annotation. Drawing from recent benchmark studies, we explore their foundational principles, practical implementation, and performance across diverse biological contexts. We detail methodological pipelines from data preprocessing to model training, address common challenges like high-dimensionality and dataset integration, and present empirical evidence from large-scale validation studies. Designed for researchers and biomedical professionals, this guide offers actionable insights for selecting, optimizing, and applying these classifiers to improve the accuracy and reproducibility of single-cell research.

The Critical Role of Automated Classification in Single-Cell Biology

Why Manual Cell Annotation is a Bottleneck in Modern scRNA-seq Workflows

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological and medical research by enabling the characterization of cellular heterogeneity at an unprecedented resolution [1]. However, a critical challenge in scRNA-seq data analysis is the interpretation of results, particularly the assignment of biological identity to cell clusters—a process known as cell type annotation [2]. This article explores why manual cell annotation remains a significant bottleneck, frames this challenge within the context of machine learning classification approaches, and provides experimental data comparing logistic regression and support vector machines (SVM) for single-cell classification.

The Manual Annotation Bottleneck

Labor-Intensive Nature and Subjectivity

Manual cell annotation is widely regarded as the gold standard in scRNA-seq analysis, but it is inherently labor-intensive and time-consuming [3] [4]. It requires human experts to compare the genes highly expressed in each cell cluster with canonical cell type marker genes, which demands substantial domain expertise [3]. The process is also subjective: the concept of a "cell type" itself lacks a clear definition, leading most practitioners to rely on an "I'll know it when I see it" intuition that is not amenable to computational analysis [2].

Limitations of Prior Knowledge

The manual annotation process bridges current datasets with prior biological knowledge, which is not always available in a consistent and quantitative manner [2]. While databases of cell markers exist, they primarily focus on a limited range of species, with emphasis on humans and mice, creating knowledge gaps for other organisms [4]. Furthermore, manual annotations exhibit inter-rater variability and systematic biases, particularly in datasets with ambiguous cell clusters [5].

Machine Learning Approaches to Cell Classification

Theoretical Foundations for scRNA-seq Classification

The classification of cell types in scRNA-seq data represents a classic machine learning problem where cells (observations) must be assigned to specific types (categories) based on their gene expression patterns (features). Two traditional yet powerful approaches to this problem are logistic regression and support vector machines.

Logistic regression models the relationship between predictor variables and a categorical outcome, using a logistic (sigmoid) function to map any real-valued number to a value between 0 and 1 [6]. It is rooted in statistical modeling and provides probabilities for class membership.

Support vector machines create a hyperplane or decision boundary that separates data into classes by finding the "best" margin, meaning the largest distance between the boundary and the nearest training points, called support vectors [6]. SVM is based on geometrical properties of the data and can use the kernel trick to find optimal separators in high-dimensional space.

Comparative Performance in Biological Contexts

A direct comparison of these methods for predicting successful memory encoding using human brain electrophysiological data revealed that deep learning classifiers outperformed both SVM and logistic regression [7]. However, when comparing traditional machine learning approaches, the performance differences depend strongly on data characteristics and implementation details.

Table 1: Algorithm Characteristics Comparison

| Feature | Logistic Regression | Support Vector Machines |
|---|---|---|
| Theoretical Basis | Statistical approaches | Geometrical properties |
| Decision Function | Sigmoid function | Hyperplane with maximum margin |
| Kernel Trick | Not natively supported | Supported for nonlinear separation |
| Overfitting Risk | Higher without regularization | Lower due to margin maximization |
| Data Type Preference | Structured data with identified features | Unstructured and semi-structured data |
| Probability Output | Direct probability estimates | Requires additional calibration |

Experimental Data and Performance Benchmarks

Benchmarking Methodologies

Performance evaluation of classification methods for scRNA-seq data typically involves comparing automated annotations with manual expert annotations as a reference standard. Agreement is commonly measured using direct string comparison, Cohen's kappa (κ), or numerical scoring systems that account for full, partial, or no matches [3] [5] [8].
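As an illustration of the agreement metrics mentioned above, the following minimal sketch computes Cohen's kappa between a manual and an automated annotation. The cell labels are invented for the example and the function name `cohens_kappa` is our own; the formula is the standard one (observed agreement corrected for chance agreement).

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa between two equally long label lists."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and equally long")
    n = len(labels_a)
    # Observed agreement: fraction of cells given the same label by both raters.
    p_observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement, from each rater's marginal label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (p_observed - p_expected) / (1.0 - p_expected)

# Hypothetical annotations for six clusters (illustrative only).
manual = ["T cell", "T cell", "B cell", "B cell", "NK cell", "Monocyte"]
automated = ["T cell", "T cell", "B cell", "T cell", "NK cell", "Monocyte"]

kappa = cohens_kappa(manual, automated)
print(round(kappa, 3))
```

Unlike a direct string comparison, kappa discounts agreement that would be expected by chance, which matters when one cell type dominates the dataset.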

Recent advancements have introduced large language models (LLMs) as automated annotation tools. In one comprehensive benchmarking study, Claude 3.5 Sonnet demonstrated the highest agreement with manual annotation [8], while another study found GPT-4 annotations fully or partially matching manual annotations in over 75% of cell types in most studies and tissues [3].

Quantitative Performance Comparisons

Table 2: Performance Comparison of Classification Approaches

| Method | Agreement with Manual Annotation | Strengths | Limitations |
|---|---|---|---|
| Manual Expert Annotation | Gold Standard | Incorporates domain expertise | Labor-intensive, subjective, expertise-dependent |
| Logistic Regression | Varies by dataset and features [7] | Probabilistic outputs, interpretable | Vulnerable to overfitting [6] |
| Support Vector Machines | Varies by dataset and features [7] | Handles high-dimensional data well, less prone to overfitting [6] | Computationally intensive, black-box nature |
| LLM-based (GPT-4) | 75%+ full or partial match in most tissues [3] | Broad prior knowledge, no reference needed | Potential "hallucinations", training corpus opaque [3] |
| Multi-LLM Integration (LICT) | Mismatch reduced to 9.7% (from 21.5%) for PBMCs [5] | Combines strengths of multiple models | Complex implementation |

Experimental Protocols for Method Evaluation

Standard scRNA-seq Preprocessing Pipeline

To ensure fair comparison between classification methods, consistent preprocessing of scRNA-seq data is essential:

- Quality Control: Filtering cells based on mitochondrial content, number of features, and counts

- Normalization: Library size normalization and log-transformation using SCANPY or Seurat [3]

- Feature Selection: Identification of high-variance genes

- Dimensionality Reduction: Principal component analysis (PCA) followed by neighborhood graph construction

- Clustering: Application of Leiden or Louvain clustering algorithms [8]

- Differential Expression: Welch's t-test or Wilcoxon rank-sum test to identify marker genes [3]
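The first three steps above can be sketched with plain NumPy. This is a simplified stand-in for what SCANPY or Seurat do, not their actual implementation: the count matrix is simulated, the QC thresholds are arbitrary, mitochondrial filtering is omitted because the toy data has no gene names, and "high-variance genes" are picked by raw variance rather than a dispersion model.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical toy count matrix: 200 cells (rows) x 500 genes (columns).
gene_means = rng.gamma(0.5, 2.0, size=500)
counts = rng.poisson(lam=gene_means, size=(200, 500)).astype(float)

# Quality control: keep cells with enough detected genes and total counts.
genes_per_cell = (counts > 0).sum(axis=1)
counts_per_cell = counts.sum(axis=1)
keep = (genes_per_cell >= 50) & (counts_per_cell >= 100)  # illustrative thresholds
counts = counts[keep]

# Library-size normalization to 10,000 counts per cell, then log(1 + x).
size_factors = counts.sum(axis=1, keepdims=True)
log_norm = np.log1p(counts / size_factors * 1e4)

# Feature selection: keep the 100 genes with the highest variance.
top_genes = np.argsort(log_norm.var(axis=0))[::-1][:100]
X = log_norm[:, top_genes]
print(X.shape)
```

Applying exactly the same preprocessing to every classifier under comparison is what makes the downstream accuracy figures comparable.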

Method-Specific Implementation Protocols

Logistic Regression Implementation:

- Input: Normalized expression matrix of selected features

- Regularization: L1 or L2 regularization to prevent overfitting

- Training: Maximum likelihood estimation with gradient descent

- Validation: k-fold cross-validation with stratified sampling
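A minimal sketch of this protocol with scikit-learn is shown below. The data are synthetic (`make_classification` stands in for a normalized expression matrix), and the solver is scikit-learn's default rather than plain gradient descent, so treat it as one possible realization of the steps above rather than the configuration used in any cited study.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score

# Synthetic stand-in for a cells x genes matrix with three cell types.
X, y = make_classification(n_samples=300, n_features=50, n_informative=10,
                           n_classes=3, n_clusters_per_class=1, random_state=0)

# scikit-learn's default penalty is L2; C is the inverse regularization strength.
model = LogisticRegression(C=1.0, max_iter=1000)

# Stratified folds keep the cell type proportions similar in every split.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(model, X, y, cv=cv, scoring="accuracy")
print(scores.mean())
```

Stratification matters most when rare cell types are present, since an unstratified split can leave a fold with no examples of a minority class.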

SVM Implementation:

- Input: Normalized expression matrix of selected features

- Kernel Selection: Linear, polynomial, or radial basis function (RBF) based on data characteristics

- Parameter Tuning: Grid search for cost parameter C and kernel-specific parameters

- Validation: k-fold cross-validation with performance assessment using AUC metrics [7]
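To make the kernel selection step concrete, the sketch below compares the three kernels by cross-validated AUC on a synthetic binary problem. The dataset and the fixed `C=1.0` are assumptions for illustration; on real expression data the ranking of kernels may differ.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic two-class stand-in for a normalized expression matrix.
X, y = make_classification(n_samples=300, n_features=50, n_informative=10,
                           n_clusters_per_class=1, random_state=0)

results = {}
for kernel in ("linear", "poly", "rbf"):
    # Scaling inside the pipeline avoids leaking test-fold statistics.
    clf = make_pipeline(StandardScaler(),
                        SVC(kernel=kernel, C=1.0, gamma="scale"))
    auc = cross_val_score(clf, X, y, cv=5, scoring="roc_auc")
    results[kernel] = auc.mean()

print(results)
```

The AUC here is computed from the SVM's decision values, so no probability calibration is needed for this comparison.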

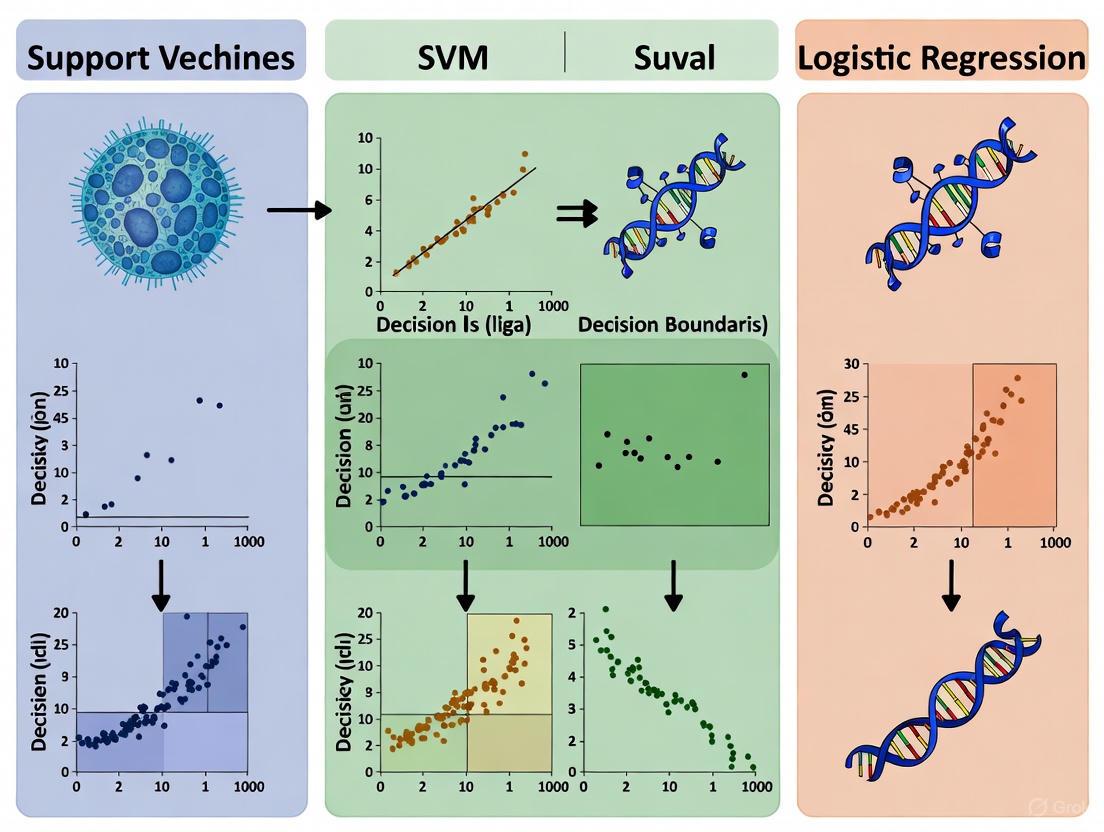

Visualization of Classification Approaches

[Figure: Algorithmic Decision Boundaries]

[Figure: scRNA-seq Classification Workflow]

Table 3: Key Resources for scRNA-seq Cell Type Annotation

| Resource | Function | Example Tools/Databases |
|---|---|---|
| Marker Gene Databases | Provide prior knowledge linking genes to cell types | singleCellBase, CellMarker, PanglaoDB [4] |
| Reference Atlases | Well-annotated datasets for comparison | Tabula Sapiens, Azimuth references [3] |
| Programming Frameworks | Implement analysis pipelines | Scanpy, Seurat, AnnDictionary [8] |
| LLM Integration Tools | Automated annotation using language models | GPTCelltype, CellAnnotator, LICT [3] [9] [5] |
| Benchmarking Platforms | Compare method performance | AnnDictionary, custom evaluation scripts [8] |

Manual cell annotation remains a significant bottleneck in scRNA-seq workflows due to its labor-intensive nature, subjectivity, and dependence on scarce domain expertise [2] [3]. While machine learning approaches like logistic regression and SVM offer automated alternatives, their performance depends heavily on data characteristics, implementation details, and the availability of high-quality training data.

The emergence of LLM-based annotation tools represents a promising direction, potentially combining the broad knowledge base of manual annotation with the scalability of automated methods [3] [5] [8]. However, these tools require validation by human experts to mitigate risks of artificial intelligence hallucination [3].

Future methodological development should focus on hybrid approaches that leverage the strengths of multiple methods, with rigorous benchmarking against manually curated gold standards. As single-cell technologies continue to evolve, overcoming the annotation bottleneck will be crucial for realizing the full potential of scRNA-seq in both basic research and therapeutic development.

In single-cell RNA sequencing (scRNA-seq) research, accurate cell type annotation is a fundamental prerequisite for analyzing cellular heterogeneity, understanding disease mechanisms, and identifying novel therapeutic targets. Machine learning algorithms, particularly Support Vector Machines (SVM) and Logistic Regression, have become cornerstone computational methods for this classification task. These supervised learning models are trained on reference datasets with known cell labels to learn patterns in high-dimensional gene expression data, enabling them to classify new, unlabeled cells efficiently. The selection between these algorithms significantly impacts annotation accuracy, computational efficiency, and biological interpretability, making it a critical consideration for researchers and drug development professionals analyzing complex single-cell transcriptomics data.

Core Mathematical Principles

Logistic Regression: A Probabilistic Approach

Logistic Regression is a linear classification model that relies on probabilistic principles to perform classification. Its core objective is to model the probability that a given single-cell expression profile belongs to a particular cell type. The model computes a weighted sum of input features (gene expression values), where each gene is assigned a coefficient that quantifies its contribution to cell type identification. The model transforms this linear combination using the sigmoid function, which outputs a value between 0 and 1, representing the predicted probability of class membership.

The decision boundary in Logistic Regression is linear and determined by setting a probability threshold (typically 0.5). Cells falling on one side of this boundary are classified into one category, while those on the opposite side are assigned to the alternative category. A key advantage of this approach for biological research is the inherent interpretability of the model parameters. The magnitude and sign of each coefficient provide direct insight into which genes are most influential in distinguishing specific cell populations, allowing researchers to identify potential biomarker genes for further experimental validation [10] [11].
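The weighted sum, sigmoid transform, and 0.5 threshold described above fit in a few lines. The coefficients and bias below are invented for illustration (they are not fitted values); the gene symbols are used only as familiar T cell and B cell markers.

```python
import math

def sigmoid(z):
    """Map any real number to the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical coefficients for a "T cell vs. B cell" model (not fitted).
weights = {"CD3E": 1.8, "CD19": -2.1, "MS4A1": -1.5}
bias = 0.2

def predict_proba(expression):
    """Probability that a cell is a T cell, given its gene expression."""
    z = bias + sum(w * expression.get(gene, 0.0) for gene, w in weights.items())
    return sigmoid(z)

cell = {"CD3E": 2.0, "CD19": 0.0, "MS4A1": 0.1}
p = predict_proba(cell)
label = "T cell" if p >= 0.5 else "B cell"

# Genes ranked by absolute coefficient, i.e. by influence on the decision.
ranked = sorted(weights, key=lambda g: abs(weights[g]), reverse=True)
print(round(p, 3), label, ranked)
```

A positive coefficient pushes the prediction toward the positive class as expression rises, and a negative one pushes it away, which is what makes the fitted weights readable as candidate marker genes.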

Support Vector Machines: The Maximum Margin Classifier

Support Vector Machines employ a fundamentally different strategy centered on finding the optimal separating hyperplane that maximizes the margin between different cell types in a high-dimensional feature space. Unlike Logistic Regression, which models class probabilities, SVM focuses exclusively on identifying the decision boundary that provides the greatest separation between the closest observations of different classes, known as support vectors.

A critical innovation in SVM is the kernel trick, which enables the algorithm to project non-linearly separable data into higher dimensions where effective linear separation becomes possible without explicitly computing the coordinates in the new space. For single-cell data, which often contains complex, non-linear relationships between genes and cell states, the Radial Basis Function (RBF) kernel is particularly valuable, as it can capture intricate patterns in gene expression that may not be apparent in the original feature space [10]. This capability makes SVM exceptionally powerful for classifying cell types with subtle transcriptional differences, though it often comes at the cost of reduced model interpretability compared to Logistic Regression.
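For reference, the RBF kernel itself is a simple similarity function, K(x, y) = exp(-γ‖x − y‖²). The sketch below evaluates it for three toy "cells" with two features each; the points and the value of `gamma` are arbitrary choices for illustration.

```python
import numpy as np

def rbf_kernel(A, B, gamma):
    """Return K with K[i, j] = exp(-gamma * ||A[i] - B[j]||^2)."""
    sq_dists = (
        (A ** 2).sum(axis=1)[:, None]
        + (B ** 2).sum(axis=1)[None, :]
        - 2.0 * A @ B.T
    )
    # Clip tiny negative values caused by floating-point round-off.
    return np.exp(-gamma * np.maximum(sq_dists, 0.0))

cells = np.array([[0.0, 0.0],
                  [1.0, 0.0],
                  [3.0, 4.0]])
K = rbf_kernel(cells, cells, gamma=0.5)
print(np.round(K, 4))
```

Nearby cells get a kernel value close to 1 and distant cells a value close to 0. A larger `gamma` makes the similarity fall off faster, which yields a more flexible but more overfitting-prone boundary.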

Table 1: Fundamental Principles of SVM and Logistic Regression

| Characteristic | Logistic Regression | Support Vector Machine (SVM) |
|---|---|---|
| Core Objective | Model class probability | Find maximum-margin decision boundary |
| Decision Boundary | Linear | Linear or non-linear (via kernels) |
| Output Type | Probability (0-1) | Class label plus signed distance from the decision boundary |
| Key Strength | Highly interpretable coefficients | Handles complex, non-linear relationships |
| Primary Optimization | Maximum likelihood estimation | Margin maximization |
| Kernel Trick Application | Not typically used | Frequently used (e.g., RBF kernel) |

Performance Comparison in Single-Cell Applications

Direct Benchmarking Studies

Recent comprehensive evaluations demonstrate that both SVM and Logistic Regression deliver robust performance in single-cell annotation tasks, though with notable differences in their effectiveness across various datasets. A 2025 comparative study evaluated seven machine learning techniques across four diverse single-cell datasets and found that SVM consistently outperformed other methods, ranking as the top performer in three out of the four datasets. The same study noted that Logistic Regression was the second-most effective algorithm, closely following SVM in classification accuracy [11].

These performance patterns are consistent with earlier research in genomics. A study on hypertension prediction using genotype information found that SVM significantly outperformed Logistic Regression in prediction accuracy, particularly as model complexity increased. The researchers observed that Logistic Regression models were more susceptible to overfitting when additional single-nucleotide polymorphisms (SNPs) were included, while SVM maintained more stable performance on test datasets [10].

Handling High-Dimensional Single-Cell Data

Single-cell RNA-seq data presents unique challenges for classification algorithms due to its high-dimensional nature: each cell is described by thousands of genes (features), and in smaller datasets the number of genes can exceed the number of cells (observations). In this context, SVM demonstrates particular advantages through implementations like the ActiveSVM framework, which efficiently identifies minimal gene sets capable of accurately classifying cell types. This approach iteratively selects maximally informative genes by analyzing misclassified cells, enabling the discovery of compact gene signatures (e.g., 15-150 genes) that maintain high classification accuracy (above 85-90%) even in datasets containing over 1.3 million cells [12].

Logistic Regression remains highly valuable in scenarios where feature interpretability is prioritized. The model's coefficients directly indicate the direction and strength of each gene's association with specific cell types, providing biologically interpretable insights. However, effective application typically requires careful feature selection or regularization (L1/L2 penalty) to mitigate overfitting in high-dimensional spaces [10] [11].
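The effect of regularization strength in a "more genes than cells" setting can be explored with a small experiment like the one below. The data are synthetic and the three values of `C` are arbitrary; in scikit-learn a smaller `C` means a stronger L2 penalty.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Synthetic stand-in: 150 cells, 500 genes, only 10 of them informative.
X, y = make_classification(n_samples=150, n_features=500, n_informative=10,
                           n_redundant=0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=1 / 3, stratify=y, random_state=0)

results = {}
for C in (0.001, 1.0, 1000.0):  # strong, moderate, and weak regularization
    clf = LogisticRegression(C=C, max_iter=5000).fit(X_train, y_train)
    results[C] = {"train": clf.score(X_train, y_train),
                  "test": clf.score(X_test, y_test)}

for C, acc in results.items():
    print(C, acc)
```

A training accuracy far above the test accuracy is the usual signature of overfitting. In practice the penalty strength would be chosen by cross-validation rather than by inspecting the test set.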

Table 2: Performance Comparison from Experimental Studies

| Study Context | Logistic Regression Performance | SVM Performance | Experimental Notes |
|---|---|---|---|
| Cell Annotation (2025 Benchmark) | Second-highest accuracy, closely following SVM | Top performer in 3/4 datasets; highest overall accuracy | Evaluation across 4 diverse scRNA-seq datasets with hundreds of cell types [11] |
| Hypertension Prediction (Genotype Data) | Higher testing errors with >10 SNPs; overfitting issues | Outperformed logistic regression; comparable to permanental classification | Analysis of 62,735 SNPs; SVM showed better resistance to overfitting [10] |
| Minimal Gene Set Identification | Not primary for feature selection | ActiveSVM identified 15-gene sets with >85% accuracy for PBMC classification | Enabled analysis of 1.3M cells with minimal computational resources [12] |
| Hierarchical Classification | Baseline for comparison | Linear SVM outperformed one-class SVM (HF1-score: >0.9 vs ~0.8) | Evaluation on Allen Mouse Brain dataset with 92 cell populations [13] |

Experimental Protocols and Methodologies

Standard Single-Cell Classification Pipeline

The experimental workflow for comparing classification algorithms in single-cell studies follows a structured pipeline to ensure fair evaluation. Researchers typically begin with raw count data from scRNA-seq experiments, followed by quality control to remove low-quality cells and genes. Normalization (e.g., log(CP10K)) addresses varying sequencing depths, and feature selection identifies highly variable genes to reduce dimensionality. The labeled dataset is then split into training (80%) and testing (20%) sets, with the training set used to optimize model parameters through cross-validation. For Logistic Regression, this involves tuning regularization strength and penalty type (L1/L2), while for SVM, parameters like regularization (C) and kernel parameters (γ for RBF) are optimized. Finally, models are evaluated on the held-out test set using metrics like accuracy, F1-score, and area under the ROC curve [12] [11].
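The split-train-evaluate portion of this pipeline might look like the following sketch. It uses synthetic data in place of a preprocessed expression matrix and default hyperparameters for both models, so the printed numbers say nothing about real benchmarks.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, f1_score
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

# Synthetic stand-in for a labeled, normalized cells x genes matrix.
X, y = make_classification(n_samples=500, n_features=100, n_informative=15,
                           n_classes=4, n_clusters_per_class=1, random_state=0)

# 80/20 split, stratified so each cell type appears in both sets.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

models = {
    "logistic_regression": LogisticRegression(max_iter=2000),
    "svm_rbf": SVC(kernel="rbf", C=1.0, gamma="scale"),
}

report = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    pred = model.predict(X_test)
    report[name] = {
        "accuracy": accuracy_score(y_test, pred),
        # Macro-F1 weights every cell type equally, including rare ones.
        "macro_f1": f1_score(y_test, pred, average="macro"),
    }

print(report)
```

The test set is touched only once, after all tuning is finished; reusing it for model selection would inflate the reported scores.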

Model-Specific Configurations

For Logistic Regression implementations, studies typically employ L2 regularization (Ridge) to prevent overfitting in high-dimensional gene expression space, with a cap on the number of solver iterations (e.g., 100) to bound training time. The model is fit by minimizing cross-entropy loss, typically with stochastic gradient descent or the L-BFGS algorithm [11].

SVM implementations for single-cell data frequently utilize the Radial Basis Function (RBF) kernel to capture non-linear relationships in gene expression patterns. Parameter tuning for SVM involves identifying optimal values for the regularization parameter C (controlling margin strictness) and γ (controlling kernel width), typically through grid search with 10-fold cross-validation on the training data [10] [11]. For large-scale single-cell datasets, linear SVM variants are sometimes preferred for computational efficiency while maintaining competitive performance.
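A grid search over C and γ with 10-fold cross-validation can be sketched as follows. The grid values and the synthetic dataset are assumptions chosen to keep the example fast; real studies typically search wider, log-spaced ranges.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for the training portion of a labeled dataset.
X, y = make_classification(n_samples=200, n_features=30, n_informative=8,
                           n_classes=3, n_clusters_per_class=1, random_state=0)

pipe = Pipeline([
    ("scale", StandardScaler()),   # RBF kernels are sensitive to feature scale
    ("svm", SVC(kernel="rbf")),
])

# Illustrative grid; the "svm__" prefix routes each parameter to the SVC step.
param_grid = {
    "svm__C": [0.1, 1, 10],
    "svm__gamma": [0.001, 0.01, 0.1],
}

search = GridSearchCV(pipe, param_grid, cv=10, scoring="accuracy")
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```

Keeping the scaler inside the pipeline means it is refit on each training fold, so the cross-validated score is not contaminated by information from the held-out fold.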

Decision Framework and Research Applications

Selection Guidelines for Research Applications

The choice between SVM and Logistic Regression depends on multiple factors specific to the research objectives and dataset characteristics, including the need for interpretable coefficients, the expected non-linearity of the boundaries between cell types, and the size of the dataset.

Advanced Applications in Single-Cell Research

Both algorithms have been adapted for specialized applications in single-cell research. SVM has been successfully implemented in hierarchical classification frameworks like scHPL, which progressively learns cell identities across multiple datasets at different annotation resolutions. This approach leverages the hierarchical relationships between cell types to improve classification accuracy for closely related cell subtypes [13]. Similarly, ActiveSVM has demonstrated remarkable efficiency in identifying minimal gene sets for targeted single-cell sequencing, dramatically reducing sequencing costs while maintaining classification accuracy [12].

Logistic Regression has evolved to address specialized challenges, including the development of one-class Logistic Regression models for identifying novel cell states without reference data. This approach has proven valuable for detecting stem-like cells in tumor microenvironments, revealing cell populations that might be missed through conventional annotation methods [14] [15]. The probabilistic nature of Logistic Regression also makes it particularly suitable for uncertainty quantification in cell type assignment, allowing researchers to flag borderline cells for further investigation.
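The idea of flagging borderline cells can be illustrated with predicted class probabilities. In this sketch the reference and query sets are both drawn from synthetic data, and the 0.7 confidence threshold is an arbitrary value chosen for the example, not a recommended cutoff.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Synthetic stand-in: a labeled reference set and an unlabeled query set.
X, y = make_classification(n_samples=400, n_features=40, n_informative=10,
                           n_classes=3, n_clusters_per_class=1, random_state=0)
X_ref, X_query, y_ref, _ = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

clf = LogisticRegression(max_iter=2000).fit(X_ref, y_ref)

proba = clf.predict_proba(X_query)           # one row of probabilities per cell
confidence = proba.max(axis=1)               # probability of the top class
predicted = clf.classes_[proba.argmax(axis=1)]

threshold = 0.7                              # illustrative cutoff
borderline = confidence < threshold
print(f"{borderline.sum()} of {len(X_query)} query cells flagged for review")
```

Flagged cells can then be labeled "unassigned" or inspected manually instead of being forced into the nearest known type.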

Essential Research Reagents and Computational Tools

Table 3: Essential Research Toolkit for Single-Cell Classification Studies

| Tool/Resource | Category | Function in Classification | Example Implementations |
|---|---|---|---|
| Annotated Reference Datasets | Biological Data | Training and benchmarking models for supervised classification | Human Cell Atlas, Tabula Muris, PanglaoDB [16] [11] |
| Quality Control Metrics | Computational Tools | Ensuring data integrity before classification | Seurat (nFeature_RNA, percent.mt), Scanpy [14] [11] |
| Feature Selection Algorithms | Computational Methods | Identifying informative genes to improve classification performance | Highly Variable Genes (HVG), ActiveSVM, PCA [12] [11] |
| Model Validation Frameworks | Statistical Methods | Assessing performance and generalizability of classifiers | k-fold Cross-Validation, Train-Test Splits, Hierarchical F1-score [10] [13] |
| Single-Cell Software Ecosystems | Computational Platforms | Providing integrated environments for classification analysis | Seurat, Scanpy, SingleCellNet, scHPL [13] [11] |

SVM and Logistic Regression offer complementary strengths for single-cell classification tasks. SVM generally provides superior accuracy for complex, non-linear classification problems, and its linear variants scale efficiently to large datasets, while Logistic Regression offers greater interpretability and more natural probability calibration. The optimal choice depends on specific research priorities, with SVM favored for maximum classification performance and Logistic Regression preferred when biological interpretability and feature importance analysis are paramount. As single-cell technologies continue to evolve, both algorithms will remain essential components in the computational toolkit for deciphering cellular heterogeneity in health and disease.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by decoding gene expression profiles at the individual cell level, revealing cellular heterogeneity in unprecedented detail. This technology has become an indispensable tool for understanding embryonic development, immune regulation, and tumor progression. However, the high-dimensionality, technical noise, and inherent sparsity of single-cell data pose significant challenges for computational classification methods. Within this landscape, researchers must navigate the complex trade-offs between various machine learning approaches to accurately identify cell types and states. This article examines the performance of Support Vector Machines (SVM) against other classifiers, with particular attention to their application in single-cell research, and provides an objective comparison grounded in experimental data.

Characteristics of Single-Cell Data That Challenge Classification

The analysis of single-cell data introduces several unique characteristics that complicate the task of classification algorithms:

- High Dimensionality: Single-cell technologies routinely measure the expression of thousands of genes across tens of thousands of cells, creating data matrices of immense scale that demand computationally efficient analysis methods [17].

- Data Sparsity: The limited biological material per cell leads to a high prevalence of zero values (dropouts), where transcripts are present but undetected, creating substantial uncertainty in measurements [17].

- Technical Noise: Amplification artifacts, batch effects, and protocol-specific biases introduce systematic errors that can obscure biological signals and mislead classifiers [17] [18].

- Continuous Biological Processes: Cell states often exist on a continuum of differentiation or activation, defying simple discrete categorization and requiring algorithms that can infer trajectories and transitional states [17].

These characteristics collectively demand classifiers that are robust to noise, capable of handling high-dimensional sparse matrices, and sensitive enough to detect subtle biological differences in the presence of substantial technical variation.

SVM vs. Logistic Regression: A Theoretical Framework for Single-Cell Applications

Support Vector Machines (SVM)

SVMs are supervised learning models that identify the optimal hyperplane to separate classes in a high-dimensional space. Their theoretical advantages for single-cell data include:

- Effectiveness in High-Dimensional Spaces: SVMs remain effective even when the number of features (genes) far exceeds the number of samples (cells), a common scenario in scRNA-seq analysis [19].

- Memory Efficiency: By utilizing only a subset of training points (support vectors) in the decision function, SVMs conserve computational resources [19].

- Kernel Versatility: Non-linear kernel functions enable SVMs to handle complex, non-linear relationships in gene expression data [19].

Principal disadvantages include sensitivity to feature selection, the computational expense of probability calibration, and potential over-fitting when the number of features greatly exceeds samples without proper regularization [19].
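Because an SVM outputs decision values rather than probabilities, obtaining probabilities requires an extra calibration step. One option in scikit-learn is `CalibratedClassifierCV`, sketched below with a linear SVM on synthetic data. The sigmoid (Platt-style) method and the 5 folds are choices made for this example.

```python
import numpy as np
from sklearn.calibration import CalibratedClassifierCV
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.svm import LinearSVC

# Synthetic two-class stand-in for a normalized expression matrix.
X, y = make_classification(n_samples=400, n_features=40, n_informative=10,
                           n_clusters_per_class=1, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

svm = LinearSVC(C=1.0, max_iter=10000)

# Fits the SVM on each training fold and a sigmoid mapping from decision
# values to probabilities on the corresponding held-out fold.
calibrated = CalibratedClassifierCV(svm, method="sigmoid", cv=5)
calibrated.fit(X_train, y_train)

proba = calibrated.predict_proba(X_test)
print(np.round(proba[:3], 3))
```

The cost is visible in the structure: with `cv=5` the underlying SVM is trained five times, which is the overhead referred to above.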

Logistic Regression

Logistic Regression (LR) models the probability of class membership using a logistic function. Although LR features less prominently than SVM in the single-cell studies surveyed here, bibliometric analysis indicates that machine learning approaches continue to be compared against logistic regression in biological domains [20]. In high-dimensional single-cell data, LR may be destabilized by correlated features and typically requires substantial regularization to prevent overfitting.

Table 1: Theoretical Comparison of SVM and Logistic Regression for Single-Cell Data

| Characteristic | Support Vector Machines | Logistic Regression |
|---|---|---|
| High-dimensional handling | Excellent (utilizes support vectors) | Requires strong regularization |
| Non-linear separation | Strong (via kernel trick) | Limited (without feature engineering) |
| Probability outputs | Computationally expensive (estimated via internal 5-fold cross-validation) | Native probability estimates |
| Feature selection importance | Critical for performance [21] | Beneficial but less critical |
| Overfitting risk | Moderate (controlled by regularization) | High in high-dimensional spaces |

Experimental Performance Benchmarking

Pan-Cancer RNA-seq Classification

A comprehensive evaluation of machine learning algorithms on RNA-seq gene expression data provides compelling evidence for SVM performance in biological classification tasks. The study assessed eight classifiers—including SVM, K-Nearest Neighbors, AdaBoost, Random Forest, Decision Tree, Quadratic Discriminant Analysis, Naïve Bayes, and Artificial Neural Networks—on the PANCAN dataset from the UCI Machine Learning Repository [22].

Using a 70/30 train-test split and 5-fold cross-validation, the study reported that SVM achieved the highest classification accuracy, 99.87% under 5-fold cross-validation, outperforming all other tested models [22]. This result highlights SVM's capability to handle complex gene expression patterns across different cancer types.

Table 2: Experimental Performance of Classifiers on RNA-seq Data [22]

| Classifier | Reported Accuracy | Validation Method |
|---|---|---|
| Support Vector Machine | 99.87% | 5-fold cross-validation |
| Random Forest | Not specified | 5-fold cross-validation |
| Decision Tree | Not specified | 5-fold cross-validation |
| AdaBoost | Not specified | 5-fold cross-validation |
| K-Nearest Neighbors | Not specified | 5-fold cross-validation |
| Quadratic Discriminant Analysis | Not specified | 5-fold cross-validation |
| Naïve Bayes | Not specified | 5-fold cross-validation |
| Artificial Neural Networks | Not specified | 5-fold cross-validation |

Single-Cell Specific Applications

While the aforementioned study utilized bulk RNA-seq data, its implications for single-cell analysis are significant. Bibliometric research tracking 3,307 publications at the intersection of machine learning and single-cell transcriptomics confirms that SVM, alongside Random Forest and deep learning models, represents a core analytical tool in this domain [23]. The integration of SVM with specialized feature selection techniques has proven particularly valuable for addressing the high-dimensionality of single-cell data.

Critical Methodological Considerations for Single-Cell Classification

Feature Selection Strategies

Optimal feature selection is crucial for SVM performance with single-cell data. Effective techniques include:

- Recursive Feature Elimination (RFE): Iteratively refits the model and removes the least important features, ranked by model-derived weights such as coefficient magnitudes; particularly effective with linear SVM kernels [21].

- Forward Feature Selection: Builds feature sets incrementally, adding the most beneficial features at each step [21].

- Backward Feature Selection: Starts with all features and eliminates the least valuable ones sequentially [21].

Implementation of RFE with SVM on the Breast Cancer Wisconsin dataset demonstrated how feature selection maintains high accuracy (94.7%) while significantly reducing model complexity [21].
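A sketch of RFE with a linear SVM on the Breast Cancer Wisconsin dataset bundled with scikit-learn is given below. The split, the scaling step, and the choice of 10 retained features are our assumptions, so this is not a reproduction of the cited 94.7% result and the accuracy it prints may differ.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.feature_selection import RFE
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

data = load_breast_cancer()
X_train, X_test, y_train, y_test = train_test_split(
    data.data, data.target, test_size=0.2, stratify=data.target,
    random_state=0)

# Scale features so that coefficient magnitudes are comparable.
scaler = StandardScaler().fit(X_train)
X_train_s = scaler.transform(X_train)
X_test_s = scaler.transform(X_test)

# A linear kernel exposes coef_, which RFE uses to rank features;
# one feature is dropped per iteration until 10 remain.
selector = RFE(SVC(kernel="linear", C=1.0), n_features_to_select=10, step=1)
selector.fit(X_train_s, y_train)

selected = data.feature_names[selector.support_]
accuracy = selector.score(X_test_s, y_test)
print(list(selected))
print(round(accuracy, 3))
```

The same pattern applies to expression data, where `selector.support_` would mark the retained genes. With thousands of genes, a larger `step` is usually needed to keep the number of refits manageable.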

Experimental Workflow for Single-Cell Classification

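A standardized workflow for implementing SVM classification in single-cell studies proceeds from quality control and normalization, through feature selection and model training with cross-validation, to evaluation on held-out cells.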

Reference Dataset Selection

For cancer classification, the selection of appropriate reference datasets of normal cells critically impacts performance. A benchmarking study of scRNA-seq copy number variation callers found that methods incorporating allelic information (like CaSpER and Numbat) performed more robustly for large droplet-based datasets, though with increased computational requirements [24]. This principle extends to gene expression-based classification, where careful reference selection reduces technical artifacts.

Table 3: Key Experimental Resources for Single-Cell Classification Studies

| Resource | Function | Example Applications |
|---|---|---|
| Droplet-based scRNA-seq platforms (Drop-seq, 10X Genomics) | High-throughput single-cell transcriptome profiling | Cell atlas construction, tumor heterogeneity studies [18] |
| Reference datasets (e.g., Human Cell Atlas) | Normalization baseline, classifier training | Identification of rare cell populations, cancer cell detection [24] |
| SVM implementations (scikit-learn, LIBSVM) | Model training and prediction | Cell type classification, gene signature identification [19] |
| Feature selection algorithms (RFE, SequentialFeatureSelector) | Dimensionality reduction | Improving classifier performance, identifying biomarker genes [21] |
| Benchmarking pipelines (e.g., Snakemake workflows) | Method validation and comparison | Objective performance assessment across multiple datasets [24] |

Discussion and Future Perspectives

The integration of machine learning, particularly SVM, with single-cell transcriptomics represents a rapidly evolving frontier. Bibliometric analysis reveals China and the United States dominate research output (combined 65%), with the Chinese Academy of Sciences and Harvard University emerging as core collaboration hubs [23]. Future development should focus on overcoming current technical bottlenecks, including data heterogeneity, model interpretability, and cross-dataset generalization capability [23].

As single-cell technologies mature toward multi-omic assays—simultaneously measuring transcriptomics, epigenomics, and proteomics—classifiers must adapt to integrate these complementary data modalities. Deep learning architectures show particular promise for this integration, though SVM remains relevant for its interpretability and efficiency with limited sample sizes [23] [17].

Within the challenging landscape of single-cell data, Support Vector Machines demonstrate distinct advantages for classification tasks, particularly their effectiveness with high-dimensional data and their flexibility through kernel functions. Experimental evidence shows that SVM can reach very high accuracy (99.87%) in gene expression-based cancer classification from bulk RNA-seq [22]. However, this performance is contingent upon appropriate feature selection, careful experimental design, and proper normalization against relevant reference data. As single-cell technologies continue to evolve, classifier selection must remain attuned to the specific characteristics of the biological question, dataset scale, and required interpretability. While newer deep learning approaches show promise for increasingly complex integration tasks, SVM maintains a strong position in the computational toolkit of single-cell researchers seeking robust, interpretable classification.

In the field of single-cell RNA sequencing (scRNA-seq) analysis, cell type classification is a fundamental task for understanding cellular heterogeneity. The choice between Support Vector Machines (SVM) and Logistic Regression (LR) involves critical trade-offs between predictive performance, computational efficiency, and interpretability. This guide provides an objective comparison of these algorithms, synthesizing experimental data from recent benchmarking studies to inform researchers and drug development professionals.

Quantitative analyses reveal that SVM can achieve superior accuracy in complex, high-dimensional classification tasks, with one study reporting up to 99.87% accuracy in cancer type classification [22]. Conversely, LR demonstrates strong performance in clinical prediction scenarios with structured data, sometimes outperforming more complex machine learning models, and offers advantages in interpretability and speed [25] [26]. The optimal choice is highly context-dependent, influenced by dataset size, biological complexity, and computational constraints.

Performance Comparison: SVM vs. Logistic Regression

The table below summarizes key performance metrics from recent experimental benchmarks comparing SVM and LR in biological classification tasks.

Table 1: Comparative Performance of SVM and Logistic Regression

| Study Context | Algorithm | Key Performance Metric | Reported Result | Experimental Notes |

|---|---|---|---|---|

| Cancer Type Classification from RNA-seq [22] | Support Vector Machine (SVM) | Accuracy | 99.87% | 5-fold cross-validation on UCI PANCAN dataset |

| Cancer Type Classification from RNA-seq [22] | Logistic Regression | Accuracy | Not top performer | Outperformed by SVM, Random Forest, and other models |

| Osteoporosis Risk Prediction [25] | Logistic Regression | AUC (Area Under Curve) | 0.751 | Model included 9 predictors (age, sex, genetic factors, etc.) |

| Osteoporosis Risk Prediction [25] | Support Vector Machine (SVM) | AUC | 0.72 | Trained on data from 211 high cardiovascular-risk patients |

| Single-Cell Annotation (Active Learning) [26] | Random Forest | Accuracy | Outperformed LR | Active learning context; LR served as the benchmarked baseline |

| Single-Cell Annotation (Active Learning) [26] | Logistic Regression | Speed / Interpretability | Advantage | Simpler model, faster training, more interpretable coefficients |

Experimental Protocols and Methodologies

Protocol 1: High-Accuracy SVM for Cancer Classification

The study demonstrating 99.87% SVM accuracy employed a rigorous computational workflow [22]:

- Data Source: The PANCAN RNA-seq dataset from the UCI Machine Learning Repository.

- Data Preprocessing: Standard normalization of gene expression values.

- Model Training: Eight different classifiers, including SVM, were evaluated.

- Validation: A 70/30 train-test split was used alongside 5-fold cross-validation to assess robustness and detect overfitting.

- Performance Measurement: Classification accuracy was calculated on the held-out test set.

This protocol highlights SVM's strength in handling high-dimensional genomic data for complex discrimination tasks.
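The validation scheme in this protocol (a 70/30 split combined with 5-fold cross-validation) can be sketched as follows. The data here are a synthetic stand-in generated with `make_classification`, not the PANCAN dataset, and the linear kernel, `C=1.0`, and standard scaling are assumptions, since the text does not specify the study's exact settings.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic samples-by-genes matrix (assumption: stands in for real expression data).
X, y = make_classification(
    n_samples=400, n_features=500, n_informative=40,
    n_classes=5, n_clusters_per_class=1, random_state=0,
)

# 70/30 train-test split, stratified so each class appears in both parts.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=0
)

clf = make_pipeline(StandardScaler(), SVC(kernel="linear", C=1.0))

# 5-fold cross-validation on the training portion only.
cv_scores = cross_val_score(clf, X_train, y_train, cv=5)

# Final fit on the full training portion, scored once on the held-out 30%.
clf.fit(X_train, y_train)
test_accuracy = clf.score(X_test, y_test)
print(f"CV accuracy: {cv_scores.mean():.3f}, held-out accuracy: {test_accuracy:.3f}")
```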

Protocol 2: Logistic Regression for Clinical Risk Prediction

The study where LR outperformed machine learning models, including SVM, focused on predicting osteoporosis in a high-risk clinical cohort [25]:

- Study Design: A cross-sectional investigation of 211 patients at high risk for cardiovascular diseases.

- Predictors: The model integrated nine demographic, clinical, and genetic variables (e.g., age, sex, fracture history, copy number variants).

- Model Comparison: LR was compared against four machine learning models: SVM, Random Forest, Decision Tree, and XGBoost.

- Evaluation Metrics: Models were compared using the Area Under the Receiver Operating Characteristic Curve (AUC) and calibration metrics (Brier score).

- Interpretation: The resulting LR model provided well-calibrated risk probabilities and interpretable coefficient estimates for each predictor.
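The evaluation metrics named in this protocol can be computed as in the sketch below. The cohort is simulated: only the sample size (211) and the number of predictors (9) are borrowed from the text, and the values themselves are random, so the resulting numbers say nothing about the cited study.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import brier_score_loss, roc_auc_score
from sklearn.model_selection import train_test_split

# Simulated cohort (assumption): 211 "patients", 9 predictors.
X, y = make_classification(
    n_samples=211, n_features=9, n_informative=5, n_redundant=2, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

lr = LogisticRegression(max_iter=1000).fit(X_train, y_train)
risk = lr.predict_proba(X_test)[:, 1]  # predicted probability of the positive class

auc = roc_auc_score(y_test, risk)       # discrimination
brier = brier_score_loss(y_test, risk)  # calibration-sensitive; lower is better
print(f"AUC = {auc:.3f}, Brier score = {brier:.3f}")
print("coefficients (log-odds per unit of each predictor):", lr.coef_.round(2))
```

The coefficients printed at the end are what makes the model interpretable: each one is the change in log-odds associated with a one-unit change in its predictor.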

Protocol 3: Active Learning for Single-Cell Annotation

A comprehensive benchmarking study assessed classifiers, including LR, within an active learning framework for single-cell data [26]. This strategy selectively labels the most informative cells to maximize annotation efficiency.

- Initialization: The process begins with a small, randomly selected set of labeled cells.

- Uncertainty Sampling: A classifier (e.g., LR) is trained, and its predictive uncertainty is calculated for all unlabeled cells. Cells with the highest uncertainty (e.g., highest entropy or lowest maximum probability) are selected for expert annotation.

- Iteration: The newly labeled cells are added to the training set, and the classifier is retrained. This loop continues until a labeling budget is exhausted.

- Key Finding: In this active learning context, Random Forest models generally outperformed Logistic Regression [26]. This underscores that model performance is task-dependent, even within the single-cell domain.
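The loop described above (initialize, score uncertainty, label, retrain) can be sketched in a few lines. This is an illustration under stated assumptions rather than the benchmark's code: the data are synthetic, the "expert" is simulated by revealing the known labels, and the seed size (30), batch size (20), and budget (130) are arbitrary choices.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

X, y = make_classification(
    n_samples=600, n_features=50, n_informative=10,
    n_classes=3, n_clusters_per_class=1, random_state=0,
)
rng = np.random.default_rng(0)

# Initialization: a small random set of labeled cells.
labeled = [int(i) for i in rng.choice(len(X), size=30, replace=False)]
labeled_set = set(labeled)
unlabeled = [i for i in range(len(X)) if i not in labeled_set]
batch_size, budget = 20, 130

while len(labeled) < budget:
    clf = LogisticRegression(max_iter=1000).fit(X[labeled], y[labeled])
    proba = clf.predict_proba(X[unlabeled])
    # Uncertainty sampling: entropy of the predicted class distribution.
    entropy = -np.sum(proba * np.log(proba + 1e-12), axis=1)
    picked = np.argsort(entropy)[-batch_size:]  # most uncertain cells
    newly = [unlabeled[i] for i in picked]
    labeled.extend(newly)  # simulated expert annotation
    newly_set = set(newly)
    unlabeled = [i for i in unlabeled if i not in newly_set]

final_clf = LogisticRegression(max_iter=1000).fit(X[labeled], y[labeled])
print(f"labeled {len(labeled)} cells, accuracy on the rest: "
      f"{final_clf.score(X[unlabeled], y[unlabeled]):.3f}")
```

Swapping `LogisticRegression` for another scikit-learn classifier with `predict_proba` leaves the loop unchanged, which is how such benchmarks compare models under the same labeling budget.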

Table 2: Key Computational Tools for Single-Cell Classification

| Tool / Resource | Function | Relevance to SVM/LR |

|---|---|---|

| scikit-learn (Python) | Comprehensive machine learning library | Provides robust, optimized implementations for both SVM and Logistic Regression. |

| Single-Cell Atlases (e.g., Tabula Sapiens, Tabula Muris) | Reference datasets with curated cell labels | Essential as training data or benchmarks for developing and validating classifiers [27]. |

| Active Learning Frameworks | Reduces manual annotation effort | Algorithms can be wrapped around SVM or LR models to intelligently select cells for labeling, improving efficiency [26]. |

| UCI PANCAN | Curated RNA-seq dataset for cancer classification | A standard benchmark for evaluating classifier performance on high-dimensional genomic data [22]. |

| Cross-Validation (e.g., 5-fold) | Model validation technique | Critical for obtaining reliable, unbiased performance estimates, especially with limited data [22]. |

| AUC/ROC Analysis | Performance evaluation | Preferred over accuracy for imbalanced datasets; used to compare SVM and LR in clinical studies [25]. |

The competition between SVM and Logistic Regression for single-cell classification does not have a universal winner. The decision must be guided by the specific project goals, data characteristics, and resource constraints.

- Choose SVM when your primary objective is maximizing classification accuracy for a high-dimensional problem, such as discriminating closely related cell types or cancer subtypes from complex transcriptomic data, and computational resources are less constrained [22].

- Choose Logistic Regression when your task involves structured clinical or patient data, or when model interpretability, computational speed, and the ability to generate calibrated risk probabilities are critical [25] [26].

Future development in this area is likely to focus on hybrid and ensemble approaches that leverage the strengths of multiple algorithms, as well as the integration of these classical models into active learning frameworks to dramatically increase the efficiency of single-cell data annotation [26].

Building Your Classifier: A Step-by-Step Implementation Guide

Data Preprocessing and Feature Selection for Optimal Performance

Single-cell RNA sequencing (scRNA-seq) has revolutionized biology and medicine by enabling the detailed characterization of complex tissue composition, identification of new and rare cell types, and analysis of cellular responses to perturbations [11]. A critical step in scRNA-seq analysis is cell type annotation—the process of categorizing and labeling cells based on their gene expression profiles [11]. Accurate cell annotation is essential for studying disease progression, tumor microenvironments, and understanding cellular heterogeneity [11] [28].

In single-cell research, researchers must choose between various machine learning approaches for cell classification. Among traditional algorithms, Support Vector Machines (SVM) and Logistic Regression (LR) represent two important options with distinct characteristics. This guide provides an objective comparison of these methods specifically for single-cell classification tasks, supported by experimental data and detailed methodologies to inform researchers' analytical decisions.

Computational Foundations: SVM and Logistic Regression for Single-Cell Data

Algorithmic Principles in Biological Context

Support Vector Machines (SVM) operate by finding the optimal hyperplane that maximizes the margin between different cell types in high-dimensional gene expression space. When handling non-linearly separable single-cell data, SVM employs kernel functions (such as the Radial Basis Function) to implicitly map the data into a higher-dimensional space where effective separation becomes possible. This capability is particularly valuable for capturing complex relationships in high-dimensional scRNA-seq data [11].

Logistic Regression provides a probabilistic approach to cell classification by modeling the relationship between gene expression features and the probability of a cell belonging to a particular type using a logistic function. Despite being a linear classifier, its strength in single-cell analysis lies in its interpretability—feature weights directly indicate which genes contribute most significantly to cell type identification [11].

Experimental Evidence in Single-Cell Applications

A comprehensive 2025 comparative study evaluated multiple machine learning techniques for single-cell annotation across four diverse datasets comprising hundreds of cell types. The results revealed that SVM consistently outperformed other techniques, emerging as the top performer in three out of four datasets, followed closely by logistic regression [11]. Both methods demonstrated robust capabilities in annotating major cell types and identifying rare cell populations.

However, performance comparisons in other domains show context-dependent results. A 2025 study on osteoporosis risk prediction in high-risk cardiovascular patients found that logistic regression (AUC: 0.751) unexpectedly outperformed SVM (AUC: 0.72) and other machine learning models [25]. This suggests that dataset characteristics and biological context significantly influence model performance.

Performance Benchmarking: Experimental Data Comparison

Table 1: Comparative Performance of SVM and Logistic Regression in Classification Tasks

| Domain/Application | Dataset Characteristics | SVM Performance | Logistic Regression Performance | Key Metrics |

|---|---|---|---|---|

| Single-cell annotation [11] | 4 diverse datasets with hundreds of cell types | Top performer in 3/4 datasets | Close second, consistent performance | F1 scores, Accuracy |

| Osteoporosis prediction [25] | 211 patients, clinical & genetic data | AUC: 0.72 | AUC: 0.751 | AUC, Brier score |

| General scRNA-seq annotation [11] | Multiple tissues, cell types | Robust for major & rare cell types | Robust for major & rare cell types | Classification accuracy |

| Usher syndrome biomarker discovery [29] | 42,334 mRNA features | Robust classification performance | Robust classification performance | Feature selection stability |

Table 2: Computational Characteristics for Single-Cell Analysis

| Characteristic | Support Vector Machines (SVM) | Logistic Regression |

|---|---|---|

| Interpretability | Moderate (feature weights less directly interpretable) | High (direct gene importance weights) |

| Handling High-Dimensional Data | Excellent with appropriate kernels | Requires regularization for stability |

| Non-linear Relationships | Excellent with kernel tricks | Limited without feature engineering |

| Computational Efficiency | Lower for large datasets | Higher, especially with many cells |

| Probability Outputs | Requires Platt scaling | Native probabilistic output |

| Feature Selection Integration | Works well with various selection methods | Highly dependent on selected features |
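The "Probability Outputs" row can be made concrete with scikit-learn. In the sketch below, logistic regression returns probabilities directly, while a linear SVM is wrapped in `CalibratedClassifierCV` with `method="sigmoid"`, which is scikit-learn's implementation of Platt scaling. The synthetic data and the value `C=0.1` are assumptions for illustration.

```python
from sklearn.calibration import CalibratedClassifierCV
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.svm import LinearSVC

X, y = make_classification(
    n_samples=300, n_features=100, n_informative=15, random_state=0
)

# Logistic regression: probabilities come straight from the model.
lr = LogisticRegression(max_iter=1000).fit(X, y)
lr_proba = lr.predict_proba(X[:5])

# SVM: decision scores are mapped to probabilities by a sigmoid fitted
# with internal cross-validation (Platt scaling).
svm = CalibratedClassifierCV(
    LinearSVC(C=0.1, max_iter=5000), method="sigmoid", cv=5
).fit(X, y)
svm_proba = svm.predict_proba(X[:5])

print("LR probabilities:\n", lr_proba.round(3))
print("Calibrated SVM probabilities:\n", svm_proba.round(3))
```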

Methodological Framework: Experimental Protocols for Single-Cell Classification

Data Preprocessing Workflow

Proper data preprocessing is crucial for optimal performance of both SVM and logistic regression in single-cell analysis. The standard workflow includes:

Quality Control and Normalization: Initial processing requires filtering low-quality cells based on metrics like detected genes per cell, total molecule count, and mitochondrial gene expression percentage [28]. Normalization corrects for varying sequencing depths across cells, typically by scaling each cell to the same total count [30].
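A minimal NumPy sketch of these two steps is shown below. The count matrix is simulated, the mitochondrial gene mask is hypothetical, and the thresholds (at least 300 detected genes, at most 10% mitochondrial counts, a target of 10,000 counts per cell) are illustrative assumptions rather than recommended values. The final `log1p` step is a common follow-up transformation and is included only for completeness.

```python
import numpy as np

rng = np.random.default_rng(0)
n_cells, n_genes = 200, 1000

# Simulated counts with a different sequencing depth per cell (assumption).
depth = rng.uniform(0.2, 2.0, size=(n_cells, 1))
counts = rng.poisson(lam=depth, size=(n_cells, n_genes)).astype(float)

# Hypothetical mask marking the first 20 genes as mitochondrial.
mito_mask = np.zeros(n_genes, dtype=bool)
mito_mask[:20] = True

# Per-cell quality-control metrics.
genes_per_cell = (counts > 0).sum(axis=1)
total_counts = counts.sum(axis=1)
pct_mito = 100.0 * counts[:, mito_mask].sum(axis=1) / total_counts

# Keep cells passing both (illustrative) thresholds.
keep = (genes_per_cell >= 300) & (pct_mito <= 10.0)
filtered = counts[keep]

# Total-count normalization: every cell is scaled to the same total.
target_sum = 1e4
normalized = filtered / filtered.sum(axis=1, keepdims=True) * target_sum
log_norm = np.log1p(normalized)

print(f"kept {keep.sum()} of {n_cells} cells")
```

In practice these steps are usually run through Scanpy or Seurat; the arithmetic above is what such toolkits do conceptually, not a replacement for them.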

Feature Selection Strategies: For single-cell data, feature selection dramatically impacts classifier performance. The high dimensionality of scRNA-seq data (thousands of genes) necessitates selecting informative features. Approaches include:

- Highly Variable Genes (HVGs): Selects genes with high cell-to-cell variation [31] [32]

- Statistical Methods: Methods such as BigSur quantify biologically meaningful gene expression variation [31]

- Hybrid Sequential Selection: Combines variance thresholding, recursive feature elimination, and regularization (LASSO) [29]

For routine cell type identification where cell types differ greatly in gene expression, even randomly chosen features can perform well with sufficient features. However, for subtle distinctions (e.g., identifying T-regulatory cells representing 1.8% of cells), both the number of features and selection strategy strongly influence outcomes [31].
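As a rough illustration of variance-based gene selection, the sketch below ranks genes by their variance across cells and keeps the top 200. It is a deliberately simplified stand-in: the HVG procedures in Scanpy and Seurat additionally model the mean-variance relationship, and BigSur uses a different statistical framework. The expression matrix, the two simulated populations, and the number of genes kept are all assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
n_cells, n_genes, n_top = 300, 2000, 200

# Simulated log-normalized expression: mostly noise...
log_expr = rng.normal(0.0, 0.3, size=(n_cells, n_genes))

# ...plus 50 "marker" genes that differ between two hypothetical populations.
group = rng.integers(0, 2, size=n_cells)
log_expr[:, :50] += 2.0 * group[:, None]

# Rank genes by cell-to-cell variance and keep the most variable ones.
gene_var = log_expr.var(axis=0)
hvg_idx = np.argsort(gene_var)[::-1][:n_top]
X_hvg = log_expr[:, hvg_idx]

n_markers_found = len(set(range(50)) & set(hvg_idx.tolist()))
print(f"selected {X_hvg.shape[1]} genes, including {n_markers_found} of 50 markers")
```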

Model Training and Evaluation Protocol

Implementation Framework:

- Data Splitting: Split data into training (80%) and test (20%) sets [11]

- Hyperparameter Tuning: For SVM, optimize kernel choice (typically RBF) and regularization; for LR, select appropriate regularization strength [11]

- Cross-Validation: Use nested cross-validation to avoid optimistically biased performance estimates, particularly when combining with feature selection [29]

- Performance Assessment: Evaluate using F1 scores, accuracy, AUC-ROC curves, and confusion matrices
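The nested cross-validation step in this framework can be sketched as follows. Feature selection and scaling sit inside the pipeline so they are refit within every fold; the inner loop tunes hyperparameters and the outer loop estimates performance. The data are synthetic, and `SelectKBest` with an F-test, `k=50`, and the small parameter grid are assumptions chosen to keep the example fast.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(
    n_samples=300, n_features=300, n_informative=20,
    n_classes=3, n_clusters_per_class=1, random_state=0,
)

pipe = Pipeline([
    ("scale", StandardScaler()),
    ("select", SelectKBest(f_classif, k=50)),  # refit inside each fold
    ("svm", SVC(kernel="rbf")),
])
param_grid = {"svm__C": [0.1, 1, 10], "svm__gamma": ["scale", 0.01]}

inner = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)  # tuning
outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)  # evaluation

search = GridSearchCV(pipe, param_grid, cv=inner, scoring="f1_macro")
outer_scores = cross_val_score(search, X, y, cv=outer, scoring="f1_macro")
print(f"nested CV macro-F1: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```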

Experimental Considerations:

- For SVM, use the RBF kernel as the default for capturing non-linear relationships in gene expression

- For logistic regression, apply L1 or L2 regularization to handle high-dimensional gene space

- For both methods, scale features to ensure comparable influence across genes
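These considerations translate into two small scikit-learn pipelines, sketched below on an 80/20 split. The data are synthetic and the hyperparameters are library defaults or arbitrary assumptions, so the scores only demonstrate the mechanics and not the relative merit of the two models.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import confusion_matrix, f1_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(
    n_samples=500, n_features=200, n_informative=25,
    n_classes=4, n_clusters_per_class=1, random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.20, stratify=y, random_state=0
)

# Scaling lives inside each pipeline so it is learned from training data only.
models = {
    "svm_rbf": make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0, gamma="scale")),
    # LogisticRegression applies L2 regularization by default.
    "logreg_l2": make_pipeline(StandardScaler(), LogisticRegression(C=1.0, max_iter=2000)),
}

results = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    pred = model.predict(X_test)
    results[name] = {
        "macro_f1": f1_score(y_test, pred, average="macro"),
        "confusion": confusion_matrix(y_test, pred),
    }
    print(f"{name}: macro-F1 = {results[name]['macro_f1']:.3f}")
```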

Figure 1: Single-Cell Data Preprocessing and Model Selection Workflow

Table 3: Essential Computational Tools for Single-Cell Classification

| Tool/Resource | Type | Function in Single-Cell Classification | Implementation |

|---|---|---|---|

| DANCE [30] | Benchmark platform | Standardized evaluation of classification methods across datasets | Python |

| Scanpy [31] [32] | Analysis toolkit | Preprocessing, normalization, and basic classification | Python |

| Seurat [32] | Analysis toolkit | Single-cell preprocessing, integration, and classification | R |

| scikit-learn [11] | Machine learning library | Implementation of SVM and Logistic Regression | Python |

| CellMarker [28] | Biological database | Marker gene reference for annotation validation | Database |

| PanglaoDB [28] | Biological database | Curated marker genes for cell type identification | Database |

Advanced Considerations: Feature Selection Impact and Model Selection Guidelines

Feature Selection Influence on Classifier Performance

The interaction between feature selection methods and classifier performance is crucial in single-cell analysis. Benchmark studies show that feature selection methods significantly affect integration and querying performance in scRNA-seq analysis [32]. For both SVM and logistic regression, using appropriately selected features (typically 500-2000 genes) can substantially improve performance over using all genes or, in harder tasks, randomly selected features.

Unexpectedly, research demonstrates that for datasets where cell types of interest are relatively abundant and well-separated in gene expression space, randomly chosen genes often perform nearly as well as algorithmically-selected features if the gene set is large enough [31]. However, for challenging tasks like identifying rare cell populations or distinguishing subtly different cell types, feature selection strategy becomes critical.

Decision Framework for Method Selection

Figure 2: Model Selection Decision Framework for Single-Cell Classification

Based on current experimental evidence, SVM generally demonstrates superior performance for complex single-cell classification tasks with non-linear relationships, while logistic regression provides strong baseline performance with enhanced interpretability and computational efficiency [11] [25].

The emerging field of single-cell foundation models (scFMs) presents future opportunities for enhancing classification performance. These models leverage large-scale pretraining on massive single-cell datasets to learn universal biological knowledge, potentially offering improved performance across diverse downstream tasks including cell classification [33]. However, current benchmarks indicate that no single foundation model consistently outperforms others across all tasks, emphasizing the continued relevance of traditional methods like SVM and logistic regression for specific applications [33].

For researchers implementing single-cell classification pipelines, we recommend including both SVM and logistic regression in benchmarking studies, as their relative performance depends on specific dataset characteristics, including the number of cells, gene selection strategy, and biological complexity of the classification task.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the analysis of gene expression at the level of individual cells, revealing cellular heterogeneity and complex biological processes previously obscured in bulk sequencing data. A fundamental step in scRNA-seq analysis is cell type identification, which allows researchers to decipher cellular composition, identify rare cell populations, and understand disease mechanisms. While unsupervised clustering methods have been widely used, supervised machine learning approaches have gained increasing popularity due to their better accuracy, robustness, and computational performance, especially with the accumulation of well-annotated public scRNA-seq data [34].

Among supervised methods, Support Vector Machines (SVM) have emerged as a particularly powerful tool for cell classification. Recent comprehensive evaluations have revealed that SVM consistently outperforms other techniques, emerging as the top performer across multiple diverse datasets [11]. This guide provides a detailed examination of SVM configuration for single-cell data, with particular emphasis on kernel selection and parameter optimization, while objectively comparing its performance against logistic regression within the context of single-cell classification research.

Experimental Performance: SVM vs. Logistic Regression

Quantitative Performance Comparison

Recent large-scale benchmarking studies provide empirical data on the comparative performance of SVM and logistic regression for single-cell classification tasks. The table below summarizes key findings from comprehensive evaluations:

Table 1: Performance comparison of SVM and logistic regression in single-cell classification

| Evaluation Metric | SVM Performance | Logistic Regression Performance | Dataset Context | Citation |

|---|---|---|---|---|

| Overall Ranking | Top performer in 3 out of 4 datasets | Second, closely following SVM | Diverse tissues, hundreds of cell types | [11] |

| F1-Score | Consistently high across datasets | Robust but slightly lower than SVM | 42 disease-related datasets | [11] [35] |

| Accuracy | 75%+ for most cell types | Competitive but less consistent | 10 datasets across five species | [11] |

| Handling High-Dimensional Data | Excellent with appropriate kernels | Good but may require more feature selection | scRNA-seq data with ~20,000 genes | [34] [11] |

In a 2025 comparative study that evaluated seven traditional machine learning models for cell type annotation using single-cell gene expression data, SVM consistently outperformed other techniques, emerging as the top performer in three out of the four datasets tested, followed closely by logistic regression. Both methods demonstrated robust capabilities in annotating major cell types and identifying rare cell populations [11].

Experimental Protocols and Methodologies

The superior performance of SVM is contingent upon proper configuration. The experimental protocols underlying these comparisons typically follow this structured methodology:

Data Preprocessing: Raw scRNA-seq data undergoes quality control, normalization, and log-transformation using standardized pipelines (e.g., Scanpy or Seurat). The top 2000 highly variable genes are typically selected as features to capture key biological differences while reducing dimensionality [35].

Data Splitting: Datasets are divided into training (70-80%) and testing (20-30%) sets, with some studies employing a three-way split (70% training, 15% validation, 15% testing) for more robust model evaluation [36].

Model Training: Both SVM and logistic regression models are trained on the reference data, with careful attention to hyperparameter optimization through grid search or more advanced frameworks like Hyperopt or Optuna [36].

Cross-Validation: A 5-fold cross-validation strategy is often performed to examine the generalizability and robustness of the classification models [36].

Performance Evaluation: Models are evaluated on held-out test data using multiple metrics including accuracy, F1-score, and area under the receiver operating characteristic curve (AUROC) [37] [11].
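The three-way split mentioned in the data splitting step (70% training, 15% validation, 15% testing) can be produced with two calls to `train_test_split`, as sketched below on placeholder arrays. The array sizes and the four class labels are assumptions for illustration.

```python
import numpy as np
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 50))   # placeholder cells-by-features matrix
y = rng.integers(0, 4, size=1000)  # placeholder cell type labels

# First split: 70% training, 30% held back.
X_train, X_tmp, y_train, y_tmp = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=0
)
# Second split: the held-back 30% is halved into validation and test sets.
X_val, X_test, y_val, y_test = train_test_split(
    X_tmp, y_tmp, test_size=0.50, stratify=y_tmp, random_state=0
)

print(len(X_train), len(X_val), len(X_test))
```

Hyperparameters are then tuned against the validation set, and the test set is touched only once for the final performance estimate.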

Table 2: Typical experimental workflow for SVM and logistic regression benchmarking

| Processing Stage | Key Steps | Purpose |

|---|---|---|

| Data Preparation | Quality control, normalization, highly variable gene selection | Reduce technical noise and dimensionality |

| Feature Engineering | Statistical, information theory, or deep learning-based features | Enhance biological signal representation |

| Model Training | Hyperparameter optimization, cross-validation | Prevent overfitting, ensure robustness |

| Validation | Testing on held-out datasets, performance metrics | Evaluate generalizability and accuracy |

SVM Configuration for Single-Cell Data

Kernel Functions for Single-Cell Data

The choice of kernel function significantly impacts SVM performance by determining how data is transformed to enable linear separation. For single-cell data, which is typically high-dimensional with complex gene expression patterns, the following kernels have been most extensively evaluated:

Radial Basis Function (RBF) Kernel: Also known as the Gaussian kernel, this generally demonstrates superior classification performance and generalization capability for single-cell data [38]. The RBF kernel excels at capturing complex, non-linear relationships between gene expression profiles, which is essential for distinguishing closely related cell types.

Linear Kernel: While simpler, the linear kernel can be effective for single-cell classification, particularly when combined with appropriate feature selection [34]. Some studies have identified linear SVM as a top performer in scRNA-seq benchmark evaluations [39].

The RBF kernel is particularly well-suited to the characteristics of single-cell data, as it can model the subtle, non-linear relationships in gene expression space that distinguish cell types and states without requiring explicit feature transformation.
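The difference between the two kernels is easiest to see on data that no straight line can separate. The sketch below uses a two-dimensional toy dataset of concentric circles, which is an assumption chosen for clarity and is not expression data. In high-dimensional gene space the gap is often much smaller, which is consistent with the reports of linear SVM performing well.

```python
from sklearn.datasets import make_circles
from sklearn.model_selection import cross_val_score
from sklearn.svm import SVC

# Two classes arranged as an inner and an outer ring (not linearly separable).
X, y = make_circles(n_samples=400, factor=0.4, noise=0.05, random_state=0)

linear_acc = cross_val_score(SVC(kernel="linear"), X, y, cv=5).mean()
rbf_acc = cross_val_score(SVC(kernel="rbf", gamma="scale"), X, y, cv=5).mean()

print(f"linear kernel accuracy: {linear_acc:.3f}")
print(f"RBF kernel accuracy:    {rbf_acc:.3f}")
```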

Key Parameters and Optimization Strategies

The performance of SVM depends critically on proper parameter configuration. The two most important parameters are:

Regularization Parameter (C): This parameter balances the trade-off between achieving a low training error and maintaining a simple decision boundary. A smaller C value may lead to underfitting, while a larger C can result in overfitting [38]. For single-cell data, which often exhibits significant biological variability, appropriate regularization is crucial for generalization across datasets.

Kernel Coefficient (γ): For the RBF kernel, γ defines how far the influence of a single training example reaches. Lower values create a broader influence, while higher values make the model more localized and complex [36].

Advanced hyperparameter optimization (HPO) frameworks such as Hyperopt and Optuna have been successfully integrated with SVM to automate parameter selection, significantly enhancing classification accuracy [36]. These frameworks systematically search the parameter space to identify optimal configurations that might be missed through manual tuning.
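A joint search over C and γ can be sketched as below. To keep the example self-contained it uses scikit-learn's `GridSearchCV` in place of Optuna or Hyperopt; the idea of searching both parameters on a logarithmic scale carries over to those frameworks. The synthetic data and the grid bounds are assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(
    n_samples=300, n_features=100, n_informative=15,
    n_classes=3, n_clusters_per_class=1, random_state=0,
)

pipe = make_pipeline(StandardScaler(), SVC(kernel="rbf"))

# Both parameters are searched on a log scale (bounds are illustrative).
param_grid = {
    "svc__C": np.logspace(-2, 2, 5),      # 0.01 ... 100
    "svc__gamma": np.logspace(-4, 0, 5),  # 0.0001 ... 1
}

search = GridSearchCV(pipe, param_grid, cv=5, scoring="accuracy").fit(X, y)
best_C = search.best_params_["svc__C"]
best_gamma = search.best_params_["svc__gamma"]
print(f"best C = {best_C:g}, best gamma = {best_gamma:g}, "
      f"CV accuracy = {search.best_score_:.3f}")
```

If the best value lands on the edge of the grid, that is usually a sign the search range should be widened in that direction.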

Visualizing the SVM Workflow for Single-Cell Classification

The following diagram illustrates the optimized SVM configuration workflow for single-cell RNA sequencing data, highlighting the critical decision points for kernel selection and parameter optimization:

This workflow highlights two critical configuration points: (1) the kernel selection decision, where RBF is generally recommended for single-cell data, and (2) the hyperparameter optimization stage, where both C and γ require careful tuning for optimal performance.

Table 3: Key research reagents and computational solutions for SVM-based single-cell classification

| Resource Category | Specific Tools/Reagents | Function/Purpose | Application Context |

|---|---|---|---|

| Reference Datasets | CellMarker, PanglaoDB, CancerSEA | Provide curated marker genes for cell types | Training and validation of classifiers [11] |

| Feature Selection Methods | Highly Variable Genes (HVG), F-test, Seurat V2.0 | Identify informative genes for classification | Dimensionality reduction [34] [35] |

| Hyperparameter Optimization | Optuna, Hyperopt, Grid Search | Automated parameter tuning for SVM | Enhancing model accuracy [36] |

| Multi-Feature Fusion | scMFF framework (weighted sum, attention fusion) | Integrates diverse feature representations | Improving classification stability [35] |

| Batch Effect Correction | Harmony, CCA, MNNCorrect | Address technical variations between datasets | Enabling cross-dataset application [34] [11] |

Based on current experimental evidence, SVM demonstrates a slight but consistent performance advantage over logistic regression for single-cell classification tasks. However, this advantage is contingent upon proper configuration, particularly regarding kernel selection and parameter optimization.

For researchers working with single-cell data, the following recommendations emerge from recent comparative studies:

Default Kernel Choice: Begin with the RBF kernel, as it generally provides superior performance for capturing the complex, non-linear relationships in gene expression data [38].

Invest in Hyperparameter Optimization: Utilize advanced HPO frameworks like Optuna or Hyperopt rather than manual tuning, as they significantly enhance model performance [36].

Consider Multi-Feature Approaches: When possible, employ feature fusion frameworks like scMFF that integrate multiple feature types (statistical, information theory, matrix factorization, deep learning) to capture complementary aspects of the data [35].

Evaluate Cross-Dataset Performance: Assess model performance on independent datasets collected under different protocols to ensure biological relevance and generalizability across cohort shifts [37].

The choice between SVM and logistic regression should consider both the specific characteristics of the single-cell data and the computational resources available. SVM configured with an RBF kernel and proper hyperparameter optimization generally provides superior performance, though logistic regression remains a competitive alternative, particularly when interpretability and computational efficiency are prioritized.

In single-cell RNA sequencing (scRNA-seq) research, accurate cell classification is a foundational step for understanding cellular heterogeneity, developmental trajectories, and disease mechanisms. Two predominant machine learning approaches for this classification task are Support Vector Machines (SVM) and logistic regression, each with distinct theoretical foundations and practical implications. While SVM aims to find the "best" margin that separates classes based on geometrical properties, logistic regression employs statistical approaches to model class probabilities [6]. The choice between these algorithms significantly impacts interpretability, performance, and biological insights derived from single-cell data.

The fundamental difference lies in their optimization criteria: SVM tries to maximize the margin between the closest support vectors, creating the widest possible separation between classes, while logistic regression maximizes the likelihood of the observed data, effectively modeling posterior class probabilities [40]. This distinction becomes particularly important in single-cell research where both accurate classification and biological interpretability are paramount. As we explore the implementation of regularized logistic regression, we will contextualize its performance and interpretation advantages specifically for single-cell classification tasks within the broader comparison with SVM.

Theoretical Foundations: Optimization Objectives and Regularization

Core Algorithmic Differences

The mathematical foundations of SVM and logistic regression reveal their different approaches to classification problems. SVM is geometrically motivated, seeking to find an optimal separating hyperplane that maximizes the margin between classes, which reduces the risk of error on future data [40] [41]. The optimization objective can be summarized as minimizing (1/2)||w||² + CΣξᵢ subject to the constraints yᵢ(wᵀXᵢ + b) ≥ 1 - ξᵢ and ξᵢ ≥ 0 for all observations, where yᵢ ∈ {-1, +1} are the class labels, ξᵢ are slack variables allowing some misclassification, and C controls the trade-off between maximizing the margin and minimizing classification error [41].

In contrast, logistic regression is statistically motivated, modeling the probability that a given cell belongs to a particular class using the logistic function P(y=1|X) = 1/(1 + e^(-wᵀX)) [41]. The parameters are estimated by maximizing the likelihood function, or equivalently, minimizing the log-loss cost function: -Σ[yᵢlog(ŷᵢ) + (1-yᵢ)log(1-ŷᵢ)] [41].
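The logistic function and the log-loss above can be sketched directly in NumPy. This is a minimal illustration on made-up data, with labels coded as 0/1 and no intercept term, matching the formula as written; the function names are hypothetical.

```python
import numpy as np

def predict_proba(w, X):
    """P(y=1 | X) = 1 / (1 + exp(-w^T x)) for each row of X."""
    return 1.0 / (1.0 + np.exp(-(X @ w)))

def log_loss(y, y_hat, eps=1e-12):
    """Negative log-likelihood: -sum[y log(y_hat) + (1 - y) log(1 - y_hat)]."""
    y_hat = np.clip(y_hat, eps, 1.0 - eps)  # guard against log(0)
    return float(-np.sum(y * np.log(y_hat) + (1 - y) * np.log(1 - y_hat)))

# Toy data (hypothetical): rows are cells, columns are two genes.
X = np.array([[2.0, 0.0], [0.5, 0.0], [-2.0, 0.0], [0.5, 1.0]])
y = np.array([1, 1, 0, 0])

w_zero = np.zeros(2)            # uninformative model: every cell gets p = 0.5
w_fit = np.array([1.0, -2.0])   # hand-picked weights that separate the toy cells

loss_zero = log_loss(y, predict_proba(w_zero, X))
loss_fit = log_loss(y, predict_proba(w_fit, X))
print(loss_zero, loss_fit)  # the separating weights give the lower loss
```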

Regularization Approaches for High-Dimensional Biological Data

Single-cell RNA-seq data contain thousands of genes (features), often measured across far fewer annotated cells (observations), which creates a high-dimensional p >> n problem prone to overfitting [42]. Regularization techniques introduce penalty terms to the model's objective function to constrain parameter values and prevent overfitting:

- Ridge Regression (L2 regularization): Adds the squared magnitude of the coefficients as a penalty term, λΣwᵢ² [41]. This shrinks coefficients toward zero but rarely eliminates any entirely, and it handles correlated variables well [41].

- Lasso (L1 regularization): Adds the absolute value of the coefficients as a penalty term, λΣ|wᵢ| [41]. This tends to force some coefficients to exactly zero, performing automatic feature selection [41].

- Elastic Net: Combines both L1 and L2 regularization, λ(ρΣ|wᵢ| + (1-ρ)Σwᵢ²) [41]. This balances feature selection with handling correlated predictors, often outperforming either approach alone in biological data [43].
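To make the three penalty terms concrete, the following sketch evaluates them for one hypothetical coefficient vector. The function names and the example values of `w`, `lam`, and `rho` are illustrative assumptions; the formulas follow the definitions above.

```python
import numpy as np

def ridge_penalty(w, lam):
    """L2 penalty: lam * sum(w_i^2)."""
    return lam * float(np.sum(w ** 2))

def lasso_penalty(w, lam):
    """L1 penalty: lam * sum(|w_i|)."""
    return lam * float(np.sum(np.abs(w)))

def elastic_net_penalty(w, lam, rho):
    """lam * (rho * sum|w_i| + (1 - rho) * sum w_i^2); rho=1 is lasso, rho=0 is ridge."""
    l1 = float(np.sum(np.abs(w)))
    l2 = float(np.sum(w ** 2))
    return lam * (rho * l1 + (1.0 - rho) * l2)

w = np.array([0.5, -2.0, 0.0, 1.0])  # hypothetical gene coefficients
lam = 0.1

print(ridge_penalty(w, lam))
print(lasso_penalty(w, lam))
print(elastic_net_penalty(w, lam, rho=0.5))
```

The ridge term is dominated by the single large coefficient (-2.0), whereas the lasso term grows linearly with coefficient size, which is one way to see why the L1 penalty is comparatively harder on small coefficients and tends to drive them to zero.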

For SVM, a similar regularization effect is achieved mainly through the C parameter, which controls the trade-off between achieving a wide margin and allowing misclassifications [41].

Experimental Comparison in Single-Cell Applications

Performance Benchmarks in Biological Data

Multiple studies have evaluated the performance of SVM and logistic regression across various biological contexts. In single-cell research, both methods have demonstrated utility but with different strengths depending on the data characteristics and analytical goals.

Table 1: Comparative Performance of SVM and Logistic Regression in Single-Cell Applications

| Application Context | Best Performing Model | Key Performance Metrics | Data Characteristics | Reference |
|---|---|---|---|---|
| Immune cell classification | Elastic-net logistic regression | High accuracy across cell types; Feature selection capability | 452 selected genes; Multiple immune cell types | [43] |
| Cell sex identification | Ensemble (XGBoost, SVM, RF, LR) | AUPRC > 0.94 | 14-gene minimal set; Cross-tissue validation | [44] |
| Cell potency prediction | Deep learning (CytoTRACE 2) | Superior to 8 ML methods including SVM/LR | 406,058 cells; 125 cell phenotypes | [45] |
| Marker gene selection | Regularized logistic regression | Comparable to Wilcoxon test; Direct interpretation | 2,000 features; 497 cells (B vs NK) | [42] |
| Stemness prediction in tumors | One-class logistic regression | Identified stem-like populations missed by other methods | Multiple spatial omics technologies | [14] |

In a comprehensive evaluation for immune cell classification, elastic-net logistic regression successfully identified discriminative gene signatures across ten different immune cell types and five T helper cell subsets [43]. The model selected 452 informative genes, with specific genes like CYP27B1, INHBA, IDO1, NUPR1, and UBD showing high positive coefficients specifically for M1 macrophages, providing both classification capability and biological interpretability [43].

Practical Implementation Guidelines

Based on empirical evidence from single-cell studies, the choice between SVM and logistic regression depends on several data characteristics:

Table 2: Model Selection Guidelines for Single-Cell Classification Tasks

| Data Scenario | Recommended Approach | Rationale | Implementation Tips | Reference |
|---|---|---|---|---|
| Many features, few samples (large p, small n: 1-10,000 features, 10-1,000 samples) | Logistic regression or SVM with linear kernel | Comparable performance; Computational efficiency | Start with logistic regression for interpretability | [6] |
| Few features, intermediate sample count (small p, intermediate n: 1-1,000 features, 10-10,000 samples) | SVM with non-linear kernel (Gaussian, polynomial) | Captures complex relationships; Better generalization | Use cross-validation to prevent overfitting | [6] |
| High-dimensional transcriptomics (>>10,000 features) | Regularized logistic regression (elastic-net) | Automatic feature selection; Handles correlated genes | Pre-filtering to reduce computational cost | [43] [42] |
| Requirement for probability estimates | Logistic regression | Natural probability output; Better calibrated | Platt scaling needed for SVM probability estimates | [40] |
| Need for biological interpretation | Regularized logistic regression | Direct gene coefficient interpretation | Examine top positive/negative weight genes | [43] [42] |

For single-cell RNA-seq data specifically, regularized logistic regression has demonstrated particular utility in marker gene selection, performing comparably to traditional statistical tests like the Wilcoxon rank-sum test while providing natural feature importance metrics through coefficient magnitudes [42].

Experimental Protocols for Single-Cell Classification

Protocol 1: Regularized Logistic Regression for Cell Type Annotation

Objective: Implement a regularized logistic regression model to classify cell types from scRNA-seq data with automatic feature selection.

Workflow:

- Data Preprocessing: Normalize single-cell counts using log normalization (LogNormalize method with scale factor 10,000) [42]. Scale data to z-scores to ensure features are comparable [42].

- Feature Selection: Pre-filter genes to retain the most variable features (typically 2,000-3,000 genes) using variance stabilizing transformation [42].

- Model Specification: Implement logistic regression with elastic-net regularization using the `mixture` parameter (0 for ridge, 1 for lasso, intermediate for elastic-net) and a tunable `penalty` parameter [42].

- Hyperparameter Tuning: Perform k-fold cross-validation (typically 10-fold) across a regularization grid (e.g., `penalty range = c(-5, 5)` with 50 levels) [42].

- Model Fitting: Finalize the workflow with the optimal parameters and fit on the training data.

- Interpretation: Extract and examine coefficients using the `tidy()` function to identify important marker genes ranked by absolute coefficient magnitude [42].
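The preprocessing steps of this workflow can be sketched in NumPy as follows. This is an illustrative approximation rather than the Seurat implementation: the function names are hypothetical, the count matrix is simulated, every cell is assumed to have at least one count, and gene selection uses a plain variance ranking in place of the variance stabilizing transformation.

```python
import numpy as np

def log_normalize(counts, scale_factor=10_000):
    """Divide each cell by its total counts, multiply by scale_factor, apply log1p."""
    totals = counts.sum(axis=1, keepdims=True)  # assumes no all-zero cells
    return np.log1p(counts / totals * scale_factor)

def top_variable_genes(expr, n_top):
    """Indices of the n_top highest-variance genes (simple ranking, not vst)."""
    return np.argsort(expr.var(axis=0))[::-1][:n_top]

def zscore(expr):
    """Scale each gene to mean 0 and unit standard deviation."""
    mu = expr.mean(axis=0)
    sd = expr.std(axis=0)
    sd[sd == 0] = 1.0  # leave constant genes at zero instead of dividing by zero
    return (expr - mu) / sd

# Simulated counts: 50 cells x 20 genes (a real run would keep 2,000-3,000 genes).
rng = np.random.default_rng(0)
counts = rng.poisson(2.0, size=(50, 20)).astype(float)

normalized = log_normalize(counts)
selected = top_variable_genes(normalized, n_top=10)
scaled = zscore(normalized[:, selected])
print(scaled.shape)
```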

Validation Approach:

- Split data into training (e.g., 70%) and test sets (e.g., 30%) with stratified sampling by cell type [42].

- Evaluate using accuracy, AUC-ROC, or cell-type specific metrics.

- Compare identified markers with known biological literature.
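The protocol above is described in terms of the R tidymodels interface. A rough Python analogue using scikit-learn is sketched below, under the assumption that `l1_ratio` plays the role of `mixture` and that `C` acts as an inverse penalty strength. The data are synthetic, and the grid and fold count are deliberately smaller than the 50-level, 10-fold setup described in the protocol so that the example runs quickly.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a cells x genes matrix with two cell-type labels.
X, y = make_classification(n_samples=300, n_features=40, n_informative=5,
                           n_redundant=5, n_clusters_per_class=1,
                           class_sep=2.0, random_state=0)

# 70/30 split, stratified by cell-type label.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

pipeline = make_pipeline(
    StandardScaler(),  # z-score each feature
    LogisticRegression(penalty="elasticnet", solver="saga",
                       l1_ratio=0.5, max_iter=5000),
)

# Small illustrative grid over penalty strength and L1/L2 mixing.
param_grid = {
    "logisticregression__C": np.logspace(-2, 1, 4),
    "logisticregression__l1_ratio": [0.0, 0.5, 1.0],
}
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
search = GridSearchCV(pipeline, param_grid, cv=cv, scoring="accuracy")
search.fit(X_train, y_train)

test_accuracy = search.score(X_test, y_test)
print(search.best_params_, round(test_accuracy, 3))
```

Tuning is done only on the training split, so the held-out 30% gives an estimate of performance that is not influenced by hyperparameter selection.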

Protocol 2: SVM for Non-linearly Separable Cell Populations

Objective: Implement SVM with kernel functions for classifying cell types that are not linearly separable in gene expression space.

Workflow:

- Data Preprocessing: Similar normalization and scaling as Protocol 1.

- Kernel Selection: Based on data characteristics: linear kernel for linearly separable data, Gaussian RBF for complex boundaries, polynomial for known polynomial relationships.

- Parameter Optimization: Tune the cost parameter `C` (inverse regularization strength) and kernel-specific parameters (γ for RBF, degree for polynomial).

- Model Training: Implement using efficient optimization algorithms (Sequential Minimal Optimization is commonly used).

- Performance Evaluation: Assess using cross-validation and test set accuracy.
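A minimal scikit-learn sketch of this workflow is shown below. It uses a synthetic two-class dataset (`make_moons`) as a stand-in for cell populations that are not linearly separable, and the grid values for `C` and `gamma` are arbitrary illustrative choices rather than recommended settings.

```python
from sklearn.datasets import make_moons
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic, non-linearly separable two-class data (not real expression values).
X, y = make_moons(n_samples=400, noise=0.15, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

pipeline = make_pipeline(StandardScaler(), SVC())

# Let cross-validation choose between a linear and an RBF kernel.
param_grid = [
    {"svc__kernel": ["linear"], "svc__C": [0.1, 1, 10]},
    {"svc__kernel": ["rbf"], "svc__C": [0.1, 1, 10], "svc__gamma": [0.1, 1, 10]},
]
search = GridSearchCV(pipeline, param_grid, cv=5)
search.fit(X_train, y_train)

best_kernel = search.best_params_["svc__kernel"]
test_accuracy = search.score(X_test, y_test)
print(best_kernel, round(test_accuracy, 3))
```

Including the linear kernel in the grid gives a simple check on whether the extra flexibility of a non-linear kernel is actually needed for a given dataset.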

Key Considerations for Single-Cell Data:

- For large datasets (>10,000 cells), linear kernels are computationally efficient.

- For complex subpopulation structures, non-linear kernels may capture better decision boundaries.

- Probability calibration (Platt scaling) is needed if probability estimates are required.
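One way to obtain Platt-scaled probabilities in scikit-learn is `CalibratedClassifierCV` with `method="sigmoid"`, which fits a sigmoid to the SVM decision values using cross-validation. The sketch below is illustrative only: the data are synthetic, and the kernel, `C`, and the 0.9 confidence cut-off are arbitrary choices.

```python
import numpy as np
from sklearn.calibration import CalibratedClassifierCV
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

# Synthetic stand-in for a labeled cells x genes matrix.
X, y = make_classification(n_samples=300, n_features=20, n_informative=5,
                           random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

svm = SVC(kernel="linear", C=1.0)  # outputs decision values, not probabilities
calibrated = CalibratedClassifierCV(svm, method="sigmoid", cv=5)
calibrated.fit(X_train, y_train)

proba = calibrated.predict_proba(X_test)  # one column per class
confident = float(np.mean(proba.max(axis=1) > 0.9))
print(proba[:3])
print(f"fraction of cells assigned with p > 0.9: {confident:.2f}")
```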

Model Interpretation in Biological Context

Extracting Biological Insights from Model Parameters

A significant advantage of logistic regression in single-cell research is the direct interpretability of model parameters. The coefficients in a regularized logistic regression model represent the log-odds contribution of each gene to cell type classification, providing biologically meaningful insights [43] [42].

In immune cell classification, researchers found that regularized logistic regression not only accurately classified cell types but also identified meaningful gene signatures. For instance, positive coefficients for genes like CYP27B1, INHBA, IDO1, NUPR1, and UBD specifically marked M1 macrophages, while negative coefficients for E-cadherin (CDH1) in monocytes helped distinguish them from other cell types [43]. This direct mapping from coefficients to biological function enhances the utility of logistic regression beyond mere classification.

Similarly, in a study comparing B-cells and NK cells, regularized logistic regression identified known marker genes (NKG7, GZMB for NK cells; HLA-DRA for B-cells) among the top predictors based on coefficient magnitude, validating the biological relevance of the selected features [42].
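The coefficient-ranking step can be illustrated on simulated data. In the sketch below, the gene labels `gene_0` to `gene_5` are hypothetical and the class label is constructed to depend only on `gene_0` (positively) and `gene_1` (negatively), so the expected ranking is known in advance; no real expression data or published coefficients are used.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

rng = np.random.default_rng(0)
gene_names = [f"gene_{i}" for i in range(6)]  # hypothetical gene labels

# Simulated, already-scaled expression for 300 cells.
X = rng.normal(size=(300, 6))
# The label depends only on gene_0 (up in class 1) and gene_1 (down in class 1).
y = (X[:, 0] - X[:, 1] + 0.3 * rng.normal(size=300) > 0).astype(int)

# L1-penalized logistic regression; C=0.1 is an arbitrary illustrative value.
model = LogisticRegression(penalty="l1", solver="liblinear", C=0.1)
model.fit(X, y)

coefs = model.coef_.ravel()                 # one log-odds coefficient per gene
order = np.argsort(np.abs(coefs))[::-1]     # rank genes by |coefficient|
odds_ratios = np.exp(coefs)                 # multiplicative change in odds per unit

for idx in order:
    if coefs[idx] > 0:
        direction = "marks class 1"
    elif coefs[idx] < 0:
        direction = "marks class 0"
    else:
        direction = "dropped by the L1 penalty"
    print(f"{gene_names[idx]}: coef={coefs[idx]:+.3f} ({direction})")

top_two = {gene_names[i] for i in order[:2]}
```

The sign of each coefficient indicates which class the gene points toward, and its magnitude is only comparable across genes because the features share a common scale.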

Comparison of Interpretation Capabilities

Table 3: Interpretation Capabilities of SVM vs. Logistic Regression for Single-Cell Data

| Interpretation Aspect | Logistic Regression | Support Vector Machines | Biological Impact | Reference |
|---|---|---|---|---|
| Feature Importance | Direct from coefficients | Indirect (requires additional analysis) | Enables hypothesis generation | [43] [42] |
| Probability Estimates | Natural output of model | Requires Platt scaling | Better confidence estimation | [40] |
| Marker Gene Discovery | Built-in via regularization | Post-hoc analysis needed | Streamlines discovery process | [42] |
| Pathway Analysis | Direct gene coefficients | Support vector analysis | Facilitates functional enrichment | [43] |
| Model Debugging | Transparent decision process | Complex kernel transformations | Easier error analysis | [6] |

Essential Research Reagent Solutions

Computational Tools for Single-Cell Classification

Table 4: Essential Research Reagents and Computational Tools for Single-Cell Classification

| Tool/Resource | Function | Implementation in Single-Cell Analysis | Availability |
|---|---|---|---|
| Seurat | Single-cell analysis toolkit | Data preprocessing, normalization, and initial clustering | R package [14] [42] |
| glmnet | Regularized logistic regression | Implementation of elastic-net with cross-validation | R/Python package [42] |
| tidymodels | Machine learning framework | Unified interface for model tuning and validation | R package [42] |
| scikit-learn | Machine learning library | SVM implementation with various kernels | Python package |
| Single-cell potency atlas | Reference data | Training and validation for potency prediction | 406,058 cells across 125 phenotypes [45] |
| CellSexID gene panel | Minimal marker set | Sex prediction for cell origin tracking | 14-gene panel [44] |

In single-cell classification research, SVM and logistic regression offer distinct advantages. Logistic regression, particularly with elastic-net regularization, offers a strong balance of performance and interpretability for high-dimensional transcriptomic data, directly generating biologically meaningful gene coefficients [43] [42]. SVM excels in scenarios with complex, non-linear decision boundaries and demonstrates robust performance across various data types [6].

The choice between these algorithms should be guided by research objectives: logistic regression when interpretability and biomarker discovery are prioritized, and SVM when classification boundaries are complex and maximum separation between cell populations is critical. As single-cell technologies evolve toward spatial transcriptomics and multi-omics integration, both methods will continue to play important roles in extracting biological insights from increasingly complex datasets.

Future methodological developments will likely focus on integrating the strengths of both approaches—combining the geometrical advantages of SVM with the probabilistic interpretation of logistic regression—while adapting to the unique characteristics of emerging single-cell data modalities.

Accurate cell type annotation is a critical step in the analysis of single-cell RNA sequencing (scRNA-seq) data, enabling researchers to characterize cellular heterogeneity, identify rare cell populations, and understand biological processes and disease mechanisms at an unprecedented resolution [1] [11]. As the volume of scRNA-seq data grows, automated, supervised classification methods have become essential for efficient and reproducible analysis [46] [47]. These methods use previously annotated reference datasets to classify cells in new query data, posing a classic machine learning challenge.