The Promise and Pitfalls of Zero-Shot Single-Cell Foundation Models in Perturbation Prediction

Abstract

Single-cell foundation models (scFMs), pretrained on millions of cells, promise to revolutionize the in-silico prediction of cellular responses to genetic and drug perturbations. However, rigorous benchmarking reveals significant limitations in their zero-shot capabilities, where models are used without task-specific fine-tuning. This article synthesizes recent evidence showing that zero-shot scFMs often fail to outperform deliberately simple baselines, struggle with distribution shifts, and offer limited improvements for predicting unseen perturbations. We explore the foundational causes of these shortcomings, survey emerging methodological fixes like efficient fine-tuning, provide a framework for troubleshooting model performance, and outline rigorous validation standards. For researchers and drug development professionals, this critical appraisal provides essential guidance for navigating the current landscape of scFMs, enabling more informed and effective application in perturbation biology and therapeutic discovery.

The Reality Check: Exposing the Fundamental Limits of Zero-Shot scFMs

Troubleshooting Guides & FAQs

Q1: Why does my zero-shot single-cell foundation model (scFM) underperform on basic cell type clustering compared to established methods?

A: Current benchmarks show that, in zero-shot settings, scFMs such as Geneformer and scGPT can be outperformed in cell type clustering by simpler approaches, including Highly Variable Gene (HVG) selection and established tools such as Harmony and scVI [1]. Performance is measured with metrics such as the average BIO (AvgBIO) score and average silhouette width (ASW) [1]. One likely cause is that the masked language model pretraining objective does not inherently produce cell embeddings suited to this task without task-specific fine-tuning [1].

Q2: When predicting genetic perturbation effects, why do complex scFMs fail to beat simple baseline models?

A: Multiple independent studies have found that for predicting transcriptome changes after single or double genetic perturbations, several scFMs (including scGPT and scFoundation) and other deep learning models do not consistently outperform deliberately simple baselines [2] [3]. These baselines include:

- The 'additive' model: For a double perturbation, it predicts the sum of the individual logarithmic fold changes observed in single perturbations [2].

- The 'perturbed mean' baseline: It always predicts the average expression profile across all perturbed cells [3].

The performance gap suggests that current scFMs may be primarily capturing broad, systematic differences between control and perturbed cells rather than mastering the underlying perturbation biology [3].
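The two baselines are simple enough to write in a few lines. The sketch below is illustrative only: it assumes log-scale pseudo-bulk profiles (one mean vector per condition), and the function names and toy numbers are hypothetical rather than taken from the cited benchmarks.

```python
import numpy as np

def additive_prediction(control_mean, single_a_mean, single_b_mean):
    """Predict a double perturbation as control + LFC(A) + LFC(B) on log-scale data."""
    lfc_a = single_a_mean - control_mean
    lfc_b = single_b_mean - control_mean
    return control_mean + lfc_a + lfc_b

def perturbed_mean_prediction(perturbed_profiles):
    """Predict one profile for every perturbation: the mean over perturbed training profiles."""
    return perturbed_profiles.mean(axis=0)

# Toy log-expression values for three genes (illustrative numbers only).
control = np.array([1.0, 2.0, 3.0])
pert_a = np.array([1.5, 2.0, 2.0])
pert_b = np.array([1.0, 3.0, 3.0])

additive = additive_prediction(control, pert_a, pert_b)
perturbed_mean = perturbed_mean_prediction(np.vstack([pert_a, pert_b]))
print(additive)        # [1.5 3.  2. ]
print(perturbed_mean)  # [1.25 2.5  2.5 ]
```

Neither baseline has trainable parameters, which is what makes them useful as a floor: a model that cannot beat them has not demonstrably learned perturbation-specific structure.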

Q3: My scFM performs well on batch integration for some datasets but fails on others. What is happening?

A: The performance of scFMs on batch integration is inconsistent. While they may successfully integrate data from different experiments using the same technique, they often struggle to correct for batch effects between different experimental techniques [1]. Quantitative evaluations show that methods like Harmony and scVI frequently outperform scFMs on this task, and in many cases, even simply selecting HVGs can achieve superior batch integration scores [1]. The effectiveness of an scFM can be highly dependent on the specific characteristics of the dataset and the nature of the batch effects.

Q4: Is there a single scFM that consistently outperforms all others across diverse tasks?

A: No. Comprehensive benchmarks indicate that no single scFM consistently outperforms all others across every task [4]. The best model for a given project depends on factors such as dataset size, task complexity, the need for biological interpretability, and available computational resources [4]. Model selection should therefore be a tailored decision based on the specific experimental context and goals.

Quantitative Performance Data

The tables below summarize key findings from recent benchmark studies, providing a direct comparison between scFMs and simpler baseline methods.

Table 1: Performance Comparison on Cell-Level Tasks (Zero-Shot)

| Task | Top-Performing Methods | Underperforming Methods | Key Metric(s) | Notes |
|---|---|---|---|---|
| Cell Type Clustering | HVG, scVI, Harmony [1] | scGPT, Geneformer [1] | AvgBIO, ASW [1] | scFMs show inconsistent performance across different datasets [1]. |
| Batch Integration | HVG, scVI, Harmony [1] | Geneformer, scGPT [1] | Batch mixing scores, PCR [1] | Geneformer often increases batch effect variance compared to input data [1]. |

Table 2: Performance Comparison on Perturbation Prediction Tasks

| Task | Simple Baseline Models | Complex Models Benchmarked | Key Finding | Reference |
|---|---|---|---|---|
| Double Perturbation Prediction | Additive Model, 'No Change' Model [2] | GEARS, CPA, scGPT, scFoundation, scBERT, Geneformer, UCE* [2] | "All models had a prediction error substantially higher than the additive baseline." [2] | [2] |
| Unseen Single Perturbation Prediction | Perturbed Mean, Linear Model [3] | CPA, GEARS, scGPT [3] | "Simple baselines performed comparatively or outperformed state-of-the-art methods." [3] | [3] |
| Unseen Combinatorial Perturbation Prediction | Matching Mean Baseline [3] | GEARS, scGPT [3] | The matching mean baseline "outperformed all other baselines by a considerable margin." [3] | [3] |

Experimental Protocols

Protocol for Benchmarking Zero-Shot Cell Embeddings

This protocol is adapted from evaluations of scFM zero-shot capabilities [1].

- Embedding Extraction: For the target dataset, obtain cell embeddings from the scFM (e.g., scGPT, Geneformer) without performing any further fine-tuning on the dataset.

- Baseline Generation: Generate comparable representations using baseline methods:

- HVG: Select Highly Variable Genes from the dataset.

- scVI: Process the dataset using the scVI model.

- Harmony: Process the dataset using the Harmony integration tool.

- Dimensionality Reduction: Apply a standard dimensionality reduction technique (e.g., UMAP) to all embeddings and baseline representations for visualization.

- Quantitative Evaluation: Calculate established clustering and batch correction metrics.

- Cell Type Clustering: Use the Average BIO (AvgBIO) score and Average Silhouette Width (ASW) to assess how well the embeddings separate known cell types.

- Batch Integration: Use batch mixing scores and Principal Component Regression (PCR) to assess the removal of technical batch effects while preserving biological variation.

- Visual Inspection: Qualitatively assess the 2D visualizations to check if the primary structure in the embeddings is driven by biology (cell type) or technical artifacts (batch).
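As a rough illustration of the quantitative step, the sketch below computes an average silhouette width over cell type labels using NumPy only. The embeddings are synthetic stand-ins (not output from any scFM), the function names are hypothetical, and the rescaling to [0, 1] is a common convention assumed here rather than something specified in [1]. In practice, `sklearn.metrics.silhouette_score` computes the same unscaled quantity.

```python
import numpy as np

def silhouette_width(embedding, labels):
    """Mean silhouette coefficient using Euclidean distances."""
    dists = np.linalg.norm(embedding[:, None, :] - embedding[None, :, :], axis=-1)
    unique = np.unique(labels)
    scores = np.zeros(len(labels))
    for i in range(len(labels)):
        same = labels == labels[i]
        n_same = same.sum()
        if n_same == 1:
            continue  # silhouette is defined as 0 for singleton clusters
        a = dists[i, same].sum() / (n_same - 1)  # mean distance within own cluster
        b = min(dists[i, labels == other].mean() for other in unique if other != labels[i])
        scores[i] = (b - a) / max(a, b)
    return float(scores.mean())

def scaled_asw(embedding, labels):
    """Rescale silhouette width from [-1, 1] to [0, 1] (assumed convention)."""
    return (silhouette_width(embedding, labels) + 1.0) / 2.0

rng = np.random.default_rng(0)
cell_types = np.repeat([0, 1], 50)
# Hypothetical embeddings: one separates the two cell types, one does not.
separated = rng.normal(size=(100, 8)) + cell_types[:, None] * 5.0
mixed = rng.normal(size=(100, 8))

asw_separated = scaled_asw(separated, cell_types)
asw_mixed = scaled_asw(mixed, cell_types)
print(asw_separated, asw_mixed)
```

Running the same function on the scFM embedding and on each baseline representation gives directly comparable numbers for the clustering half of the protocol.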

Protocol for Benchmarking Perturbation Effect Prediction

This protocol is based on benchmarks comparing scFMs to simple baselines [2] [3].

- Data Partitioning: Split the perturbation dataset such that specific perturbations (e.g., a set of single-gene or double-gene perturbations) are held out from the training data entirely. This tests generalization to unseen perturbations.

- Model Training & Fine-tuning: Fine-tune the scFMs (e.g., scGPT, scFoundation) and train task-specific deep learning models (e.g., GEARS) on the training set of perturbations according to their specified procedures.

- Baseline Calculation:

- Perturbed Mean: Calculate the average expression profile across all perturbed cells in the training set.

- Additive Model (for double perturbations): For a double perturbation A+B, predict the expression by summing the log-fold changes of the individual perturbations A and B from the control.

- Matching Mean (for double perturbations): For a double perturbation A+B, predict the expression by averaging the centroid expression profiles of the single perturbations A and B from the training data.

- Prediction & Evaluation: On the held-out test perturbations, compare the model and baseline predictions against the ground-truth expression data.

- Metrics: Calculate standard metrics, including:

- L2 Distance / RMSE: The Euclidean (L2) distance or root-mean-squared error between predicted and observed expression values.

- PearsonΔ: The Pearson correlation between predicted and observed expression changes (deltas) with respect to control for all genes.

- PearsonΔ20: The same as PearsonΔ, but calculated only on the top 20 differentially expressed genes.
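The partitioning and metric steps above can be sketched as follows. This is a minimal illustration under stated assumptions: profiles are log-scale pseudo-bulk vectors, the helper names are hypothetical, and the "top 20 differentially expressed genes" are approximated by the 20 genes with the largest observed absolute change, which is a simplification of a proper differential expression test.

```python
import numpy as np

def split_by_perturbation(perturbation_labels, held_out):
    """Boolean train/test masks so held-out perturbations never appear in training."""
    labels = np.asarray(perturbation_labels)
    test_mask = np.isin(labels, list(held_out))
    return ~test_mask, test_mask

def rmse(pred, obs):
    """Root-mean-squared error between predicted and observed expression."""
    return float(np.sqrt(np.mean((pred - obs) ** 2)))

def pearson_delta(pred, obs, control, top_k=None):
    """Pearson correlation of predicted vs. observed change from control.

    If top_k is given, restrict to the top_k genes with the largest observed
    absolute change (an assumed proxy for the top differentially expressed genes).
    """
    pred_delta = pred - control
    obs_delta = obs - control
    if top_k is not None:
        idx = np.argsort(-np.abs(obs_delta))[:top_k]
        pred_delta, obs_delta = pred_delta[idx], obs_delta[idx]
    return float(np.corrcoef(pred_delta, obs_delta)[0, 1])

# Toy data: 100 genes, a noisy prediction of a known effect.
rng = np.random.default_rng(0)
control = rng.normal(size=100)
effect = rng.normal(size=100)
observed = control + effect
predicted = control + effect + rng.normal(scale=0.5, size=100)

labels = ["ctrl", "A", "A", "B", "A+B"]
train_mask, test_mask = split_by_perturbation(labels, held_out={"A+B"})

print(rmse(predicted, observed))
print(pearson_delta(predicted, observed, control))
print(pearson_delta(predicted, observed, control, top_k=20))
```

Splitting by perturbation label rather than by cell is the important design choice: a random cell-level split would leak every perturbation into training and overstate generalization.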

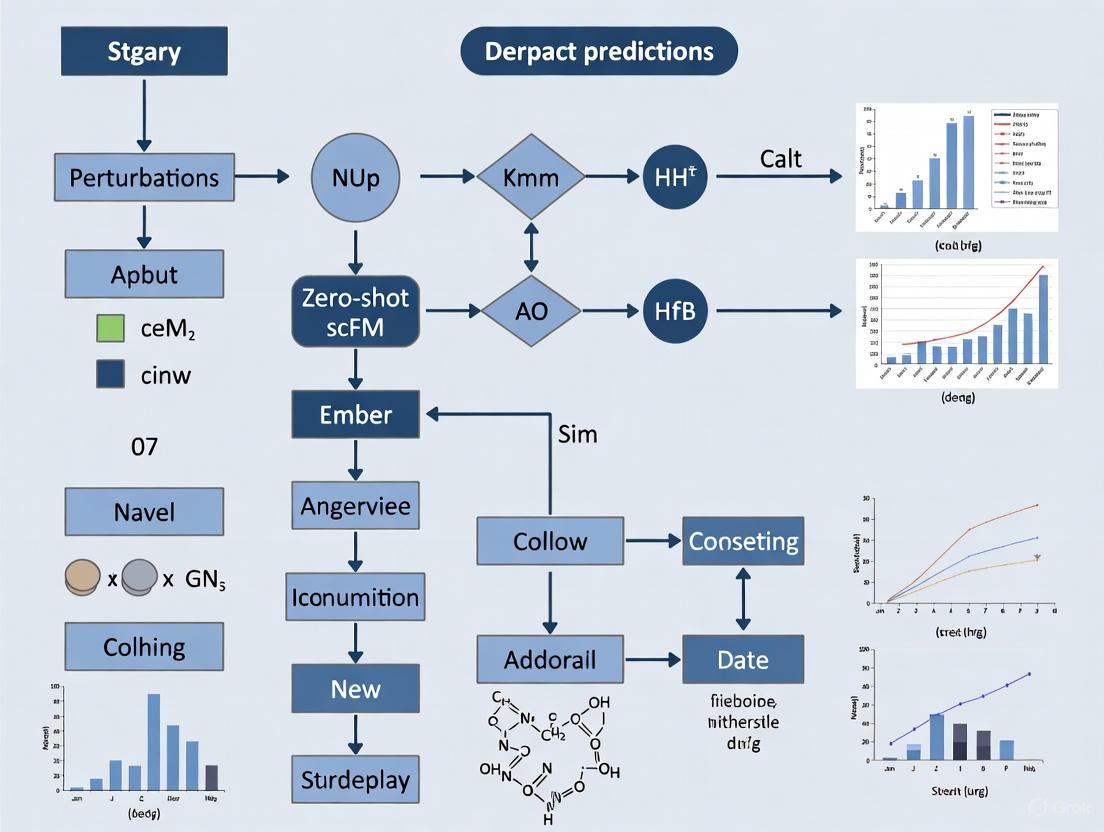

Visualization of Evidence for the Performance Gap

[Diagram: Key Evidence for the scFM Performance Gap]

[Diagram: Experimental Workflow for Perturbation Benchmarking]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for scFM Benchmarking

| Item Name | Function / Application | Key Insight from Benchmarking |
|---|---|---|
| Highly Variable Genes (HVG) | A baseline method for feature selection prior to clustering or integration. | Surprisingly robust; often outperforms or matches scFMs in zero-shot cell type clustering and batch integration tasks [1]. |
| Harmony | Algorithm for integrating single-cell data across multiple batches or experiments. | A strong, established baseline for batch correction that frequently outperforms zero-shot scFM embeddings [1]. |
| scVI | A probabilistic generative model for scRNA-seq data analysis, including integration. | Consistently performs well on cell-level tasks and serves as a powerful benchmark against which to compare new scFMs [4] [1]. |
| 'Perturbed Mean' Baseline | A simple model that predicts the average expression profile of all perturbed cells. | Crucial for perturbation benchmarks; reveals that complex models may not capture much beyond this average effect for unseen perturbations [2] [3]. |
| 'Additive' Baseline | A model that predicts double perturbation effects as the sum of single perturbation effects. | Essential for evaluating combinatorial perturbation prediction; often outperforms specialized deep learning models [2]. |
| Systema Framework | An evaluation framework designed to control for systematic variation in perturbation data. | Helps distinguish models that capture true perturbation-specific biology from those that merely learn dataset-wide biases [3]. |

### Frequently Asked Questions (FAQs)

FAQ 1: What is the "Distribution Shift Problem" in the context of single-cell perturbation prediction?

The distribution shift problem refers to the significant performance deterioration that single-cell foundation models (scFMs) exhibit when they encounter strong or atypical genetic perturbations that differ from the data they were trained on. In a zero-shot setting, these models struggle to generalize to these out-of-distribution examples, often failing to accurately predict the transcriptional outcomes of such perturbations [5] [6].

FAQ 2: Why do current single-cell foundation models (scFMs) fail on atypical perturbations?

Benchmarking studies indicate that current-generation scFMs primarily capture systematic variation—the consistent transcriptional differences between pools of perturbed and control cells caused by selection biases or biological confounders—rather than genuine, perturbation-specific effects. When presented with an atypical perturbation that does not share these common systematic patterns, the models lack the specific biological knowledge to make an accurate prediction [7].

FAQ 3: Are there any standardized benchmarks to evaluate this issue?

Yes, the PertEval-scFM framework is a standardized benchmark specifically designed to evaluate models, including their performance on distribution shifts. It assesses whether zero-shot scFM embeddings genuinely enhance perturbation effect prediction compared to simpler baseline models [5] [6].

FAQ 4: What is a key pitfall in evaluating my own perturbation prediction model?

A common pitfall is relying on standard reference-based metrics (like Pearson correlation on differential expression) without accounting for systematic variation. A model can achieve a high score by simply learning the average difference between all perturbed and control cells, which does not reflect its ability to predict the unique effect of a specific, unseen perturbation. The Systema framework is a new evaluation method designed to mitigate this bias [7].

FAQ 5: What are some emerging solutions to improve generalization?

Emerging approaches focus on better integration of biological knowledge and representation learning. For instance:

- SynthPert: Enhances Large Language Models (LLMs) by fine-tuning them on high-quality, synthetic chain-of-thought explanations of perturbation mechanisms, which improves cross-cell-type generalization [8].

- scREPA: Aligns the internal representations of a prediction model with high-quality external representations from pre-trained scFMs, leading to more robust predictions on unseen conditions and noisy data [9].

### Troubleshooting Guides

Problem: My model's performance drops significantly on unseen or strong genetic perturbations.

Diagnosis: This is a classic symptom of the distribution shift problem. The model is likely overfitting to the systematic variation present in its training data and cannot extrapolate to novel scenarios.

Solution - Implement Rigorous Evaluation: Follow the protocol below to diagnose whether your model is capturing true biological signals or just systematic bias.

Experimental Protocol: Isolating Perturbation-Specific Effects with Systema

Objective: To evaluate a model's ability to predict perturbation-specific effects, free from the confounding influence of systematic variation.

Materials:

- Your trained perturbation prediction model.

- Perturbation dataset with held-out test perturbations (e.g., from Adamson, Norman, or Replogle datasets) [7].

- Baseline models: "Perturbed Mean" and "Matching Mean" [7].

Methodology:

- Benchmark Against Simple Baselines: Compare your model's performance on the held-out test set against the simple non-parametric baselines.

- The Perturbed Mean baseline predicts the average expression profile across all perturbed cells for every perturbation.

- The Matching Mean baseline (for combinatorial perturbations) predicts the average of the mean profiles of the two constituent single-gene perturbations [7].

- Quantify Systematic Variation: Use the methods described in Systema [7] to quantify the level of systematic variation in your dataset. This can involve:

- Gene Set Enrichment Analysis (GSEA): Identify pathways that are consistently enriched when comparing all perturbed cells against all control cells.

- AUCell: Score the activity of these systematically enriched pathways in single cells to visualize the stark difference between the perturbed and control populations [7].

- Apply the Systema Framework: Evaluate your model using the Systema framework, which emphasizes the model's ability to reconstruct the specific landscape of individual perturbations rather than just the average treatment effect [7].

Interpretation of Results:

- If your complex model performs similarly to or only marginally better than the simple "Perturbed Mean" baseline, it is likely just capturing systematic variation.

- A model that truly generalizes will significantly outperform these baselines under the Systema evaluation, particularly for perturbations that are functionally distinct from the bulk of the training data.
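To see why the choice of reference matters, the toy simulation below scores the Perturbed Mean baseline twice: once with deltas taken relative to control, and once relative to the centroid of perturbed training profiles. Using the perturbed centroid as the reference is an assumption made here for illustration; it is not a reproduction of Systema's implementation, which should be taken from its repository [7]. All data are synthetic.

```python
import numpy as np

def pearson_delta(pred, obs, reference):
    """Correlation of predicted vs. observed change relative to a reference profile."""
    pred_delta, obs_delta = pred - reference, obs - reference
    if pred_delta.std() == 0 or obs_delta.std() == 0:
        return 0.0  # a constant delta carries no perturbation-specific signal
    return float(np.corrcoef(pred_delta, obs_delta)[0, 1])

rng = np.random.default_rng(1)
n_genes = 200
control = rng.normal(size=n_genes)
systematic_shift = rng.normal(scale=2.0, size=n_genes)   # shared by every perturbation
specific = rng.normal(scale=0.5, size=(6, n_genes))      # perturbation-specific effects
profiles = control + systematic_shift + specific         # one pseudo-bulk row per perturbation

train, test = profiles[:5], profiles[5]
perturbed_mean = train.mean(axis=0)  # the Perturbed Mean baseline prediction

score_vs_control = pearson_delta(perturbed_mean, test, control)
score_vs_perturbed = pearson_delta(perturbed_mean, test, perturbed_mean)
print(score_vs_control)    # high: the baseline is rewarded for the shared shift
print(score_vs_perturbed)  # 0.0: no credit once the shared shift is removed
```

In this simulation the baseline looks strong against control purely because of the shared shift, which is the failure mode the interpretation guidance above is meant to catch.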

The following diagram illustrates this diagnostic experimental workflow.

Problem: My model lacks biological reasoning for its predictions, hindering trust and utility.

Diagnosis: The model has learned statistical associations but not the underlying mechanistic biology, making it an unreliable tool for hypothesis generation.

Solution - Incorporate Synthetic Biological Reasoning: Use a knowledge distillation approach to infuse biological reasoning into a smaller, more efficient model, as demonstrated by SynthPert [8].

Experimental Protocol: Knowledge Distillation with Synthetic Reasoning Traces

Objective: To enhance a model's biological reasoning capabilities for perturbation prediction through supervised fine-tuning on synthetic chain-of-thought explanations.

Materials:

- A frontier LLM (e.g., OpenAI o4-mini) to generate reasoning traces.

- A critic model (can be another frontier LLM) to grade explanation quality.

- A base LLM for fine-tuning (e.g., DeepSeek-R1 8B) [8].

- The PerturbQA benchmark dataset [8].

Methodology:

- Synthetic Data Generation: For each training data point (cell type, perturbation, gene, outcome), use the frontier model to generate a mechanistic explanation for the observed outcome.

- Quality Control with a Critic Model: Present the generated explanation and the ground truth to a separate critic model. Have the critic grade the explanation on a scale (e.g., 'excellent' to 'terrible'). Retain only the highest-quality explanations (e.g., those graded 'excellent') for training [8].

- Supervised Fine-Tuning: Fine-tune your base LLM not just on the raw (input, output) tuples, but on the (input, reasoning trace, output) sequences. This teaches the model the "how" and "why" behind the prediction.

- Evaluation: Rigorously evaluate the fine-tuned model on a held-out test set, paying special attention to its performance on unseen cell types or strong perturbations.
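The filtering and formatting steps can be sketched in plain Python. Everything below is hypothetical scaffolding: the `Trace` fields, the prompt template, and the placeholder gene names are illustrative choices, not the format used by SynthPert [8], and the calls to the frontier and critic models are omitted.

```python
from dataclasses import dataclass

@dataclass
class Trace:
    cell_type: str
    perturbation: str
    gene: str
    outcome: str
    explanation: str   # reasoning trace produced by the generator model
    critic_grade: str  # grade assigned by the critic model

def keep_high_quality(traces, accepted=("excellent",)):
    """Retain only traces whose critic grade is in the accepted set."""
    return [t for t in traces if t.critic_grade in accepted]

def to_sft_example(trace):
    """Format one (input, reasoning trace, output) sequence for supervised fine-tuning."""
    prompt = (
        f"Cell type: {trace.cell_type}\n"
        f"Perturbation: {trace.perturbation}\n"
        f"Question: how does expression of {trace.gene} change?"
    )
    completion = f"Reasoning: {trace.explanation}\nAnswer: {trace.outcome}"
    return {"prompt": prompt, "completion": completion}

# Placeholder records; gene names and explanations are not real biology.
traces = [
    Trace("RPE1", "GENE_A", "GENE_B", "down", "placeholder explanation 1", "excellent"),
    Trace("RPE1", "GENE_C", "GENE_D", "up", "placeholder explanation 2", "poor"),
]

sft_examples = [to_sft_example(t) for t in keep_high_quality(traces)]
print(len(sft_examples))
print(sft_examples[0]["completion"])
```

Keeping the reasoning trace inside the completion, before the answer, is what distinguishes this setup from fine-tuning on raw (input, output) pairs.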

Interpretation of Results:

- Success is demonstrated by the fine-tuned model achieving state-of-the-art performance and showing strong cross-cell-type generalization, potentially even surpassing the capabilities of the larger frontier model that generated the training data [8].

The workflow for this solution is shown below.

### Performance Benchmarking Tables

Table 1: Benchmarking scFMs against Simple Baselines for Zero-Shot Perturbation Prediction [5] [6] [7]

| Model / Baseline | Core Principle | Performance on Unseen Perturbations | Performance under Distribution Shift | Key Limitation |
|---|---|---|---|---|
| Zero-shot scFMs | Contextualized embeddings from models pre-trained on large scRNA-seq atlases. | Limited improvement over baselines [5] [6]. | Significant performance deterioration, especially on strong/atypical perturbations [5] [6]. | Captures systematic variation rather than perturbation-specific effects [7]. |
| Perturbed Mean | Non-parametric baseline; predicts the average expression of all perturbed cells. | Surprisingly competitive or superior for unseen one-gene perturbations [7]. | Robust, as it represents the average systematic effect. | Cannot predict any perturbation-specific details; only the average treatment effect. |
| Matching Mean | Non-parametric baseline; for combo perturbation X+Y, averages the mean profiles of X and Y. | Outperforms complex models for unseen two-gene perturbations [7]. | Robust for combinations of seen single-gene perturbations. | Relies on having seen the constituent single-gene perturbations. |

Table 2: Emerging Methods for Improved Generalization [8] [9]

| Model | Core Methodology | Reported Advantage | Applicability |
|---|---|---|---|
| SynthPert | Supervised fine-tuning of LLMs on synthetic, quality-filtered chain-of-thought explanations. | Achieves 87% accuracy on unseen RPE1 cells; state-of-the-art on PerturbQA [8]. | Enhances biological reasoning and cross-cell-type generalization. |
| scREPA | Aligns VAE latent embeddings with biologically meaningful representations from pre-trained scFMs using cycle-consistent alignment. | Outperforms existing methods in predicting DEGs and whole-transcriptome responses; generalizes well to unseen conditions and noisy data [9]. | Improves representation quality for robust prediction under data limitations. |

Table 3: Essential Resources for scFM Perturbation Prediction Research

| Resource Name | Type | Function in Research | Source / Example Use |
|---|---|---|---|
| PertEval-scFM | Software Benchmark | Standardized framework to evaluate and compare the performance of single-cell foundation models for perturbation prediction in a zero-shot setting [5] [6]. | https://github.com/aaronwtr/PertEval [5] |
| Systema | Software Framework / Evaluation Metric | An evaluation framework that mitigates the confounding effects of systematic variation, providing a clearer readout of a model's ability to capture perturbation-specific biology [7]. | https://github.com/mlbio-epfl/systema [7] |
| PerturbQA | Dataset & Benchmark | A benchmark that reformulates perturbation experiments into natural language tuples, enabling the evaluation of LLM-based biological reasoning [8]. | Used as the primary evaluation dataset in SynthPert [8]. |
| Adamson & Norman Datasets | Experimental Data | Key single-cell perturbation datasets often used for training and benchmarking. They target specific biological processes but are known to contain significant systematic variation [7]. | Used in the Systema benchmark to demonstrate systematic variation [7]. |
| Replogle (RPE1) Dataset | Experimental Data | A large-scale, genome-wide perturbation screen used to study model generalization and artifacts like cell-cycle arrest induced by perturbations [7]. | Used in the Systema benchmark to demonstrate cell-cycle systematic bias [7]. |

Frequently Asked Questions

Q1: What does "zero-shot" performance mean for a single-cell foundation model (scFM), and why is it important?

A: Zero-shot evaluation tests a foundation model's capabilities without any further task-specific training or fine-tuning. You use the model's pretrained internal representation, or "embedding," directly for downstream analysis. This is critical for exploratory research where predefined labels do not exist or fine-tuning is not possible, such as discovery settings where the biological outcomes are unknown [1].

Q2: Our team is getting poor results using scGPT and Geneformer for zero-shot perturbation prediction. Are we doing something wrong?

A: Not necessarily. Benchmarking studies have consistently found that, in a zero-shot setting, these models do not outperform deliberately simple baseline methods and are sometimes outperformed by them. This appears to be a fundamental limitation of current model architectures and pretraining, not user error [6] [2].

Q3: What are the main types of failures when predicting genetic interactions?

A: Models struggle with several specific scenarios:

- Strong or Atypical Effects: Predicting the impact of strong or highly unusual perturbations [6].

- Synergistic Interactions: Most models fail to correctly identify or predict synergistic genetic interactions, where the combined effect is greater than the sum of individual effects [2].

- Distribution Shifts: Performance degrades when models encounter data that differs significantly from their pretraining sets [6].
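A simplified way to make the synergy and buffering terminology concrete is to compare the observed double-perturbation change with the additive expectation for a single gene. The sketch below works on scalar log-scale values; the function name, the tolerance, and the three-way labeling are illustrative assumptions, and published benchmarks use more careful statistical definitions.

```python
def classify_interaction(control, single_a, single_b, double, tolerance=0.1):
    """Label one gene's response by comparing observed vs. additive expected change."""
    expected = (single_a - control) + (single_b - control)
    observed = double - control
    if abs(observed - expected) <= tolerance:
        return "additive"
    if abs(observed) > abs(expected):
        return "synergistic"  # combined effect exceeds the sum of individual effects
    return "buffering"        # combined effect is weaker than the additive expectation

# Toy log-expression values (illustrative only).
print(classify_interaction(1.0, 1.5, 1.5, 3.0))   # synergistic
print(classify_interaction(1.0, 1.5, 1.5, 1.6))   # buffering
print(classify_interaction(1.0, 1.5, 1.5, 2.05))  # additive
```

Counting how often a model's predicted doubles fall into each category, and comparing that with the observed counts, is one quick way to spot a bias toward buffering predictions.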

Q4: Is there a way to improve the accuracy of these models for our perturbation experiments?

A: Yes. Recent research suggests that moving from an "open-loop" to a "closed-loop" framework can significantly improve performance. This involves iteratively fine-tuning the foundation model with experimental perturbation data (e.g., from Perturb-seq), an approach reported to triple the Positive Predictive Value (PPV) of predictions [10].

Troubleshooting Guides

Issue 1: Poor Zero-Shot Performance on Cell Type Clustering and Batch Integration

Problem: When using scGPT or Geneformer embeddings for tasks like cell type identification or removing batch effects without fine-tuning, the performance is inconsistent and worse than established methods.

Investigation & Diagnosis:

- Compare against simple baselines. Always include a method like Highly Variable Genes (HVG) selection in your benchmark. Research shows HVG can outperform foundation model embeddings in both cell type clustering and batch correction tasks [1].

- Check for dataset overlap. Verify if your evaluation dataset was part of the model's pretraining corpus. Surprisingly, models do not consistently perform better on datasets they were trained on, but this knowledge is crucial for interpretation [1].

- Quantify performance with robust metrics. Use metrics like Average BIO score for clustering and a combination of batch mixing and biological conservation scores (e.g., principal component regression score) for integration [1].

Solution: For zero-shot tasks, rely on proven, simpler methods as your primary baseline.

- For Cell Type Clustering: Use Harmony or scVI.

- For Batch Integration: Use Harmony, scVI, or start with HVG selection.

- Re-evaluate Model Choice: If your workflow depends entirely on zero-shot performance, consider that current foundation models may not be the optimal tool for these specific tasks [1].

Issue 2: Inaccurate Prediction of Genetic Perturbation Effects

Problem: The model's predictions for gene expression changes after single or double genetic perturbations do not match experimental validation data.

Investigation & Diagnosis:

- Benchmark against additive and "no change" models. Compare your model's predictions to a simple baseline that sums the individual logarithmic fold changes (the additive model) or one that predicts no change from the control condition. Current foundation models and other deep learning models often fail to outperform these simple baselines [2].

- Analyze specific interaction types. Check if your model is systematically failing at certain types of genetic interactions, such as synergistic effects. Many models are biased towards predicting "buffering" interactions and rarely correctly predict synergistic ones [2].

- Inspect prediction variability. Ensure the model's predictions vary sufficiently across different perturbations. Some models show surprisingly little variation in their outputs for different perturbations, acting similarly to the "no change" baseline [2].
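The variability check in the last item can be approximated with a single ratio: how much predictions spread across perturbations compared with how much the observed profiles spread. The sketch below is a hypothetical diagnostic, not a metric defined in [2]; it assumes matrices with one row per perturbation and one column per gene.

```python
import numpy as np

def variability_ratio(predicted, observed):
    """Ratio of across-perturbation spread in predictions to that in observations.

    Values near 0 suggest the model outputs nearly the same profile for every
    perturbation; values near 1 suggest a spread comparable to the data.
    """
    pred_spread = predicted.std(axis=0).mean()
    obs_spread = observed.std(axis=0).mean()
    return float(pred_spread / obs_spread)

rng = np.random.default_rng(0)
observed = rng.normal(size=(20, 50))  # 20 perturbations x 50 genes (synthetic)
mean_profile = observed.mean(axis=0)
# A "collapsed" predictor that barely moves away from the mean profile.
collapsed = mean_profile + 0.05 * (observed - mean_profile)

ratio = variability_ratio(collapsed, observed)
print(ratio)
```

A low ratio does not by itself prove the predictions are wrong, but it is a cheap signal that the model may be behaving like a constant baseline.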

Solution:

- Incorporate a linear baseline. Implement a simple linear model that uses dimension-reducing embeddings of your training data. This can serve as a strong, hard-to-beat baseline [2].

- Implement a "Closed-Loop" Framework. Instead of relying on a single round of prediction, use a small set of experimental perturbation data to fine-tune the foundation model.

Issue 3: Model Fails to Predict Effects of Unseen Perturbations

Problem: The model cannot accurately extrapolate to predict the effects of perturbing a gene that was not present in its fine-tuning dataset.

Investigation & Diagnosis: This is a known weakness. Claims that foundation models can inherently generalize to unseen perturbations through pretraining are not yet fully supported by benchmarks [2].

Solution:

- Use a linear model with pretrained embeddings. Extract the gene embedding matrix (G) from scFoundation or scGPT, and a perturbation embedding matrix (P) from a model like GEARS. Use these in a linear model framework, which can perform as well as or better than the models' own complex decoders [2].

- Leverage perturbation data for pretraining. If possible, pretrain the linear model's perturbation embedding (P) on existing large-scale perturbation datasets. This has been shown to provide a greater benefit than pretraining on single-cell atlas data alone [2].
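One way to set up such a linear model is a bilinear ridge regression, Y ≈ G W Pᵀ + b, where Y holds genes by perturbations. The sketch below is an assumption-laden illustration: G and P are random stand-ins for embeddings that would in practice be extracted from the pretrained models, the closed-form ridge solution and the ridge strength are choices made here, and the exact formulation in [2] should be checked against that paper.

```python
import numpy as np

def fit_linear_model(Y_train, G, P_train, ridge=0.1):
    """Ridge fit of Y ≈ G @ W @ P.T + b.

    Y_train: genes x perturbations, G: genes x K, P_train: perturbations x L.
    """
    b = Y_train.mean(axis=1, keepdims=True)            # per-gene mean over training perturbations
    reg_g = G.T @ G + ridge * np.eye(G.shape[1])
    reg_p = P_train.T @ P_train + ridge * np.eye(P_train.shape[1])
    left = np.linalg.solve(reg_g, G.T)                 # (G^T G + ridge*I)^-1 G^T
    right = np.linalg.solve(reg_p, P_train.T).T        # P (P^T P + ridge*I)^-1
    W = left @ (Y_train - b) @ right
    return W, b

def predict(G, W, b, P_new):
    """Predict genes x perturbations expression for new perturbation embeddings."""
    return G @ W @ P_new.T + b

# Synthetic stand-ins for gene embeddings (G) and perturbation embeddings (P).
rng = np.random.default_rng(0)
n_genes, n_gene_dims, n_pert_dims = 100, 6, 4
G = rng.normal(size=(n_genes, n_gene_dims))
W_true = rng.normal(size=(n_gene_dims, n_pert_dims))
P_train = rng.normal(size=(200, n_pert_dims))
P_test = rng.normal(size=(10, n_pert_dims))            # unseen perturbations
baseline_expr = rng.normal(size=(n_genes, 1))
Y_train = G @ W_true @ P_train.T + baseline_expr
Y_test = G @ W_true @ P_test.T + baseline_expr

W, b = fit_linear_model(Y_train, G, P_train, ridge=1e-3)
err_model = np.linalg.norm(predict(G, W, b, P_test) - Y_test)
err_mean = np.linalg.norm(b - Y_test)                  # error of predicting the training mean
print(err_model, err_mean)
```

Because the only learned object is the small matrix W, any generalization to unseen perturbations comes from the embeddings themselves, which makes this a clean test of how much information the pretrained representations carry.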

Performance Benchmarking Data

Table 1: Zero-Shot Cell Embedding Performance vs. Baselines

This table summarizes the performance of scFM embeddings compared to established methods on common tasks, as measured in independent evaluations. ASW (Average Silhouette Width) and AvgBIO score measure how well cell types are separated, while Batch Score measures how well technical batch effects are removed [1].

| Task | Metric | HVG | Harmony | scVI | scGPT | Geneformer |
|---|---|---|---|---|---|---|
| Cell Type Clustering | AvgBIO / ASW | Outperforms | Outperforms | Outperforms | Inconsistent | Underperforms |
| Batch Integration | Batch Score | Best | Good | Good | Moderate | Underperforms |

Key Finding: A simple, established method often provides the most robust zero-shot performance for these tasks.

Table 2: Perturbation Prediction Performance

This table compares the performance of various models against simple baselines for predicting gene expression changes after genetic perturbations. L2 Distance measures the error in predicting expression values, while AUROC (Area Under the Receiver Operating Characteristic Curve) measures the ability to classify genetic interactions correctly [2] [10].

| Model / Method | L2 Distance (vs. Additive) | AUROC | Notes |
|---|---|---|---|
| Additive Model | (Baseline) | N/A | Sums individual gene effects. A surprisingly strong benchmark [2]. |
| No Change Model | Higher | 0.63* | Predicts control expression. Foundation models can perform similarly [2]. |
| GEARS, scGPT, scFoundation | Higher | <0.63 | Do not consistently outperform additive/no-change baselines [2]. |
| Open-loop ISP (Geneformer) | N/A | 0.63 | PPV of 3%, similar to differential expression [10]. |
| Closed-loop ISP (Geneformer) | N/A | 0.86 | PPV of 9% (3x improvement) with only ~20 perturbation examples [10]. |

Key Finding: Deliberately simple models are not outperformed by current complex scFMs for this task.

Experimental Protocols for Key Benchmarks

Protocol 1: Benchmarking Zero-Shot Embeddings

Objective: Evaluate the quality of scFM cell embeddings for cell type clustering and batch integration without any fine-tuning.

Methodology:

- Data Preparation: Obtain a labeled dataset with known cell types and batch information (e.g., Pancreas dataset with 5 batches [1]).

- Generate Embeddings:

- Process the dataset through the scFM (e.g., scGPT, Geneformer) to extract cell embeddings.

- Process the same dataset through baseline methods: HVG selection, Harmony, and scVI.

- Dimensionality Reduction & Visualization: Use UMAP or t-SNE to visualize all embeddings.

- Quantitative Evaluation:

- Cell Type Clustering: Calculate metrics like Average Silhouette Width (ASW) and AvgBIO score to quantify cell type separation.

- Batch Integration: Calculate a batch integration score (e.g., using the `scib.metrics` package) to quantify the removal of batch effects while preserving biological variance.

- Analysis: Compare the scores of the scFM embeddings against the baselines. A well-performing embedding should score high on cell type separation and low on batch effect retention [1].
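For the batch integration half of the evaluation, a principal component regression score can be sketched with NumPy alone. The version below regresses each principal component on the batch label and weights the resulting R² by the component's variance. It is a simplified illustration with a hypothetical function name and synthetic data, and is not the `scib` implementation.

```python
import numpy as np

def pc_regression(embedding, batch, n_components=10):
    """Variance-weighted R^2 of principal components regressed on a batch label.

    Higher values mean more of the embedding's variance is explained by batch.
    """
    X = embedding - embedding.mean(axis=0)
    U, S, _ = np.linalg.svd(X, full_matrices=False)
    k = min(n_components, len(S))
    pcs = U[:, :k] * S[:k]          # principal component scores (mean-centered)
    variances = S[:k] ** 2
    r2 = np.zeros(k)
    for j in range(k):
        total = (pcs[:, j] ** 2).sum()
        # For a categorical covariate, R^2 equals between-group / total sum of squares.
        between = sum(
            (batch == b).sum() * pcs[batch == b, j].mean() ** 2 for b in np.unique(batch)
        )
        r2[j] = between / total if total > 0 else 0.0
    return float((r2 * variances).sum() / variances.sum())

rng = np.random.default_rng(0)
batch = np.repeat([0, 1], 100)
biology = rng.normal(size=(200, 10))   # synthetic embedding with no batch effect
shift = np.zeros((200, 10))
shift[:, 0] = batch * 6.0              # strong batch effect along one dimension

score_with_batch_effect = pc_regression(biology + shift, batch)
score_without = pc_regression(biology, batch)
print(score_with_batch_effect, score_without)
```

A useful comparison is the score before and after applying each method: an embedding that raises the score relative to the input data has amplified the batch effect rather than removed it.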

Protocol 2: Implementing a Closed-Loop ISP Framework

Objective: Dramatically improve the accuracy of in-silico perturbation predictions by incorporating experimental data.

Methodology:

- Base Model Fine-tuning: Fine-tune a pre-trained scFM (e.g., Geneformer) on your single-cell RNA-seq dataset to distinguish relevant cell states (e.g., diseased vs. healthy) [10].

- Open-loop ISP: Perform an initial round of in-silico perturbation predictions across the genome using the fine-tuned model.

- Experimental Validation: Conduct a targeted Perturb-seq screen on a subset of genes (as few as 10-20) predicted in the previous step.

- Closed-loop Fine-tuning: Further fine-tune the model using the scRNA-seq data from the Perturb-seq experiment. The labels should be the cell state (e.g., activated), not the gene perturbed.

- Closed-loop ISP: Run a new round of ISP predictions with the refined model. Benchmarking shows this step can triple the Positive Predictive Value [10].
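Positive Predictive Value, the metric used to compare the open-loop and closed-loop rounds, is the fraction of predicted hits that are confirmed experimentally. The sketch below uses hypothetical gene identifiers and a hypothetical helper name; it only illustrates the arithmetic, not the ISP pipeline in [10].

```python
def positive_predictive_value(predicted_hits, validated_hits):
    """Fraction of predicted hits confirmed by experiment: TP / (TP + FP)."""
    predicted = set(predicted_hits)
    if not predicted:
        return 0.0
    true_positives = predicted & set(validated_hits)
    return len(true_positives) / len(predicted)

# Placeholder gene identifiers (not real results).
validated = {"GENE_1", "GENE_2", "GENE_3"}
open_loop_predictions = ["GENE_1", "GENE_7", "GENE_8", "GENE_9"]
closed_loop_predictions = ["GENE_1", "GENE_2", "GENE_3", "GENE_9"]

ppv_open = positive_predictive_value(open_loop_predictions, validated)
ppv_closed = positive_predictive_value(closed_loop_predictions, validated)
print(ppv_open, ppv_closed)  # 0.25 0.75
```

Computing PPV on the same validated gene set for both rounds keeps the comparison fair, since the metric depends on how many true hits exist among the tested genes.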

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Perturbation Validation Experiments

This table lists key reagents and tools required for experimental validation of computational predictions, a critical step in the closed-loop framework.

| Item | Function / Description | Example Use Case |
|---|---|---|
| CRISPRa/i System | A system for gene activation (a) or interference (i) to perturb gene function. | Genetically perturbing target genes in primary human T cells to study activation [10]. |
| Perturb-seq Protocol | A single-cell RNA sequencing method that captures the transcriptomic effects of genetic perturbations in pooled screens. | Generating experimental data for fine-tuning foundation models in a closed-loop [10]. |
| ATAC-seq Kit | Assay for Transposase-Accessible Chromatin to map genome-wide chromatin accessibility. | Providing complementary epigenetic data to understand regulatory mechanisms [11]. |
| ChIPmentation Kit | A technology that combines chromatin immunoprecipitation (ChIP) with tagmentation for efficient library prep. | Mapping histone modifications or transcription factor binding sites in low-input samples [12]. |
| Flow Cytometry Assays | Measures protein expression and cytokine production (e.g., IL-2, IFN-γ) at the single-cell level. | Providing orthogonal, non-transcriptomic validation of perturbation effects on cell function [10]. |

Frequently Asked Questions (FAQs)

Q1: What does "zero-shot" evaluation mean for single-cell foundation models (scFMs), and why is it critical for my research?

A1: Zero-shot evaluation tests a foundation model's performance on a new task or dataset without any additional training (fine-tuning). This is critical for exploratory biology because, in many discovery settings, the biological labels or outcomes you are looking for are unknown, making fine-tuning impossible. A model's zero-shot capability demonstrates its true generalizability and the fundamental biological understanding it gained during pre-training [1].

Q2: My zero-shot scFM embeddings are performing poorly in cell type clustering. What could be the cause?

A2: Recent benchmarks have identified that scFMs like scGPT and Geneformer can underperform simpler methods like Highly Variable Gene (HVG) selection or established integration tools like Harmony and scVI in zero-shot cell type clustering [1]. This suggests that the masked language model pre-training objective used by many scFMs may not automatically produce high-quality cell embeddings for every downstream task without fine-tuning. If you encounter this, consider using a simpler baseline method as a benchmark for your specific dataset [1].

Q3: Can current scFMs accurately predict genetic interaction effects in a zero-shot setting?

A3: Current evidence suggests they cannot. When predicting effects of double genetic perturbations, foundation models and other deep learning models have failed to outperform a deliberately simple "additive" baseline, which just sums the effects of single perturbations. Furthermore, these models struggle to correctly predict synergistic genetic interactions, often defaulting to predicting no interaction [2].

Q4: Are there any scFMs that show promising zero-shot capabilities?

A4: Some newer models are being designed with a stronger focus on zero-shot performance. For example, scShift is a deep identifiable model that, when scaled up, has demonstrated remarkable zero-shot capabilities in characterizing cell types and biological states while overcoming batch effects across datasets [13]. This indicates that model architecture and training objectives are key factors for successful zero-shot application.

Troubleshooting Guides

Problem 1: Poor Batch Integration in Cell Embeddings

Symptoms: When visualizing your scFM cell embeddings, the data clusters strongly by batch or dataset source instead of by biological cell type.

Diagnosis: The model has failed to learn batch-invariant representations of cells in its zero-shot setting.

Solutions:

- Benchmark against baselines: Compare your scFM's performance against a simple HVG selection and established batch integration tools like scVI or Harmony. One study found that HVG selection achieved the best batch integration scores across several datasets [1].

- Check pre-training data: Investigate if your scFM was pre-trained on data similar to your query dataset. Performance can be variable even on previously seen data, but understanding the pre-training corpus can help diagnose issues [1].

Problem 2: Inaccurate Prediction of Genetic Perturbation Effects

Symptoms: Your model's predictions for gene expression changes after a perturbation are inaccurate, particularly for double-gene perturbations, and are worse than a simple additive model.

Diagnosis: The model has not learned the underlying biological rules that govern genetic interactions.

Solutions:

- Use a linear baseline: Before deploying a complex scFM, test its performance against a simple linear model or an "additive" model. If the simple model is superior, it indicates the scFM has not achieved its claimed goal of learning generalizable biological principles [2].

- Inspect gene embeddings: If the model allows it, extract its internal gene embeddings. Research has shown that using these embeddings in a simple linear predictor can sometimes perform as well as the model's own complex decoder, suggesting the embeddings themselves may contain useful, though under-utilized, information [2].

Quantitative Performance Data

Table 1: Zero-shot Performance Comparison on Cell Type Clustering (AvgBIO Score) [1]

| Model / Method | Pancreas Dataset | PBMC (12k) Dataset | Tabula Sapiens Dataset | Immune Dataset |

|---|---|---|---|---|

| HVG (Baseline) | 0.671 | 0.620 | 0.672 | 0.625 |

| scVI (Baseline) | 0.659 | 0.621 | 0.653 | 0.581 |

| Harmony (Baseline) | 0.622 | 0.615 | 0.579 | 0.549 |

| scGPT | 0.581 | 0.649 | 0.651 | 0.545 |

| Geneformer | 0.551 | 0.556 | 0.502 | 0.508 |

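A higher AvgBIO score indicates better cell type separation. HVG selection achieves the highest score on three of the four datasets (Pancreas, Tabula Sapiens, Immune); scGPT leads only on PBMC (12k), and Geneformer scores lowest on every dataset.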

Table 2: Performance on Double Genetic Perturbation Prediction (L2 Distance) [2]

| Model / Method | Prediction Error (L2 Distance) | Outperforms Additive Baseline? |

|---|---|---|

| Additive Baseline | ~0.28 | N/A |

| No Change Baseline | ~0.40 | No |

| GEARS | ~0.38 | No |

| scGPT | ~0.42 | No |

| Geneformer* | ~0.45 | No |

| scBERT* | ~0.43 | No |

Models marked with * were repurposed with a linear decoder. Lower L2 distance is better. No model outperformed the simple additive baseline.

Experimental Protocols

Protocol 1: Benchmarking Zero-Shot Cell Type Clustering

Objective: To evaluate the quality of cell embeddings generated by an scFM for separating known cell types without fine-tuning.

Materials: A labeled single-cell dataset with known cell types (e.g., a subset of Tabula Sapiens). The pre-trained scFM model (e.g., scGPT, Geneformer). Baseline methods (HVG, scVI, Harmony).

Methodology:

- Generate Embeddings: Input your dataset into the scFM and extract the cell embeddings without performing any fine-tuning.

- Run Baselines: Generate cell embeddings or reduced-dimensionality representations using your chosen baseline methods (e.g., select HVGs and perform PCA).

- Cluster and Score: Use a clustering algorithm (e.g., Leiden, K-means) on all embeddings. Calculate clustering metrics like Average BIO (AvgBIO) score or Average Silhouette Width (ASW) to quantify how well the clusters correspond to the known cell types.

- Compare: Compare the scores achieved by the scFM against the baseline methods [1].
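
The scoring step can be illustrated with a minimal, standard-library sketch of the Average Silhouette Width computed from known cell type labels. The toy 2-D coordinates, the function names, and the rescaling of the score to the range [0, 1] are assumptions made for illustration; a real benchmark would use a tested library implementation on the actual embeddings.

```python
import math

def silhouette_widths(points, labels):
    """Per-cell silhouette width using Euclidean distance and known labels."""
    widths = []
    for i, (p, lab) in enumerate(zip(points, labels)):
        # Distances from cell i to every other cell, grouped by cell type.
        dists = {}
        for j, (q, other) in enumerate(zip(points, labels)):
            if i != j:
                dists.setdefault(other, []).append(math.dist(p, q))
        if lab not in dists or len(dists) < 2:
            widths.append(0.0)  # singleton type, or only one type present
            continue
        a = sum(dists[lab]) / len(dists[lab])  # mean distance within own type
        b = min(sum(d) / len(d) for k, d in dists.items() if k != lab)  # nearest other type
        denom = max(a, b)
        widths.append((b - a) / denom if denom > 0 else 0.0)
    return widths

def average_silhouette_width(points, labels, rescale=True):
    """Mean silhouette width; optionally rescaled from [-1, 1] to [0, 1]."""
    s = sum(silhouette_widths(points, labels)) / len(points)
    return (s + 1) / 2 if rescale else s

# Hypothetical embeddings of the same four cells from two methods.
labels = ["T", "T", "B", "B"]
embedding_good = [(0, 0), (0, 1), (10, 10), (10, 11)]   # cell types form tight groups
embedding_poor = [(0, 0), (10, 10), (0, 1), (10, 11)]   # cell types are interleaved

asw_good = average_silhouette_width(embedding_good, labels)
asw_poor = average_silhouette_width(embedding_poor, labels)
print(f"ASW (well separated): {asw_good:.3f}")
print(f"ASW (interleaved):    {asw_poor:.3f}")
```

Running the same function on the scFM embeddings and on each baseline representation gives directly comparable numbers for the final comparison step.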

Protocol 2: Evaluating Perturbation Effect Prediction

Objective: To test an scFM's ability to predict transcriptome-wide gene expression changes caused by single or double genetic perturbations.

Materials: A perturbation dataset (e.g., Norman et al. or Replogle et al. data). The scFM (e.g., scGPT, scFoundation). Baselines (Additive model, No-change model, simple linear model).

Methodology:

- Data Splitting: For double perturbation prediction, fine-tune the model on all single perturbations and a portion of the double perturbations. Hold out the remaining double perturbations for testing.

- Generate Predictions: Use the model to predict the gene expression values for the held-out test perturbations.

- Calculate Error: For each prediction, compute the L2 distance between the predicted and observed expression values for the top 1,000 highly expressed genes.

- Compare to Baselines: Compare the model's average prediction error against the errors from the simple additive and no-change baselines [2].
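
As a rough sketch of the error calculation and baseline comparison, the snippet below builds the additive and no-change predictions for one double perturbation and scores them with the L2 distance. The four-gene log-expression vectors are made-up values and the function names are hypothetical; an actual evaluation would restrict the vectors to the top 1,000 highly expressed genes and average over all held-out perturbations.

```python
import math

def additive_prediction(control, single_a, single_b):
    """Additive baseline: control plus both single-perturbation log fold changes."""
    return [c + (a - c) + (b - c) for c, a, b in zip(control, single_a, single_b)]

def l2_distance(predicted, observed):
    """Euclidean distance between predicted and observed expression vectors."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)))

# Hypothetical mean log-expression values for four genes.
control         = [1.0, 2.0, 0.5, 3.0]
single_a        = [1.5, 2.0, 0.5, 2.0]   # perturbation A alone
single_b        = [1.0, 2.8, 0.5, 3.0]   # perturbation B alone
observed_double = [1.6, 2.7, 0.5, 1.8]   # held-out A+B measurement

additive_error = l2_distance(additive_prediction(control, single_a, single_b), observed_double)
no_change_error = l2_distance(control, observed_double)  # no-change baseline predicts control

print(f"additive baseline error:  {additive_error:.3f}")
print(f"no-change baseline error: {no_change_error:.3f}")
```

A model's prediction for the same held-out perturbation can be passed to l2_distance in the same way, so that all three errors are computed on identical genes.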

Visualizations

Diagram 1: Workflow for Zero-Shot Benchmarking of scFMs

Diagram 2: Simple Baselines Outperform scFMs in Perturbation Prediction

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Resources for Zero-Shot scFM Evaluation

| Item | Function in Evaluation |

|---|---|

| Pre-trained Model Weights (e.g., for scGPT, Geneformer) | Provides the foundational model to be tested in a zero-shot context without further training [1] [2]. |

| Benchmarking Datasets (e.g., Norman et al. perturbation data, Tabula Sapiens) | Serves as the standardized, ground-truthed test bed for evaluating model performance on specific tasks like perturbation prediction or cell type identification [1] [2]. |

| Simple Baseline Models (e.g., Additive model, HVG selection, linear models) | Critical controls to determine if the complexity of an scFM provides any tangible benefit over simple, established methods [1] [2]. |

| Quantitative Metrics (e.g., L2 distance, AvgBIO score, ASW) | Provides objective, numerical measures of model performance for tasks like prediction accuracy and cluster quality, enabling direct comparison between models [1] [2]. |

| Integration Tools (e.g., Harmony, scVI) | Established methods for comparison against scFMs for tasks like batch correction and dimensionality reduction [1]. |

Beyond Zero-Shot: Methodological Advances for Practical Application

Frequently Asked Questions (FAQs)

Q1: What is Parameter-Efficient Fine-Tuning (PEFT) and why is it important for large language models (LLMs)?

PEFT refers to a set of techniques that adapt a large pre-trained model to a new task by training only a small number of parameters, rather than the entire model. This is crucial because LLMs can have billions of parameters, making full fine-tuning computationally expensive, time-consuming, and prone to overfitting, especially on smaller datasets. PEFT methods, such as adapters, can approach the performance of full fine-tuning while dramatically reducing computational costs and storage requirements [14] [15].

Q2: How do adapter layers work, and where are they inserted in a transformer model?

Adapters are small, bottleneck-shaped neural network modules inserted into the layers of a pre-trained transformer model. A typical adapter consists of two fully connected layers with a non-linear activation in between. The first layer projects the input down to a lower dimension (the bottleneck), and the second layer projects it back up to the original input dimension [14] [15]. In the original adapter method proposed by Houlsby et al. (2019), two adapter layers are inserted into each transformer block: one after the multi-head attention module and one after the feed-forward network [14].

Q3: What are the primary advantages of using adapters over full fine-tuning?

- Reduced Computational Cost: Requires fewer GPUs and less training time [15].

- Lower Hardware Barrier: Enables fine-tuning of large models on consumer-grade GPUs with less VRAM [15].

- Modularity and Storage Efficiency: The large base model remains frozen and can be shared across multiple tasks. Only the small adapter weights need to be saved for each new task, reducing storage overhead [14] [15].

- Mitigated Catastrophic Forgetting: By keeping the original model parameters frozen, adapters help prevent the model from forgetting its general knowledge acquired during pre-training [15].

Q4: In the context of single-cell biology, what is a key limitation of foundation models that PEFT could help address?

Recent rigorous benchmarks have revealed that single-cell foundation models (scFMs), like scGPT and Geneformer, often fail to outperform simple baseline models in zero-shot settings for predicting genetic perturbation effects [2] [1] [5]. This means that using these models "out-of-the-box" without any further training yields unreliable results. PEFT, through methods like adapter tuning, provides a pathway to specialize these general scFMs on specific, high-quality perturbation datasets, potentially bridging this performance gap without the cost of full fine-tuning.

Q5: How does the parameter efficiency of adapters compare to simply fine-tuning the top layers of a model?

Adapters can achieve better performance than top-layer tuning with a comparable or even smaller number of trained parameters. For example, in the original adapter paper, a BERT model trained with adapters came within 0.4% of full fine-tuning performance on GLUE while adding only 3.6% of the parameters per task. In a direct experiment with a DistilBERT model, fine-tuning adapter layers outperformed fine-tuning only the top two layers on a sentiment classification task, despite using a similar number of parameters (599,424 for adapters vs. 592,130 for the top layers) [14].
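
The two parameter counts quoted above can be reproduced with simple arithmetic. The sketch below assumes the standard DistilBERT configuration (hidden size 768, six transformer blocks), two adapters per block with a bottleneck of 32, bias terms on every linear layer, and a two-layer classification head (768 to 768, then 768 to 2 classes); these configuration details are assumptions rather than values stated in the text.

```python
def linear_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out

def adapter_params(hidden_dim, bottleneck_dim):
    """Down-projection plus up-projection; the activation has no parameters."""
    return linear_params(hidden_dim, bottleneck_dim) + linear_params(bottleneck_dim, hidden_dim)

HIDDEN_DIM = 768          # assumed DistilBERT hidden size
NUM_BLOCKS = 6            # assumed number of transformer blocks
ADAPTERS_PER_BLOCK = 2    # one after attention, one after the feed-forward network
NUM_CLASSES = 2           # binary sentiment classification

adapter_total = NUM_BLOCKS * ADAPTERS_PER_BLOCK * adapter_params(HIDDEN_DIM, 32)
top_layers_total = linear_params(HIDDEN_DIM, HIDDEN_DIM) + linear_params(HIDDEN_DIM, NUM_CLASSES)

print(adapter_total)      # 599424
print(top_layers_total)   # 592130

# Trainable parameters for other bottleneck sizes under the same assumptions.
for bottleneck in (8, 16, 32, 64):
    total = NUM_BLOCKS * ADAPTERS_PER_BLOCK * adapter_params(HIDDEN_DIM, bottleneck)
    print(bottleneck, total)
```

Because the adapter count grows linearly with the bottleneck dimension, the same helper gives a quick estimate of the parameter budget before any training run.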

Troubleshooting Guides

Issue 1: Poor Task Performance After Adapter Tuning

Problem: Your model, after adapter tuning, is not achieving the expected performance on the downstream task.

Potential Causes and Solutions:

- Cause: Inadequate Bottleneck Dimension

- Solution: The bottleneck dimension of the adapter is a key hyperparameter. A dimension that is too small may not provide enough capacity for the task, while one that is too large defeats the purpose of efficiency. Experiment with different sizes (e.g., 8, 16, 32, 64) [14].

- Cause: Data Quality or Mismatch

- Solution: This is particularly critical when fine-tuning scFMs for perturbation prediction, as benchmarks show they struggle with strong or atypical perturbations [2]. Ensure your fine-tuning dataset is of high quality and covers the specific biological context or perturbation types you are targeting.

- Cause: Suboptimal Training Hyperparameters

- Solution: Even though fewer parameters are being trained, the learning rate and number of epochs still need to be tuned. A learning rate that is too high can cause instability, while one that is too low can lead to slow or insufficient convergence.

Issue 2: Unexpectedly High Memory Usage During Training

Problem: Your GPU memory usage is still high even though you are using adapters.

Potential Causes and Solutions:

- Cause: Large Batch Size

- Solution: Reduce the batch size. While adapters reduce the number of trainable parameters, the activations from the large base model still need to be stored in memory during the forward and backward passes. A smaller batch size reduces memory pressure from activations [15].

- Cause: Large Base Model

- Solution: Combine adapters with model quantization techniques, such as QLoRA. This involves loading the base model in a lower-bit precision (e.g., 4-bit) while training the adapters in higher precision (e.g., 16-bit), offering further significant memory savings [15].

Experimental Protocols & Data

Protocol: Implementing and Tuning Adapters in a Transformer

This protocol outlines the steps to insert and train adapter layers in a transformer-based model like DistilBERT, based on the experiments by Sebastian Raschka [14].

Materials:

- Pre-trained Model: A pre-trained transformer model (e.g., distilbert-base-uncased from Hugging Face).

- Dataset: A labeled dataset for a downstream task (e.g., the movie review dataset for sentiment classification).

- Software: PyTorch or TensorFlow, and the Transformers library.

- Hardware: A GPU with sufficient VRAM (e.g., a 16GB T4 GPU can be sufficient for models like DistilBERT).

Methodology:

- Model Loading: Load the pre-trained model and its tokenizer.

- Adapter Module Definition: Define a function to create an adapter module. This is typically a sequential module with a down-projection, a non-linearity (e.g., GELU), and an up-projection.

- Adapter Insertion: Iterate through the transformer blocks of the model and insert the adapter layers at the desired locations (e.g., after the attention output and after the feed-forward network).

- Freeze Base Model: Freeze all parameters of the original pre-trained model.

- Training Loop: Train the model on the downstream task. Only the parameters in the adapter layers will be updated.

Protocol: Benchmarking Fine-Tuning Methods

This protocol describes how to compare different fine-tuning strategies, as performed in [14].

Methodology:

- Establish Baselines:

- Full Fine-Tuning: Train all parameters of the base model.

- Top-Layer Tuning: Freeze all but the last few classification layers of the model and train only those.

- Test PEFT Methods:

- Adapter Tuning: Insert and train only the adapter layers, as described above.

- Other PEFT Methods: Compare against other techniques like LoRA or prompt tuning [15].

- Evaluation: Use a fixed validation or test set to evaluate all methods on the same metrics (e.g., accuracy, F1-score).

- Metrics Comparison: Record and compare the performance, number of trainable parameters, and training time for each method.

Quantitative Performance Comparison of Fine-Tuning Methods

The table below summarizes results from a sentiment classification task using a DistilBERT model, comparing different fine-tuning strategies [14].

Table 1: Comparison of Fine-Tuning Methods on DistilBERT

| Fine-Tuning Method | Trainable Parameters | Test Accuracy | Training Time (min) |

|---|---|---|---|

| Top Layers Only | 592,130 | 86.4% | 2.89 |

| Adapters (Bottleneck=32) | 599,424 | 88.4% | 5.69 |

| Full Fine-Tuning | ~66.9 Million | 93.0% | 7.12 |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for Adapter-based Fine-Tuning Experiments

| Item | Function | Example / Note |

|---|---|---|

| Pre-trained LLM | The foundation model that provides general language or biological knowledge. | Models like DistilBERT, LLaMA, or single-cell models like scGPT [14] [1]. |

| Task-Specific Dataset | The labeled data used to adapt the model to a new domain or task. | For scFMs, this would be a high-quality dataset of genetic perturbations [2]. |

| Adapter Modules | The small, trainable networks inserted into the base model. | Bottleneck architecture with a configurable hidden dimension [14]. |

| Deep Learning Framework | Software used to implement and train the model. | PyTorch or TensorFlow with the Hugging Face Transformers library. |

| GPU Acceleration | Hardware to handle the computational load of training and inference. | Consumer GPUs (e.g., 16GB T4) are often sufficient for adapter tuning [15]. |

Workflow and Architecture Diagrams

Adapter Architecture in a Transformer Block

Adapter Tuning Experimental Workflow

Single-Cell FM Benchmarking Context

Incorporating External Biological Knowledge to Enhance Predictions

Single-cell foundation models (scFMs), such as Geneformer and scGPT, are pre-trained on massive single-cell transcriptomics datasets with the goal of learning universal biological patterns. A primary application is in silico perturbation (ISP) prediction, where these models forecast how a cell's transcriptome changes in response to a genetic intervention. In discovery research where labels are unknown, models must often operate in a zero-shot setting without task-specific fine-tuning.

Recent rigorous benchmarking, however, has revealed a significant limitation: the zero-shot performance of these scFMs for perturbation prediction frequently fails to outperform deliberately simple baselines [1] [2] [6]. This technical support guide addresses this performance gap by providing actionable strategies for incorporating external biological knowledge to enhance prediction reliability.

FAQs & Troubleshooting Guides

Q1: Why do my model's zero-shot perturbation predictions underperform simple baselines?

This is a commonly reported issue. Quantitative benchmarks have demonstrated that even state-of-the-art scFMs do not consistently outperform simple models like an "additive" baseline (summing individual logarithmic fold changes) or predicting no change from the control condition [2].

Root Cause Analysis:

- Trivial Mechanisms: The masked language model pre-training framework may not inherently produce cell embeddings that are optimal for perturbation tasks. Models can sometimes rely on superficial patterns rather than learning causal biological relationships [1].

- Distribution Shift: Models struggle significantly when making predictions on data that differs from their pre-training distribution [6].

- Knowledge Gap: Pre-training on general transcriptome data may not capture the specific dynamics of gene regulatory networks under perturbation.

Solution: Implement a "Closed-Loop" Fine-Tuning Framework.

- Description: Move beyond "open-loop" ISP by incorporating a limited set of experimental perturbation data into the model's training regimen. This uses experimental results as feedback to guide the model toward more accurate predictions [10].

- Protocol:

- Start with a pre-trained scFM (e.g., Geneformer).

- Fine-tune the model on a composite dataset that includes:

- Standard single-cell RNA sequencing (scRNA-seq) data from your biological context (e.g., resting and activated T-cells).

- scRNA-seq data from Perturb-seq (or similar) experiments, even if from a limited number of gene perturbations.

- Use this refined model to perform ISP on a broader set of genes [10].

Evidence: In a T-cell activation study, this approach increased the positive predictive value (PPV) of ISP three-fold, from 3% to 9%, while also improving sensitivity and specificity [10].

Q2: How can I improve predictions for a rare disease with limited patient data?

This scenario is challenging due to sample scarcity, but external knowledge can be leveraged.

Root Cause: scFMs require sufficient contextual data to make meaningful predictions. For rare diseases, the model may not have encountered enough relevant patterns during pre-training.

Solution: Utilize Engineered Cell Models and Cross-Validation.

- Description:

- Create an in vitro model of the disease by engineering relevant mutations (e.g., RUNX1 loss-of-function for a platelet disorder) in human stem cells.

- Validate that the engineered cells closely mirror the transcriptomic profile of actual patient-derived cells.

- Fine-tune the scFM to distinguish between the engineered disease-model cells and isogenic control cells.

- Perform ISP to identify genes whose perturbation shifts the disease state toward a healthy state [10].


Example Workflow for a Rare Hematologic Disorder:

- Model Creation: Engineer RUNX1-knockout in human hematopoietic stem cells (HSCs).

- Validation: Confirm high concordance with patient HSC transcriptomes (e.g., reduced expression of known RUNX1 targets).

- Fine-tuning: Specialize Geneformer to classify RUNX1-knockout vs. control HSCs.

- Prediction & Prioritization: Run ISP and cross-reference results with differential expression analysis to select high-confidence therapeutic targets for experimental validation [10].

Q3: My model fails to predict strong or atypical perturbation effects. What can I do?

This is a known weakness of current scFMs, as they tend to be biased towards predicting minimal changes [6].

Root Cause: The models may be averaging over possible outcomes or are not trained on sufficient examples of strong genetic interactions.

Solution: Integrate Lineage-Specific Gene Embeddings and Prioritize Data Quality.

- Description:

- Leverage External Embeddings: Instead of relying solely on the model's internal representations, use gene embeddings pre-trained on large-scale perturbation datasets (e.g., from another cell line). These embeddings can be integrated into a simpler linear model that has demonstrated competitive performance [2].

- Curate Training Data: Rigorously quality-control your perturbation datasets. Exclude perturbations that do not affect their target gene's expression, as these may represent failed experiments that confound the model [2].

- Focus on Data Breadth: The key to predicting atypical effects may lie not in more complex models, but in higher-quality and more diverse datasets that capture a wider range of cellular states and strong perturbation effects [6].


Q4: How can I biologically validate my scFM's internal representations?

It is crucial to verify that the model's latent space captures biologically meaningful relationships.

Root Cause: Without validation, it's unclear if the model has learned relevant biology or just technical artifacts.

Solution: Use Ontology-Informed Metrics.

- Description: Move beyond standard clustering metrics. Implement novel metrics that evaluate the biological plausibility of the model's outputs.

- Recommended Metrics:

- scGraph-OntoRWR: Measures the consistency between cell-type relationships in the embedding space and established biological knowledge from cell ontologies.

- Lowest Common Ancestor Distance (LCAD): For cell type annotation tasks, this metric assesses the severity of misclassification by measuring the ontological proximity between the predicted and true cell type. A smaller LCAD indicates a less severe error (e.g., confusing two T-cell subtypes vs. confusing a T-cell with a neuron) [4].
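
To make the LCAD idea concrete, here is a minimal sketch on a toy ontology. The tree, the term names, and the exact distance definition (edges from each term up to the lowest common ancestor, summed) are illustrative assumptions; the metric as defined in [4] and the real Cell Ontology, which is a graph rather than a simple tree, may differ in detail.

```python
# Hypothetical child -> parent map; None marks the root.
PARENT = {
    "cell": None,
    "lymphocyte": "cell",
    "T cell": "lymphocyte",
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "B cell": "lymphocyte",
    "neuron": "cell",
}

def ancestors(term):
    """Path from a term up to the root, including the term itself."""
    path = []
    while term is not None:
        path.append(term)
        term = PARENT[term]
    return path

def lca_distance(predicted, true):
    """Edges from each term up to their lowest common ancestor, summed."""
    true_depth = {t: steps for steps, t in enumerate(ancestors(true))}
    for steps_up, term in enumerate(ancestors(predicted)):
        if term in true_depth:
            return steps_up + true_depth[term]
    raise ValueError("terms share no common ancestor")

mild_error = lca_distance("CD4 T cell", "CD8 T cell")   # sibling subtypes
severe_error = lca_distance("CD4 T cell", "neuron")      # unrelated lineages
print(mild_error, severe_error)  # 2 4
```

Averaging this distance over all misclassified cells gives a single number in which confusing two T-cell subtypes costs less than confusing a T cell with a neuron.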

Quantitative Performance Data

Table 1: Benchmarking scFMs against simple baselines for double perturbation prediction. Prediction error is measured as L2 distance on top 1,000 genes (lower is better). Adapted from [2].

| Model / Baseline | Prediction Error (L2) | Outperforms Additive Baseline? |

|---|---|---|

| Additive Model (Simple Baseline) | ~1.5 | (Baseline) |

| No Change Model (Simple Baseline) | ~4.5 | No |

| scGPT | ~4.5 | No |

| Geneformer* | ~4.2 | No |

| scBERT* | ~4.5 | No |

| UCE* | ~4.5 | No |

| GEARS | ~3.8 | No |

| scFoundation | ~3.2 | No |

Note: Models marked with * were repurposed with a linear decoder for this task.

Table 2: Impact of "closed-loop" fine-tuning on perturbation prediction accuracy for T-cell activation. PPV: Positive Predictive Value; NPV: Negative Predictive Value. Data from [10].

| Fine-Tuning Approach | PPV | NPV | Sensitivity | Specificity |

|---|---|---|---|---|

| Open-Loop (Standard) ISP | 3% | 98% | 48% | 60% |

| Differential Expression | 3% | 78% | 40% | 50% |

| Closed-Loop ISP | 9% | 99% | 76% | 81% |
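
The four metrics in the table all derive from the same confusion matrix. The sketch below uses hypothetical counts, not the counts from [10], chosen only to show how a low PPV can coexist with a high NPV when true hits are rare among the screened genes.

```python
def screening_metrics(tp, fp, fn, tn):
    """PPV, NPV, sensitivity, and specificity from confusion-matrix counts."""
    return {
        "ppv": tp / (tp + fp),            # fraction of predicted hits that are real
        "npv": tn / (tn + fn),            # fraction of predicted non-hits that are real
        "sensitivity": tp / (tp + fn),    # fraction of real hits that were predicted
        "specificity": tn / (tn + fp),    # fraction of real non-hits that were predicted
    }

# Hypothetical screen: 1,000 genes, of which only 25 are true hits.
metrics = screening_metrics(tp=12, fp=388, fn=13, tn=587)
for name, value in metrics.items():
    print(f"{name}: {value:.1%}")
```

With only 25 true hits in 1,000 genes, even a modest false-positive rate swamps the true positives, which is why PPV is the metric most sensitive to improvements from closed-loop fine-tuning.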

Experimental Protocols

Protocol 1: Closed-Loop Fine-Tuning for Enhanced ISP

This methodology details how to incorporate experimental perturbation data to improve a pre-trained scFM [10].

- Base Model Selection: Begin with a pre-trained foundation model (e.g., Geneformer-30M-12L).

- Task Specialization Fine-tuning: Fine-tune the model on your target biological state (e.g., classify resting vs. activated T-cells) using relevant scRNA-seq data. This creates a specialized "open-loop" model.

- Incorporate Perturbation Data:

- Gather scRNA-seq data from a perturbation screen (e.g., Perturb-seq) in the same biological context. The data should be labeled with the resulting cellular state (e.g., activated/resting), but not necessarily with the perturbed gene's identity.

- Combine this perturbation dataset with the initial state-defining data.

- Closed-Loop Fine-tuning: Further fine-tune the "open-loop" model on this combined dataset.

- ISP Execution: Use the resulting "closed-loop" model to perform in silico perturbations on genes not included in the perturbation training data.

Protocol 2: Target Prioritization for a Rare Disease

This protocol outlines a strategy to overcome data scarcity in rare disease research [10].

- Generate Disease Model: Engineer a loss-of-function mutation in the gene of interest (e.g., RUNX1) in appropriate human primary cells (e.g., HSCs).

- Transcriptomic Validation: Sequence the engineered cells and validate them by confirming that known downstream pathways of the target gene are differentially expressed, matching patterns observed in scarce patient data.

- Model Fine-tuning: Fine-tune the scFM to distinguish between the disease-model cells and isogenic control cells.

- Cross-Method Prediction:

- Perform ISP using the fine-tuned model to get a list of candidate genes.

- Independently, perform a standard differential expression (DE) analysis between disease and control models.

- Target Prioritization: Select genes that are identified as significant by both ISP and DE analysis. This consensus approach increases confidence in the predictions.
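
The consensus step at the end of this protocol amounts to intersecting two gene lists. A minimal sketch follows, with placeholder gene labels and a hypothetical function name; it keeps the ISP ranking for the genes that are also supported by DE.

```python
def prioritize_targets(isp_hits, de_hits):
    """Keep genes supported by both ISP and DE, in ISP rank order."""
    de_set = set(de_hits)
    return [gene for gene in isp_hits if gene in de_set]

# Placeholder gene labels; isp_hits is assumed to be sorted by predicted effect.
isp_hits = ["GENE_A", "GENE_B", "GENE_C", "GENE_D"]
de_hits = ["GENE_D", "GENE_E", "GENE_B"]

consensus = prioritize_targets(isp_hits, de_hits)
print(consensus)  # ['GENE_B', 'GENE_D']
```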

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential resources for enhancing scFM perturbation predictions.

| Reagent / Resource | Function in Context | Example Use Case |

|---|---|---|

| Geneformer-30M-12L | A pre-trained scFM based on the Transformer architecture. Can be fine-tuned for specific tasks. | Base model for closed-loop fine-tuning in T-cell activation and rare disease modeling [10]. |

| Perturb-seq Data | Single-cell RNA sequencing data from genetic perturbation screens. Provides ground-truth data on transcriptional outcomes. | Incorporated during fine-tuning to teach the model the causal links between gene perturbation and cell state [10]. |

| Engineered Cell Models | In vitro models of disease created via CRISPR/Cas9 editing. Bypasses the need for large numbers of patient samples. | Used to generate abundant, relevant transcriptomic data for rare diseases like RUNX1-FPD [10]. |

| Cell Ontologies | Structured, controlled vocabularies for cell types. Define the hierarchical relationships between different cell classes. | Used to compute biology-aware validation metrics like scGraph-OntoRWR and LCAD [4]. |

| Linear Model with Embeddings | A simple, interpretable baseline model that uses pre-trained gene/perturbation vectors. | Serves as a strong benchmark; can outperform complex scFMs in predicting unseen perturbations [2]. |

| RUNX1-FPD Model | A specific engineered model for RUNX1-familial platelet disorder using human HSCs. | Used to identify therapeutic targets (e.g., mTOR, CD74-MIF axis) via ISP [10]. |

Frequently Asked Questions

Q1: My zero-shot perturbation predictions are outperformed by a simple "no change" baseline. What could be wrong?

This is a known limitation identified in recent benchmarks [2]. The "no change" baseline, which always predicts expression identical to the control condition, and the "additive" baseline, which sums individual logarithmic fold changes, have been found to be highly competitive. Current foundation models often struggle to learn representations that generalize better than these simplistic assumptions for predicting unseen perturbation effects [2] [6].

Q2: How can I improve my model's prediction of genetic interactions from double perturbations?

Benchmarks reveal that models frequently misclassify interaction types, often predicting "buffering" interactions but rarely correctly identifying "synergistic" or "opposite" interactions [2]. If your model shows this behavior, it may not be capturing the underlying biological complexity. Consider enriching your training data with confirmed interaction examples or exploring alternative model architectures that move beyond current foundation model limitations.

Q3: Can pretrained gene embeddings from large models enhance prediction for unseen single-gene perturbations?

Evidence suggests limited benefits. A linear model using embeddings from scFoundation or scGPT did not consistently outperform a linear model with embeddings derived directly from the training data [2]. The most effective strategy identified was pretraining the perturbation embedding matrix (P) on existing large-scale perturbation data (e.g., from a different cell line), which provided more predictive power than atlas-scale single-cell pretraining [2].

Q4: My model works well on the training data but fails to generalize. What steps should I take?

This indicates poor out-of-distribution performance, a common challenge. First, implement the simple linear baseline and "mean prediction" baseline to quantify the performance gap [2]. Ensure your training data encompasses a wide range of perturbation strengths and types, as models tend to struggle with strong or atypical effects [6]. Also, verify that your dataset does not suffer from the biases often present in public drug combination databases [16].

Troubleshooting Guides

Issue: Poor Zero-Shot Performance on Unseen Perturbations

Problem: The model fails to accurately predict transcriptome changes for genetic perturbations or drug combinations not seen during training.

Investigation & Diagnosis

- Benchmark Against Baselines: Compare your model's performance against these simple baselines [2]:

  - No Change Baseline: Always predicts the control condition expression.

  - Additive Baseline: For double perturbations, predicts the sum of the individual logarithmic fold changes.

  - Mean Prediction Baseline: For unseen single perturbations, predicts the average expression across the training set perturbations.

- Check Embedding Utility: If using pretrained gene or drug embeddings, test their effectiveness by plugging them into a simple linear model (see Experimental Protocol 2). Their performance may be limited [2].
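The mean prediction baseline named above can be sketched in a few lines. This is a minimal illustration, assuming a genes x perturbations matrix of log-scale expression; the function names, the toy data, and the stand-in "model" output are hypothetical and not taken from [2].

```python
import numpy as np

def mean_prediction_baseline(Y_train):
    """Predict one profile for every unseen perturbation: the mean
    expression across all training perturbations.

    Y_train: array of shape (n_genes, n_train_perturbations).
    """
    return Y_train.mean(axis=1)

def l2_error(y_pred, y_obs):
    """Euclidean (L2) distance between predicted and observed profiles."""
    return float(np.linalg.norm(np.asarray(y_pred) - np.asarray(y_obs)))

# Toy data (hypothetical): 50 genes, 20 training perturbations.
rng = np.random.default_rng(0)
Y_train = rng.normal(size=(50, 20))
y_unseen = rng.normal(size=50)                       # held-out perturbation
y_model = y_unseen + rng.normal(scale=2.0, size=50)  # stand-in for a model's output

baseline_err = l2_error(mean_prediction_baseline(Y_train), y_unseen)
model_err = l2_error(y_model, y_unseen)
print(f"mean baseline L2: {baseline_err:.2f} | model L2: {model_err:.2f}")
```

If the model's error is not clearly below the baseline's across held-out perturbations, the added complexity is not yet paying for itself.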

Solution: If your model is outperformed by the baselines, consider:

- Leverage Perturbation Data: Pretrain your perturbation embeddings on existing large-scale perturbation datasets from related contexts (e.g., a different cell line) [2].

- Reframe the Problem: For drug synergy, consider sequential model optimization (SMO) frameworks like RECOVER, which actively select informative experiments, enriching for synergistic combinations without requiring exhaustive screening [16].

Issue: Inaccurate Prediction of Genetic Interactions in Double Perturbations

Problem: The model cannot correctly identify or classify genetic interactions (e.g., synergistic, buffering).

Investigation & Diagnosis

- Calculate Ground Truth Interactions: From your data, identify true genetic interactions by finding double perturbations where the phenotype differs from the additive expectation more than expected under a normal distribution null model [2].

- Generate Interaction Predictions: Compute the difference between your model's predicted expression and the additive expectation for each double perturbation.

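- Create Performance Curves: For all possible prediction thresholds, compute the true-positive rate and false discovery proportion to visualize performance [2]. Note that this differs from a standard ROC curve, which plots the true-positive rate against the false-positive rate.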
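The last two steps can be sketched as follows. This is an illustrative implementation under stated assumptions: each double perturbation receives one interaction score (for example, the magnitude of the difference between predicted expression and the additive expectation), the ground-truth calls are already available as booleans, and the function name `tpr_fdp_curve` is hypothetical.

```python
import numpy as np

def tpr_fdp_curve(scores, is_true_interaction):
    """True-positive rate and false discovery proportion at every threshold.

    scores: larger means a stronger predicted interaction.
    is_true_interaction: boolean ground-truth calls, same length as scores.
    Entry k-1 of each returned array corresponds to calling the k
    highest-scoring items positive (ties are broken by input order).
    """
    scores = np.asarray(scores, dtype=float)
    truth = np.asarray(is_true_interaction, dtype=bool)
    order = np.argsort(-scores, kind="stable")   # best score first
    hits = np.cumsum(truth[order])               # true positives among top k
    k = np.arange(1, len(scores) + 1)
    tpr = hits / max(int(truth.sum()), 1)        # guard against no positives
    fdp = (k - hits) / k
    return tpr, fdp

# Toy example (hypothetical scores and labels).
tpr, fdp = tpr_fdp_curve([0.9, 0.8, 0.3, 0.1], [True, False, True, False])
for k, (t, f) in enumerate(zip(tpr, fdp), start=1):
    print(f"top {k}: TPR={t:.2f}, FDP={f:.2f}")
```

Computing the same curve for the "no change" baseline's scores gives the reference line against which a model's curve should be judged.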

Solution

- If the model's performance curve is similar to or worse than the "no change" baseline, this aligns with published findings, and current architectures may be insufficient [2].

- Focus on improving the model's capacity to represent non-linear biological relationships beyond simple additive effects.

Table 1: Benchmarking Results for Double Perturbation Prediction (based on Norman et al. data) [2]

| Model / Baseline | Prediction Error (L2 Distance) | Performance in Genetic Interaction Prediction |
|---|---|---|
| Additive Baseline | Reference | Does not predict interactions by definition |
| No Change Baseline | Higher than Additive | Not better than random |
| scGPT | Higher than Additive | Not better than random |
| GEARS | Higher than Additive | Not better than random |
| Geneformer | Higher than Additive | Not better than random |
| scBERT | Higher than Additive | Not better than random |

Table 2: Performance of Models on Unseen Single Perturbations [2]

| Model / Approach | Performance on Adamson (K562) & Replogle (K562, RPE1) Data |
|---|---|
| Mean Prediction Baseline | Competitive, often not outperformed |
| Linear Model (P from training data) | Competitive |
| scGPT (with its own decoder) | Did not consistently outperform baseline |
| GEARS (with its own decoder) | Did not consistently outperform baseline |
| Linear Model (with scGPT's G, training P) | Outperformed scGPT's native decoder |
| Linear Model (P pretrained on Replogle data) | Consistently outperformed all other models |

Experimental Protocols

Protocol 1: Benchmarking Against Simple Baselines for Double Perturbations

Objective: Quantify if a complex model provides value over simple baselines [2].

Materials: Dataset with single and double perturbation phenotypes (e.g., log-transformed expression values).

Method:

- Data Preparation: Split double perturbations into training and test sets.

- Baseline Predictions:

  - No Change: For any test double perturbation, predict the control condition expression values.

  - Additive: For a double perturbation of genes A and B, predict LFC(A+B) = LFC(A) + LFC(B), where LFC is the logarithmic fold change versus control.

- Model Training & Prediction: Fine-tune your model on the training set and generate predictions for the test set.

- Evaluation: Calculate the L2 distance between predicted and observed expression values over the 1,000 most highly expressed genes. Compare your model's error to that of each baseline.
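Protocol 1 can be sketched compactly in NumPy. The snippet below is a minimal illustration, assuming each condition is summarized as a vector of mean log-scale expression per gene. Ranking genes by control expression to pick the most highly expressed ones is an assumption of this sketch, and all function names are hypothetical.

```python
import numpy as np

def no_change_prediction(control):
    """No Change baseline: predict the control profile unchanged."""
    return np.array(control, dtype=float)

def additive_prediction(control, single_a, single_b):
    """Additive baseline on the log scale:
    LFC(A+B) = LFC(A) + LFC(B), with LFC taken versus control."""
    control = np.asarray(control, dtype=float)
    lfc_a = np.asarray(single_a, dtype=float) - control
    lfc_b = np.asarray(single_b, dtype=float) - control
    return control + lfc_a + lfc_b

def l2_on_top_genes(pred, obs, control, n_top=1000):
    """L2 distance restricted to the n_top genes with the highest control
    expression (this selection rule is an assumption of the sketch)."""
    top = np.argsort(-np.asarray(control, dtype=float))[:n_top]
    return float(np.linalg.norm(np.asarray(pred)[top] - np.asarray(obs)[top]))

# Toy example with three genes (hypothetical values, log scale).
control = np.array([1.0, 2.0, 3.0])
single_a = np.array([2.0, 2.0, 3.0])    # perturbing A raises gene 0
single_b = np.array([1.0, 3.0, 3.0])    # perturbing B raises gene 1
observed_ab = np.array([2.0, 3.0, 3.5])

err_additive = l2_on_top_genes(
    additive_prediction(control, single_a, single_b), observed_ab, control)
err_no_change = l2_on_top_genes(
    no_change_prediction(control), observed_ab, control)
print(f"additive L2: {err_additive:.3f} | no change L2: {err_no_change:.3f}")
```

A fine-tuned model's predictions can be passed through the same `l2_on_top_genes` call so that all three errors are computed on an identical gene set.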

Protocol 2: Linear Model with Embeddings for Unseen Perturbation Prediction

Objective: Test the predictive utility of pretrained embeddings using a simple, interpretable model [2].

Materials:

- Data matrix Y_train (genes x perturbations) for training.

- Pretrained gene embedding matrix G (optional).

- Pretrained perturbation embedding matrix P (optional).

Method:

- Embedding Generation: If G or P is not provided, create it via dimension reduction (e.g., PCA) on the training data.

- Model Fitting: Solve the linear equation Y_train ≈ (G * W * P^T) + b, where:

  - G: Gene embedding matrix (number of genes x K dimensions).

  - P: Perturbation embedding matrix (number of perturbations x L dimensions).

  - W: The learned weight matrix (K x L).

  - b: The vector of row means from Y_train.

- Prediction: For a new perturbation, use its embedding p_new (from P or a lookup) to predict gene expression: y_pred = (G * W * p_new^T) + b.
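The three steps of Protocol 2 can be sketched as below. This is an illustrative version under stated assumptions: W is obtained by unregularized least squares via pseudoinverses (a ridge penalty would be a natural substitution), PCA is computed from an SVD of the row-centered training matrix, and all function names are hypothetical rather than taken from [2].

```python
import numpy as np

def pca_embeddings(Y_train, k):
    """Derive gene (G) and perturbation (P) embeddings from the training data
    via a truncated SVD of the row-centered matrix."""
    b = Y_train.mean(axis=1, keepdims=True)
    U, s, Vt = np.linalg.svd(Y_train - b, full_matrices=False)
    G = U[:, :k] * s[:k]   # (n_genes, k)
    P = Vt[:k].T           # (n_perturbations, k)
    return G, P

def fit_linear_model(Y_train, G, P):
    """Least-squares solution of Y_train ≈ G W P^T + b, with b the row means."""
    b = Y_train.mean(axis=1)
    centered = Y_train - b[:, None]
    W = np.linalg.pinv(G) @ centered @ np.linalg.pinv(P.T)   # (K, L)
    return W, b

def predict(G, W, p_new, b):
    """Predicted expression for a perturbation with embedding p_new (length L)."""
    return G @ W @ p_new + b

# Toy example (hypothetical): 40 genes, 10 training perturbations.
rng = np.random.default_rng(0)
Y_train = rng.normal(size=(40, 10))
G, P = pca_embeddings(Y_train, k=3)
W, b = fit_linear_model(Y_train, G, P)
y_hat = predict(G, W, P[0], b)   # reconstruct the first training perturbation
print("reconstruction L2:", float(np.linalg.norm(y_hat - Y_train[:, 0])))
```

To test pretrained embeddings, replace the output of `pca_embeddings` with the pretrained G or P (rows aligned to the same genes or perturbations) and compare held-out error against the PCA-derived version.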

Experimental Workflow and Pathway Diagrams

[Diagrams not reproduced here: Experimental Design Flow; Zero-Shot Prediction Pipeline; Evidence for scFM Limitations]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Datasets

| Item Name | Function / Description | Relevance to Troubleshooting |
|---|---|---|
| Simple Linear Baseline Models | Provides a critical performance benchmark for any complex model. | Confirms if a complex model adds value; essential for diagnosing poor performance [2]. |
| Perturbation Datasets (e.g., Norman, Replogle) | Standardized, publicly available datasets for training and benchmarking. | Allows for reproducible benchmarking and comparison of model performance against published results [2]. |
| Gene & Perturbation Embeddings (G, P) | Low-dimensional representations of genes and perturbations. | Their predictive utility can be tested in a linear model framework to isolate embedding quality from architecture complexity [2]. |
| Sequential Model Optimization (SMO) Framework | An active learning approach that selects the most informative experiments to run next. | Efficiently explores large drug combination spaces, enriching for synergistic hits with minimal experimentation [16]. |
| Large Language Model (LLM) Embeddings | Context-enriched embeddings for drugs and cell lines generated from models like GPT-3.5. | Can be used as input features to represent drugs and cell lines in a unified pipeline for tasks like drug synergy prediction [17]. |

Troubleshooting Guides and FAQs

Common Problem: Poor Model Performance on Novel Perturbations

Q: My foundation model for predicting chemical perturbation effects performs poorly on novel compounds or cell lines it wasn't trained on. What could be wrong?

A: This is a known limitation of current single-cell foundation models (scFMs). Recent benchmarking studies show that even advanced models like scGPT and Geneformer often fail to outperform simple baselines in zero-shot settings, where models are used without any further training on new data [2] [1]. Performance issues are particularly pronounced when predicting effects for unseen single or double perturbations [2].

Diagnosis Steps:

- Benchmark against simple baselines: Compare your model's performance against a deliberately simple baseline, such as an additive model that predicts the sum of individual logarithmic fold changes (LFCs) for perturbations [2].

- Check for dataset overlap: Verify whether your evaluation dataset was part of the model's original pretraining data. Prior exposure does not guarantee good results: models may perform poorly even on previously seen data, and performance can be more variable on truly novel data [1].

- Evaluate embedding utility: Test if the pretrained gene or cell embeddings from the foundation model (e.g., from scGPT or scFoundation) provide any benefit when used with a simple linear predictor. Research indicates these embeddings may offer little to no advantage over random embeddings or those derived from the training data itself [2].

Solution Steps:

- Implement a linear baseline: Use a simple linear model as a performance benchmark. This model can be formulated to use embeddings for genes (G) and perturbations (P), solving for a matrix W that minimizes prediction error [2].

- Incorporate perturbation data in pretraining: If possible, pretrain your model or its embeddings on relevant perturbation data, as this has been shown to increase predictive performance more than pretraining on single-cell atlas data alone [2].

- Consider alternative architectures for chemical perturbations: For predicting responses to novel chemical perturbations, consider specialized models like PRnet. This is a perturbation-conditioned deep generative model designed to generalize to unseen compounds by using their SMILES string representations, and it has shown better performance in this specific domain [18].

Common Problem: Inaccurate Prediction of Genetic Interactions

Q: My model is unable to accurately predict non-additive genetic interactions (like synergy or buffering) from double perturbation data. How can I improve this?

A: Many deep learning models struggle to correctly identify true genetic interactions, often exhibiting a strong bias towards predicting "buffering" interactions and rarely correctly predicting synergistic effects [2].

Diagnosis Steps:

- Analyze prediction patterns: Classify your model's predictions for double perturbations into interaction types (e.g., buffering, synergistic, opposite). Compare the frequency of predicted synergistic interactions against the ground truth; a very low rate of correct synergistic predictions indicates a common model limitation [2].

- Check for prediction invariance: Investigate whether your model's predictions vary meaningfully across different perturbations. Some models may predict little to no change from the control condition, regardless of the perturbation applied [2].
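Both diagnosis steps can be sketched as below. The interaction categories here follow a simplified rule that is an assumption of this sketch and not necessarily the exact definition used in [2]: a value with the opposite sign of the additive expectation is "opposite", a same-sign value of smaller magnitude is "buffering", and a same-sign value of larger magnitude is "synergistic". All function names are hypothetical.

```python
import numpy as np
from collections import Counter

def classify_interaction(lfc_additive, lfc_value, tol=1e-8):
    """Label one log fold change relative to its additive expectation
    (simplified rule; see the assumptions stated above)."""
    if abs(lfc_value - lfc_additive) <= tol:
        return "additive"
    if lfc_additive * lfc_value < 0:
        return "opposite"
    if abs(lfc_value) < abs(lfc_additive):
        return "buffering"
    return "synergistic"

def prediction_spread(pred):
    """Mean per-gene standard deviation across perturbations.
    pred: (n_genes, n_perturbations). Values near zero mean the model
    predicts almost the same profile whatever the perturbation."""
    return float(np.std(np.asarray(pred, dtype=float), axis=1).mean())

# Toy example (hypothetical values for one gene across four double perturbations).
additive = [1.0, 1.0, -2.0, 0.5]
observed = [0.4, 1.8, 1.0, 0.5]
predicted = [0.2, 0.5, -1.0, 0.3]

observed_types = Counter(classify_interaction(a, o) for a, o in zip(additive, observed))
predicted_types = Counter(classify_interaction(a, p) for a, p in zip(additive, predicted))
print("observed :", dict(observed_types))
print("predicted:", dict(predicted_types))
```

A predicted distribution concentrated on "buffering" while the observed one is spread across types reproduces the bias described above; a `prediction_spread` close to zero points to the invariance problem.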

Solution Steps:

- Use a robust null model: Establish a rigorous null model (e.g., assuming a Normal distribution for deviations from additivity) to identify true genetic interactions in your data at a defined false discovery rate (e.g., 5%) [2].

- Quantify model performance on interactions: For each model, compute true-positive rate (TPR) and false discovery proportion curves across all possible prediction thresholds to see if any model outperforms a simple "no change" baseline. Current research suggests that many do not [2].
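One way to set up the null model from the first step is sketched below. It rests on assumptions that are not taken from [2]: the null standard deviation is estimated robustly from the median absolute deviation, p-values are two-sided under a Normal distribution, and the false discovery rate is controlled with the Benjamini-Hochberg procedure. The function names are hypothetical.

```python
import math
import numpy as np

def benjamini_hochberg(p_values):
    """Benjamini-Hochberg adjusted p-values (q-values)."""
    p = np.asarray(p_values, dtype=float)
    n = len(p)
    order = np.argsort(p)
    ranked = p[order] * n / np.arange(1, n + 1)
    # Enforce monotonicity, working back from the largest p-value.
    q_sorted = np.minimum.accumulate(ranked[::-1])[::-1]
    q = np.empty(n)
    q[order] = np.minimum(q_sorted, 1.0)
    return q

def call_interactions(deviation, fdr=0.05):
    """Flag deviations from additivity that are unlikely under a Normal null.

    deviation: observed minus additive expectation, one value per test.
    The MAD-based scale estimate is an assumption of this sketch; it
    requires that the deviations are not mostly identical (MAD > 0).
    """
    d = np.asarray(deviation, dtype=float)
    center = np.median(d)
    sd = 1.4826 * np.median(np.abs(d - center))
    z = (d - center) / sd
    # Two-sided Normal p-value: P(|Z| > |z|) = erfc(|z| / sqrt(2)).
    p = np.array([math.erfc(abs(v) / math.sqrt(2.0)) for v in z])
    q = benjamini_hochberg(p)
    return q <= fdr, q

# Toy example (hypothetical): 500 null deviations plus three strong interactions.
rng = np.random.default_rng(0)
deviation = np.concatenate([rng.normal(size=500), [8.0, -9.0, 10.0]])
is_interaction, q_values = call_interactions(deviation, fdr=0.05)
print("interactions called:", int(is_interaction.sum()))
```

The resulting boolean calls can serve as the ground truth when computing the true-positive rate and false discovery proportion for each model.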

Common Problem: Failure in Zero-Shot Cell Type Clustering and Batch Integration

Q: The cell embeddings produced by my foundation model in a zero-shot setting fail to separate cell types effectively or remove batch effects. Why?

A: Zero-shot evaluation of foundation models like scGPT and Geneformer reveals that their cell embeddings often underperform compared to established methods for tasks like cell type clustering and batch correction. The primary structure in the embeddings may be driven by batch effects rather than biological signal [1].

Diagnosis Steps:

- Visualize embeddings: Use dimensionality reduction (e.g., UMAP, t-SNE) to visually inspect the embeddings. If the primary clustering is by batch or data source rather than known cell type, the embeddings are not performing well [1].

- Compare to established baselines: Calculate clustering and batch integration metrics (e.g., Average BIO score, ASW, batch mixing scores) for your foundation model embeddings and compare them against simpler methods like Highly Variable Genes (HVG), Harmony, or scVI [1].
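As a rough illustration of the second step, average silhouette width (ASW) can be computed on the same embedding twice, once with cell type labels and once with batch labels. The NumPy-only sketch below is meant for small datasets, since it builds the full pairwise distance matrix; the function name and toy data are hypothetical, and in practice scikit-learn's `silhouette_score` is the usual choice.

```python
import numpy as np

def average_silhouette_width(X, labels):
    """Mean silhouette coefficient using Euclidean distances.

    X: (n_cells, n_dims) embedding; labels: one label per cell.
    """
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    classes = np.unique(labels)
    if len(classes) < 2:
        raise ValueError("need at least two distinct labels")
    dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)
    sil = np.zeros(len(X))
    for i in range(len(X)):
        same = labels == labels[i]
        n_same = int(same.sum())
        if n_same == 1:
            continue  # singleton cluster: silhouette is 0 by convention
        a = dist[i, same].sum() / (n_same - 1)   # mean distance within own cluster
        b = min(dist[i, labels == c].mean() for c in classes if c != labels[i])
        denom = max(a, b)
        sil[i] = (b - a) / denom if denom > 0 else 0.0
    return float(sil.mean())

# Toy embedding (hypothetical): two well-separated cell types, two interleaved batches.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, size=(30, 5)), rng.normal(6.0, 1.0, size=(30, 5))])
cell_type = np.repeat([0, 1], 30)
batch = np.tile([0, 1], 30)

asw_cell_type = average_silhouette_width(X, cell_type)
asw_batch = average_silhouette_width(X, batch)
print(f"ASW by cell type: {asw_cell_type:.2f} | ASW by batch: {asw_batch:.2f}")
```

A useful embedding shows a high ASW for cell type and an ASW near zero for batch; the reverse pattern indicates that batch effects dominate the structure.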

Solution Steps:

- Rely on proven methods for clustering and integration: For zero-shot tasks, methods like HVG selection, scVI, or Harmony currently provide more reliable performance for cell type separation and batch integration [1].

- Re-evaluate the pretraining objective: The masked language model pretraining used by many scFMs may not inherently produce high-quality cell embeddings for all downstream tasks. Consider if your task aligns with the model's pretraining objective [1].

Quantitative Performance Data