Tokenization Strategies for Single-Cell Data: From Foundation Models to Clinical Applications


Abstract

This article provides a comprehensive overview of tokenization strategies that enable artificial intelligence to interpret single-cell genomic data. We explore the fundamental concept of treating cells as sentences and genes as words, examine current methodological approaches for converting omics data into model-ready tokens, address key challenges in data quality and biological interpretation, and evaluate performance through comparative benchmarking. Designed for researchers and drug development professionals, this guide bridges computational techniques with biological applications to advance precision medicine and therapeutic discovery.

Decoding the Language of Cells: Foundational Concepts in Single-Cell Tokenization

In the rapidly evolving field of single-cell genomics, researchers are increasingly borrowing concepts from natural language processing (NLP) to make sense of complex biological data. The core analogy—"Cells as Sentences, Genes as Tokens"—has become foundational for developing powerful computational models. This framework treats individual cells as complete sentences and the genes within them as individual words or tokens, enabling the application of sophisticated transformer-based architectures to biological questions [1]. This approach has revolutionized how we process single-cell RNA sequencing (scRNA-seq) data, moving beyond traditional statistical methods to models that can capture intricate patterns in gene expression and regulatory relationships [2].

The tokenization process in single-cell biology involves converting raw gene expression data into discrete units that computational models can process. Unlike natural language, where words have inherent sequence, gene expression data lacks natural ordering, presenting unique challenges for researchers [3]. This technical guide explores the practical implementation of this analogy, detailing methodologies, architectural considerations, and experimental protocols that enable researchers and drug development professionals to leverage these advanced approaches in their work.

The Tokenization Framework: From Biological Data to Computational Tokens

Fundamental Concepts and Definitions

In single-cell foundation models (scFMs), tokenization refers to the process of converting raw input data into discrete units called tokens [1]. This standardization transforms unstructured data into structured representations that models can understand and process. The core analogy operates on two levels:

Cells as Sentences: Each individual cell is treated as a complete semantic unit, analogous to a sentence in NLP. This comprehensive representation captures the cell's overall state, identity, and function within the broader biological "document" of the tissue or organism [1] [3].

Genes as Tokens: Individual genes or genomic features serve as the fundamental tokens, analogous to words in a sentence. These tokens become the basic input units for computational models, with their expression values determining their significance in the cellular "sentence" [1].

The power of this approach lies in its ability to represent the complex, high-dimensional space of gene expression in a format amenable to processing by transformer architectures that have revolutionized NLP. By capturing not just individual gene expressions but the relationships between them, these models can infer regulatory networks, identify novel cell states, and predict cellular behavior [3].

Tokenization Strategies for Single-Cell Data

Several tokenization strategies have emerged for processing single-cell data, each with distinct advantages and limitations:

Table 1: Comparison of Tokenization Strategies in Single-Cell Biology

| Strategy | Description | Advantages | Limitations |
|---|---|---|---|
| Gene Ranking | Genes are ordered by expression levels within each cell to create a deterministic sequence [1] | Provides structured input for transformers; mimics word importance in sentences | Arbitrary ordering may not reflect biological relationships |
| Expression Binning | Genes are partitioned into bins based on expression values [1] | Reduces dimensionality while preserving expression information | May lose subtle expression differences |
| Normalized Counts | Uses normalized count data directly without imposing a gene order [1] | Simpler implementation; preserves quantitative relationships | Requires careful normalization to handle technical variability |
| k-mer Based | Splits sequences of DNA/RNA into overlapping k-length segments [2] | Captures local sequence context and motifs | Computationally intensive for long sequences |
| Binary Tokenization | Represents gene expression as present/absent based on thresholds [4] | Reduces sparsity and technical noise | Loses quantitative expression information |

A critical challenge in applying these methods is that gene expression data lacks inherent sequential structure: unlike words in a sentence, genes have no natural ordering. To address this, researchers have developed several ordering strategies. A common approach ranks genes within each cell by expression level and feeds the ordered list of top genes to the model as the "sentence" [1]. Other models partition genes into bins by expression value, or simply use normalized counts without imposing an order [1].
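The ranking and binning strategies described above can be illustrated with a minimal sketch. It assumes a single cell given as a vector of normalized expression values; the function names, the toy gene symbols, and the choice of per-cell quantile bin edges are illustrative assumptions, not the scheme of any specific published model.

```python
import numpy as np

def rank_tokens(expr, gene_ids, top_k):
    """Return the IDs of the top_k expressed genes, highest first (zeros dropped)."""
    expr = np.asarray(expr, dtype=float)
    order = np.argsort(-expr, kind="stable")   # descending; ties keep gene order
    order = order[expr[order] > 0][:top_k]     # unexpressed genes carry no rank
    return [gene_ids[i] for i in order]

def bin_tokens(expr, n_bins):
    """Map nonzero values to bins 1..n_bins via per-cell quantiles; zeros stay 0."""
    expr = np.asarray(expr, dtype=float)
    bins = np.zeros(expr.shape, dtype=int)
    nonzero = expr > 0
    if nonzero.any():
        # Interior quantile edges computed from this cell's nonzero values only.
        edges = np.quantile(expr[nonzero], np.linspace(0, 1, n_bins + 1)[1:-1])
        bins[nonzero] = np.digitize(expr[nonzero], edges, right=True) + 1
    return bins

# Toy cell with five genes (symbols chosen only for illustration).
genes = ["CD3E", "MS4A1", "LYZ", "NKG7", "GNLY"]
cell = [0.0, 5.0, 1.0, 3.0, 0.0]
ranked = rank_tokens(cell, genes, top_k=3)   # gene IDs ordered by expression
binned = bin_tokens(cell, n_bins=3)          # one bin index per gene
```

Ranking discards the magnitudes and keeps only the order, whereas binning keeps a coarse magnitude per gene but no order, which is the trade-off summarized in Table 1.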

Model Architectures and Implementation

Transformer-Based Architectures for Single-Cell Data

Most successful single-cell foundation models are built on transformer architectures, which have revolutionized natural language processing and are now transforming computational biology [1]. These neural network architectures are characterized by attention mechanisms that allow the model to learn and weight relationships between any pair of input tokens [1]. In scFMs, the attention mechanism learns which genes in a cell are most informative of the cell's identity or state, how they co-vary across cells, and how they have regulatory or functional connections [1].

Two primary architectural paradigms have emerged in scFM design:

BERT-like Encoder Architectures: Models such as scBERT employ bidirectional attention mechanisms where the model learns from the context of all genes in a cell simultaneously [1] [4]. This approach is particularly effective for classification tasks and generating rich cell embeddings that capture complex gene relationships.

GPT-like Decoder Architectures: Models like scGPT use unidirectional masked self-attention mechanisms that iteratively predict masked genes conditioned on known genes [1]. This architecture excels at generative tasks and can simulate cellular states under different conditions.
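To make the contrast between bidirectional and unidirectional attention concrete, the following sketch computes single-head scaled dot-product self-attention over a handful of gene-token embeddings, with an optional causal mask. It is a simplification under stated assumptions: the query, key, and value projections are the identity, the embeddings are random, and the mask is a generic lower-triangular one rather than the specific masking scheme of any model named above.

```python
import numpy as np

def self_attention(x, causal=False):
    """Single-head scaled dot-product self-attention with identity projections."""
    x = np.asarray(x, dtype=float)
    n, d = x.shape
    scores = x @ x.T / np.sqrt(d)
    if causal:
        # Decoder-style mask: token i may only attend to tokens 0..i.
        allowed = np.tril(np.ones((n, n), dtype=bool))
        scores = np.where(allowed, scores, -np.inf)
    scores = scores - scores.max(axis=1, keepdims=True)   # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)          # softmax over each row
    return weights @ x, weights

rng = np.random.default_rng(0)
tokens = rng.normal(size=(4, 8))            # 4 gene tokens, embedding dimension 8
_, w_bi = self_attention(tokens)             # encoder-style: every token sees all
_, w_causal = self_attention(tokens, causal=True)   # decoder-style: left context only
```

In the bidirectional case every entry of the weight matrix is positive, while the causal mask zeroes everything above the diagonal, so each token's representation depends only on the tokens that precede it in the imposed gene order.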

Table 2: Comparison of Transformer Architectures in Single-Cell Biology

| Architecture Type | Representative Models | Key Features | Ideal Use Cases |
|---|---|---|---|
| Encoder-Based | scBERT, xTrimoGene [4] | Bidirectional attention; comprehensive context understanding | Cell type annotation, feature extraction, embedding generation |
| Decoder-Based | scGPT [1] | Unidirectional attention; generative capabilities | Synthetic data generation, perturbation modeling, predictive tasks |
| Hybrid Architectures | scSFUT [4] | Combines encoder-decoder frameworks; multi-task learning | Complex analysis tasks requiring both understanding and generation |
| Hierarchical Transformers | Geneformer [2] | Processes genes and cells at multiple hierarchical levels | Modeling complex regulatory networks and developmental trajectories |

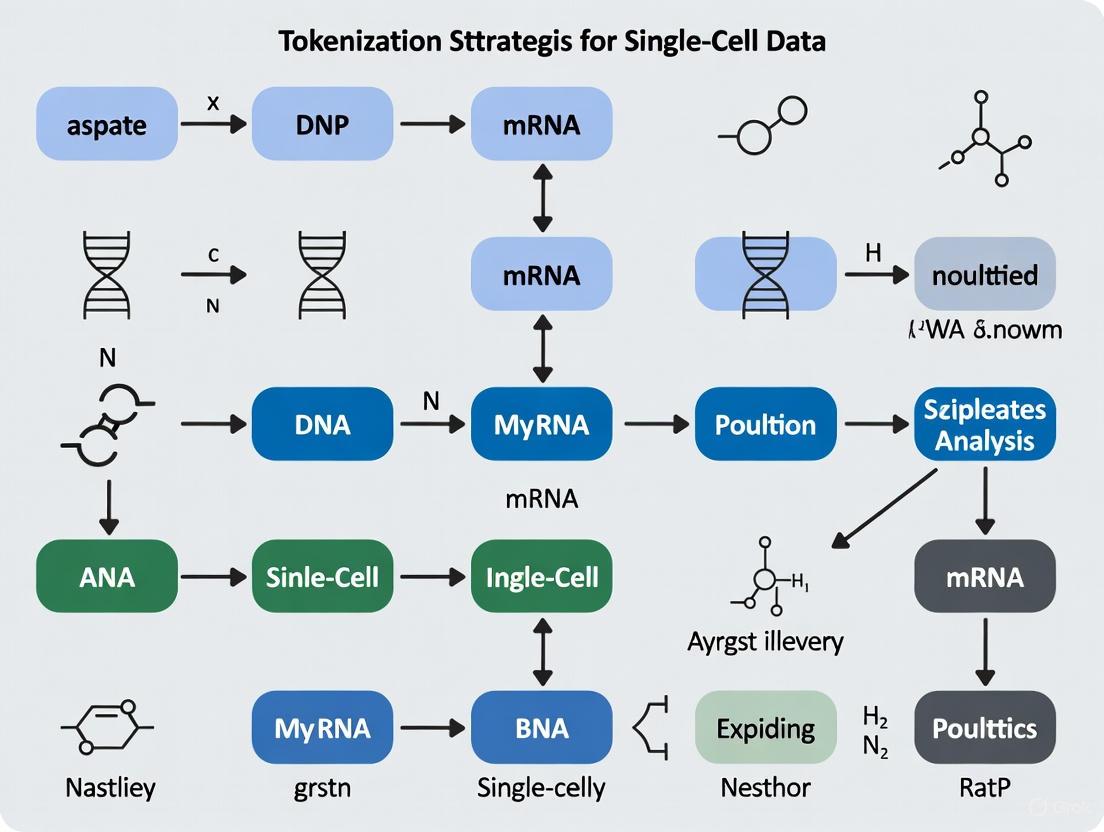

Workflow Visualization

The following diagram illustrates the complete tokenization and modeling pipeline for single-cell data, from raw input to biological insights:

Single-Cell Tokenization Pipeline - This workflow transforms raw single-cell data into biological insights using the "Cells as Sentences, Genes as Tokens" analogy.

Experimental Protocols and Methodologies

Data Preprocessing and Quality Control

Proper data preprocessing is critical for successful tokenization in single-cell analysis. The quality control (QC) stage ensures that every "cell" retained for analysis is a single, intact cell; damaged, dying, and stressed cells, as well as doublets, are discarded [5]. The three primary metrics used for cell QC are:

- Total UMI count (count depth) - Low counts indicate damaged cells

- Number of detected genes - Low numbers suggest damaged cells

- Fraction of mitochondria-derived counts - High proportions indicate dying cells [5]

For human datasets, standard preprocessing procedures typically involve retaining samples with over 200 genes expressed and applying log-normalization with a library size of 10,000 [4]. Noise genes expressed in three or fewer cell samples are typically filtered out from all datasets [4]. These steps can be implemented using packages like Scanpy in Python [4], with thresholds dependent on the tissue studied, cell dissociation protocol, and library preparation protocol.
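The filtering and normalization steps above can be sketched directly on a count matrix. This is a minimal NumPy illustration, assuming a dense cells-by-genes array and assuming that cells are filtered before genes (the text does not specify the order); the function name and defaults are illustrative. In Scanpy the corresponding calls would be `sc.pp.filter_cells`, `sc.pp.filter_genes`, `sc.pp.normalize_total`, and `sc.pp.log1p`, whose `min_genes` and `min_cells` thresholds are inclusive rather than strict.

```python
import numpy as np

def preprocess(counts, min_genes=200, min_cells=3, target_sum=1e4):
    """Filter cells and genes, then log-normalize to a fixed library size."""
    counts = np.asarray(counts, dtype=float)
    # Keep cells with more than min_genes detected genes.
    keep_cells = (counts > 0).sum(axis=1) > min_genes
    counts = counts[keep_cells]
    # Drop genes detected in min_cells or fewer of the remaining cells.
    keep_genes = (counts > 0).sum(axis=0) > min_cells
    counts = counts[:, keep_genes]
    # Scale each cell to target_sum total counts, then apply log(1 + x).
    lib = counts.sum(axis=1, keepdims=True)
    lib[lib == 0] = 1.0                      # guard against empty cells
    norm = np.log1p(counts / lib * target_sum)
    return norm, keep_cells, keep_genes

# Tiny toy matrix (5 cells x 4 genes) with thresholds scaled down to match.
toy = np.array([[5, 0, 3, 0],
                [1, 1, 1, 1],
                [0, 0, 0, 2],
                [4, 2, 0, 0],
                [3, 1, 2, 0]])
norm, kept_cells, kept_genes = preprocess(toy, min_genes=1, min_cells=1, target_sum=10)
```

As the text notes, the thresholds themselves depend on tissue, dissociation protocol, and library preparation, so the defaults here should be treated as starting points.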

Implementing Tokenization for Single-Cell Data

The following protocol outlines a standardized approach for tokenizing single-cell data for foundation model training:

Protocol 1: Gene Tokenization for scRNA-seq Data

Input Data Preparation: Begin with a processed UMI count matrix after quality control, with cells as rows and genes as columns.

Gene Selection: While some advanced models like scSFUT can process full-length gene profiles without filtering, most approaches begin with Highly Variable Gene (HVG) selection to reduce dimensionality [4]. Select 3,000-5,000 highly variable genes using methods implemented in Scanpy or Seurat.

Expression Value Processing: Normalize expression values using log(1+CPT) transformation, then standardize using z-score normalization across cells.

Token Formation: For each cell, create gene tokens by combining:

- Gene identifier (e.g., ENSEMBL ID)

- Processed expression value

- Optional positional encoding based on expression ranking [1]

Sequence Construction: Order tokens by expression magnitude or using a predetermined gene ordering schema. Typical sequence lengths range from 1,000-4,000 tokens per cell [1].

Special Tokens: Incorporate special tokens such as [MASK] and [CLS], which enable self-supervised training and contextual understanding [1].
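The token formation and sequence construction steps of Protocol 1 can be sketched as follows. The example assumes a log-normalized cells-by-genes matrix as input; the gene identifiers are placeholders rather than real Ensembl IDs, and the `[PAD]` token, the tuple layout, and the function names are assumptions introduced for illustration.

```python
import numpy as np

CLS, PAD = "[CLS]", "[PAD]"   # [PAD] is an assumed filler token for short sequences

def zscore_across_cells(log_expr):
    """Standardize each gene (column) to zero mean, unit variance across cells."""
    mu = log_expr.mean(axis=0, keepdims=True)
    sd = log_expr.std(axis=0, keepdims=True)
    sd[sd == 0] = 1.0             # constant genes become 0 instead of dividing by 0
    return (log_expr - mu) / sd

def build_sequence(values, gene_ids, max_len):
    """Emit (gene_id, value, position) tokens ordered by descending value."""
    order = np.argsort(-values, kind="stable")[: max_len - 1]   # leave room for [CLS]
    tokens = [(CLS, 0.0, 0)]
    tokens += [(gene_ids[g], float(values[g]), pos)
               for pos, g in enumerate(order, start=1)]
    tokens += [(PAD, 0.0, -1)] * (max_len - len(tokens))        # pad to fixed length
    return tokens

gene_ids = ["ENSG_A", "ENSG_B", "ENSG_C"]    # placeholder identifiers
log_expr = np.log1p(np.array([[10.0, 0.0, 2.0],
                              [1.0, 4.0, 2.0],
                              [0.0, 8.0, 2.0]]))
z = zscore_across_cells(log_expr)
seq = build_sequence(z[0], gene_ids, max_len=5)   # token sequence for the first cell
```

Each token carries the three components listed in the protocol: a gene identifier, a processed expression value, and a rank-based position. A real model would map the identifier to a learned embedding and combine it with embeddings of the value and position.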

Model Training and Fine-Tuning

Training scFMs involves self-supervised pretraining on large datasets followed by task-specific fine-tuning:

Protocol 2: Masked Language Model Pretraining

Objective: Train model to predict randomly masked gene tokens based on contextual genes in the same cell.

Masking Strategy: Randomly mask 15-20% of gene tokens in each input sequence, replacing them with [MASK] tokens.

Training Configuration: Use AdamW optimizer with learning rate warmup and linear decay, with batch sizes adapted to available hardware (typically 32-128 cells per batch).

Regularization: Apply gradient clipping, dropout (0.1-0.3), and weight decay to prevent overfitting.

Validation: Monitor reconstruction loss on held-out validation cells to determine convergence.
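The masking step of Protocol 2 can be sketched as below. The example assumes gene tokens are already mapped to integer IDs, that ID 0 is reserved for `[MASK]`, and that unmasked positions are marked with -100 so a loss function can skip them; all three conventions are assumptions for illustration, not requirements stated in the protocol.

```python
import numpy as np

MASK_ID = 0      # assumed: vocabulary ID reserved for the [MASK] token
IGNORE = -100    # assumed: label value for positions excluded from the loss

def mask_tokens(token_ids, mask_frac=0.15, rng=None):
    """Replace a random fraction of tokens with [MASK]; return inputs and labels."""
    rng = np.random.default_rng() if rng is None else rng
    token_ids = np.asarray(token_ids)
    n_mask = max(1, int(round(mask_frac * token_ids.size)))
    positions = rng.choice(token_ids.size, size=n_mask, replace=False)
    inputs = token_ids.copy()
    labels = np.full(token_ids.shape, IGNORE)
    labels[positions] = token_ids[positions]   # the model must recover these IDs
    inputs[positions] = MASK_ID
    return inputs, labels

cell_tokens = np.arange(1, 21)                 # 20 gene-token IDs for one cell
masked_inputs, targets = mask_tokens(cell_tokens, mask_frac=0.15,
                                     rng=np.random.default_rng(0))
```

The reconstruction loss monitored during validation would then be computed only at positions where the label differs from the ignore value.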

For downstream tasks, the pretrained model can be fine-tuned with additional task-specific layers and minimal data, leveraging the transfer learning capabilities of the foundation model [1] [4].

Table 3: Essential Resources for Single-Cell Tokenization Research

| Resource Category | Specific Tools/Solutions | Function/Purpose |
|---|---|---|
| Data Sources | CZ CELLxGENE, Human Cell Atlas, NCBI GEO [1] | Provide standardized, annotated single-cell datasets for training and validation |
| Processing Pipelines | Cell Ranger (10x Genomics), CeleScope (Singleron) [5] | Process raw sequencing data into count matrices for downstream analysis |
| Quality Control Tools | Seurat, Scater, Scanpy [5] | Perform cell-level QC, filtering, and normalization |
| Tokenization Frameworks | scBERT, scGPT, scSFUT [1] [4] | Implement gene tokenization and sequence formation for model input |
| Model Architectures | Transformer variants (BERT, GPT) [1] | Provide backbone architectures for single-cell foundation models |
| Special Tokens | [MASK], [CLS], Positional Encodings [1] | Enable self-supervised training and contextual understanding |
| Analysis Platforms | GDC Single Cell Portal, Scanpy, Seurat [6] | Facilitate visualization, interpretation, and biological discovery |

Advanced Applications and Future Directions

The cell-as-sentence analogy enables numerous advanced applications in biomedical research and drug development. These include:

Cell Type Annotation: Foundation models fine-tuned for annotation tasks can automatically identify cell types in new datasets with high accuracy, significantly reducing manual annotation efforts [4].

Perturbation Modeling: Models can predict how genetic or chemical perturbations will alter cellular states by "masking" specific genes and predicting the outcome, potentially accelerating drug discovery [1].

Cross-Species Analysis: Advanced tokenization approaches enable models to transfer knowledge between species by aligning orthologous genes, facilitating research in model organisms [4].

Multi-Modal Integration: The tokenization framework can be extended to incorporate multiple data modalities (ATAC-seq, proteomics) by adding modality-specific tokens, creating comprehensive cellular representations [1].

As the field advances, future developments will likely focus on improving tokenization strategies to better capture biological reality, reducing computational requirements, and enhancing model interpretability. The integration of more sophisticated biological knowledge into token representations—such as pathway information or regulatory networks—represents a promising direction for making the cell-as-sentence analogy even more powerful and biologically meaningful [2] [3].

In the rapidly evolving field of single-cell genomics, researchers are confronted with an unprecedented deluge of high-dimensional data capturing molecular states across millions of individual cells. The advent of single-cell omics technologies has revolutionized our ability to investigate biological systems at cellular resolution, offering unprecedented insights into cellular heterogeneity, developmental pathways, and disease mechanisms [7]. Concurrently, artificial intelligence, particularly foundation models, has emerged as a transformative tool for interpreting these complex datasets. The critical bridge that enables AI models to process biological data is tokenization—the process of converting raw biomolecular measurements into discrete, machine-interpretable units [1] [8].

Tokenization serves as the fundamental translation layer between the languages of biology and computation. In single-cell foundation models (scFMs), individual cells are treated analogously to sentences, while genes or other genomic features along with their values are treated as words or tokens [1] [8]. This conceptual framing allows researchers to leverage sophisticated transformer architectures originally developed for natural language processing to decipher the "language of cells." The process is not merely a technical preprocessing step but a crucial determinant of how effectively AI models can capture biological meaning, with profound implications for drug discovery, disease mechanism elucidation, and therapeutic development [9].

The Computational Anatomy of Single-Cell Tokenization

Fundamental Concepts and Definitions

At its core, tokenization standardizes raw, often unstructured biological data into structured representations that deep learning models can process and learn from [1] [8]. For single-cell omics data, this involves several critical considerations:

- Gene-Level Tokenization: Most scFMs treat each gene (or genomic feature) as a distinct token, with expression values or accessibility scores determining the token's representation [1].

- Sequence Construction: Unlike words in a sentence, genes in a cell have no inherent ordering, presenting a fundamental challenge for transformer architectures that process sequential data [1] [8].

- Multi-Modal Integration: Advanced tokenization schemes incorporate tokens indicating data modality (e.g., scRNA-seq vs. scATAC-seq) and batch information to enable integrated analysis across technologies and experiments [7] [1].
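The idea of modality and batch tokens can be illustrated with a short sketch that prepends context tokens to each cell's feature sequence and assigns integer IDs from a shared vocabulary. The token spellings such as `<modality:...>`, the batch names, and the helper functions are hypothetical conventions chosen for this example.

```python
def add_context_tokens(feature_tokens, modality, batch):
    """Prepend modality and batch tokens to a cell's feature-token sequence."""
    return [f"<modality:{modality}>", f"<batch:{batch}>"] + list(feature_tokens)

def build_vocab(sequences):
    """Assign consecutive integer IDs to tokens in order of first appearance."""
    vocab = {}
    for seq in sequences:
        for tok in seq:
            vocab.setdefault(tok, len(vocab))
    return vocab

# One RNA cell (gene tokens) and one ATAC cell (a peak token), from two batches.
rna_cell = add_context_tokens(["CD3E", "IL7R"], "scRNA-seq", "donor1")
atac_cell = add_context_tokens(["chr1:1000-1500"], "scATAC-seq", "donor2")
vocab = build_vocab([rna_cell, atac_cell])
encoded = [[vocab[t] for t in seq] for seq in (rna_cell, atac_cell)]
```

Because the context tokens share the vocabulary with gene and peak tokens, the attention mechanism can condition every feature on the modality and batch of the cell it came from.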

Predominant Tokenization Strategies in Current scFMs

Table 1: Comparative Analysis of Single-Cell Tokenization Strategies

| Strategy | Core Methodology | Advantages | Limitations | Representative Models |
|---|---|---|---|---|
| Expression Ranking | Genes are ordered by expression levels within each cell to create a deterministic sequence [1] [8] | Provides consistent input structure; mimics importance weighting | Arbitrary ordering that may not reflect biological relationships | Geneformer [2] |
| Value Binning | Continuous expression values are partitioned into discrete bins, with bins serving as tokens [1] [8] | Reduces noise from precise values; captures expression ranges | Loss of quantitative precision; bin boundaries may introduce artifacts | scGPT [7] |
| Normalized Counts | Uses normalized expression values directly without imposing a gene order [1] [8] | Simplicity; preserves quantitative relationships | Requires robust normalization; may emphasize technical artifacts | Various emerging models [1] |
| Multi-Modal Tokens | Incorporates special tokens for different omics modalities and batch information [7] | Enables integrated analysis; accounts for technical variation | Increased model complexity; potential for overfitting | scGPT, Nicheformer [7] |

The Tokenization Technical Workflow

The process of tokenizing single-cell data follows a structured pipeline that transforms raw expression matrices into model-ready inputs:

Diagram 1: Single-Cell Tokenization Workflow

The Tokenization Dilemma: Technical Challenges and Emerging Solutions

Fundamental Limitations in Current Approaches

The tokenization of biological data presents unique challenges that distinguish it from tokenization in natural language processing:

The Non-Sequential Nature of Gene Expression: Unlike words in a sentence, genes lack inherent ordering, forcing researchers to impose an artificial order that may not reflect biological reality [1] [8]. This imposed ordering represents a significant compromise in model design.

The Granularity Trade-off: Excessively granular tokenization (e.g., single nucleotides or amino acids) destroys functional biological motifs, while overly coarse approaches may miss critical regulatory patterns [10]. Finding the optimal resolution remains an open research question.

Context Preservation: Raw sequence tokenization often fails to capture established biological context—functional motifs, domains, and regulatory elements—that experienced biologists naturally incorporate in their analysis [10].
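The granularity trade-off for raw sequences can be made concrete with a small k-mer tokenizer. This is a generic sketch, with the function name and the `stride` parameter introduced here for illustration: `k=1` reproduces single-nucleotide tokens, while larger `k` yields fewer distinct positions but a vocabulary that grows as 4^k for DNA.

```python
def kmer_tokens(sequence, k, stride=1):
    """Split a nucleotide sequence into k-length tokens taken every `stride` bases."""
    seq = sequence.upper()
    return [seq[i:i + k] for i in range(0, len(seq) - k + 1, stride)]

overlapping = kmer_tokens("ATGCGTA", k=3)             # stride 1: overlapping 3-mers
disjoint = kmer_tokens("ATGCGTA", k=3, stride=3)      # stride k: non-overlapping
single = kmer_tokens("ATGCGTA", k=1)                  # finest granularity
```

With overlapping tokens a motif is seen in every reading frame, at the cost of a sequence almost as long as the input; non-overlapping tokens shorten the sequence but can split a motif across token boundaries.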

Performance Benchmarks: Quantifying Tokenization Impact

Table 2: Performance Comparison Across Tokenization Strategies in Key Biological Tasks

| Model | Tokenization Approach | Cell Type Annotation Accuracy | Cross-Species Transfer | Perturbation Prediction | Computational Efficiency |
|---|---|---|---|---|---|
| scGPT [7] | Multi-modal with value embedding | 94.7% (human immune cells) | 89.3% (mouse-to-human) | 0.89 AUC | 2.1x baseline |
| scPlantFormer [7] | Phylogenetic-aware tokenization | 92.0% (plant systems) | 91.8% (cross-species plants) | 0.85 AUC | 1.7x baseline |
| Nicheformer [7] | Spatial context tokenization | 95.2% (spatial niches) | 86.4% (tissue transfer) | 0.91 AUC | 2.8x baseline |
| scBERT [1] | Expression ranking + binning | 88.5% (broad cell types) | 78.9% (limited transfer) | 0.79 AUC | 1.0x baseline |

Emerging Paradigms: Context-Enhanced Tokenization

Recent research challenges the prevailing sequence-centric tokenization paradigm, suggesting that providing models with high-level structured context derived from established bioinformatics tools may be more effective than raw sequence analysis alone [10]. Strikingly, studies demonstrate that context-only approaches consistently outperform sequence-only methods, and including raw sequences alongside contextual information often degrades performance, suggesting that raw sequences can act as "informational noise" [10].

This context-enhanced framework leverages decades of accumulated biological knowledge embedded in expert tools and databases—from BLAST for sequence homology to Pfam for conserved domains and Gene Ontology for functional terms. These resources are transformed into information-rich textual context that is natively aligned with the LLM's linguistic domain, entirely circumventing the tokenization dilemma [10].

Experimental Protocols: Methodological Framework for Tokenization Evaluation

Standardized Benchmarking Protocol for scFM Tokenization

To ensure reproducible evaluation of tokenization strategies, researchers should implement the following standardized protocol:

Data Curation and Preprocessing:

- Source at least 5 diverse single-cell datasets from public repositories (e.g., CZ CELLxGENE, Human Cell Atlas) encompassing 1+ million cells total [7] [1]

- Apply uniform quality control metrics: minimum 500 genes/cell, maximum 10% mitochondrial reads, removal of doublets

- Implement standardized normalization using SCTransform or similar approaches

- Split data into pretraining (70%), validation (15%), and testing (15%) sets, ensuring biological diversity across splits
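The quality control thresholds and the 70/15/15 split above can be sketched as follows, assuming per-cell QC metrics have already been computed. The function names are illustrative, and the split shown is a plain random permutation: ensuring biological diversity across splits would additionally require stratifying by dataset, tissue, or cell type, which is not shown here.

```python
import numpy as np

def qc_mask(n_genes, mito_frac, is_doublet, min_genes=500, max_mito=0.10):
    """True for cells passing the gene-count, mitochondrial, and doublet filters."""
    return ((np.asarray(n_genes) >= min_genes)
            & (np.asarray(mito_frac) <= max_mito)
            & ~np.asarray(is_doublet, dtype=bool))

def split_indices(n_cells, fractions=(0.70, 0.15, 0.15), seed=0):
    """Randomly partition cell indices into pretraining, validation, and test sets."""
    rng = np.random.default_rng(seed)
    perm = rng.permutation(n_cells)
    n_train = int(round(fractions[0] * n_cells))
    n_val = int(round(fractions[1] * n_cells))
    return perm[:n_train], perm[n_train:n_train + n_val], perm[n_train + n_val:]

passed = qc_mask(n_genes=[600, 400, 800, 900],
                 mito_frac=[0.05, 0.02, 0.20, 0.01],
                 is_doublet=[False, False, False, True])
train_idx, val_idx, test_idx = split_indices(100)
```

Fixing the seed keeps the partition identical across tokenization strategies, so that differences in downstream metrics are not confounded by different held-out cells.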

Tokenization Implementation:

- Implement at least three distinct tokenization strategies (e.g., expression ranking, value binning, normalized counts)

- For each strategy, generate token sequences of consistent length (typically 1,024-2,048 tokens)

- Incorporate positional encoding schemes appropriate to each tokenization approach

- Apply consistent batch correction tokens where applicable

Model Training and Evaluation Framework

Diagram 2: Tokenization Evaluation Workflow

Evaluation Metrics and Biological Validation:

- Cell Type Annotation: Measure accuracy, F1-score, and cluster purity using expert-curated labels

- Batch Integration: Quantify batch correction using kBET and LISI metrics [7]

- Perturbation Modeling: Assess predictive performance using AUC-ROC and precision-recall curves

- Biological Ground Truth: Validate identified gene regulatory networks against established literature and CRISPR screening data
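The cell type annotation metrics listed above are simple enough to sketch without external libraries. The version below assumes macro-averaged F1 over the labels present in the ground truth and defines cluster purity as the fraction of cells that carry the majority label of their cluster; both are common conventions but are assumptions here, and the batch integration metrics (kBET, LISI) are not implemented.

```python
from collections import Counter

def accuracy(y_true, y_pred):
    """Fraction of cells whose predicted label matches the curated label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over the classes present in y_true."""
    scores = []
    for label in sorted(set(y_true)):
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

def cluster_purity(labels, clusters):
    """Fraction of cells carrying the majority label of their assigned cluster."""
    by_cluster = {}
    for lab, clu in zip(labels, clusters):
        by_cluster.setdefault(clu, Counter())[lab] += 1
    return sum(c.most_common(1)[0][1] for c in by_cluster.values()) / len(labels)

y_true = ["T", "T", "B", "B", "NK", "NK"]
y_pred = ["T", "T", "B", "T", "NK", "B"]
clusters = [0, 0, 1, 0, 2, 1]
acc, f1, purity = accuracy(y_true, y_pred), macro_f1(y_true, y_pred), cluster_purity(y_true, clusters)
```

Macro averaging weights rare cell types equally with abundant ones, which matters when a tokenization strategy performs well on common populations but poorly on rare ones.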

Table 3: Research Reagent Solutions for Single-Cell Tokenization Experiments

| Resource Category | Specific Tools & Platforms | Primary Function | Application Context |
|---|---|---|---|
| Data Repositories | CZ CELLxGENE [1], DISCO [7], Human Cell Atlas [7] | Standardized access to annotated single-cell datasets | Pretraining corpus assembly; benchmark dataset sourcing |
| Model Architectures | scGPT [7], scBERT [1], Nicheformer [7] | Reference implementations of tokenization strategies | Method comparison; baseline establishment |
| Evaluation Frameworks | BioLLM [7], scPlantFormer [7] | Standardized benchmarking of tokenization approaches | Performance validation; comparative analysis |
| Processing Pipelines | scGNN+ [7], Scanpy [1] | Preprocessing and normalization of raw single-cell data | Data preparation; quality control implementation |
| Specialized Libraries | TensorFlow, PyTorch (with transformer extensions) | Custom model implementation and training | Experimental tokenization strategy development |

Future Directions: Advancing Tokenization for Next-Generation Biological AI

As single-cell technologies continue to evolve, tokenization strategies must advance accordingly. Promising research directions include:

Dynamic Tokenization: Developing adaptive tokenization schemes that adjust granularity based on biological context and research question, moving beyond one-size-fits-all approaches [11] [10].

Knowledge-Guided Tokenization: Incorporating established biological knowledge—gene ontologies, pathway memberships, protein-protein interactions—directly into token representation to create biologically-informed embeddings [1] [10].

Multi-Scale Tokenization: Implementing hierarchical tokenization schemes that simultaneously represent individual genes, functional modules, and cellular programs at different abstraction levels [7] [9].

Transferable Tokenization: Creating universal tokenization standards that enable seamless model transfer across diverse biological contexts, from basic research to clinical applications [7] [9].

The development of more sophisticated tokenization approaches will play a pivotal role in bridging the gap between cellular omics and actionable biological understanding, ultimately accelerating the translation of computational advances into mechanistic insights and clinical applications [7]. As the field matures, tokenization may evolve from its current role as "unsexy plumbing" to become a recognized critical enabler of biological discovery [12].

Overcoming the Nonsequential Nature of Gene Expression Data

In single-cell genomics, the nonsequential nature of gene expression data presents a fundamental challenge for computational analysis. Unlike natural language, where words follow grammatical structures, or genomic sequences with their linear nucleotide arrangements, the thousands of genes expressed in a single cell have no inherent ordering. This lack of natural sequence creates significant obstacles for applying powerful sequence-based artificial intelligence models to biological data. The expression levels of genes collectively define a cell's state, but their unordered structure requires specialized computational approaches to extract meaningful biological insights.

The emergence of single-cell foundation models (scFMs) represents a paradigm shift in addressing this challenge. These large-scale deep learning models, pretrained on vast single-cell datasets, aim to decipher the 'language' of cells by treating individual cells as sentences and genes or genomic features as words or tokens [1]. However, this analogy requires sophisticated computational strategies to impose meaningful structure on inherently unordered gene expression data, enabling the application of transformer architectures that have revolutionized natural language processing [1] [3].

Tokenization Strategies for Nonsequential Data

Tokenization—the process of converting raw gene expression data into discrete units processable by machine learning models—requires specialized approaches to overcome the absence of natural sequence. Researchers have developed multiple strategies to create artificial order from nonsequential gene expression profiles.

Table 1: Comparison of Tokenization Strategies for Single-Cell Data

| Strategy | Method | Advantages | Limitations | Representative Models |
|---|---|---|---|---|
| Expression Ranking | Genes ordered by expression level within each cell | Deterministic; preserves highly expressed genes | Arbitrary sequence; may lose low-expression signals | Geneformer [1] |
| Binning | Partitioning genes into bins by expression values | Reduces noise from small expression variations | May obscure subtle expression differences | scBERT, scGPT [1] |
| Normalized Counts | Using normalized expression values without reordering | Simple and fast; preserves original relationships | May not optimize sequence for attention mechanisms | Various scFMs [1] |
| Metadata Enrichment | Adding special tokens for cell identity or modality | Provides biological context; enables multimodal learning | Increases complexity of input representation | Multimodal scFMs [1] |

The expression ranking approach has emerged as a particularly common strategy, where genes within each cell are ranked by their expression levels, and the ordered list of top genes is treated as a 'sentence' for the model [1]. This method provides a deterministic structure that enables transformer models to apply attention mechanisms effectively. However, this artificial ordering inevitably introduces biases, as the ranking prioritizes highly expressed genes while potentially diminishing the contribution of subtly but importantly expressed genes.

More advanced strategies incorporate biological context through special tokens representing cell-type metadata, experimental conditions, or multimodal information [1]. For example, some models prepend a token representing the cell's own identity and metadata, enabling the model to learn cell-level context [1]. These approaches help ground the artificial sequences in biological reality, allowing models to capture relationships between gene expression patterns and cellular functions, states, and environments.

Geometric Foundations of Embedding Spaces

The tokenization of nonsequential gene expression data facilitates its projection into high-dimensional embedding spaces where geometric relationships can reveal biological patterns. The theoretical foundation for this approach draws inspiration from the distributional hypothesis in linguistics, which equates semantic similarity with contextual proximity [3].

In single-cell biology, an analogous hypothesis operates: cells occurring in similar biological contexts (e.g., the same tissues, developmental stages, or disease states) should occupy proximate regions in embedding space [3]. This principle enables self-supervised training of foundation models, where the model learns to position cells with similar expression profiles closer in the embedding space, effectively creating a geometric representation of biological similarity.
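The notion of proximity in embedding space can be illustrated with cosine similarity between cell embeddings. The sketch below uses hand-written two-dimensional vectors in place of real model outputs, and the function names are illustrative; the choice of cosine similarity rather than Euclidean distance is also an assumption.

```python
import numpy as np

def cosine_similarity_matrix(emb):
    """Pairwise cosine similarity between rows of a cells-by-dimensions matrix."""
    emb = np.asarray(emb, dtype=float)
    norms = np.linalg.norm(emb, axis=1, keepdims=True)
    unit = emb / np.where(norms == 0, 1.0, norms)   # zero vectors stay zero
    return unit @ unit.T

def nearest_neighbors(emb, k):
    """Indices of the k most similar other cells for each cell."""
    sim = cosine_similarity_matrix(emb)
    np.fill_diagonal(sim, -np.inf)                  # a cell is not its own neighbor
    return np.argsort(-sim, axis=1)[:, :k]

# Toy embeddings: cells 0 and 1 share one context, cells 2 and 3 another.
embeddings = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
neighbors = nearest_neighbors(embeddings, k=1)
```

If the analogous hypothesis holds for a trained model, the nearest neighbors of a cell in this space should tend to come from the same tissue, developmental stage, or disease state.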

A significant challenge in these embedding spaces is the phenomenon of cellular polysemy, where cells with similar transcriptional profiles may have different biological functions or identities depending on context [3]. For example, blood vascular endothelial cells share consistent transcriptional profiles across different tissues due to their similar structural roles, potentially mapping to the same embedding region despite their anatomical separation [3]. This ambiguity can be resolved through dynamic embedding approaches that adjust a cell's representation based on additional contextual information, such as spatial position or protein markers, similar to how context-aware language models handle polysemous words [3].

Table 2: Experimental Protocols for Single-Cell RNA Sequencing

| Protocol | Isolation Strategy | Transcript Coverage | UMI | Amplification Method | Applications |
|---|---|---|---|---|---|
| Smart-Seq2 | FACS | Full-length | No | PCR | Enhanced sensitivity for low-abundance transcripts [13] |
| Drop-Seq | Droplet-based | 3'-end | Yes | PCR | High-throughput, low cost per cell [13] |
| CEL-Seq2 | FACS | 3'-only | Yes | IVT | Linear amplification reduces bias [13] |
| SPLiT-Seq | Not required | 3'-only | Yes | PCR | Combinatorial indexing without physical separation [13] |
| MATQ-Seq | Droplet-based | Full-length | Yes | PCR | Increased accuracy in quantifying transcripts [13] |

Experimental Protocols and Data Generation

Generating high-quality single-cell RNA sequencing data requires careful selection of experimental protocols, each with distinct advantages for specific research applications. The fundamental steps encompass single-cell isolation and capture, cell lysis, reverse transcription, cDNA amplification, and library preparation [13].

Protocols differ significantly in their transcript coverage strategies. Full-length methods such as Smart-Seq2 and MATQ-Seq excel in detecting isoform usage, allelic expression, and RNA editing due to their comprehensive coverage of transcripts [13]. These protocols are particularly valuable for discovering novel splice variants or studying transcriptional regulation mechanisms. In contrast, 3'-end counting methods like Drop-Seq and inDrop enable higher throughput at lower cost per cell, making them ideal for large-scale atlas projects aimed at comprehensive cell type cataloging [13].

The choice of amplification method also significantly impacts data quality. Most protocols utilize polymerase chain reaction (PCR) amplification, while others such as inDrop and CEL-Seq2 rely on in vitro transcription (IVT) for amplification [13]. Each method introduces different biases that must be considered during experimental design and computational analysis. The incorporation of Unique Molecular Identifiers (UMIs) in most modern protocols enables accurate quantification by correcting for amplification biases [13].
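To make the role of UMIs concrete, the following minimal sketch collapses reads that share a cell barcode, gene, and UMI into a single molecule. The function name, barcodes, and UMI strings are hypothetical, and the sketch assumes error-free UMIs, which production pipelines do not.

```python
from collections import defaultdict

def count_molecules(reads):
    """Collapse reads into per-cell, per-gene molecule counts.

    `reads` is an iterable of (cell_barcode, gene, umi) tuples. Reads that
    share all three fields are treated as amplification copies of one molecule.
    """
    umis_seen = defaultdict(set)
    for cell_barcode, gene, umi in reads:
        umis_seen[(cell_barcode, gene)].add(umi)
    return {key: len(umis) for key, umis in umis_seen.items()}

# Toy reads with hypothetical barcodes and UMIs.
reads = [
    ("CELL_A", "GZMB", "AACG"),
    ("CELL_A", "GZMB", "AACG"),  # amplification duplicate of the read above
    ("CELL_A", "GZMB", "TTGC"),
    ("CELL_A", "PRF1", "GGAT"),
    ("CELL_B", "GZMB", "AACG"),  # same UMI in another cell: a distinct molecule
]
counts = count_molecules(reads)
```

Five reads reduce to four molecules here, because the duplicated GZMB read in CELL_A is counted once.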

Visualization and Analysis Frameworks

Effective visualization tools are essential for interpreting the high-dimensional relationships in single-cell data. Vitessce represents an advanced framework for integrative visualization of multimodal and spatially resolved single-cell data, enabling simultaneous exploration of transcriptomics, proteomics, genome-mapped, and imaging modalities [14].

This visualization framework addresses the challenge of exploring connections across modalities through coordinated multiple views, where interactions such as gene or cell type selections are reflected across all visualizations simultaneously [14]. This capability is particularly valuable for validating cell types characterized by markers in both RNA and protein modalities, as demonstrated in CITE-seq data where natural killer cells can be identified based on both CD56 protein levels and expression of genes GZMB, GZMK, and PRF1 [14].

For quality control assessment, the Single-Cell Toolkit (SCTK-QC) pipeline provides a comprehensive solution for generating and visualizing quality control metrics [15]. This pipeline performs crucial QC tasks including empty droplet detection, doublet prediction, and estimation of ambient RNA contamination—all essential steps for ensuring data quality before applying tokenization strategies [15].

Tokenization Workflow for Nonsequential Data

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function | Application Context |

|---|---|---|

| Poly[T]-primers | Selective capture of polyadenylated mRNA | Sample preparation to minimize ribosomal RNA contamination [13] |

| Unique Molecular Identifiers (UMIs) | Barcoding of individual mRNA molecules | Correction of amplification biases in droplet-based protocols [13] [15] |

| Cell Barcodes | Labeling individual cells during sequencing | Demultiplexing cells in high-throughput protocols [15] |

| Vitessce | Interactive visualization of multimodal data | Visual exploration of spatial and single-cell data relationships [14] |

| SCTK-QC Pipeline | Comprehensive quality control metrics | Detection of empty droplets, doublets, and ambient RNA [15] |

| SingleCellExperiment Object | Standardized data container | Storage of single-cell data with cell-level annotations in R [15] |

Overcoming the nonsequential nature of gene expression data requires an integrated approach combining sophisticated tokenization strategies, appropriate experimental protocols, and advanced visualization frameworks. The geometric properties of embedding spaces created by single-cell foundation models provide a powerful framework for extracting biological meaning from inherently unordered gene expression profiles.

Future developments in this field will likely focus on dynamic embedding approaches that more effectively handle cellular polysemy by incorporating rich contextual information about cellular environments, spatial relationships, and multimodal measurements. As these methods mature, they will increasingly enable researchers to move beyond static cell type classifications toward dynamic models of cellular states and transitions, ultimately advancing our understanding of developmental biology, disease mechanisms, and therapeutic interventions.

The integration of multimodal data through unified tokenization schemes represents another promising direction, allowing models to simultaneously reason about gene expression, chromatin accessibility, protein abundance, and spatial context. Such integrated approaches will be essential for building comprehensive virtual cell models that capture the full complexity of cellular function and organization.

The emergence of sophisticated machine learning models in single-cell biology has created an unprecedented demand for high-quality, standardized, and scalable data sources for model pretraining. The choice of data repository directly impacts model performance, generalizability, and biological relevance through the fundamental process of tokenization—where biological entities (cells, genes, samples) are transformed into computable representations. This technical guide provides researchers and drug development professionals with a comprehensive analysis of major public single-cell data repositories, focusing on their quantitative characteristics, data standardization frameworks, and practical integration into pretraining pipelines for single-cell data research.

The following tables provide a structured comparison of the scale, content, and technical specifications of key data sources relevant for pretraining foundational models in single-cell biology.

Table 1: Core Quantitative Metrics of Primary Single-Cell Data Platforms

| Repository | Unique Cells | Datasets/Collections | Cell Types | Key Species | Primary Data Types |

|---|---|---|---|---|---|

| CZ CELLxGENE Discover | 93.6 million+ (as of Oct 2024) [16] | 1,550+ datasets [16] | 700+ in Cell Guide [17] | Human, mouse, roundworm, zebrafish, fruit fly [18] | scRNA-seq, scATAC-seq, multi-modal, spatial (Visium, Slide-seq) [18] |

| Human Cell Atlas (HCA) | Not specified (across multiple platforms) | Multiple Biological Networks (e.g., Lung, Immune, Kidney) [19] | Varies by tissue atlas | Human, model organisms | scRNA-seq, scATAC-seq, with raw FASTQs [20] [21] |

| GEO/SRA | Varies by study | Repository-wide (not standardized) | Varies by study | Multiple organisms | Bulk RNA-seq, scRNA-seq, microarray, other NGS [22] |

| Single Cell Portal (Broad) | Varies by study | Study-centric | Varies by study | Human, mouse | scRNA-seq, with visualization tools [22] |

Table 2: Technical Specifications for Data Access and Integration

| Repository | Standardization Level | Programmatic Access | Metadata Schema | Raw Data Availability | Batch Effect Annotation |

|---|---|---|---|---|---|

| CZ CELLxGENE Discover | High (minimal schema with 11 required fields) [16] | Census API (R/Python) [17] [16] | Versioned minimal schema with ontology terms [16] | Processed matrices (raw counts required) [18] [16] | Optional batch condition fields in metadata [18] |

| Human Cell Atlas | Tiered system (Tier 1 for integration, Tier 2 for analysis) [19] | Multiple access methods | Three-tier schema with managed access for sensitive fields [19] [20] | FASTQ files + processed data [20] [21] | Tier 1 fields identify technical batch effects [19] |

| GEO/SRA | Low (study-dependent) | Limited (SRA tools) | Study-specific, variable quality | FASTQ and processed data | Not standardized |

| EMBL Expression Atlas | Medium (curated but not universal) | Web services, downloads | Baseline vs. differential studies [22] | Processed matrices + raw data links | Limited standardization |

Repository-Specific Architectures and Data Models

CZ CELLxGENE Discover: A Standardized Corpus for Large-Scale Integration

CZ CELLxGENE employs a minimal schema approach with 11 required fields designed specifically for cross-dataset integration, a critical feature for model pretraining [16]. The platform's architecture enforces ontology-based standardization for key biological variables including development stage, sex, self-reported ethnicity, and tissue type, ensuring consistent tokenization across studies [18] [16]. All submitted data must include raw count matrices, enabling proper normalization and comparison across datasets—a fundamental requirement for training robust models [16].

The platform's Explorer feature provides no-code visualization of dataset embeddings, allowing researchers to qualitatively assess cluster quality and dataset structure before incorporation into training pipelines [17]. For computational access, the Census API provides efficient programmatic access to custom data slices in standard data structures compatible with popular analysis frameworks [17] [16].

Human Cell Atlas: Tiered Metadata for Secure and Comprehensive Data

HCA implements a sophisticated three-tier metadata schema that separates data based on integration utility and privacy requirements [19]. Tier 1 metadata provides the foundational fields required for computational integration (e.g., sample identification, batch effect identification), making it particularly valuable for pretraining data curation [19]. Tier 2 metadata contains more detailed biological context and potential identifiers, protected through a managed access system via the DUOS platform [19] [20].

The HCA ecosystem spans multiple platforms: CELLxGENE Discover stores matrices and Tier 1 metadata, the HCA Data Repository stores FASTQs and Tier 2 metadata, and the Cell Annotation Platform (CAP) enables collaborative cell type annotation [20]. This distributed architecture balances accessibility with privacy protection for sensitive donor information.

Complementary Public Repositories

GEO/SRA serves as a comprehensive but less standardized repository, accepting diverse data types including microarray, bulk RNA-seq, and scRNA-seq [22]. While lacking the standardization of dedicated single-cell platforms, its vast scope makes it valuable for certain pretraining scenarios, particularly when accessed through reprocessing pipelines like ARCHS4 or Recount3 that add standardization layers [22].

The Single Cell Expression Atlas from EMBL provides curated single-cell datasets with baseline (steady-state) and differential (comparative) categorizations, offering intermediate standardization between raw GEO data and highly curated platforms [22]. The Single Cell Portal from Broad Institute enables study-specific exploration with embedded visualizations, useful for due diligence on individual datasets before inclusion in training corpora [22].

Experimental Protocols and Data Processing Workflows

Data Retrieval and Standardization Pipeline

Diagram: Workflow from raw data retrieval to an analysis-ready dataset for model pretraining

CELLxGENE Data Submission and Curation Protocol

Understanding the data submission process provides insight into data quality and standardization, critical for assessing training data suitability:

Data Eligibility Screening: Researchers submit data descriptions to the CELLxGENE team for approval, ensuring compatibility with supported species (human, mouse, zebrafish, etc.) and assays (scRNA-seq, scATAC-seq, multi-modal) [18].

File Preparation: Contributors prepare AnnData files (version 0.8) containing:

- Raw counts in X or raw.X (required)

- Normalized counts (strongly recommended)

- Cell metadata in obs with ontology terms

- Embeddings in obsm (at least one 2D embedding required)

- Gene features in var using Ensembl IDs [18]

Metadata Annotation: Application of standardized ontologies to key fields:

- Development stage: HsapDv (human), MmusDv (mouse)

- Sex: PATO ontology terms

- Ethnicity: HANCESTRO for human data

- Tissue: UBERON ontology

- Cell type: Cell Ontology (CL) [18]
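The ontology assignments above lend themselves to a simple automated check. The sketch below only verifies that each field carries a term with the expected ontology prefix. The field names are illustrative rather than the exact CELLxGENE column names, the term IDs are example-format strings that should be verified before reuse, and the real schema has additional rules that this sketch ignores.

```python
# Expected ontology prefix per metadata field, following the list above.
# Field names are illustrative, not the exact CELLxGENE schema columns.
EXPECTED_PREFIXES = {
    "development_stage": ("HsapDv", "MmusDv"),
    "sex": ("PATO",),
    "ethnicity": ("HANCESTRO",),
    "tissue": ("UBERON",),
    "cell_type": ("CL",),
}

def find_prefix_errors(cell_metadata):
    """Return a list of human-readable problems; empty if all prefixes match."""
    errors = []
    for field, allowed in EXPECTED_PREFIXES.items():
        term = cell_metadata.get(field)
        if term is None:
            errors.append(f"{field}: missing")
            continue
        prefix = term.split(":", 1)[0]
        if prefix not in allowed:
            errors.append(f"{field}: unexpected prefix '{prefix}'")
    return errors

# Example-format term IDs (assumed for illustration only).
good = {
    "development_stage": "HsapDv:0000087",
    "sex": "PATO:0000384",
    "ethnicity": "HANCESTRO:0005",
    "tissue": "UBERON:0002048",
    "cell_type": "CL:0000084",
}
bad = dict(good, tissue="lung")  # free text instead of an ontology term

good_errors = find_prefix_errors(good)
bad_errors = find_prefix_errors(bad)
```

Free-text values such as "lung" are the kind of inconsistency that ontology terms are meant to prevent. Catching them before tokenization keeps the metadata vocabulary closed.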

Quality Control and Validation: CELLxGENE curators collaboratively review submissions, validating schema compliance and metadata accuracy before publication [16].

Automated Retrieval and Integration with Celline

For large-scale pretraining data acquisition, automated tools like Celline provide efficient workflows:

- Unified Data Access: Celline executes single-line commands to gather raw single-cell RNA-seq data from multiple public repositories, eliminating manual curation of accessions [23].

- Metadata Standardization: The tool leverages large language models to extract and standardize metadata across sources, addressing a key challenge in multi-dataset integration [23].

- End-to-End Processing: Celline wraps established tools (Scrublet for doublet removal, Seurat/Scanpy for quality control, Harmony/scVI for batch correction) into a unified pipeline [23].

- Validation Protocol: Applied to mouse brain cortex datasets, Celline demonstrated capability to remove low-quality cells, annotate 11 major cell types, improve integration quality (scIB score +0.22), and complete trajectory analysis [23].

Table 3: Computational Tools and Resources for Data Processing and Analysis

| Tool/Resource | Function | Application in Pretraining | Access Method |

|---|---|---|---|

| Census API | Programmatic access to CELLxGENE data | Efficient retrieval of custom data slices for training | R/Python package [17] [16] |

| Celline | Automated retrieval and integration pipeline | End-to-end processing of multi-source data | Python package [23] |

| Scrublet | Doublet detection in scRNA-seq data | Quality control during data preprocessing | Python package [23] |

| Harmony/scVI | Batch effect correction | Data integration across studies | R/Python packages [23] |

| Seurat/Scanpy | Single-cell analysis workflows | Data preprocessing, normalization, and visualization | R/Python packages [22] [23] |

| ARCHS4/Recount3 | Reprocessed GEO/SRA data | Access to standardized bulk and single-cell RNA-seq | Web resource/R package [22] |

| Cell Annotation Platform (CAP) | Collaborative cell type annotation | Consensus cell labeling for training data | HCA web portal [19] |

Implications for Tokenization Strategies in Single-Cell Research

The choice of data source directly influences tokenization effectiveness in several critical dimensions:

Metadata Tokenization: Highly standardized repositories like CELLxGENE enable consistent tokenization of biological variables through ontology-term-based representations, while heterogeneous sources require extensive normalization. The 11 required fields in CELLxGENE's minimal schema provide a foundation for structured biological tokenization [16].

Gene Expression Tokenization: The universal requirement for raw counts across CELLxGENE datasets enables proper normalization and comparison, creating consistent numerical tokenization streams. Platforms accepting only processed data introduce normalization artifacts that complicate cross-dataset token alignment [18] [16].

Batch Effect Management: Tokenization strategies must account for technical variability. HCA's Tier 1 metadata specifically identifies batch effect sources, enabling targeted normalization during token preprocessing [19]. CELLxGENE's optional batch condition fields serve similar functions [18].

Cross-Modality Tokenization: Emerging support for multi-modal assays (10x multiome, CITE-seq) in CELLxGENE creates opportunities for aligned tokenization across measurement types, enabling multimodal pretraining approaches [18].

Scalability Considerations: With CELLxGENE hosting over 93 million unique cells, tokenization pipelines must process corpora far larger than a single machine's memory, relying on distributed processing and incremental loading patterns enabled by tools like the Census API [16].
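The incremental loading pattern can be sketched with a plain generator that never holds more than one batch in memory. This is a generic illustration, not the Census API. The helper names and the simulated cell stream are hypothetical stand-ins for a remote data source.

```python
from itertools import islice

def iter_batches(cells, batch_size):
    """Yield lists of at most `batch_size` items from any iterable, lazily."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(cells)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch

def simulated_cell_stream(n_cells):
    """Hypothetical stand-in for a remote source; yields one toy cell at a time."""
    for i in range(n_cells):
        yield {"cell_id": i, "counts": {"GENE_A": i, "GENE_B": 1}}

# Seven cells streamed in batches of three: two full batches and one remainder.
batch_sizes = [len(b) for b in iter_batches(simulated_cell_stream(7), batch_size=3)]
```

Because both functions are generators, tokenization can be applied batch by batch while later cells are still unread.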

By aligning tokenization strategies with the standardization frameworks and data models of these major repositories, researchers can develop more robust and biologically meaningful pretraining approaches that effectively leverage the expanding universe of single-cell data.

The distributional hypothesis, a cornerstone of computational linguistics, posits that the meaning of a word can be understood by analyzing the company it keeps within linguistic contexts. This principle, famously summarized as "you shall know a word by the company it keeps," has revolutionized natural language processing (NLP) by enabling machines to learn semantic relationships from large text corpora without explicit supervision [24] [25]. Modern transformer-based architectures and large language models (LLMs) have operationalized this hypothesis through word embeddings and contextual representations, fundamentally changing how computers process human language.

In parallel, molecular biology faces a remarkably similar conceptual challenge: understanding gene function across diverse biological contexts. Genes exhibit pleiotropy, where a single gene can perform multiple seemingly unrelated functions depending on cellular context, tissue environment, spatial positioning, and temporal state [24]. This biological complexity mirrors the polysemy of words in language, where a single word form can have multiple meanings based on sentence context. The central proposition of this whitepaper is that the distributional hypothesis, when applied to single-cell omics data through sophisticated tokenization strategies, offers a transformative framework for modeling gene function as a dynamic, context-dependent property rather than a fixed annotation.

The Distributional Hypothesis: From Linguistics to Biological Systems

Historical Foundations and Modern Implementations in NLP

The distributional hypothesis originated in linguistic theory, particularly through the work of Zellig Harris and John Rupert Firth, who argued that semantic similarity could be quantified through distributional similarity in language data [25]. This theoretical foundation was technologically realized decades later through advances in computational power, accumulation of digital text repositories, and new machine learning approaches. Early implementations included word embedding models like Word2Vec and GloVe, which represented words as vectors in a high-dimensional semantic space based on their co-occurrence patterns [24].

The advent of transformer architectures marked a revolutionary advancement, employing attention mechanisms to create contextualized word representations that dynamically adapt to specific sentence contexts [24]. These models learn semantic representations through self-supervised pretraining objectives, such as masked language modeling, where the model learns to predict missing words based on their surrounding context. This approach has proven extraordinarily successful in capturing nuanced semantic relationships and powering modern NLP applications.

Structural Correspondences Between Language and Biology

The translation of distributional principles from linguistics to biology rests on identifiable structural correspondences between these domains:

- Words and Genes: Just as words are the fundamental units of language, protein-coding genes represent functional units in biology. Both can have multiple meanings/functions depending on context.

- Sentences and Cells: Sentences provide contextual frames that determine word meaning, analogous to how individual cells provide biological contexts that determine gene function.

- Documents and Tissues/Organisms: Larger corpora represent broader contextual frames, similar to how tissues or entire organisms represent higher-order biological contexts.

- Semantic Space and Functional Space: The high-dimensional vector spaces that capture semantic relationships between words correspond to spaces capturing functional relationships between genes across cellular contexts [24].

This structural alignment suggests that similar computational approaches may successfully capture biological principles, particularly the context-dependent nature of gene function.
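As a toy illustration of the gene-level analogy, the sketch below represents each gene by its expression across cells (its "contexts") and compares genes by cosine similarity. The expression values are invented, and ALB is added here only as a contrasting gene. This is a static co-expression analogue of early word embeddings, not a contextual model.

```python
import math

def gene_vectors(cells, genes):
    """Represent each gene by its expression across cells (its 'contexts')."""
    return {g: [cell.get(g, 0.0) for cell in cells] for g in genes}

def cosine(u, v):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zero."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

# Toy cells with invented counts: two cytotoxic-like cells, two hepatocyte-like cells.
cells = [
    {"GZMB": 5, "PRF1": 4},
    {"GZMB": 6, "PRF1": 5},
    {"ALB": 9},
    {"ALB": 7},
]
vectors = gene_vectors(cells, ["GZMB", "PRF1", "ALB"])

similar = cosine(vectors["GZMB"], vectors["PRF1"])   # genes sharing contexts
dissimilar = cosine(vectors["GZMB"], vectors["ALB"])  # genes in disjoint contexts
```

Genes that appear in the same cells end up with nearly parallel vectors, while genes that never co-occur score zero. This is the intuition the distributional hypothesis carries over from words to genes.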

Single-Cell Omics: The Technological Foundation for a Biological Distributional Hypothesis

Technological Advances Enabling High-Resolution Cellular Profiling

Single-cell RNA sequencing (scRNA-seq) and related omics technologies have revolutionized biological research by enabling the characterization of individual cells rather than population averages. These technologies reveal the cellular heterogeneity that underlies tissue function, development, and disease pathogenesis [26] [27]. Several technological approaches have been developed for single-cell isolation and analysis:

- Droplet-Based Methods: Technologies such as 10X Genomics Chromium and Drop-seq use microfluidics to encapsulate individual cells in droplets with barcoded beads, enabling high-throughput analysis of thousands to millions of cells [26] [28].

- Plate-Based Methods: Approaches like CEL-seq2, MARS-seq, and SMART-seq utilize cell sorting or microwell plates to isolate individual cells, often providing greater sequencing depth per cell [26].

- Combinatorial Indexing: Methods such as SPLiT-seq use combinatorial barcoding to label cells in situ without physical isolation, enabling massive scalability [26].

These technological advances have produced increasingly large-scale single-cell datasets, with repositories like CZ CELLxGENE now providing access to over 50 million unique cells across diverse tissues and conditions [1] [17].

From Bulk to Single-Cell Resolution: Capturing Biological Context

Traditional bulk sequencing approaches average signals across heterogeneous cell populations, obscuring important cellular nuances and context-dependent gene functions [26] [27]. Single-cell technologies overcome this limitation by capturing gene expression patterns in individual cells, thereby preserving the biological context essential for applying distributional principles. The molecular and biochemical configuration of a cell (including its cell type, developmental state, spatial position, environmental exposures, and disease status) constitutes the biological equivalent of "sentence context" that determines gene function [24].

Single-cell multiomics technologies further enhance this contextual understanding by simultaneously measuring multiple molecular layers within the same cell, such as combining transcriptomic, epigenomic, and proteomic measurements [26] [27]. This multi-modal approach provides a more comprehensive view of cellular state and regulatory mechanisms, creating richer contextual representations for understanding gene function.

Tokenization Strategies for Single-Cell Data: Operationalizing the Distributional Hypothesis

Foundational Concepts and Challenges

Tokenization represents the process of converting raw biological data into discrete units (tokens) that can be processed by computational models. For single-cell data, this presents unique challenges compared to NLP:

- Non-Sequential Nature: Unlike words in a sentence, genes in a cell have no inherent ordering, so transformer-based models need an ordering imposed on them (for example, by expression rank) [1].

- High-Dimensionality: Single-cell datasets typically measure thousands to tens of thousands of genes per cell, creating computational challenges for model training.

- Sparsity: Single-cell expression matrices are highly sparse, with many genes showing zero counts in individual cells due to biological and technical factors.

- Technical Noise: Batch effects, sampling noise, and other technical artifacts can obscure biological signals and must be addressed during data preprocessing [1].

Current Tokenization Approaches for Single-Cell Foundation Models

Several tokenization strategies have emerged in developing single-cell foundation models (scFMs), each with distinct advantages and limitations:

Table 1: Tokenization Strategies for Single-Cell Foundation Models

| Strategy | Mechanism | Advantages | Limitations | Representative Models |

|---|---|---|---|---|

| Expression Ranking | Genes are ordered by expression level within each cell | Deterministic; preserves most highly expressed genes | Arbitrary ordering; may lose lowly expressed signals | Geneformer [1] |

| Expression Binning | Genes are partitioned into bins based on expression values | Reduces dimensionality; captures expression ranges | Coarse-grained; loses precise expression values | Various scFMs [1] |

| Normalized Counts | Uses normalized expression values without explicit ordering | Preserves continuous expression information | Requires specialized positional encoding | scBERT [1] |

| Multi-Modal Tokens | Incorporates multiple omics measurements as separate tokens | Enables integration of diverse data types; richer context | Increased complexity; data integration challenges | Multi-modal scFMs [1] |
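The first two strategies in the table can be illustrated with a minimal sketch. It assumes a cell is a plain mapping from gene name to count. The function names, the tie-breaking rule, and the equal-width bin edges are choices made here for illustration. Published models differ in details such as normalization before ranking and how bin edges are chosen, so this is not any specific model's scheme.

```python
import math

def rank_tokens(cell, max_len=None):
    """Order expressed genes by descending expression; gene names are the tokens."""
    expressed = [(g, v) for g, v in cell.items() if v > 0]
    # Sort by value (descending), then by name so that ties are deterministic.
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))
    genes = [g for g, _ in expressed]
    return genes[:max_len] if max_len else genes

def bin_tokens(cell, gene_order, n_bins=5):
    """Pair each gene, in a fixed order, with a discrete expression bin.

    Bin 0 is reserved for zero counts. Non-zero values fall into equal-width
    bins 1..n_bins-1, scaled to the cell's own maximum value.
    """
    max_value = max(cell.values(), default=0)
    tokens = []
    for gene in gene_order:
        value = cell.get(gene, 0)
        if value <= 0 or max_value <= 0:
            bin_id = 0
        else:
            bin_id = math.ceil(value / max_value * (n_bins - 1))
        tokens.append((gene, bin_id))
    return tokens

# Toy cell with invented counts.
cell = {"CD3E": 10, "GZMB": 5, "PRF1": 1, "ALB": 0}
ranked = rank_tokens(cell)
binned = bin_tokens(cell, gene_order=["ALB", "CD3E", "GZMB", "PRF1"])
```

Ranking drops unexpressed genes and encodes magnitude only through position, whereas binning keeps every gene in a fixed order and encodes magnitude as a coarse level. The trade-offs listed in the table follow directly from that difference.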

Incorporating Biological Context Through Specialized Tokens

Beyond basic gene tokenization, effective scFMs incorporate additional tokens to represent biological context:

- Cell Identity Tokens: Special tokens prepended to represent cell-level metadata, such as cell type, tissue origin, or donor information [1] [27].

- Modality Indicators: Tokens that indicate the measurement type (e.g., RNA, ATAC, protein) in multi-omics approaches [1].

- Biological Context Tokens: Representations of pathway membership, gene ontology terms, or chromosomal location to provide additional biological priors [1].

- Batch Correction Tokens: Special tokens that encode batch information to help models distinguish technical artifacts from biological signals [1].

These contextual tokens enable models to learn the distributional patterns of gene function across the rich tapestry of biological contexts captured in single-cell atlases.
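A minimal sketch of how such context tokens might be combined with gene tokens is shown below. The token spellings (such as `<cls>` and `<batch:...>`), the prefix order, and the helper names are assumptions made for illustration. Individual models define their own special tokens and vocabularies.

```python
def build_sequence(gene_tokens, cell_type=None, modality="RNA", batch=None):
    """Prepend hypothetical context tokens to a list of gene tokens."""
    prefix = ["<cls>", f"<modality:{modality}>"]
    if cell_type is not None:
        prefix.append(f"<cell_type:{cell_type}>")
    if batch is not None:
        prefix.append(f"<batch:{batch}>")
    return prefix + list(gene_tokens)

def encode(tokens, vocab):
    """Map tokens to integer IDs, adding unseen tokens to `vocab` in place."""
    return [vocab.setdefault(token, len(vocab)) for token in tokens]

vocab = {}
annotated = build_sequence(["CD3E", "GZMB"], cell_type="T cell", batch="donor1")
annotated_ids = encode(annotated, vocab)

# A second cell without metadata reuses the IDs already assigned.
unannotated = build_sequence(["GZMB"])
unannotated_ids = encode(unannotated, vocab)
```

Context tokens share one vocabulary with gene tokens, so the model's attention layers can relate a gene to the cell type or batch it was observed in.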

Experimental Framework and Methodological Considerations

Data Preprocessing and Quality Control

Robust preprocessing pipelines are essential for generating high-quality single-cell data for foundation model training:

Diagram 1: Single-Cell Data Preprocessing Workflow

Key quality control steps include [28]:

- Cell Quality Filtering: Removal of cells with low unique gene counts, high mitochondrial content, or other indicators of poor cell quality.

- Gene Filtering: Exclusion of genes detected in very few cells, which may represent technical noise.

- Normalization: Correction for sequencing depth variations between cells using methods like log(CP10K) or SCTransform.

- Batch Effect Correction: Application of integration methods to remove technical variations while preserving biological signals.
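The cell filtering and normalization steps can be sketched in a few lines. The thresholds below are toy values chosen to suit the tiny example, not recommendations. The sketch assumes human gene symbols, where mitochondrial genes carry the "MT-" prefix, and reads log(CP10K) as log1p of counts per 10,000. Gene filtering and batch correction are omitted.

```python
import math

def passes_qc(cell, min_genes=3, max_mito_fraction=0.2):
    """Keep cells with enough detected genes and a low mitochondrial fraction."""
    total = sum(cell.values())
    if total == 0:
        return False
    n_genes = sum(1 for v in cell.values() if v > 0)
    mito = sum(v for g, v in cell.items() if g.startswith("MT-"))
    return n_genes >= min_genes and mito / total <= max_mito_fraction

def log_cp10k(cell):
    """Scale counts to 10,000 per cell, then apply log(1 + x)."""
    total = sum(cell.values())
    return {g: math.log1p(v / total * 1e4) for g, v in cell.items()}

# Toy cells with invented counts.
cells = {
    "good": {"CD3E": 4, "GZMB": 3, "PRF1": 2, "MT-CO1": 1},
    "dying": {"CD3E": 1, "GZMB": 1, "MT-CO1": 8, "MT-ND1": 5},
    "empty": {"CD3E": 1},
}
kept = [name for name, cell in cells.items() if passes_qc(cell)]
normalized = {name: log_cp10k(cells[name]) for name in kept}
```

Only the first cell survives: the second has a mitochondrial fraction above the threshold, and the third has too few detected genes.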

Model Architecture and Pretraining Strategies

Current scFMs predominantly utilize transformer architectures, adapted for single-cell data:

Diagram 2: Single-Cell Foundation Model Architecture

Common pretraining strategies include [1]:

- Masked Gene Modeling: Randomly masking a portion of gene tokens and training the model to reconstruct them based on context, analogous to masked language modeling in NLP.

- Next Gene Prediction: Autoregressive prediction of subsequent genes in a sequence, similar to GPT-style training.

- Contrastive Learning: Training models to identify similar versus dissimilar cellular contexts.
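Masked gene modeling, the first strategy above, starts from a data preparation step that can be sketched as follows. The 15% masking rate is borrowed from common NLP practice and is an assumption here, as are the `<mask>` spelling and the use of None as the ignore label. The model and loss are not shown.

```python
import random

MASK = "<mask>"
IGNORE = None  # label for positions that do not contribute to the loss

def mask_tokens(tokens, mask_fraction=0.15, rng=None):
    """Return (inputs, labels) with a random subset of positions masked.

    Masked positions show MASK in `inputs` and the original token in
    `labels`; every other position keeps its token and is labeled IGNORE.
    """
    if not tokens:
        return [], []
    rng = rng or random.Random()
    n_mask = max(1, round(len(tokens) * mask_fraction))
    positions = set(rng.sample(range(len(tokens)), n_mask))
    inputs = [MASK if i in positions else t for i, t in enumerate(tokens)]
    labels = [t if i in positions else IGNORE for i, t in enumerate(tokens)]
    return inputs, labels

# Twenty hypothetical gene tokens; a seeded generator keeps the example repeatable.
tokens = [f"GENE_{i}" for i in range(20)]
inputs, labels = mask_tokens(tokens, rng=random.Random(0))
```

During training, the model sees `inputs` and is scored only on the positions where `labels` holds a gene, so it must infer the hidden genes from the rest of the cell.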

Key Research Reagents and Computational Tools

Table 2: Essential Research Reagents and Tools for Single-Cell Distributional Analysis

| Category | Tool/Resource | Primary Function | Application Context |

|---|---|---|---|

| Data Platforms | CZ CELLxGENE [17] | Curated single-cell data repository | Data access, standardization, and exploration |

| Analysis Suites | Seurat, Scanpy [29] [26] | Single-cell data analysis toolkit | Data preprocessing, visualization, and basic analysis |

| Visualization Tools | scViewer [29] | Interactive visualization of gene expression | Exploratory data analysis and hypothesis generation |

| Foundation Models | scGPT, Geneformer [1] | Pretrained transformer models for single-cell data | Transfer learning for various downstream tasks |

| Benchmarking | CZ CELLxGENE Census [17] | Standardized data slices for model evaluation | Model validation and comparative performance assessment |

Downstream Applications and Biological Insights

Predicting Gene Function and Functional Pleiotropy

The distributional approach enables probabilistic prediction of gene function across diverse cellular contexts, moving beyond the limitations of static ontological annotations. By learning embeddings that capture how gene function varies across contexts, scFMs can [24]:

- Predict novel functions for poorly characterized genes based on their distributional similarity to well-characterized genes.

- Identify context-specific functions of pleiotropic genes that perform different roles in different cell types.

- Discover regulatory relationships and functional modules that vary across cellular contexts.

Cell Type Annotation and Novel Cell State Discovery

scFMs pretrained on large cellular atlases can be fine-tuned for cell type annotation, achieving state-of-the-art performance by leveraging learned representations of cellular identity [1]. These models can:

- Automatically annotate cell types in new datasets without manual curation.

- Identify novel cell states or transitional states that don't match existing classifications.

- Reveal continuous trajectories of cellular differentiation or activation.

Disease Mechanism Elucidation and Drug Target Identification

By capturing the distributional patterns of gene expression across healthy and diseased tissues, scFMs provide powerful tools for [1] [27]:

- Mapping disease-associated genetic variants from GWAS to specific cell types and contexts.

- Identifying cell-type-specific expression quantitative trait loci (eQTLs).

- Predicting candidate drug targets based on their restricted expression to specific pathological cell populations.

- Understanding drug mechanism of action by modeling how treatments shift cellular states.

Multi-Modal Integration and Cross-Species Analysis

The distributional framework naturally extends to multi-modal data integration, enabling models to learn joint representations that connect different molecular layers [26] [1]. This facilitates:

- Prediction of epigenetic regulation from transcriptomic data.

- Cross-species analysis of cellular function and conservation.

- Integration of spatial transcriptomics data to incorporate geographical context.

Future Directions and Concluding Perspectives

The application of distributional semantics to single-cell biology represents a paradigm shift in how we conceptualize and model gene function. This approach acknowledges that gene function emerges from context—that cellular environments shape molecular activity in much the same way that sentence context shapes word meaning. As single-cell technologies continue to evolve, generating increasingly comprehensive maps of cellular states across tissues, organisms, and conditions, distributional approaches will become increasingly powerful for deciphering the complex regulatory logic of biological systems.

Key future directions include:

- Development of more sophisticated tokenization strategies that better capture biological hierarchy and organization.

- Creation of unified foundation models that span multiple species, tissues, and experimental modalities.

- Improved methods for interpreting model predictions and extracting biologically meaningful insights.

- Integration of temporal dynamics to model how gene functions evolve during processes like development and disease progression.

The convergence of single-cell genomics and distributional approaches represents more than just a technical advancement—it offers a fundamentally new way of understanding biological function as a dynamic, context-dependent property that can be learned from data rather than predefined by annotation. As these methods mature, they promise to accelerate therapeutic development and deepen our understanding of biological systems across scales.

From Expression Matrices to Model Inputs: Methodological Approaches to Single-Cell Tokenization

In single-cell biology, the surge of high-throughput sequencing technologies has necessitated computational frameworks capable of interpreting complex, high-dimensional data. Gene-level tokenization serves as the foundational step in this process, translating raw gene expression profiles from single-cell RNA sequencing (scRNA-seq) into a structured, discrete format that machine learning models, particularly transformer-based architectures, can process. This translation is paramount for constructing single-cell foundation models (scFMs) that learn universal patterns from vast cell atlases [1]. The process treats a cell's transcriptome as a "sentence," where individual genes or features act as "words," thereby enabling the application of sophisticated natural language processing (NLP) techniques to biological data [1] [30]. This guide details the core methodologies, experimental protocols, and practical implementations of gene-level tokenization, framing it as a critical tokenization strategy for advancing single-cell research and drug discovery.

The Principles of Tokenization in Single-Cell Data

Tokenization converts raw, continuous gene expression values into a sequence of discrete units, or tokens. This is a prerequisite because transformer-based models operate on sequences of tokens drawn from a fixed vocabulary rather than on a raw expression matrix. The primary challenge lies in the non-sequential nature of genomic data; unlike words in a sentence, genes have no inherent order [1]. Furthermore, scRNA-seq data is characterized by high dimensionality, sparsity due to dropout events (where a gene is undetected despite being expressed), and technical noise [13] [4]. Tokenization strategies must overcome these challenges to create meaningful, information-dense representations that preserve biological signal.

The concept is motivated by the distributional hypothesis in linguistics, which suggests that words occurring in similar contexts have similar meanings. In single-cell biology, this translates to an assumption that cells with similar expression profiles share similar biological functions or states [3]. By applying self-supervised learning objectives, such as masked language modeling, on tokenized data, scFMs can learn this contextual representation of genes and cells, capturing fundamental biological principles without explicit labeling [1] [30].
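The masked-modeling objective mentioned above can be illustrated with a minimal sketch. It assumes a cell has already been converted to a list of integer gene tokens; `MASK_ID`, `mask_tokens`, and the 15% masking rate are hypothetical choices for illustration, not settings taken from any cited model.

```python
import random
from typing import List, Optional, Tuple

MASK_ID = -1  # hypothetical reserved ID standing in for a [MASK] token


def mask_tokens(
    tokens: List[int], mask_prob: float, rng: random.Random
) -> Tuple[List[int], List[Optional[int]]]:
    """Hide a random subset of tokens and keep the originals as prediction targets."""
    inputs: List[int] = []
    targets: List[Optional[int]] = []
    for tok in tokens:
        if rng.random() < mask_prob:
            inputs.append(MASK_ID)   # the model sees only the mask
            targets.append(tok)      # and is trained to recover this token
        else:
            inputs.append(tok)
            targets.append(None)     # unmasked positions carry no loss
    return inputs, targets


cell_tokens = [17, 4, 93, 8, 51, 22, 70, 5]
masked_inputs, masked_targets = mask_tokens(cell_tokens, 0.15, random.Random(0))
```

Because the targets come from the data itself, no cell type labels are needed, which is what allows pretraining on large unannotated atlases.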

Core Methodologies for Gene-Level Tokenization

Several methodologies have been developed to convert gene expression values into tokens. The following table summarizes the predominant approaches used in current single-cell large language models (scLLMs).

Table 1: Key Methodologies for Gene-Level Tokenization

| Method | Core Principle | Gene Ordering | Expression Value Handling | Example Models |
|---|---|---|---|---|
| Rank-based Tokenization | Genes are ranked by expression level within each cell to create a sequence. | Descending order of expression. | Implicitly encoded via position. | Geneformer [30] |
| Binning-based Tokenization | Continuous expression values are discretized into predefined bins. | Fixed, canonical gene order or expression-based ranking. | Each bin corresponds to a discrete token. | scBERT, scGPT [30] |
| Value-Embedding Integration | Gene identity and its continuous expression value are separately embedded and summed. | Fixed, canonical gene order. | A separate embedding layer processes the normalized value. | scGPT [1] [30] |
| Scale-Free Tokenization | The high-dimensional expression vector is segmented into sub-vectors using a fixed window. | Sequential based on original gene order. | Preserved and processed locally by 1D-convolutions. | scSFUT [4] |
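To make the first row of the table concrete, the following is a minimal sketch of rank-based tokenization. The function name `rank_tokenize`, the toy vocabulary, and the `max_len` cutoff are assumptions for illustration; published models may apply additional normalization before ranking, and that step is omitted here.

```python
from typing import Dict, List


def rank_tokenize(
    counts: Dict[str, float], vocab: Dict[str, int], max_len: int = 2048
) -> List[int]:
    """Return gene IDs ordered by descending expression within one cell."""
    # Keep only expressed genes that the (hypothetical) vocabulary knows about.
    expressed = [
        (gene, value)
        for gene, value in counts.items()
        if value > 0 and gene in vocab
    ]
    # Sort by descending expression; ties fall back to vocabulary ID
    # so the resulting sequence is deterministic.
    expressed.sort(key=lambda item: (-item[1], vocab[item[0]]))
    return [vocab[gene] for gene, _ in expressed[:max_len]]


vocab = {"GAPDH": 0, "ACTB": 1, "CD3E": 2, "MS4A1": 3}
cell = {"GAPDH": 12.0, "ACTB": 30.0, "CD3E": 0.0, "MS4A1": 5.0, "UNKNOWN1": 9.0}
tokens = rank_tokenize(cell, vocab)
print(tokens)  # [1, 0, 3]
```

The expression value never appears in the output: it is carried only by position, which is what "implicitly encoded via position" means in the table.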

Detailed Workflow of Binning-Based Tokenization

Binning is a widely adopted tokenization strategy. The following diagram illustrates the logical workflow and data transformation in this process.

The binning process involves several key steps, which also constitute a standard protocol for data preparation:

- Input: A raw gene expression vector for a single cell, typically containing integer counts for thousands of genes.

- Normalization: The expression values are normalized to account for variations in sequencing depth between cells. A common approach is to normalize the total counts per cell to a standard value (e.g., 10,000) and then apply a logarithmic transformation [30] [4].

- Discretization (Binning): The normalized, continuous expression values are mapped into a finite number of discrete bins. For a gene j in cell i with normalized expression value X_{i,j}:

  - x_j^(i) = 0 if X_{i,j} = 0 (handling dropout events).

  - x_j^(i) = k if X_{i,j} > 0 and X_{i,j} falls into the k-th bin, where k ranges from 1 to the total number of bins [30].

  The bin boundaries can be defined using percentiles of the non-zero expression distribution or fixed intervals.

- Gene Identifier Mapping: Each gene is mapped to a unique integer identifier from a predefined vocabulary. This vocabulary is curated during model pretraining and defines the set of genes the model understands.

- Token Construction: A token for gene j in cell i is constructed as a combination of its gene identifier id(g_j) and its expression bin x_j^(i).

- Embedding: The final token is passed through two embedding layers:

  - An embedding layer emb_g that converts the gene ID into a vector.

  - An embedding layer emb_v that converts the expression bin into a vector.

  These two vectors are summed to create the initial input representation for the model: h_j^(i) = emb_g(id(g_j)) + emb_v(x_j^(i)) [30].
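The protocol above can be sketched end to end in a few lines. This is an illustrative sketch under stated assumptions, not the implementation of any cited model: it uses fixed-interval bins (one of the two options named above), random lookup tables in place of trained embedding layers, and hypothetical helper names (`normalize`, `to_bins`, `make_table`).

```python
import math
import random
from typing import List, Tuple


def normalize(counts: List[int], target_sum: float = 10_000.0) -> List[float]:
    """Scale counts to a fixed total per cell, then apply log(1 + x)."""
    total = sum(counts)
    if total == 0:
        return [0.0] * len(counts)
    return [math.log1p(c * target_sum / total) for c in counts]


def to_bins(values: List[float], n_bins: int) -> List[int]:
    """Bin 0 is reserved for zeros; non-zero values map to bins 1..n_bins."""
    top = max(values)
    if top == 0:
        return [0] * len(values)
    width = top / n_bins  # fixed-interval bins over (0, max]
    return [
        0 if v == 0 else min(n_bins, max(1, math.ceil(v / width)))
        for v in values
    ]


def make_table(rows: int, dim: int, rng: random.Random) -> List[List[float]]:
    """Stand-in for a trainable embedding layer: a random lookup table."""
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(rows)]


gene_ids = [0, 1, 2, 3]      # id(g_j) from a hypothetical vocabulary
raw_counts = [0, 3, 12, 85]  # integer counts for one cell
n_bins, dim = 5, 4

bins = to_bins(normalize(raw_counts), n_bins)             # x_j^(i)
tokens: List[Tuple[int, int]] = list(zip(gene_ids, bins))  # (gene ID, bin)

rng = random.Random(0)
emb_g = make_table(len(gene_ids), dim, rng)
emb_v = make_table(n_bins + 1, dim, rng)  # +1 row for the zero bin

# h_j^(i) = emb_g(id(g_j)) + emb_v(x_j^(i)), one vector per gene
h = [[g + v for g, v in zip(emb_g[gid], emb_v[b])] for gid, b in tokens]
```

Reserving bin 0 for zeros keeps undetected genes distinguishable from weakly expressed ones, which matters given the sparsity of scRNA-seq data.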

The Scientist's Toolkit: Essential Reagents for Tokenization

The following table lists key computational "reagents" and tools required for implementing gene-level tokenization.

Table 2: Research Reagent Solutions for Tokenization Workflows

| Item / Tool | Function / Description | Application in Tokenization |
|---|---|---|
| scanpy [4] | A Python toolkit for analyzing single-cell gene expression data. | Used for quality control, normalization (e.g., log-transformation), and filtering of raw count data before tokenization. |
| Predefined Gene Vocabulary | A curated list of gene identifiers (e.g., ENSEMBL IDs) that the model can recognize. | Maps gene names to unique integer IDs. Genes not in the vocabulary are typically masked or ignored. |
| Expression Binning Algorithm | Code logic to discretize continuous expression values into k levels. | Converts normalized expression values (e.g., log(CPM+1)) into discrete categories, creating the value part of the token. |
| Embedding Layers (emb_g, emb_v) | Trainable neural network layers that map discrete IDs/values to dense vectors. | Transform the token's gene ID and expression bin into a numerical representation the transformer model can process. |

Advanced Tokenization Strategies and Experimental Comparisons

Dynamic vs. Static Tokenization

A critical consideration is whether the gene representations derived from tokens are static or dynamic. Static embeddings, such as those produced by early models like word2vec, assign a fixed vector to each gene regardless of context. This can be problematic in biology, as a gene may play different roles in different cellular contexts, much as a polysemous word carries different meanings in different sentences [3]. Modern transformer-based scFMs use dynamic embeddings enabled by the self-attention mechanism. In this approach, the representation of a gene token is adjusted based on all other genes expressed in the same cell, so the same gene can receive a different representation in each cell [3].
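The difference can be seen in a toy example. The sketch below applies a single self-attention pass with identity query, key, and value projections (no learned weights) to hand-picked two-dimensional vectors; the gene names and vectors are placeholders chosen for readability, not biological measurements.

```python
import math
from typing import Dict, List

Vector = List[float]

# Hypothetical static embeddings: one fixed vector per gene.
STATIC: Dict[str, Vector] = {
    "TGFB1": [1.0, 0.0],
    "CD3E": [0.0, 1.0],
    "COL1A1": [1.0, 1.0],
}


def contextualize(genes: List[str]) -> Dict[str, Vector]:
    """Scaled dot-product self-attention over the genes of one cell."""
    x = [STATIC[g] for g in genes]
    dim = len(x[0])
    out: Dict[str, Vector] = {}
    for gene, query in zip(genes, x):
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in x
        ]
        peak = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - peak) for s in scores]
        norm = sum(weights)
        out[gene] = [
            sum(w * v[d] for w, v in zip(weights, x)) / norm
            for d in range(dim)
        ]
    return out


cell_one = contextualize(["TGFB1", "CD3E"])
cell_two = contextualize(["TGFB1", "COL1A1"])
print(cell_one["TGFB1"], cell_two["TGFB1"])
```

The static vector for `TGFB1` is identical in both cells, yet its contextualized output differs because the co-expressed genes differ.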

Experimental Protocol for Model Comparison

When benchmarking different tokenization strategies or scFMs, a standardized experimental protocol is essential. The following workflow outlines a typical benchmarking study, as used in several cited papers.

- Data Curation: Collect a large and diverse set of scRNA-seq datasets from public repositories like CELLxGENE [1] [30] or PanglaoDB [30]. For the specific benchmark, use multiple annotated datasets from different tissues or species (e.g., human and mouse) [4].

- Data Preprocessing: Apply a consistent preprocessing pipeline. This includes quality control (filtering cells with too few genes and genes expressed in too few cells), normalization (e.g., log(CPM+1)), and, for some models, highly variable gene selection (though models like scSFUT avoid this step) [4].

- Data Partitioning: Split the data into training and test sets. A rigorous approach involves a "hold-out" strategy where specific cell types or entire studies are withheld from training to assess the model's ability to generalize to unseen data [4].

- Model Training & Fine-Tuning: Compare different models (e.g., scGPT, scBERT, scSFUT) on a downstream task like cell type annotation. Given that zero-shot performance of scLLMs can be limited, Parameter-Efficient Fine-Tuning (PEFT) methods like LoRA are often employed to adapt the foundation models to the specific benchmark data with minimal parameter updates [30].

- Evaluation: Use metrics such as classification accuracy and F1-score (especially important for imbalanced datasets) to evaluate performance. The results should be compared against baseline methods, including traditional autoencoder-based models and alignment-based techniques where applicable [31] [4].
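Two steps of this protocol, the study-level hold-out and the evaluation metrics, can be sketched as follows. The helper names and toy labels are hypothetical; the macro F1 here averages over the labels present in the ground truth, which is one common convention and an assumption of this sketch.

```python
from typing import List, Sequence, Tuple


def holdout_by_study(
    studies: Sequence[str], held_out: str
) -> Tuple[List[int], List[int]]:
    """Return (train, test) cell indices with one entire study withheld."""
    train = [i for i, s in enumerate(studies) if s != held_out]
    test = [i for i, s in enumerate(studies) if s == held_out]
    return train, test


def accuracy(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    """Unweighted mean of per-class F1, so rare cell types count equally."""
    scores = []
    for label in sorted(set(y_true)):
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


train_idx, test_idx = holdout_by_study(["A", "A", "B", "B", "C"], held_out="C")

# A classifier that always predicts the majority cell type.
y_true = ["T cell", "T cell", "T cell", "T cell", "B cell"]
y_pred = ["T cell", "T cell", "T cell", "T cell", "T cell"]
acc = accuracy(y_true, y_pred)
f1 = macro_f1(y_true, y_pred)
```

In this toy case accuracy is 0.8 while macro F1 is about 0.44, which illustrates why F1 is preferred when cell type frequencies are imbalanced.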

Quantitative Performance Comparison

The table below synthesizes findings from benchmark studies comparing models that use different tokenization and architectural strategies.

Table 3: Comparative Performance of Models on Cell Type Annotation

| Model | Core Tokenization Strategy | Reported Performance (Qualitative) | Key Strengths |
|---|---|---|---|
| scSFUT [4] | Scale-free, segmentation with 1D-convolution. | Outperformed other models on cross-species benchmarks. | No need for gene selection; processes full gene vector; better generalization. |
| scGPT [30] [4] | Binning-based with value embedding. | Shows strong performance but requires fine-tuning; outperformed Geneformer in some studies. | Flexible framework; supports multi-omics integration. |
| Geneformer [30] | Rank-based tokenization. | Performance varies; outperformed scGPT in some studies but not others. | Captures strong gene-gene context relationships. |
| scBERT [4] | Binning-based tokenization. | Strong performance on human data. | Based on the established BERT architecture. |
| MMseqs2 [31] (Alignment-based) | Not applicable (sequence alignment). | High accuracy on sequences similar to reference database. | High accuracy for known sequences; does not require training. |

Gene-level tokenization is far more than a simple data preprocessing step; it is a fundamental strategy that bridges the gap between the complex, continuous world of biology and the discrete, structured world of deep learning. The choice of tokenization strategy—whether binning, ranking, or scale-free segmentation—directly influences a model's ability to capture the intricate patterns of gene regulation and cellular identity. As the field progresses, future developments in tokenization will likely focus on better handling of multi-omic data, improving computational efficiency for ever-larger datasets, and enhancing the biological interpretability of the token embeddings themselves. By providing a standardized yet flexible approach to converting expression values into discrete units, gene-level tokenization lays the groundwork for the next generation of virtual cell models, ultimately accelerating drug discovery and the development of personalized therapeutics.

In single-cell genomics, the analysis of transcriptomes involves interpreting complex, high-dimensional data where genes lack inherent sequential order. Expression-based ranking has emerged as a fundamental tokenization strategy that transforms this non-sequential data into deterministic gene sequences, enabling the application of advanced artificial intelligence models. This transformation is crucial because it allows researchers to apply transformer-based architectures—originally designed for sequential data like text—to single-cell biology, where it has opened new frontiers in classifying cell types, predicting cellular states, and understanding disease mechanisms [1].

Treating individual cells as "sentences" and their genes as "words" forms the core analogy that makes this approach powerful. By creating a structured, deterministic order from otherwise unordered gene expression data, researchers can leverage the pattern-recognition capabilities of large language models to extract meaningful biological insights from millions of single-cell transcriptomes [1] [32]. This technical guide explores the methodologies, applications, and practical implementations of expression-based ranking strategies, providing researchers with the foundational knowledge needed to advance single-cell research and drug development.

Core Methodologies for Expression-Based Ranking

Fundamental Ranking Approaches

Expression-based ranking strategies convert gene expression profiles into ordered sequences suitable for AI model processing. The table below summarizes the primary techniques employed in single-cell foundation models (scFMs).

Table 1: Expression-Based Ranking Strategies for Gene Sequence Creation

| Ranking Strategy | Core Methodology | Key Advantages | Model Examples |
|---|---|---|---|
| Expression Magnitude Ranking | Ranks genes from highest to lowest expression value within each cell [1]. | Simple, interpretable, preserves strongest signals [1]. | Geneformer [30] |
| Expression Binning | Partitions genes into bins based on expression values, then ranks by bin membership [1]. | Reduces noise from small expression variations [1]. | scBERT, scGPT [30] |
| Deterministic Arbitrary Sequencing | Uses normalized counts without complex ranking; relies on fixed gene order [1]. | Computationally efficient, simple implementation [1]. | Multiple scFMs [1] |
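The noise-reduction claim for expression binning can be illustrated by comparing it with plain magnitude ranking on two nearly identical cells. This is a sketch under assumptions: `bin_rank`, `magnitude_rank`, the integer gene IDs, and the bin width of 1.0 are hypothetical choices for illustration.

```python
import math
from typing import Dict, List


def magnitude_rank(values: Dict[int, float]) -> List[int]:
    """Order gene IDs by descending expression value."""
    return sorted(
        (gid for gid, v in values.items() if v > 0),
        key=lambda gid: (-values[gid], gid),
    )


def bin_rank(values: Dict[int, float], bin_width: float) -> List[int]:
    """Order gene IDs by descending bin index, then by gene ID within a bin."""
    binned = {
        gid: math.floor(v / bin_width) for gid, v in values.items() if v > 0
    }
    return sorted(binned, key=lambda gid: (-binned[gid], gid))


# Genes 10 and 11 swap places by a small amount between the two cells.
cell_a = {10: 4.1, 11: 4.3, 12: 1.2}
cell_b = {10: 4.3, 11: 4.1, 12: 1.2}

print(magnitude_rank(cell_a), magnitude_rank(cell_b))  # [11, 10, 12] [10, 11, 12]
print(bin_rank(cell_a, 1.0), bin_rank(cell_b, 1.0))    # [10, 11, 12] [10, 11, 12]
```

Magnitude ranking produces different sequences for the two cells, whereas bin-based ranking maps both to the same sequence because the small difference falls within a single bin. The cost is that genuine differences smaller than the bin width are also discarded.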

Advanced Tokenization Enhancements

Beyond basic ranking, several enhancement techniques improve the biological relevance of tokenized sequences: