Tokenization Strategies for Single-Cell RNA Sequencing Data in Foundation Models: A Comprehensive Guide

Abstract

Single-cell foundation models (scFMs) are transforming biomedical research by enabling large-scale analysis of cellular heterogeneity. Tokenization—the process of converting raw scRNA-seq data into model-processable units—is a critical yet challenging step that directly impacts model performance on tasks like cell type annotation, batch integration, and drug sensitivity prediction. This article provides a comprehensive overview of tokenization strategies for scRNA-seq data in scFMs, covering foundational concepts, methodological approaches, troubleshooting guidelines, and validation frameworks. Drawing from the latest research and benchmarking studies, we offer practical insights for researchers and drug development professionals seeking to implement scFMs effectively, highlighting how optimal tokenization strategies can enhance biological discovery and clinical applications.

Understanding Tokenization: The Bridge Between Single-Cell Biology and Foundation Models

Defining Tokenization in the Context of Single-Cell Genomics

Tokenization serves as the critical first step in processing single-cell RNA sequencing (scRNA-seq) data for analysis with foundation models (scFMs), bridging the gap between biological complexity and computational analysis. In natural language processing (NLP), tokenization converts raw text into discrete units (tokens) that models can process. Similarly, for single-cell genomics, tokenization transforms gene expression profiles from individual cells into structured sequences that transformer-based architectures can interpret [1]. This process enables researchers to apply advanced deep learning techniques to explore cellular heterogeneity and gene regulatory networks at unprecedented resolution [2] [1].

The fundamental challenge in single-cell data tokenization stems from the non-sequential nature of genomic data. Unlike words in a sentence, genes in a cell have no inherent ordering, requiring researchers to impose artificial sequences that preserve biological meaning while enabling computational efficiency [1]. This technical guide examines current tokenization strategies within the broader thesis that effective tokenization methodologies are paramount for advancing single-cell foundation models in research and therapeutic development.

Fundamental Concepts and Biological Background

Single-Cell RNA Sequencing Basics

Single-cell RNA sequencing (scRNA-seq) has revolutionized genomics by enabling researchers to measure gene expression at the resolution of individual cells, unlike traditional bulk RNA sequencing which only provides population averages [2]. This technology captures the fundamental unit of biological organization, revealing cellular heterogeneity within tissues that was previously obscured [3] [2]. The typical scRNA-seq workflow involves cell isolation, library preparation, sequencing, and computational analysis, generating complex datasets where each cell is represented by expression levels of thousands of genes [3].

From Bulk to Single-Cell Resolution

Bulk RNA sequencing averages expression across thousands to millions of cells, masking differences between individual cells. In contrast, scRNA-seq preserves cellular heterogeneity, allowing identification of rare cell populations, transitional states, and complex cellular hierarchies [2]. This resolution is particularly valuable for understanding tumor microenvironments, developmental biology, and immune system complexity, where cellular diversity drives functional outcomes [2].

The Data Structure of scRNA-seq

A typical scRNA-seq dataset consists of a gene-cell matrix with rows representing genes (features) and columns representing individual cells (observations) [3]. The values in this matrix represent molecular counts, which are notably sparse due to both biological and technical factors, including dropout events where genes are detected in some cells but not others despite being expressed [4]. This high-dimensional sparsity presents unique challenges for analysis and interpretation that tokenization strategies must address.

Tokenization Methodologies for scRNA-seq Data

Core Principle: Analogizing Biological Data to Language

In single-cell foundation models, tokenization rests on an analogy between genomics and natural language: each cell plays the role of a sentence (or short document), genes form the vocabulary, and the cell's expression profile determines which tokens appear and in what order [1]. This framework allows researchers to leverage advanced NLP architectures for biological discovery. As noted in a recent Nature review, "In these scFMs, individual cells are treated analogously to sentences, and genes or other genomic features along with their values are treated as words or tokens" [1].

Primary Tokenization Strategies

Table 1: Comparison of Primary Tokenization Strategies for scRNA-seq Data

| Strategy | Method Description | Advantages | Limitations |
|---|---|---|---|
| Expression Ranking | Genes are ordered by expression level within each cell to create a deterministic sequence [1] | Provides consistent ordering; captures most highly expressed genes | May overlook co-expression patterns of moderately expressed genes |
| Expression Binning | Continuous expression values are discretized into bins, with each bin representing a token category [1] [5] | Handles continuous nature of expression data; reduces dimensionality | May lose subtle expression differences; introduces arbitrary bin boundaries |
| Binary Tokenization | Genes are represented as present or absent based on detection thresholds [4] | Reduces technical noise; simplifies model input | Loses quantitative expression information |
| Hybrid Embedding | Combines gene identity embeddings with expression value embeddings [5] | Preserves both gene identity and expression level information | Increases model complexity and computational requirements |
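
The Hybrid Embedding row can be made concrete with a small sketch. It assumes that the gene-identity vector and the expression-bin vector are simply summed per token; the table sizes, bin edges, and random initial values below are hypothetical and chosen only for illustration, not taken from any specific model.

```python
import random

def make_table(n_rows, dim, seed):
    """Toy embedding table: n_rows vectors of length dim (random stand-ins for learned weights)."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(n_rows)]

def bin_index(value, edges):
    """Index of the first bin whose upper edge is >= value; the last bin otherwise."""
    for i, edge in enumerate(edges):
        if value <= edge:
            return i
    return len(edges)

def hybrid_embed(gene_ids, values, gene_table, bin_table, edges):
    """Sum a gene-identity vector and an expression-bin vector for each token."""
    out = []
    for g, v in zip(gene_ids, values):
        b = bin_index(v, edges)
        out.append([ge + be for ge, be in zip(gene_table[g], bin_table[b])])
    return out

DIM = 4
EDGES = [0.0, 1.0, 2.0]                    # hypothetical bin edges -> 4 bins
gene_table = make_table(5, DIM, seed=0)    # toy vocabulary of 5 genes
bin_table = make_table(len(EDGES) + 1, DIM, seed=1)

cell_gene_ids = [3, 0, 4]                  # genes present in one toy cell
cell_values = [2.7, 0.0, 1.3]              # their normalized expression values
embedded = hybrid_embed(cell_gene_ids, cell_values, gene_table, bin_table, EDGES)
print(len(embedded), "tokens of dimension", len(embedded[0]))
```

In a trained model both tables would be learned parameters; the extra lookup and addition per token is the added cost that the Limitations column refers to.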

The Tokenization Workflow

The tokenization process follows a structured pipeline to convert raw gene expression data into model-ready tokens:

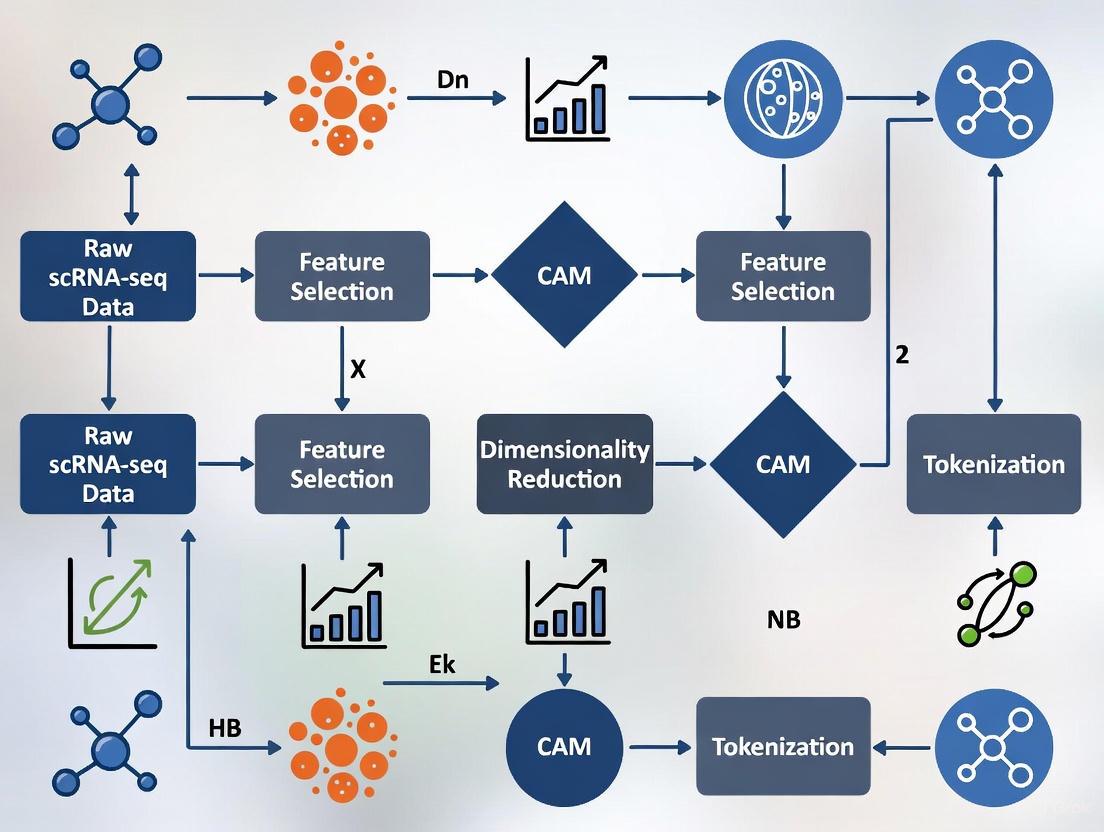

Figure 1: The sequential workflow for tokenizing scRNA-seq data, from raw counts to model input representation.

Advanced Tokenization Considerations

Incorporating Biological Prior Knowledge

Advanced tokenization approaches integrate biological context through gene metadata inclusion, such as chromosomal location, pathway membership, or protein-protein interaction data [1]. For example, some models prepend special tokens representing cell type or experimental conditions, enabling the model to learn context-dependent gene interactions [1] [5].

Multi-Modal Tokenization

With the rise of multi-omics technologies, tokenization strategies have expanded to incorporate diverse data types including chromatin accessibility (scATAC-seq), spatial coordinates, and protein abundance [1]. This requires modality-specific tokens that allow the model to distinguish between data types while learning integrated representations [1].

Integration with Single-Cell Foundation Model Architectures

Transformer Architectures for Single-Cell Data

Single-cell foundation models predominantly utilize transformer architectures, which employ self-attention mechanisms to weight the importance of different genes when making predictions [1] [4]. These architectures come in several variants:

- Encoder-only models (e.g., BERT-style): Use bidirectional attention to learn from all genes simultaneously, ideal for classification tasks like cell type annotation [1] [5]

- Decoder-only models (e.g., GPT-style): Employ masked self-attention that iteratively predicts genes conditioned on known values, suited for generation tasks [1]

- Encoder-decoder models: Combine both approaches for complex tasks requiring both understanding and generation [1]
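
The difference between the encoder-style and decoder-style variants above comes down largely to the attention mask. The sketch below builds both masks for a short token sequence, assuming a plain causal mask for the decoder case; individual scFMs may use modified masking schemes, so this is illustrative only.

```python
def bidirectional_mask(n):
    """Encoder-style mask: every position may attend to every other position."""
    return [[1] * n for _ in range(n)]

def causal_mask(n):
    """Decoder-style mask: position i may attend only to positions j <= i."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]

enc = bidirectional_mask(4)
dec = causal_mask(4)

for row in dec:
    print(row)
```

With the causal mask, the order imposed during tokenization decides which genes a given gene can "see", which is one reason the ordering strategy matters more for GPT-style models.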

Comprehensive scFM Architecture

Figure 2: End-to-end architecture of single-cell foundation models showing tokenization's role.

Positional Encoding Strategies

Since gene expression data lacks natural ordering, positional encoding provides artificial sequence information to the model. Common approaches include:

- Expression-based positioning: Genes are positioned in sequences according to expression magnitude [1]

- Learnable positional embeddings: Each position in the sequence receives a trainable embedding vector [4]

- Biological prior positioning: Genes are ordered according to chromosomal location or functional relationships [1]

Experimental Validation and Performance Metrics

Benchmarking Tokenization Strategies

Table 2: Performance Comparison of Tokenization Methods on Cell Type Annotation

| Model | Tokenization Approach | Accuracy | F1-Score | Computational Efficiency |
|---|---|---|---|---|
| scBERT [5] | Gene embedding + expression binning | 85.1% (NeurIPS) | 0.815 | Moderate |
| scGPT [1] | Expression ranking + value normalization | 84.3% (Benchmark) | 0.801 | High requirements |
| scSFUT [4] | Fixed-window sub-vector segmentation | 86.7% (Cross-species) | 0.839 | High efficiency |
| ACTINN [4] | Traditional feature selection | 80.1% (Benchmark) | 0.745 | High efficiency |
| Seurat [5] | Reference mapping | 80.1% (NeurIPS) | 0.640 | Moderate |

Case Study: scBERT Validation on NeurIPS Dataset

Experimental Protocol

A comprehensive evaluation of scBERT was conducted on the NeurIPS dataset, comprising single-cell multi-omics data from mobilized peripheral CD34+ hematopoietic stem and progenitor cells (HSPCs) [5]. The experimental workflow followed these steps:

- Data Acquisition: Gene expression data was obtained from the NeurIPS 2022 Kaggle competition, generated using 10X Chromium Single Cell Multiome ATAC + Gene Expression technology [5]

- Cell Population: The dataset encompassed seven distinct cell types: B-cell progenitor (BP, n=262), erythrocyte progenitor (EryP, n=3,402), haematopoietic stem cell (HSC, n=10,757), mast cell progenitor (MasP, n=2,175), megakaryocyte progenitor (MkP, n=3,394), monocyte progenitor (MoP, n=258), and neutrophil progenitor (NeuP, n=3,663) [5]

- Data Splitting: The dataset was divided with 70% for training and 30% for testing, with the training subset further split 80:20 for training and validation [5]

- Model Training: scBERT was fine-tuned using the established protocol with a learning rate of 5e-5 and batch size of 32 for 50 epochs [5]

- Performance Assessment: Model predictions were compared against ground truth annotations using accuracy, F1-score, and confusion matrix analysis [5]
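
To make the splitting arithmetic in the protocol concrete, the sketch below totals the seven reported cell-type counts and applies the 70/30 and 80/20 splits. The rounding rule is an assumption, since the source does not state how fractional cells were handled or whether the split was stratified by cell type.

```python
# Cell counts per type as listed in the protocol above.
CELL_COUNTS = {
    "BP": 262, "EryP": 3402, "HSC": 10757, "MasP": 2175,
    "MkP": 3394, "MoP": 258, "NeuP": 3663,
}

def split_sizes(n_cells, test_frac=0.30, val_frac=0.20):
    """Return (train, validation, test) sizes; rounding to the nearest cell is an assumption."""
    n_test = round(n_cells * test_frac)
    n_trainval = n_cells - n_test
    n_val = round(n_trainval * val_frac)
    n_train = n_trainval - n_val
    return n_train, n_val, n_test

total = sum(CELL_COUNTS.values())          # 23911 cells in total
n_train, n_val, n_test = split_sizes(total)
hsc_share = CELL_COUNTS["HSC"] / total     # fraction of the dataset that is HSC

print(total, n_train, n_val, n_test, round(hsc_share, 3))
```

Under these assumptions roughly 56% of all cells end up in the training subset, and HSCs alone account for about 45% of the data.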

Results and Interpretation

scBERT achieved a validation accuracy of 85.1%, outperforming Seurat (80.1%) on the same dataset [5]. On held-out test data, scBERT maintained strong performance with 83.97% accuracy compared to Seurat's 81.6% [5]. The statistical significance of this improvement was confirmed with a p-value of 0.0004 from a paired t-test [5].

Notably, the model demonstrated robust performance despite significant class imbalance in the dataset, with HSC cells representing 10,757 observations compared to only 258 MoP cells [5]. This highlights the resilience of properly tokenized transformer models to real-world data distribution challenges.
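
Class imbalance is also why accuracy and F1-score are reported side by side. The toy example below, using invented labels rather than data from the study, shows how a classifier that always predicts the majority class can score high accuracy while its macro-averaged F1 collapses. Whether the cited F1-scores are macro-averaged is not stated in the source; macro averaging is assumed here for illustration.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores (F1 = 2TP / (2TP + FP + FN))."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for lab in labels:
        tp = sum(t == lab and p == lab for t, p in zip(y_true, y_pred))
        fp = sum(t != lab and p == lab for t, p in zip(y_true, y_pred))
        fn = sum(t == lab and p != lab for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

# Toy labels: 9 majority-class cells, 1 rare cell; the classifier always says "HSC".
y_true = ["HSC"] * 9 + ["MoP"]
y_pred = ["HSC"] * 10

print("accuracy:", accuracy(y_true, y_pred))
print("macro F1:", round(macro_f1(y_true, y_pred), 3))
```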

Table 3: Essential Research Resources for scRNA-seq Tokenization and Analysis

| Resource Category | Specific Tools/Platforms | Primary Function | Application in Tokenization |
|---|---|---|---|
| Data Repositories | CZ CELLxGENE [1], PanglaoDB [5], Human Cell Atlas [1] | Provide curated single-cell datasets | Source of diverse training data for tokenizer development |
| Processing Tools | Scanpy [5], Seurat [5] | Quality control, normalization, and preprocessing | Prepare raw data for tokenization through filtering and normalization |
| Foundation Models | scBERT [5], scGPT [1], scSFUT [4] | Pretrained models for single-cell analysis | Implement various tokenization strategies for specific analytical tasks |
| Gene Reference | Ensembl Gene Database [1], Gene Ontology [1] | Standardized gene annotations | Provide biological context for gene token embedding |
| Benchmark Datasets | Zheng68k [5], MacParland [5], NeurIPS Multiome [5] | Standardized evaluation datasets | Enable comparative assessment of tokenization methodologies |

Challenges and Future Directions

Current Limitations in Tokenization Practices

Despite significant advances, current tokenization approaches face several challenges:

- Sequence arbitrariness: Artificial gene ordering lacks biological justification and may introduce bias [1]

- Batch effects: Technical variations between experiments can confound biological signals [1]

- Computational intensity: Processing millions of cells with full gene complements demands substantial resources [4]

- Cross-species generalization: Models trained on human data may not transfer effectively to other organisms [4]

Emerging Innovations

Promising research directions aim to address these limitations through:

- Biological attention mechanisms: Incorporating known gene-gene interactions to guide model attention [1]

- Adaptive tokenization: Dynamically adjusting tokenization strategy based on data characteristics [4]

- Multi-resolution approaches: Combining gene-level, pathway-level, and cell-level tokens [1]

- Transferable representations: Developing tokenization schemes that generalize across technologies and species [4]

Tokenization represents a fundamental preprocessing step that translates continuous, high-dimensional scRNA-seq data into structured representations amenable to analysis by single-cell foundation models. As the field progresses toward increasingly integrated multi-omic assays and larger-scale cellular atlases, sophisticated tokenization strategies will play an ever more critical role in unlocking biological insights. The development of biologically informed, computationally efficient tokenization methods remains an active area of research with significant potential to advance both basic science and therapeutic development.

Tokenization serves as the foundational bridge that transforms the complex, high-dimensional language of biology into a structured format that artificial intelligence models can comprehend and process. In the context of single-cell RNA sequencing (scRNA-seq) data and single-cell foundation models (scFMs), effective tokenization strategies are paramount for capturing cellular heterogeneity, gene-gene interactions, and regulatory networks. This technical guide examines current tokenization methodologies, their computational implementations, and their impact on downstream biological discovery. We provide a comprehensive framework for researchers seeking to implement robust tokenization pipelines that preserve biological signal while enabling scalable machine learning applications in drug development and basic research.

Single-cell RNA sequencing has revolutionized our understanding of cellular heterogeneity, revealing striking differences in gene expression between individual cells that were previously masked in bulk sequencing approaches. The transcriptome of each cell represents a complex, high-dimensional molecular signature of its identity, state, and function [6]. However, this biological complexity presents substantial computational challenges: scRNA-seq data is characterized by extreme sparsity, technical noise, high dimensionality, and dropout events where transcripts fail to be detected even when present in the cell [7].

Single-cell foundation models (scFMs) represent a promising approach to deciphering this complexity, leveraging transformer architectures originally developed for natural language processing (NLP) [1]. The core premise is intuitive: if we can represent biological data in a format that AI can understand, we can uncover patterns beyond human analytical capacity. In this framework, tokenization—the process of converting raw gene expression data into discrete, machine-readable units—becomes the critical first step that determines what patterns the model can and cannot learn [1] [8].

Without effective tokenization, even the most sophisticated neural network architectures struggle to extract meaningful biological signals from the sparse, noisy matrices that characterize scRNA-seq data. This whitepaper examines how tokenization strategies enable researchers to transform cellular heterogeneity into machine-readable data, facilitating discoveries in cell development, disease mechanisms, and therapeutic interventions.

The Computational Challenge: From Gene Expression to Token Sequences

Fundamental Data Characteristics

scRNA-seq data presents several unique computational challenges that tokenization must address. Here the data is represented as a matrix with cells as rows and genes as columns (the AnnData/Scanpy convention; Seurat stores the transpose, with genes as rows), and each entry is the expression count of a particular gene in a particular cell. This structure exhibits:

- High dimensionality: Tens of thousands of genes measured per cell [6]

- Extreme sparsity: Typically 80-95% zero values due to biological and technical factors [7]

- Technical noise: Amplification bias, batch effects, and dropout events [7]

- Non-sequential nature: Unlike natural language, genes have no inherent ordering [1]
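
The sparsity figure quoted above is simply the fraction of zero entries in the count matrix. A minimal sketch on a made-up 3 × 5 (cells × genes) matrix:

```python
def sparsity(matrix):
    """Fraction of zero entries in a cells x genes count matrix."""
    total = sum(len(row) for row in matrix)
    zeros = sum(1 for row in matrix for x in row if x == 0)
    return zeros / total

def genes_detected_per_cell(matrix):
    """Number of genes with a non-zero count in each cell."""
    return [sum(1 for x in row if x > 0) for row in matrix]

# Toy counts, invented for illustration: 3 cells, 5 genes.
counts = [
    [0, 3, 0, 0, 1],
    [0, 0, 0, 2, 0],
    [5, 0, 0, 0, 0],
]

print("sparsity:", round(sparsity(counts), 3))
print("genes detected per cell:", genes_detected_per_cell(counts))
```

Real matrices are usually held in a sparse format, where the same quantity is one minus the number of stored non-zeros divided by the matrix size.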

The Tokenization Solution Space

Tokenization strategies for scRNA-seq data must transform this challenging data structure into sequential token representations compatible with transformer architectures. The following table summarizes the primary approaches and their characteristics:

Table 1: Comparative Analysis of Tokenization Strategies for scRNA-seq Data

| Strategy | Core Methodology | Advantages | Limitations | Representative Models |
|---|---|---|---|---|
| Gene-level Tokenization | Each gene represents a unique token ID | Direct biological interpretability; Preserves gene identity | Requires fixed gene vocabulary; Cannot handle unseen genes | scBERT, scGPT, Geneformer |
| Expression-based Ranking | Genes ordered by expression magnitude within each cell | Creates deterministic sequences from non-sequential data | Arbitrary ordering may not reflect biological relationships | scGPT, TOSICA |
| Binning Approaches | Expression values discretized into bins | Captures expression level information beyond presence/absence | Introduces ordinality assumptions; Information loss | scBERT |
| Hybrid Methods | Combines gene identity with expression information | Richer representation of transcriptional state | Increased computational complexity | scSFUT |
| Dynamic Token Adaptation | Modifies token embeddings based on external data (e.g., DNA sequence) | Enables multi-modal integration; Context-aware representations | Requires additional data processing | Bio-DTA |

Tokenization Architectures and Implementation Frameworks

Core Tokenization Workflow

The tokenization process typically follows a structured pipeline that transforms raw count data into model-ready token sequences.

Advanced Tokenization Methodologies

Expression-Based Ranking and Sequencing

A predominant strategy for overcoming the non-sequential nature of gene expression data involves creating an artificial sequence by ranking genes based on their expression values. In this approach, each cell is treated as a "sentence" where genes are ordered from highest to lowest expression, creating a deterministic sequence that captures the most biologically relevant signals [1]. Models such as scGPT and Geneformer employ variations of this method, typically selecting the top 1,000-2,000 highly variable genes based on expression magnitude [9].

The ranking process follows this protocol:

- Library size normalization: Normalize counts per cell to account for varying sequencing depths

- Log transformation: Apply log(1+x) transformation to stabilize variance

- Gene ranking: Sort genes by expression value in descending order

- Sequence truncation: Select top N genes (typically 1,000-2,000) based on computational constraints

- Token ID assignment: Map each gene to a unique token identifier in the model's vocabulary
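
The five steps above can be sketched for a single cell as follows. The gene names, vocabulary, and counts are invented for illustration; dropping undetected genes and breaking ties alphabetically are assumptions made here to keep the output deterministic, not rules taken from any particular model.

```python
import math

def rank_tokenize(counts, vocab, target_sum=10_000, max_len=2_000):
    """Turn one cell's raw counts (gene -> count) into a ranked list of token IDs."""
    total = sum(counts.values())
    if total == 0:
        return []
    # Steps 1-2: library-size normalization followed by log(1 + x).
    normed = {
        gene: math.log1p(count / total * target_sum)
        for gene, count in counts.items()
        if count > 0 and gene in vocab      # assumption: skip undetected / unknown genes
    }
    # Step 3: sort by expression, descending; ties broken by gene name (assumption).
    ranked = sorted(normed, key=lambda gene: (-normed[gene], gene))
    # Steps 4-5: truncate to the top N genes and map to token IDs.
    return [vocab[gene] for gene in ranked[:max_len]]

# Hypothetical vocabulary and cell.
vocab = {"CD34": 0, "GATA1": 1, "MPO": 2, "SPI1": 3, "KIT": 4}
cell = {"CD34": 12, "GATA1": 0, "MPO": 3, "SPI1": 7, "KIT": 3}

tokens = rank_tokenize(cell, vocab, max_len=3)
print(tokens)  # [0, 3, 4]
```

Because the log transform is monotonic, it does not change the ranking within a cell; it matters only when the normalized values themselves are passed to the model alongside the token IDs.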

Bin-Based Tokenization

An alternative approach, implemented in models like scBERT, discretizes expression values into bins or categories [8]. This method represents both gene identity and expression level information:

- Expression value discretization: Categorize expression values into bins (e.g., low, medium, high)

- Composite tokens: Create tokens that represent gene-bin combinations (e.g., "GENEA_high")

- Sequence construction: Order tokens based on biological knowledge or expression levels

This approach preserves more quantitative information about expression levels but increases the vocabulary size and requires careful handling of expression value normalization across cells and datasets.
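
A minimal sketch of composite gene-bin tokens follows, assuming three bins with hypothetical thresholds and a vocabulary that grows as new gene-bin pairs are encountered. Token names such as GENEA_high are illustrative only.

```python
def expression_bin(value, low_max=1.0, high_min=2.0):
    """Map a normalized expression value to a coarse bin (thresholds are hypothetical)."""
    if value <= low_max:
        return "low"
    if value >= high_min:
        return "high"
    return "medium"

def composite_tokens(cell, vocab):
    """Build gene-bin tokens for one cell, ordered by expression (descending)."""
    tokens = []
    for gene, value in sorted(cell.items(), key=lambda kv: (-kv[1], kv[0])):
        if value <= 0:
            continue                      # undetected genes produce no token
        name = f"{gene}_{expression_bin(value)}"
        if name not in vocab:
            vocab[name] = len(vocab)      # vocabulary grows with each new gene-bin pair
        tokens.append(vocab[name])
    return tokens

vocab = {}
cell = {"GENEA": 2.5, "GENEB": 0.4, "GENEC": 1.5, "GENED": 0.0}
tokens = composite_tokens(cell, vocab)
print(tokens, vocab)
```

With G genes and B bins the vocabulary can reach G × B entries, which is the size increase noted above.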

Dynamic Token Adaptation

Recent advances in multi-modal single-cell foundation models have introduced dynamic token adaptation (DTA), which modifies token embeddings based on external data sources [9]. Bio-DTA implements this approach by:

- DNA sequence processing: Generating embeddings from DNA sequences around transcriptional start sites using Enformer

- Adapter projection: Mapping DNA sequence embeddings to the token embedding space via a multilayer perceptron

- Contextual integration: Using these dynamic embeddings as input to transformer encoders alongside standard gene tokens

This approach enables the model to learn connections between genetic variation and gene expression patterns, providing a more comprehensive view of cellular function.

Experimental Protocols and Implementation Guidelines

Standardized Tokenization Protocol for scRNA-seq Data

Based on current best practices across multiple scFMs, we recommend the following detailed protocol for tokenizing scRNA-seq data:

Input Requirements:

- Raw or normalized count matrix (cells × genes)

- Gene identifiers (e.g., ENSEMBL IDs, gene symbols)

- Metadata (e.g., batch information, sample conditions)

Processing Steps:

Quality Control and Filtering

Normalization

- Apply library size normalization (e.g., 10,000 counts per cell)

- Log-transform using log(1+x) [10]

- Optionally regress out technical covariates (e.g., batch effects)

Gene Selection

- Identify highly variable genes using Seurat v3 or SCANPY workflows

- Select top 1,000-2,000 genes for computational efficiency [9]

Token Sequence Construction

- For each cell, sort selected genes by normalized expression values

- Truncate to top N genes (N determined by model constraints)

- Map each gene to its corresponding token ID

Special Tokens and Metadata Integration

- Add [CLS] token for cell-level representation [1]

- Include special tokens for batch information or experimental conditions

- Append [PAD] tokens to maintain consistent sequence length
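
The special-token step can be sketched as below. The reserved IDs for [PAD] and [CLS], the optional batch token, and the accompanying attention mask are assumptions chosen for illustration; actual models define their own reserved IDs and layouts.

```python
SPECIAL = {"[PAD]": 0, "[CLS]": 1}   # hypothetical reserved token IDs

def build_input(gene_token_ids, max_len, batch_token_id=None):
    """Prepend [CLS] (and an optional batch token), truncate, then pad to max_len."""
    seq = [SPECIAL["[CLS]"]]
    if batch_token_id is not None:
        seq.append(batch_token_id)
    seq.extend(gene_token_ids)
    seq = seq[:max_len]
    attention_mask = [1] * len(seq)       # 1 = real token, 0 = padding
    n_pad = max_len - len(seq)
    return seq + [SPECIAL["[PAD]"]] * n_pad, attention_mask + [0] * n_pad

input_ids, mask = build_input([10, 11, 12], max_len=6)
print(input_ids)  # [1, 10, 11, 12, 0, 0]
print(mask)       # [1, 1, 1, 1, 0, 0]
```

The mask lets the model ignore padded positions, so padding changes the sequence length without contributing to attention.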

Validation and Quality Assessment

To ensure tokenization preserves biological signal, implement the following quality checks:

- Reconstruction accuracy: Measure ability to reconstruct original expression values from token sequences

- Batch effect monitoring: Assess whether batch information leaks into token representations

- Biological fidelity: Verify that known cell type markers remain discriminative after tokenization

Table 2: Research Reagent Solutions for Tokenization Implementation

| Reagent/Resource | Function | Implementation Examples |
|---|---|---|
| CellRanger | Processing raw sequencing data to count matrices | 10x Genomics pipeline for initial data generation |
| SCANPY/Seurat | Quality control, normalization, and gene selection | Standard preprocessing workflows in Python/R |
| HVG Selection | Identifying highly variable genes for token reduction | Seurat v3, SCANPY highly_variable_genes() |
| Tokenizer Libraries | Mapping genes to token IDs with vocabulary management | Hugging Face Tokenizers, custom implementations |
| UMAP/t-SNE | Visual validation of tokenization quality | Projection of token embeddings to 2D space |
| Batch Correction | Removing technical artifacts pre-tokenization | ComBat, Harmony, Scanorama |

Technical Innovations in Tokenization Architectures

The scSFUT Approach: Scale-Free and Unbiased Tokenization

The Single-Cell Scale-Free and Unbiased Transformer (scSFUT) introduces a novel tokenization approach that processes full-length gene vectors without requiring gene selection [8]. This method addresses key limitations in existing approaches:

Gene Embedding Algorithm:

- Segments each cell's gene expression vector into fixed-size windows

- Applies 1D-convolution to capture local gene-gene correlations

- Generates token sequences that preserve global gene context
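
The windowing and convolution steps listed above can be illustrated with a small sketch. This is not the scSFUT implementation: the window size, the zero-padding of the last window, and the fixed smoothing kernel are all assumptions, and in a trained model the kernel weights would be learned.

```python
def segment(vector, window):
    """Split a gene expression vector into fixed-size windows, zero-padding the last one."""
    padded = vector + [0.0] * (-len(vector) % window)
    return [padded[i:i + window] for i in range(0, len(padded), window)]

def conv1d_valid(signal, kernel):
    """Plain 1D cross-correlation with stride 1 and no padding ('valid' mode)."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]

# Toy expression vector for one cell (7 genes, invented values).
expr = [0.0, 1.2, 0.0, 3.1, 0.5, 0.0, 2.0]
kernel = [0.25, 0.5, 0.25]            # stand-in for learned convolution weights

windows = segment(expr, window=4)
features = [conv1d_valid(w, kernel) for w in windows]
print(len(windows), "windows;", len(features[0]), "features per window")
```

Each window becomes one token-like unit, so the sequence length scales with the number of genes divided by the window size rather than with the number of genes itself.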

Bias-Free Attention Mechanism:

- Implements precision-preserving attention computation

- Avoids the low-rank approximations that introduce performance bias in models like scBERT and xTrimoGene [8]

End-to-End Trainable Architecture:

- Jointly optimizes tokenization and model objectives

- Enables learning of token representations specific to annotation tasks

Multi-Modal Token Integration

Advanced scFMs are increasingly incorporating multiple data modalities through specialized tokenization strategies. The sciRED framework demonstrates how factor analysis can guide tokenization for improved interpretability [11]. This approach:

- Decomposes variation into technical and biological components

- Guides token selection based on factors with strong biological signatures

- Enables residual tokenization that focuses on unexplained biological variation

Impact on Biological Discovery and Therapeutic Applications

Effective tokenization strategies have enabled significant advances in biological discovery and drug development applications:

Enhanced Cell Type Annotation

Optimized tokenization enables more precise and automated cell type identification, a fundamental task in single-cell analysis. Models leveraging sophisticated tokenization strategies demonstrate:

- Cross-species generalization: Ability to annotate cell types across different organisms [8]

- Rare cell detection: Improved identification of rare populations through preservation of subtle expression patterns [11]

- Continuous state identification: Capture of transitional cell states through fine-grained token representations

Disease Mechanism Elucidation

In rheumatoid arthritis research, latent factor models guided by appropriate tokenization identified novel disease-associated pathways:

- OSMR signaling signature in synovial fibroblasts [12]

- MERTK-mediated efferocytic signature in synovial monocytes [12]

These discoveries were enabled by tokenization approaches that preserved subtle expression patterns in specific cellular subpopulations that might be lost with aggressive gene filtering.

Toxicological Applications

In toxicology, tokenization strategies that maintain sensitivity to dose-dependent changes have revealed:

- Cell type-specific responses to chemical exposures [10]

- Alterations in cell type proportions following toxicant exposure [10]

- Pathway-specific perturbations that inform mechanistic toxicology

Future Directions and Emerging Challenges

As single-cell technologies continue to evolve, tokenization strategies must adapt to several emerging challenges and opportunities:

Scaling Constraints and Solutions

The exponential growth in single-cell dataset sizes presents ongoing challenges for tokenization:

- Memory limitations with millions of cells and tens of thousands of genes

- Computational efficiency requirements for processing large-scale atlases

- Distributed tokenization approaches for multi-institutional datasets

Multi-Modal Integration

Future tokenization strategies must seamlessly integrate diverse data types:

- Spatial transcriptomics incorporating positional information

- Epigenetic data from scATAC-seq and methylation profiling

- Protein abundance from CITE-seq and related technologies

- Time-series and perturbation data capturing dynamic responses

Interpretability and Biological Validation

As tokenization strategies become more complex, maintaining interpretability is crucial:

- Benchmarking standards for evaluating tokenization quality

- Biological validation frameworks connecting token representations to known biology

- Visualization tools for exploring token-level contributions to model predictions

Tokenization represents the critical interface between biological complexity and computational analysis in single-cell genomics. By transforming high-dimensional, sparse gene expression data into structured token sequences, researchers can leverage the full power of modern foundation models to unravel cellular heterogeneity, disease mechanisms, and therapeutic opportunities. The continuing evolution of tokenization strategies—from simple gene ranking to dynamic, multi-modal approaches—will undoubtedly drive further advances in both basic biology and translational applications. As the field progresses, developing standardized, validated, and interpretable tokenization pipelines will be essential for ensuring that biological insights keep pace with technological capabilities.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the investigation of cellular heterogeneity at an unprecedented resolution. However, the analysis of scRNA-seq data is fraught with computational challenges that stem from its inherent properties. This technical guide examines three core challenges—sparsity, high dimensionality, and the non-sequential nature of the data—within the specific context of developing tokenization strategies for single-cell foundation models (scFMs). As the field moves toward analyzing millions of cells and integrating multi-omic modalities, addressing these challenges becomes paramount for unlocking the full potential of single-cell genomics. The emergence of scFMs, which treat cells as "sentences" and genes as "words," offers a promising framework for unified analysis but requires specialized approaches to handle the unique structure of single-cell data.

The Challenge of Data Sparsity

Understanding scRNA-seq Sparsity

Sparsity in scRNA-seq data refers to the abundance of zero counts, which can exceed 90% of all measurements in a dataset. These zeros represent a mixture of biological and technical factors: true absence of transcripts (biological zeros) and failure to detect present transcripts due to limited sequencing depth (technical zeros or "dropouts") [13]. The sparsity challenge has intensified as technological advances have enabled the sequencing of exponentially more cells. Analysis of 56 datasets published between 2015 and 2021 revealed a clear trend: as the number of cells per dataset increases, the detection rate (fraction of non-zero values) decreases [13]. This inverse relationship means that newer, larger datasets are becoming progressively sparser, presenting substantial analytical difficulties.

Consequences of Sparsity

The preponderance of zeros in scRNA-seq data creates significant problems for conventional analysis methods. Standard count distribution models (e.g., Poisson) do not account for this excess of zeros, leading to biased inferences [13]. Sparsity can obscure true biological signals, particularly for rare cell types and lowly expressed genes, potentially leading to their misclassification or complete omission from analyses [14]. Furthermore, traditional analytical approaches that rely on count-based metrics may become less informative as sparsity increases, necessitating alternative computational frameworks.

Binarization as a Strategy for Sparse Data

Table 1: Performance Comparison of Count-Based vs. Binary-Based Analysis Methods

| Analysis Task | Count-Based Approach | Binary-Based Approach | Performance Comparison |
|---|---|---|---|
| Dimensionality Reduction | PCA on normalized counts | PCA on binarized data | Highly similar UMAP visualizations (r ≥ 0.73 correlation) [13] |
| Data Integration | Harmony on count-based PCA | Harmony on binary-based PCA | Improved mixing for binary representation (LISI: 1.18 vs. 1.12) [13] |
| Cell Type Identification | scPred/SingleR on counts | scPred/SingleR on binarized data | Highly similar performance (median F1-score ~0.93) [13] |
| Differential Expression | Pseudobulk with mean expression | Pseudobulk with detection rate | Strong correlation (Spearman's ρ ≥ 0.99) [13] |

Interestingly, the very sparsity that complicates analysis also presents opportunities. With the increasing prevalence of zeros, a binary representation (where zero counts remain zero and non-zero counts become one) can capture most of the biological signal while offering substantial computational advantages [13]. Research has demonstrated that the correlation between normalized expression counts and their binarized counterparts is remarkably strong (point-biserial correlation of approximately 0.93 on average across ~1.5 million cells), indicating that binarization preserves the essential biological information [13]. This strong correlation is primarily explained by the detection rate and the variance of non-zero counts, with sparser datasets showing higher correlations between count and binary representations.

Specialized methods have been developed to leverage binarized data. For instance, scBFA is a dimensionality reduction method specifically designed for binarized scRNA-seq data that demonstrates improved visualization and classification of cell identity [13]. Similarly, Binary Differential Analysis (BDA) enables differential expression analysis from binarized data, faithfully capturing biological variation across cell types and conditions [13]. These approaches highlight how embracing sparsity through appropriate computational strategies can yield robust biological insights while offering computational efficiency.
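The binarization idea can be sketched in a few lines. The example below is a minimal illustration under stated assumptions: the toy counts, the group labels, and the helper names `binarize` and `detection_rate_by_group` are invented here, and this is not the scBFA or BDA implementation. It binarizes a count matrix, computes a per-group detection rate (the binary analogue of pseudobulk mean expression from Table 1), and computes the point-biserial correlation, which is simply the Pearson correlation between a continuous and a binary variable.

```python
import numpy as np

def binarize(counts: np.ndarray) -> np.ndarray:
    """Zero counts stay 0; any non-zero count becomes 1."""
    return (counts > 0).astype(np.int8)

def detection_rate_by_group(binary: np.ndarray, labels) -> dict:
    """Per-group fraction of cells in which each gene is detected."""
    labels = np.asarray(labels)
    return {str(g): binary[labels == g].mean(axis=0) for g in np.unique(labels)}

# Toy data: 4 cells x 3 genes with two hypothetical groups.
counts = np.array([
    [0, 5, 1],
    [0, 2, 0],
    [3, 0, 0],
    [4, 0, 1],
])
labels = ["A", "A", "B", "B"]

binary = binarize(counts)
rates = detection_rate_by_group(binary, labels)

# Point-biserial correlation between log-transformed counts and binary values.
r_pb = float(np.corrcoef(np.log1p(counts).ravel(), binary.ravel())[0, 1])

print(rates)
print(f"point-biserial correlation = {r_pb:.2f}")
```

On real data the correlation would be computed on properly normalized counts; the log transform here is only a stand-in for that step.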

The Challenge of High Dimensionality

The Dimensionality Problem

A typical scRNA-seq dataset measures expression levels of thousands of genes across thousands to millions of cells, creating a high-dimensional space where each gene represents a dimension. This high dimensionality presents multiple analytical challenges, including increased computational demands, the "curse of dimensionality" where distance metrics become less meaningful, and difficulty in visualizing the underlying structure of the data [14]. The problem is exacerbated by technical noise and skewed distributions that can obscure true biological signals.

Dimensionality Reduction Strategies

Table 2: Dimensionality Reduction Methods for scRNA-seq Data

| Method | Type | Key Mechanism | Advantages | Limitations |
|---|---|---|---|---|
| PCA | Linear | Identifies orthogonal axes of maximum variance | Computationally efficient, preserves global structure | Assumes linear relationships, sensitive to outliers |
| t-SNE | Non-linear | Minimizes KL divergence between high-/low-dim distributions | Effective at preserving local structure | Poor preservation of global structure, results sensitive to parameters |
| UMAP | Non-linear | Minimizes cross-entropy between high-/low-dim distributions | Better global structure preservation than t-SNE | Cluster distances may not reflect true biological differences |
| scLENS | Non-linear | RMT-based noise filtering with L2 normalization | Data-driven dimension determination, handles sparsity well | Relatively new method, less widely adopted |
| supCPM | Supervised non-linear | Capacity-adjusted distance with cluster label guidance | Preserves global structure, tracks cluster variance | Requires accurate cluster labels as input |

Dimensionality reduction (DR) methods are essential for navigating high-dimensional scRNA-seq data. These techniques project the data into a lower-dimensional space while attempting to preserve important biological relationships. Principal Component Analysis (PCA) identifies orthogonal axes of maximum variance in the data and is widely used for initial exploration [15]. Non-linear methods such as t-Distributed Stochastic Neighbor Embedding (t-SNE) and Uniform Manifold Approximation and Projection (UMAP) have become standards for visualization, with UMAP particularly valued for preserving both local and global relationships [15].
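To make the PCA step concrete, here is a minimal sketch that computes principal component scores via a singular value decomposition of the centered matrix. The synthetic data (two groups of cells that differ in five genes) and the function name `pca` are assumptions for illustration; analysis toolkits provide their own optimized implementations.

```python
import numpy as np

def pca(X: np.ndarray, n_components: int):
    """Project rows of X onto the top principal components using SVD."""
    centered = X - X.mean(axis=0)
    U, S, Vt = np.linalg.svd(centered, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]  # cell coordinates
    explained_ratio = (S ** 2) / np.sum(S ** 2)       # variance share per axis
    return scores, explained_ratio[:n_components]

# Synthetic "normalized expression": 200 cells x 50 genes, two groups.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 50))
X[:100, :5] += 4.0  # first 100 cells over-express the first 5 genes

scores, ratio = pca(X, n_components=2)
print(scores.shape, ratio)
```

The first component should separate the two synthetic groups, which is the sense in which PCA "identifies orthogonal axes of maximum variance".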

However, conventional DR methods have limitations. Most require subjective user decisions to set thresholds that differentiate signal from noise, introducing potential bias and reducing reproducibility [14]. Methods like t-SNE and UMAP may not optimally preserve global geometric structure, potentially resulting in misleading visualizations where clusters appear close in the embedded space despite being distant in the original high-dimensional space [16].

Advanced Solutions for Dimensionality Reduction

Recent methodological advances address these limitations through automated, data-driven approaches. scLENS (single-cell Low-dimension Embedding using effective Noise Subtraction) incorporates random matrix theory (RMT)-based noise filtering to automatically identify biologically meaningful signals without subjective user input [14]. This method first applies L2 normalization after log normalization to prevent signal distortion caused by variations in total gene counts between cells, then uses RMT to distinguish true biological signals from random noise, and finally applies a signal robustness test to filter out low-quality signals caused by dropouts.
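The normalization order described above (log normalization followed by L2 normalization) can be sketched as follows. This is a simplified reading of the description, not the scLENS code: the scale factor of 10,000 and the function name `log_l2_normalize` are assumptions, and the published method may include further steps.

```python
import numpy as np

def log_l2_normalize(counts: np.ndarray, scale: float = 1e4) -> np.ndarray:
    """Library-size scale, log1p-transform, then give every cell unit L2 norm.

    Assumes quality control has already removed cells with zero total counts.
    """
    library = counts.sum(axis=1, keepdims=True)
    logged = np.log1p(counts / library * scale)
    norms = np.linalg.norm(logged, axis=1, keepdims=True)
    return logged / norms

# Cells 0 and 1 have the same composition but a 10x difference in depth.
counts = np.array([
    [10.0, 0.0, 5.0],
    [100.0, 0.0, 50.0],
    [1.0, 2.0, 3.0],
])
normed = log_l2_normalize(counts)
print(np.linalg.norm(normed, axis=1))  # every cell vector has length 1
```

After this step, cells that differ only in sequencing depth map to the same unit vector, which is the distortion the L2 step is meant to remove.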

Supervised approaches represent another advancement. supCPM (supervised Capacity Preserved Mapping) incorporates cluster label information to guide dimensionality reduction, addressing the crowding issue common in other methods while preserving global geometric structure and tracking cluster variance [16]. This method uses a capacity-adjusted distance metric that accounts for differences in intrinsic dimensionality across the data, enabling more faithful visualizations that maintain both local and global relationships.

The Non-Sequential Nature of scRNA-seq Data

The Sequence Problem for Foundation Models

The successful application of transformer architectures from natural language processing to single-cell biology presents a fundamental challenge: unlike words in a sentence, genes in a cell have no inherent ordering [17]. This non-sequential nature contradicts the basic assumption of transformer models, which process input as ordered sequences where position carries meaningful information. Developing effective tokenization strategies that impose a meaningful sequence on gene expression data is therefore crucial for the development of effective single-cell foundation models (scFMs).

Tokenization Strategies for scFMs

Table 3: Tokenization Strategies for Single-Cell Foundation Models

| Strategy | Description | Advantages | Challenges |
|---|---|---|---|
| Expression Ranking | Orders genes by expression level within each cell | Deterministic, emphasizes highly expressed genes | May overlook important low-expression genes |
| Expression Binning | Groups genes into bins based on expression values | Reduces sensitivity to small expression variations | Requires careful bin definition |
| Gene Identifier Sequencing | Uses fixed gene ordering (e.g., alphabetical, genomic position) | Consistent across cells, simple to implement | May not reflect biological relationships |
| Metadata Incorporation | Includes gene or cell metadata as special tokens | Provides additional biological context | Increases model complexity |
| Modality Indicators | Adds tokens indicating data modality (RNA, ATAC, etc.) | Enables multi-omic integration | Requires harmonization across data types |

Several tokenization strategies have emerged to address the non-sequential nature of scRNA-seq data for foundation models. A common approach involves imposing an artificial ordering based on expression levels, such as ranking genes within each cell by their expression values and feeding the ordered list as the "sentence" for the model [17]. This provides a deterministic sequence that emphasizes highly expressed genes. Alternative approaches partition genes into bins based on expression values or simply use normalized counts without complex ranking schemes [17].
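A minimal sketch of expression-rank tokenization is shown below. The gene names, expression values, and the function name `rank_tokenize` are illustrative assumptions; published models typically apply their own normalization before ranking. Unexpressed genes are dropped, and ties are broken alphabetically so that the output is deterministic.

```python
def rank_tokenize(expression, gene_names, max_len=None):
    """Return gene names ordered from highest to lowest expression.

    Genes with zero expression are omitted; ties are broken by gene name.
    """
    order = sorted(
        range(len(expression)),
        key=lambda i: (-expression[i], gene_names[i]),
    )
    tokens = [gene_names[i] for i in order if expression[i] > 0]
    return tokens if max_len is None else tokens[:max_len]

genes = ["CD3E", "GAPDH", "MS4A1", "ACTB"]
cell = [2.0, 9.5, 0.0, 7.1]  # normalized expression for one cell

sentence = rank_tokenize(cell, genes)
print(sentence)  # ['GAPDH', 'ACTB', 'CD3E']
```

The optional `max_len` argument reflects the practical need to truncate each cell's "sentence" to the model's context length.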

Beyond basic tokenization, scFMs often incorporate special tokens to enrich the input representation. These may include tokens representing cell identity and metadata, modality indicators for multi-omic data, and gene metadata such as gene ontology terms or chromosomal locations [17]. Positional encoding schemes are then adapted to represent the relative order or rank of each gene in the cell, enabling the transformer architecture to process the artificially sequenced data.
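The sketch below shows one way special tokens and rank positions might be assembled into model input. The token strings `<pad>`, `<cls>`, and `<rna>`, the vocabulary, and the function name `build_input` are hypothetical choices for illustration; each model defines its own special tokens.

```python
def build_input(gene_tokens, vocab, max_len, cls="<cls>", modality="<rna>", pad="<pad>"):
    """Prefix special tokens, map to ids, pad, and emit positions and a mask."""
    tokens = [cls, modality] + list(gene_tokens)
    tokens = tokens[:max_len]
    ids = [vocab[t] for t in tokens]
    mask = [1] * len(ids)
    padding = max_len - len(ids)
    ids += [vocab[pad]] * padding
    mask += [0] * padding
    positions = list(range(max_len))  # position = rank within the sequence
    return ids, positions, mask

vocab = {"<pad>": 0, "<cls>": 1, "<rna>": 2, "ACTB": 3, "CD3E": 4, "GAPDH": 5}
ids, positions, mask = build_input(["GAPDH", "ACTB", "CD3E"], vocab, max_len=7)
print(ids)        # [1, 2, 5, 3, 4, 0, 0]
print(positions)  # [0, 1, 2, 3, 4, 5, 6]
print(mask)       # [1, 1, 1, 1, 1, 0, 0]
```

The attention mask lets the model ignore padded positions, so cells with different numbers of expressed genes can share a batch.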

Implications for Model Architecture and Performance

The tokenization strategy directly impacts model performance and interpretability. While some models report robustness to technical biases without incorporating batch-specific tokens, others explicitly include batch information as special tokens to account for technical variation [17]. The resulting latent embeddings from scFMs capture gene-gene and cell-cell relationships, enabling various downstream tasks including cell type annotation, data correction, and simulation of cellular responses to perturbations.

Integrated Experimental Framework

A Combined Protocol for Addressing scRNA-seq Challenges

This section outlines an integrated experimental protocol that simultaneously addresses sparsity, high dimensionality, and the non-sequential nature of scRNA-seq data within the context of scFM development.

Step 1: Data Preprocessing and Sparsity Management

- Begin with quality control to remove low-quality cells and genes.

- Apply L2 normalization following log normalization to prevent signal distortion caused by variations in sequencing depth [14]. This step ensures that cell vector lengths are uniform, addressing artifacts that can arise from differences in total gene counts.

- Consider binarization for extremely sparse datasets or when computational efficiency is paramount, particularly for large-scale studies with >100,000 cells [13].

Step 2: Automated Dimensionality Reduction

- Implement RMT-based noise filtering to automatically determine signal dimensions without subjective user input [14].

- Calculate the cell similarity matrix from normalized data and perform eigenvalue decomposition.

- Fit eigenvalues to the Marchenko-Pastur distribution to distinguish biological signals from random noise, using the Tracy-Widom distribution to establish significance thresholds.

- Apply a signal robustness test through binary sparse perturbation to remove low-quality signals caused by dropouts.
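The core of the RMT-based filtering in Step 2 can be sketched as a comparison of eigenvalues against the upper edge of the Marchenko-Pastur distribution. The example below is a simplified illustration under stated assumptions: it uses the gene-gene covariance of z-scored data (which shares its non-zero eigenvalues with the cell-cell similarity matrix), it omits the Tracy-Widom correction and the robustness test, and the synthetic data and function name `mp_signal_count` are invented here.

```python
import numpy as np

def mp_signal_count(X: np.ndarray):
    """Count covariance eigenvalues above the Marchenko-Pastur upper edge.

    X is cells x genes. For pure unit-variance noise, eigenvalues of the
    sample covariance concentrate below (1 + sqrt(p / n))**2.
    """
    n, p = X.shape
    Z = (X - X.mean(axis=0)) / X.std(axis=0)  # z-score each gene
    eigvals = np.linalg.eigvalsh(Z.T @ Z / n)[::-1]  # descending order
    upper_edge = (1.0 + np.sqrt(p / n)) ** 2
    return int((eigvals > upper_edge).sum()), eigvals, upper_edge

# Synthetic data: 400 cells x 50 genes of noise plus one planted group signal.
rng = np.random.default_rng(0)
X = rng.normal(size=(400, 50))
X[:200, :10] += 3.0  # half of the cells over-express 10 genes

n_signal, eigvals, edge = mp_signal_count(X)
print(f"MP upper edge = {edge:.3f}, largest eigenvalue = {eigvals[0]:.3f}")
print(f"eigenvalues above the edge: {n_signal}")
```

Because finite-sample noise eigenvalues can slightly exceed the asymptotic edge, a production pipeline would add the Tracy-Widom threshold mentioned in the step above rather than rely on the raw edge.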

Step 3: Tokenization for Foundation Models

- For scFM development, select an appropriate tokenization strategy based on data characteristics and analytical goals.

- For cell-type identification tasks, expression-based ranking often provides effective sequencing.

- For multi-omic integration, incorporate modality-specific tokens and consider metadata enrichment.

- Convert tokens to embedding vectors with positional encoding that reflects the chosen sequencing strategy.
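The last item in Step 3 can be sketched as an embedding lookup plus a positional encoding. The example uses the standard sinusoidal encoding from the original transformer as a stand-in; the random embedding table, the dimensions, and the token ids are assumptions, and individual scFMs may instead learn positional or rank embeddings.

```python
import numpy as np

def sinusoidal_positions(seq_len: int, dim: int) -> np.ndarray:
    """Standard sinusoidal positional encoding (dim must be even)."""
    pos = np.arange(seq_len)[:, None]
    i = np.arange(0, dim, 2)[None, :]
    angles = pos / np.power(10000.0, i / dim)
    pe = np.zeros((seq_len, dim))
    pe[:, 0::2] = np.sin(angles)
    pe[:, 1::2] = np.cos(angles)
    return pe

rng = np.random.default_rng(0)
vocab_size, dim = 6, 8
embedding_table = rng.normal(scale=0.02, size=(vocab_size, dim))  # untrained

token_ids = np.array([1, 2, 5, 3, 4])  # e.g. special tokens, then ranked genes
pe = sinusoidal_positions(len(token_ids), dim)
inputs = embedding_table[token_ids] + pe  # what the first layer would receive
print(inputs.shape)  # (5, 8)
```

With rank-based tokenization, the position index encodes each gene's expression rank, so the positional term is where expression magnitude enters the model.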

Step 4: Validation and Interpretation

- Validate the resulting representations through downstream tasks including clustering, visualization, and differential expression analysis.

- Compare binary and count-based representations to ensure essential biological signals are preserved.

- Assess embedding quality using silhouette scores, clustering concordance, and biological consistency of identified patterns.
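As one example of the embedding-quality checks listed in Step 4, the following sketch computes the mean silhouette score with Euclidean distances. The toy embedding and labels are invented for illustration; established libraries offer tested implementations that should be preferred on real data.

```python
import numpy as np

def silhouette_score(X: np.ndarray, labels) -> float:
    """Mean silhouette over all points using Euclidean distance."""
    labels = np.asarray(labels)
    D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)
    scores = []
    for i in range(len(X)):
        same = labels == labels[i]
        same[i] = False  # exclude the point itself
        if not same.any():
            scores.append(0.0)  # singleton cluster convention
            continue
        a = D[i, same].mean()  # mean distance within own cluster
        b = min(                # mean distance to the nearest other cluster
            D[i, labels == other].mean()
            for other in np.unique(labels)
            if other != labels[i]
        )
        scores.append((b - a) / max(a, b))
    return float(np.mean(scores))

embedding = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]])
good = silhouette_score(embedding, [0, 0, 1, 1])  # labels match the geometry
bad = silhouette_score(embedding, [0, 1, 0, 1])   # labels cut across it
print(f"good = {good:.2f}, bad = {bad:.2f}")
```

Scores near 1 indicate compact, well-separated clusters, while negative values suggest that labels and embedding geometry disagree.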

Figure: Experimental Workflow for Addressing scRNA-seq Challenges

Essential Research Reagents and Computational Tools

Table 4: Essential Research Reagents and Tools for scRNA-seq Analysis

| Category | Item | Function/Purpose |
|---|---|---|
| Computational Frameworks | Seurat, Scanpy | Comprehensive scRNA-seq analysis platforms providing preprocessing, normalization, and basic dimensionality reduction [14] |
| Dimensionality Reduction | scLENS | Automated dimensionality reduction with RMT-based noise filtering and signal robustness testing [14] |
| Dimensionality Reduction | supCPM | Supervised visualization preserving global structure and cluster variance [16] |
| Binarization Methods | scBFA | Dimensionality reduction specifically designed for binarized scRNA-seq data [13] |
| Foundation Models | scBERT, GeneFormer | Transformer-based models for single-cell data analysis requiring specialized tokenization [17] |
| Data Resources | CZ CELLxGENE, Human Cell Atlas | Curated single-cell data repositories providing standardized datasets for model training and validation [17] |
| Integration Tools | Harmony | Batch effect correction and data integration capable of processing both count and binary representations [13] |

The core challenges of sparsity, high dimensionality, and non-sequential nature in scRNA-seq data represent significant but surmountable obstacles in the development of single-cell foundation models. Strategic approaches including binarization for sparse data, automated dimensionality reduction, and innovative tokenization strategies provide powerful solutions that not only address these challenges but also leverage them to extract meaningful biological insights. As single-cell technologies continue to evolve, producing ever-larger and more complex datasets, the computational frameworks outlined in this guide will become increasingly essential for unlocking the full potential of single-cell genomics in basic research and therapeutic development.

The rapid advancement of single-cell RNA sequencing (scRNA-seq) technologies has fundamentally transformed our ability to listen to the intricate conversations occurring within biological systems. This technological revolution provides an unprecedented view of cellular heterogeneity, enabling researchers to decompose tissues into their constituent cell types and states with remarkable resolution. As the scale and complexity of scRNA-seq datasets grow, the field increasingly borrows conceptual frameworks and computational techniques from other domains dealing with high-dimensional, sequential data. Among the most powerful of these borrowed paradigms is the analogy between natural language and cellular biology, where cells can be viewed as sentences and their constituent genes or genomic features as words [18].

This analogy forms the foundational premise for developing single-cell Foundation Models (scFMs)—large-scale neural networks pre-trained on massive corpora of single-cell data. Just as modern large language models (LLMs) learn the statistical relationships between words in vast text collections, scFMs aim to learn the fundamental "grammar" and "syntax" of cellular identity and function. The process of tokenization, which converts raw genetic features into model-readable numerical representations, stands as the critical first step in this analytical pipeline, directly influencing all downstream tasks from cell type annotation to perturbation response prediction [18]. This whitepaper explores the theoretical underpinnings, methodological considerations, and practical implementations of tokenization strategies for scRNA-seq data within scFM research, providing technical guidance for researchers and drug development professionals working at this interdisciplinary frontier.

The Core Analogy: Deconstructing the Linguistic Framework

Semantic Units in Biological Context

The linguistic analogy for single-cell data transforms our conceptual approach to cellular analysis. In this framework:

- Vocabulary (Genes/Features): The complete set of genes measurable by a platform constitutes the model's vocabulary, typically numbering 20,000-30,000 for full-length scRNA-seq. Each gene represents a discrete semantic unit, analogous to a word in a language.

- Sentences (Cells): Individual cells represent sentences formed from the vocabulary. The expression levels of genes within a cell create a unique "statement" describing that cell's current molecular state.

- Documents (Samples/Tissues): Collections of cells from related biological contexts (e.g., a tissue sample, patient, or experimental condition) form documents comprising multiple cellular "sentences."

- Corpus (Reference Atlases): Large-scale integrated datasets, such as the Human Cell Atlas, serve as the training corpus, containing tens of millions of cellular "sentences" across diverse tissues, donors, and conditions [19].

This structural analogy enables the application of NLP techniques to biological data. However, important distinctions exist: biological "sentences" (cells) lack the explicit sequential ordering of linguistic sentences, and gene-gene relationships form complex, non-linear networks rather than simple linear dependencies.

Tokenization Strategies for Genetic Vocabulary

Tokenization converts the continuous, high-dimensional space of gene expression into discrete tokens suitable for model input. Current approaches in scFM research include:

Table 1: Tokenization Strategies for scRNA-seq Data in scFM Development

| Strategy | Mechanism | Advantages | Limitations | Example Applications |
|---|---|---|---|---|
| Gene-based Tokenization | Each gene represents a unique token ID | Simple implementation, preserves gene identity | Fixed vocabulary size, poor handling of novel genes | scGPT, scFoundation [18] |
| Binned Expression Tokenization | Expression values discretized into bins (e.g., low/medium/high) | Captures expression magnitude, ordinal relationships | Increased sequence length, bin boundaries arbitrary | scBERT |
| Hybrid Tokenization | Combines gene ID + expression level tokens | Rich representation of both identity and quantity | Complex implementation, longer sequences | - |
| Feature-based Tokenization | Uses highly variable genes (HVGs) as vocabulary | Reduced dimensionality, computational efficiency | Potential information loss, selection method critical | Seurat, Scanpy [19] |

The choice of tokenization strategy profoundly impacts model performance. Gene-based tokenization maintains biological interpretability but faces challenges with the curse of dimensionality. Conversely, feature selection methods (e.g., highly variable gene selection) reduce computational burden but may discard biologically relevant information if not carefully implemented [19].
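To illustrate binned and hybrid tokenization from the table above, the sketch below discretizes the non-zero expression values of one cell into quantile bins and pairs each gene with its bin. The quantile scheme, the bin count, the gene names, and the function name `bin_expression` are assumptions for illustration; published models define their own binning rules.

```python
import numpy as np

def bin_expression(values, n_bins: int) -> np.ndarray:
    """Assign bin 0 to zeros and quantile bins 1..n_bins to non-zero values."""
    values = np.asarray(values, dtype=float)
    bins = np.zeros(len(values), dtype=int)
    nonzero = values > 0
    if nonzero.any():
        # Interior quantile edges computed per cell from non-zero values only.
        quantiles = np.linspace(0, 1, n_bins + 1)[1:-1]
        edges = np.quantile(values[nonzero], quantiles)
        bins[nonzero] = np.digitize(values[nonzero], edges, right=True) + 1
    return bins

genes = ["G0", "G1", "G2", "G3", "G4", "G5", "G6"]  # placeholder gene names
cell = [0, 1, 2, 3, 4, 5, 6]

bins = bin_expression(cell, n_bins=3)
hybrid_tokens = [(g, int(b)) for g, b in zip(genes, bins) if b > 0]
print(bins.tolist())  # [0, 1, 1, 2, 2, 3, 3]
print(hybrid_tokens)
```

Computing bin edges per cell makes the tokens robust to differences in sequencing depth, at the cost of losing absolute expression scale.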

Benchmarking Tokenization: Insights from Feature Selection Studies

The Critical Role of Feature Selection

Feature selection serves as the biological equivalent of vocabulary pruning in NLP, identifying the most informative genes for downstream analysis. Recent benchmarking studies demonstrate that feature selection methods significantly impact the performance of scRNA-seq data integration and query mapping—key tasks for scFM development [19].

Comprehensive evaluations reveal that using highly variable genes (HVGs) consistently produces high-quality integrations, validating common practice in the field. However, the specific implementation details—including the number of features selected, batch-aware selection criteria, and integration method interactions—require careful consideration [19]. Studies assessing over 20 feature selection methods using metrics spanning five performance categories (batch effect removal, biological conservation, query mapping, label transfer, and unseen population detection) provide quantitative frameworks for evaluating tokenization strategies.

Quantitative Performance Comparisons

Table 2: Benchmarking Metrics for Feature Selection and Tokenization Strategies

| Metric Category | Key Metrics | High-Performing Approaches | Performance Range |
|---|---|---|---|
| Integration (Batch Correction) | Batch PCR, CMS, iLISI | Highly variable features (2000-3000 genes) | 30-50% improvement over random features [19] |
| Integration (Biology Conservation) | Isolated Label F1, bNMI, cLISI | Batch-aware HVG selection | 25-40% better biological preservation [19] |
| Query Mapping | Cell Distance, Label Distance, mLISI | Lineage-specific feature selection | Mapping accuracy: 60-85% [19] |
| Label Transfer | F1 (Macro/Micro/Rarity) | Integration-specific feature selection | F1 scores: 0.7-0.9 [19] |
| Unseen Population Detection | Milo, Unseen Cell Distance | Larger feature sets (3000-5000 genes) | Detection precision: 45-75% [19] |

These benchmarks reveal several critical insights for tokenization in scFMs. First, the number of selected features significantly impacts performance, with 2,000-3,000 features often representing a sweet spot between information content and noise reduction. Second, batch-aware feature selection methods—which account for technical variation across datasets—consistently outperform batch-agnostic approaches. Third, the optimal tokenization strategy depends on the specific downstream task, suggesting that scFMs may benefit from task-specific tokenization approaches [19].

Experimental Protocols for Tokenization Benchmarking

Data Acquisition and Preprocessing

Robust evaluation of tokenization strategies requires standardized data processing pipelines. The following protocol outlines the essential steps for preparing scRNA-seq data for tokenization benchmarking:

- Data Collection: Obtain raw count matrices from public repositories (e.g., GEO, ArrayExpress) or process FASTQ files using established pipelines. For 10X Genomics data, use Cell Ranger (cellranger count) or alternative pseudo-alignment methods (e.g., alevin, kallisto-bustools) [20].

- Quality Control: Filter cells based on quality metrics using tools like scuttle.

- Normalization: Perform library size normalization and variance stabilization. The scran package provides effective methods for multi-batch data.

- Data Integration: For multi-sample datasets, apply integration methods such as scVI, Harmony, or Seurat's CCA to remove batch effects while preserving biological variation [19] [21].
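The quality control and normalization steps of this protocol can be sketched generically as below. This is a NumPy illustration of the underlying arithmetic only; it does not reproduce the scuttle or scran APIs, and the thresholds, toy counts, and function names `qc_mask` and `library_size_normalize` are assumptions.

```python
import numpy as np

def qc_mask(counts: np.ndarray, min_genes: int, min_counts: int) -> np.ndarray:
    """Keep cells with enough detected genes and enough total counts."""
    genes_detected = (counts > 0).sum(axis=1)
    total_counts = counts.sum(axis=1)
    return (genes_detected >= min_genes) & (total_counts >= min_counts)

def library_size_normalize(counts: np.ndarray) -> np.ndarray:
    """Divide by size factors (library size / mean library size), then log1p."""
    library = counts.sum(axis=1, keepdims=True).astype(float)
    size_factors = library / library.mean()
    return np.log1p(counts / size_factors)

counts = np.array([
    [5, 0, 3, 2],
    [0, 0, 1, 0],  # low-quality cell: one gene, one count
    [8, 8, 0, 4],
])

keep = qc_mask(counts, min_genes=2, min_counts=5)
normalized = library_size_normalize(counts[keep])
print(keep.tolist())  # [True, False, True]
print(normalized.round(2))
```

Thresholds in practice are chosen per dataset, often from the distribution of these metrics rather than fixed cutoffs.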

Feature Selection Methodologies

Different feature selection approaches directly correspond to alternative tokenization strategies for scFMs:

- Highly Variable Gene Selection: Identify genes with higher-than-expected variability across cells using the Seurat method (as implemented in Scanpy) or the scran method.

- Batch-Aware Feature Selection: Extend HVG selection to multi-batch datasets by selecting features that are consistently variable within individual batches, rather than variable only in the pooled data.

- Lineage-Specific Feature Selection: Identify features associated with specific differentiation trajectories using pseudotime methods (e.g., Slingshot, Monocle3) or marker gene detection.
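The difference between plain and batch-aware HVG selection can be sketched with a simple dispersion (variance-to-mean) criterion. This is an illustrative simplification, not the Seurat or scran algorithm: the toy matrix, the voting scheme, and the function names are assumptions. Gene 2 below differs only between batches, so pooled selection picks it, while batch-aware selection prefers gene 1, which varies within every batch.

```python
import numpy as np

def dispersion(X: np.ndarray) -> np.ndarray:
    """Variance-to-mean ratio per gene (0 where the mean is 0)."""
    mean = X.mean(axis=0)
    var = X.var(axis=0)
    return np.divide(var, mean, out=np.zeros_like(mean, dtype=float), where=mean > 0)

def top_hvgs(X: np.ndarray, n_top: int) -> np.ndarray:
    """Indices of the n_top genes with the highest dispersion."""
    return np.argsort(-dispersion(X), kind="stable")[:n_top]

def batch_aware_hvgs(X: np.ndarray, batches, n_top: int) -> np.ndarray:
    """Rank genes by how many batches select them, then by pooled dispersion."""
    batches = np.asarray(batches)
    votes = np.zeros(X.shape[1], dtype=int)
    for b in np.unique(batches):
        votes[top_hvgs(X[batches == b], n_top)] += 1
    order = np.lexsort((-dispersion(X), -votes))  # last key is the primary key
    return order[:n_top]

X = np.array([
    [1, 0, 1], [1, 4, 1], [1, 0, 1],     # batch A
    [1, 4, 21], [1, 0, 21], [1, 4, 21],  # batch B
], dtype=float)
batches = ["A", "A", "A", "B", "B", "B"]

pooled = top_hvgs(X, n_top=1)
aware = batch_aware_hvgs(X, batches, n_top=1)
print(pooled.tolist(), aware.tolist())  # [2] [1]
```

The example shows why batch-agnostic selection can fill the vocabulary with genes that mainly encode technical differences between datasets.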

Performance Evaluation Framework

Comprehensive benchmarking requires multiple metric categories assessed through the following protocol:

- Integration Quality:

- Calculate batch correction metrics (Batch ASW, iLISI, Batch PCR) using the scib package [19].

- Evaluate biological conservation using metrics (cLISI, ARI, NMI) on known cell type labels.

- Query Mapping Accuracy:

- Split datasets into reference and query subsets.

- Map query cells to reference using integration method.

- Calculate mapping confidence scores (kNN purity, mapping entropy).

- Downstream Task Performance:

- Assess cell type classification accuracy (F1-score, balanced accuracy).

- Evaluate rare cell population detection (precision-recall curves).

- Test differential expression consistency across batches.
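For the classification metrics in this protocol, the following sketch computes macro-averaged F1 from scratch. The cell type labels and predictions are invented for illustration. Macro averaging gives each cell type equal weight, so a missed rare population lowers the score far more than it lowers plain accuracy.

```python
def macro_f1(y_true, y_pred) -> float:
    """Unweighted mean of per-class F1 scores."""
    labels = sorted(set(y_true) | set(y_pred))
    f1_scores = []
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        f1_scores.append(2 * tp / denom if denom else 0.0)
    return sum(f1_scores) / len(f1_scores)

y_true = ["T", "T", "T", "B", "B", "NK"]
y_pred = ["T", "T", "B", "B", "B", "T"]  # the single NK cell is missed

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
score = macro_f1(y_true, y_pred)
print(f"accuracy = {accuracy:.3f}, macro F1 = {score:.3f}")
```

Here accuracy is about 0.667 while macro F1 is about 0.489, because the missed NK class contributes an F1 of zero.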

Visualizing Tokenization Workflows in scFM Development

The following diagram illustrates the complete tokenization and modeling pipeline for single-cell foundation models, highlighting the critical role of feature selection as the biological equivalent of vocabulary construction in NLP.

Figure: Tokenization Workflow for Single-Cell Foundation Models

This workflow highlights how raw single-cell data undergoes progressive transformation into tokenized representations suitable for foundation model training. The feature selection/tokenization step serves as the critical bridge between biological measurements and computational modeling, directly determining which aspects of cellular identity are preserved for downstream analysis.

Successful implementation of tokenization strategies for scFM development requires both wet-lab reagents for data generation and computational tools for analysis. The following table details essential resources in the researcher's toolkit.

Table 3: Research Reagent Solutions for scRNA-seq and scFM Development

| Category | Item | Specification/Function | Application in scFM Development |
|---|---|---|---|
| Wet-Lab Reagents | 10X Genomics Chromium Chip | Microfluidic device for single-cell partitioning | High-throughput single-cell library preparation for training data generation |
| Wet-Lab Reagents | Reverse Transcriptase Master Mix | Converts RNA to cDNA with cell barcoding | Creates uniquely labeled transcriptomes for cell-specific "sentence" construction |
| Wet-Lab Reagents | Nucleotide Unique Molecular Identifiers (UMIs) | Molecular barcodes for transcript counting | Enables accurate digital gene expression quantification for token values |
| Wet-Lab Reagents | Poly(dT) Magnetic Beads | mRNA capture via poly-A tail selection | Isolates protein-coding genes for vocabulary definition |
| Computational Tools | Cell Ranger (10X) | Processing pipeline for droplet-based data | Generates initial count matrices from raw sequencing data [20] |
| Computational Tools | Scanpy/Seurat | Python/R toolkits for single-cell analysis | Implements feature selection, normalization, and preliminary analysis [19] |
| Computational Tools | scVI/scANVI | Deep generative models for single-cell data | Performs batch correction and generates integrated embeddings [19] [21] |
| Computational Tools | scGPT/scFoundation | Foundation models for single-cell biology | Implements transformer architectures pretrained on massive single-cell datasets [18] |
| Reference Data | Human Cell Atlas | Comprehensive reference of all human cells | Provides training "corpus" for generalizable scFMs [19] |
| Reference Data | Tabula Sapiens/Tabula Muris | Cross-species cell atlases | Enables comparative biology and cross-species model transfer |

This toolkit enables the complete pipeline from experimental data generation through computational analysis and model development. The wet-lab reagents ensure high-quality input data, while the computational tools implement the tokenization strategies and model architectures that bring the biological analogy to life.

Emerging Challenges and Opportunities

As single-cell foundation models evolve, several frontiers in tokenization strategy demand attention:

- Multi-modal Tokenization: Current approaches primarily focus on gene expression data, but emerging multi-omics technologies (simultaneous measurement of gene expression, chromatin accessibility, protein abundance, etc.) require integrated tokenization schemes that can represent diverse data types within a unified embedding space.

- Dynamic Vocabulary Adaptation: Fixed vocabularies based on static gene sets struggle to incorporate newly discovered genes or handle cross-species applications. Future tokenization approaches may benefit from hierarchical or compositional representations that can adapt to expanding biological knowledge.

- Spatial Context Integration: Spatial transcriptomics technologies add geographical coordinates to gene expression measurements, creating an additional dimension beyond the "sentence" analogy that requires novel tokenization strategies incorporating spatial relationships.

- Perturbation Modeling: Current benchmarking reveals limitations in predicting cellular responses to perturbations [18]. Improved tokenization strategies that better capture gene regulatory relationships may enhance perturbation prediction accuracy.

The analogy between biological systems and natural language—cells as sentences, genes as words—provides a powerful conceptual framework and practical methodology for advancing single-cell computational biology. Tokenization strategies derived from this analogy serve as the critical bridge connecting raw biological measurements to sophisticated foundation models capable of decoding cellular identity, function, and response.

Benchmark studies consistently demonstrate that feature selection methods significantly impact downstream analysis performance, with highly variable gene selection emerging as a robust approach for biological tokenization [19]. However, optimal implementation requires careful consideration of dataset-specific factors including batch effects, cellular heterogeneity, and analytical objectives.

As the field progresses toward increasingly comprehensive single-cell atlases and more sophisticated foundation models, the development of refined tokenization strategies will remain essential for maximizing model performance and biological insight. By thoughtfully applying and extending the linguistic analogy, researchers can continue to advance our ability to "read" and interpret the fundamental language of biology, with profound implications for basic research and therapeutic development.

Within the research on single-cell foundation models (scFMs), tokenization strategies form the critical bridge that transforms raw single-cell RNA-sequencing (scRNA-seq) data into a structured input that deep learning models can process. The concept is borrowed directly from Natural Language Processing (NLP), where it has been a foundational step for transformer-based models. In NLP, tokenization converts unstructured text into discrete units (tokens), enabling models like BERT to learn complex linguistic patterns. Similarly, in single-cell biology, tokenization aims to convert gene expression profiles into a 'language' that models can understand, treating cells as documents and genes as words to decipher the underlying biological grammar [1] [17].

However, the application of NLP-style tokenization to biological data is not a simple one-to-one mapping. ScRNA-seq data possesses unique characteristics—such as its non-sequential nature and high-dimensional sparsity—that create significant challenges and necessitate method adaptations. This guide provides an in-depth technical examination of the parallels and critical differences between tokenization in NLP and its application in scFMs, framing the discussion within the broader thesis of developing effective tokenization strategies for scRNA-seq data. It is intended for researchers, scientists, and drug development professionals who need to understand the core computational techniques driving innovations in single-cell analysis.

Fundamental Parallels with NLP Tokenization

The development of tokenization methods for scFMs draws heavily from established NLP principles. The core analogy treats a single cell as a sentence or document and its constituent genes as individual words. This conceptual parallel allows model architects to leverage the powerful transformer architecture for biological discovery [1] [17].

Table 1: Core Conceptual Parallels Between NLP and scFM Tokenization

| Aspect | NLP Tokenization | scFM Tokenization | Functional Purpose |
|---|---|---|---|
| Basic Unit | Words/Subwords | Genes/Genomic Features | Define fundamental semantic building blocks for the model [1]. |
| Composite Structure | Sentences/Documents | Individual Cells | Create a structured context from individual units for pattern learning [1] [17]. |
| Model Architecture | Transformer | Transformer (BERT, GPT variants) | Process token sequences to capture long-range dependencies and complex relationships [8] [22]. |
| Pretraining Task | Masked Language Modeling | Masked Gene/Token Modeling | Learn robust, context-aware representations through self-supervised learning [8] [22]. |

A key parallel lies in the self-supervised pretraining objective. Inspired by masked language modeling in NLP, where random words in a sentence are masked and predicted, scFMs like scBERT and scGPT employ a mask-then-reconstruct proxy task. By masking a portion of the input gene tokens and training the model to recover them based on the remaining context, the model learns the complex gene-gene co-expression relationships and underlying regulatory grammar from vast amounts of unlabeled scRNA-seq data [8] [22]. This process enables the model to develop a general understanding of cellular biology before being fine-tuned for specific downstream tasks like cell type annotation or perturbation response prediction.
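The mask-then-reconstruct idea can be illustrated with a minimal sketch. It is not the scBERT or scGPT implementation: the `<mask>` token name, the `mask_tokens` helper, the masking fraction, and the example gene list are all assumptions chosen for illustration. The function hides a random subset of gene tokens and records the originals, which a model would then be trained to recover.

```python
import random

MASK_TOKEN = "<mask>"  # hypothetical special token name


def mask_tokens(tokens, mask_fraction=0.15, seed=0):
    """Hide a random subset of tokens.

    Returns (masked_tokens, targets), where targets maps each masked
    position to the original token the model would have to reconstruct.
    """
    rng = random.Random(seed)
    # Mask at least one token when the sequence is non-empty.
    n_mask = min(len(tokens), max(1, round(len(tokens) * mask_fraction)))
    positions = rng.sample(range(len(tokens)), n_mask)
    masked = list(tokens)
    targets = {}
    for pos in positions:
        targets[pos] = masked[pos]
        masked[pos] = MASK_TOKEN
    return masked, targets


# Illustrative gene "sentence" for one cell (the gene choice is arbitrary).
cell = ["MALAT1", "CD3E", "IL7R", "CCR7", "LTB", "CD8A", "GZMK", "NKG7"]
masked, targets = mask_tokens(cell, mask_fraction=0.25)
print(masked)
print(targets)
```

During pretraining, the loss would be computed only at the positions stored in `targets`, so the model has to infer the hidden genes from the co-expressed genes that remain visible.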

Critical Differences and Technical Challenges

Despite the conceptual parallels, fundamental differences between natural language and genomic data necessitate significant adaptations in tokenization strategies.

The Non-Sequential Nature of Gene Expression

A paramount difference is the lack of a natural sequence in gene expression data. In a sentence, word order is semantically critical; genes within a cell, however, have no inherent biological ordering. This presents a fundamental challenge for transformer models, which are typically applied to ordered token sequences. To overcome this, scFMs impose an artificial sequence. A common strategy, used by models like Geneformer, is to rank genes by their expression value within each cell, effectively creating a "sentence" of genes ordered from most to least highly expressed [1] [17]. Other approaches, such as those used by scBERT and scGPT, partition expression values into discrete bins. This imposed structure, while computationally necessary, is biologically arbitrary and represents a key divergence from NLP.
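The two ordering strategies described above can be sketched in a few lines. This is a simplified illustration, not the code of any published model: the helper names `rank_tokenize` and `bin_tokenize`, the tie-breaking rule, and the equal-width binning scheme are assumptions, and real models apply their own normalization before either step.

```python
def rank_tokenize(expression):
    """Order expressed genes from highest to lowest expression.

    Ties are broken alphabetically so the output is deterministic.
    """
    expressed = [(gene, value) for gene, value in expression.items() if value > 0]
    expressed.sort(key=lambda item: (-item[1], item[0]))
    return [gene for gene, _ in expressed]


def bin_tokenize(expression, n_bins=5):
    """Map each gene to an integer bin.

    Zeros go to bin 0; nonzero values are split into n_bins equal-width
    bins (1..n_bins) over this cell's nonzero range.
    """
    nonzero = [value for value in expression.values() if value > 0]
    if not nonzero:
        return {gene: 0 for gene in expression}
    lo, hi = min(nonzero), max(nonzero)
    width = (hi - lo) / n_bins
    if width == 0:
        width = 1.0  # all nonzero values identical: everything lands in bin 1
    bins = {}
    for gene, value in expression.items():
        if value <= 0:
            bins[gene] = 0
        else:
            bins[gene] = min(n_bins, int((value - lo) / width) + 1)
    return bins


# Illustrative expression values for one cell (made-up numbers).
cell = {"MALAT1": 50.0, "CD3E": 12.0, "IL7R": 12.0, "GAPDH": 30.0, "INS": 0.0}
print(rank_tokenize(cell))           # gene order becomes the token sequence
print(bin_tokenize(cell, n_bins=4))  # each gene keeps its identity plus a bin id
```

Ranking discards absolute magnitudes and keeps only relative order, whereas binning keeps a coarse magnitude for every gene but does not by itself define a sequence order.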

High Dimensionality and Sparsity

ScRNA-seq data is characterized by its extremely high dimensionality (tens of thousands of genes) and pronounced sparsity, largely due to dropout events in which transcripts that are present in the cell go undetected and are recorded as zeros. This differs sharply from typical NLP inputs, where a sentence or document contains far fewer tokens and has no comparable excess of zeros. Naively representing every gene as a token produces sequences of tens of thousands of tokens per cell, and because the cost of self-attention grows quadratically with sequence length, this quickly becomes computationally intractable and complicates model learning. To address this, the field has developed specialized techniques. The scSFUT model, for instance, introduces a gene embedding algorithm that uses sequential tokenization with a fixed window size and 1D-convolution. This method segments high-dimensional cell samples into information-dense sub-vectors, expanding the attention receptive field while maintaining manageable computational loads [8]. This approach seeks to learn directly from the full set of genes without relying on pre-filtering steps like Highly Variable Gene (HVG) selection, which can introduce bias and lead to biological information loss [8].
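The windowing idea can be sketched as follows. This is not the scSFUT implementation; it only illustrates the general principle of fixed-window segmentation followed by a shared linear projection, which is equivalent to a 1D convolution whose stride equals its kernel size with a single output channel. The function names, the zero padding, and the averaging kernel are assumptions.

```python
def window_tokens(expression_vector, window_size, pad_value=0.0):
    """Split a full-length expression vector into consecutive fixed-size
    sub-vectors, padding the final window if needed."""
    padded = list(expression_vector)
    remainder = len(padded) % window_size
    if remainder:
        padded += [pad_value] * (window_size - remainder)
    return [padded[i:i + window_size] for i in range(0, len(padded), window_size)]


def project_windows(windows, kernel, bias=0.0):
    """Apply one shared kernel to every window.

    With stride equal to the kernel size this matches a 1D convolution
    with a single output channel.
    """
    return [sum(w * x for w, x in zip(kernel, window)) + bias for window in windows]


# Toy sparse expression vector over 10 genes (made-up values).
vector = [0.0, 0.0, 3.0, 1.0, 0.0, 5.0, 0.0, 0.0, 2.0, 0.0]
windows = window_tokens(vector, window_size=4)
features = project_windows(windows, kernel=[0.25] * 4)  # here: the window mean
print(len(windows), features)
```

The benefit is in sequence length: under these assumptions, a cell with 20,000 genes and a window of 200 yields 100 tokens instead of 20,000, which reduces the quadratic attention cost substantially. A trained model would learn the kernel weights and use many output channels rather than a fixed average.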

Incorporating Biological Metadata

The "vocabulary" of scFMs is more complex than in NLP. While a word token in NLP is a discrete entity, a gene token in an scFM often needs to encapsulate more than just an identifier. To enrich the biological context and improve model generalization, advanced tokenization schemes incorporate special tokens for metadata. This can include tokens for cell-level context (e.g., tissue of origin, donor), experimental batch information to correct for technical artifacts, and even multi-omic modalities when integrating data from assays like scATAC-seq [1] [17]. Furthermore, some models explore incorporating gene metadata, such as Gene Ontology terms or chromosomal location, directly into the token embeddings to provide a richer prior of biological function [1].
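One simple way to picture metadata tokens is as special symbols prepended to the gene sequence. The sketch below is purely illustrative: the token formats (`<cls>`, `<tissue:...>`, `<batch:...>`, `<modality:...>`) and the `build_input` helper are hypothetical and do not correspond to the conventions of any specific model.

```python
def build_input(gene_tokens, tissue=None, batch=None, modality=None):
    """Prepend optional cell-level context tokens to a gene token sequence."""
    special = ["<cls>"]  # hypothetical token that summarizes the whole cell
    if tissue is not None:
        special.append(f"<tissue:{tissue}>")
    if batch is not None:
        special.append(f"<batch:{batch}>")
    if modality is not None:
        special.append(f"<modality:{modality}>")
    return special + list(gene_tokens)


tokens = build_input(["MALAT1", "CD3E"], tissue="blood", batch="donor1_run2")
print(tokens)
```

Because the context tokens share the sequence with the gene tokens, attention layers can condition every gene representation on them. Gene-level metadata, by contrast, would typically be added to each gene's embedding rather than inserted as separate tokens.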

Table 2: Key Technical Challenges in scFM Tokenization vs. NLP

| Technical Challenge | Manifestation in NLP | Manifestation in scFMs | Proposed/Current Solutions |
|---|---|---|---|
| Input Sequence | Natural word order. | No inherent gene order. | Impose order by expression value ranking or binning [1] [17]. |
| Input Sparsity | Dense token embeddings. | Highly sparse expression vectors (many zeros). | Specialized embedding layers; modeling techniques robust to dropouts [8]. |
| Data Structure | Sequential, contextual. | Non-sequential, co-expressive. | Use of attention mechanisms to model gene-gene interactions without relying on position [8] [22]. |
| Scalability | Large but finite vocabulary. | Very high dimensionality (~20-30k genes/cell). | Gene embedding with compression (e.g., scSFUT's windowing); HVG selection (common but lossy) [8]. |
| Generalization | Across dialects, languages. | Across species, tissues, platforms. | Incorporation of species/tissue tokens; training on massively diverse datasets (e.g., CELLxGENE) [1]. |

Figure 1: A comparative workflow of tokenization in NLP versus single-cell foundation models, highlighting the key additional steps required for biological data.

Experimental Protocols and Methodologies

Evaluating the efficacy of a tokenization strategy is integral to scFM development. The following section outlines standard experimental protocols for benchmarking these methods.

Benchmarking Cell Type Annotation

Objective: To assess how effectively a tokenization scheme enables an scFM to accurately annotate cell types in a hold-out dataset.

Protocol:

- Pretraining: A foundation model (e.g., scBERT, scSFUT) is pretrained on a large, diverse corpus of scRNA-seq data (e.g., from CELLxGENE or PanglaoDB) using a self-supervised task like masked gene reconstruction [8] [22].

- Fine-tuning: The pretrained model is fine-tuned on a smaller, labeled reference dataset where cell types are known. This step adapts the general model to the specific annotation task.

- Validation: The fine-tuned model is used to predict cell types on a completely unseen test dataset. Performance is quantified using metrics such as Accuracy, Macro F1-score (which is crucial for imbalanced cell type distributions), and Cohen's Kappa [8] [22].

- Comparative Analysis: Performance is compared against baseline methods, which may include other scFMs with different tokenization approaches, autoencoder-based models (e.g., ACTINN), and traditional supervised learning methods.
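The three annotation metrics named in the validation step can be computed directly from true and predicted labels. The sketch below uses only the Python standard library so that the definitions are visible; the label lists are made-up data, and in practice a library such as scikit-learn would normally be used instead.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of cells whose predicted type matches the true type."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1, so rare cell types count equally."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


def cohen_kappa(y_true, y_pred):
    """Agreement between prediction and truth, corrected for chance agreement."""
    n = len(y_true)
    observed = accuracy(y_true, y_pred)
    true_counts, pred_counts = Counter(y_true), Counter(y_pred)
    expected = sum(true_counts[l] * pred_counts[l] for l in true_counts) / n ** 2
    return (observed - expected) / (1 - expected) if expected != 1 else 1.0


# Made-up labels for ten cells with an imbalanced type distribution.
y_true = ["T", "T", "T", "T", "T", "T", "B", "B", "NK", "NK"]
y_pred = ["T", "T", "T", "T", "T", "B", "B", "B", "NK", "T"]
print(accuracy(y_true, y_pred), macro_f1(y_true, y_pred), cohen_kappa(y_true, y_pred))
```

In this toy example accuracy is 0.8 while macro F1 is lower, because the single misclassified NK cell weighs heavily on the score of that rare class. This is why macro F1 is preferred for imbalanced cell type distributions.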

Cross-Species and Cross-Tissue Generalization

Objective: To evaluate the robustness and generalizability of the tokenization and model when applied to data from different species or tissues not seen during training.

Protocol:

- Model Training: An scFM is pretrained and/or fine-tuned on datasets primarily from one species (e.g., human).

- Zero-Shot or Few-Shot Transfer: The model is directly applied (zero-shot) or minimally adapted (few-shot) to datasets from a different species (e.g., mouse) or a novel tissue.

- Evaluation: Annotation accuracy or the quality of learned cell embeddings is measured on the out-of-distribution data. Successful tokenization strategies will enable the model to align the latent representations of biologically similar cell types across the technical and biological divides [8]. Models like scSFUT specifically highlight performance on cross-species datasets as a key benchmark [8].

In Silico Perturbation Prediction

Objective: To test the model's capacity to predict cellular responses to genetic or chemical perturbations, a task of high value for drug discovery.

Protocol:

- Baseline Modeling: A model is fine-tuned to represent a specific cellular state (e.g., a disease state like RUNX1-familial platelet disorder) [23].

- Open-Loop Prediction: The model performs in silico perturbation (ISP) by manipulating input tokens (e.g., setting a gene's expression to zero to simulate knockout) and predicting the resulting cell state.

- Closed-Loop Validation: As proposed in recent work, the model's predictions are experimentally validated, and the results are fed back into the model for further fine-tuning. This "closes the loop," dramatically improving prediction accuracy for subsequent rounds, as evidenced by a three-fold increase in positive predictive value [23].

- Metric Analysis: Predictions are compared against experimental validation data (e.g., from Perturb-seq) using metrics like Positive Predictive Value (PPV), Negative Predictive Value (NPV), Sensitivity, and Specificity [23].
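The metrics in the final step follow from a standard confusion matrix that compares the genes the model predicted as hits with those confirmed experimentally. The sketch below shows the arithmetic under assumed inputs: the gene identifiers and hit sets are placeholders, not data from the cited study, and the helper names are hypothetical.

```python
def confusion_counts(predicted_hits, validated_hits, all_candidates):
    """Count TP, FP, FN, TN over a set of candidate perturbations."""
    predicted, validated = set(predicted_hits), set(validated_hits)
    tp = len(predicted & validated)   # predicted and confirmed
    fp = len(predicted - validated)   # predicted but not confirmed
    fn = len(validated - predicted)   # confirmed but missed by the model
    tn = len(set(all_candidates) - predicted - validated)
    return tp, fp, fn, tn


def perturbation_metrics(tp, fp, fn, tn):
    """Return PPV, NPV, sensitivity, and specificity (NaN if undefined)."""
    def ratio(num, den):
        return num / den if den else float("nan")

    return {
        "ppv": ratio(tp, tp + fp),
        "npv": ratio(tn, tn + fn),
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
    }


# Placeholder candidate genes and hit sets.
candidates = [f"GENE{i}" for i in range(1, 11)]
predicted = ["GENE1", "GENE2", "GENE3", "GENE4"]
validated = ["GENE1", "GENE2", "GENE5"]
counts = confusion_counts(predicted, validated, candidates)
print(counts, perturbation_metrics(*counts))
```

PPV is the most directly actionable of the four for experimental follow-up, since it estimates what fraction of the model's predicted hits would be confirmed when tested.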

Figure 2: A core experimental workflow for developing and evaluating single-cell foundation models, showing key downstream tasks and their associated evaluation metrics.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and data resources that are essential for research and experimentation in scFM tokenization.

Table 3: Essential Research Reagents and Resources for scFM Development

| Resource Name | Type | Primary Function in Research | Relevance to Tokenization |
|---|---|---|---|
| CZ CELLxGENE [1] [17] | Data Platform | Provides unified access to standardized, annotated single-cell datasets (>100M cells). | Source of diverse, high-quality data for pretraining and benchmarking tokenization strategies. |
| PanglaoDB [1] [22] | Curated Database | A collection of annotated scRNA-seq data with marker genes. | Used as a training corpus and for evaluating cell type annotation performance. |
| Scanpy [8] | Computational Toolkit | A Python library for pre-processing and analyzing single-cell data. | Used for essential preprocessing steps (QC, normalization) before tokenization. |
| spaCy [24] | NLP Library | A library for advanced natural language processing in Python. | Provides NER models (e.g., the scispaCy model en_ner_craft_md) for extracting biological entities from text, aiding in automated marker gene curation. |
| scGPT / scBERT [8] [22] | Foundation Models | Open-source, pretrained scFMs for various downstream tasks. | Serve as reference architectures and baselines for comparing novel tokenization methods. |
| Gene Vocabulary [24] | Feature List | A predefined list of human/mouse protein-coding genes (e.g., from Cell Ranger). | Acts as the standard "dictionary" for gene tokenization, enabling consistent input representation across datasets. |

Tokenization is the foundational step that enables single-cell foundation models to "read" the language of biology, drawing powerful inspiration from NLP but requiring significant innovation to address the unique challenges of genomic data. The parallels are strong in concept and overall architecture, but the critical differences—the non-sequential nature of gene expression, extreme sparsity, and high dimensionality—demand specialized solutions like expression-value-based ordering, innovative gene embedding algorithms, and the incorporation of biological metadata. The evaluation of these strategies through rigorous benchmarking on tasks like cell type annotation, cross-species generalization, and in silico perturbation is paramount. As the field progresses, the development of more biologically informed, efficient, and scalable tokenization methods will be a key driver in realizing the full potential of scFMs to power drug discovery and advance our understanding of cellular function and disease.

Implementing Tokenization Strategies: From Gene Ranking to Genomic Positioning

The emergence of single-cell foundation models (scFMs) represents a paradigm shift in computational biology, leveraging large-scale deep learning to interpret the vast datasets generated by single-cell genomics [17]. A core innovation enabling this progress is gene-centric tokenization, a process that converts raw gene expression data from individual cells into a structured format that deep learning models can process. In the architecture of scFMs, individual cells are treated analogously to sentences, while genes and their expression values become the words or tokens that form these cellular "sentences" [17]. This approach allows models to learn the fundamental principles of cellular biology by exposing them to millions of cells encompassing diverse tissues and conditions.

Tokenization serves a critical function in scFM development because it standardizes raw, often unstructured single-cell data into discrete units that transformer-based architectures can efficiently process [17] [25]. Unlike natural language, where words have inherent sequence, gene expression data lacks natural ordering, presenting a fundamental challenge for sequential models. Researchers have therefore developed specialized ranking and binning strategies to impose meaningful structure on gene tokens, enabling the application of powerful transformer architectures that have revolutionized natural language processing and computer vision [17]. These tokenization strategies form the foundational layer upon which scFMs build their understanding of cellular heterogeneity, gene regulatory networks, and biological mechanisms at single-cell resolution.

Core Methodologies for Expression-Based Tokenization

Expression-Based Ranking Strategies

A primary challenge in applying transformer architectures to single-cell data is the non-sequential nature of gene expression profiles. Unlike words in a sentence, genes in a cell have no inherent ordering [17]. To address this, researchers have developed deterministic ranking strategies that impose sequence structure based on expression values. The most common approach involves ranking genes within each cell by their expression levels and feeding the ordered list of top-expressed genes as the representative "sentence" for that cell [17]. This method transforms the unstructured gene expression profile into a deterministic sequence where gene position reflects its relative abundance in that specific cell.
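Once genes are ranked, the ordered list still has to become a fixed-length sequence of integer IDs before it reaches the model. The sketch below shows one plausible way to do this, assuming a shared gene vocabulary with ID 0 reserved for padding; the `build_vocab` and `encode_cell` helpers, the handling of out-of-vocabulary genes, and the sequence length are illustrative choices rather than any model's actual settings.

```python
PAD_ID = 0  # assumed reserved ID for padding


def build_vocab(gene_names):
    """Assign a stable integer ID to every gene, starting after the pad ID."""
    return {gene: idx for idx, gene in enumerate(sorted(gene_names), start=PAD_ID + 1)}


def encode_cell(ranked_genes, vocab, max_len):
    """Convert a ranked gene list to exactly max_len integer token IDs.

    Genes missing from the vocabulary are skipped, the sequence is cut to
    the top max_len genes, and shorter sequences are right-padded.
    """
    ids = [vocab[gene] for gene in ranked_genes if gene in vocab][:max_len]
    return ids + [PAD_ID] * (max_len - len(ids))


vocab = build_vocab(["CD3E", "GAPDH", "IL7R", "MALAT1"])
ranked = ["MALAT1", "GAPDH", "XIST", "CD3E"]  # XIST is not in this toy vocabulary
print(encode_cell(ranked, vocab, max_len=5))
print(encode_cell(ranked, vocab, max_len=2))
```

Using one vocabulary for all datasets means the same gene always maps to the same ID, so cells from different experiments can be batched together. Truncation to the top-ranked genes keeps the sequence within the model's context length.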