Transformer Architecture in Single-Cell Biology: A Comprehensive Guide to Foundation Models, Applications, and Benchmarking

Abstract

The integration of transformer architectures into single-cell biology is revolutionizing how we interpret complex cellular systems. This article provides a comprehensive overview for researchers and drug development professionals, covering the foundational concepts of single-cell foundation models (scFMs), their diverse methodological applications across omics data, current limitations and optimization strategies, and rigorous validation through benchmarking studies. We synthesize key insights from recent literature to offer a clear roadmap for leveraging these powerful AI tools to unlock deeper biological insights, enhance drug discovery pipelines, and advance clinical translation.

Demystifying Single-Cell Foundation Models: From NLP Concepts to Biological Insights

The analysis of single-cell genomics data represents one of the most computationally challenging problems in modern biology. The field has witnessed a paradigm shift with the introduction of transformer-based architectures, originally developed for natural language processing (NLP). This shift is underpinned by a powerful central analogy: cells as sentences and genes as tokens [1] [2]. In this framework, the gene expression profile of an individual cell is treated as a meaningful sentence, with each expressed gene representing a discrete word or token within that sentence [3]. The collective corpus of single-cell data across tissues, conditions, and species thus forms a complex "language of biology" that foundation models can learn to decipher. This analogy provides the conceptual foundation for single-cell foundation models (scFMs), which are revolutionizing how researchers interpret cellular heterogeneity, regulatory networks, and disease mechanisms [1] [4].

Architectural Foundations: From Natural Language to Biological Grammar

Core Transformer Components in Biology

Transformer architectures adapted for single-cell analysis retain the fundamental components of their NLP counterparts but apply them to biological data [3]:

Self-Attention Mechanism: Enables the model to learn contextual relationships between all genes in a cell simultaneously. Instead of focusing on word relationships in a sentence, it identifies which genes co-vary, potentially revealing functional pathways or regulatory relationships [1] [3]. The attention mechanism is mathematically defined as Attention(Q, K, V) = softmax(QK^T/√d_k)V, where Q (Query), K (Key), and V (Value) are matrices derived from the input gene embeddings [3].

Multi-Head Attention: Allows the model to jointly attend to information from different representation subspaces, potentially capturing diverse biological relationships (e.g., metabolic pathways, signaling cascades, stress responses) in parallel [3].

Positional Encoding: Since gene expression data lacks natural sequence order, scFMs implement various strategies to impose structure, most commonly by ranking genes by expression level or binning them into expression value ranges [1].

Feed-Forward Networks: Transform the representations produced by the attention layers, enabling complex, non-linear combinations of biological features [3].

Model Architecture Variants for Single-Cell Data

Different transformer architectures have been adapted for single-cell analysis, each with distinct advantages:

Table 1: Transformer Architecture Variants in Single-Cell Biology

| Architecture Type | Key Characteristics | Biological Applications | Example Models |
|---|---|---|---|
| Encoder-Only | Uses bidirectional attention; views all genes simultaneously | Cell type annotation, embedding generation | scBERT, scReformer-BERT [1] [5] |
| Decoder-Only | Uses masked self-attention; predicts genes based on context | Generative modeling, perturbation prediction | scGPT [1] |
| Hybrid Architectures | Combines local and global attention mechanisms | Long-range genomic interaction modeling | OmniReg-GPT [6] |
| Efficient Transformers | Employs techniques to handle high-dimensional gene space | Processing full transcriptomes without gene filtering | Reformer-based models [5] |

Tokenization Strategies: From Continuous Expression to Discrete Tokens

Defining Biological Tokens

Tokenization converts raw gene expression data into discrete units processable by transformer models. Several approaches have emerged:

Gene Identity Tokens: Each gene is treated as a unique token, analogous to words in a vocabulary. Expression values are incorporated through additional encoding strategies [1].

Expression-Bin Tokens: Genes are categorized into bins based on expression levels (e.g., low, medium, high), with each bin representing a different token [1] [7].

Rank-Based Ordering: Genes are sorted by expression magnitude within each cell, creating a deterministic sequence for transformer processing [1].

Multimodal Tokens: Incorporate multiple data types by adding special tokens indicating modality (e.g., scATAC-seq, spatial transcriptomics, proteomics) [1] [4].
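As a rough illustration of expression-bin tokens, the sketch below maps one cell's expression vector to integer tokens. The function name `bin_expression`, the per-cell quantile binning, and the choice of reserving token 0 for unexpressed genes are assumptions made for this example; published models differ in how they define bins.

```python
import numpy as np

def bin_expression(values, n_bins=5):
    """Map one cell's expression vector to integer bin tokens.

    Zero counts get token 0. Non-zero values are split into n_bins
    quantile bins (tokens 1..n_bins) computed within this cell.
    """
    values = np.asarray(values, dtype=float)
    tokens = np.zeros(values.shape, dtype=int)
    nonzero = values > 0
    if nonzero.any():
        # Interior quantile edges of the non-zero values in this cell.
        quantiles = np.linspace(0, 1, n_bins + 1)[1:-1]
        edges = np.quantile(values[nonzero], quantiles)
        # np.digitize yields 0..n_bins-1; shift by one so 0 stays "not expressed".
        tokens[nonzero] = np.digitize(values[nonzero], edges) + 1
    return tokens

# Toy cell with seven genes (illustrative values only).
cell = np.array([0.0, 1.0, 2.0, 3.0, 4.0, 0.0, 5.0])
bin_tokens = bin_expression(cell, n_bins=5)
print(bin_tokens)
```

Binning within each cell makes the tokens depend on relative rather than absolute expression, which is one way to reduce sensitivity to sequencing depth.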

Handling the Non-Sequential Nature of Genomic Data

A fundamental challenge in applying transformers to single-cell data is that gene expression lacks inherent sequence, unlike natural language. The field has developed several innovative solutions:

Deterministic Ordering: Most models impose sequence by ranking genes based on expression values, creating a consistent input structure [1].

Positional Encoding Adaptations: Standard sinusoidal positional encodings are often replaced with learned embeddings that can better accommodate the arbitrary nature of gene ordering [1].

Metadata Enrichment: Some models prepend special tokens representing cell-level metadata (e.g., tissue type, disease state) to provide biological context [1].

Experimental Protocols and Benchmarking

Standardized Evaluation Frameworks

Rigorous benchmarking is essential for comparing scFMs. The community has developed standardized evaluation protocols:

Data Sourcing and Curation: Models are typically pretrained on large, integrated atlases such as CZ CELLxGENE (containing over 100 million cells), Human Cell Atlas, Tabula Sapiens, and other publicly available resources [1] [5]. Careful filtering and quality control are critical steps.

Train-Test Splits: To prevent data leakage, datasets are split at the study or batch level rather than at the cell level, ensuring that models are evaluated on truly novel biological contexts [8].
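A study-level split can be sketched in a few lines. The helper `split_by_study` and the toy study labels are hypothetical; the point is only that whole studies, not individual cells, are assigned to the test set.

```python
import random

def split_by_study(study_ids, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) so that no study appears in both sets."""
    studies = sorted(set(study_ids))
    rng = random.Random(seed)
    rng.shuffle(studies)
    # Hold out whole studies; always keep at least one for testing.
    n_test = max(1, round(len(studies) * test_fraction))
    held_out = set(studies[:n_test])
    train_idx = [i for i, s in enumerate(study_ids) if s not in held_out]
    test_idx = [i for i, s in enumerate(study_ids) if s in held_out]
    return train_idx, test_idx

# One study label per cell (illustrative).
study_ids = ["A", "A", "B", "B", "B", "C", "D", "D", "E", "E"]
train_idx, test_idx = split_by_study(study_ids, test_fraction=0.2)
train_studies = {study_ids[i] for i in train_idx}
test_studies = {study_ids[i] for i in test_idx}
print(sorted(train_studies), sorted(test_studies))
```

A cell-level random split would place cells from the same study on both sides, so batch-specific signal could leak into the evaluation.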

Task-Specific Fine-Tuning: After pretraining, models are adapted to specific downstream tasks (e.g., cell type annotation, perturbation response prediction, gene regulatory network inference) with limited task-specific labeled data [1] [8].

Performance Benchmarks

Quantitative evaluation across multiple benchmarks demonstrates the effectiveness of transformer-based approaches:

Table 2: Performance Comparison of Single-Cell Foundation Models

| Model | Pretraining Scale | Key Applications | Reported Performance |
|---|---|---|---|
| scGPT | 33+ million cells [4] | Cell type annotation, multi-omic integration, perturbation response | Superior cross-task generalization, zero-shot annotation [4] |
| scGREAT | Not specified | Gene regulatory network inference | 91.30% average AUROC on 7 benchmark datasets [8] |
| scBERT | Millions of cells [5] | Cell type classification | Effective classification of major cell categories [5] |
| scReformer-BERT | ~15 million cells [5] | Automated cell type classification | Superior classification accuracy on heart cell datasets [5] |
| OmniReg-GPT | Human reference genome (20kb windows) [6] | Cis-regulatory elements identification, gene expression prediction | State-of-the-art on 9/13 genome understanding tasks [6] |
| scPlantFormer | 1 million Arabidopsis thaliana cells [4] | Cross-species annotation | 92% cross-species annotation accuracy [4] |

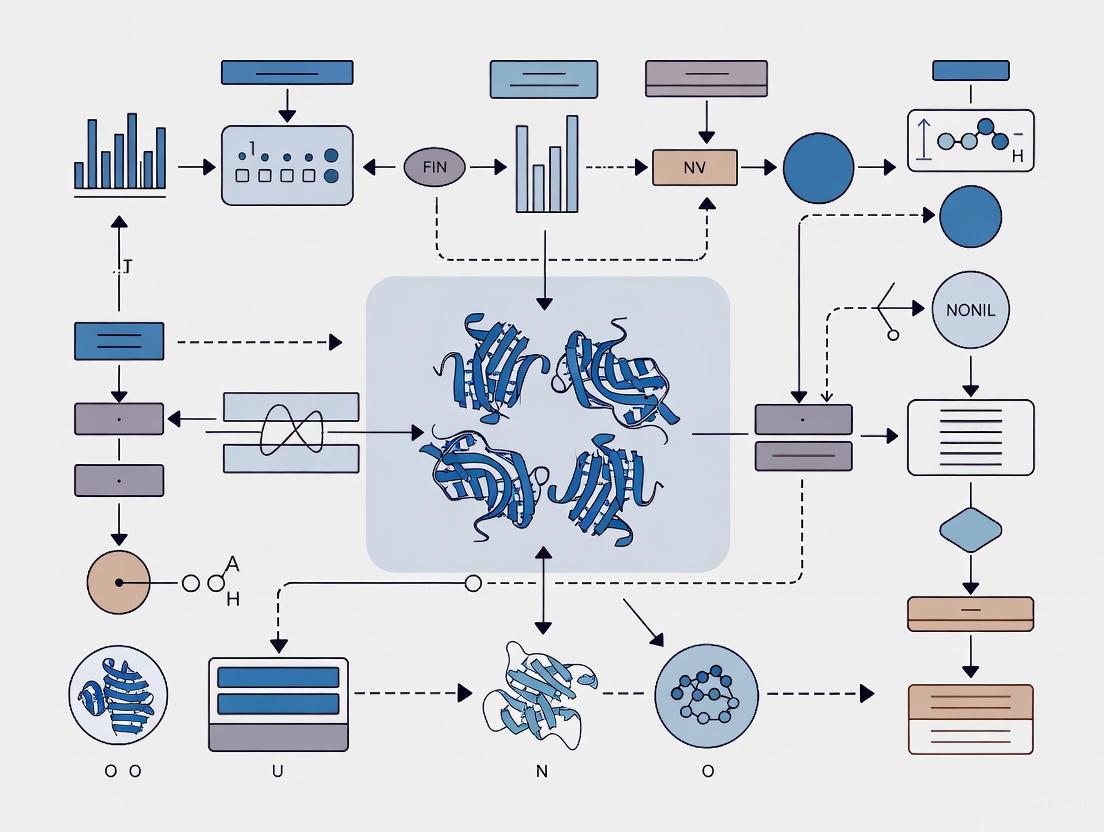

Visualization of Model Architectures and Workflows

[Figure: Single-Cell Transformer Architecture]

[Figure: Single-Cell Data Processing Workflow]

Table 3: Essential Resources for Single-Cell Foundation Model Research

| Resource Category | Specific Examples | Function and Utility |
|---|---|---|
| Data Repositories | CZ CELLxGENE [1] [4], Human Cell Atlas [1], DISCO [4], Tabula Sapiens [5] | Provide standardized, annotated single-cell datasets for model pretraining and benchmarking |
| Computational Frameworks | BioLLM [4], scGPT [1] [4], scGREAT [8] | Offer standardized interfaces and implementations of foundation models for single-cell analysis |
| Benchmarking Platforms | BEELINE [8], Nucleotide Transformer Benchmark [6] | Provide standardized evaluation pipelines and datasets for comparing model performance |
| Pretrained Models | scGPT [4], OmniReg-GPT [6], scBERT [5] | Ready-to-use models that can be fine-tuned for specific applications without costly pretraining |
| Specialized Architectures | Reformer encoders [5], Hybrid attention mechanisms [6], Sparse transformers | Enable efficient processing of long genomic sequences and high-dimensional gene expression data |

Future Directions and Challenges

While transformer-based models have demonstrated remarkable success in single-cell biology, several challenges remain. Model interpretability continues to be a significant hurdle, as understanding the biological relevance of latent embeddings and attention weights remains nontrivial [1]. Computational intensity for training and fine-tuning presents practical barriers to widespread adoption [1]. Additionally, inconsistencies in data quality and batch effects across studies can impact model robustness [1] [4]. Future developments will likely focus on enhancing model efficiency through improved architectures, developing better interpretation tools, and creating more standardized benchmarking frameworks [1] [4]. The integration of multimodal data at scale and the development of generative capabilities for in silico experimentation represent particularly promising directions for advancing both computational methodology and biological discovery [4] [6].

The transformer architecture, first introduced in the seminal paper "Attention Is All You Need," has revolutionized natural language processing (NLP) and is now fundamentally reshaping computational biology [9] [10]. This neural network architecture, which relies solely on attention mechanisms rather than recurrence or convolution, provides a powerful framework for capturing complex relationships in sequential data. In single-cell biology, researchers have creatively adapted this architecture to decipher the "language of cells," where individual cells are treated as sentences and genes or genomic features as words [11]. This paradigm shift enables the development of sophisticated single-cell foundation models (scFMs) that learn from millions of cells across diverse tissues and conditions, then adapt to various downstream analytical tasks through fine-tuning [11].

The integration of transformers with self-supervised learning (SSL) has been particularly transformative for single-cell genomics (SCG). SSL allows models to learn meaningful representations from vast, unlabeled datasets by solving pretext tasks, capturing universal patterns that transfer well to specific biological questions with limited labeled examples [12] [11]. As single-cell technologies rapidly generate data at an unprecedented scale, transformer-based scFMs offer a unified framework to integrate and analyze this complex biological information, providing insights into cellular heterogeneity, gene regulatory networks, and disease mechanisms that were previously challenging to uncover [11].

Architectural Foundations: The Core Transformer Building Blocks

Self-Attention Mechanism

The self-attention mechanism forms the fundamental operating principle of transformer models, enabling them to dynamically weigh the importance of different elements in a sequence when processing each element. Unlike recurrent neural networks that process sequences sequentially, self-attention computes relationships between all elements in parallel, making it highly efficient for modern hardware accelerators [9] [10].

The mechanism operates on three vectors computed for each input element through learned linear projections: the Query (Q), Key (K), and Value (V) vectors. For a given element, the Query vector represents what the element is looking for, the Key vector represents what the element contains, and the Value vector represents the actual information the element contributes [9]. The attention output for a position is computed as a weighted sum of Value vectors, where the weights are determined by the compatibility between the Query vector of that position and the Key vectors of all positions in the sequence.

The mathematical formulation of self-attention is expressed as:

\[ \text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V \]

where \(d_k\) is the dimension of the Key vectors, and the scaling factor \(\frac{1}{\sqrt{d_k}}\) prevents the softmax function from entering regions with extremely small gradients [9].
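The formula can be checked numerically with a minimal NumPy sketch. The random matrices below stand in for gene-token embeddings and learned projection weights; the sizes and variable names are assumptions chosen for illustration, not values from any published model.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """softmax(Q K^T / sqrt(d_k)) V, returning the output and the weights."""
    d_k = K.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)     # (n, n) query-key compatibility
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
n_genes, d_model, d_k = 6, 16, 8
X = rng.normal(size=(n_genes, d_model))  # hypothetical gene-token embeddings
W_q, W_k, W_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))
output, weights = scaled_dot_product_attention(X @ W_q, X @ W_k, X @ W_v)
print(output.shape, weights.shape)
```

Row i of `weights` shows how strongly gene token i attends to every other token in the same cell.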

Multi-Head Attention and Positional Encoding

Transformers enhance the basic self-attention through multi-head attention, which allows the model to jointly attend to information from different representation subspaces at different positions [9] [10]. Instead of performing a single attention function, the model linearly projects the Q, K, and V vectors multiple times with different learned projections and performs the attention function in parallel across these projected versions. The outputs are concatenated and projected again to produce the final result [9]. This architecture enables the model to capture different types of relationships—for instance, some attention heads might focus on syntactic patterns while others capture semantic relationships.
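The project, split, attend, concatenate, and project-again sequence described above can be sketched as follows. This is a simplified single-sequence version without biases, dropout, or masking; all weights are random placeholders and the helper names are hypothetical.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_attention(X, W_q, W_k, W_v, W_o, n_heads):
    """Self-attention over X (n, d_model) with n_heads parallel heads."""
    n, d_model = X.shape
    d_head = d_model // n_heads  # assumes d_model is divisible by n_heads

    def split(M):
        # (n, d_model) -> (n_heads, n, d_head)
        return M.reshape(n, n_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(X @ W_q), split(X @ W_k), split(X @ W_v)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_head)  # (n_heads, n, n)
    weights = softmax(scores, axis=-1)
    heads = weights @ V                                   # (n_heads, n, d_head)
    # Concatenate the heads back to (n, d_model), then apply the output projection.
    concat = heads.transpose(1, 0, 2).reshape(n, d_model)
    return concat @ W_o, weights

rng = np.random.default_rng(0)
n_tokens, d_model, n_heads = 5, 16, 4
X = rng.normal(size=(n_tokens, d_model))
W_q, W_k, W_v, W_o = (rng.normal(size=(d_model, d_model)) for _ in range(4))
mha_output, head_weights = multi_head_attention(X, W_q, W_k, W_v, W_o, n_heads)
print(mha_output.shape, head_weights.shape)
```

Each of the four heads produces its own 5 × 5 attention pattern, which is what allows different heads to specialize in different relationships.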

Since transformers process all tokens in parallel without inherent sequential processing, they require positional encoding to incorporate information about the position of each token in the sequence [9]. In single-cell applications, this presents a unique challenge because gene expression data lacks natural ordering. Common strategies include ranking genes by expression levels within each cell or partitioning genes into expression bins to create a deterministic sequence [11]. Positional encodings are then added to the token embeddings to provide positional context to the model.
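For reference, the fixed sinusoidal encoding from the original transformer can be written compactly and added to token embeddings as shown below. The embeddings are random placeholders that are assumed to be already in rank order, and `d_model` is assumed to be even. Single-cell models may instead use learned position embeddings, so this is an illustration of the general mechanism rather than any specific scFM.

```python
import numpy as np

def sinusoidal_positional_encoding(seq_len, d_model):
    """PE[pos, 2i] = sin(pos / 10000^(2i/d_model)); PE[pos, 2i+1] = cos(same)."""
    positions = np.arange(seq_len)[:, None]   # (seq_len, 1)
    dims = np.arange(0, d_model, 2)[None, :]  # even dimension indices 2i
    angles = positions / np.power(10000.0, dims / d_model)
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(angles)
    pe[:, 1::2] = np.cos(angles)
    return pe

seq_len, d_model = 8, 16
rng = np.random.default_rng(0)
# Hypothetical gene-token embeddings; row 0 is the highest-ranked gene.
token_embeddings = rng.normal(size=(seq_len, d_model))
pe = sinusoidal_positional_encoding(seq_len, d_model)
model_input = token_embeddings + pe  # position information is added element-wise
print(model_input.shape)
```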

Encoder-Decoder Architecture

The original transformer architecture follows an encoder-decoder structure [9]. The encoder processes the input sequence and generates contextualized representations. It consists of multiple identical layers, each containing a multi-head self-attention mechanism and a position-wise feed-forward network, with residual connections and layer normalization after each sub-layer [9].

The decoder generates the output sequence autoregressively. It shares similar components with the encoder but includes an additional multi-head cross-attention layer that attends to the encoder's output. To prevent the decoder from "peeking" at future tokens during training, it employs masked self-attention, which ensures that predictions for position i can only depend on known outputs at positions less than i [9].
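Masked self-attention is usually implemented by setting disallowed scores to negative infinity before the softmax. The sketch below uses a lower-triangular mask. The diagonal is kept because, in the standard setup, decoder inputs are shifted right by one position, so attending to input position i still exposes only outputs before position i. The function names are illustrative.

```python
import numpy as np

def causal_mask(n):
    """Boolean (n, n) matrix, True where position i may attend to position j <= i."""
    return np.tril(np.ones((n, n), dtype=bool))

def masked_softmax(scores, mask):
    # Disallowed positions get -inf, so they receive exactly zero weight.
    scores = np.where(mask, scores, -np.inf)
    scores = scores - scores.max(axis=-1, keepdims=True)
    e = np.exp(scores)
    return e / e.sum(axis=-1, keepdims=True)

rng = np.random.default_rng(0)
n = 4
raw_scores = rng.normal(size=(n, n))  # placeholder for Q K^T / sqrt(d_k)
causal_weights = masked_softmax(raw_scores, causal_mask(n))
print(np.round(causal_weights, 2))
```

The printed matrix is lower triangular: the first token attends only to itself, and no token places weight on later positions.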

Table: Core Components of Transformer Architecture

| Component | Function | Single-Cell Adaptation |
|---|---|---|
| Self-Attention | Computes contextual relationships between all sequence elements | Models gene-gene interactions and co-expression patterns |
| Multi-Head Attention | Attends to different representation subspaces simultaneously | Captures distinct biological relationships (e.g., regulatory, functional) |
| Positional Encoding | Provides sequence order information | Ranks genes by expression level or uses biological gene groupings |
| Feed-Forward Network | Applies non-linear transformation to each position independently | Forms non-linear combinations of the biological features produced by attention |
| Layer Normalization | Stabilizes training by normalizing activations | Keeps internal activations on a comparable scale for cells with very different expression profiles |
| Residual Connections | Preserves gradient flow through deep networks | Enables training of deep biological models without degradation |

Self-Supervised Learning Paradigms for Single-Cell Data

Pretext Tasks for Biological Representation Learning

Self-supervised learning in single-cell genomics employs various pretext tasks that enable models to learn meaningful biological representations without explicit labeling. The most common approach adapts the masked language modeling objective from NLP, where randomly selected portions of the input data are masked, and the model is trained to reconstruct them [12] [11]. In single-cell applications, this translates to masking certain genes in a cell's expression profile and training the model to predict their values based on the remaining genes.
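The masking objective can be sketched without a model: hide a random subset of genes, ask a predictor to fill them in, and score only the hidden positions. The 15% mask fraction, the sentinel value -1.0, and the mean-based stand-in predictor are all assumptions for illustration; actual scFMs use their own masking rates and a transformer as the predictor.

```python
import numpy as np

def mask_genes(expression, mask_fraction=0.15, mask_value=-1.0, rng=None):
    """Return (corrupted profile, boolean mask of hidden genes)."""
    if rng is None:
        rng = np.random.default_rng()
    n = expression.shape[0]
    n_mask = max(1, int(round(mask_fraction * n)))
    masked_idx = rng.choice(n, size=n_mask, replace=False)
    is_masked = np.zeros(n, dtype=bool)
    is_masked[masked_idx] = True
    # Hidden genes are replaced by a sentinel outside the valid expression range.
    corrupted = np.where(is_masked, mask_value, expression)
    return corrupted, is_masked

def masked_mse(prediction, target, is_masked):
    """Reconstruction loss computed on the hidden genes only."""
    return float(np.mean((prediction[is_masked] - target[is_masked]) ** 2))

rng = np.random.default_rng(0)
expression = rng.poisson(2.0, size=20).astype(float)  # toy expression profile
corrupted, is_masked = mask_genes(expression, rng=rng)
# Stand-in "model": predict the mean of the visible genes for every hidden gene.
prediction = np.full_like(expression, corrupted[~is_masked].mean())
loss = masked_mse(prediction, expression, is_masked)
print(int(is_masked.sum()), loss)
```

Restricting the loss to masked positions is what forces the model to infer hidden genes from their context instead of copying its input.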

More sophisticated masking strategies have been developed for biological data. Gene program masking involves masking biologically coherent sets of genes that function together in pathways or complexes, forcing the model to learn higher-order functional relationships [12]. Contrastive learning methods represent another important SSL approach, where the model learns to identify similar and dissimilar pairs of cells or gene expression patterns [12]. Negative-pair-free methods like Bootstrap Your Own Latent (BYOL) and Barlow Twins have shown particular promise in single-cell applications [12].

Empirical Performance of SSL in Single-Cell Genomics

Recent large-scale benchmarking studies have illuminated the nuanced effectiveness of SSL in single-cell applications. Research evaluating SSL methods on over 20 million cells from the CELLxGENE census data has demonstrated that SSL particularly excels in transfer learning scenarios where models are pre-trained on large auxiliary datasets then fine-tuned on smaller target datasets [12].

Table: Performance of Self-Supervised Learning on Single-Cell Tasks

| Task | Dataset | Baseline Performance | SSL Performance | Key Improvement |
|---|---|---|---|---|
| Cell-type prediction | PBMC (422k cells, 30 types) | 0.7013 ± 0.0077 (Macro F1) | 0.7466 ± 0.0057 (Macro F1) | Better identification of underrepresented cell types |
| Cell-type prediction | Tabula Sapiens (483k cells, 161 types) | 0.2722 ± 0.0123 (Macro F1) | 0.3085 ± 0.0040 (Macro F1) | Correct classification of 6,881 type II pneumocytes vs. 2,441 baseline |
| Gene expression reconstruction | Multiple datasets | Varies by dataset | Significant improvement (weighted explained variance) | Better capture of technical and biological variations |
| Zero-shot cell typing | Multiple datasets | N/A | Competitive performance with kNN classification | Enables annotation without labeled training data |
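Macro F1, the metric reported for cell-type prediction in the table, averages per-class F1 scores with equal weight, so rare cell types count as much as abundant ones. The toy labels below are invented to show how a classifier that ignores a rare type can have high accuracy but a low macro F1. This sketch averages over the classes present in the true labels, which is a simplifying assumption.

```python
import numpy as np

def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over classes present in y_true."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    scores = []
    for label in np.unique(y_true):
        tp = np.sum((y_pred == label) & (y_true == label))
        fp = np.sum((y_pred == label) & (y_true != label))
        fn = np.sum((y_pred != label) & (y_true == label))
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the denominator is 0.
        scores.append(2 * tp / denom if denom else 0.0)
    return float(np.mean(scores))

# Eight abundant cells and two rare cells; the classifier predicts only the abundant type.
y_true = ["T cell"] * 8 + ["rare"] * 2
y_pred = ["T cell"] * 10
accuracy = float(np.mean(np.array(y_true) == np.array(y_pred)))
score = macro_f1(y_true, y_pred)
print(accuracy, round(score, 4))  # accuracy 0.8, macro F1 about 0.4444
```

Here the abundant class scores F1 = 16/18 and the rare class scores 0, giving a macro F1 of 4/9 despite 80% accuracy.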

Masked autoencoders have demonstrated particular effectiveness in single-cell genomics, outperforming contrastive methods—a finding that diverges from trends in computer vision [12]. The performance gains from SSL are most pronounced when the pre-training dataset is substantially larger and more diverse than the fine-tuning dataset, highlighting the importance of rich biological context for effective representation learning [12].

Experimental Framework: Implementing Transformers in Single-Cell Research

Tokenization Strategies for Single-Cell Data

A critical implementation challenge for transformers in single-cell biology is tokenization—the process of converting raw gene expression data into discrete input tokens [11]. Unlike natural language, where words have natural token boundaries, gene expression data is continuous and lacks inherent sequential structure. The most common approach represents each gene as a separate token, with the expression value incorporated through the token embedding [11].

Several strategies have emerged for ordering genes into sequences for transformer input:

- Expression-based ranking: Genes are sorted by expression level within each cell [11]

- Binning approaches: Genes are partitioned into expression bins that determine their position [11]

- Biological grouping: Genes are ordered based on biological knowledge (chromosomal location, pathway membership) [11]

- Fixed vocabulary: Some models use a standardized gene ordering across all cells [11]

Special tokens are often prepended to the gene token sequence, including a [CELL] token that aggregates cell-level information and modality indicators for multi-omics applications [11]. Positional encodings are then added to inform the model of each gene's position in the sequence.
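Expression-based ranking with a prepended cell token might look like the following sketch. The token ids (0 for [CELL], 1 for padding), the gene vocabulary ids, and the decision to drop unexpressed genes are assumptions made for the example.

```python
import numpy as np

def rank_tokenize(expression, gene_ids, max_len, cell_token=0, pad_token=1):
    """Build a fixed-length token sequence: [CELL], genes by descending expression, padding."""
    expression = np.asarray(expression, dtype=float)
    gene_ids = np.asarray(gene_ids)
    expressed = np.flatnonzero(expression > 0)
    # Stable sort on negated values: highest expression first, ties keep gene order.
    order = expressed[np.argsort(-expression[expressed], kind="stable")]
    tokens = [cell_token] + gene_ids[order][: max_len - 1].tolist()
    tokens += [pad_token] * (max_len - len(tokens))
    return tokens

gene_ids = [10, 11, 12, 13, 14]  # hypothetical vocabulary ids; 0 and 1 are reserved
expression = [0.0, 5.0, 2.0, 0.0, 9.0]
tokens = rank_tokenize(expression, gene_ids, max_len=6)
print(tokens)  # [0, 14, 11, 12, 1, 1]
```

The position of each gene id in the sequence now carries its relative expression, which is the information the positional encoding exposes to the model.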

Model Pre-training and Fine-tuning Protocols

The development of single-cell foundation models follows a two-stage process: self-supervised pre-training on large-scale diverse datasets followed by task-specific fine-tuning [12] [11].

Pre-training Protocol:

- Data Collection: Curate large-scale single-cell datasets from public repositories (CELLxGENE, Human Cell Atlas, GEO, SRA)

- Quality Control: Filter cells and genes based on quality metrics (mitochondrial content, detected genes)

- Normalization: Standardize expression values (CP10k normalization, log transformation)

- Pretext Task Training: Implement masked autoencoding or contrastive learning objectives

- Validation: Evaluate representation quality through zero-shot performance on benchmark tasks
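The normalization step in the pre-training protocol can be illustrated with plain NumPy. The sketch scales each cell to 10,000 total counts and applies log(1 + x). The function name is hypothetical, and quality-control filtering is omitted because suitable thresholds depend on the dataset.

```python
import numpy as np

def normalize_cp10k_log1p(counts):
    """Scale each cell (row) to 10,000 total counts, then apply log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    library_size = counts.sum(axis=1, keepdims=True)
    library_size[library_size == 0] = 1.0  # avoid division by zero for empty cells
    cp10k = counts / library_size * 1e4
    return np.log1p(cp10k)

# Cells 0 and 1 have the same composition at different sequencing depths; cell 2 is empty.
counts = np.array([[1, 0, 3],
                   [10, 0, 30],
                   [0, 0, 0]])
normalized = normalize_cp10k_log1p(counts)
print(np.round(normalized, 3))
```

After normalization the first two cells are identical, which shows that differences in sequencing depth alone no longer separate them.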

Fine-tuning Protocol:

- Task Formulation: Define specific downstream task (cell type annotation, perturbation response, disease classification)

- Data Preparation: Split target dataset into training/validation/test sets

- Model Adaptation: Add task-specific layers to pre-trained backbone

- Transfer Learning: Fine-tune entire model or only task-specific layers on labeled data

- Evaluation: Assess performance on held-out test set using task-relevant metrics

[Figure: Transformer Pre-training and Fine-tuning Workflow in Single-Cell Biology]

Research Reagent Solutions: Essential Tools for scFM Development

Table: Key Research Resources for Single-Cell Foundation Models

| Resource Category | Specific Examples | Function/Purpose |
|---|---|---|
| Data Resources | CELLxGENE Census [12] [11], Human Cell Atlas [11], GEO/SRA [11] | Provide standardized, annotated single-cell datasets for model training |
| Preprocessing Tools | Scanpy [13], Seurat | Perform quality control, normalization, and feature selection |
| Model Architectures | scBERT [11], Geneformer [11], scGPT [11] | Offer pre-designed transformer architectures for single-cell data |
| Tokenization Methods | Expression ranking [11], Gene binning [11], Biological grouping [11] | Convert continuous expression values to discrete token sequences |
| SSL Methods | Masked Autoencoders [12], Contrastive Learning (BYOL, Barlow Twins) [12] | Enable self-supervised pre-training on unlabeled data |
| Benchmarking Suites | Custom evaluation pipelines [12] | Standardized assessment of model performance across multiple tasks |

Advanced Applications and Future Directions

Emerging Applications in Single-Cell Biology

Transformer models are enabling new analytical capabilities across diverse single-cell applications. In cell type annotation, scBERT and similar models achieve high accuracy by framing annotation as a token prediction task [11]. For gene expression reconstruction, transformers can impute missing values or predict expression under different conditions [12]. In cross-modality prediction, models can translate between different molecular measurements (e.g., RNA to protein expression) [12]. Data integration represents another powerful application, where transformers remove batch effects and align cells across different experiments or technologies [12].

The DiffFormer model exemplifies architectural innovation, combining diffusion models with transformers for bulk RNA-seq deconvolution [13]. This approach reframes deconvolution as a conditional generation task, where the transformer's attention mechanism models complex, non-linear dependencies between bulk expression profiles and cell-type proportions [13]. Similarly, the White-Box Diffusion Transformer integrates mathematical interpretability with generative modeling for scRNA-seq data generation [14].

Technical Challenges and Research Frontiers

Despite rapid progress, several challenges remain in applying transformer architectures to single-cell biology. The non-sequential nature of genomic data continues to motivate research into optimal tokenization and positional encoding strategies [11]. Computational intensity presents practical constraints, especially as model sizes and dataset volumes continue to grow [11]. Interpretability remains challenging, as researchers seek to extract biologically meaningful insights from model attention patterns and latent representations [11].

Future research directions include developing more efficient attention mechanisms tailored to biological data, creating multi-modal foundation models that integrate transcriptomic, epigenomic, proteomic, and spatial information, and improving zero-shot capabilities for predicting cellular responses to unseen conditions or perturbations [11]. As these technical advances mature, transformer-based models are poised to become increasingly central tools for extracting biological knowledge from single-cell data.

Technical Challenges in Single-Cell Foundation Model Development

The transformer architecture, with its core attention mechanism and compatibility with self-supervised learning paradigms, has emerged as a powerful backbone for single-cell genomic analysis. By enabling the development of foundation models trained on millions of cells, this technology provides researchers with versatile tools that can be adapted to diverse downstream tasks through fine-tuning. The capacity of transformers to capture long-range dependencies and complex gene-gene interactions has proven particularly valuable for modeling the intricate regulatory networks underlying cellular identity and function.

As single-cell technologies continue to evolve, generating increasingly large and complex datasets, transformer-based approaches will likely play an expanding role in extracting biological insights from this data deluge. Future advances in model architecture, training efficiency, and interpretability will further enhance the utility of these methods, potentially transforming how researchers analyze cellular heterogeneity, decipher disease mechanisms, and develop targeted therapeutic interventions.

The analysis of single-cell RNA sequencing (scRNA-seq) data presents unique computational challenges due to its high-dimensionality, sparsity, and complex biological noise [5] [15]. Transformer-based foundation models, pre-trained on massive-scale single-cell atlases, have emerged as powerful tools to address these challenges. These models adapt the core architectural paradigms of natural language processing—specifically encoder-based (BERT-like) and decoder-based (GPT-like) models—to interpret the "language of cells," where genes are treated as words and individual cells as sentences [11] [3]. This technical guide examines these two architectural frameworks within the context of single-cell biology research, providing researchers and drug development professionals with a comprehensive comparison of their underlying mechanisms, applications, and experimental implementations.

Core Architectural Principles

The Transformer Foundation

The transformer architecture serves as the fundamental building block for both BERT-like and GPT-like models. Its core innovation is the self-attention mechanism, which allows the model to weigh the importance of different elements in a sequence when processing each element [3] [16]. For single-cell data, this enables the model to capture complex gene-gene interactions and regulatory relationships.

The scaled dot-product attention computed within each attention head is mathematically defined as:

Attention(Q,K,V) = softmax(QKᵀ/√dₖ)V [3]

Where:

- Q (Query) represents the current focus of attention

- K (Key) represents what the model can attend to

- V (Value) represents the actual information to extract

- dₖ is the dimensionality of the key vectors

In biological terms, this allows the model to learn which genes are most informative about a cell's identity or state, and how they co-vary across different cellular contexts [11].

Encoder-Based (BERT-like) Architectures

Encoder-based models utilize the transformer encoder stack to process all tokens in the input sequence simultaneously. This bidirectional attention enables the model to understand context from both directions, making it particularly effective for comprehension-oriented tasks [17] [18].

Key Characteristics:

- Architecture: Encoder-only transformer

- Attention Type: Multi-head attention (unmasked)

- Context Handling: Considers both left and right context simultaneously

- Training Objective: Masked Language Modeling (MLM)

- Primary Purpose: Understanding and extracting meaning from data [17]

In single-cell applications, BERT-like models such as scBERT [5] and Geneformer [15] excel at tasks requiring deep biological understanding, including cell type annotation, gene function prediction, and identifying disease-specific cellular signatures.

Decoder-Based (GPT-like) Architectures

Decoder-based models employ a causal attention mechanism that processes sequences autoregressively—each token can only attend to previous tokens in the sequence. This unidirectional approach is inherently suited for generative tasks [17] [18].

Key Characteristics:

- Architecture: Decoder-only transformer

- Attention Type: Masked multi-head attention

- Context Handling: Considers only left context

- Training Objective: Causal Language Modeling

- Primary Purpose: Generating coherent and contextually relevant sequences [17]

In single-cell biology, GPT-like models such as scGPT [15] demonstrate exceptional capability in generating synthetic cell profiles, predicting cellular responses to perturbations, and simulating developmental trajectories.

Architectural Comparison

Table 1: Fundamental Differences Between BERT-like and GPT-like Architectures

| Feature | BERT-like (Encoder) | GPT-like (Decoder) |
|---|---|---|
| Architecture Type | Encoder-only Transformer | Decoder-only Transformer |
| Attention Mechanism | Bidirectional, unmasked | Causal, masked |
| Context Processing | Full sequence simultaneously | Left-to-right sequentially |
| Training Objective | Masked Language Modeling (MLM) | Causal Language Modeling |
| Primary Strength | Understanding & classification | Generation & prediction |
| Computational Complexity | O(n²) for sequence length n | O(n²) for sequence length n |
| Typical Output | Classifications, embeddings | Generated sequences, completions |

Implementation in Single-Cell Biology

Data Tokenization Strategies

Applying transformer architectures to single-cell data requires innovative tokenization approaches since gene expression data lacks the inherent sequential order of natural language [11] [15]. The tokenization process converts raw gene expression values into discrete tokens that can be processed by transformer models.

Common Tokenization Methods:

- Expression-based ranking: Genes are ordered by expression level within each cell

- Value binning: Expression values are discretized into bins

- Genomic position ordering: Genes are ordered by genomic coordinates

- HVG selection: Using only highly variable genes as tokens [15]

Table 2: Tokenization Approaches in Single-Cell Foundation Models

| Model | Tokenization Strategy | Input Genes | Value Representation |
|---|---|---|---|
| Geneformer | Ranking by expression level | 2,048 ranked genes | Ordering |
| scGPT | HVG selection + value binning | 1,200 HVGs | Value binning |
| scFoundation | Full gene set | ~19,000 genes | Value projection |
| UCE | Sampling by expression + genomic position | 1,024 non-unique genes | Binary expression |

Model Architectures and Pre-training

Single-cell foundation models adapt the core transformer architecture with specific modifications for biological data. The pre-training phase typically uses self-supervised learning on large-scale single-cell atlases containing millions of cells [11] [15].

Encoder-based Pre-training (BERT-like):

- Masked Gene Modeling (MGM): Randomly mask a percentage of gene tokens and train the model to predict them using contextual information

- Binary Expression Prediction: Predict whether a gene is expressed or not

- Dataset: Models pre-trained on 30-50 million cells from diverse tissues and conditions [15]
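As a minimal sketch of the masking step in masked gene modeling, the snippet below hides a random subset of gene tokens and records the originals as prediction targets. The `[MASK]` token name, the `mask_gene_tokens` helper, and the masking fractions are illustrative assumptions; the text above only states that "a percentage" of tokens is masked.

```python
import random

MASK_TOKEN = "[MASK]"  # hypothetical special-token name

def mask_gene_tokens(tokens, mask_fraction=0.15, seed=0):
    """Return (masked_tokens, targets); targets maps position -> original token."""
    if not tokens:
        return [], {}
    rng = random.Random(seed)
    n_mask = max(1, round(len(tokens) * mask_fraction))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    masked = list(tokens)
    targets = {}
    for pos in positions:
        targets[pos] = masked[pos]   # what the model should predict
        masked[pos] = MASK_TOKEN     # what the model actually sees
    return masked, targets

cell = ["CD3E", "CD8A", "GZMB", "NKG7", "CCL5", "IL7R", "LTB", "MALAT1", "B2M", "ACTB"]
masked, targets = mask_gene_tokens(cell, mask_fraction=0.2)
```

During training, the loss would be computed only at the positions stored in `targets`, so the model has to rely on the unmasked genes as context.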

Decoder-based Pre-training (GPT-like):

- Next-Gene Prediction: Autoregressively predict the next gene in the ordered expression profile

- Generative Pre-training: Learn to generate plausible gene expression profiles

- Multi-task Learning: Combine multiple self-supervised objectives [15]

Experimental Protocols and Applications

Cell Type Annotation Protocol

Objective: Automatically identify and label cell types in scRNA-seq data

Input: Raw count matrix (cells × genes)

Protocol:

Data Preprocessing:

- Quality control and normalization of raw counts

- Gene filtering based on expression thresholds

- Library size normalization and log transformation

Tokenization:

- Select top 2,000 highly variable genes

- Rank genes by expression level within each cell

- Convert to token sequences with special [CLS] token

Model Inference:

- Process token sequences through transformer encoder (e.g., scBERT)

- Extract [CLS] token embedding as cell representation

- Pass through classification layer for cell type prediction

Validation:

Perturbation Response Prediction

Objective: Predict how cells respond to genetic or chemical perturbations

Input: Baseline gene expression profile + perturbation information

Protocol:

Data Preparation:

- Collect single-cell data from perturbation experiments

- Create paired samples (control vs. treated)

- Tokenize expression profiles with perturbation indicators

Model Architecture:

- Use decoder-based model (e.g., scGPT) with causal attention

- Incorporate perturbation tokens as special inputs

- Implement teacher forcing during training

Training:

- Pre-train on large-scale single-cell atlases

- Fine-tune on perturbation datasets

- Use mean squared error loss between predicted and actual expression

Evaluation:

Visualization of Model Architectures and Workflows

Performance Benchmarking and Evaluation

Quantitative Performance Comparison

Table 3: Performance Comparison on Common Single-Cell Tasks

| Task Type | Best Performing Architecture | Key Metrics | Representative Performance |

|---|---|---|---|

| Cell Type Annotation | Encoder-based (BERT-like) | Accuracy: 85-95% [5] | scBERT: >90% on major cell types [5] [19] |

| Batch Integration | Encoder-based (BERT-like) | ASW: 0.7-0.9 [15] | Geneformer: Superior batch correction [15] |

| Perturbation Prediction | Decoder-based (GPT-like) | MSE: 0.1-0.3 [15] | scGPT: Accurate response simulation [15] |

| Novel Cell Generation | Decoder-based (GPT-like) | MMD: 0.05-0.15 [3] | scGPT: Realistic profile generation [15] |

| Gene Network Inference | Both (Task-dependent) | AUROC: 0.8-0.95 [15] | Varies by biological context [15] |

Computational Requirements

Table 4: Computational Characteristics of Single-Cell Foundation Models

| Model Characteristic | Encoder-based (BERT-like) | Decoder-based (GPT-like) |

|---|---|---|

| Model Size | 30-50 million parameters [15] | 40-100 million parameters [15] |

| Pre-training Data | 30-50 million cells [15] | 27-33 million cells [15] |

| Memory Usage | High (full attention matrices) | High (causal attention) |

| Inference Speed | Faster (parallel processing) | Slower (sequential generation) |

| Fine-tuning Efficiency | Excellent (few-shot learning) | Good (requires careful prompting) |

The Scientist's Toolkit: Essential Research Reagents

Table 5: Key Computational Tools and Resources for Single-Cell Foundation Models

| Tool/Resource | Type | Function | Example Applications |

|---|---|---|---|

| CELLxGENE Census [11] [20] | Data Platform | Provides standardized access to ~100 million single cells | Model pre-training, benchmarking, transfer learning |

| Geneformer [15] | Encoder Model | BERT-like model for cell state understanding | Cell classification, mechanism identification |

| scGPT [15] | Decoder Model | GPT-like model for generative tasks | Perturbation prediction, hypothesis generation |

| AnnDictionary [19] | LLM Integration | Interfaces LLMs with single-cell data | Automated annotation, biological interpretation |

| CellWhisperer [20] | Multimodal AI | Joint embedding of transcriptomes and text | Natural language querying, interactive exploration |

| Reformer Encoders [5] | Efficient Architecture | Handles long sequences via LSH attention | Full-transcriptome analysis without gene filtering |

| scReformer-BERT [5] | Hybrid Model | Combines BERT architecture with Reformer efficiency | Large-scale cell classification with full gene set |

The integration of encoder-based (BERT-like) and decoder-based (GPT-like) transformer architectures has fundamentally transformed computational single-cell biology. Encoder models excel at understanding cellular states and extracting biologically meaningful patterns, while decoder models show remarkable capability in generating hypotheses and predicting cellular behaviors. The emerging paradigm involves combining both architectures—using encoders for robust feature extraction and decoders for generative modeling and prediction.

Future developments will likely focus on multimodal integration (combining transcriptomics with epigenomics, proteomics, and spatial data), more efficient attention mechanisms to handle complete transcriptomes, and improved interpretability to extract novel biological insights. As these models continue to evolve, they will play an increasingly central role in drug discovery, personalized medicine, and our fundamental understanding of cellular biology.

The development of transformer-based foundation models in single-cell biology research is critically dependent on the scale, diversity, and quality of the data used for pretraining [1]. A foundation model is a large-scale deep learning model that is pretrained on vast datasets through self-supervised learning and can then be adapted to a wide range of downstream tasks [1]. The remarkable success of single-cell foundation models (scFMs) in tasks ranging from cell type annotation to gene regulatory network inference is fundamentally underpinned by the massive, curated biological datasets that serve as their training corpora [1] [21]. This technical guide examines the primary data sources, processing methodologies, and experimental frameworks that enable researchers to construct effective pretraining datasets for scFMs, with particular emphasis on their application within transformer architectures.

Major Public Data Repositories and Atlas Projects

The pretraining of robust scFMs requires access to large-scale, well-annotated single-cell datasets. Researchers typically aggregate data from multiple public repositories to create comprehensive training corpora. The table below summarizes key data sources used in recent scFM development efforts.

Table 1: Major Data Repositories for Single-Cell Foundation Model Pretraining

| Repository/Atlas Name | Scale | Data Content | Notable Use Cases |

|---|---|---|---|

| CZ CELLxGENE [1] | >100 million cells [1] | Annotated single-cell datasets, standardized for analysis [1] | General-purpose scFM pretraining [1] |

| Arc Virtual Cell Atlas [22] | >300 million cells [22] | scBaseCount: 200M+ cells from 21 species; Tahoe-100M: 100M perturbed cells [22] | Perturbation response modeling [22] |

| Human Cell Atlas [1] [23] | Cross-tissue atlas scale [1] | Cells from various tissues and organs, healthy reference [23] | Reference cell state modeling [23] |

| SpatialCorpus-110M [21] | 110 million cells (57M dissociated + 53M spatial) [21] | Integrated dissociated and spatially-resolved transcriptomics [21] | Spatially-aware models (Nicheformer) [21] |

| PanglaoDB [1] | Curated compendium [1] | Data from multiple sources and studies [1] | Supplemental pretraining data [1] |

| NCBI GEO/SRA & EBI Expression Atlas [1] | Thousands of studies [1] | Diverse single-cell sequencing studies [1] | Dataset aggregation [1] |

These repositories provide the foundational data necessary for training models that capture the broad spectrum of cellular heterogeneity across tissues, species, and experimental conditions. The integration of data from multiple sources is crucial for developing models that generalize well to unseen data and downstream tasks [1] [15].

Data Processing and Tokenization Strategies

From Cellular Measurements to Model Input

Raw single-cell data must be transformed into a structured format compatible with transformer architectures. This process, known as tokenization, converts gene expression profiles into discrete input units that the model can process.

Table 2: Tokenization Strategies in Single-Cell Foundation Models

| Model | Tokenization Approach | Gene Ordering | Special Tokens | Value Representation |

|---|---|---|---|---|

| General scFMs [1] | Genes as tokens [1] | Ranked by expression level [1] | Cell identity, modality [1] | Normalized counts, bins [1] |

| Nicheformer [21] | Ranked gene tokens [21] | Expression level relative to corpus mean [21] | Species, modality, technology [21] | Technology-specific normalization [21] |

| scPRINT [24] | Gene ID + expression + genomic location [24] | No inherent ordering [24] | Cell embeddings [24] | MLP-processed log-normalized counts [24] |

| Geneformer [15] | 2,048 ranked genes [15] | Expression-based ranking [15] | Not specified | Ordering as value representation [15] |

| scGPT [15] | 1,200 highly variable genes [15] | Not specified | Not specified | Value binning [15] |

A fundamental challenge in applying transformers to single-cell data is that gene expression data lacks natural sequential ordering, unlike words in a sentence [1]. To address this, most models employ deterministic ordering schemes based on expression magnitude, such as ranking genes within each cell by their expression levels [1]. This creates an arbitrary but consistent sequence that enables the transformer to learn gene-gene relationships through its attention mechanism.

Data Processing Workflow

The transformation of raw sequencing data into model-ready inputs follows a multi-stage pipeline that ensures data quality and compatibility.

Diagram 1: Single-Cell Data Processing Workflow

Experimental Protocols for Pretraining and Evaluation

Pretraining Objectives and Methodologies

scFMs employ self-supervised pretraining tasks that enable the model to learn meaningful biological representations without extensive manual labeling. The most common approach is masked gene modeling (MGM), where random portions of the gene expression profile are masked and the model must predict the missing values based on context [1] [24]. Alternative strategies include:

- Denoising tasks: Training the model to reconstruct clean expression profiles from artificially corrupted inputs [24]

- Bottleneck learning: Forcing the model to compress cellular information into lower-dimensional embeddings [24]

- Multi-task learning: Combining multiple objectives such as label prediction alongside reconstruction [24]

Model Evaluation Frameworks

Comprehensive benchmarking is essential for validating scFM performance. Recent studies have established rigorous evaluation protocols assessing models across diverse downstream tasks [15] [25].

Table 3: Downstream Tasks for Evaluating Single-Cell Foundation Models

| Task Category | Specific Tasks | Evaluation Metrics | Key Insights |

|---|---|---|---|

| Cell-level tasks [15] [25] | Cell type annotation, Batch integration, Cancer cell identification [15] [25] | Accuracy, Cluster separation, Biological conservation [15] [25] | No single scFM dominates all tasks [15] |

| Gene-level tasks [15] [25] | Gene network inference, Gene function prediction [15] [24] | Network accuracy, GO term enrichment [15] | Gene embeddings capture functional relationships [15] |

| Spatial tasks [21] | Spatial composition prediction, Niche identification [21] | Spatial context accuracy [21] | Dissociated data alone cannot capture spatial variation [21] |

| Perturbation tasks [22] | Drug response prediction, Genetic perturbation effects [22] | Response accuracy [22] | Perturbation datasets enable therapeutic applications [22] |

Diagram 2: scFM Evaluation Framework

Research Reagent Solutions

The successful development and application of single-cell foundation models relies on an ecosystem of computational tools and resources. The table below details essential components of the scFM research toolkit.

Table 4: Essential Research Resources for Single-Cell Foundation Model Development

| Resource Type | Specific Tools/Platforms | Function | Application Context |

|---|---|---|---|

| Pretraining Datasets [22] | Arc Virtual Cell Atlas, CELLxGENE [22] | Large-scale, curated single-cell data | Model pretraining, Transfer learning [22] |

| Model Architectures [1] [21] | Transformer, BERT, GPT variants [1] [21] | Neural network backbones | Feature extraction, Pattern recognition [1] |

| Benchmarking Suites [15] [24] | BenGRN, GrnnData [24] | Performance evaluation | Model validation, Comparison [15] |

| Specialized Models [21] [26] [24] | Nicheformer, CellPLM, scPRINT [21] [26] [24] | Task-optimized scFMs | Spatial analysis, Network inference [21] [24] |

| Processing Tools [23] | Bioconductor, Scanpy, Seurat [23] | Data preprocessing | Quality control, Normalization [23] |

The development of effective single-cell foundation models hinges on strategic leveraging of diverse public data repositories and sophisticated processing methodologies. As the field advances, several key principles have emerged: data diversity is more critical than sheer volume alone [21] [15]; dataset composition should reflect the intended application domains [21] [22]; and rigorous benchmarking across multiple biological tasks is essential for validating model utility [15] [25]. The rapid expansion of curated single-cell data resources, coupled with innovative transformer architectures designed for high-dimensional sparse data [5], promises to accelerate the development of more powerful, biologically-relevant foundation models that will transform our understanding of cellular function and disease mechanisms.

The application of transformer architectures to single-cell biology represents a paradigm shift in how researchers analyze cellular heterogeneity and function. Unlike natural language, where words follow grammatical structures and sequential dependencies, gene expression data exists in a fundamentally non-sequential space where genes have no inherent ordering, yet exhibit complex, coordinated relationships. This creates a fundamental tokenization challenge: how to convert this high-dimensional, non-sequential data into structured model inputs that preserve biological meaning while enabling computational efficiency. Foundation models like scGPT and Geneformer have demonstrated that effective tokenization is not merely a preprocessing step but a critical determinant of model performance across diverse downstream tasks including cell type annotation, perturbation response prediction, and gene regulatory network inference [1] [4].

The tokenization process must overcome several domain-specific obstacles: the high dimensionality and sparsity of single-cell RNA sequencing (scRNA-seq) data; the absence of natural gene ordering; technical noise from batch effects; and the need to preserve biological signal amidst these complexities. This technical guide examines current tokenization methodologies, their theoretical underpinnings, empirical performance, and practical implementation considerations for researchers developing and applying transformer-based models in single-cell biology and drug development.

Fundamental Concepts: From Biological Data to Model Tokens

The Nature of Single-Cell Omics Data

Single-cell technologies generate molecular profiles measuring the expression levels of thousands of genes across thousands to millions of individual cells. Each cell is represented as a high-dimensional vector where values correspond to gene expression counts or chromatin accessibility measurements. Unlike sequential data like text or DNA, where element order carries critical semantic meaning, the genes in these vectors exist in an unordered set [1]. This fundamental characteristic necessitates the development of specialized tokenization strategies that impose meaningful structure without introducing artificial biases.

The data presents additional challenges including extreme sparsity (many zero values representing both biological absence and technical dropouts), high technical variance across experimental batches and platforms, and complex biological covariance patterns that reflect underlying regulatory networks [1] [25]. Effective tokenization must preserve biological signal while mitigating the impact of these confounding factors.

Tokenization in the Context of Transformer Architectures

In natural language processing, tokenization converts raw text into discrete units (tokens) that serve as model inputs. Similarly, for single-cell data, tokenization transforms raw gene expression values into a structured sequence the transformer can process. This typically involves two components: (1) defining what constitutes a token, and (2) establishing an ordering for these tokens [1].

The tokenization step is crucial because it determines how biological information is presented to the model's attention mechanism. Different tokenization strategies emphasize different aspects of the data, potentially leading the model to learn distinct representations and relationships. As such, tokenization is not merely an engineering consideration but a fundamental modeling choice that influences what biological patterns the model can discover [1] [27].

Table 1: Core Components of Single-Cell Tokenization

| Component | Description | Common Implementations |

|---|---|---|

| Gene Token | Representation of individual genes | Gene identifier (e.g., ENSG00000139618 for human BRCA2) |

| Value Representation | Encoding of expression magnitude | Normalized counts, bins, or continuous values |

| Positional Encoding | Information about token order | Learned embeddings, fixed sinusoidal functions |

| Special Tokens | Additional contextual information | Cell-level metadata, modality indicators, batch identifiers |

Current Tokenization Strategies: Methodologies and Implementations

Rank-Based Tokenization

Rank-based approaches order genes by their expression level within each cell, converting the non-sequential gene set into a deterministic sequence. In this framework, the most highly expressed genes appear first in the sequence, followed by progressively lower-expressed genes [1] [21]. This strategy is employed by models including Geneformer and Nicheformer, which leverage the intuition that the relative ranking of gene expression may be more robust to technical variance than absolute expression values.

The implementation typically involves sorting genes by expression value in descending order, then selecting the top-k genes (typically 1,000-2,000) to form the input sequence [21]. Each token combines information about gene identity and its relative expression rank. A key advantage is reduced sensitivity to batch effects and normalization artifacts, as the relative ordering within a cell may be preserved even when absolute values shift. However, this approach potentially discards information from lower-ranked genes and may disrupt co-expression patterns that exist across magnitude ranges [1].
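The ranking step described above can be sketched in a few lines of plain Python. This is an illustrative simplification, not the Geneformer or Nicheformer implementation: `rank_tokenize` is a hypothetical helper, it ranks raw values without any corpus-level normalization, and it breaks ties alphabetically purely to keep the output deterministic.

```python
def rank_tokenize(expression, top_k=2048):
    """Order expressed genes by descending expression and keep the top_k gene names."""
    # Genes with zero counts carry no rank information, so they are dropped.
    expressed = [(gene, value) for gene, value in expression.items() if value > 0]
    # Sort by value descending; ties are broken by gene name (an arbitrary choice).
    expressed.sort(key=lambda item: (-item[1], item[0]))
    return [gene for gene, _ in expressed[:top_k]]

cell = {"MALAT1": 120.0, "CD3E": 8.0, "GZMB": 0.0, "B2M": 95.0, "IL7R": 8.0}
tokens = rank_tokenize(cell, top_k=3)
# tokens == ["MALAT1", "B2M", "CD3E"]
```

Because only the order is kept, rescaling every value in the cell by the same positive factor leaves the token sequence unchanged, which is the robustness property the paragraph above refers to.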

Binning-Based Tokenization

Binning strategies partition gene expression values into discrete levels or categories, similar to how words might be categorized by frequency. Models like scBERT often employ this approach, creating expression bins such as "low," "medium," and "high" based on predefined thresholds [1] [11]. Each gene is then represented by both its identifier and its expression bin.

This method can capture non-linear relationships in expression values and reduces the model's sensitivity to small fluctuations that may not be biologically meaningful. Some implementations use learned bin boundaries that adapt during training, potentially discovering optimal discretization thresholds for different biological contexts. The primary limitation is information loss from discretizing continuous expression values, which may obscure subtle but biologically important expression differences [27].
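A minimal sketch of per-cell quantile binning follows. The helper name `bin_expression`, the choice of three bins, and the convention of reserving bin 0 for zero counts are assumptions made for illustration; individual models define their bin boundaries differently.

```python
def bin_expression(values, n_bins=3):
    """Assign each non-zero value to a quantile bin 1..n_bins; zeros stay in bin 0."""
    nonzero = sorted(v for v in values if v > 0)
    if not nonzero:
        return [0] * len(values)
    # Bin edges are taken from the sorted non-zero values of this cell,
    # so the boundaries adapt to each cell's own expression distribution.
    edges = [nonzero[min(len(nonzero) - 1, (len(nonzero) * k) // n_bins)]
             for k in range(1, n_bins)]
    bins = []
    for v in values:
        if v <= 0:
            bins.append(0)
        else:
            # Count how many edges the value reaches; that sets its bin index.
            bins.append(1 + sum(v >= edge for edge in edges))
    return bins

bins = bin_expression([0, 1, 2, 3, 4, 5, 6], n_bins=3)
# bins == [0, 1, 1, 2, 2, 3, 3]
```

The example also makes the information loss visible: values 1 and 2 become indistinguishable once they share a bin.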

Scale-Free and Unbiased Tokenization

Emerging approaches like scSFUT (Single-Cell Scale-Free and Unbiased Transformer) aim to address limitations of gene selection-based methods by processing the full gene set without preliminary filtering [27]. These methods use techniques like fixed-size windowing to segment the high-dimensional input into manageable chunks, preserving information across the entire transcriptome rather than just highly variable or highly expressed genes.

The scSFUT model specifically employs an encoder-decoder framework with sequential tokenization and 1D-convolution to expand the attention receptive field [27]. This approach demonstrates that with architectural innovations, models can effectively process full-length gene vectors without preselection, potentially capturing patterns that would be missed when focusing only on the most variable or highly expressed genes. This is particularly valuable for detecting rare but biologically significant expression events or identifying patterns that span broadly correlated gene sets [27].

Table 2: Comparative Analysis of Tokenization Strategies

| Strategy | Mechanism | Advantages | Limitations | Representative Models |

|---|---|---|---|---|

| Rank-Based | Orders genes by expression level | Robust to technical variance; Intuitive biological interpretation | May lose information from low-ranked genes; Disrupts natural covariance | Geneformer, Nicheformer |

| Binning | Discretizes expression into categories | Handles non-linearities; Reduces noise sensitivity | Loss of continuous value information; Bin boundary selection arbitrary | scBERT, xTrimoGene |

| Scale-Free | Processes full gene set | Maximally preserves biological information; No selection bias | Computationally intensive; Requires specialized architectures | scSFUT |

| Value-Inclusive | Combines gene ID with continuous value | Maintains precise expression information; Flexible representation | Sensitive to normalization; May amplify technical artifacts | scGPT, UCE |

Multi-Modal and Integrated Tokenization

As single-cell technologies advance, integrating multiple data modalities from the same cells has become increasingly important. Multi-omic approaches require tokenization strategies that can handle diverse data types including gene expression, chromatin accessibility, protein abundance, and spatial coordinates [4] [28].

Advanced models address this through modality-specific tokens that indicate the data type, allowing the transformer to learn both modality-specific and cross-modality relationships [1] [28]. For example, scPairing uses a contrastive learning framework to embed different modalities from the same cells into a common embedding space, enabling integration and generation of multi-omics data [28]. Similarly, Nicheformer incorporates spatial context through specialized tokens that capture microenvironment information, enabling the model to learn spatially aware representations [21].

Experimental Protocols and Implementation Guidelines

Standardized Preprocessing Pipeline

Implementing effective tokenization requires careful data preprocessing to ensure biological signal is preserved and technical artifacts are minimized. Based on benchmarking studies and model documentation, the following protocol represents current best practices:

Quality Control: Filter cells based on quality metrics—typically retaining cells with 200-2500 detected genes and mitochondrial content below 5-20% (tissue-dependent) [27].

Normalization: Apply library size normalization (e.g., counts per 10,000) followed by log transformation to stabilize variance [1] [27].

Gene Filtering: Remove lowly expressed genes (e.g., detected in fewer than 10 cells) to reduce noise, though this step is omitted in scale-free approaches [27].

Batch Effect Consideration: For multi-dataset training, incorporate batch correction methods or include batch information as special tokens [1] [4].

Tokenization: Apply the selected strategy (rank-based, binning, etc.) to convert each cell's expression profile into a token sequence.

Sequence Formulation: Combine gene tokens with special tokens (e.g., [CLS] for cell-level representation, modality indicators) and apply positional encoding [1].
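The quality control, normalization, and gene filtering steps above can be sketched as follows. The code uses plain Python lists so that it runs without dependencies; in practice these steps are usually performed with Scanpy or Seurat. The `preprocess` function, its parameter names, and the `MT-` prefix convention for mitochondrial genes are assumptions for illustration, and the defaults simply mirror the ranges quoted in the protocol.

```python
import math

def preprocess(counts, gene_names, min_genes=200, max_genes=2500,
               max_mito_frac=0.2, target_sum=1e4, min_cells=10):
    """QC-filter cells, drop rarely detected genes, then CP10K-normalize and log1p."""
    mito = [name.upper().startswith("MT-") for name in gene_names]
    kept_cells = []
    for row in counts:
        total = sum(row)
        n_detected = sum(1 for c in row if c > 0)
        mito_frac = sum(c for c, m in zip(row, mito) if m) / total if total else 1.0
        if min_genes <= n_detected <= max_genes and mito_frac <= max_mito_frac:
            kept_cells.append(row)
    # Gene filter: keep genes detected in at least min_cells retained cells.
    # Detection (count > 0) is unaffected by normalization, so raw counts are used.
    kept_genes = [j for j in range(len(gene_names))
                  if sum(1 for row in kept_cells if row[j] > 0) >= min_cells]
    normalized = []
    for row in kept_cells:
        total = sum(row)  # library size over all genes, including filtered ones
        normalized.append([math.log1p(row[j] / total * target_sum) for j in kept_genes])
    return normalized, [gene_names[j] for j in kept_genes]

# Toy matrix (3 cells x 4 genes) with thresholds lowered to fit its size.
toy_counts = [[5, 0, 5, 0], [0, 0, 0, 10], [3, 3, 4, 0]]
toy_genes = ["A", "B", "C", "MT-CO1"]
matrix, genes = preprocess(toy_counts, toy_genes, min_genes=2, max_genes=4, min_cells=2)
```

In the toy example, the second cell is removed because it expresses a single, mitochondrial gene, and genes `B` and `MT-CO1` are dropped because too few retained cells express them.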

Tokenization-Specific Methodologies

For rank-based tokenization:

- Sort all genes by expression value in descending order

- Select the top N genes (typically 1,000-2,000) based on model constraints

- Represent each gene by its identifier embedded with positional information reflecting its rank

- Append special tokens representing cell metadata or experimental conditions [21]

For binning-based tokenization:

- Normalize expression values to a consistent scale

- Define bin boundaries based on quantiles or expression thresholds

- Assign each gene to appropriate bin based on expression level

- Create token combining gene ID and bin identity [1]

For scale-free tokenization:

- Normalize but do not filter genes (or use minimal filtering)

- Segment full gene vector into fixed-size windows using 1D convolution

- Apply sequence ordering based on original gene positioning or random shuffling

- Use unbiased attention mechanisms to process segments [27]
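The segmentation step for scale-free tokenization can be illustrated with a short sketch. It only shows how a full-length expression vector is cut into fixed-size windows; it does not implement the 1D convolution or the attention mechanism of scSFUT, and the `window_gene_vector` helper, the zero padding, and the window size are assumptions.

```python
def window_gene_vector(values, window_size, pad_value=0.0):
    """Split a full-length expression vector into fixed-size, non-overlapping windows."""
    if window_size <= 0:
        raise ValueError("window_size must be positive")
    remainder = len(values) % window_size
    # Pad the tail so that the last window has the same length as the others.
    padded = list(values) + [pad_value] * ((window_size - remainder) % window_size)
    return [padded[i:i + window_size] for i in range(0, len(padded), window_size)]

windows = window_gene_vector([1.0, 2.0, 3.0, 4.0, 5.0], window_size=2)
# windows == [[1.0, 2.0], [3.0, 4.0], [5.0, 0.0]]
```

Each window would then be treated as one input position, which shortens the sequence the attention layers have to process from the number of genes to the number of windows.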

Visualization of Tokenization Workflows

Rank-Based Tokenization Process

Multi-Modal Tokenization Architecture

Table 3: Research Reagent Solutions for Tokenization Implementation

| Resource Category | Specific Tools/Platforms | Function in Tokenization Pipeline |

|---|---|---|

| Data Repositories | CZ CELLxGENE, Human Cell Atlas, PanglaoDB | Provide standardized single-cell datasets for model training and benchmarking |

| Preprocessing Tools | Scanpy, Seurat, scikit-learn | Perform quality control, normalization, and initial feature selection |

| Model Frameworks | scGPT, Geneformer, scBERT | Reference implementations of tokenization strategies and model architectures |

| Benchmarking Suites | BioLLM, GenBench | Standardized evaluation of tokenization approaches across diverse tasks |

| Specialized Architectures | scSFUT, Nicheformer | Implementations of advanced tokenization methods (scale-free, spatial-aware) |

Performance Evaluation and Biological Validation

Quantitative Benchmarking Across Strategies

Recent benchmarking studies have evaluated tokenization strategies across diverse biological tasks. Performance varies significantly based on task type, data characteristics, and evaluation metrics [25].

For cell type annotation, binning-based methods like scBERT achieve high accuracy on human datasets but show reduced performance in cross-species transfer tasks. Rank-based approaches demonstrate stronger generalization across tissues and species, while scale-free methods show particular advantage for rare cell type identification [27] [25].

For spatial context prediction, models incorporating spatial tokenization (e.g., Nicheformer) significantly outperform methods trained solely on dissociated data, highlighting the importance of task-specific tokenization strategies [21]. Nicheformer achieves up to 30% improvement in spatial composition prediction compared to non-spatial models [21].

For batch integration, rank-based methods generally show superior performance in removing technical variance while preserving biological heterogeneity, though the incorporation of batch information as special tokens can further enhance integration capabilities [1] [4].

Biological Insight Capture

Beyond task-specific metrics, tokenization strategies differ in their ability to capture biologically meaningful relationships. Evaluation using ontology-informed metrics like scGraph-OntoRWR reveals that while all tokenization approaches capture broad biological patterns, scale-free and value-inclusive methods better preserve fine-grained functional relationships between genes and cell types [25].

Gene embedding analysis shows that tokenization approaches that maintain continuous expression information (rather than discretizing) tend to produce embeddings that better reflect known biological pathways and protein-protein interactions, suggesting they preserve more nuanced functional information [25].

Future Directions and Emerging Challenges

The rapid evolution of single-cell technologies presents ongoing challenges for tokenization strategies. Several promising directions are emerging:

Multi-modal fusion represents a frontier where tokenization must harmonize fundamentally different data types including images, sequences, and spatial coordinates [4] [28]. Approaches like scPairing demonstrate the potential of contrastive alignment methods for creating unified embedding spaces [28].

Dynamic tokenization that adapts to specific biological contexts or tasks may outperform static approaches. Preliminary work suggests that learned tokenization policies can optimize for specific objectives like rare cell detection or perturbation response prediction.

Cross-species generalization requires tokenization methods that can handle orthologous genes and evolutionary divergence. Models like Nicheformer that incorporate multispecies training with orthology mapping show promise in this direction [21].

Computational efficiency remains a critical concern, particularly as dataset sizes exceed millions of cells. Scalable tokenization strategies that maintain biological fidelity while reducing memory and computational requirements will be essential for continued progress.

As transformer architectures continue to evolve in single-cell biology, tokenization strategies will likely become increasingly specialized and sophisticated, potentially incorporating biological prior knowledge more explicitly and adapting to the unique characteristics of specific tissue types, disease states, and experimental modalities.

From Theory to Practice: Transformer Applications in Single-Cell Omics Analysis

The advent of single-cell RNA sequencing (scRNA-seq) has revolutionized our understanding of cellular heterogeneity and complex biological systems [15]. Concurrently, transformer-based architectures have emerged as a powerful tool in computational biology, leading to the development of single-cell foundation models (scFMs) [1]. These large-scale deep learning models, pretrained on vast datasets containing millions of cells, are capable of learning universal biological knowledge in a self-supervised manner [1] [15]. This technical guide explores how these transformer-based scFMs are applied to three core downstream tasks in single-cell analysis: cell type annotation, batch integration, and atlas construction. By providing a structured overview of methodologies, performance benchmarks, and practical protocols, this document serves as a resource for researchers, scientists, and drug development professionals seeking to leverage these advanced computational techniques.

Transformer Architectures in Single-Cell Biology

Fundamental Concepts

Single-cell foundation models adapt the transformer architecture, originally developed for natural language processing (NLP), to interpret biological data [1]. In this analogy, individual cells are treated as "sentences," while genes or other genomic features, along with their expression values, are treated as "words" or "tokens" [1]. The self-attention mechanism inherent to transformers allows these models to learn and weight relationships between any pair of input tokens (genes), enabling them to capture complex gene-gene interactions and regulatory networks without prior biological knowledge [1] [29].
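To make the pairwise weighting concrete, the following is a minimal sketch of scaled dot-product self-attention over a handful of gene-token vectors. It is an illustration under simplifying assumptions, not the implementation of any model cited here: the query, key, and value projections are taken to be the identity (real transformers learn separate projection matrices and use multiple heads), and the three toy token vectors are hypothetical.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention with identity Q/K/V projections.

    tokens: list of equal-length embedding vectors, one per gene token.
    Returns (attention weights, attended outputs).
    """
    dim = len(tokens[0])
    # Score every (query, key) pair, scaled by sqrt(dim).
    scores = [
        [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in tokens]
        for query in tokens
    ]
    # Each row of weights is a distribution over all tokens in the cell.
    weights = [softmax(row) for row in scores]
    # Each output is the weight-averaged mix of all token vectors.
    outputs = [
        [sum(w * value[c] for w, value in zip(row, tokens)) for c in range(dim)]
        for row in weights
    ]
    return weights, outputs


# Hypothetical embeddings: two similar gene tokens and one dissimilar token.
gene_tokens = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
attn_weights, attended = self_attention(gene_tokens)
print(attn_weights[0])
```

In this toy case the first token attends equally to itself and to the second (identical) token, and less to the third, which is the sense in which attention weights relationships between any pair of input genes.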

Model Architectures and Tokenization Strategies

A critical challenge in applying transformers to single-cell data is that gene expression data lacks natural sequential ordering, unlike words in a sentence [1] [15]. To address this, various tokenization strategies have been developed. A common approach ranks genes within each cell by expression levels, feeding the ordered list of top genes as a sequence to the model [1]. Other methods partition genes into bins based on expression values or use normalized counts directly [1]. Gene tokens typically combine a gene identifier embedding with a value embedding representing its expression level [15].
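The two tokenization families described above can be sketched in a few lines. This is a hedged illustration only: the function names `rank_tokenize` and `bin_tokenize`, the toy expression values, and the equal-width binning rule are assumptions made for this example, and published models differ in details such as normalization and how bin edges are chosen.

```python
import math


def rank_tokenize(expression, max_len):
    """Order expressed genes by descending expression and keep the top max_len.

    expression: dict mapping gene name -> expression value for one cell.
    """
    expressed = [(gene, value) for gene, value in expression.items() if value > 0]
    # Sort by value (descending); ties are broken by gene name for determinism.
    expressed.sort(key=lambda item: (-item[1], item[0]))
    return [gene for gene, _ in expressed[:max_len]]


def bin_tokenize(expression, n_bins=3):
    """Assign each gene a discrete value token.

    Zero expression maps to bin 0; positive values map to bins 1..n_bins
    using equal-width bins relative to the cell's own maximum (an assumption
    chosen for simplicity in this sketch).
    """
    peak = max(expression.values(), default=0.0)
    if peak <= 0:
        return {gene: 0 for gene in expression}
    return {
        gene: 0 if value <= 0 else min(n_bins, math.ceil(value / peak * n_bins))
        for gene, value in expression.items()
    }


# Hypothetical expression profile for a single cell.
cell = {"CD3E": 8.0, "CD4": 3.0, "MS4A1": 0.0, "LYZ": 1.0, "GNLY": 5.0}
print(rank_tokenize(cell, max_len=3))
print(bin_tokenize(cell, n_bins=3))
```

Rank-based tokens discard absolute magnitudes but impose an order on the genes, whereas binned tokens keep a coarse magnitude for each gene and leave the ordering question to the model.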

Table 1: Common scFM Architectures and Their Key Characteristics

| Model Name | Primary Architecture | Tokenization Strategy | Key Features | Applicable Tasks |
|---|---|---|---|---|
| scBERT [1] | BERT-like Encoder | Gene ranking or value binning | Bidirectional attention; trained on millions of cells | Cell type annotation |
| scGPT [1] [15] | GPT-like Decoder | Value binning with 1200 HVGs | Unidirectional attention; multi-omics capability | Generation, integration, annotation |
| Geneformer [15] | Encoder | 2048 ranked genes | Employs gene ranking by expression | Network inference, annotation |
| scReformer-BERT [5] | BERT with Reformer encoders | Full gene set (>10,000 genes) | Uses LSH attention for efficiency with long sequences | Cell type classification |
| UCE [15] | Encoder | 1024 non-unique genes sampled by expression | Incorporates protein embeddings from ESM-2 | Multi-modal analysis |

Most scFMs utilize either encoder-based architectures (like BERT) for classification and embedding tasks, or decoder-based architectures (like GPT) for generation tasks [1]. Hybrid designs are also being explored. A key innovation is the development of models like scReformer-BERT, which incorporates Reformer encoders with locality-sensitive hashing (LSH) attention to handle the full spectrum of over 10,000 genes per cell without requiring aggressive gene filtering, thereby preserving more biological information [5].

Core Downstream Task 1: Cell Type Annotation

Task Definition and Significance

Accurate cell type identification is a critical prerequisite for interpreting single-cell transcriptomic data and understanding complex biological systems [30] [5]. Traditional methods rely on manual annotation using known marker genes, which is time-consuming, subjective, and challenging for rare or novel cell populations. Transformer-based scFMs offer a powerful approach for automated, standardized, and scalable cell type annotation [30].

Experimental Protocols and Methodologies

The standard protocol for cell type annotation using scFMs follows a "pretrain-then-fine-tune" paradigm [15]:

- Feature Extraction: Utilize a pretrained scFM to generate latent embeddings for each cell in the target dataset. These embeddings are dense, low-dimensional representations that capture essential biological features of each cell. In zero-shot scenarios, these embeddings can be used directly with simple classifiers without further model tuning [15].

- Supervised Fine-Tuning: For optimal performance on specific datasets, the pretrained scFM can be fine-tuned using labeled reference data. This involves:

  - Preparing a high-quality reference dataset with accurate cell type labels.

  - Adding a task-specific classification layer on top of the base model.

  - Training the entire model or a subset of its layers on the reference data, typically using cross-entropy loss.

- Prediction and Validation: Apply the fine-tuned model to annotate cell types in new, unlabeled datasets. Predictions should be validated using known marker genes and, if available, held-out validation sets.
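The zero-shot branch of the protocol above (frozen embeddings plus a simple classifier) can be sketched as follows. This is a minimal illustration under stated assumptions: the two-dimensional vectors stand in for embeddings that a pretrained scFM would produce, the nearest-centroid classifier is just one possible "simple classifier", and the helper names `fit_centroids` and `predict` are hypothetical. Fine-tuning with a classification layer and cross-entropy loss is not shown.

```python
import math


def fit_centroids(embeddings, labels):
    """Average the reference embeddings of each cell type into one centroid."""
    sums, counts = {}, {}
    for vector, label in zip(embeddings, labels):
        if label not in sums:
            sums[label] = [0.0] * len(vector)
            counts[label] = 0
        sums[label] = [s + x for s, x in zip(sums[label], vector)]
        counts[label] += 1
    return {
        label: [s / counts[label] for s in total] for label, total in sums.items()
    }


def predict(centroids, vector):
    """Assign the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], vector))


# Hypothetical stand-ins for scFM embeddings of a labeled reference dataset.
reference_embeddings = [[0.9, 0.1], [1.1, -0.1], [0.0, 1.0], [0.2, 0.8]]
reference_labels = ["T cell", "T cell", "B cell", "B cell"]

centroids = fit_centroids(reference_embeddings, reference_labels)

# Annotate new, unlabeled cells from their (hypothetical) embeddings.
query_embeddings = [[0.8, 0.2], [0.1, 0.7]]
print([predict(centroids, q) for q in query_embeddings])
```

In practice the predicted labels would still be checked against known marker genes or a held-out validation set, as the final protocol step describes.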

Performance Evaluation and Benchmarking

Recent benchmarking studies have evaluated multiple scFMs against traditional methods for cell type annotation. Performance is often assessed using metrics such as accuracy, F1-score, and the novel Lowest Common Ancestor Distance (LCAD), which measures the ontological proximity between misclassified cell types to assess the severity of errors [15].
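The idea behind an ontology-aware error measure such as LCAD can be illustrated on a toy hierarchy. The sketch below is an assumption-laden reading rather than the definition used in [15]: it treats the ontology as a simple tree (real cell ontologies are directed acyclic graphs in which a term can have several parents), and it scores a misclassification as the number of edges on the path between the true and predicted labels through their lowest common ancestor. The toy hierarchy and the function names are hypothetical.

```python
def path_to_root(node, parent):
    """Return [node, parent, grandparent, ..., root] for a child -> parent map."""
    path = [node]
    while node in parent:
        node = parent[node]
        path.append(node)
    return path


def lca_distance(true_label, predicted_label, parent):
    """Edges from each label up to their lowest common ancestor, summed."""
    true_path = path_to_root(true_label, parent)
    predicted_depth = {
        node: steps for steps, node in enumerate(path_to_root(predicted_label, parent))
    }
    # Walk upward from the true label; the first shared node is the LCA.
    for steps_up, node in enumerate(true_path):
        if node in predicted_depth:
            return steps_up + predicted_depth[node]
    raise ValueError("labels do not share an ancestor in this ontology")


# Hypothetical toy ontology (child -> parent), not an excerpt of a real ontology.
toy_parent = {
    "CD4 T cell": "T cell",
    "CD8 T cell": "T cell",
    "T cell": "lymphocyte",
    "B cell": "lymphocyte",
    "lymphocyte": "cell",
    "hepatocyte": "cell",
}

for predicted in ["CD4 T cell", "CD8 T cell", "B cell", "hepatocyte"]:
    print(predicted, lca_distance("CD4 T cell", predicted, toy_parent))
```

Under this reading, confusing a CD4 T cell with a CD8 T cell is a milder error than confusing it with a hepatocyte, which plain accuracy would count identically.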

Table 2: Benchmarking Results for Cell Type Annotation (Summary of Key Findings from [15])

| Model / Approach | Reported Strengths | Reported Limitations | Context for Optimal Use |
|---|---|---|---|
| scFMs (Zero-shot) | Capture biological insights into relational structures; robust to dataset variations [15]. | May not consistently outperform simpler models on small, specific datasets [15]. | Large, diverse datasets; when biological interpretability is prioritized. |
| scFMs (Fine-tuned) | High accuracy; leverage transfer learning from large-scale pretraining [15]. | Require computational resources for fine-tuning; risk of overfitting on small datasets [15]. | When sufficient labeled data and computational resources are available. |
| Traditional ML (e.g., SVM, HVGs) | Efficient and effective for specific, small-scale datasets with limited computational resources [15]. | Poor generalization to cell types not in the source data; limited by manual feature selection [15]. | Small, focused datasets with well-defined, known cell types. |

Notably, no single scFM consistently outperforms all others across all tasks and datasets. Model selection must be tailored based on factors like dataset size, task complexity, need for biological interpretability, and computational resources [15].

Core Downstream Task 2: Batch Integration

The Challenge of Batch Effects

Integrating multiple scRNA-seq datasets is a standard but challenging step in single-cell analysis. Technical differences between experiments (e.g., sequencing depth, protocols) and biological variations (e.g., different donors, species) create "batch effects" that can confound biological signals [31]. Effective integration is crucial for constructing large-scale atlases and for cross-study comparisons [31].

Transformer-Based Integration Approaches

Transformer-based scFMs like scGPT are designed to integrate diverse datasets by learning a unified representation of single-cell data that is robust to technical variations [1] [15]. The self-attention mechanism can theoretically learn to distinguish technical noise from biological signal after exposure to vast amounts of diverse data during pretraining. Some models incorporate batch information as special tokens during training to explicitly model and correct for these effects [1].

Benchmarking Integration Performance

Integration methods are evaluated on two key aspects: batch correction (how well technical variations are removed) and biological preservation (how well true biological variation is retained) [31]. Common metrics include:

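- iLISI (integration Local Inverse Simpson's Index): Measures the mixing of batches in local cell neighborhoods; higher values indicate better batch mixing [31].

- NMI (Normalized Mutual Information): Assesses the preservation of cell type clusters after integration by comparing cluster assignments with known cell type labels [31].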

Benchmarks indicate that while methods like conditional Variational Autoencoders (cVAEs) are popular, they can struggle with substantial batch effects (e.g., across species or technologies) and may lose biological information when increasing batch correction strength [31]. Advanced methods like sysVI, which combines VampPrior and cycle-consistency constraints, have been shown to improve integration across systems while better preserving biological signals [31].

Diagram 1: Batch Integration Workflow

Core Downstream Task 3: Atlas Construction

The Vision of Single-Cell Atlases

Single-cell atlases aim to create comprehensive maps of all cell types across tissues, organs, and organisms, serving as foundational references for biology and medicine [1] [31]. These large-scale efforts, such as the Human Cell Atlas, integrate data from thousands of individuals and conditions to capture the full spectrum of cellular diversity [1].

The Role of Foundation Models in Atlas Building

scFMs are uniquely positioned to address the central challenges of atlas construction:

- Scalability: They can process millions of cells from diverse sources [1] [15].

- Data Integration: Their pretraining on massive, heterogeneous datasets enables them to harmonize data across studies, technologies, and even species [1] [31].

- Unified Representation: They learn a consistent latent space that facilitates the comparison of cells across different biological contexts [1].

- Knowledge Capture: The embeddings generated by scFMs capture deep biological relationships between cell types and states [15].

Metrics for Atlas Quality Assessment

Beyond standard clustering metrics, novel ontology-informed metrics are being developed to evaluate the biological relevance of constructed atlases. The scGraph-OntoRWR metric, for instance, measures the consistency of cell type relationships captured by the model with prior biological knowledge encoded in cell ontologies [15].

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Single-Cell Analysis

| Item / Resource | Function | Example Sources / Tools |
|---|---|---|
| 10x Genomics Chromium | High-throughput single-cell RNA sequencing platform for generating scRNA-seq data. | 10x Genomics [32] |
| Public Data Repositories | Sources of large-scale, diverse scRNA-seq data for model pretraining and validation. | CZ CELLxGENE, Human Cell Atlas, GEO, SRA, PanglaoDB [1] |
| Pretrained scFMs | Foundational models that can be adapted for specific downstream tasks. | Geneformer, scGPT, scBERT, UCE, scFoundation [1] [15] |
| Data Processing Pipelines | Tools for processing raw sequencing data into analyzable gene expression matrices. | Cell Ranger (10x Genomics) [32] |
| Quality Control Tools | Software for assessing data quality and filtering low-quality cells. | Loupe Browser, SoupX, CellBender [32] |
| Benchmarking Frameworks | Standardized protocols and metrics for evaluating model performance on biological tasks. | scGraph-OntoRWR, LCAD, iLISI, NMI [15] [31] |

Transformer-based single-cell foundation models represent a paradigm shift in the analysis of scRNA-seq data. For the core downstream tasks of cell type annotation, batch integration, and atlas construction, these models offer powerful, scalable, and increasingly biologically informed approaches. Challenges remain, including computational intensity, variability in data quality, and the need for better interpretation of model representations [1], but the field is advancing rapidly. Future developments will likely focus on enhancing model robustness, interpretability, and scalability, further solidifying the role of scFMs as pivotal tools for understanding cellular function and disease mechanisms [1]. As benchmark studies suggest, success depends on selecting models and methods suited to the specific biological question and experimental context [15].

The advent of single-cell sequencing technologies has revolutionized our understanding of cellular heterogeneity, moving beyond transcriptomics alone to encompass multi-modal measurements including chromatin accessibility (ATAC-seq), proteomics, and spatial context. Each omic layer is informative on its own, but in concert they reveal new cell subtypes, cell-cell interactions, and interactions between omic layers that lead to gene regulatory and phenotypic outcomes [33]. However, integrating these disparate data types is a formidable challenge because they differ in dimensionality, statistical properties, and technological noise [34]. The emergence of transformer architectures in single-cell biology offers a promising framework to address these challenges, enabling the development of foundation models that can distill critical biological insights from millions of cells across multiple modalities [35] [36]. This technical guide examines current methodologies, computational frameworks, and experimental protocols for robust multiomics integration within the context of transformer-based approaches, providing researchers with practical strategies for making full use of their multimodal data.

Computational Frameworks for Multiomics Integration

Taxonomy of Integration Strategies

Integration methods can be broadly classified based on whether the multi-omics data is matched (profiled from the same cell) or unmatched (profiled from different cells) [33]. This distinction fundamentally shapes the computational approach:

Vertical Integration (Matched): Leverages the cell itself as an anchor to integrate different modalities assayed from the same cell. Methods include weighted nearest neighbors (Seurat v4), variational autoencoders (scMVAE, totalVI), and matrix factorization (MOFA+) [33] [34].