Troubleshooting Mismatched Automated Annotations: A Biomedical Research Guide

Abstract

This article provides a comprehensive guide for researchers and drug development professionals facing the challenge of mismatched automated annotations in biomedical AI. It explores the root causes of annotation noise, from inter-expert variability to data drift, and offers practical methodological solutions, including Human-in-the-Loop and active learning frameworks. The content details advanced troubleshooting techniques for quality assurance and optimization, and concludes with robust validation strategies and comparative analyses of annotation tools. The goal is to equip scientific teams with the knowledge to build more reliable, accurate, and generalizable AI models for critical applications in clinical research and drug discovery.

Understanding the Sources and Impact of Annotation Mismatches

Annotation noise refers to errors or inconsistencies in labeled data used for training artificial intelligence and machine learning models. In scientific and clinical research, particularly in drug development, annotation noise presents a significant challenge by compromising the reliability of AI-driven discoveries. This technical support guide defines the types of annotation noise, details methodologies for its detection, and provides troubleshooting solutions for researchers encountering mismatched automated annotations in their experiments.

## Understanding Annotation Noise: Definitions and Taxonomy

What is Annotation Noise?

Annotation noise encompasses all deviations from accurate labeling in datasets. These inconsistencies can stem from various sources, including human error, subjective judgment, insufficient guidelines, or technical limitations in automated labeling systems. In high-stakes fields like medical research, annotation inconsistencies can substantially degrade machine learning systems, yielding less generalizable features and poorer predictive performance [1].

A Taxonomy of Annotation Noise in Complex Tasks

Categorization Noise

- Definition: Occurs when an object or data point is assigned an incorrect class label [2].

- Example: In medical imaging, a benign tumor might be mislabeled as malignant, or in pharmaceutical research, a compound might be incorrectly categorized.

Localization Noise

- Definition: Arises when the spatial coordinates or boundaries of an object are inaccurately annotated [2].

- Example: In cellular imaging, bounding boxes for organelles might be imprecise, capturing either too much or too little of the target structure.

Missing Annotation Noise

- Definition: Refers to objects or data points that are entirely unlabeled despite being present and relevant [2].

- Example: In high-content screening, a percentage of cells in a well might fail to be annotated due to crowding or low contrast.

Bogus Bounding Box Noise

- Definition: Involves the presence of annotations where no actual objects or relevant data points exist [2].

- Example: In microscopic image analysis, artifacts or debris might be incorrectly annotated as relevant biological structures.

## Quantitative Impact of Annotation Noise

Table 1: Measured Impact of Annotation Noise on Model Performance

| Noise Type | Performance Metric | Impact Level | Experimental Context |
|---|---|---|---|
| Mixed Annotation Noise | Model Classification Agreement | Fleiss' κ = 0.383 (Fair) | ICU clinical decision-making with 11 expert annotators [3] |
| Categorization Noise | Detection Precision | 75% with optimal threshold | Object detection with 20% injected noise [1] |
| Categorization Noise | Detection Recall | 93% with optimal threshold | Object detection with 20% injected noise [1] |
| Expert Disagreement | External Validation Agreement | Average Cohen's κ = 0.255 (Minimal) | Cross-validation of 11 clinical expert models [3] |
| Annotation Inconsistencies | QA Time Allocation | Up to 40% of total annotation time | Standard annotation pipeline reporting [1] |

## Experimental Protocols for Noise Detection

Protocol 1: Mislabel Detection in Object Detection Tasks

This methodology identifies categorization and localization noise in bounding box annotations [1].

Step-by-Step Workflow:

- Model Prediction: Run inference on your dataset using a trained detection model (e.g., Faster R-CNN).

- Annotation-Prediction Matching: For each bounding box annotation, identify the model prediction with the maximum Intersection over Union (IOU).

- Discrepancy Measurement: Compute the L2 distance between the one-hot vector of the annotated class and the model's softmax output (the predicted class probabilities) for the matched prediction.

- Threshold Optimization: Establish an optimal threshold for the mislabel metric based on your precision-recall requirements.

- Validation: Manually inspect annotations flagged as potentially noisy to confirm accuracy.
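
The matching and scoring steps of this workflow can be sketched in a few lines of plain Python. This is a minimal illustration, not the implementation from [1]: the dictionary layout (`box`, `label`, `probs`), the function names, and the threshold of 0.5 are all assumptions made for the example, and the class probabilities are assumed to come from a detector that has already been run.

```python
import math

def iou(box_a, box_b):
    """Intersection over Union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def mislabel_score(annotation, predictions):
    """L2 distance between the one-hot annotated class and the class
    probabilities of the max-IoU prediction. Returns (score, best_iou);
    score is None when no prediction overlaps the annotation."""
    best, best_iou = None, 0.0
    for pred in predictions:
        overlap = iou(annotation["box"], pred["box"])
        if overlap > best_iou:
            best, best_iou = pred, overlap
    if best is None:
        return None, 0.0
    one_hot = [1.0 if c == annotation["label"] else 0.0
               for c in range(len(best["probs"]))]
    score = math.sqrt(sum((o - p) ** 2 for o, p in zip(one_hot, best["probs"])))
    return score, best_iou

def flag_suspects(annotations, predictions, threshold=0.5):
    """Indices of annotations whose score exceeds the (assumed) threshold."""
    flagged = []
    for idx, ann in enumerate(annotations):
        score, _ = mislabel_score(ann, predictions)
        if score is not None and score > threshold:
            flagged.append(idx)
    return flagged

# Hypothetical data: the second annotation says class 1, the model says class 0.
annotations = [{"box": (10, 10, 50, 50), "label": 0},
               {"box": (60, 60, 100, 100), "label": 1}]
predictions = [{"box": (12, 11, 49, 52), "probs": [0.95, 0.05]},
               {"box": (61, 59, 99, 101), "probs": [0.90, 0.10]}]
suspects = flag_suspects(annotations, predictions)
```

In practice the threshold would be tuned on a small manually verified subset, as described in the Threshold Optimization step, and flagged items would go to manual review rather than being deleted automatically.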

Protocol 2: Inter-Annotator Agreement Assessment

This approach quantifies systematic inconsistencies across multiple annotators, particularly relevant for subjective domains like medical annotation [3].

Step-by-Step Workflow:

- Multiple Annotations: Have multiple domain experts (ideally 3+) annotate the same subset of data.

- Agreement Calculation: Compute Fleiss' Kappa for categorical annotations or Intraclass Correlation Coefficient (ICC) for continuous measures.

- Disagreement Analysis: Identify specific data points or categories with the lowest agreement rates.

- Guideline Refinement: Update annotation protocols to address sources of disagreement.

- Continuous Monitoring: Implement ongoing agreement assessments throughout the annotation project.

Protocol 3: Universal Noise Annotation (UNA) Assessment

A comprehensive framework for evaluating all noise types simultaneously in object detection datasets [2].

Step-by-Step Workflow:

- Noise Synthesis: Systematically inject all four noise types (categorization, localization, missing, bogus) into a validation dataset.

- Model Training: Train your detection model on both the clean and the noise-injected datasets.

- Error Analysis: Use the TIDE (Toolkit for Identifying Detection Errors) framework to quantify how each noise type contributes to performance degradation.

- Robustness Evaluation: Compare model performance across noise conditions to establish baseline robustness.

- Mitigation Strategy: Prioritize addressing the noise types with the largest negative impact on your specific model.
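
The noise synthesis step can be prototyped before committing to a full benchmark. The sketch below is an illustration under stated assumptions, not the UNA reference code from [2]: the annotation layout, the 20% shift limit for localization noise, the size range of bogus boxes, and the function name `inject_noise` are all hypothetical choices.

```python
import random

NOISE_TYPES = ("categorization", "localization", "missing", "bogus")

def inject_noise(annotations, num_classes, image_size, rate=0.2, seed=0):
    """Return a noisy copy of `annotations`. Each output entry records the
    noise applied to it and the index of the annotation it came from."""
    rng = random.Random(seed)
    width, height = image_size
    noisy = []
    for idx, ann in enumerate(annotations):
        x1, y1, x2, y2 = ann["box"]
        label = ann["label"]
        kind = rng.choice(NOISE_TYPES) if rng.random() < rate else None
        if kind == "missing":
            continue  # drop the annotation entirely
        if kind == "categorization":
            label = rng.choice([c for c in range(num_classes) if c != label])
        elif kind == "localization":
            # Shift the box by up to 20% of its size, then clamp to the image.
            dx = rng.uniform(-0.2, 0.2) * (x2 - x1)
            dy = rng.uniform(-0.2, 0.2) * (y2 - y1)
            x1, x2 = max(0.0, x1 + dx), min(float(width), x2 + dx)
            y1, y2 = max(0.0, y1 + dy), min(float(height), y2 + dy)
        elif kind == "bogus":
            # Keep the real annotation and add a random box that does not
            # correspond to any annotated object.
            bw, bh = rng.uniform(5, width / 4), rng.uniform(5, height / 4)
            bx, by = rng.uniform(0, width - bw), rng.uniform(0, height - bh)
            noisy.append({"box": (bx, by, bx + bw, by + bh),
                          "label": rng.randrange(num_classes),
                          "noise": "bogus", "source": None})
            kind = None
        noisy.append({"box": (x1, y1, x2, y2), "label": label,
                      "noise": kind, "source": idx})
    return noisy

# Hypothetical clean annotations for a 640 x 480 image with three classes.
clean_annotations = [{"box": (10, 10, 60, 60), "label": 0},
                     {"box": (100, 120, 180, 200), "label": 1},
                     {"box": (300, 50, 400, 150), "label": 2}] * 20
noisy_annotations = inject_noise(clean_annotations, 3, (640, 480), rate=1.0, seed=1)
```

Keeping the `noise` and `source` fields makes it possible to relate the per-error-type breakdown from the error analysis step back to the noise that was actually injected.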

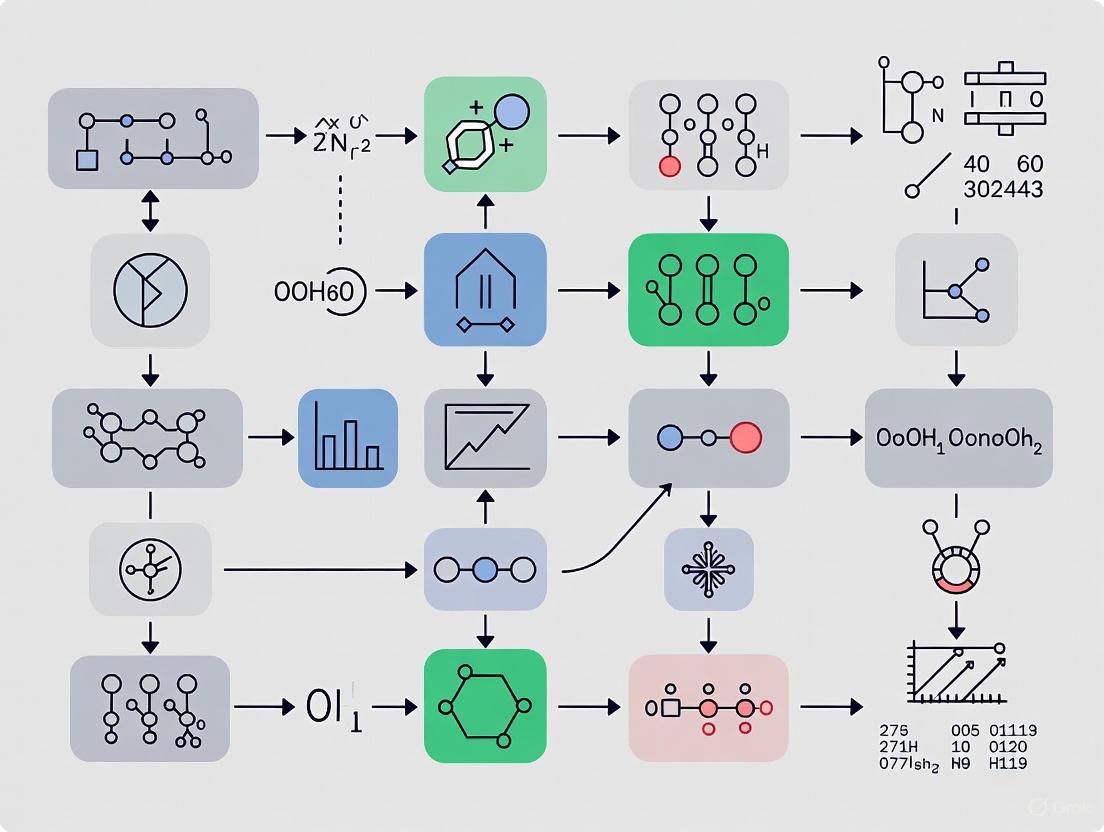

## Experimental Workflow Visualization

Diagram 1: Annotation Noise Assessment Workflow

## Troubleshooting Guides & FAQs

FAQ 1: How can we maintain annotation quality as our dataset scales?

Challenge: Annotation consistency decreases as project size increases, especially with multiple annotators.

Solutions:

- Implement AI-assisted pre-labeling to establish a consistency baseline [4]

- Use automated quality control checks to flag outliers [4]

- Establish clear annotation guidelines with visual examples [5]

- Conduct regular calibration sessions with the annotation team [3]

- Track Inter-Annotator Agreement (IAA) metrics to monitor consistency [4]

FAQ 2: What approaches effectively detect biased annotations?

Challenge: Systematic biases in annotations lead to skewed model performance.

Solutions:

- Perform stratified analysis of model performance across data segments [4]

- Implement bias detection tools that flag underrepresented segments [4]

- Diversify data sources to ensure representative sampling [4]

- Conduct "blind spot" analysis where model performance is unexpectedly poor

- Use adversarial validation techniques to identify distribution shifts

FAQ 3: How can we efficiently validate automated annotations?

Challenge: Manual validation of all annotations is time-consuming and expensive.

Solutions:

- Implement the mislabel metric with optimal thresholding to focus manual review on risky annotations [1]

- Use confidence-based sampling to prioritize low-confidence predictions for review

- Employ semi-supervised approaches where uncertain annotations are treated as unlabeled data [1]

- Implement active learning pipelines that continuously identify uncertain labels for review [4]

- Set up a tiered review system with expert oversight for borderline cases

FAQ 4: How do we handle legitimate expert disagreement in annotations?

Challenge: In subjective domains, even experts may legitimately disagree on labels.

Solutions:

- Acknowledge and model the spectrum of opinions rather than enforcing artificial consensus [3]

- Implement probabilistic labeling that captures uncertainty [3]

- Assess annotation "learnability" and use only consistently learnable patterns for model training [3]

- Consider multiple ground truth perspectives when evaluating model performance

- Document disagreement patterns to refine annotation guidelines
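
Probabilistic labeling, as suggested above, can be as simple as turning the experts' votes into a distribution instead of a single winner. The following is a minimal sketch; the five-point class list and the function names are assumptions for illustration, and the entropy is included only as one possible way to quantify how contested an item is.

```python
import math
from collections import Counter

def soft_label(votes, classes):
    """Empirical label distribution over `classes` from a list of expert votes."""
    counts = Counter(votes)
    return [counts[c] / len(votes) for c in classes]

def entropy(dist):
    """Shannon entropy in bits; 0 means the experts fully agree."""
    return -sum(p * math.log2(p) for p in dist if p > 0)

# Hypothetical severity grades A-E from five experts for one case.
classes = ["A", "B", "C", "D", "E"]
votes = ["A", "A", "B", "C", "A"]
distribution = soft_label(votes, classes)   # [0.6, 0.2, 0.2, 0.0, 0.0]
uncertainty = entropy(distribution)
```

A model can then be trained against `distribution` as a soft target, while high-entropy items are natural candidates for the disagreement documentation mentioned in the last bullet.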

## The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Annotation Noise Research

| Tool/Resource | Function | Application Context |
|---|---|---|
| TIDE Framework | Error analysis and decomposition | Quantifies impact of different error types in object detection [2] |
| SuperAnnotate QA Tools | Manual and automated quality assurance | Accelerates annotation review by 4x with pin functionality and approve/disapprove workflow [1] |
| Fleiss' Kappa / Cohen's Kappa | Inter-annotator agreement measurement | Quantifies consistency between multiple human annotators [3] |
| UNA Benchmark | Comprehensive noise evaluation | Standardized benchmark for all noise types in object detection [2] |
| Active Learning Pipelines | Continuous quality maintenance | Identifies uncertain or outdated labels for review throughout model lifecycle [4] |
| AI-Assisted Pre-labeling | Consistency establishment | Provides initial labels that human annotators refine, reducing inconsistencies by 85%+ [4] |

## Annotation Noise Detection System Architecture

Diagram 2: Comprehensive Noise Detection System

## Advanced Methodologies for Noise Robustness

Leveraging Noisy Annotations in Model Development

Rather than simply removing noisy annotations, advanced approaches leverage them:

Learnability Assessment: Instead of seeking a "super expert" or using simple majority voting, assess which annotations produce consistently learnable patterns. Models trained on these "learnable" annotated datasets often outperform those trained on full consensus annotations [3].

Noise-Tolerant Architectures: Implement models that explicitly account for annotation uncertainty during training, such as noise-resistant loss functions or probabilistic frameworks that capture annotator expertise.

Semi-Supervised Learning: Treat detected mislabeled samples as unlabeled data in semi-supervised settings, potentially leveraging their information content while mitigating the impact of incorrect labels [1].

Effective management of annotation noise requires a systematic approach combining quantitative assessment, targeted detection methodologies, and continuous quality monitoring. By implementing the protocols and solutions outlined in this guide, researchers can significantly improve annotation quality, enhance model reliability, and accelerate drug development pipelines. The key lies in recognizing that some level of noise is inevitable, and focusing resources on its detection, measurement, and mitigation rather than its complete elimination.

FAQs: Understanding and Troubleshooting Noisy Labels

What are "noisy labels" and why are they a critical problem in biomedical AI? Noisy labels refer to incorrect annotations in training data. In biomedical contexts, this is especially critical because labeling medical images is resource-intensive, requires domain expertise, and suffers from high inter- and intra-observer variability [6]. These noisy labels can mislead deep neural networks, causing them to learn incorrect patterns and ultimately make erroneous predictions that could influence decisions impacting human health [6] [7].

What is the difference between Instance-Independent and Instance-Dependent Label Noise? Label noise is not a single entity; its type significantly impacts the choice of remedy. The table below summarizes the key differences.

| Noise Type | Description | Impact on Models |
|---|---|---|
| Instance-Independent Label Noise (IIN) | Label flipping depends only on the original class. Simpler to model but less realistic [7]. | Many existing techniques handle IIN well, but their effectiveness is limited for real-world noise [7]. |
| Instance-Dependent Label Noise (IDN) | The probability of a wrong label depends on both the true label and the specific input features of the instance [7]. | More accurately represents real-world scenarios but is much harder to combat, as models may overfit complex decision boundaries [7]. |
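
The distinction becomes concrete when the two noise types are simulated. The sketch below is a toy illustration, not a method from [7]: it assumes a binary task with a one-dimensional feature whose decision boundary sits at 0, and the exponential decay used for the instance-dependent flip probability is an arbitrary choice.

```python
import math
import random

def flip_iin(labels, transition, rng):
    """Instance-independent noise: the noisy label is drawn from the row of
    the class-level transition matrix that belongs to the true label."""
    classes = range(len(transition))
    return [rng.choices(classes, weights=transition[y])[0] for y in labels]

def flip_idn(features, labels, rng, max_rate=0.45, scale=1.0):
    """Instance-dependent noise (toy binary case): samples close to the
    boundary at x = 0 are flipped more often than samples far from it."""
    noisy = []
    for x, y in zip(features, labels):
        p_flip = max_rate * math.exp(-abs(x) / scale)
        noisy.append(1 - y if rng.random() < p_flip else y)
    return noisy

rng = random.Random(0)
features = [rng.uniform(-3, 3) for _ in range(1000)]
labels = [1 if x > 0 else 0 for x in features]

# IIN: every class-0 sample has a 20% chance of becoming class 1, and
# every class-1 sample a 10% chance of becoming class 0, regardless of x.
iin_labels = flip_iin(labels, [[0.8, 0.2], [0.1, 0.9]], rng)
# IDN: the flip chance depends on where each sample lies.
idn_labels = flip_idn(features, labels, rng)
```

Comparing where the flipped labels fall along the feature axis shows why IDN is harder: the errors concentrate exactly where the classifier has the least evidence.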

How can I identify if my dataset is affected by shortcut learning and data acquisition biases? A major cause of poor generalization is shortcut learning, where models exploit spurious correlations (like specific scanner artifacts) present in the training data instead of learning the underlying pathology [8]. You can test for this using a shuffling test: randomly shuffle spatial/temporal components of your data (e.g., image patches) to destroy the true semantic features while retaining acquisition biases. If your model's performance remains high on this shuffled data, it indicates reliance on shortcuts rather than robust features [8].
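
A patch-level shuffle of the kind described above might look like the following. This is a simplified sketch rather than the procedure from [8]: it treats an image as a plain nested list whose height and width are divisible by the patch size, and the function name is hypothetical.

```python
import random

def shuffle_patches(image, patch, rng):
    """Randomly permute non-overlapping patch x patch tiles of a 2-D image
    (list of rows). Pixel statistics are kept; spatial semantics are destroyed."""
    height, width = len(image), len(image[0])
    coords = [(r, c) for r in range(0, height, patch)
                     for c in range(0, width, patch)]
    tiles = [[row[c:c + patch] for row in image[r:r + patch]] for r, c in coords]
    rng.shuffle(tiles)
    out = [[None] * width for _ in range(height)]
    for (r, c), tile in zip(coords, tiles):
        for i, tile_row in enumerate(tile):
            out[r + i][c:c + patch] = tile_row
    return out

# Toy 4 x 4 "image" with pixel values 0..15, shuffled in 2 x 2 tiles.
image = [[r * 4 + c for c in range(4)] for r in range(4)]
shuffled = shuffle_patches(image, 2, random.Random(0))
```

The diagnostic itself is then a comparison: evaluate the trained model on the original and on the shuffled test images. Accuracy on the shuffled set that stays well above chance suggests the model is relying on acquisition-related cues rather than anatomy or pathology.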

What is a principled approach to selective deployment when generalization is a concern? When a model is known to underperform on specific patient subgroups, three ethical deployment options exist [9].

| Option | Description | Ethical Consideration |
|---|---|---|
| 1. Delay Deployment | Wait until the algorithm works equally well for all subgroups. | Avoids harm but unfairly delays benefits for populations where the model is accurate [9]. |
| 2. Expedite Deployment | Deploy the model indiscriminately for all. | Risks harming patients from underrepresented groups due to poor performance [9]. |
| 3. Selective Deployment | Deploy the model only for subgroups where it is known to be trustworthy, deferring others to human experts. | An ethical intermediary solution that provides benefits where safe while preventing harm and maintaining an equivalent standard of care for all [9]. |

Experimental Protocols for Combating Noisy Labels

Protocol 1: Implementing a Typicality- and Instance-Dependent Noise (TIDN) Combating Framework

This advanced protocol is designed to handle complex, real-world label noise where atypical samples are more likely to be mislabeled [7].

- Typicality Estimation: Train a Support Vector Machine (SVM) on the features extracted from your dataset. For each sample, calculate its distance to the decision boundary; this distance represents its "typicality," with smaller distances indicating more atypical, and potentially harder-to-label, instances [7].

- TIDN-Attention Module: Integrate an attention module into your deep learning classifier. This module maps the extracted features of each instance to a sample-specific noisy transition matrix, T(X), which models the probability of a clean label being flipped to a noisy one [7].

- Recursive Training Algorithm: Follow an expectation-maximization (EM)-like recursive process:

  - E-step: Use the current model and transition matrix to estimate the latent clean labels.

  - M-step: Update the model parameters and the TIDN-attention module's parameters using the estimated clean labels.

- Prediction: After training, the network can make correct predictions by correcting the observed noisy labels using the learned transition matrix [7].
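
To make the role of the transition matrix concrete, the snippet below shows the generic relationship between clean-label and noisy-label probabilities for a single instance. It is not the TIDN-attention module from [7]; the matrix values are invented, and in the actual protocol T(X) would be produced per sample by the learned module.

```python
def noisy_posterior(clean_probs, transition):
    """P(noisy = j | x) = sum_i P(clean = i | x) * T[i][j], where T[i][j] is
    the probability that clean class i is recorded as class j."""
    k = len(clean_probs)
    return [sum(clean_probs[i] * transition[i][j] for i in range(k))
            for j in range(k)]

# Hypothetical instance: the classifier is 90% sure of class 0, and for this
# instance class 0 is recorded as class 1 in 20% of cases.
clean = [0.9, 0.1]
t_x = [[0.8, 0.2],
       [0.1, 0.9]]
noisy = noisy_posterior(clean, t_x)   # approximately [0.73, 0.27]
```

Training against the observed (noisy) labels through this mapping lets the underlying classifier keep modelling the clean label distribution.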

Protocol 2: Data Curation and Sample Selection via the "Small Loss Trick"

This model-free protocol is useful for handling simpler forms of noise and is often used in co-teaching methods [6] [7].

- Dual Network Setup: Train two neural networks simultaneously.

- Small Loss Selection: In each mini-batch, each network forward-propagates all data and calculates the loss for each sample.

- Data Exchange: Each network selects the samples with the smallest losses (presumed to be cleanly labeled) and sends these selected samples to the other network.

- Weight Update: Each network then updates its weights using the small-loss samples received from its peer. This process helps each network learn from likely clean data and avoid overfitting to noisy labels.
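
The selection and exchange steps reduce to a small amount of index bookkeeping. The sketch below assumes the per-sample losses of both networks for one mini-batch are already available as lists; `keep_ratio` is a hypothetical parameter for the fraction of samples treated as clean.

```python
def small_loss_indices(losses, keep_ratio):
    """Indices of the `keep_ratio` fraction of samples with the smallest loss."""
    keep = max(1, int(len(losses) * keep_ratio))
    return sorted(range(len(losses)), key=losses.__getitem__)[:keep]

def co_teaching_exchange(losses_a, losses_b, keep_ratio):
    """Each network picks its small-loss samples and hands them to its peer.
    Returns (indices network A trains on, indices network B trains on)."""
    picked_by_a = small_loss_indices(losses_a, keep_ratio)
    picked_by_b = small_loss_indices(losses_b, keep_ratio)
    return picked_by_b, picked_by_a

# Hypothetical per-sample losses for a mini-batch of four samples.
losses_a = [0.1, 2.3, 0.2, 1.9]
losses_b = [0.3, 0.2, 2.5, 0.1]
train_a, train_b = co_teaching_exchange(losses_a, losses_b, keep_ratio=0.5)
```

Here network A updates on samples 3 and 1 (chosen by B), while network B updates on samples 0 and 2 (chosen by A). The cross-over is the point of the design: each network is shielded from its own selection errors.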

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and data-centric "reagents" essential for experimenting with and mitigating noisy labels.

| Item | Function & Purpose |
|---|---|
| Transition Matrix, T(X) | A model-based core component that represents the probability of a clean label flipping to a noisy label. Essential for statistically consistent classifiers in the presence of label noise [7]. |
| Typicality Metric | A measure, often the distance to a decision boundary in a feature space, used to identify samples that are atypical and thus more susceptible to being mislabeled [7]. |
| Small-Loss Criterion | A model-free heuristic that assumes samples with lower training loss are more likely to have clean labels. Used for selecting clean data during training [7]. |
| Data Shuffling Test | A diagnostic procedure to detect shortcut learning. By destroying semantic features, it tests if a model relies on spurious data acquisition biases [8]. |
| TIDN-Attention Module | A neural network module that learns to map input features to an instance-dependent transition matrix, enabling the handling of complex, real-world noise [7]. |
| Anchor Points | Highly confident samples (e.g., with high predicted probability) used in some methods to estimate a class-level noise transition matrix under the IIN assumption [7]. |

Core Concept: What is Fleiss' Kappa?

Fleiss' Kappa (κ) is a statistical measure used to assess the reliability of agreement between a fixed number of raters when they classify items into categorical ratings [10]. It answers a critical question: to what extent do multiple raters agree on a classification, beyond what would be expected purely by chance [11]?

It is particularly useful because it generalizes beyond two raters, whereas Cohen's Kappa is limited to only two [10]. Fleiss' Kappa is a measure of inter-rater reliability for nominal (categorical) scales [11].

How to Calculate and Interpret Fleiss' Kappa

The formula for Fleiss' Kappa is [10]:

$$\kappa = \frac{\bar{P} - \bar{P}_e}{1 - \bar{P}_e}$$

where:

- $\bar{P}$ is the observed agreement among raters.

- $\bar{P}_e$ is the expected agreement by chance.

The following table provides the standard benchmarks for interpreting the Kappa value, as established by Landis and Koch (1977) [10] [11].

| Kappa (κ) Value | Level of Agreement |
|---|---|
| κ ≤ 0 | Poor |
| 0.01 – 0.20 | Slight |
| 0.21 – 0.40 | Fair |
| 0.41 – 0.60 | Moderate |
| 0.61 – 0.80 | Substantial |
| 0.81 – 1.00 | Almost Perfect |
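
The calculation and the benchmark table above can be combined in a short stdlib-only sketch. The input is assumed to be a count matrix in which entry `[i][j]` is the number of raters who assigned item `i` to category `j`; the example counts (10 items, 14 raters, 5 categories) are illustrative data, and the function names are not from any particular library.

```python
def fleiss_kappa(counts):
    """Fleiss' kappa from an items x categories matrix of rater counts.
    Every item must have been rated by the same number of raters."""
    n_items = len(counts)
    n_raters = sum(counts[0])
    if any(sum(row) != n_raters for row in counts):
        raise ValueError("each item needs the same number of ratings")
    n_cats = len(counts[0])
    # Share of all assignments that went to each category.
    p_j = [sum(row[j] for row in counts) / (n_items * n_raters)
           for j in range(n_cats)]
    # Per-item agreement: fraction of rater pairs that agree.
    p_i = [(sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
           for row in counts]
    p_bar = sum(p_i) / n_items          # observed agreement
    p_e = sum(p * p for p in p_j)       # agreement expected by chance
    # Note: undefined (division by zero) if every rating is in one category.
    return (p_bar - p_e) / (1 - p_e)

def interpret(kappa):
    """Map kappa to the agreement levels listed in the table above."""
    if kappa <= 0:
        return "Poor"
    for upper, name in [(0.20, "Slight"), (0.40, "Fair"),
                        (0.60, "Moderate"), (0.80, "Substantial")]:
        if kappa <= upper:
            return name
    return "Almost Perfect"

ratings = [
    [0, 0, 0, 0, 14], [0, 2, 6, 4, 2], [0, 0, 3, 5, 6], [0, 3, 9, 2, 0],
    [2, 2, 8, 1, 1],  [7, 7, 0, 0, 0], [3, 2, 6, 3, 0], [2, 5, 3, 2, 2],
    [6, 5, 2, 1, 0],  [0, 2, 2, 3, 7],
]
kappa = fleiss_kappa(ratings)   # approximately 0.210
level = interpret(kappa)        # "Fair"
```

For production analyses, an established statistics package is preferable to hand-rolled code, since it will also report a significance test.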

Troubleshooting FAQs: Resolving Low Agreement

Q1: Our Fleiss' Kappa score is low ("Slight" or "Fair"). What are the most common causes? Low agreement often stems from inconsistencies in the annotation process itself. Common causes include [12] [13]:

- Ambiguous Guidelines: Incomplete, vague, or contradictory instructions for raters.

- Insufficient Rater Training: Raters lack understanding of the categories or the overall purpose of the annotation [13].

- Inherent Subjectivity: The task involves concepts (e.g., sentiment, intent) that are naturally open to interpretation [12].

- Complex or Overlapping Categories: The definitions of the categories are not mutually exclusive or are too difficult to distinguish consistently [13].

Q2: What specific steps can we take to improve a low Kappa score? A multi-faceted approach targeting the root causes is most effective [12] [13]:

- Revise and Clarify Annotation Guidelines: Make instructions explicit with numerous, clear examples, especially for edge cases [12] [13].

- Implement Consensus Meetings: Hold regular sessions where raters discuss and resolve their disagreements to build a shared understanding [12].

- Conduct Iterative Rater Training: Train raters not just initially, but provide refresher courses and ongoing feedback based on quality control checks [12] [13].

- Optimize the Annotation Interface: Use tools that enforce guidelines and reduce the cognitive load on raters [12].

Q3: How does Fleiss' Kappa relate to the problem of mismatched automated annotations in research? Fleiss' Kappa is a diagnostic tool. A low Kappa in the training data indicates inconsistent ground truth, which directly undermines the development of reliable automated annotation systems. If human raters cannot agree, an AI model will learn from this noisy, unreliable data, leading to mismatched and erroneous automated annotations that propagate through the AI development lifecycle [13]. Establishing a high Kappa is therefore a prerequisite for creating trustworthy automated systems.

Q4: Our project requires raters to assign multiple categories to a single item. Can we still use Fleiss' Kappa? The standard Fleiss' Kappa requires mutually exclusive categories. However, recent methodological advances have proposed a generalized version of Fleiss' Kappa designed specifically for scenarios where raters can assign a subject to one or more nominal categories [14]. You would need to ensure you are using a statistical tool or library that implements this generalized version.

Experimental Protocol: Measuring IAA with Fleiss' Kappa

This protocol provides a step-by-step methodology for conducting an Inter-Annotator Agreement study.

1. Pre-Annotation Phase

- Define Categories: Establish a set of categorical labels. Ensure they are mutually exclusive and comprehensively cover the domain.

- Develop Annotation Guidelines: Create a detailed document with the operational definition for each category. Include plenty of annotated examples and rules for handling edge cases [12].

- Train Raters: Conduct a training session with all raters using the guidelines. Practice on a small sample and calculate an initial Kappa to gauge baseline understanding.

2. Annotation Phase

- Sample Selection: Randomly select a representative subset of items (e.g., 50-100) from your full dataset [11].

- Blinded Annotation: Each rater should independently classify every item in the sample. The process must be blinded to prevent raters from influencing each other.

3. Analysis Phase

- Compile Ratings: Organize the results into a ratings matrix.

- Calculate Fleiss' Kappa: Use statistical software (e.g., R, Python, or an online calculator) to compute the Kappa statistic and its significance [11].

- Analyze Disagreements: If Kappa is low, perform a qualitative analysis of items with the highest disagreement to identify patterns and root causes [13].

4. Iteration and Action Phase

- Refine Guidelines: Based on the disagreement analysis, clarify and improve your annotation guidelines.

- Retrain and Re-measure: If significant changes are made, retrain raters and perform another round of IAA measurement on a new sample to validate improvement.
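
For the disagreement analysis in the Analysis Phase, it helps to rank items by how much the raters diverged so that the qualitative review starts with the most contested cases. A minimal sketch, assuming the ratings are stored as a mapping from a hypothetical item ID to the list of labels it received:

```python
from collections import Counter

def item_agreement(ratings):
    """Fraction of rater pairs that agree on one item (1.0 = unanimous)."""
    n = len(ratings)
    counts = Counter(ratings)
    return sum(c * (c - 1) for c in counts.values()) / (n * (n - 1))

def rank_by_disagreement(items):
    """Item IDs ordered from lowest to highest agreement."""
    return sorted(items, key=lambda item_id: item_agreement(items[item_id]))

# Hypothetical ratings from three raters.
items = {
    "case_01": ["A", "A", "A"],
    "case_02": ["A", "B", "C"],
    "case_03": ["A", "A", "B"],
}
review_order = rank_by_disagreement(items)
```

In this example `case_02` is reviewed first because no two raters agree on it. The per-item score is the same quantity that Fleiss' Kappa averages to obtain the observed agreement.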

The Scientist's Toolkit: Key Reagents for IAA Analysis

| Tool / Reagent | Function |
|---|---|
| Fleiss' Kappa Statistic | The core metric for chance-corrected agreement between multiple raters on a categorical scale [10] [11]. |
| Annotation Guidelines Document | The definitive reference that standardizes definitions, rules, and examples to ensure consistent rater judgment [12] [13]. |
| IAA Calculation Software (e.g., R, Python, Numiqo) | Tools to compute the Fleiss' Kappa statistic from a matrix of rater assignments [11]. |
| Generalized Kappa Statistic | An extension of Fleiss' Kappa for experimental designs where raters can assign multiple categories to a single subject [14]. |
| Disagreement Analysis Matrix | A qualitative tool (e.g., a spreadsheet) for logging and reviewing items with low rater agreement to identify systematic errors [13]. |

IAA Workflow for Automated Annotation

The following diagram illustrates the recommended workflow for integrating IAA measurement into the development of an automated annotation system, highlighting critical feedback loops for quality control.

Frequently Asked Questions

Q1: What are the primary sources of annotation inconsistency in clinical settings? Annotation inconsistencies among clinical experts primarily arise from four key areas [3]:

- Insufficient Information: Poor quality data or unclear annotation guidelines.

- Human Error: Cognitive "slips" due to factors like fatigue or cognitive overload.

- Observer Subjectivity and Bias: Inherent differences in expert judgment and systematic biases.

- Insufficient Domain Expertise: Though this is less likely when highly experienced clinicians are used.

Q2: I have a dataset labeled by multiple experts. Is majority voting the best way to create a single ground truth? Not necessarily. Research indicates that standard consensus methods like majority vote can consistently lead to suboptimal models [3]. A more effective approach is to assess the learnability of each expert's annotations and use only the datasets deemed 'learnable' to determine the consensus, which has been shown to achieve optimal models in most cases [3].

Q3: What is the difference between "bias" and "noise" in human judgment? In clinical judgment, bias is a systematic error (e.g., consistently underestimating pain for a specific patient group), while noise is unwanted random variability (e.g., different clinicians making different judgments for the same patient) [15]. Reducing either improves overall judgment accuracy.

Q4: What strategies can reduce noise in clinical annotations and decision-making? Two effective strategies for reducing human judgment noise are [15]:

- Using Algorithms: Well-designed scoring systems (e.g., APACHE IV) or AI models apply consistent rules, eliminating inter-clinician variability.

- Averaging Independent Judgments: Combining independent judgments from multiple clinicians can reduce noise, as random errors tend to cancel each other out.
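
The effect of averaging can be checked with a small simulation. The sketch below assumes each clinician's judgment is the true score plus independent Gaussian noise, which is a simplification; the numbers (true score 3.0, noise standard deviation 1.0) are arbitrary.

```python
import random
import statistics

def spread_of_average(n_raters, n_cases=2000, true_score=3.0,
                      noise_sd=1.0, seed=0):
    """Standard deviation of the averaged judgment across simulated cases."""
    rng = random.Random(seed)
    averages = []
    for _ in range(n_cases):
        judgments = [rng.gauss(true_score, noise_sd) for _ in range(n_raters)]
        averages.append(sum(judgments) / n_raters)
    return statistics.pstdev(averages)

single = spread_of_average(1)   # close to noise_sd
panel = spread_of_average(5)    # close to noise_sd / sqrt(5)
```

Under these assumptions the spread shrinks roughly with the square root of the number of raters. Averaging only cancels independent random error; a bias shared by all raters is left untouched, which is why bias and noise need to be addressed separately.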

Troubleshooting Guides

Problem: Model Performance is Inconsistent or Poor During External Validation

Description: A model trained on annotations from one set of clinical experts performs poorly when validated on an external dataset, and different models built from different experts' labels show low agreement with each other.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| High System Noise: Significant unwanted variability between expert annotators [15]. | Calculate inter-annotator agreement metrics (e.g., Fleiss' κ, Cohen's κ). A "fair" or "minimal" agreement (e.g., κ = 0.383) indicates high system noise [3]. | Implement noise-reduction strategies. Use algorithms to standardize labels where possible, or average independent judgments from multiple experts [15]. |
| Suboptimal Consensus Method: Using a simple majority vote to create ground truth labels [3]. | Evaluate model performance when trained on labels from individual experts versus a majority-vote consensus. | Move beyond simple consensus. Implement a learnability-based consensus method, where only annotations from which a robust model can be built are used to determine the final ground truth [3]. |
| Presence of "Occasion Noise": Inconsistent annotations from the same expert due to fatigue, time of day, or other transient factors [15]. | If possible, analyze annotations from the same expert on similar cases, or on a blinded repeat of the same case, to check for intra-rater inconsistency. | Where feasible, collect multiple annotations for the same case from the same expert over time. Provide clear guidelines and a comfortable annotation environment to minimize fatigue-related errors [3]. |

Problem: Automatically Generated Annotations are Noisy or Unreliable

Description: A semi-supervised or automated text annotation system is producing labels of poor quality, which is propagating errors into the training data and final model.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Low Threshold for Automated Labeling: The confidence threshold for accepting a pseudo-label is too low, allowing incorrect labels into the training set [16]. | Manually review a sample of automatically annotated data that was accepted under the current threshold (e.g., 0.6). Check the precision of these labels. | Increase the confidence threshold for automatic labeling. Experiments show that higher thresholds (e.g., 0.9) can lead to significantly better accuracy in the final model [16]. |
| Ineffective Feature Representation: The method used to convert text into machine-readable vectors (e.g., TF-IDF, Word2Vec) is not optimal for the specific clinical dataset [16]. | Train and evaluate multiple models with different feature representation methods on a small, gold-standard labeled set. | Use a meta-vectorizer approach. Experiment with multiple text extraction methods (like TF-IDF and Word2Vec) in combination with different classifiers to find the best-performing combination [16]. |
| Small Amount of Initial Labeled Data: The semi-supervised learning process starts with an insufficient number of reliable, human-annotated examples to guide the initial learning [16]. | Evaluate model performance when starting with different proportions of labeled data (e.g., 5%, 10%, 20%). | Ensure you use a sufficient amount of high-quality initial labels. Research has shown that even with a small set (5%), high accuracy is achievable, but this requires a robust self-learning setup [16]. |

Quantitative Data on Annotation Inconsistencies

The following data, drawn from real-world ICU studies, quantifies the scope of the annotation inconsistency problem.

Table 1: Inter-Annotator Agreement in ICU Studies [3]

| Annotation Task | Agreement Metric | Score | Interpretation |
|---|---|---|---|
| Severity on a five-point ICU Patient Scoring Scale | Fleiss' κ | 0.383 | Fair agreement |
| Predicting Mortality | Fleiss' κ | 0.267 | Minimal agreement |
| Making Discharge Decisions | Fleiss' κ | 0.174 | Minimal agreement |
| Model Classifications on External Validation | Average Pairwise Cohen's κ | 0.255 | Minimal agreement |

Table 2: Automated Annotation Performance with Semi-Supervised Learning [16]

| Machine Learning Model | Text Extraction Method | Labeled Data Scenario | Threshold | Accuracy |
|---|---|---|---|---|
| Decision Tree (DT) | TF-IDF | 5% | 0.9 | 97.1% |
| SVM | TF-IDF | 10% | 0.8 | ~90%+ |
| K-Nearest Neighbors (KNN) | Word2Vec | 20% | 0.7 | ~90%+ |

Experimental Protocols

Protocol 1: Quantifying Inter-Expert Annotation Inconsistency

Objective: To measure the level of disagreement among clinical experts annotating the same ICU data.

- Dataset Preparation: Select a representative set of patient cases (e.g., 60 instances) from the ICU. Each case should be described by relevant clinical features (e.g., drug variables, physiological parameters) [3].

- Expert Annotation: Engage multiple clinical experts (e.g., 11 ICU consultants) to independently annotate each case based on a specific clinical question (e.g., "How ill is the patient?" on a five-point scale) [3].

- Agreement Calculation: Use statistical measures to quantify agreement, such as Fleiss' κ across all annotators and the average pairwise Cohen's κ [3].
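
The agreement calculation for this protocol can be sketched as follows for the pairwise case. The code is a plain-Python illustration, not the analysis pipeline from [3]; the annotator names and labels are invented, and each annotator is assumed to have labeled the same cases in the same order.

```python
from collections import Counter
from itertools import combinations

def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same cases."""
    n = len(labels_a)
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    # Note: undefined (division by zero) if both annotators use one
    # identical label for every case.
    return (p_o - p_e) / (1 - p_e)

def mean_pairwise_kappa(annotators):
    """Average Cohen's kappa over all pairs of annotators."""
    pairs = list(combinations(annotators, 2))
    return sum(cohen_kappa(annotators[a], annotators[b])
               for a, b in pairs) / len(pairs)

# Hypothetical binary decisions from three experts on four cases.
annotators = {
    "expert_1": [0, 0, 1, 1],
    "expert_2": [0, 1, 1, 1],
    "expert_3": [0, 0, 1, 1],
}
average_kappa = mean_pairwise_kappa(annotators)
```

The same function can be applied to the predictions of models trained on each expert's labels, which is how an average pairwise κ on an external validation set would be obtained.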

Protocol 2: Semi-Supervised Automated Text Annotation for Hate Speech Detection (Adaptable to Clinical Text)

Objective: To automatically annotate a large volume of unlabeled text data using a small set of initial human annotations [16].

- Data Preprocessing: Clean the text data (e.g., YouTube comments, clinical notes) by removing special characters, performing stemming, and normalizing text [16].

- Meta-Vectorization: Convert the cleaned text into numerical vectors using multiple methods, such as TF-IDF and Word2Vec [16].

- Model Training: Develop multiple machine learning models (e.g., SVM, Decision Tree, KNN, Naive Bayes) using the initial small set of labeled data (e.g., 5%, 10%, 20% of the total data) [16].

- Self-Learning Cycle [16]:

  a. The trained models predict labels for the unlabeled data.

  b. Predictions with a confidence score above a set threshold (e.g., 0.9) are accepted and added to the training set.

  c. The models are re-trained with the enlarged training set.

  d. This cycle repeats, progressively improving the model and the quantity of labeled data.
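
The self-learning cycle can be illustrated with a small, self-contained sketch. To stay dependency-free, it swaps in a toy one-dimensional nearest-centroid classifier for the SVM, Decision Tree, KNN, and Naive Bayes models used in the cited work; `fit_centroids`, `predict_proba`, and `self_train` are hypothetical names, and the softmax-over-distance confidence is an assumption made only for this example.

```python
import math


def fit_centroids(labeled):
    """Toy model: the mean feature value of each class."""
    sums = {}
    for x, y in labeled:
        total, n = sums.get(y, (0.0, 0))
        sums[y] = (total + x, n + 1)
    return {y: total / n for y, (total, n) in sums.items()}


def predict_proba(centroids, x):
    """Turn distances to each centroid into pseudo-probabilities."""
    weights = {y: math.exp(-abs(x - c)) for y, c in centroids.items()}
    norm = sum(weights.values())
    return {y: w / norm for y, w in weights.items()}


def self_train(labeled, unlabeled, threshold=0.9, max_rounds=10):
    """Repeat predict -> accept confident labels -> retrain until nothing is accepted."""
    labeled, pool = list(labeled), list(unlabeled)
    for _ in range(max_rounds):
        model = fit_centroids(labeled)        # (re)train on the current labels
        accepted, remaining = [], []
        for x in pool:
            probs = predict_proba(model, x)   # step a: predict
            label = max(probs, key=probs.get)
            if probs[label] > threshold:      # step b: keep confident predictions
                accepted.append((x, label))
            else:
                remaining.append(x)
        if not accepted:                      # no new pseudo-labels: stop early
            break
        labeled.extend(accepted)              # step c: enlarge the training set
        pool = remaining                      # step d: repeat on what is left
    return labeled, pool


seed = [(0.0, "neg"), (10.0, "pos")]
final_labeled, still_unlabeled = self_train(seed, [0.5, 9.5, 5.0])
```

In this toy run the two points near a centroid are pseudo-labeled, while the point midway between the classes never clears the threshold and stays in the unlabeled pool, which is the kind of sample a human reviewer would pick up.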

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Annotation Inconsistency Research

| Item / Tool | Function |

|---|---|

| ICU Datasets (e.g., QEUH, HiRID) | Provide real-world, multivariate patient data (e.g., vital signs, drug variables) for annotation tasks and model validation [3]. |

| Agreement Metrics (Fleiss' κ, Cohen's κ) | Statistical measures to quantitatively assess the level of consistency between multiple annotators [3]. |

| Machine Learning Algorithms (SVM, DT, KNN, NB) | Core classifiers for building predictive models from annotated data and for powering semi-supervised auto-annotation systems [16]. |

| Text Vectorization Methods (TF-IDF, Word2Vec) | Convert unstructured text data into a structured, numerical format that machine learning models can process [16]. |

| APACHE IV Scoring System | An algorithmic tool used to standardize the assessment of patient disease severity and mortality probability, thereby reducing human judgment noise [15]. |

Workflow Diagram: Strategies to Mitigate Annotation Noise

The diagram below summarizes the causes of and solutions for human annotation noise, which can lead to mismatched automated annotations.

Workflow Diagram: Semi-Supervised Automated Annotation

This diagram outlines a semi-supervised learning workflow for automated text annotation, a key method for generating labels while managing the cost and inconsistency of fully manual annotation.

FAQs on Mismatched Automated Annotations

FAQ 1: What are the most common root causes of mismatched automated annotations? Research and industry experience identify three primary root causes:

- Inter-expert Variability: Inherent differences in judgment, bias, or "slips" between domain experts, even highly experienced ones, lead to inconsistent ground truth labels [3] [13].

- Ambiguous Guidelines: Unclear, incomplete, or subjective annotation instructions result in inconsistent application of labels across different annotators or sessions [13] [17].

- Data Complexity: Complex, nuanced, or ambiguous data (e.g., occluded objects, subtle features, or rare edge cases) challenges both human annotators and automated systems, increasing the likelihood of errors [18] [13].

FAQ 2: How does inter-expert variability quantitatively impact AI model performance? Studies show that variability among experts leads to significant performance drops in AI models. The table below summarizes key metrics from a clinical study involving 11 Intensive Care Unit (ICU) consultants [3].

| Metric | Value / Finding | Implication |

|---|---|---|

| Internal Agreement (Fleiss' κ) | 0.383 (Fair agreement) | Labels from different experts on the same data show notable inconsistency [3]. |

| External Validation Agreement (Avg. Cohen's κ) | 0.255 (Minimal agreement) | AI models trained on labels from one expert perform inconsistently when classifying data labeled by others [3]. |

| Disagreement on Discharge Decisions | Fleiss' κ = 0.174 | Experts showed higher inconsistency on certain judgment types (discharge) versus others (mortality, κ=0.267) [3]. |

| Model Performance Impact | Suboptimal and variable performance across models trained on different expert labels | There is often no single "super expert"; models reflect the inconsistencies of their training data [3]. |

FAQ 3: What is a standard experimental protocol for diagnosing the root cause of annotation mismatches? A robust diagnostic protocol involves systematic comparison and consensus analysis [3] [17].

- Objective: To determine if mismatches originate from inter-expert variability, guideline ambiguity, or data complexity.

- Materials:

  - A curated dataset containing challenging or representative samples.

  - A panel of multiple domain experts (e.g., 3-5).

  - A set of initial annotation guidelines.

- Methodology:

  - Blinded Annotation: Provide each expert with the same dataset and initial guidelines, ensuring they annotate independently.

  - Quantify Inter-Expert Variability: Calculate inter-annotator agreement (IAA) using metrics like Fleiss' Kappa or Cohen's Kappa [3].

  - Analyze Disagreement Patterns: Systematically review instances where experts disagree. Categorize the root cause of each disagreement as inter-expert variability, guideline ambiguity, or data complexity.

  - Iterate on Guidelines: Refine the annotation guidelines to address identified ambiguities.

  - Re-test: Have a subset of experts re-annotate a sample using the revised guidelines to measure improvement in IAA.
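
To compare IAA before and after a guideline revision, one option is the average pairwise Cohen's κ across the expert panel. The sketch below uses only the standard library; the expert names and labels are made up for illustration.

```python
from collections import Counter
from itertools import combinations


def cohen_kappa(a, b):
    """Cohen's kappa between two annotators' label sequences of equal length."""
    if len(a) != len(b) or not a:
        raise ValueError("label sequences must be non-empty and of equal length")
    n = len(a)
    p_observed = sum(x == y for x, y in zip(a, b)) / n
    count_a, count_b = Counter(a), Counter(b)
    # Chance agreement from each annotator's own label distribution.
    p_expected = sum(count_a[k] * count_b[k] for k in set(a) | set(b)) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both annotators used a single, identical label throughout
    return (p_observed - p_expected) / (1 - p_expected)


def mean_pairwise_kappa(annotations):
    """Average Cohen's kappa over every pair of annotators."""
    pairs = list(combinations(annotations.values(), 2))
    return sum(cohen_kappa(a, b) for a, b in pairs) / len(pairs)


# Hypothetical labels from three experts before and after revising the guidelines.
before = {
    "expert_1": ["A", "A", "B", "B"],
    "expert_2": ["A", "B", "A", "B"],
    "expert_3": ["A", "A", "B", "A"],
}
after = {
    "expert_1": ["A", "A", "B", "B"],
    "expert_2": ["A", "A", "B", "B"],
    "expert_3": ["A", "A", "B", "A"],
}
improvement = mean_pairwise_kappa(after) - mean_pairwise_kappa(before)
```

A positive `improvement` suggests the revised guidelines reduced disagreement on the re-annotated sample; the sample is usually small, so the estimate should be read with that in mind.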

FAQ 4: What are the best practices for creating annotation guidelines to minimize errors? Clear and comprehensive guidelines are critical for consistency [20] [4].

- Define Fields Exhaustively: Create a detailed list of all fields to be annotated, with clear definitions to prevent confusion [20].

- Use Examples: Provide annotated sample documents for each data type and scenario, including edge cases [20].

- Establish Rules for Consistency: Mandate how to handle repeated values (annotate all instances) and differing values (define which to prioritize) [20].

- Specify Technical Precision: Instruct annotators to keep annotation boxes tight to the target data and avoid extraneous information [20].

- Implement Quality Control: Use Inter-Annotator Agreement (IAA) checks and consensus meetings to resolve disagreements and continuously improve guidelines [4].

FAQ 5: How can I visualize the workflow for diagnosing annotation mismatches? The following diagram outlines the systematic process for diagnosing the root causes of annotation mismatches.

Diagram Title: Diagnostic Workflow for Annotation Mismatches

Troubleshooting Guides

Guide 1: Resolving Issues from Inter-expert Variability

Symptoms:

- Low Inter-Annotator Agreement (IAA) scores (e.g., Fleiss' κ < 0.4) [3].

- Your AI model's performance is inconsistent when validated against labels from different experts.

- Annotations for subjective or complex judgments (e.g., "illness severity") show high variance.

Steps for Resolution:

- Measure IAA: Quantify the level of disagreement using statistical measures like Fleiss' Kappa (for multiple annotators) or Cohen's Kappa (for two annotators) to establish a baseline [3].

- Conduct Consensus Meetings: Bring experts together to review disputed annotations. The goal is not to find a single "correct" answer, but to align on a shared interpretation framework [3] [17].

- Implement a Consensus Labeling Strategy:

  - Majority Vote: Use the label selected by the majority of experts as the ground truth.

  - Learnability-weighted Consensus: Research suggests that giving more weight to annotations from experts whose labels produce AI models that perform well on external validation can be more effective than simple majority vote [3].

- Document Rationale: Record the reasons behind consensus decisions for ambiguous cases and incorporate them into your annotation guidelines.
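
The two consensus strategies above can be sketched as follows. The weights in the second function are assumed to come from each expert's model performance on external validation; how those weights are derived is not specified here, so the numbers below are purely hypothetical.

```python
from collections import Counter, defaultdict


def majority_vote(labels):
    """Return the most common label, or None when the top labels are tied."""
    counts = Counter(labels)
    top_label, top_count = counts.most_common(1)[0]
    tied = [label for label, c in counts.items() if c == top_count]
    return top_label if len(tied) == 1 else None


def weighted_vote(labels_by_expert, weights):
    """Return the label with the largest total expert weight."""
    totals = defaultdict(float)
    for expert, label in labels_by_expert.items():
        totals[label] += weights[expert]
    return max(totals, key=totals.get)


# Hypothetical case: two experts say "B", but the expert whose labels
# yielded the best externally validated model says "A".
labels = {"expert_1": "A", "expert_2": "B", "expert_3": "B"}
weights = {"expert_1": 0.9, "expert_2": 0.3, "expert_3": 0.3}

simple = majority_vote(list(labels.values()))
weighted = weighted_vote(labels, weights)
```

Returning `None` on a tie makes unresolved cases explicit so they can be taken to a consensus meeting instead of being settled arbitrarily.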

Guide 2: Fixing Problems Caused by Ambiguous Guidelines

Symptoms:

- Consistent patterns of error are found across multiple annotators.

- Annotators frequently ask for clarification on the same concepts.

- Systematic errors are found during quality assurance checks (e.g., missing specific edge cases like "pedestrians on scooters") [13].

Steps for Resolution:

- Perform Root Cause Analysis: Sample mismatched annotations and trace the error back to the guideline that was either missing, unclear, or misleading [17].

- Enhance Guidelines with Examples: For every rule, provide positive and negative examples. Crucially, include examples of edge cases and how they should be handled [13].

- Clarify Definitions: Ensure all key terms and classes are defined operationally, leaving little room for subjective interpretation.

- Pilot Test Revised Guidelines: Before full redeployment, have a small group of annotators use the revised guidelines and measure the change in IAA.

Guide 3: Addressing Challenges Posed by Data Complexity

Symptoms:

- Errors consistently occur on specific data types (e.g., occluded objects, low-resolution images, complex biological phenomena) [18].

- Automated pre-labeling tools fail spectacularly on novel or rare scenarios not well-represented in the training data [18].

- Both human annotators and AI models struggle with the same difficult samples.

Steps for Resolution:

- Identify Complex Data Segments: Use your model's uncertainty scores or error analysis to pinpoint the data types that are most problematic [4].

- Augment Expert Oversight: Assign the most complex data segments to your most senior annotators or domain experts for manual review and correction [18].

- Implement Advanced Tools: For computer vision, use tools that support model-assisted labeling and active learning. These tools can pre-label easy cases and flag difficult ones for human review, creating an efficient human-in-the-loop workflow [21] [4].

- Curate Specialized Datasets: Actively collect and annotate more examples of the complex edge cases to improve the model's performance on these challenging scenarios over time.
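
One way to identify complex data segments is to average the model's predictive entropy per segment and review the highest-scoring segments first. This is a minimal sketch; the segment names and probability vectors are invented for illustration, and entropy is only one of several possible uncertainty scores.

```python
import math
from collections import defaultdict


def entropy(probs):
    """Shannon entropy (in nats) of a class-probability vector."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def rank_segments(records):
    """Rank data segments by mean predictive entropy, most uncertain first.

    records is an iterable of (segment_name, class_probabilities) pairs.
    """
    by_segment = defaultdict(list)
    for segment, probs in records:
        by_segment[segment].append(entropy(probs))
    means = {seg: sum(vals) / len(vals) for seg, vals in by_segment.items()}
    return sorted(means.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical model outputs tagged with a data-type label.
records = [
    ("occluded", [0.5, 0.5]),
    ("occluded", [0.6, 0.4]),
    ("clear", [1.0, 0.0]),
    ("low_resolution", [0.8, 0.2]),
]
ranking = rank_segments(records)
```

Segments at the top of `ranking` are candidates for senior-annotator review and for targeted data collection.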

The Scientist's Toolkit: Research Reagent Solutions

The table below details key resources and methodologies used in the featured experiments on annotation variability.

| Research Reagent / Method | Function & Explanation |

|---|---|

| Inter-Annotator Agreement (IAA) | A statistical measure (e.g., Fleiss' κ, Cohen's κ) to quantify the level of consensus between multiple experts when annotating the same data. It is the primary metric for diagnosing inter-expert variability [3]. |

| Consensus Protocols (e.g., Majority Vote) | A methodology to derive a single ground truth label from multiple conflicting expert annotations. Used to create a standardized dataset for model training from noisy expert labels [3]. |

| Learnability-weighted Consensus | An advanced consensus method where experts' annotations are weighted based on the performance of the AI model trained on them. Aims to create a more robust ground truth dataset than simple majority vote [3]. |

| Human-in-the-Loop (HITL) Workflow | An operational framework that combines automated annotation with human expertise. The AI handles simple, clear-cut cases, while humans focus on complex, ambiguous, or high-stakes annotations, optimizing both speed and accuracy [21] [4]. |

| Active Learning Pipelines | A machine learning technique where the model itself identifies data points it is most uncertain about. These points are then prioritized for human annotation, making the data collection process more efficient and targeted [4]. |

| Bias Detection Tools | Software features that analyze annotated datasets to flag potential biases, such as skewed representation of certain classes or demographics, allowing researchers to correct them before model training [4]. |

Implementing Robust Annotation Frameworks and Tools

Your Quick Guide to Framework Selection

| Aspect | Human-in-the-Loop (HITL) | Semi-Supervised Learning (SSL) |

|---|---|---|

| Core Principle | Human expertise actively integrated into the ML loop for feedback and correction [22] [23]. | Leverages a small amount of labeled data with a large amount of unlabeled data to train models [24] [25]. |

| Primary Goal | Improve model accuracy, interpretability, and trustworthiness through human oversight [23]. | Reduce the cost and effort of data labeling while maintaining performance [24] [25]. |

| Human Role | Active controller, teacher, or oracle; provides iterative feedback and corrects errors [22]. | Primarily passive; provides initial labeled data, with the process then largely automated [24]. |

| Control Dynamic | Interactive and iterative; control can shift between human and model [22]. | Model-controlled; the algorithm automates the exploitation of unlabeled data [24] [22]. |

| Ideal for | Safety-critical applications (e.g., medical diagnosis, autonomous driving), complex edge cases, and tasks requiring high reliability [23] [26]. | Situations with abundant unlabeled data but limited labeling budgets, and for well-defined tasks where the model's initial assumptions hold [24] [25]. |

Frequently Asked Questions & Troubleshooting

Q1: My automated annotations are incorrect even though the underlying data is correct, as in the BAGNOLI DI SOPRA marriage-record case study [27]. Which framework is better for diagnosing and fixing this?

- A: A Human-in-the-Loop (HITL) approach is more suitable for diagnosing this specific issue. The problem often lies in the annotation generation algorithm or template, not the data itself [27]. An expert can inspect the erroneous annotations (e.g., a bride's name repeated), identify the flaw in the logic or template, and implement a corrective strategy. Semi-Supervised Learning might inadvertently propagate these errors via pseudo-labels.

Q2: I have a large volume of unlabeled medical image data, but labeling is expensive and requires domain experts. How can I proceed?

- A: Semi-Supervised Learning (SSL) is an excellent starting point. You can use a small, expertly labeled dataset to bootstrap the model and then apply pseudo-labeling or consistency regularization to leverage the vast unlabeled data [24] [25]. For complex or ambiguous cases, you can later introduce a HITL component where experts review low-confidence pseudo-labels generated by the SSL model.

Q3: In drug development, how can I ensure my model remains reliable when it encounters unexpected scenarios (edge cases)? [28] [26]

- A: For safety-critical fields like drug development, a Human-in-the-Loop strategy is crucial. You can design a workflow where the model flags uncertain predictions or edge cases for human expert review [28] [26]. This continuous feedback loop allows the model to learn from these challenging examples and adapt over time, thereby improving its reliability and safety.

Q4: What's the biggest risk when using Semi-Supervised Learning, and how can I mitigate it?

- A: The primary risk is error propagation and amplification. If the model creates incorrect pseudo-labels with high confidence and retrains on them, performance can degrade rapidly [24] [25].

  - Mitigation Strategy: Implement a confidence threshold. Only unlabeled data with predictions above a certain confidence level are assigned pseudo-labels. Lower-confidence data can be held back for potential review by a human-in-the-loop, creating a hybrid system [29].

Q5: We are scaling our annotation project but are concerned about consistency and bias. How can HITL help?

- A: HITL frameworks address this through structured processes [12] [29]:

  - Strong Guidelines: Create detailed annotation guidelines with examples and edge cases.

  - Annotator Training: Conduct training and calibration sessions for annotators.

  - Quality Assurance (QA) Loops: Use consensus labeling (multiple annotators label the same data) and random sampling by QA specialists to measure agreement and catch drift [29].

  - Diverse Teams: Building diverse annotation teams helps reduce inherent bias in the labels [29].

Experimental Protocols for Troubleshooting Mismatched Annotations

Protocol 1: Diagnosing Annotation System Flaws with HITL Analysis

This protocol is designed to systematically identify the root cause of incorrect automated annotations, inspired by a real-world case study [27].

- Objective: To isolate and rectify the source of discrepancy between correct underlying data and erroneous automated annotations.

- Materials: A curated dataset where the digital records are known to be correct but the corresponding automated annotations are faulty.

- Methodology:

  1. Instance Audit: Select a subset of records with incorrect annotations (e.g., Annotation ID 462500 where the bride's name was repeated) and their correct source data (e.g., Marriage Record ID 462493) [27].

  2. Template Interrogation: A human expert (researcher/developer) examines the annotation template or algorithm logic used to generate the flawed output. The goal is to find errors like duplicated field references or faulty data extraction logic [27].

  3. Correction and Validation: The expert corrects the identified flaw in the template/algorithm. The system is then run again to regenerate annotations.

  4. Iterative Verification: The corrected annotations are manually verified against the source truth data. Steps 2-4 are repeated until the accuracy is satisfactory.

- Expected Outcome: Identification of a systematic error in the annotation generation process and a validated fix to prevent its recurrence.
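
The template interrogation step can be partly automated when annotations are generated from format-string templates. The sketch below assumes such a template; the field names (`groom`, `bride`, `bride_father`) and the template text are hypothetical and are not taken from the case study. It uses Python's standard `string.Formatter` to list the fields a template references.

```python
from collections import Counter
from string import Formatter


def template_field_counts(template):
    """Count how often each named field is referenced in a format-string template."""
    return Counter(
        name for _, name, _, _ in Formatter().parse(template) if name
    )


def audit_template(template, expected_fields):
    """Report fields referenced more than once and expected fields never referenced."""
    counts = template_field_counts(template)
    return {
        "duplicated": sorted(f for f, c in counts.items() if c > 1),
        "missing": sorted(f for f in expected_fields if f not in counts),
    }


# Hypothetical faulty template: the bride field is used where her father's belongs.
faulty = "{groom} married {bride}, daughter of {bride}"
report = audit_template(faulty, ["groom", "bride", "bride_father"])
```

A duplicated field is not always a bug, since some templates legitimately repeat a value, so the report is best treated as a list of places for the expert to inspect.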

Protocol 2: Implementing a Hybrid SSL-HITL Pipeline for Robust Labeling

This protocol combines the efficiency of SSL with the precision of HITL to create a robust labeling system that minimizes error propagation.

- Objective: To efficiently leverage unlabeled data while safeguarding against the introduction of pervasive labeling errors.

- Materials: A large pool of unlabeled data and a small, high-quality labeled dataset.

- Methodology:

  1. Initial Model Training: Train a model on the small labeled dataset.

  2. Pseudo-Labeling with Thresholding: Use the trained model to predict labels on the unlabeled data. Apply a confidence threshold (e.g., only accept predictions with >90% confidence) [24] [25].

  3. HITL Review Station: Data points with confidence scores below the threshold are automatically routed to a human expert for labeling [22] [23].

  4. Model Retraining: The model is retrained on the combined original labeled data, the high-confidence pseudo-labels, and the new expert-labeled data.

  5. Closed-Loop Feedback: The expert's corrections on low-confidence data are used to further refine the model and the confidence calibration in subsequent cycles.

- Expected Outcome: A scalable labeling process that maintains high data quality by focusing human effort on the most ambiguous and critical examples.
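
The routing logic at the heart of this hybrid pipeline is small enough to sketch directly. The function below assumes predictions arrive as `(item_id, label, confidence)` tuples; that shape and the function name are illustrative choices, not part of any cited framework.

```python
def route_predictions(predictions, threshold=0.9):
    """Split model predictions into accepted pseudo-labels and a human review queue.

    predictions is an iterable of (item_id, predicted_label, confidence) tuples.
    """
    pseudo_labeled, review_queue = [], []
    for item_id, label, confidence in predictions:
        if confidence > threshold:
            pseudo_labeled.append((item_id, label))
        else:
            # Keep the model's guess so the expert can confirm or correct it.
            review_queue.append((item_id, label, confidence))
    # Least confident items first, so expert time goes to the hardest cases.
    review_queue.sort(key=lambda row: row[2])
    return pseudo_labeled, review_queue


# Hypothetical predictions on unlabeled samples.
predictions = [
    ("img_001", "tumor", 0.97),
    ("img_002", "normal", 0.62),
    ("img_003", "tumor", 0.88),
    ("img_004", "normal", 0.99),
]
accepted, queue = route_predictions(predictions, threshold=0.9)
```

The expert-reviewed items from `queue` and the entries in `accepted` would then be merged with the original labeled set before retraining.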

Workflow Visualization

HITL and SSL Workflows

Decision Framework for Researchers

The Scientist's Toolkit: Key Research Reagents & Solutions

| Tool or Technique | Function in the Context of Troubleshooting Annotations |

|---|---|

| Active Learning [22] [23] | An HITL technique that intelligently selects the most informative data points for a human to label, maximizing the value of expert time and focusing effort on the most ambiguous cases. |

| Pseudo-Labeling [24] [25] | A core technique in SSL where the model's own predictions on unlabeled data are used as training labels. Critical for bootstrapping, but requires quality control. |

| Confidence Thresholding [24] [25] | A gatekeeping parameter that prevents low-confidence model predictions from being accepted as pseudo-labels, thereby reducing error propagation. |

| Consistency Regularization [24] | An SSL method that encourages a model to produce similar outputs for slightly perturbed versions of the same input data. This leverages the "continuity assumption" and helps the model learn robust features from unlabeled data. |

| Inter-Annotator Agreement (IAA) [12] [29] | A quality assurance metric used in HITL to measure the consistency between different human annotators. Low agreement signals ambiguous guidelines or data. |

| Annotation Guidelines & Ontologies [12] [26] | Formal documents and structured vocabularies that define labeling rules, classes, and how to handle edge cases. They are the foundational "protocol" for ensuring annotation consistency. |

Leveraging Active Learning to Prioritize High-Value Data for Expert Review

Frequently Asked Questions (FAQs)

Q1: What is the primary goal of using Active Learning for data prioritization? The primary goal is to significantly reduce the manual screening workload for experts by using a machine learning model to intelligently identify and present the most informative or uncertain data points from a large, unlabeled pool. This approach can save up to 95% of screening time while ensuring that nearly all relevant data is found [30].

Q2: What are common indicators that my Active Learning system is encountering mismatched annotations? Common indicators include a persistently high rate of model uncertainty (the model remains highly uncertain in its predictions even after many review cycles), a stagnation or drop in model performance metrics, and the expert reviewer frequently disagreeing with the model's suggestions on high-uncertainty samples [1] [4].

Q3: My model seems to have plateaued in performance. Could mismatched annotations be the cause? Yes. If the model has learned from incorrectly labeled data, it can enter a feedback loop where its performance stops improving. This is often caused by annotation bias or a gradual data drift where the characteristics of the incoming data change over time, making past annotations less reliable [4].

Q4: Are there automated methods to detect potential annotation errors? Yes. One effective method involves comparing model predictions against existing annotations. For tasks like object detection, you can compute the L2 distance between the one-hot encoding of the annotated class and the model's softmax output (class probabilities) for the matched prediction. This distance serves as a "mislabel metric," helping to flag risky annotations for review with high recall [1].

Q5: What is a reliable stopping point for an Active Learning review cycle? Since finding 100% of relevant data is often impractical, a common goal is to target 95% of the total inclusions. You can employ stopping rules such as halting after finding a pre-defined number of consecutive irrelevant records (e.g., 50, 100, or 250) or after a set amount of screening time has elapsed [30].
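
The consecutive-irrelevant stopping rule is straightforward to express in code. This is a minimal sketch; the function name is hypothetical and the default of 50 simply mirrors one of the example values above.

```python
def should_stop(decisions, max_consecutive_irrelevant=50):
    """Return True once the most recent records form a long enough irrelevant streak.

    decisions is the reviewer's history in screening order:
    True for a relevant record, False for an irrelevant one.
    """
    streak = 0
    for relevant in reversed(decisions):
        if relevant:
            break
        streak += 1
    return streak >= max_consecutive_irrelevant


# Hypothetical screening history: a relevant record followed by 50 irrelevant ones.
history = [True, False, True] + [False] * 50
stop_now = should_stop(history, max_consecutive_irrelevant=50)
```

Larger streak lengths trade extra screening effort for a lower chance of missing late-appearing relevant records.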

Troubleshooting Guide

| Problem | Symptom | Likely Cause | Solution |

|---|---|---|---|

| Persistent Model Uncertainty | The model's confidence does not improve, or it consistently flags a large portion of the data as uncertain. | Annotation inconsistencies or a poorly defined feature space causing the model to be confused. | 1. Audit labels: Perform a targeted review of annotations on high-uncertainty samples. 2. Refine guidelines: Clarify annotation instructions for ambiguous cases. 3. Switch models: Try a different feature extractor to re-order the data [30]. |

| Performance Stagnation | Model accuracy or F1 score stops increasing despite continued expert review. | The model is stuck in a local optimum, potentially due to biased sample selection or learning from mislabeled data [4]. | 1. Introduce diversity: Use hybrid sampling (e.g., mix uncertainty with diversity sampling) to explore new data regions. 2. Detect noise: Run an automated mislabel detection script to find and correct errors [1]. |

| Low Expert-Model Agreement | The human expert frequently disagrees with the model's predictions on the records it selects for review. | A significant number of mismatched annotations in the training set are misleading the model. | 1. Adopt IAA: Use Inter-Annotator Agreement checks to resolve labeling disagreements. 2. Leverage committees: Use the Query by Committee (QBC) method to surface data points where multiple models disagree, highlighting ambiguity [31] [4]. |
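
The Query by Committee idea from the last row can be sketched with vote entropy, one common disagreement measure. The committee votes below are invented; in practice they would come from several models trained on different data subsets or with different architectures.

```python
import math
from collections import Counter


def vote_entropy(votes):
    """Entropy of the committee's label votes for one item (0 means full agreement)."""
    n = len(votes)
    return -sum((c / n) * math.log(c / n) for c in Counter(votes).values())


def rank_by_disagreement(committee_votes):
    """Order item ids from most to least committee disagreement."""
    return sorted(
        committee_votes,
        key=lambda item_id: vote_entropy(committee_votes[item_id]),
        reverse=True,
    )


# Hypothetical votes from a three-model committee.
committee_votes = {
    "rec_a": ["include", "include", "include"],
    "rec_b": ["include", "exclude", "unsure"],
    "rec_c": ["include", "include", "exclude"],
}
review_order = rank_by_disagreement(committee_votes)
```

Items at the front of `review_order` are the ones where the committee is most split, which often coincides with ambiguous data or inconsistent training labels.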

Experimental Protocol for Mismatched Annotation Detection

The following protocol details a method to detect mislabeled annotations in an image dataset, adapted from published approaches [1].

1. Objective: To identify and flag bounding box annotations that are likely mislabeled, allowing for targeted expert review and correction.

2. Materials and Reagents

- Dataset: Your labeled image dataset (e.g., in PASCAL VOC format).

- Software: A pre-trained object detection model (e.g., Faster R-CNN).

- Computing Environment: Python with deep learning libraries (PyTorch/TensorFlow) and standard data science stacks (NumPy, Pandas).

3. Procedure

- Step 1: Model Prediction. Run inference on your entire labeled dataset using your chosen object detection model to obtain predictions (bounding boxes, class probabilities).

- Step 2: Annotation-Prediction Matching. For each ground-truth bounding box in your dataset, find the model-predicted bounding box that has the maximum Intersection over Union (IoU).

- Step 3: Calculate Mislabel Metric. For each matched pair, compute the L2 distance (Euclidean distance) between the one-hot encoded vector of the annotated class and the softmax class-probability vector of the matched prediction. This distance is your "mislabel score." It ranges from 0 (the prediction fully agrees with the annotation) to √2 (the prediction puts all probability on a different class).

- Formula:

Mislabel_Score = || one_hot(annotation) - softmax(prediction) ||₂

- Step 4: Flag Risky Annotations. Rank all annotations by their mislabel score in descending order. Annotations with scores above a chosen threshold are flagged for expert review. The threshold can be selected from the precision-recall curve to achieve the desired balance (e.g., high recall to catch most errors).
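
Steps 2 to 4 can be sketched without any deep learning dependency once predictions are available. The code below assumes boxes are `(x1, y1, x2, y2)` tuples and that each prediction carries a softmax probability vector; the dictionary keys and the threshold of 0.5 are illustrative assumptions, not values from the cited work.

```python
import math


def iou(a, b):
    """Intersection over Union of two (x1, y1, x2, y2) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def mislabel_score(class_index, probs):
    """L2 distance between one_hot(class_index) and the probability vector."""
    return math.sqrt(
        sum(((1.0 if i == class_index else 0.0) - p) ** 2 for i, p in enumerate(probs))
    )


def flag_annotations(annotations, predictions, threshold):
    """Return annotation ids whose score exceeds threshold, riskiest first."""
    scored = []
    for ann in annotations:
        if not predictions:
            continue
        # Step 2: match each annotation to the prediction with maximum IoU.
        best = max(predictions, key=lambda p: iou(ann["box"], p["box"]))
        # Step 3: compute the mislabel score for the matched pair.
        score = mislabel_score(ann["class_index"], best["probs"])
        if score > threshold:
            scored.append((score, ann["id"]))
    # Step 4: rank flagged annotations by score, descending.
    return [ann_id for _, ann_id in sorted(scored, reverse=True)]


# Hypothetical single-image example with two classes.
annotations = [
    {"id": "a1", "box": (0, 0, 10, 10), "class_index": 0},
    {"id": "a2", "box": (20, 20, 30, 30), "class_index": 1},
]
predictions = [
    {"box": (1, 1, 10, 10), "probs": [0.05, 0.95]},
    {"box": (20, 20, 29, 29), "probs": [0.10, 0.90]},
]
flagged = flag_annotations(annotations, predictions, threshold=0.5)
```

This sketch matches on maximum IoU alone, as described in Step 2. A production version would likely also require a minimum IoU so that annotations with no overlapping prediction are handled separately.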

4. Quantitative Outcomes: The table below summarizes the expected performance of this method based on a benchmark experiment where 20% of annotations were artificially corrupted [1].

| Metric | Value | Interpretation |

|---|---|---|

| Recall | > 93% | The method successfully flags over 93% of all mislabeled annotations. |

| Precision | ~75% | About 75% of the flagged annotations are truly mislabeled; the rest are challenging but correct. |

| Time Savings | ~4x | Manual QA effort is reduced fourfold by focusing only on the high-risk subset. |
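
When labels have been corrupted artificially, as in the benchmark above, recall and precision of the flagging step can be computed directly from the set of flagged ids and the set of known corruptions. The ids below are placeholders and do not reproduce the benchmark figures.

```python
def precision_recall(flagged_ids, corrupted_ids):
    """Precision and recall of flagged annotations against known corrupted ones."""
    flagged, corrupted = set(flagged_ids), set(corrupted_ids)
    true_positives = len(flagged & corrupted)
    precision = true_positives / len(flagged) if flagged else 0.0
    recall = true_positives / len(corrupted) if corrupted else 0.0
    return precision, recall


# Hypothetical run: four annotations flagged, four actually corrupted, three in common.
precision, recall = precision_recall([1, 2, 3, 4], [1, 2, 3, 5])
```

Sweeping the mislabel-score threshold and recomputing these two numbers at each setting traces out the precision-recall curve used to pick an operating point.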

Research Reagent Solutions

| Item | Function in the Experiment |

|---|---|

| Pre-trained Detection Model (e.g., Faster R-CNN) | Provides the baseline predictions and class confidence scores (logits) necessary to compute the mislabel metric. |

| Intersection over Union (IoU) | A core evaluation metric used to correctly match ground-truth annotations with model predictions based on their spatial overlap. |

| Mislabel Score (L2 Distance) | The core calculated metric that quantifies the discrepancy between a human annotation and the model's prediction, serving as a proxy for label correctness. |

| Precision-Recall Curve | A diagnostic tool used to evaluate the performance of the mislabel detection method and select an optimal threshold for flagging annotations. |

Active Learning with Quality Control Workflow

The following diagram illustrates the integrated workflow of an Active Learning cycle enhanced with automated quality control to tackle mismatched annotations.

The Researcher-in-the-Loop Active Learning Cycle

This diagram details the core Active Learning cycle, which is central to the data prioritization strategy.

Utilizing Pre-trained Models and Transfer Learning for Effective Pre-labeling

Pre-labeling, the process of using artificial intelligence to generate initial data annotations, has become a fundamental component of modern machine learning workflows, particularly in data-intensive fields like drug development. By leveraging pre-trained models and transfer learning, researchers can significantly accelerate the annotation of complex datasets, from cellular imagery to molecular structures. However, these automated systems can produce mismatched annotations that propagate errors through downstream analysis. This technical support center provides targeted troubleshooting guidance for researchers encountering these challenges, framed within the broader context of ensuring annotation reliability for scientific discovery.

Fundamentals of Transfer Learning for Pre-labeling

What is transfer learning and how does it apply to pre-labeling?

Transfer learning is a machine learning technique that repurposes a model developed for one task as the starting point for a related task [32]. In pre-labeling workflows, this involves using models pre-trained on large, general datasets (like ImageNet for visual tasks) to generate initial annotations on specialized scientific data [33]. This approach provides a significant head start compared to manual annotation or training models from scratch.

The core process involves: selecting an appropriate pre-trained model, freezing early layers that contain general feature detection capabilities, replacing the output layer to match your target annotation classes, and fine-tuning the model on a subset of your domain-specific data [32]. This method is particularly valuable when labeled training data is scarce or expensive to produce, as is common in drug development research.

What are the key benefits of implementing transfer learning for pre-labeling?

- Accelerated Annotation Workflows: Pre-trained models can generate preliminary labels almost immediately, dramatically reducing the time from data collection to analysis [33].

- Reduced Resource Requirements: By building upon existing knowledge, transfer learning requires significantly less labeled data than training models from scratch [32].

- Improved Consistency: Automated pre-labeling reduces human variability in annotation, creating more uniform datasets [33].

- Enhanced Model Performance: Models initialized with transfer learning often achieve higher accuracy faster than those trained from random initialization [32].

Troubleshooting Mismatched Automated Annotations

Common Pre-labeling Error Taxonomy

Based on empirical studies of annotation systems, particularly in complex domains like biomedical imaging, mismatched annotations can be categorized across three key quality dimensions [34]:

Table: Taxonomy of Common Pre-labeling Errors

| Error Category | Error Types | Typical Manifestations |

|---|---|---|

| Completeness Errors | Attribute omission, Missing feedback loop, Edge-case omission, Selection bias | Partially labeled structures, Missing rare cell types, Incomplete boundary detection |

| Accuracy Errors | Wrong class label, Bounding-box errors, Granularity mismatch, Bias-driven errors | Misclassified molecular structures, Imprecise region boundaries, Over/under-segmentation |

| Consistency Errors | Inter-annotator disagreement, Ambiguous instructions, Lack of purpose knowledge | Inconsistent labeling across similar samples, Variable annotation criteria application |

Troubleshooting Guide: Mismatched Annotations

Table: Troubleshooting Framework for Annotation Mismatches

| Problem Symptom | Potential Root Causes | Debugging Steps | Prevention Strategies |

|---|---|---|---|

| Systematic class confusion | Domain mismatch between pre-training and target data, Inadequate fine-tuning | 1. Perform error analysis to identify confused classes; 2. Verify class definitions in annotation guidelines; 3. Check for dataset imbalance; 4. Assess feature space alignment | 1. Use domain-adapted pre-trained models; 2. Implement stratified sampling; 3. Apply class-balanced loss functions |

| Poor boundary precision | Model architecture limitations, Resolution mismatch, Inadequate spatial supervision | 1. Evaluate at multiple IoU thresholds; 2. Check input resolution vs. model capabilities; 3. Assess augmentation strategies | 1. Select models with appropriate receptive fields; 2. Implement multi-scale training; 3. Add boundary-aware loss terms |

| Inconsistent labels across similar instances | Ambiguous annotation guidelines, Insufficient training examples, High inter-annotator variability | 1. Conduct label consistency audit; 2. Measure inter-annotator agreement; 3. Review guideline clarity | 1. Establish detailed annotation protocols; 2. Implement consensus mechanisms; 3. Use active learning for ambiguous cases |

| Performance degradation over time | Data drift, Concept drift, Feedback loop contamination | 1. Monitor performance metrics longitudinally; 2. Implement drift detection; 3. Audit recent annotations | 1. Establish continuous evaluation; 2. Implement data versioning; 3. Regular model retraining cycles |
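
For the first debugging step under systematic class confusion, a quick way to see which classes are being mixed up is to count the off-diagonal cells of the confusion matrix. The sketch below does this with the standard library; the class names are hypothetical.

```python
from collections import Counter


def most_confused_pairs(gold, predicted, top_n=3):
    """Return the most frequent (gold_label, predicted_label) mismatches."""
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must have the same length")
    mismatches = Counter((g, p) for g, p in zip(gold, predicted) if g != p)
    return mismatches.most_common(top_n)


# Hypothetical gold-standard labels and pre-labels for five cells.
gold = ["neutrophil", "neutrophil", "monocyte", "lymphocyte", "neutrophil"]
pre_labels = ["monocyte", "monocyte", "monocyte", "neutrophil", "neutrophil"]
confusions = most_confused_pairs(gold, pre_labels)
```

A pair that dominates this list points either to overlapping class definitions in the guidelines or to a domain gap the pre-trained model has not bridged.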

Experimental Protocols for Pre-labeling Systems

Standardized Validation Protocol for Pre-labeling Quality

To ensure reliable pre-labeling in research settings, implement this comprehensive validation protocol:

Phase 1: Baseline Establishment

- Select a representative sample of your dataset (200-500 instances, depending on data complexity)

- Create gold-standard manual annotations with multiple annotators and consensus resolution

- Establish performance benchmarks for your specific task (accuracy, precision, recall thresholds)

Phase 2: Model Adaptation

- Select a pre-trained model aligned with your data modality (ResNet/Inception for images, BERT for text) [32]

- Implement progressive fine-tuning: freeze all pre-trained layers initially and train only the new task head, then selectively unfreeze the later layers

- Use a lower learning rate than for training from scratch (typically 0.0001-0.001) to preserve pre-trained knowledge while adapting to the new domain

Phase 3: Quality Assessment

- Perform cross-validation with emphasis on edge cases and rare categories

- Conduct error analysis with confusion matrices and qualitative review

- Measure inter-annotator agreement between model and human experts
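
As a rough illustration of the error-analysis step, the sketch below lists the most frequent off-diagonal cells of a confusion matrix, that is, the (gold, predicted) label pairs the pre-labeling model confuses most often. The function name `top_confusions` and the tissue labels are hypothetical.

```python
from collections import Counter


def top_confusions(gold, predicted, k=3):
    """Return the k most frequent (gold, predicted) pairs where the labels differ."""
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must have the same length")
    errors = Counter((g, p) for g, p in zip(gold, predicted) if g != p)
    return errors.most_common(k)


# Hypothetical tissue labels from a gold-standard sample and the pre-labeling model.
gold = ["tumor", "tumor", "stroma", "necrosis", "tumor", "stroma"]
pred = ["tumor", "stroma", "stroma", "tumor", "stroma", "stroma"]
print(top_confusions(gold, pred))
```

The pairs at the top of this list are natural candidates for the qualitative review and for checking class definitions in the guidelines.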

Phase 4: Iterative Refinement

- Implement active learning to identify uncertain predictions for human review

- Establish feedback loops where corrected annotations retrain the model

- Monitor for performance regressions and concept drift
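
One simple way to monitor for drift, offered here as an assumption rather than a method the protocol prescribes, is to compare the distribution of model confidence scores on recent data against a reference window using the population stability index (PSI). The sketch assumes scores lie in [0, 1]; the bin count and smoothing constant are illustrative.

```python
import math


def population_stability_index(reference, current, n_bins=10, eps=1e-6):
    """PSI between two samples of scores in [0, 1]; 0 means identical binned distributions."""
    if not reference or not current:
        raise ValueError("both samples must be non-empty")

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            idx = min(max(int(v * n_bins), 0), n_bins - 1)  # clamp into a valid bin
            counts[idx] += 1
        # eps avoids log(0) for empty bins
        return [max(c / len(values), eps) for c in counts]

    ref = proportions(reference)
    cur = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Hypothetical confidence scores: the recent batch has shifted toward lower confidence.
reference_scores = [0.95] * 50 + [0.85] * 50
recent_scores = [0.95] * 20 + [0.55] * 80
print(population_stability_index(reference_scores, recent_scores))
```

Alert thresholds for PSI are conventions rather than guarantees and should be calibrated on your own data; a rising value is a prompt to audit recent annotations, not proof of concept drift.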

Quantitative Performance Assessment

Table: Performance Benchmarks for Pre-labeling Systems

| Metric | Target Threshold | Measurement Protocol | Interpretation Guidelines |

|---|---|---|---|

| Pre-labeling Accuracy | >90% for mature systems | (Correct pre-labels)/(Total instances) | Below 80% indicates need for model improvement; 80-90% requires selective human review; >90% suitable for bulk processing |

| Human Correction Rate | <30% for efficiency | (Corrected annotations)/(Total pre-labels) | Higher rates indicate poor pre-labeling quality; analyze patterns in required corrections |

| Time Savings | >50% vs. manual annotation | (Manual annotation time - Correction time)/(Manual annotation time) | Measures efficiency gains; below 25% suggests workflow optimization needed |

| Inter-annotator Agreement | >0.8 Cohen's Kappa | Agreement between model and expert annotators | Measures labeling consistency; below 0.6 indicates significant guideline or model issues |
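
The four metrics in the table can be computed with a few lines of standard-library Python. This is a minimal sketch: the function names, the example labels, and the timing figures are hypothetical, and Cohen's Kappa is implemented directly from its definition (observed agreement corrected for chance agreement).

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's Kappa for two label sequences of equal length."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label sequences must be non-empty and of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:  # both raters used a single identical label
        return 1.0
    return (observed - expected) / (1 - expected)


def prelabel_report(model_labels, expert_labels, n_corrected,
                    manual_minutes, correction_minutes):
    """Compute the benchmark metrics from the table above."""
    n = len(model_labels)
    return {
        "accuracy": sum(m == e for m, e in zip(model_labels, expert_labels)) / n,
        "correction_rate": n_corrected / n,
        "time_savings": (manual_minutes - correction_minutes) / manual_minutes,
        "kappa": cohen_kappa(model_labels, expert_labels),
    }


# Hypothetical ten-item sample.
model = ["pos", "pos", "neg", "neg", "pos", "neg", "neg", "neg", "pos", "neg"]
expert = ["pos", "pos", "neg", "neg", "neg", "neg", "neg", "neg", "pos", "pos"]
report = prelabel_report(model, expert, n_corrected=2,
                         manual_minutes=100, correction_minutes=40)
print(report)
```

In this example raw accuracy is 0.8 while Kappa is about 0.58, which shows why chance-corrected agreement is reported alongside accuracy.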

Research Reagent Solutions for Pre-labeling Experiments

Essential Computational Tools for Transfer Learning

Table: Research Reagent Solutions for Pre-labeling Implementation

| Tool Category | Specific Solutions | Function | Implementation Considerations |

|---|---|---|---|

| Pre-trained Models | ResNet, Inception, BERT, CLIP | Provide foundational feature extraction for various data modalities | Select based on domain similarity to target task; consider model size/computational constraints |

| Annotation Platforms | Labelbox, SuperAnnotate, Scale AI | Facilitate human-in-the-loop review and correction of pre-labels | Evaluate integration capabilities with existing MLOps infrastructure |

| Transfer Learning Frameworks | TensorFlow Hub, Hugging Face, PyTorch Hub | Simplify access to pre-trained models and transfer learning implementations | Consider community support, documentation, and model currency |

| Quality Validation Tools | FiftyOne, Aquarium Learning | Enable systematic error analysis and performance monitoring | Assess compatibility with data formats and visualization needs |

Frequently Asked Questions

How do we determine the optimal confidence threshold for automated pre-labeling acceptance?

Implement confidence thresholding where pre-labels with high confidence scores are automatically accepted, while low-confidence predictions route to human review [35]. The optimal threshold is domain-dependent and should be determined empirically by:

- Analyzing the distribution of confidence scores across your validation set

- Plotting precision-recall curves at different threshold levels

- Balancing the cost of human review against potential error rates

- Considering the criticality of annotation accuracy for downstream tasks

Thresholds between 0.7 and 0.9 typically provide a reasonable trade-off; use values at the higher end for safety-critical applications.
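
The empirical procedure can be sketched as a threshold sweep over a validation set where each pre-label has a confidence score and a known correct/incorrect flag. The function names, the example scores, and the precision target below are illustrative assumptions.

```python
def sweep_thresholds(confidences, correct, thresholds):
    """For each threshold, report how much is auto-accepted and how precise it is."""
    n = len(confidences)
    rows = []
    for t in thresholds:
        accepted = [ok for c, ok in zip(confidences, correct) if c >= t]
        rows.append({
            "threshold": t,
            "auto_accept_rate": len(accepted) / n,
            "review_rate": 1 - len(accepted) / n,
            # None when nothing is accepted at this threshold
            "accepted_precision": sum(accepted) / len(accepted) if accepted else None,
        })
    return rows


def pick_threshold(rows, min_precision):
    """Lowest threshold whose auto-accepted pre-labels meet the precision target."""
    for row in sorted(rows, key=lambda r: r["threshold"]):
        precision = row["accepted_precision"]
        if precision is not None and precision >= min_precision:
            return row["threshold"]
    return None


# Hypothetical validation results.
confidences = [0.95, 0.92, 0.88, 0.81, 0.77, 0.72, 0.65, 0.55]
correct = [True, True, True, False, True, False, True, False]
rows = sweep_thresholds(confidences, correct, thresholds=[0.7, 0.8, 0.9])
for row in rows:
    print(row)
print(pick_threshold(rows, min_precision=0.95))
```

Choosing the lowest threshold that meets the precision target minimizes the share of items routed to human review; the target itself should reflect how costly an undetected labeling error is downstream.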

What strategies effectively address bias amplification in pre-labeling systems?

Bias amplification occurs when models amplify biases already present in the training data [35] [34]. Mitigation strategies include:

- Pre-implementation Bias Audit: Analyze pre-training data for representation gaps and the target domain for potential distribution mismatches

- Diverse Data Sampling: Ensure fine-tuning data represents all relevant subpopulations and edge cases

- Adversarial Debiasing: Implement techniques that explicitly penalize model reliance on protected attributes

- Continuous Monitoring: Track performance metrics disaggregated by relevant data segments

- Human Oversight Prioritization: Direct human review toward under-represented groups where models likely perform worse
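
Continuous monitoring with disaggregated metrics can be sketched as follows. The segment labels (for example acquisition site or patient subgroup), the function names, and the 0.1 gap tolerance are hypothetical and should be replaced with whatever grouping is relevant to your data.

```python
from collections import defaultdict


def accuracy_by_segment(gold, predicted, segments):
    """Pre-labeling accuracy computed separately for each data segment."""
    totals, hits = defaultdict(int), defaultdict(int)
    for g, p, s in zip(gold, predicted, segments):
        totals[s] += 1
        hits[s] += int(g == p)
    return {s: hits[s] / totals[s] for s in totals}


def flag_gaps(per_segment, overall, max_gap=0.1):
    """Segments whose accuracy falls more than max_gap below the overall accuracy."""
    return sorted(s for s, acc in per_segment.items() if overall - acc > max_gap)


# Hypothetical labels from two acquisition sites.
gold = ["a", "a", "b", "b", "a", "b", "a", "b"]
pred = ["a", "a", "b", "b", "a", "b", "b", "a"]
segments = ["site1"] * 6 + ["site2"] * 2

per_segment = accuracy_by_segment(gold, pred, segments)
overall = sum(g == p for g, p in zip(gold, pred)) / len(gold)
print(per_segment, flag_gaps(per_segment, overall))
```

Flagged segments are the ones where human review should be prioritized; small segments give noisy estimates, so check sample sizes before acting on a flag.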

How should we handle domain shift between pre-training data and our specialized research data?

Domain adaptation techniques bridge the gap between source (pre-training) and target (research) domains:

- Progressive Fine-tuning: Start with lower layers frozen, gradually unfreezing while monitoring validation performance on target data

- Feature Alignment: Implement domain adaptation layers that explicitly minimize distribution differences between source and target features

- Data Augmentation: Apply domain-specific transformations to make models invariant to irrelevant variations in research data

- Multi-Task Learning: Jointly train on auxiliary tasks that force the model to learn domain-relevant representations

- Architecture Modification: Adjust input preprocessing and early layers to accommodate domain-specific data characteristics

What quality assurance framework ensures consistent pre-labeling across multiple annotators and iterations?

Establish a comprehensive QA framework incorporating:

- Annotation Guidelines: Develop detailed, unambiguous protocols with examples and edge case handling instructions [34]

- Regular Calibration Sessions: Conduct periodic review sessions to maintain consistency across human annotators

- Quantitative Metrics: Track inter-annotator agreement, precision, recall, and drift metrics consistently

- Blind Review: Implement periodic blind re-annotation of previously labeled samples to measure consistency

- Feedback Integration: Create structured processes for incorporating edge case discoveries into annotation guidelines

How do we implement effective active learning to minimize human annotation effort?

Active learning prioritizes annotation efforts on the most valuable samples:

- Uncertainty Sampling: Flag instances where model confidence is lowest for human review

- Diversity Sampling: Ensure selected samples represent diverse regions of the feature space

- Expected Model Change: Prioritize samples that would cause the greatest model updates if labeled

- Batch Selection: Optimize sets of samples for parallel annotation considering both uncertainty and diversity

- Stopping Criteria: Establish metrics to determine when additional labeling provides diminishing returns

Implementation requires balancing exploration (diverse samples) with exploitation (uncertain samples), for example with multi-armed bandit approaches or similar frameworks.
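
A minimal sketch of batch selection that combines uncertainty and diversity is shown below. It is a simple heuristic, not a bandit method: it shortlists the highest-entropy predictions, then greedily picks items that are far apart in feature space. The function names, the `pool_factor` parameter, and the toy data are assumptions for illustration.

```python
import math


def entropy(probs):
    """Shannon entropy of a predicted class distribution (higher means less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_batch(probabilities, features, batch_size, pool_factor=3):
    """Shortlist the most uncertain items, then pick a spread-out subset of them."""
    if not probabilities or batch_size < 1:
        return []
    ranked = sorted(range(len(probabilities)),
                    key=lambda i: entropy(probabilities[i]), reverse=True)
    shortlist = ranked[: batch_size * pool_factor]
    chosen = [shortlist[0]]  # start from the single most uncertain item
    while len(chosen) < min(batch_size, len(shortlist)):
        remaining = [i for i in shortlist if i not in chosen]
        # pick the item farthest from its nearest already-chosen neighbour
        best = max(remaining,
                   key=lambda i: min(math.dist(features[i], features[j]) for j in chosen))
        chosen.append(best)
    return chosen


# Hypothetical predictions and 2-D feature embeddings for five unlabeled items.
probabilities = [[0.5, 0.5], [0.51, 0.49], [0.9, 0.1], [0.55, 0.45], [0.99, 0.01]]
features = [[0, 0], [0.1, 0], [5, 5], [3, 3], [9, 9]]
print(select_batch(probabilities, features, batch_size=2, pool_factor=2))
```

With `pool_factor=1` the selection reduces to pure uncertainty sampling and would pick the two near-duplicate items 0 and 1; a larger shortlist trades some uncertainty for coverage of the feature space.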

Best Practices for Developing Clear, Unambiguous Annotation Guidelines

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common causes of mismatched automated annotations? Mismatches often stem from unclear or incomplete annotation guidelines, leading to inconsistent interpretations by both human annotators and AI models. Common issues include class overlap (e.g., distinguishing 'supportive' from 'neutral' sentiment), ambiguous definitions for complex labels, and a lack of examples for difficult or edge cases [36]. Furthermore, automated models can perform variably across different tasks, and their outputs may significantly diverge from human judgment without proper validation [37].

FAQ 2: How can we quickly identify a mismatch between automated and human annotations? Implement a systematic quality assurance (QA) workflow. This involves having expert annotators review a random sample of the AI-annotated data that is large enough to estimate the error rate reliably. Tracking metrics like inter-annotator agreement can help quantify inconsistencies. Using a platform that allows for flagging ambiguous data points is also crucial for identifying mismatches early [36].

FAQ 3: Our team disagrees on specific annotation rules. How can we create a single source of truth? Develop and maintain a living document of detailed annotation guidelines. This document should be created iteratively: have expert annotators label a small dataset, review all disagreements, and use those points of confusion to refine and expand the rules. This process ensures guidelines are grounded in practical challenges, not just theory [36].

FAQ 4: Can we fully automate the data annotation process? While automation can dramatically accelerate annotation, a fully automated process is not recommended for critical research applications. A human-in-the-loop (HITL) approach is considered best practice. In this model, automation handles initial labeling or pre-annotation, while human experts focus on complex cases, quality control, and validating the model's outputs. This keeps expert judgment on the cases where errors are most costly [21] [37].

Troubleshooting Guide: Mismatched Automated Annotations

| Problem | Root Cause | Solution | Validation Protocol |

|---|---|---|---|

| Low Inter-Annotator Agreement | Ambiguous class definitions; lack of examples for edge cases [36]. | Refine guidelines with clear, distinct class definitions and add canonical examples of each, including borderline cases. | Re-measure agreement (e.g., Cohen's Kappa) on a new sample of 100-200 items after guideline update. |

| Systematic AI Model Bias | AI model trained on biased or non-representative data; prompt design issues for LLMs [37]. | Audit training data for representation; implement prompt tuning and optimization for LLM-based annotation [37]. | Compare AI output against a human-annotated gold-standard test set; calculate precision/recall for underrepresented classes. |

| Poor Quality in Pre-labeled Data | Automated pre-annotation tool has inherent limitations or errors that human annotators blindly accept. | Use automation for pre-labels but require human annotators to actively verify every tag, not just passively accept them. | Introduce a QA check where expert annotators review a subset of pre-annotated data before full-scale labeling begins. |

| Inconsistent Handling of Overlapping Classes | Guidelines do not provide a clear decision hierarchy for items that could belong to multiple classes. | Create a flow-chart or decision tree within the guidelines to resolve common class overlap scenarios [36]. | Track how often annotators disagree on the previously ambiguous classes; a decrease indicates the new decision tree is effective. |
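
The validation step for systematic model bias (precision and recall for underrepresented classes against a gold-standard test set) can be sketched as below. The function name and the class labels are hypothetical.

```python
def per_class_precision_recall(gold, predicted):
    """Precision, recall, and support for every class seen in gold or predicted labels."""
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must have the same length")
    report = {}
    for c in sorted(set(gold) | set(predicted)):
        tp = sum(g == c and p == c for g, p in zip(gold, predicted))
        fp = sum(g != c and p == c for g, p in zip(gold, predicted))
        fn = sum(g == c and p != c for g, p in zip(gold, predicted))
        report[c] = {
            # None marks an undefined ratio (class never predicted / never present)
            "precision": tp / (tp + fp) if tp + fp else None,
            "recall": tp / (tp + fn) if tp + fn else None,
            "support": tp + fn,
        }
    return report


# Hypothetical imbalanced test set: the rare class is missed half the time.
gold = ["common"] * 8 + ["rare"] * 2
pred = ["common"] * 8 + ["common", "rare"]
report = per_class_precision_recall(gold, pred)
print(report)
```

Overall accuracy in this example is 90%, yet recall for the rare class is only 0.5, which is the pattern that aggregate metrics hide.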

Experimental Protocol for Validating Automated Annotations

This protocol provides a step-by-step methodology to benchmark an automated annotation system against human-generated ground truth, as referenced in the troubleshooting guide.

1. Hypothesis: The automated annotation system (e.g., an LLM, a supervised model) can achieve a level of agreement with human experts that meets or exceeds the observed inter-annotator agreement among humans.

2. Materials and Reagents:

| Research Reagent Solution | Function in Experiment |

|---|---|

| Gold-Standard Test Set | A benchmark dataset of 200-500 items, independently annotated by at least two human experts with high agreement, serving as ground truth. |

| Automated Annotation Tool | The system to be validated (e.g., GPT-4 API, fine-tuned BERT model, Encord, Snorkel Flow) [21] [37]. |

| Annotation Guideline Document | The detailed, iterative rules and examples used by both human and automated annotators [36]. |

| Statistical Analysis Software | Software (e.g., Python, R) to calculate performance metrics like Cohen's Kappa, F1 score, and confusion matrices. |

3. Method:

- Step 1: Curation of Gold-Standard Test Set. Select a representative sample from your dataset. Have multiple domain-expert annotators label this set independently using the finalized guidelines. Retain only items on which the annotators agree or reach consensus, and confirm that overall agreement on the set meets a predefined threshold (e.g., Cohen's Kappa > 0.8).

- Step 2: Automated Annotation Run. Process the entire gold-standard test set using the automated annotation tool. Ensure the tool's prompts or models are configured based on the same annotation guidelines.