Unlocking Cellular Heterogeneity: The Essential Role of Clustering in Single-Cell RNA-Seq Cell Type Identification

Abstract

This comprehensive review explores the critical role of unsupervised clustering in single-cell RNA sequencing for cell type identification, addressing both foundational concepts and cutting-edge methodologies. We examine the computational challenges posed by high-dimensional, sparse scRNA-seq data and systematically evaluate the performance of diverse clustering algorithms, including classical machine learning, community detection, and deep learning approaches. The article provides actionable insights for researchers and drug development professionals on method selection, parameter optimization, and validation strategies, supported by recent benchmark studies. Finally, we discuss emerging trends and future directions in clustering methodology to enhance precision in biomedical and clinical research.

The Computational Challenge: Why Clustering is Essential for Decoding Cellular Heterogeneity

The fundamental limitation of traditional bulk RNA sequencing has driven a major shift in biological research. Bulk RNA-seq provides a population-level average of gene expression across thousands to millions of cells, and this averaging inevitably masks biological heterogeneity within cell populations [1]. Single-cell methods move from measuring ensemble averages to profiling transcriptomes cell by cell, enabling researchers to resolve the cellular complexity that drives development, disease, and physiological processes [2] [1]. This advance has been particularly transformative for understanding heterogeneous tissues such as tumors, the immune system, and the nervous system, where distinct cell subtypes and transitional states execute specialized functions [3] [1].

At the heart of interpreting single-cell data lies clustering analysis, a computational methodology that groups cells with similar gene expression profiles, enabling cell type identification and characterization [4]. Clustering provides the framework upon which biological interpretation is built, transforming high-dimensional transcriptomic data into biologically meaningful categories. However, this process faces significant challenges related to consistency, reliability, and scalability [4]. As single-cell technologies continue to evolve and generate increasingly large datasets, robust clustering methodologies become ever more critical for accurate biological discovery. This review examines the technical landscape of single-cell RNA sequencing, with particular emphasis on clustering methodologies as the computational cornerstone of cell type identification research.

Technical Foundations: From Bulk to Single-Cell Resolution

Fundamental Methodological Differences

The transition from bulk to single-cell analysis is more than a difference in scale; it changes both experimental design and biological interpretation. Bulk RNA sequencing provides a composite signal averaging gene expression across all cells in a sample, making it impossible to determine whether a transcript originates from all cells equally or from a specialized subset [1]. This approach is analogous to hearing the average volume of a large choir rather than distinguishing individual voices. In contrast, single-cell RNA sequencing captures a transcriptome-wide profile of each individual cell, enabling researchers to identify rare cell types, characterize cellular heterogeneity, and reconstruct developmental trajectories [2] [1].

The experimental workflows differ significantly between these approaches. Bulk RNA-seq begins with RNA extraction from entire tissue samples or cell populations, followed by library preparation and sequencing [1]. Single-cell protocols, however, require the generation of high-quality single-cell suspensions, individual cell partitioning, cell lysis within isolated compartments, barcoding of transcripts from each cell, and finally library preparation [2] [1]. The partitioning step is particularly crucial, achieved through various technologies including microfluidics (10× Genomics Chromium), microwell plates (BD Rhapsody), or combinatorial barcoding approaches (Parse Biosciences) [2].

Table 1: Comparative Analysis of Bulk versus Single-Cell RNA Sequencing

| Feature | Bulk RNA-seq | Single-Cell RNA-seq |
|---|---|---|
| Resolution | Population average | Individual cells |
| Heterogeneity Detection | Masks cellular diversity | Reveals cellular subpopulations |
| Rare Cell Identification | Limited sensitivity | High sensitivity |
| Required Input | Total RNA from cell population | Single-cell suspension |
| Technical Complexity | Standardized protocols | Specialized equipment and expertise |
| Cost Considerations | Lower per sample | Higher per cell but richer information |
| Data Complexity | Manageable | High-dimensional, requires specialized analysis |
| Primary Applications | Differential expression between conditions, biomarker discovery | Cell type identification, developmental trajectories, tumor heterogeneity |

Experimental Workflow and Platform Selection

The single-cell RNA sequencing workflow encompasses three critical phases: (1) sample preparation and single-cell partitioning, (2) library preparation and sequencing, and (3) computational analysis and clustering [2]. Sample preparation requires optimizing tissue dissociation protocols to generate viable single-cell suspensions while minimizing stress-induced transcriptional responses [2]. Enzymatic digestion, mechanical dissociation, or nuclear isolation represent common approaches, with the optimal strategy dependent on tissue type and research objectives [2]. Recent advances in fixation-based methods, such as ACME (methanol maceration) and reversible DSP fixation, help preserve native transcriptional states by halting cellular responses during dissociation [2].

The selection of an appropriate partitioning platform represents a critical decision point in experimental design. Commercial solutions offer varying throughput, capture efficiencies, and compatibility with different sample types [2]. Microfluidic approaches (10× Genomics Chromium) provide high capture efficiency but have limitations regarding maximum cell size. Microwell-based systems (BD Rhapsody, Singleron) accommodate larger cells but with moderate capture efficiency. Plate-based combinatorial barcoding technologies (Parse Biosciences, Scale BioScience) enable massive scalability but require substantial input cell numbers [2]. The recent introduction of vortex-based oil partitioning (Fluent/PIPseq, now commercialized by Illumina) eliminates microfluidics size restrictions while maintaining high throughput capabilities [2].

Table 2: Commercial Single-Cell Partitioning Platforms

| Platform | Technology | Throughput (Cells/Run) | Capture Efficiency | Max Cell Size | Special Considerations |
|---|---|---|---|---|---|
| 10× Genomics Chromium | Microfluidic oil partitioning | 500-20,000 | 70-95% | 30 µm | Industry standard, high efficiency |
| BD Rhapsody | Microwell partitioning | 100-20,000 | 50-80% | 30 µm | Compatible with larger cells |
| Singleron SCOPE-seq | Microwell partitioning | 500-30,000 | 70-90% | <100 µm | Larger cell capacity |
| Parse Evercode | Multiwell-plate | 1,000-1M | >90% | - | Lowest cost per cell, high input requirement |
| Scale BioScience | Multiwell-plate | 84K-4M | >85% | - | Extreme throughput |
| Fluent/PIPseq (Illumina) | Vortex-based oil partitioning | 1,000-1M | >85% | - | No size restrictions |

Single-Cell RNA Sequencing Experimental Workflow

The Central Role of Clustering in Cell Type Identification

Computational Foundations of Clustering Analysis

Clustering represents the computational cornerstone of single-cell RNA-seq analysis, transforming high-dimensional gene expression data into biologically meaningful cell groups [4]. The process begins with quality control to remove low-quality cells and technical artifacts, followed by normalization to account for varying sequencing depth between cells [2] [5]. Dimensionality reduction techniques, particularly principal component analysis (PCA), then reduce the computational complexity while preserving biological signal [4]. Graph-based clustering algorithms, predominantly Louvain and Leiden approaches, group cells based on similarity in their gene expression profiles within this reduced dimension space [4].
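The depth-normalization step described above can be illustrated with a minimal sketch. It assumes a plain list-of-lists count matrix (cells by genes) and an illustrative scaling target of 10,000 counts per cell; both are assumptions of this example, and established pipelines such as Seurat or Scanpy ship their own, more complete implementations.

```python
import math

def normalize_log1p(counts, target_sum=10_000):
    """Scale each cell to the same total count, then apply log(1 + x).

    counts: list of per-cell lists of raw counts (cells x genes).
    target_sum: illustrative scaling target, an assumption of this sketch.
    """
    normalized = []
    for cell in counts:
        total = sum(cell)
        if total == 0:
            # Empty cells would normally be removed during quality control.
            normalized.append([0.0] * len(cell))
            continue
        scale = target_sum / total
        normalized.append([math.log1p(c * scale) for c in cell])
    return normalized

# Two cells with the same composition but a 10x difference in sequencing depth.
shallow = [1, 3, 0, 6]
deep = [10, 30, 0, 60]
norm = normalize_log1p([shallow, deep])
```

After normalization the two cells have matching profiles, which is the property that keeps sequencing depth from dominating the downstream PCA and graph construction.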

The stochastic nature of these algorithms presents a fundamental challenge to clustering reliability. As these methods search for optimal cell partitions in random orders, cluster labels can vary significantly across different runs depending on the random seed initialization [4]. This inconsistency can lead to the disappearance of previously identified clusters or the emergence of entirely new clusters across analyses, directly impacting biological interpretation and the reliability of downstream analyses such as differential expression and cell-cell communication inference [4].

Addressing Clustering Inconsistency with scICE

The recent development of single-cell Inconsistency Clustering Estimator (scICE) addresses the critical challenge of clustering variability [4]. This method evaluates clustering consistency by generating multiple cluster labels through simple variation of random seeds in the Leiden algorithm, then quantifying label similarity using the inconsistency coefficient (IC) metric [4]. The IC assesses the agreement of cell membership across multiple clustering runs, with values approaching 1 indicating high consistency and reliability [4]. This approach represents a significant advancement over conventional consensus clustering methods, achieving up to 30-fold improvement in computational speed while providing robust consistency evaluation [4].
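The idea of comparing labels across seeds can be sketched with a much simpler agreement measure. The example below is not the IC metric of scICE; it substitutes the plain Rand index (the fraction of cell pairs that two labelings treat the same way), averaged over all pairs of runs. The label vectors are hypothetical.

```python
from itertools import combinations

def pair_agreement(labels_a, labels_b):
    """Fraction of cell pairs that both labelings treat the same way
    (together in both, or apart in both). This is the plain Rand index."""
    pairs = list(combinations(range(len(labels_a)), 2))
    agree = sum(
        (labels_a[i] == labels_a[j]) == (labels_b[i] == labels_b[j])
        for i, j in pairs
    )
    return agree / len(pairs)

def mean_consistency(runs):
    """Average pairwise agreement over all pairs of clustering runs."""
    scores = [pair_agreement(a, b) for a, b in combinations(runs, 2)]
    return sum(scores) / len(scores)

# Hypothetical labels from three runs with different random seeds.
run_seed0 = [0, 0, 0, 1, 1, 1]
run_seed1 = [1, 1, 1, 0, 0, 0]  # same partition, label names swapped
run_seed2 = [0, 0, 1, 1, 1, 1]  # one cell moved to the other cluster
stable = mean_consistency([run_seed0, run_seed1])
mixed = mean_consistency([run_seed0, run_seed1, run_seed2])
```

Because the measure works on pairs of cells, renaming clusters between runs does not lower the score; only genuine changes in membership do.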

The scICE framework employs parallel processing to efficiently evaluate clustering consistency across different resolution parameters [4]. After standard quality control and dimensionality reduction with automatic signal selection (e.g., using scLENS), the method constructs a cell similarity graph and distributes it across multiple computing cores [4]. Each process then applies the Leiden algorithm simultaneously to generate multiple cluster labels, enabling comprehensive evaluation of clustering stability across various parameters [4]. This systematic approach identifies reliable cluster configurations while excluding unstable results that may represent computational artifacts rather than biological reality [4].

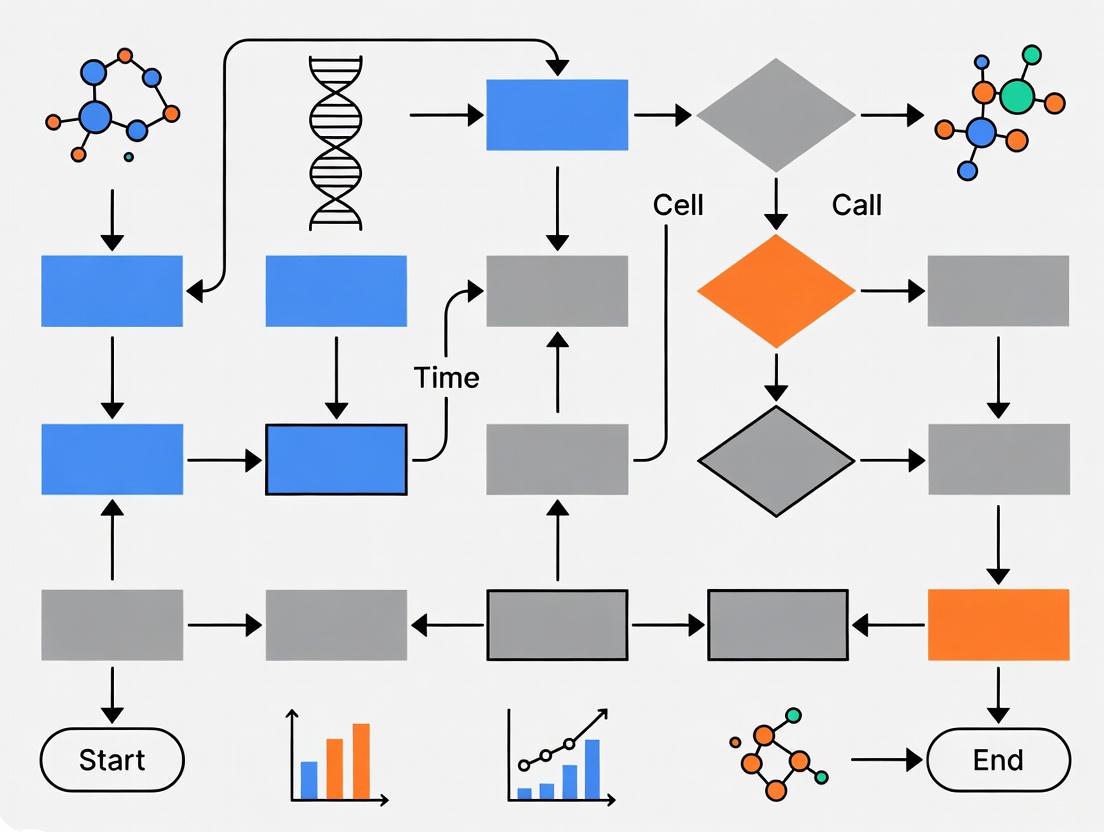

Clustering Consistency Evaluation Framework

Classification of Computational Annotation Methods

Cell type annotation following clustering has evolved through several computational paradigms, each with distinct advantages and limitations [5]. Marker-based methods represent the earliest approach, manually annotating clusters using known cell-type-specific genes from databases such as PanglaoDB and CellMarker [5]. Reference-based correlation methods categorize unknown cells by comparing their expression profiles to pre-constructed reference atlases like the Human Cell Atlas or Mouse Cell Atlas [5]. Supervised classification methods train machine learning models on pre-annotated datasets to predict cell types in new data [5]. Most recently, large-scale pretraining approaches leverage unsupervised deep learning on massive single-cell datasets to capture fundamental gene expression patterns that generalize across diverse cell types [6] [5].
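Marker-based annotation, the simplest of these paradigms, can be sketched as a scoring rule: each cluster receives the cell type whose markers are most highly expressed on average. The function name, the input layout, and the expression values below are hypothetical; the marker genes are commonly used T cell and B cell markers, listed here for illustration rather than taken from a specific database.

```python
def annotate_clusters(cluster_means, marker_sets):
    """Assign each cluster the cell type whose markers have the highest
    mean expression in that cluster.

    cluster_means: {cluster_id: {gene: mean expression}}
    marker_sets:   {cell_type: [marker genes]}
    """
    annotations = {}
    for cluster_id, expr in cluster_means.items():
        scores = {
            cell_type: sum(expr.get(g, 0.0) for g in genes) / len(genes)
            for cell_type, genes in marker_sets.items()
        }
        annotations[cluster_id] = max(scores, key=scores.get)
    return annotations

# Illustrative markers; a real analysis would draw them from a curated database.
markers = {
    "T cell": ["CD3D", "CD3E"],
    "B cell": ["CD79A", "MS4A1"],
}
means = {
    0: {"CD3D": 2.1, "CD3E": 1.8, "CD79A": 0.1, "MS4A1": 0.0},
    1: {"CD3D": 0.2, "CD3E": 0.1, "CD79A": 2.5, "MS4A1": 1.9},
}
labels = annotate_clusters(means, markers)
```

The sketch also makes the stated limitation concrete: a cluster whose true identity is absent from `marker_sets` is still forced into one of the known labels.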

The integration of natural language processing and large language models represents the cutting edge of cell type annotation methodology [6]. These approaches enhance annotation accuracy and scalability by learning complex relationships between gene expression patterns and cell type definitions [6]. Concurrently, emerging single-cell long-read sequencing technologies enable isoform-level transcriptomic profiling, offering higher resolution than conventional gene expression-based methods and providing opportunities to refine cell type definitions based on splicing heterogeneity [6].

Table 3: Computational Methods for Cell Type Annotation

| Method Category | Principles | Advantages | Limitations |
|---|---|---|---|
| Marker Gene-Based | Manual annotation using known cell-type-specific genes | Simple, interpretable | Limited to known markers, subjective |
| Reference-Based Correlation | Similarity matching to reference atlases | Comprehensive for well-characterized types | Limited for novel cell types |
| Supervised Classification | Machine learning trained on labeled data | Automated, scalable | Dependent on training data quality |
| Large-Scale Pretraining | Unsupervised deep learning on massive datasets | Discovers novel patterns, generalizable | Computationally intensive, complex implementation |

Advanced Applications and Visualization in Single-Cell Research

Spatial Transcriptomics and Tissue Context

The integration of single-cell RNA sequencing with spatial information represents a frontier in transcriptional profiling, preserving the architectural context of cells within tissues [7]. Spatial transcriptomics technologies enable comprehensive mapping of gene expression while maintaining positional information, revealing how cellular organization influences function [7]. This approach is particularly valuable for understanding tissue microenvironments, cell-cell interactions, and spatial gradients of gene expression in development and disease [7].

Novel computational tools have emerged to address the visualization challenges inherent in spatial omics data. Spaco (Spatial Coloring) represents a space-aware colorization method specifically designed for spatial datasets that considers intricate tissue topology when assigning colors to categorical data [7]. This approach optimizes color palettes to enhance visual differentiation between neighboring categories, addressing the limitation of traditional color schemes where adjacent regions with similar colors become difficult to distinguish [7].

Accessible Visualization for Color Vision Diversity

Effective visualization is paramount for interpreting complex single-cell data, yet traditional color schemes often create barriers for researchers with color vision deficiencies (CVD) [8] [9]. Approximately 8% of men and 0.5% of women experience some form of CVD, making conventional red-green color palettes problematic for a significant portion of the scientific community [8] [9]. The misuse of color in scientific communication remains prevalent, with rainbow-like and red-green color maps continuing to distort data representation and exclude CVD readers [8].

The scatterHatch R package addresses this challenge through redundant coding of cell groups using both colors and patterns [9]. This approach combines CVD-friendly color palettes with distinctive patterning (horizontal, vertical, diagonal, checkers, crisscross) to differentiate cell groups in both dense clusters and sparse point distributions [9]. By providing dual visual cues, scatterHatch enhances accessibility for all readers regardless of color perception ability while maintaining aesthetic quality [9]. The package supports customization of pattern types, line colors, and thickness, enabling researchers to create highly distinguishable visualizations even for datasets containing dozens of cell groups [9].
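The principle of redundant coding can be sketched independently of any plotting library. The example below is not the scatterHatch API; it is a hypothetical helper that pairs the Okabe-Ito palette, a widely used CVD-friendly color set, with the pattern names listed above so that both cues change from one group to the next.

```python
from math import gcd

# Okabe-Ito palette, widely used as a CVD-friendly color set.
OKABE_ITO = ["#E69F00", "#56B4E9", "#009E73", "#F0E442",
             "#0072B2", "#D55E00", "#CC79A7", "#000000"]
# Pattern names mirror those listed in the text; "none" means a plain point.
PATTERNS = ["none", "horizontal", "vertical", "diagonal", "checkers", "crisscross"]

def assign_styles(groups):
    """Give each group a unique (color, pattern) pair in which both cues
    change from one group to the next."""
    n_colors, n_patterns = len(OKABE_ITO), len(PATTERNS)
    # Cycling both lists in step yields unique pairs up to their least
    # common multiple (24 for 8 colors and 6 patterns).
    limit = n_colors * n_patterns // gcd(n_colors, n_patterns)
    if len(groups) > limit:
        raise ValueError(f"this simple scheme supports at most {limit} groups")
    return {
        group: {"color": OKABE_ITO[i % n_colors], "pattern": PATTERNS[i % n_patterns]}
        for i, group in enumerate(groups)
    }

groups = [f"cluster_{i}" for i in range(20)]
styles = assign_styles(groups)
```

The resulting dictionary could be passed to whatever plotting layer is in use; the mapping from pattern names to actual hatch styles is left to that layer.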

Successful single-cell research requires both wet-lab reagents and computational resources working in concert. The following toolkit summarizes essential components for designing and implementing single-cell studies:

Table 4: Essential Single-Cell Research Resources

| Resource Category | Specific Examples | Function and Application |
|---|---|---|
| Commercial Platforms | 10× Genomics Chromium, BD Rhapsody, Parse Evercode | Single-cell partitioning, barcoding, and library preparation |
| Dissociation Reagents | Enzymatic cocktails (collagenase, trypsin), ACME (methanol fixation) | Tissue dissociation into single-cell suspensions |
| Viability Stains | Propidium iodide, DAPI, fluorescent live/dead stains | Assessment of cell viability before partitioning |
| Reference Databases | Human Cell Atlas, Mouse Cell Atlas, PanglaoDB, CellMarker | Reference data for cell type annotation and marker identification |
| Analysis Pipelines | Seurat (R), Scanpy (Python), scICE | Data processing, clustering, and consistency evaluation |
| Visualization Tools | scatterHatch, Spaco, ggplot2 | Creation of accessible, publication-quality figures |
| Specialized Reagents | Feature Barcoding antibodies, CRISPR screening reagents | Multimodal analysis beyond transcriptomics |

Future Perspectives and Concluding Remarks

The single-cell revolution continues to accelerate, with emerging technologies promising even greater resolution and multidimensionality. Multiomics approaches simultaneously capturing transcriptomic, epigenomic, and proteomic information from individual cells are expanding our understanding of cellular regulation [3]. Computational methods are evolving toward dynamic clustering that can adapt to newly acquired data and open-world recognition frameworks capable of identifying novel cell types beyond training distributions [5]. The integration of large language models and transfer learning approaches addresses the critical challenge of long-tail distributions in cellular heterogeneity, enhancing recognition of rare cell types [6] [5].

The role of clustering in cell type identification research remains fundamental, serving as the critical bridge between raw sequencing data and biological insight. As datasets grow in scale and complexity, robust, consistent, and scalable clustering methodologies will become increasingly essential for extracting meaningful biological knowledge from single-cell experiments. The continued development of computational infrastructure, algorithmic innovations, and accessible visualization tools will empower researchers to fully leverage the potential of single-cell technologies, ultimately advancing our understanding of cellular biology in health and disease.

Defining the Clustering Problem in High-Dimensional Transcriptomic Space

In the field of modern biology, single-cell and spatial transcriptomic technologies have revolutionized our ability to profile gene expression, uncovering cellular heterogeneity with unprecedented resolution. The fundamental challenge, however, lies in interpreting these high-dimensional datasets to identify distinct cell types and states—a process that relies heavily on computational clustering. Clustering serves as the critical first step in discerning biological meaning from complex transcriptomic data, transforming thousands of gene measurements into actionable insights about cellular identity, function, and organization within tissues.

As these technologies evolve, they present unique data characteristics that complicate clustering analyses. Single-cell RNA sequencing (scRNA-seq) achieves single-cell resolution but requires tissue dissociation, resulting in complete loss of spatial context [10]. Conversely, spatial transcriptomics preserves spatial localization within tissues but often does not achieve true single-cell resolution, as spots in datasets of varying resolution contain different numbers of cells [10]. This multi-faceted nature of transcriptomic data demands clustering methods that can adapt to different data structures and biological questions, making the choice of appropriate algorithms a pivotal decision in cell type identification research.

The Core Clustering Problem: Technical Dimensions

Fundamental Computational Challenges

The process of clustering transcriptomic data involves several interconnected technical challenges that directly impact the accuracy of cell type identification:

High-Dimensional Sparsity: Transcriptomic data typically measures thousands of genes across thousands of cells, creating extremely high-dimensional spaces where distances between points become less meaningful—a phenomenon known as the "curse of dimensionality." This is compounded by technical zeros and dropout events where expressed transcripts are not detected.

Data Distribution Variance: Different single-cell modalities produce data with markedly different distributions and feature dimensionalities. Single-cell proteomic data, for instance, often exhibits fundamentally different characteristics from transcriptomic data, posing non-trivial challenges for applying clustering techniques uniformly across modalities [11].

Scale and Noise: Large-scale datasets containing hundreds of thousands of cells require computationally efficient algorithms, while simultaneously dealing with various sources of biological and technical noise that can obscure true cell type distinctions.
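The loss of distance contrast in high dimensions can be demonstrated with a small simulation. This sketch uses uniformly random points rather than real expression data, which is an assumption made purely to isolate the geometric effect; the function name and the point and dimension counts are illustrative.

```python
import math
import random

def relative_contrast(n_points, n_dims, rng):
    """(max - min) / min distance from one query point to random points."""
    query = [rng.random() for _ in range(n_dims)]
    dists = []
    for _ in range(n_points):
        point = [rng.random() for _ in range(n_dims)]
        dists.append(math.dist(query, point))
    return (max(dists) - min(dists)) / min(dists)

rng = random.Random(0)
low_dim = relative_contrast(200, 2, rng)      # nearest and farthest differ a lot
high_dim = relative_contrast(200, 2000, rng)  # all points look similarly far
```

In two dimensions the farthest point is many times farther away than the nearest one, whereas in 2,000 dimensions the gap shrinks to a small fraction of the nearest distance. This is one reason clustering is usually performed after dimensionality reduction rather than on the full gene space.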

Biological Interpretation Complexities

Beyond computational hurdles, biological interpretation introduces additional layers of complexity:

Cell Type Granularity: The appropriate resolution for clustering remains ambiguous, as algorithms must distinguish between fundamental cell types, transitional states, and subtle subtypes without ground truth labels.

Spatial Organization: For spatial transcriptomics, clustering must account for spatial dependencies where neighboring spots often share similar expression patterns due to microenvironmental influences [10].

Temporal Dynamics: Cells exist along developmental trajectories, creating continuous transitions rather than discrete populations that challenge partition-based clustering approaches.

Table 1: Key Challenges in Transcriptomic Data Clustering

| Challenge Category | Specific Issue | Impact on Cell Type Identification |
|---|---|---|
| Technical | High-dimensional sparsity | Reduces distance sensitivity between cell types |
| Technical | Data distribution variance | Limits cross-modal algorithm transfer |
| Technical | Computational scale | Restricts analysis of large cell populations |
| Biological | Continuous transitions | Obscures discrete cell type boundaries |
| Biological | Spatial dependencies | Requires specialized spatial clustering methods |
| Biological | Tissue dissociation artifacts | Alters apparent transcriptional states |

Current Computational Approaches and Methodologies

Algorithm Categories and Representatives

Clustering methods for transcriptomic data have evolved into several distinct paradigms, each with unique strengths for handling particular data characteristics:

Classical Machine Learning Approaches: Methods like SC3 employ consensus clustering to enhance reliability by integrating multiple clustering algorithms [11]. Others like TSCAN, SHARP, and MarkovHC are recommended for users who prioritize time efficiency [11].

Community Detection Methods: Algorithms such as Leiden and Louvain leverage graph theory to identify densely connected groups of cells in nearest-neighbor graphs, often providing excellent scalability [11].

Deep Learning Approaches: Modern methods like scDCC, scAIDE, and scDeepCluster use neural networks to learn informative latent representations, with some like scDCC and scDeepCluster recommended for users prioritizing memory efficiency [11].
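To make the community detection idea concrete, the sketch below computes modularity, the objective that Louvain optimizes and that Leiden can also use, for a given partition of a small graph. It does not implement either algorithm's search procedure; the toy graph (two triangles joined by one edge) and the function name are illustrative.

```python
from collections import defaultdict

def modularity(edges, membership):
    """Newman modularity Q of a partition of an undirected, unweighted graph.

    edges: list of (u, v) pairs; membership: {node: community id}.
    Q = sum over communities of [ L_c / m - (d_c / (2m))^2 ], where L_c is the
    number of intra-community edges, d_c the summed degree, m the edge count.
    """
    m = len(edges)
    internal = defaultdict(int)
    degree_sum = defaultdict(int)
    for u, v in edges:
        degree_sum[membership[u]] += 1
        degree_sum[membership[v]] += 1
        if membership[u] == membership[v]:
            internal[membership[u]] += 1
    return sum(internal[c] / m - (degree_sum[c] / (2 * m)) ** 2
               for c in degree_sum)

# Two triangles (0-1-2 and 3-4-5) joined by the single edge 2-3.
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
good = modularity(edges, {0: "a", 1: "a", 2: "a", 3: "b", 4: "b", 5: "b"})
poor = modularity(edges, {0: "a", 1: "b", 2: "a", 3: "b", 4: "a", 5: "b"})
```

The partition that follows the two triangles scores higher than the one that cuts across them, which is the signal these algorithms climb when grouping cells in a nearest-neighbor graph.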

For spatial transcriptomics specifically, specialized algorithms have emerged that incorporate spatial information directly into the clustering process. BayesSpace, for instance, uses a Bayesian statistical framework that incorporates spatial neighborhood structure into its prior model, encouraging adjacent spots to belong to the same cluster [10]. SpaGCN employs Graph Convolutional Networks to model spatial dependencies, while STAGATE utilizes a Graph Attention Autoencoder framework to integrate spatial information with gene expression data [10].

Performance Benchmarking Insights

Recent comprehensive benchmarking studies provide critical insights into algorithm performance across different modalities. One extensive evaluation of 28 clustering algorithms across 10 paired single-cell transcriptomic and proteomic datasets revealed that scDCC, scAIDE, and FlowSOM consistently achieved top performance for both transcriptomic and proteomic data [11]. The study employed multiple validation metrics including Adjusted Rand Index (ARI), Normalized Mutual Information (NMI), Clustering Accuracy (CA), and Purity to ensure robust evaluation.

Table 2: Top Performing Clustering Algorithms Across Modalities

| Algorithm | Transcriptomic Performance (Rank) | Proteomic Performance (Rank) | Computational Efficiency | Best Use Case |
|---|---|---|---|---|
| scAIDE | 2nd | 1st | Moderate | Top accuracy across modalities |
| scDCC | 1st | 2nd | Memory efficient | Large datasets with memory constraints |
| FlowSOM | 3rd | 3rd | Robust | Noisy data environments |
| SHARP | High time efficiency | High time efficiency | Time efficient | Rapid analysis of large datasets |
| scDeepCluster | Moderate performance | Moderate performance | Memory efficient | Memory-limited environments |

The benchmarking also highlighted important performance trade-offs. While scAIDE, scDCC, and FlowSOM provided top clustering accuracy, methods like TSCAN, SHARP, and MarkovHC were recommended for users prioritizing time efficiency, and community detection-based methods offered a balance between performance and computational demands [11].

Experimental Framework and Validation

Standardized Evaluation Metrics

Rigorous validation of clustering results requires multiple complementary metrics that assess different aspects of performance:

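Adjusted Rand Index (ARI): Measures the similarity between the predicted clustering and ground truth labels, corrected for chance. A value of 1 indicates perfect agreement, values near 0 indicate agreement no better than random assignment, and negative values indicate worse-than-chance agreement [11].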

Normalized Mutual Information (NMI): Quantifies the mutual information between clustering results and ground truth, normalized to [0, 1] [11].

Clustering Accuracy (CA) and Purity: Direct measures of classification accuracy when ground truth labels are available [11].
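Two of the metrics above are short enough to write out directly. The sketch below implements ARI from the contingency counts and purity as the share of cells in the majority true class of their cluster; the label vectors are made up for illustration, and library implementations should be preferred in practice.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(truth, pred):
    """Chance-corrected pair-counting agreement between two labelings."""
    n = len(truth)
    contingency = Counter(zip(truth, pred))
    sum_cells = sum(comb(c, 2) for c in contingency.values())
    sum_rows = sum(comb(c, 2) for c in Counter(truth).values())
    sum_cols = sum(comb(c, 2) for c in Counter(pred).values())
    expected = sum_rows * sum_cols / comb(n, 2)
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:
        return 1.0  # degenerate case, e.g. both labelings are a single cluster
    return (sum_cells - expected) / (max_index - expected)

def purity(truth, pred):
    """Fraction of cells that belong to the majority true class of their cluster."""
    by_cluster = {}
    for t, p in zip(truth, pred):
        by_cluster.setdefault(p, Counter())[t] += 1
    return sum(max(c.values()) for c in by_cluster.values()) / len(truth)

truth = ["T", "T", "T", "B", "B", "B"]
pred = [0, 0, 1, 1, 1, 1]  # one T cell placed in the B-dominated cluster
ari = adjusted_rand_index(truth, pred)
pur = purity(truth, pred)
```

With one misplaced cell out of six, purity stays fairly high while ARI drops much further, which illustrates why the metrics are reported together rather than individually.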

For methods that output probabilities rather than hard classifications, metrics like LogLoss (cross-entropy loss) evaluate the quality of probability outputs, with lower values indicating better calibration of prediction confidence [12].
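As a brief illustration, LogLoss is the mean negative log-probability assigned to the true class. The sketch below assumes predictions are given as one dictionary of class probabilities per cell; that layout, the function name, and the example values are choices made for this example.

```python
import math

def log_loss(true_labels, probabilities, eps=1e-15):
    """Mean negative log-probability assigned to the true class.

    true_labels: list of class names; probabilities: list of {class: prob}.
    eps clips probabilities away from 0 and 1 so the logarithm stays finite.
    """
    total = 0.0
    for label, probs in zip(true_labels, probabilities):
        p = min(max(probs.get(label, 0.0), eps), 1 - eps)
        total -= math.log(p)
    return total / len(true_labels)

labels = ["T", "B"]
confident = [{"T": 0.9, "B": 0.1}, {"T": 0.2, "B": 0.8}]
uncertain = [{"T": 0.6, "B": 0.4}, {"T": 0.5, "B": 0.5}]
loss_confident = log_loss(labels, confident)
loss_uncertain = log_loss(labels, uncertain)
```

Both prediction sets would yield the same hard labels for the first cell, yet the confident and correct predictions receive the lower loss.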

Integrated Clustering Workflows

Modern clustering analyses typically follow integrated workflows that combine multiple steps:

Diagram 1: Integrated Clustering Workflow

A key consideration in these workflows is the selection of Highly Variable Genes (HVGs), which has been shown to significantly impact clustering performance [11]. By focusing on genes with high cell-to-cell variation, clustering algorithms can concentrate on biologically meaningful signals rather than technical noise.
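A simplified version of HVG selection ranks genes by dispersion (variance divided by mean) and keeps the top few. This is a sketch under that assumption only; widely used implementations additionally account for the dependence of variance on mean expression, which is omitted here. Gene names and values are invented.

```python
from statistics import mean, pvariance

def top_variable_genes(expr, gene_names, n_top):
    """Rank genes by dispersion (variance / mean) and return the top n_top.

    expr: cells x genes matrix as a list of lists.
    """
    dispersions = []
    for j, name in enumerate(gene_names):
        values = [cell[j] for cell in expr]
        mu = mean(values)
        # Genes with no expression carry no information and get dispersion 0.
        disp = pvariance(values) / mu if mu > 0 else 0.0
        dispersions.append((disp, name))
    dispersions.sort(reverse=True)
    return [name for _, name in dispersions[:n_top]]

genes = ["housekeeping", "marker_a", "marker_b", "silent"]
expr = [
    [5, 9, 0, 0],
    [5, 0, 8, 0],
    [6, 10, 0, 0],
    [4, 0, 7, 0],
]
hvgs = top_variable_genes(expr, genes, n_top=2)
```

The uniformly expressed gene and the undetected gene are dropped, leaving the two genes that actually distinguish the cells.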

Multi-Omic Integration Approaches

With the rise of technologies like CITE-seq that simultaneously measure mRNA and surface protein levels in individual cells, integration methods have become essential. Benchmarking studies have evaluated 7 feature integration methods including moETM, sciPENN, and totalVI to fuse paired single-cell transcriptomic and proteomic data, extending single-omics clustering algorithms to multi-omics scenarios [11]. This approach demonstrates how integrated analysis of multiple molecular layers can provide more comprehensive cell type identification.

Advanced Spatial Clustering Techniques

Spatial Algorithm Architectures

Spatial transcriptomics requires specialized clustering approaches that leverage spatial coordinates and, increasingly, histological image features. The continuous optimization of these methods has created powerful tools for deciphering spatial patterns of gene expression:

Graph-Based Methods: STAGATE utilizes a Graph Attention Autoencoder framework to integrate spatial information with gene expression data, learning low-dimensional representations that capture spatial dependencies [10].

Deep Learning Frameworks: DeepST integrates a Variational Graph Autoencoder and a denoising autoencoder to jointly model spatial location, histological features, and gene expression [10].

Contrastive Learning Approaches: GraphST incorporates a graph self-supervised contrastive learning strategy, leveraging both spatial information and gene expression data to learn high-quality latent embeddings [10].

iSCALE Framework for Large Tissues

Recent advances like iSCALE address the critical limitation of small capture areas in conventional spatial transcriptomics platforms. iSCALE reconstructs large-scale, super-resolution gene expression landscapes and automatically annotates cellular-level tissue architecture in samples exceeding conventional platform limits [13].

The iSCALE workflow involves selecting regions from the same tissue block that fit standard ST platform capture areas ("daughter captures"), implementing spatial clustering analysis on this data to guide alignment onto the full tissue "mother image," and then using a feedforward neural network to learn relationships between histological image features and gene expression [13]. This approach enables comprehensive gene expression prediction across entire large tissue sections, including regions without direct gene expression measurements.

In benchmarking evaluations on a large gastric cancer sample, iSCALE significantly outperformed previous methods like iStar and RedeHist in identifying key tissue structures including tumor regions, tumor-infiltrated stroma, and tertiary lymphoid structures [13]. Quantitative evaluation using root mean squared error (RMSE), structural similarity index measure (SSIM), and Pearson correlation confirmed iSCALE's superior performance in gene expression prediction accuracy [13].
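Two of the evaluation metrics named above, RMSE and Pearson correlation, can be written out in a few lines. The sketch compares a hypothetical vector of measured expression values with predicted ones; SSIM is omitted because it operates on image windows and does not reduce to a short formula.

```python
import math

def rmse(observed, predicted):
    """Root mean squared error between two equal-length sequences."""
    squared = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared) / len(squared))

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

# Hypothetical measured vs. predicted expression for one gene at four spots.
observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.5, 2.5, 3.5, 4.5]
error = rmse(observed, predicted)
corr = pearson(observed, predicted)
```

The example shows why the metrics complement each other: a constant offset leaves the correlation at its maximum while still producing a nonzero RMSE.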

Implementation Tools and Research Reagents

Essential Computational Tools

Table 3: Key Research Reagent Solutions for Transcriptomic Clustering

| Tool/Platform | Primary Function | Application Context |
|---|---|---|
| Clustergrammer | Interactive heatmap visualization | Visualization of clustering results with zooming, panning, filtering [14] |
| Seurat | Comprehensive scRNA-seq analysis | End-to-end framework integrating dimensionality reduction, clustering, and visualization [10] |
| Scanpy | Single-cell analysis in Python | Preprocessing pipeline for spatial transcriptomics data [10] |
| OmniClust | Multi-modal clustering toolkit | Unified framework for both scRNA-seq and spatial transcriptomics data [10] |
| BayesSpace | Enhanced spatial clustering | Bayesian approach incorporating spatial neighborhood structure [10] |

Effective interpretation of clustering results requires specialized visualization tools:

Clustergrammer: A web-based tool that generates interactive heatmap visualizations with features including zooming, panning, filtering, reordering, and performing enrichment analysis directly from the interface [14].

D3.js Hierarchy Cluster: Produces dendrograms (node-link diagrams that place leaf nodes at the same depth) particularly useful for visualizing hierarchical clustering results [15].

Fisheye Distortion Techniques: Interactive visualization approaches that help explore dense clusters by providing localized magnification of overlapping points [16].
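The localized magnification behind fisheye views can be sketched in one dimension with the classic graphical fisheye transform, g(t) = (d + 1)t / (dt + 1), where t is the normalized distance from the focus and d is the distortion factor. The function below is an illustrative helper, not the API of any particular visualization library; applying it to x and y separately gives a two-dimensional view.

```python
def fisheye(x, focus, distortion=3.0, lo=0.0, hi=1.0):
    """One-dimensional graphical fisheye: spread points near `focus` apart
    and compress points near the boundaries `lo` and `hi`."""
    if x == focus:
        return x
    boundary = hi if x > focus else lo
    span = boundary - focus
    if span == 0:
        return x
    t = (x - focus) / span  # normalized distance from the focus, in [0, 1]
    g = (distortion + 1) * t / (distortion * t + 1)
    return focus + g * span

# Points crowded around 0.5 are pushed apart; the boundaries stay fixed.
crowded = [0.45, 0.5, 0.55, 0.6]
spread = [fisheye(x, focus=0.5) for x in crowded]
```

Setting `distortion` to 0 leaves coordinates unchanged, so the parameter can be tied to an interactive control.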

Emerging Methodological Trends

The field of transcriptomic clustering continues to evolve rapidly, with several promising directions emerging:

Multi-Modal Integration: Tools like OmniClust represent a movement toward unified frameworks that can handle both single-cell and spatial transcriptomics data within the same computational environment [10]. These approaches use advanced deep learning architectures like masked autoencoders and contrastive learning to evaluate generalization capability and generate optimal latent representations for clustering.

Large Tissue Scalability: Methods like iSCALE address the critical limitation of analyzing large tissues by leveraging histology images to predict gene expression beyond the physical constraints of current spatial transcriptomics platforms [13].

Benchmarking Standards: Comprehensive evaluations of clustering algorithms across multiple modalities provide much-needed guidance for method selection and highlight complementary strengths of different approaches [11].

Clustering in high-dimensional transcriptomic space remains a fundamental challenge in cell type identification research, but rapid methodological advances are increasing both the accuracy and biological interpretability of results. The integration of spatial information, development of multi-modal approaches, and creation of scalable frameworks for large tissues represent significant progress toward overcoming the inherent limitations of transcriptomic data.

As clustering methods continue to mature, their role in drug development and clinical applications will expand, potentially enabling more precise cell type-specific targeting and personalized therapeutic approaches. The ongoing benchmarking and validation of these methods ensures that the field moves toward increasingly robust and biologically meaningful clustering solutions that can unlock the full potential of single-cell and spatial technologies.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the investigation of cellular heterogeneity at an unprecedented resolution. A fundamental step in scRNA-seq analysis is cell type identification, predominantly achieved through clustering algorithms. The performance of these clustering methods is intrinsically linked to the inherent characteristics of the data itself. This technical guide examines three core data properties—sparsity, noise, and technical artifacts—that critically influence clustering outcomes. We explore the mathematical basis of these challenges, evaluate their impact on cell type separation, and present robust computational strategies to mitigate their effects. By framing these issues within the context of a broader thesis on the role of clustering in cell type identification, this review provides researchers with a comprehensive framework for optimizing their analytical workflows, ensuring more accurate and biologically meaningful cell type discovery in diverse research and drug development applications.

In single-cell RNA sequencing (scRNA-seq) studies, identifying cell types is most frequently accomplished by applying unsupervised clustering algorithms to transcriptome data [17] [18]. This process structures cells into groups based on gene expression similarity, enabling the inference of cellular identity [19]. However, the data fed into these clustering algorithms are not a perfect reflection of biology. They are technical measurements burdened with specific properties that can obscure true biological signals and complicate the distinction between cell types.

The performance of clustering methods is deeply entwined with the nature of the input data. Characteristics such as sparsity, the abundance of zero counts; noise, the combination of biological and technical variability; and technical artifacts, systematic biases introduced during experimentation, collectively pose significant challenges [20]. These factors can prevent clustering algorithms from identifying accurate partitions, leading to misgrouping of distinct cell types or false separation of homogeneous populations. Consequently, understanding and addressing these data characteristics is not merely a preprocessing concern but a foundational aspect of reliable cell type annotation. This guide details these key characteristics, their impacts on clustering for cell type identification, and the experimental and computational protocols designed to overcome them.

Sparsity in Single-Cell Data

Sparsity refers to the high proportion of zero values in a single-cell count matrix. In a typical scRNA-seq dataset, a majority of genes are not detected in a majority of cells. While some zeros represent true biological absence of transcription ("biological zeros"), a significant fraction are "technical zeros" stemming from the limitations of sequencing technology, such as inefficient mRNA capture or low sequencing depth [20].
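To make the definition concrete, the sketch below computes the overall zero fraction of a count matrix and the per-gene detection rate. The function names and the toy matrix are illustrative assumptions, not part of any cited tool, and the calculation cannot by itself distinguish biological zeros from technical zeros.

```python
def zero_fraction(counts):
    """Fraction of zero entries in a cells x genes count matrix (list of rows)."""
    total = sum(len(row) for row in counts)
    zeros = sum(1 for row in counts for value in row if value == 0)
    return zeros / total


def detection_rate_per_gene(counts):
    """Fraction of cells in which each gene has a nonzero count."""
    n_cells = len(counts)
    n_genes = len(counts[0])
    return [sum(1 for row in counts if row[g] > 0) / n_cells for g in range(n_genes)]


# Toy matrix: 4 cells (rows) x 5 genes (columns); values are illustrative only.
toy_counts = [
    [0, 3, 0, 0, 1],
    [0, 0, 0, 2, 0],
    [5, 0, 0, 0, 0],
    [0, 1, 0, 0, 0],
]

sparsity = zero_fraction(toy_counts)        # 15 of 20 entries are zero
rates = detection_rate_per_gene(toy_counts)
print(f"zero fraction: {sparsity:.2f}")
print("per-gene detection rate:", rates)
```

Genes with very low detection rates are the ones most likely to look sporadically expressed, which is why they are often filtered or down-weighted before clustering.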

Impact on Clustering and Cell Type Identification

The sparse nature of scRNA-seq data directly challenges clustering algorithms. Sparsity can weaken the apparent signal distinguishing cell types, as informative marker genes may appear to be only sporadically expressed. This can lead to several problems:

- Reduced Cluster Resolution: Dimensionality reduction techniques, such as PCA, which often precede clustering, may struggle to capture the true structure of the data. This can result in an inability to separate closely related cell subtypes [19].

- Misinterpretation of Cell Types: Over-reliance on genes with high technical zero rates can lead to clusters defined by technical artifacts rather than biology, potentially creating artificial cell subtypes or obscuring rare populations.

- Algorithmic Bias: Clustering algorithms that rely on distance metrics (e.g., Euclidean distance in KNN graphs) can be misled by the high dimensionality and sparse structure, making distances between cells less informative [17].

Mitigation Strategies and Experimental Protocols

Several methodologies have been developed to address data sparsity:

- Feature Selection: Instead of using all genes, clustering is performed on a subset of highly informative genes. This reduces the dimensionality and amplifies the biological signal. Traditional methods select Highly Variable Genes (HVGs) based on variance or deviance [21]. However, a more advanced approach is implemented in Festem, which directly selects cluster-informative marker genes by testing whether a gene's expression follows a homogeneous (non-marker) or heterogeneous (marker) mixture distribution, thereby improving clustering accuracy and marker gene detection with high precision [21].

- Imputation and Modeling: Computational methods attempt to distinguish technical zeros from biological zeros and impute missing expression values. These models use the underlying statistical distribution of the data (e.g., negative binomial) to smooth the count matrix and recover weak signals before clustering is performed [21].
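The feature selection idea above can be sketched with a deliberately simple variance ranking. This is a stand-in for illustration only: it is neither the HVGvst procedure nor Festem's mixture-model test, and the function name and simulated data are assumptions.

```python
import numpy as np


def select_top_variable_genes(log_expr, n_top):
    """Return the column indices of the n_top genes with the largest variance."""
    variances = log_expr.var(axis=0)
    order = np.argsort(variances)[::-1]  # most variable genes first
    return np.sort(order[:n_top])


# Simulated log-expression: 200 cells x 50 genes of low-amplitude noise.
rng = np.random.default_rng(0)
expr = rng.normal(0.0, 0.1, size=(200, 50))
# Genes 0-4 are shifted in the first 100 cells, mimicking marker genes
# that separate two cell groups.
expr[:100, :5] += 2.0

selected = select_top_variable_genes(expr, n_top=5)
print("selected gene indices:", selected.tolist())
```

Plain variance favors highly expressed genes in real count data, which is one reason production methods stabilize variance against the mean or, as Festem does, test for heterogeneity directly.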

Table 1: Methods to Mitigate Sparsity in scRNA-seq Clustering

| Method Type | Example | Brief Principle | Effect on Clustering |
|---|---|---|---|
| Feature Selection | HVG (e.g., HVGvst) | Selects genes with high variance across cells. | Reduces noise; may miss lowly-expressed informative genes. |
| Feature Selection | Festem | Directly selects genes with heterogeneous distributions (mixture models). | Improves clustering accuracy; directly targets cluster-informative genes [21]. |
| Statistical Modeling | Negative Binomial Models | Models count data to account for over-dispersion and technical zeros. | Provides a more accurate representation of gene expression for distance calculations. |

The following diagram illustrates the conceptual workflow for distinguishing biological from technical zeros, a key step in addressing sparsity.

Diagram 1: A workflow for handling sparsity in scRNA-seq data, involving modeling to classify zeros and imputation.

Noise and Technical Artifacts

Noise in scRNA-seq data arises from multiple sources, including both biological variability (e.g., stochastic transcription) and technical variability (e.g., amplification bias, library preparation). Technical artifacts are systematic non-biological signals, such as batch effects from processing samples on different days or with different reagents [20]. A critical, often overlooked concept is that signals traditionally discarded as "noise," like eye movements in EEG data, can sometimes constitute a significant portion of the true biological signal, a finding that has parallels in single-cell analysis [22].

Impact on Clustering and Cell Type Identification

Noise and artifacts can severely degrade clustering performance:

- Spurious Heterogeneity: Technical noise can create the false appearance of distinct cell subpopulations, leading to over-clustering and the identification of cell types that are not biologically real [20].

- Masked True Heterogeneity: Conversely, strong batch effects can cause two biologically distinct cell types to appear similar if they are processed in the same batch, leading to under-clustering and the failure to identify genuine cell types.

- Reduced Robustness: Clustering results become less stable and reproducible, as the patterns found by the algorithm are heavily influenced by technical confounders rather than consistent biological signals.

Mitigation Strategies and Experimental Protocols

A robust clustering workflow must incorporate steps to account for noise and artifacts.

- Exploratory Data Analysis: Techniques like unconstrained ordination (e.g., MDS, NMDS) can visualize the data to identify outlier samples and the influence of confounding factors, such as batch effects [20].

- Batch Effect Correction: Tools like ComBat or removeBatchEffect can model and remove unwanted variation associated with known technical batches before clustering is performed [20].

- Algorithm Selection: Some clustering algorithms demonstrate better inherent robustness to noise. For instance, a 2025 benchmarking study highlighted that FlowSOM exhibited excellent robustness, while community detection-based methods like Leiden offered a good balance of performance and efficiency [23]. The Festem method also shows superior performance in high-noise scenarios by directly selecting clustering-informative genes, unlike methods that rely on surrogate metrics like variance [21].
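As a rough illustration of what batch correction does, the sketch below removes per-batch mean shifts gene by gene. It is a simplified stand-in, not ComBat (which uses empirical Bayes shrinkage) and not removeBatchEffect (which fits a linear model). The function name is hypothetical, and the approach assumes that cell type composition is similar across batches; otherwise it would also remove biological signal.

```python
import numpy as np


def center_batches(expr, batch_labels):
    """Shift each batch so its per-gene mean equals the overall per-gene mean."""
    expr = np.asarray(expr, dtype=float)
    batch_labels = np.asarray(batch_labels)
    corrected = expr.copy()
    overall_mean = expr.mean(axis=0)
    for batch in np.unique(batch_labels):
        mask = batch_labels == batch
        corrected[mask] += overall_mean - expr[mask].mean(axis=0)
    return corrected


# Simulated data: 60 cells x 4 genes, with batch "b" shifted by +3 on every gene.
rng = np.random.default_rng(1)
shifted = rng.normal(size=(60, 4))
batches = np.array(["a"] * 30 + ["b"] * 30)
shifted[30:] += 3.0

fixed = center_batches(shifted, batches)
print("batch means before:", shifted[:30].mean(axis=0).round(2), shifted[30:].mean(axis=0).round(2))
print("batch means after: ", fixed[:30].mean(axis=0).round(2), fixed[30:].mean(axis=0).round(2))
```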

Table 2: Characterization of Noise and Artifacts in Single-Cell Data

| Source | Type | Impact on Clustering | Common Mitigation Strategy |
|---|---|---|---|
| Sequencing Depth | Technical Noise | Varies expression levels between cells, affecting distance metrics. | Data normalization (e.g., log1pPF, scran). |
| Batch Effects | Technical Artifact | Causes cells to cluster by batch instead of cell type. | Batch correction algorithms (e.g., ComBat, BBKNN). |
| Amplification Bias | Technical Noise | Introduces variance that can be mistaken for biological heterogeneity. | UMIs (Unique Molecular Identifiers), imputation. |
| Stochastic Transcription | Biological Noise | Obscures the true expression signal of a cell type. | Feature selection, clustering on ensemble signals. |

The protocol below details a standard workflow for mitigating noise and artifacts prior to clustering.

Protocol 1: Preprocessing for Noise and Artifact Reduction

- Normalization: Normalize the raw count matrix to account for differences in sequencing depth per cell. Common methods include scran or sctransform [19].

- Feature Selection: Select a subset of genes (e.g., 500-5000) for downstream analysis to reduce the dimensionality and noise. This can be done using HVG methods or Festem [21] [19].

- Integration/Batch Correction: If multiple batches are present, use an integration algorithm such as Harmony, Scanorama, or BBKNN to align the datasets and remove batch-specific effects.

- Dimensionality Reduction: Perform PCA on the normalized, feature-selected, and integrated data to create a lower-dimensional representation that captures the major axes of biological variation [19].

- Clustering: Apply the clustering algorithm (e.g., Leiden) on the top principal components (e.g., top 30 PCs) to identify cell groups [19].
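Two steps of Protocol 1 can be sketched in a few lines: depth normalization at the start, and the k-nearest neighbor graph that graph-based methods such as Leiden take as input. The normalization here is plain library-size scaling followed by log1p, a simpler scheme than scran or sctransform. The function names and the `target_sum` default are assumptions, and Leiden itself is not implemented.

```python
import numpy as np


def normalize_log1p(counts, target_sum=1e4):
    """Scale each cell to the same total count, then apply log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    depth = counts.sum(axis=1, keepdims=True)
    depth[depth == 0] = 1.0  # avoid dividing by zero for empty cells
    return np.log1p(counts / depth * target_sum)


def knn_indices(embedding, k):
    """Indices of the k nearest neighbors (Euclidean) of each row, excluding itself."""
    sq_norms = (embedding ** 2).sum(axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * embedding @ embedding.T
    np.fill_diagonal(sq_dists, np.inf)  # a cell is not its own neighbor
    return np.argsort(sq_dists, axis=1)[:, :k]


# Cells 0 and 1 have identical composition but a 10-fold difference in depth.
counts = np.array([[1, 3, 0], [10, 30, 0], [0, 2, 8]])
norm = normalize_log1p(counts)

# Four points on a line forming two obvious pairs.
points = np.array([[0.0], [1.0], [10.0], [11.0]])
neighbors = knn_indices(points, k=1)
print("nearest neighbor of each point:", neighbors[:, 0].tolist())
```

The brute-force distance matrix scales quadratically with the number of cells, so this form is only suitable for small examples; large datasets generally rely on approximate nearest-neighbor search.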

The Interplay of Characteristics and Clustering Algorithm Selection

Sparsity, noise, and artifacts do not act in isolation; they interact in complex ways to shape the data landscape that clustering algorithms must navigate. The compositional nature of microbiome data, and by extension single-cell data, further complicates this picture, as the value of one feature depends on the values of all others [20]. Because each cell yields a limited number of sequenced molecules, an increase in the measured share of one gene is necessarily accompanied by a decrease in the relative abundance of the others, which violates the independence assumptions of many standard statistical models.
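A small worked example may help with the compositional point. In the sketch below, only the first gene changes in absolute terms, yet the relative abundance of every other gene falls. The numbers and the function name are illustrative assumptions.

```python
def to_proportions(counts):
    """Convert absolute counts to relative abundances that sum to 1."""
    total = sum(counts)
    return [c / total for c in counts]


# Absolute molecule counts for three genes in one hypothetical cell.
before = to_proportions([100, 100, 200])
# Gene 0 triples; genes 1 and 2 are unchanged in absolute terms.
after = to_proportions([300, 100, 200])

for gene, (b, a) in enumerate(zip(before, after)):
    print(f"gene {gene}: {b:.3f} -> {a:.3f}")
```

Genes 1 and 2 appear to decrease even though their absolute expression did not change, which is the spurious negative dependence that compositional data introduces.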

The choice of clustering algorithm is critical for navigating these data challenges. A comprehensive 2025 benchmark of 28 clustering algorithms on both transcriptomic and proteomic data provides critical insights [23]. The study found that:

- Top Performers: For top performance across transcriptomic and proteomic data, scAIDE, scDCC, and FlowSOM are recommended. FlowSOM is particularly noted for its robustness [23].

- Efficiency-Oriented Choices: For memory efficiency, scDCC and scDeepCluster are recommended. For time efficiency, TSCAN, SHARP, and MarkovHC are top choices. Community detection-based methods (e.g., Leiden, Louvain) offer a good balance [23].

- Impact of Granularity: The performance of algorithms can be influenced by the level of cell type granularity, emphasizing that no single method is universally best for all scenarios [23].

The following diagram maps the relationships between data challenges, mitigation steps, and clustering outcomes.

Diagram 2: The interplay between data challenges, mitigation strategies, and algorithm selection leading to accurate clustering.

The Scientist's Toolkit: Research Reagent Solutions

This section details key computational tools and reagents essential for conducting robust single-cell clustering analysis in the face of these data challenges.

Table 3: Essential Toolkit for Managing Data Characteristics in Single-Cell Analysis

| Tool/Reagent | Type | Primary Function | Relevance to Data Challenges |
|---|---|---|---|
| Festem | Computational Algorithm | Direct selection of cluster-informative marker genes. | Addresses sparsity and noise by focusing on truly heterogeneous genes [21]. |
| Scanpy | Software Suite | A comprehensive Python toolkit for single-cell analysis. | Provides integrated workflows for normalization, HVG selection, PCA, and Leiden clustering [19]. |
| Seurat | Software Suite | A comprehensive R toolkit for single-cell genomics. | Offers functions for data normalization, integration, and graph-based clustering. |
| Highly Variable Genes (HVGs) | Computational Method | Gene selection based on variance or deviance. | Reduces dimensionality and mitigates noise, though may miss informative genes [21]. |
| ComBat | Computational Algorithm | Empirical Bayes method for batch effect correction. | Removes technical artifacts to prevent batch-driven clustering [20]. |
| Leiden Algorithm | Clustering Algorithm | Community detection on KNN graphs. | A fast and well-connected graph-based method, robust for large datasets [19] [23]. |
| FlowSOM | Clustering Algorithm | Self-Organizing Map-based clustering. | Shows high robustness and performance across omics data types [23]. |
| Unique Molecular Identifiers (UMIs) | Wet-lab Reagent | Tags individual mRNA molecules during library prep. | Reduces technical noise from amplification bias, mitigating spurious heterogeneity. |

The accurate identification of cell types via clustering is a cornerstone of single-cell biology, but it is a process highly sensitive to the underlying properties of the data. Sparsity, noise, and technical artifacts are not mere nuisances; they are fundamental characteristics that must be acknowledged and addressed throughout the analytical pipeline. The broader thesis of clustering's role in cell type identification must therefore encompass a deep understanding of these data challenges.

Successful navigation of this landscape requires a multi-faceted approach: rigorous preprocessing to mitigate technical confounders, careful feature selection to enhance biological signal, and the strategic choice of clustering algorithms proven to be robust and effective for the specific data modality and biological question at hand. As benchmarking studies continue to illuminate the strengths and weaknesses of various methods, and as new tools like Festem offer more direct ways to select informative features, the field moves closer to a future where computational cell type identification is both more accurate and more reliable. For researchers and drug development professionals, adhering to these principles is essential for generating biologically meaningful and translatable insights from single-cell experiments.

Cell type identification is a fundamental goal in single-cell RNA sequencing (scRNA-seq) analysis, and clustering serves as the critical first step in this discovery process. The pipeline transforms high-dimensional gene expression data into biologically meaningful cell type labels through a multi-stage process. This technical guide details the core components of this pipeline, framed within the broader thesis that clustering provides the essential structural foundation upon which biological meaning is built. The process begins with clustering to partition cells into putative groups, followed by marker gene detection, and culminates in annotation through various methods, including the emerging approach of using large language models (LLMs). This guide provides researchers, scientists, and drug development professionals with both the theoretical framework and practical methodologies for implementing a robust cell type annotation workflow.

The Computational Foundation: Clustering Algorithms

Clustering algorithms group cells based on similarity in their gene expression profiles, creating the initial putative cell types that require biological interpretation. The performance of this clustering step directly impacts all downstream annotation efforts.

Community Detection-Based Clustering

The Leiden algorithm has become a preferred method for scRNA-seq data clustering and has largely superseded the Louvain algorithm [19]. Leiden operates on a k-nearest neighbor (KNN) graph constructed from a lower-dimensional representation (typically principal components) of the gene expression data. The algorithm optimizes community structure by moving nodes between communities to maximize a quality function, followed by refinement and aggregation steps that are repeated until the partition stabilizes [19]. A key parameter is the resolution, which controls the coarseness of clustering: higher values yield more clusters, enabling identification of finer cell states [19].
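The source does not state which quality function is optimized. One widely used choice is modularity with a resolution parameter, written out below as an illustration rather than as the definition used by any particular implementation. Here $A_{ij}$ is the edge weight between cells $i$ and $j$ in the KNN graph, $k_i$ is the weighted degree of cell $i$, $m$ is the total edge weight, $c_i$ is the community of cell $i$, and $\gamma$ is the resolution.

```latex
Q(\gamma) = \frac{1}{2m} \sum_{i,j} \left( A_{ij} - \gamma \, \frac{k_i k_j}{2m} \right) \delta(c_i, c_j)
```

Raising $\gamma$ increases the penalty term, so large communities are rewarded only if they are more densely connected than expected. This is why higher resolution values tend to split the graph into more, smaller clusters.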

Benchmarking Clustering Performance

Selecting appropriate clustering methods requires understanding their performance characteristics across different data types. A comprehensive 2025 benchmark study evaluated 28 clustering algorithms on paired transcriptomic and proteomic data, providing critical insights for method selection [11].

Table 1: Top-Performing Clustering Algorithms Across Omics Modalities (2025 Benchmark)

| Algorithm | Transcriptomic Performance (Rank) | Proteomic Performance (Rank) | Algorithm Category | Key Strengths |
|---|---|---|---|---|
| scAIDE | 2nd | 1st | Deep Learning | Top overall performance for proteomic data |
| scDCC | 1st | 2nd | Deep Learning | Excellent for transcriptomic data; memory efficient |
| FlowSOM | 3rd | 3rd | Classical Machine Learning | Robust performance across modalities |
| Leiden | Not top-ranked individually | Not top-ranked individually | Community Detection | Balance of performance and efficiency |

The benchmark evaluated methods using multiple metrics including Adjusted Rand Index (ARI), Normalized Mutual Information (NMI), Clustering Accuracy (CA), and Purity [11]. For users prioritizing memory efficiency, scDCC and scDeepCluster are recommended, while TSCAN, SHARP, and MarkovHC offer superior time efficiency [11].
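Two of the metrics named above, ARI and Purity, can be computed from label vectors alone. The following is a minimal standard-library sketch; the function names and toy labels are assumptions, and this is not the benchmark's own evaluation code.

```python
from collections import Counter
from math import comb


def purity(true_labels, cluster_labels):
    """Fraction of cells that belong to the majority true label of their cluster."""
    pairs = Counter(zip(cluster_labels, true_labels))
    best = {}
    for (cluster, _), count in pairs.items():
        best[cluster] = max(best.get(cluster, 0), count)
    return sum(best.values()) / len(true_labels)


def adjusted_rand_index(true_labels, cluster_labels):
    """Pair-counting agreement between two partitions, corrected for chance."""
    n = len(true_labels)
    contingency = Counter(zip(true_labels, cluster_labels))
    sum_cells = sum(comb(c, 2) for c in contingency.values())
    sum_true = sum(comb(c, 2) for c in Counter(true_labels).values())
    sum_pred = sum(comb(c, 2) for c in Counter(cluster_labels).values())
    expected = sum_true * sum_pred / comb(n, 2)
    max_index = (sum_true + sum_pred) / 2
    if max_index == expected:  # degenerate case, e.g. a single cluster in both
        return 1.0
    return (sum_cells - expected) / (max_index - expected)


truth = ["T", "T", "T", "B", "B", "B"]
perfect = [0, 0, 0, 1, 1, 1]
mixed = [0, 0, 1, 1, 1, 1]  # one T cell is assigned to the B cluster

ari_perfect = adjusted_rand_index(truth, perfect)
ari_mixed = adjusted_rand_index(truth, mixed)
purity_mixed = purity(truth, mixed)
print(f"ARI perfect={ari_perfect:.3f}, ARI mixed={ari_mixed:.3f}, purity mixed={purity_mixed:.3f}")
```

Purity can be inflated simply by producing many small clusters, whereas ARI penalizes both over- and under-clustering, which is one reason benchmarks report several metrics together.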

From Clusters to Biological Meaning: The Annotation Pipeline

Once cells are clustered, the annotation process translates computational groupings into biologically meaningful cell type identities through a multi-step process.

Marker Gene Detection

Following clustering, differentially expressed genes (marker genes) are identified for each cluster. These genes, significantly upregulated in specific clusters compared to all others, provide the transcriptional signature used for biological interpretation. Common methods include Wilcoxon rank-sum tests, t-tests, and logistic regression, which generate ranked lists of marker genes for each cluster.

Annotation Approaches

Manual Annotation with Reference Databases

Researchers traditionally compare detected marker genes against established biological knowledge bases such as CellMarker, PanglaoDB, and the Human Protein Atlas. This manual process requires significant expertise and is prone to observer bias, though it remains a common practice in the field.

Automated Annotation with Large Language Models

Recently, LLMs have emerged as powerful tools for automating cell type annotation by leveraging their encoded biological knowledge. Two prominent frameworks have been developed for this purpose:

AnnDictionary is an open-source Python package built on LangChain and AnnData that supports multiple LLM providers and can be configured with a single line of code [24]. It includes multithreading optimizations for atlas-scale data and provides functions for cell type annotation, gene set annotation, and automated label management [24]. Its benchmarking on Tabula Sapiens v2 indicated that LLM annotation of most major cell types achieves 80-90% accuracy or higher, with performance varying by model size [24].

mLLMCelltype implements a multi-LLM consensus framework that integrates predictions from multiple models (including GPT, Claude, Gemini, and others) to improve accuracy and reduce individual model biases [25]. The approach is reported to achieve 95% annotation accuracy through consensus algorithms, and it provides uncertainty quantification metrics while reducing API costs by 70-80% [25].

Table 2: Benchmarking LLM Performance on Cell Type Annotation

| Framework / Model | Reported Accuracy | Key Innovation | Advantages | Limitations |
|---|---|---|---|---|
| AnnDictionary | 80-90% for major cell types [24] | Provider-agnostic architecture | Supports all major LLM providers; optimized for large data | Single-model approach potentially susceptible to model-specific biases |
| mLLMCelltype | 95% through consensus [25] | Multi-LLM consensus framework | Reduced bias; uncertainty quantification; cost efficiency | Increased complexity of managing multiple API connections |
| Claude 3.5 Sonnet | >80% functional annotation match [24] | Specialized for functional annotation | Excels at gene set functional annotation | A single model, not a comprehensive framework |

Experimental Protocol for Benchmarking Annotation Methods

To ensure reproducible evaluation of annotation methods, follow this standardized protocol:

Data Pre-processing Pipeline:

- Normalize raw counts using scran or sctransform

- Identify highly variable genes (2000-5000 genes)

- Scale expression values and regress out technical confounders

- Perform principal component analysis (PCA) using top variable genes

- Construct k-nearest neighbor graph (k=5-100 depending on dataset size)

- Apply Leiden clustering across multiple resolution parameters

- Compute differentially expressed genes for each cluster

Annotation Benchmarking Methodology:

- Apply LLM annotation to cluster marker genes using standardized prompts

- Compare automated annotations with manual expert annotations

- Calculate agreement metrics: direct string matching, Cohen's kappa (κ), and LLM-rated matches (perfect/partial/not-matching)

- Assess cross-LLM agreement using unified label categories

- Perform replicate analyses to ensure robustness [24]
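Of the agreement metrics listed above, Cohen's kappa is the one that corrects for agreement expected by chance. A minimal sketch follows, assuming both annotation sets have already been mapped to a unified label vocabulary; the function name and example labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two label assignments of the same items."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    # Probability that two independent raters with these label frequencies agree.
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:  # both raters used one identical label for everything
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical per-cluster annotations from an expert and from an LLM.
manual = ["T cell", "T cell", "B cell", "B cell", "NK cell", "NK cell"]
automated = ["T cell", "T cell", "B cell", "T cell", "NK cell", "NK cell"]

kappa = cohens_kappa(manual, automated)
print(f"Cohen's kappa: {kappa:.2f}")
```

Exact string comparison treats "T cell" and "T lymphocyte" as disagreement, which is why the protocol pairs kappa with LLM-rated matching and unified label categories.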

Validation Framework:

- Compare cluster annotations with known cell type markers from literature

- Validate annotations using protein expression data (CITE-seq) where available

- Assess biological coherence of annotated cell types through pathway enrichment

- Evaluate annotation stability across different clustering resolutions

Visualization of the Annotation Workflow

The following diagram illustrates the complete cell type annotation pipeline from raw data to biological interpretation:

Workflow of the cell type annotation pipeline showing key computational and biological validation steps.

Table 3: Key Research Reagent Solutions for scRNA-seq Cell Type Annotation

| Resource Category | Specific Tools/Platforms | Primary Function | Application Context |
|---|---|---|---|
| Clustering Algorithms | Leiden [19], scDCC [11], scAIDE [11], FlowSOM [11] | Partition cells into transcriptionally similar groups | Initial discovery of putative cell populations; Leiden is preferred for general use |
| LLM Annotation Frameworks | AnnDictionary [24], mLLMCelltype [25] | Automated cell type annotation using biological knowledge encoded in LLMs | Rapid, consistent annotation of cluster marker genes; mLLMCelltype provides higher accuracy through consensus |
| Reference Databases | CellMarker, PanglaoDB, Human Protein Atlas | Curated knowledge bases of cell type-specific markers | Manual verification and biological grounding of computational predictions |
| Benchmarking Platforms | scCCESS [26], SPDB [11] | Evaluate clustering performance and method robustness | Method selection and validation of analysis pipelines |
| Multi-omics Integration | moETM, sciPENN, totalVI [11] | Integrate transcriptomic and proteomic data for validation | Confirm annotations using protein expression evidence from CITE-seq |

The cell type annotation pipeline represents a critical bridge between computational clustering and biological interpretation in single-cell genomics. This guide has detailed the essential components of this process, from foundational clustering algorithms through emerging LLM-based annotation methods. The integration of multiple LLMs through consensus frameworks like mLLMCelltype is a particularly promising direction, with a reported accuracy of 95% while mitigating individual model biases. As clustering methodologies continue to evolve alongside annotation technologies, the pipeline from clusters to biological meaning will become increasingly automated, reproducible, and accurate, ultimately accelerating discovery in basic research and drug development. The benchmarking protocols and experimental frameworks presented here provide researchers with standardized approaches for validating and comparing methods within this rapidly advancing field.

In single-cell RNA sequencing (scRNA-seq) research, the fundamental task of cell type identification relies heavily on computational clustering. This process groups cells based on their gene expression profiles, forming the basis for discovering novel cell types and states, which is critical for understanding developmental biology and disease mechanisms [27] [28]. However, this analytical foundation faces two interconnected limitations: the Curse of Dimensionality and Computational Complexity. The Curse of Dimensionality refers to a gap between theory and practice: in theory, higher-dimensional data contains more information, but in practice the additional dimensions often add noise and redundancy, providing diminishing returns for downstream analysis [29]. Concurrently, Computational Complexity challenges emerge as the volume of data grows exponentially, making even algorithms with polynomial time complexity impractical in real applications [30]. This technical guide examines these core limitations within the context of cell type identification research, providing researchers with methodologies to diagnose, understand, and mitigate these challenges in their experimental workflows.

The Curse of Dimensionality in Single-Cell Data Analysis

Theoretical Foundation and Impact on Distance Metrics

The Curse of Dimensionality, a term first coined by R. Bellman, manifests particularly severely in scRNA-seq data where each of the thousands of genes represents a separate dimension [29] [31]. In this high-dimensional expression space, each cell's expression profile defines its location, creating computational challenges for distance-based clustering algorithms.

The core problem emerges from the behavior of distance metrics in high-dimensional spaces. As dimensionality increases, the Euclidean distances that underpin algorithms like k-means become increasingly similar for every pair of examples, so that the nearest and farthest neighbors of a cell end up almost equally far away [32]. This occurs because the volume of the space grows exponentially with each additional dimension, causing data points to become increasingly sparse and distances between points to become more similar [33].
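This behavior is often summarized through the relative contrast between the farthest and nearest neighbor of a query point. As a hedged formal statement, and only under fairly general conditions (for example, independent and identically distributed coordinates), the contrast vanishes in probability as the number of dimensions $p$ grows. Here $D_{\max}(p)$ and $D_{\min}(p)$ are the largest and smallest distances from the query point to the other points in $p$ dimensions.

```latex
\frac{D_{\max}(p) - D_{\min}(p)}{D_{\min}(p)} \;\xrightarrow{\;P\;}\; 0 \qquad \text{as } p \to \infty
```

Real expression data are strongly correlated across genes, so the effective dimensionality is much lower than the gene count and the effect is milder than this idealized limit suggests. This is part of why dimensionality reduction before clustering works well in practice.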

Table 1: Effects of High Dimensionality on scRNA-seq Data Analysis

| Aspect | Low-Dimensional Space | High-Dimensional Space | Impact on Cell Clustering |
|---|---|---|---|
| Distance Distribution | Wide variation in pairwise distances | Distances converge to constant value | Reduced ability to distinguish cell populations |
| Data Sparsity | Dense data distribution | Sparse distribution with many empty regions | Difficulty identifying dense clusters of similar cells |
| Noise Accumulation | Limited noise effects | Noise dominates in many dimensions | Biological signal obscured by technical variation |
| Neighborhood Structure | Meaningful local neighborhoods | Most points become equidistant | Compromised cell similarity assessments |

Diagnosing Dimensionality Problems in Clustering Algorithms

Researchers can identify when their clustering analysis suffers from dimensionality problems through several diagnostic approaches:

- Distance Metric Examination: Calculate all pairwise distances between cells and plot their distribution. If values are highly concentrated around a constant, dimensionality reduction is needed [33].

- Principal Component Analysis: Evaluate the variance explained by successive principal components. If variance decreases slowly across many components, the effective dimensionality is high [31].

- Cluster Stability Assessment: Perform subsampling of the dataset and compare cluster assignments across iterations. High variability indicates sensitivity to dimensionality [33].
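The first diagnostic above can be sketched as a single summary number: the coefficient of variation of all pairwise distances. Values close to zero indicate that distances have concentrated. The function name is an assumption, and the simulated Gaussian data are a worst case with no structure at all.

```python
import numpy as np


def pairwise_distance_spread(data):
    """Coefficient of variation (std / mean) of all pairwise Euclidean distances."""
    sq_norms = (data ** 2).sum(axis=1)
    sq_dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * data @ data.T
    upper = np.triu_indices(data.shape[0], k=1)  # each pair once, no self-pairs
    dists = np.sqrt(np.clip(sq_dists[upper], 0.0, None))
    return dists.std() / dists.mean()


rng = np.random.default_rng(0)
spread_low = pairwise_distance_spread(rng.normal(size=(300, 2)))
spread_high = pairwise_distance_spread(rng.normal(size=(300, 2000)))
print(f"spread in 2 dimensions:    {spread_low:.3f}")
print(f"spread in 2000 dimensions: {spread_high:.3f}")
```

Running the same function on a dataset before and after PCA gives a quick indication of whether the reduced representation restores useful contrast between near and far cells.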

For k-means clustering specifically, the algorithm becomes less effective at distinguishing between examples as the dimensionality of the data increases due to distance convergence [32]. In practice, this means that even distinct cell types may become inseparable in the high-dimensional gene expression space.

Computational Complexity in Big Data Environments

Theoretical Framework of Computational Tractability

Classical computational complexity theory classifies problems that can be solved in polynomial time as tractable and all others as intractable. In big data settings, however, this distinction becomes less useful: algorithms with polynomial or even linear time complexity can become unacceptable in practical applications, effectively rendering previously tractable problems intractable [30]. This shift is particularly relevant to single-cell genomics, where datasets routinely contain expression measurements for more than 20,000 genes across more than 100,000 cells.

The computational burden manifests differently across various stages of single-cell analysis:

Table 2: Computational Complexity in Single-Cell Analysis Workflows

| Analysis Stage | Algorithmic Operations | Time Complexity | Big Data Challenges |
|---|---|---|---|
| Data Preprocessing | Normalization, QC filtering | O(n·p) for n cells, p genes | Linear scaling becomes prohibitive at massive scale |
| Feature Selection | Highly variable gene detection | O(n·p²) in worst case | Quadratic dependence on genes limits scalability |
| Dimensionality Reduction | PCA computation | O(min(n²·p, n·p²)) | Memory and time bottlenecks with large n and p |
| Clustering | k-means optimization | O(n·p·k·i) for k clusters, i iterations | Multiple dependencies exacerbate scaling issues |
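A back-of-the-envelope calculation shows why these scaling terms matter at the dataset sizes quoted above. The sketch assumes 8-byte floating-point values, a compressed sparse row layout with 4-byte indices, and, purely for illustration, that 10% of entries are nonzero.

```python
n_cells = 100_000
n_genes = 20_000

# Dense cells x genes matrix stored as 8-byte floats.
dense_gb = n_cells * n_genes * 8 / 1e9

# Full cells x cells pairwise distance matrix stored as 8-byte floats.
pairwise_gb = n_cells * n_cells * 8 / 1e9

# Sparse storage under the assumed 10% nonzero rate (illustrative only):
# 8 bytes per value + 4 bytes per column index, plus one 4-byte row pointer
# per row and one terminating pointer.
nonzero = n_cells * n_genes // 10
csr_bytes = nonzero * (8 + 4) + (n_cells + 1) * 4
csr_gb = csr_bytes / 1e9

print(f"dense expression matrix: {dense_gb:.1f} GB")
print(f"pairwise distance matrix: {pairwise_gb:.1f} GB")
print(f"sparse expression matrix: {csr_gb:.1f} GB")
```

The pairwise distance matrix is the largest of the three, which is why graph-based methods store only k neighbors per cell rather than all distances.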

Empirical Observations in Algorithm Performance

Recent comparative studies of time complexity in big data engineering indicate that theoretical time complexity provides a useful framework for understanding algorithm performance, but real-world implementations must also account for system-level factors that influence efficiency [34]. For example, although MergeSort is asymptotically optimal among comparison-based sorting algorithms, its performance in distributed systems is often limited by the overhead of merging data across nodes [34].

In single-cell clustering, the CHOIR tool was compared with 15 existing clustering methods across 230 simulated and 5 real datasets, including single-cell RNA sequencing, spatial transcriptomic, multi-omic, and ATAC-seq data [28]. Such comprehensive benchmarking is computationally intensive but necessary to establish methodological efficacy in the face of growing data complexity.

Experimental Protocols for Mitigation Strategies

Dimensionality Reduction Methodologies

Principal Component Analysis (PCA) Protocol

PCA discovers axes in high-dimensional space that capture the largest amount of variation. The protocol involves:

- Input Preparation: Use log-normalized expression values and select the top 2000 genes with the largest biological components of variance, which reduces both computational work and high-dimensional random noise [31].

- Algorithm Execution:

  - Compute the covariance matrix of the normalized data matrix

  - Perform singular value decomposition (SVD) on the covariance matrix

  - Extract eigenvectors (principal components) and eigenvalues (variance explained)

- Implementation: In the R/Bioconductor workflow, this step is typically run with the scran and scater packages [31].

- Component Selection: Retain the top d principal components that capture sufficient biological variation, typically 10-50 components in scRNA-seq analysis [31].
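The PCA steps above can be sketched in a few lines of NumPy. This is an illustrative sketch rather than the scran/scater implementation: the function name `pca_svd` and the random toy matrix are assumptions, and the SVD is applied directly to the centered data matrix, which yields the same components as an eigendecomposition of the covariance matrix without forming that matrix explicitly.

```python
import numpy as np

def pca_svd(log_expr, n_components=10):
    """PCA of a cells x genes matrix of log-normalized values."""
    centered = log_expr - log_expr.mean(axis=0)
    # SVD of the centered data; right singular vectors are the principal axes
    u, s, vt = np.linalg.svd(centered, full_matrices=False)
    # Eigenvalues of the covariance matrix, i.e. variance along each component
    eigenvalues = s**2 / (log_expr.shape[0] - 1)
    var_ratio = eigenvalues / eigenvalues.sum()
    scores = u[:, :n_components] * s[:n_components]  # cell coordinates
    return scores, vt[:n_components], var_ratio[:n_components]

# Toy data (assumption): 200 cells x 50 genes of random values
rng = np.random.default_rng(0)
toy = rng.standard_normal((200, 50))
scores, components, var_ratio = pca_svd(toy, n_components=10)
print(scores.shape, var_ratio.round(3))
```

Inspecting `var_ratio` gives the variance explained by successive components, which is the quantity used to decide how many components to retain.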

t-Distributed Stochastic Neighbor Embedding (t-SNE) Protocol

t-SNE is a non-linear dimensionality reduction technique that embeds high-dimensional data in two or three dimensions:

- Preprocessing: First perform PCA to obtain a lower-dimensional representation (typically 50 dimensions) [31].

- Similarity Calculation:

  - Construct a probability distribution over pairs of cells in the high-dimensional space such that similar cells have high probability of being picked

  - Use Gaussian distribution centered at each point

- Low-Dimensional Mapping:

  - Define a similar probability distribution over points in the low-dimensional map

  - Use Student t-distribution with one degree of freedom (heavy-tailed)

- Optimization: Minimize the Kullback-Leibler divergence between the two distributions using gradient descent.

- Implementation: In the R/Bioconductor workflow, t-SNE is available through the scater package.
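To make the t-SNE steps concrete, the following NumPy sketch implements the Gaussian affinities, the Student t affinities, the Kullback-Leibler objective, and plain gradient descent. It is a simplified illustration, not a production implementation: it assumes a single fixed Gaussian bandwidth `sigma` instead of the per-cell perplexity search, and it omits momentum and early exaggeration. All function names, the learning rate, and the toy data are assumptions made for this example.

```python
import numpy as np

def pairwise_sq_dists(x):
    sq = np.sum(x**2, axis=1)
    return np.maximum(sq[:, None] + sq[None, :] - 2.0 * x @ x.T, 0.0)

def gaussian_affinities(x, sigma=1.0):
    """Symmetrized high-dimensional affinities P (fixed sigma is an assumption)."""
    logits = -pairwise_sq_dists(x) / (2.0 * sigma**2)
    np.fill_diagonal(logits, -np.inf)               # a cell is not its own neighbor
    logits -= logits.max(axis=1, keepdims=True)     # numerical stability
    cond = np.exp(logits)
    cond /= cond.sum(axis=1, keepdims=True)         # conditional p_{j|i}
    return np.maximum((cond + cond.T) / (2.0 * x.shape[0]), 1e-12)

def student_t_affinities(y):
    """Low-dimensional affinities Q from a Student t kernel (1 degree of freedom)."""
    inv = 1.0 / (1.0 + pairwise_sq_dists(y))
    np.fill_diagonal(inv, 0.0)
    return np.maximum(inv / inv.sum(), 1e-12), inv

def kl_divergence(p, q):
    return float(np.sum(p * np.log(p / q)))

def tsne_sketch(x, n_iter=300, lr=10.0, seed=0):
    rng = np.random.default_rng(seed)
    y = 1e-4 * rng.standard_normal((x.shape[0], 2))  # small random start
    p = gaussian_affinities(x)
    history = []
    for _ in range(n_iter):
        q, inv = student_t_affinities(y)
        history.append(kl_divergence(p, q))
        w = (p - q) * inv
        # Gradient: 4 * sum_j (p_ij - q_ij) * (y_i - y_j) / (1 + |y_i - y_j|^2)
        grad = 4.0 * (np.diag(w.sum(axis=1)) - w) @ y
        y -= lr * grad
    return y, history

# Toy input (assumption): three groups of 20 cells in a 10-dimensional PCA space
rng = np.random.default_rng(0)
centers = 5.0 * rng.standard_normal((3, 10))
toy_pcs = np.vstack([c + 0.5 * rng.standard_normal((20, 10)) for c in centers])
embedding, kl_history = tsne_sketch(toy_pcs)
print(kl_history[0], kl_history[-1])
```

In practice the bandwidth is tuned per cell to match a target perplexity, which is why perplexity is the main parameter users adjust.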

Uniform Manifold Approximation and Projection (UMAP) Protocol

UMAP is a graph-based, non-linear dimensionality reduction technique that assumes data is uniformly distributed on a locally connected Riemannian manifold:

- Graph Construction:

  - Compute the k-nearest neighbors graph (typically k=15-30)

  - Apply a fuzzy simplicial set construction to represent topological relationships

- Optimization:

  - Initialize a low-dimensional representation (typically using Laplacian eigenmaps)

  - Minimize the cross-entropy between the high-dimensional and low-dimensional topological representations

- Implementation: In the R/Bioconductor workflow, UMAP is available through the scater package.
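The graph construction step can be sketched as follows. This covers only the first stage (k-nearest neighbors and a fuzzy union of directed edge weights) and is a deliberate simplification: the per-cell scale `sigma` is set by a simple heuristic here, whereas UMAP calibrates it by a search over each cell's neighborhood. The function name `fuzzy_knn_graph`, the heuristic, and the toy data are assumptions for illustration; the layout optimization is not shown.

```python
import numpy as np

def fuzzy_knn_graph(x, k=15):
    """Symmetric fuzzy neighbor graph from a cells x features matrix."""
    n = x.shape[0]
    d = np.linalg.norm(x[:, None, :] - x[None, :, :], axis=2)
    np.fill_diagonal(d, np.inf)                      # exclude self-neighbors
    nbr_idx = np.argsort(d, axis=1)[:, :k]           # k nearest neighbors per cell
    nbr_dist = np.take_along_axis(d, nbr_idx, axis=1)
    rho = nbr_dist[:, :1]                            # distance to the nearest neighbor
    # Assumption: heuristic local scale instead of UMAP's calibrated sigma
    sigma = np.maximum(nbr_dist.mean(axis=1, keepdims=True) - rho, 1e-8)
    weights = np.exp(-(nbr_dist - rho) / sigma)      # directed memberships in (0, 1]
    directed = np.zeros((n, n))
    np.put_along_axis(directed, nbr_idx, weights, axis=1)
    # Fuzzy union of the two directions: a + b - a*b
    return directed + directed.T - directed * directed.T

# Toy input (assumption): 100 cells in a 10-dimensional PCA space
rng = np.random.default_rng(0)
graph = fuzzy_knn_graph(rng.standard_normal((100, 10)), k=15)
print(graph.shape, float(graph.max()))
```

The resulting weighted graph is the high-dimensional representation whose cross-entropy with the low-dimensional layout is minimized in the optimization stage.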

Comparative Analysis of Dimensionality Reduction Techniques

Independent comparisons have evaluated the stability, accuracy and computing cost of 10 different dimensionality reduction methods for single-cell data [29]. The findings indicate:

- t-SNE yields the best overall performance in terms of accuracy

- UMAP shows the highest stability and best separation of original cell populations

- PCA remains widely used for initial data compaction before applying non-linear methods

Table 3: Performance Characteristics of Dimensionality Reduction Methods

| Method | Type | Computational Complexity | Strengths | Limitations |
|---|---|---|---|---|
| PCA | Linear | O(min(n²·p, n·p²)) | Highly interpretable, computationally efficient | Limited to linear structures |
| t-SNE | Non-linear | O(n²) | Excellent cluster separation, handles non-linearity | Computationally intensive, perplexity sensitivity |
| UMAP | Non-linear | Approximately O(n^1.1) (empirical) | Preserves global structure, faster than t-SNE | Parameter sensitivity, theoretical complexity |

Visualization Frameworks

Dimensionality Reduction Workflow

Diagram 1: Dimensionality Reduction Workflow for Single-Cell Data

Computational Complexity Relationships

Diagram 2: Factors Affecting Computational Complexity in scRNA-seq Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools for Single-Cell Clustering Analysis

| Tool/Category | Specific Implementation | Function/Purpose | Application Context |
|---|---|---|---|
| Clustering Algorithms | k-means | Distance-based partitioning | Initial cell type identification |
| | CHOIR | Random forest-based clustering with statistical testing | Improved detection of rare cell populations [28] |
| Dimensionality Reduction | PCA (scran/scater) | Linear dimensionality reduction | Initial data compaction, noise reduction [31] |
| | t-SNE (scater) | Non-linear projection for visualization | Cluster visualization in 2D/3D [29] |
| | UMAP (scater) | Manifold learning for visualization | Preserving global structure in visualization [29] |
| Programming Frameworks | R/Bioconductor | Statistical analysis and visualization | Comprehensive single-cell analysis workflows [31] |
| | Scanpy (Python) | Single-cell analysis in Python | Alternative to R/Bioconductor ecosystem [29] |
| Benchmarking Tools | Clustering comparison frameworks | Algorithm performance evaluation | Method selection for specific data types [28] |

The interrelated challenges of dimensionality and computational complexity are fundamental constraints on cell type identification in single-cell research. The curse of dimensionality reduces the effectiveness of distance-based clustering algorithms as the number of genes increases, while computational complexity creates practical barriers to analysis as the number of cells grows. Mitigation strategies centered on dimensionality reduction, including PCA, t-SNE, and UMAP, are essential for working within these limits. Furthermore, emerging tools such as CHOIR demonstrate that algorithmic innovations can overcome some inherent constraints of conventional clustering methods [28]. As single-cell technologies continue to produce ever-larger datasets, computationally efficient and dimensionality-aware methods will remain critical for advancing our understanding of cellular heterogeneity in health and disease. Researchers should therefore understand both the theoretical foundations and the practical implementations of these approaches to ensure robust and interpretable cell type identification.

Algorithmic Landscape: From Classical Machine Learning to Deep Learning Approaches

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by enabling the quantification of gene expression at the individual cell level, thereby revealing cellular heterogeneity that was previously obscured in bulk tissue measurements [35]. This technology has become indispensable for understanding developmental biology, tumor heterogeneity, and complex disease mechanisms [36]. Clustering stands as a fundamental computational technique in scRNA-seq analysis, serving as the primary method for identifying distinct cell populations and putative cell types based on similar gene expression patterns [23] [36]. The accurate identification of cell types through clustering allows researchers to characterize novel cell states, understand disease-specific cellular alterations, and identify potential therapeutic targets [23].

Within the landscape of computational tools developed for scRNA-seq clustering, classical machine learning methods remain widely used due to their interpretability, robustness, and computational efficiency [36]. This technical guide focuses on three prominent classical machine learning methods—SC3, CIDR, and TSCAN—which employ distinct algorithmic approaches to address the challenges inherent to single-cell data, including high dimensionality, technical noise, and high dropout rates [36] [37]. We examine their underlying methodologies, performance characteristics, and practical implementation to equip researchers with the knowledge needed to select and apply these tools effectively in their single-cell research and drug development pipelines.

Methodological Deep Dive: Algorithms and Workflows

SC3 (Single-Cell Consensus Clustering)

SC3 implements a consensus clustering approach that combines multiple clustering solutions to achieve high accuracy and robustness [38]. The algorithm operates through a structured pipeline that transforms the input expression matrix into a stable set of cell clusters. The method begins with gene filtering based on expression levels and dropout rates, followed by multiple parallel steps including distance matrix calculation, transformation using principal component analysis (PCA), and k-means clustering with varying parameters [38]. A key innovation of SC3 is its spectral transformation step, where it retains between 4% and 7% of the eigenvectors after dimensional reduction, which has been empirically demonstrated to optimize clustering performance across diverse datasets [38].

The core strength of SC3 lies in its consensus matrix, which aggregates the multiple clustering results into a single matrix representing the probability that each pair of cells belongs to the same cluster. The final clusters are determined by applying hierarchical clustering to this consensus matrix [38]. This approach significantly enhances stability compared to single-run clustering methods, mitigating the variability that typically arises from different initial conditions in stochastic algorithms [38]. SC3 incorporates a method based on Random Matrix Theory (RMT) to suggest the optimal number of clusters, and provides visualization tools including consensus matrices and silhouette plots to help researchers select appropriate clustering resolutions [38].
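The consensus matrix idea can be illustrated with a short NumPy sketch. This is not the SC3 code: the function name `consensus_matrix` and the three toy label vectors are assumptions for illustration. Each entry is the fraction of clustering runs in which two cells received the same label, so the result does not depend on how individual runs number their clusters.

```python
import numpy as np

def consensus_matrix(label_sets):
    """Fraction of clustering runs in which each pair of cells co-clusters."""
    labels = np.asarray(label_sets)                  # shape: (n_runs, n_cells)
    # same[r, i, j] is True when cells i and j share a label in run r
    same = labels[:, :, None] == labels[:, None, :]
    return same.mean(axis=0)

# Toy input (assumption): three clustering runs over four cells
runs = [
    [0, 0, 1, 1],
    [1, 1, 0, 0],   # same partition as the first run, different label names
    [0, 0, 0, 1],
]
print(consensus_matrix(runs).round(2))
```

Hierarchical clustering applied to a matrix of this kind then yields the final partition, as described above.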

CIDR (Clustering through Imputation and Dimensionality Reduction)

CIDR employs an innovative approach that addresses the dropout problem in single-cell data through an imputation strategy [37]. Unlike methods that rely on data normalization as a preprocessing step, CIDR incorporates dropout handling directly into its clustering pipeline. The algorithm begins by calculating a pairwise dissimilarity matrix between all cells, but critically modifies this calculation to account for potential dropout events [37]. CIDR identifies genes with unexpectedly low expression—potential dropout events—and uses this information to adjust the dissimilarity metric, effectively imputing missing expression values in a manner that enhances the signal for cell-type discrimination.

Following dissimilarity matrix calculation and implicit imputation, CIDR applies principal coordinate analysis (PCoA, a classical multidimensional scaling technique) to reduce dimensionality [37]. The algorithm then performs hierarchical clustering in the reduced-dimensional space to identify cell groups. A significant advantage of CIDR is that it automates two choices: it selects the number of principal coordinates by locating an "elbow point" in the PCoA eigenvalues, and it determines the number of clusters without user input [37]. This integrated approach to handling dropouts without requiring separate normalization makes CIDR particularly effective for datasets with high technical variability.
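The PCoA step can be sketched generically as classical multidimensional scaling: square the dissimilarities, double-center them, and take the leading eigenvectors. The sketch below is not the CIDR implementation and does not include its dropout-aware dissimilarity; the function name `pcoa` is an assumption, and plain Euclidean distances on toy points stand in for the dissimilarity matrix.

```python
import numpy as np

def pcoa(dissimilarity, n_components=2):
    """Principal coordinate analysis (classical MDS) of a dissimilarity matrix."""
    d2 = np.asarray(dissimilarity, dtype=float) ** 2
    n = d2.shape[0]
    centering = np.eye(n) - np.ones((n, n)) / n
    b = -0.5 * centering @ d2 @ centering            # double-centered Gram matrix
    eigvals, eigvecs = np.linalg.eigh(b)
    order = np.argsort(eigvals)[::-1]                # largest eigenvalues first
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scale = np.sqrt(np.maximum(eigvals[:n_components], 0.0))
    return eigvecs[:, :n_components] * scale, eigvals

# Toy input (assumption): Euclidean distances between 30 random 2D points
rng = np.random.default_rng(0)
points = rng.standard_normal((30, 2))
dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=2)
coords, eigvals = pcoa(dist, n_components=2)
print(coords.shape, eigvals[:4].round(3))
```

The sorted eigenvalues returned here are the quantities in which an elbow is sought when choosing how many coordinates to keep.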

TSCAN

TSCAN employs a fundamentally different approach centered on pseudo-temporal ordering of cells, which it then leverages for clustering purposes [37]. The method begins with dimensionality reduction through PCA, followed by the construction of a minimum spanning tree (MST) that connects cells based on their similarity in the reduced dimension space [37]. This tree structure represents potential developmental trajectories, with branches corresponding to different cell lineages or states. TSCAN then partitions the tree into distinct segments, which correspond to cell clusters that represent different stages along a differentiation continuum or distinct cell subpopulations.

A distinctive feature of TSCAN is its bidirectional integration of clustering and pseudo-temporal ordering [37]. While most clustering methods operate independently of trajectory inference, TSCAN uses the pseudo-temporal information to inform the clustering process, resulting in groups that reflect both transcriptional similarity and developmental relationships. This approach is particularly valuable for analyzing data from processes involving continuous transitions, such as differentiation, cellular activation, or disease progression. TSCAN includes functionality to automatically estimate the number of clusters based on the tree structure, though users can also specify this parameter based on biological knowledge [37].
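The tree-building step can be illustrated with Prim's algorithm on Euclidean distances. This is a generic minimum spanning tree sketch rather than the TSCAN implementation: the function name `minimum_spanning_tree` and the toy coordinates are assumptions, and the input can be whichever points the tree is built on in a reduced-dimensional space.

```python
import numpy as np

def minimum_spanning_tree(points):
    """Prim's algorithm; returns a list of (parent, child, length) edges."""
    points = np.asarray(points, dtype=float)
    n = len(points)
    d = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=2)
    in_tree = np.zeros(n, dtype=bool)
    in_tree[0] = True                                # start from the first point
    best_dist = d[0].copy()                          # cheapest link into the tree
    best_parent = np.zeros(n, dtype=int)
    edges = []
    for _ in range(n - 1):
        # Pick the closest point that is not yet in the tree
        candidates = np.where(in_tree, np.inf, best_dist)
        j = int(np.argmin(candidates))
        edges.append((int(best_parent[j]), j, float(best_dist[j])))
        in_tree[j] = True
        # Update the cheapest links using the newly added point
        closer = d[j] < best_dist
        best_parent[closer] = j
        best_dist[closer] = d[j][closer]
    return edges

# Toy input (assumption): four points along a line
toy_points = np.array([[0.0, 0.0], [1.0, 0.0], [3.0, 0.0], [6.0, 0.0]])
for edge in minimum_spanning_tree(toy_points):
    print(edge)
```

Walking along the resulting tree from a chosen root gives an ordering of the points, which is the basis for a pseudo-temporal ordering.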

Performance Benchmarking and Comparative Analysis

Experimental Protocol for Method Evaluation

Benchmarking clustering algorithms for single-cell data requires standardized evaluation protocols and metrics. The most common approach involves using real datasets with known cell type annotations and simulated datasets with ground truth [36]. Performance is typically quantified using metrics that compare computational clusters to reference labels:

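- Adjusted Rand Index (ARI): Measures the chance-corrected similarity between two clusterings. A value of 1 indicates perfect agreement, values near 0 indicate agreement no better than chance, and negative values indicate agreement worse than chance [23] [38].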