VICTOR: A Comprehensive Guide to Assessing Cell Type Annotation Quality in Single-Cell RNA Sequencing

Abstract

This article provides a detailed exploration of VICTOR (Validation and Inspection of Cell Type Annotation Through Optimal Regression), a novel method for gauging the confidence of automated cell type annotations in single-cell RNA sequencing data. Tailored for researchers, scientists, and drug development professionals, we cover its foundational principles, methodological application across diverse datasets (within-platform, cross-platform, cross-studies, and cross-omics), strategies for troubleshooting and optimization, and a comparative analysis of its diagnostic performance against existing methods. The guide aims to empower scientists to enhance the reliability of their single-cell analyses, thereby accelerating discoveries in biomedicine.

Understanding VICTOR: The Critical Need for Reliable Cell Type Annotation

The Challenge of Automated Cell Annotation in Single-Cell RNA Sequencing

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by providing unprecedented resolution for exploring cellular heterogeneity in complex tissues and organisms. A fundamental step in analyzing scRNA-seq data is cell type identification, which has traditionally relied on manual annotation, a process that requires expert knowledge and extensive time and is difficult to reproduce across research groups [1]. As the scale of single-cell studies continues to grow exponentially, with datasets now routinely encompassing millions of cells, manual annotation has become a critical bottleneck in analysis pipelines [1] [2].

The emergence of automated cell identification methods addresses this challenge by providing standardized, scalable approaches for cell type assignment. These computational methods leverage previously annotated reference datasets or established marker gene databases to automatically label cells in new experiments [1] [3]. However, the rapid development of numerous classification approaches—each with different underlying algorithms, requirements, and performance characteristics—has created a new challenge: researchers must navigate a complex landscape of tools without clear guidance on their relative strengths and limitations. This comparison guide provides an objective assessment of automated cell annotation methods, evaluates their performance against standardized benchmarks, and examines the critical role of validation tools like VICTOR in ensuring annotation quality [4].

Methodological Landscape of Automated Cell Annotation Tools

Automated cell annotation methods employ diverse computational strategies, which can be broadly categorized into several distinct approaches based on their underlying methodology:

Marker-based methods utilize predefined lists of cell-type-specific marker genes to assign identities to cells or clusters. Tools like ScType, Garnett, and SCINA fall into this category, leveraging comprehensive marker databases to annotate cell populations [1] [3]. These methods typically employ statistical approaches to detect the expression of positive marker genes (indicating presence of a cell type) and negative marker genes (providing evidence against a cell type) [3]. ScType, for instance, introduces a specificity score that ensures marker genes are informative across both cell clusters and cell types, addressing the challenge of genes that are expressed in multiple cell populations [3].
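The positive/negative marker idea can be illustrated with a minimal sketch. This is not ScType's, Garnett's, or SCINA's actual algorithm: the marker sets, the scoring rule (mean positive-marker expression minus mean negative-marker expression), and the `min_score` cutoff are all assumptions chosen for illustration.

```python
from statistics import mean

# Hypothetical marker sets: positive markers support a type,
# negative markers provide evidence against it.
MARKERS = {
    "T cell": {"pos": ["CD3D", "CD3E"], "neg": ["MS4A1"]},
    "B cell": {"pos": ["MS4A1", "CD79A"], "neg": ["CD3D"]},
}

def marker_score(expr, pos, neg):
    """Mean positive-marker expression minus mean negative-marker expression."""
    pos_part = mean(expr.get(g, 0.0) for g in pos)
    neg_part = mean(expr.get(g, 0.0) for g in neg) if neg else 0.0
    return pos_part - neg_part

def annotate(expr, markers=MARKERS, min_score=0.0):
    """Return (label, scores); label is "Unknown" if no score exceeds min_score."""
    scores = {ct: marker_score(expr, m["pos"], m["neg"]) for ct, m in markers.items()}
    best = max(scores, key=scores.get)
    label = best if scores[best] > min_score else "Unknown"
    return label, scores

# Average normalized expression of one cluster (made-up values).
cluster_expr = {"CD3D": 2.1, "CD3E": 1.8, "MS4A1": 0.1, "CD79A": 0.0}
label, scores = annotate(cluster_expr)
print(label, scores)
```

Returning "Unknown" when no type scores above the cutoff is one simple way such methods can decline to label cells that match no known marker set.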

Reference-based correlation methods identify cell types by comparing gene expression patterns in unannotated cells to those in pre-annotated reference datasets. SingleR and CHETAH employ this strategy, calculating correlation coefficients or other similarity metrics between query cells and reference cell types [1]. These methods benefit from not requiring training but depend heavily on the quality and comprehensiveness of the reference data.
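A minimal sketch of correlation-based assignment follows. It assumes the reference has already been summarized as one mean expression profile per cell type over a shared gene list, and it uses Pearson correlation for simplicity; the tools named above use their own similarity measures and refinements, so the profiles and function names here are hypothetical.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation between two equal-length expression vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0

# Hypothetical reference: mean expression per cell type over four shared genes.
REFERENCE = {
    "alpha": [5.0, 0.2, 0.1, 1.0],
    "beta": [0.3, 6.0, 0.2, 1.1],
}

def assign(query, reference=REFERENCE):
    """Label a query cell with the reference type it correlates with most."""
    corrs = {ct: pearson(query, profile) for ct, profile in reference.items()}
    best = max(corrs, key=corrs.get)
    return best, corrs

label, corrs = assign([4.1, 0.5, 0.0, 0.9])
print(label, corrs)
```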

Supervised classification methods treat cell type identification as a machine learning problem, training classifiers on labeled reference datasets to predict cell identities in new data. This category includes both single-cell-specific classifiers (like scPred and ACTINN) and general-purpose classifiers (including Support Vector Machines (SVM), Random Forests, and neural networks) [1]. These models learn discriminative patterns from gene expression features associated with each cell type, then apply this learned decision function to classify new cells.

Hybrid approaches combine elements from multiple strategies. For example, some methods integrate marker gene information with supervised learning, while others employ neural networks that learn latent representations of cells before classification [1] [2]. The scVI method uses a deep generative model to account for technical noise and batch effects before performing downstream analysis [1].

Table 1: Categories of Automated Cell Annotation Methods

| Category | Representative Tools | Underlying Methodology | Training Requirement |
|---|---|---|---|
| Marker-based | ScType, Garnett, SCINA | Marker gene detection | Marker database only |
| Reference-based | SingleR, CHETAH | Correlation/similarity matching | Pre-annotated reference dataset |
| Supervised classification | scPred, ACTINN, SVM | Machine learning classifiers | Labeled training data |
| Neural networks | scVI, Cell-BLAST | Deep learning models | Labeled training data |

Comprehensive Performance Benchmarking of Annotation Tools

Large-Scale Benchmarking Reveals Performance Variations

A comprehensive benchmark study evaluating 22 classification methods across 27 publicly available scRNA-seq datasets provides critical insights into the relative performance of automated annotation tools [1] [5]. The datasets represented various technologies, species, tissue types, and complexity levels, allowing robust evaluation under diverse conditions. Performance was assessed using two experimental setups: intra-dataset evaluation (5-fold cross-validation within datasets) and the more challenging inter-dataset evaluation (training on one dataset and predicting on another) [1].

The results demonstrated that most classifiers perform well on a variety of datasets, with decreased accuracy for complex datasets containing overlapping cell populations or "deep" annotations with finely resolved subtypes [1]. Notably, general-purpose classifiers—particularly Support Vector Machine (SVM) with linear kernel—achieved consistently high performance across different experiments, outperforming many single-cell-specific methods [1] [6]. This surprising result suggests that well-established machine learning algorithms can effectively learn the discriminative patterns in gene expression data necessary for accurate cell type identification.

Table 2: Performance Comparison of Selected Cell Annotation Methods

| Method | Type | Overall Accuracy | Computation Speed | Handles Novel Cells | Key Strengths |
|---|---|---|---|---|---|
| SVM (linear) | General-purpose | High | Fast | No | Best overall performance in benchmarking |
| ScType | Marker-based | High (98.6%) | Very fast | Yes | Fully automated, requires no reference |
| scSorter | Marker-based | High | Moderate | Yes | High accuracy but slower than ScType |
| SingleR | Reference-based | Moderate | Moderate | No | Simple correlation-based approach |
| Random Forest | General-purpose | High | Slow | No | Robust to noise in data |
| SCINA | Marker-based | Moderate | Fast | Yes | Fast but lower accuracy on complex datasets |

Specialized Tools for Specific Applications

The benchmarking also revealed that certain tools excel in specific applications. ScType, for instance, demonstrated remarkable accuracy (98.6% across 6 datasets) and speed, correctly annotating 72 out of 73 cell types including 8 that were originally misannotated in published studies [3]. In a reanalysis of human liver scRNA-seq data, ScType automatically distinguished between two closely related B-cell populations (immature and plasma B cells) that were not differentiated in the original manuscript [3]. Similarly, when applied to mouse retinal data, ScType identified three closely related amacrine cell types and distinguished between rod and cone bipolar cells that were originally grouped together [3].

The exceptional speed of ScType—more than 30 times faster than the next best performing method scSorter—makes it particularly valuable for large-scale datasets [3]. This performance advantage stems from its focused use of highly specific marker combinations rather than analyzing entire transcriptomes, demonstrating that strategic feature selection can optimize both accuracy and computational efficiency.

Experimental Protocols for Method Evaluation

Standardized Benchmarking Frameworks

The benchmark study conducted by Abdelaal et al. employed rigorous experimental protocols to ensure fair comparison across methods [1] [5]. For intra-dataset evaluation, they implemented 5-fold cross-validation, where each dataset was randomly split into five subsets, with four used for training and one for testing, repeating this process five times with different test sets [1]. This approach evaluates how well methods learn cell types within the same dataset, controlling for batch effects and technical variation.
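The 5-fold splitting scheme described above can be sketched in a few lines. This is a plain random split under assumed defaults (the seed and the function name are illustrative); it is not the benchmark's own code, and it does not stratify by cell type, which a real pipeline might want for rare populations.

```python
import random

def k_fold_splits(n_cells, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs; each cell is tested exactly once."""
    idx = list(range(n_cells))
    random.Random(seed).shuffle(idx)        # random assignment of cells to folds
    folds = [idx[i::k] for i in range(k)]   # k subsets of near-equal size
    for i, test_idx in enumerate(folds):
        # Training set = all cells outside the current test fold.
        train_idx = [c for j, fold in enumerate(folds) if j != i for c in fold]
        yield train_idx, test_idx

splits = list(k_fold_splits(n_cells=23, k=5))
for train_idx, test_idx in splits:
    print(len(train_idx), len(test_idx))
```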

For inter-dataset evaluation, the researchers trained classifiers on one dataset and tested on completely different datasets, mimicking the real-world application of using a reference atlas to annotate new experiments [1]. This more challenging assessment tests method robustness to biological and technical variations across studies. Performance was quantified using F1-scores (harmonic mean of precision and recall), percentage of unclassified cells, and computation time [1] [6].
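As a small worked example of these metrics, the sketch below computes a per-class F1-score and the percentage of unclassified cells from two label lists. The label values, the "Unassigned" marker, and the choice to summarize per-class scores by their median are assumptions made for illustration.

```python
from statistics import median

def per_class_f1(true, pred):
    """F1 (harmonic mean of precision and recall) for each true cell type."""
    f1 = {}
    for c in sorted(set(true)):
        tp = sum(t == c and p == c for t, p in zip(true, pred))
        fp = sum(t != c and p == c for t, p in zip(true, pred))
        fn = sum(t == c and p != c for t, p in zip(true, pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        denom = precision + recall
        f1[c] = 2 * precision * recall / denom if denom else 0.0
    return f1

true = ["B", "B", "T", "T", "T", "NK"]
pred = ["B", "T", "T", "T", "Unassigned", "NK"]

f1 = per_class_f1(true, pred)
median_f1 = median(f1.values())
pct_unclassified = 100 * pred.count("Unassigned") / len(pred)
print(f1, median_f1, pct_unclassified)
```

An unassigned cell counts as a false negative for its true type here, so rejecting cells lowers recall without adding false positives to any other type.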

Specialized Evaluation Protocols

Additional experiments assessed specific aspects of classification performance:

- Feature selection sensitivity: Methods were evaluated using different gene selection strategies (highly variable genes, differentially expressed genes, or all genes) to determine their impact on performance [1].

- Population size sensitivity: Tests measured how classification accuracy changes with varying numbers of cells per population, revealing which methods handle rare cell types effectively [1].

- Annotation level performance: Evaluation across different hierarchical levels of annotation (from major cell types to fine subtypes) determined how methods perform at different resolutions [1].

These standardized protocols provide a framework for ongoing evaluation of new methods as they emerge, with all code publicly available on GitHub to facilitate community use and extension [1] [6].

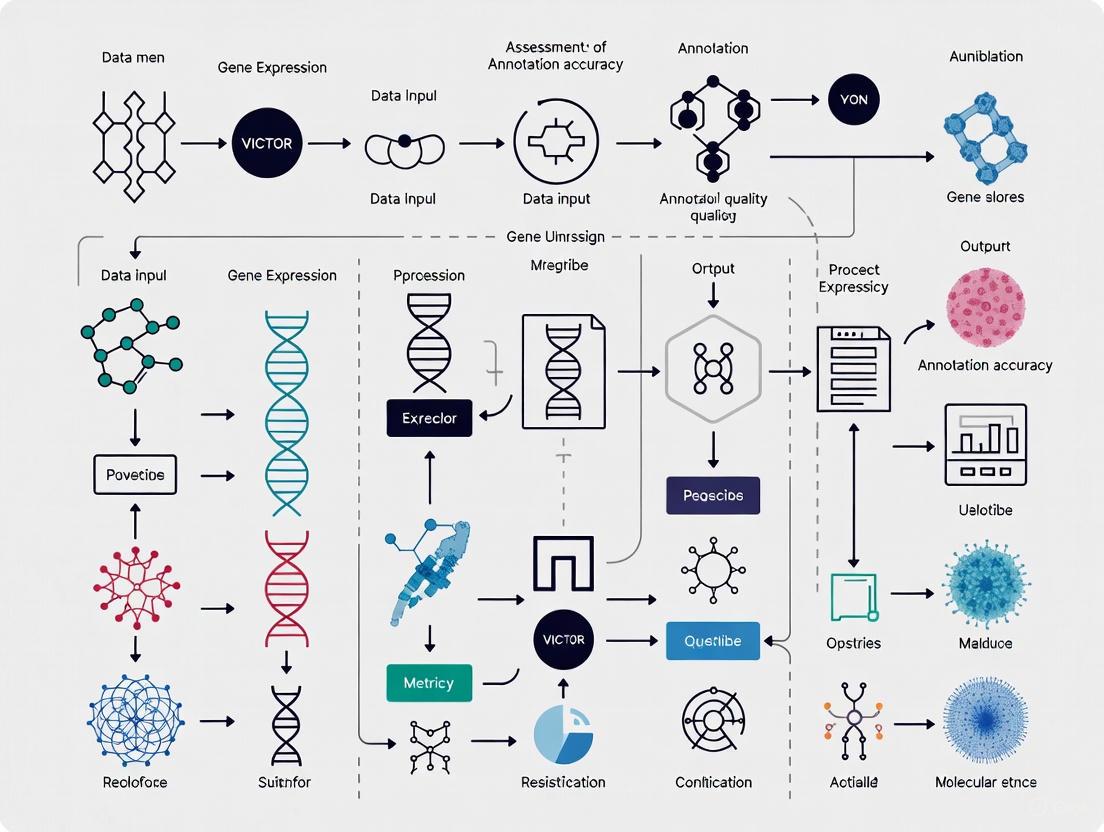

Cell Annotation Workflow with Validation

VICTOR: A Framework for Validation and Inspection of Cell Type Annotations

Addressing the Validation Challenge

As automated annotation methods proliferate, assessing the reliability of predicted cell labels has emerged as a critical challenge, particularly for rare and novel cell types that may be poorly represented in reference datasets [4]. VICTOR (Validation and Inspection of Cell Type Annotation through Optimal Regression) addresses this need by providing a robust framework for gauging confidence in cell annotations [4].

The method employs elastic-net regularized regression with optimal thresholds to identify potentially inaccurate annotations [4]. Elastic-net regularization combines the advantages of L1 (lasso) and L2 (ridge) regression, providing effective feature selection while handling correlated variables—a common characteristic in gene expression data. By learning the relationship between gene expression patterns and cell type labels, VICTOR can identify cells whose expression profiles deviate significantly from their assigned type, flagging them for manual inspection or reannotation.
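The text above does not spell out how the optimal thresholds are chosen. One common criterion, assumed here purely for illustration, is to pick the score cutoff that maximizes Youden's J (sensitivity + specificity - 1) for a given cell type. The scores and labels below are made up, and the function name is hypothetical.

```python
def optimal_threshold(scores, is_type):
    """Return (cutoff, J) maximizing Youden's J = sensitivity + specificity - 1.

    scores  : model scores for one cell type, one per cell
    is_type : True where the cell truly belongs to that type
    """
    pos = sum(is_type)
    neg = len(is_type) - pos
    if pos == 0 or neg == 0:
        raise ValueError("need at least one positive and one negative cell")
    best_t, best_j = None, -1.0
    for t in sorted(set(scores)):
        tp = sum(s >= t and y for s, y in zip(scores, is_type))      # kept, correct
        tn = sum(s < t and not y for s, y in zip(scores, is_type))   # rejected, correct
        j = tp / pos + tn / neg - 1.0
        if j > best_j:
            best_t, best_j = t, j
    return best_t, best_j

scores = [0.92, 0.81, 0.77, 0.40, 0.35, 0.10]
is_type = [True, True, True, False, True, False]
threshold, j = optimal_threshold(scores, is_type)
print(threshold, j)
```

A per-type cutoff of this kind lets a well-separated cell type use a strict threshold while a harder-to-distinguish type uses a more lenient one.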

Performance Across Diverse Contexts

VICTOR has demonstrated strong performance in identifying inaccurate annotations across various challenging scenarios, including within-platform, cross-platform, cross-study, and cross-omics settings [4]. This versatility is particularly valuable for real-world applications where researchers often integrate datasets generated using different technologies or from multiple studies. The method's ability to maintain diagnostic accuracy across these diverse contexts suggests it captures fundamental biological signals rather than technology-specific artifacts.

The introduction of VICTOR represents an important shift in the field—from simply assigning labels to also quantifying confidence in those assignments. This capability is especially crucial for clinical applications, such as drug development, where inaccurate cell type identification could lead to erroneous conclusions about cell-type-specific drug responses or toxicity profiles.

Practical Implementation and Research Reagents

Successful implementation of automated cell annotation requires both computational tools and biological reference resources. The following table details key research reagents and their functions in the annotation process:

Table 3: Essential Research Reagents for Automated Cell Annotation

| Resource | Type | Function | Applicability |
|---|---|---|---|
| ScType Database | Marker gene database | Provides positive/negative marker genes for cell types | Human and mouse tissues |
| CellMarker 2.0 | Marker gene database | Curated marker database for various tissues | Human and mouse (467/389 cell types) |
| PanglaoDB | Marker gene database | Collection of marker genes from single-cell studies | Focus on human cell types |
| Human Cell Atlas | Reference dataset | Multi-organ reference atlas | 33 human organs |
| Mouse Cell Atlas | Reference dataset | Comprehensive mouse cell atlas | 98 major cell types |
| Tabula Muris | Reference dataset | Single-cell data across mouse tissues | 20 organs and tissues |

Implementation Considerations

When implementing automated annotation pipelines, researchers should consider several practical aspects:

- Data quality requirements: Effective annotation requires adequate sequencing depth, cell viability, and minimal technical artifacts [2]. Quality control metrics including number of detected genes, total molecule count, and mitochondrial gene percentage should be evaluated before annotation [2].

- Batch effect management: When using reference-based approaches, batch effects between training and query datasets can significantly impact performance [1]. Methods that explicitly model batch effects (like scVI) may be preferable for cross-dataset applications.

- Marker database selection: For marker-based methods, the completeness and relevance of the marker database strongly influences performance [3] [2]. Researchers should select databases with strong coverage of their tissue of interest and regularly update these resources as new markers are discovered.

- Computational resources: Methods based on neural networks or processing large reference datasets may require substantial computational resources [1], while marker-based methods like ScType can provide rapid annotations even on standard workstations [3].

Annotation Validation Decision Framework

The field of automated cell annotation for single-cell RNA sequencing data has matured significantly, with numerous methods now available that demonstrate good performance across diverse datasets. Benchmarking studies reveal that while general-purpose classifiers like SVM compete strongly with specialized methods, the optimal tool choice depends on specific research contexts—marker-based methods like ScType offer speed and automation for standard cell types, while reference-based and supervised approaches provide robustness for novel datasets [1] [3].

The introduction of validation frameworks like VICTOR represents an important advancement, addressing the critical need for confidence assessment in automated annotations [4]. As the field progresses, key challenges remain in handling rare cell types, managing batch effects across platforms, and dynamically updating marker databases with newly discovered cell types [2]. Future developments will likely focus on integrating multiple annotation approaches, improving methods for identifying novel cell types not present in reference data, and enhancing the interpretability of automated classifications.

For researchers and drug development professionals, establishing standardized annotation pipelines that incorporate multiple methods followed by rigorous validation will be essential for generating reproducible, biologically meaningful results. The comprehensive benchmarking data and methodological frameworks presented here provide a foundation for developing such pipelines, ultimately accelerating single-cell research and its translation to therapeutic applications.

In single-cell RNA sequencing (scRNA-seq) analysis, accurate cell type annotation is foundational for downstream biological interpretation. However, the assessment of annotation quality remains a significant challenge. VICTOR (Validation and Inspection of Cell Type Annotation through Optimal Regression) is a method designed to address this gap by providing a robust, quantitative framework for evaluating the confidence and accuracy of cell type labels [7].

This guide objectively compares VICTOR's performance with other available alternatives, providing researchers with the experimental data and methodologies needed to make informed decisions for their single-cell analysis workflows.

Core Principles and Methodology of VICTOR

VICTOR operates on a central principle: that the quality of cell type annotation can be quantitatively assessed by examining the relationship between a cell's transcriptomic profile and its assigned label. Its innovation lies in the application of elastic-net regularized regression to solve this problem [7].

The methodological workflow can be broken down into several key stages, as illustrated below.

Diagram 1: The VICTOR analytical workflow for assessing annotation quality.

Detailed Experimental Protocol

For researchers seeking to implement or validate the VICTOR methodology, the core experimental and computational procedure is as follows:

- Input Data Preparation: Begin with a fully annotated scRNA-seq dataset where each cell has a pre-defined cell type label. The raw count matrix should be normalized and scaled appropriately.

- Model Training: For each cell in the dataset, an elastic-net regularized regression model is trained using the transcriptomic data (predictors) and the cell type labels (response variable). The elastic-net penalty combines L1 (Lasso) and L2 (Ridge) regularization, which helps in handling correlated genes and selecting informative features.

- Leave-One-Out Cross-Validation (LOOCV): A LOOCV scheme is typically employed. This involves iteratively holding out one cell as a test sample, training the model on all remaining cells, and then predicting the held-out cell's type.

- Confidence Score Generation: The prediction probability for the correct (annotated) cell type is extracted for each cell. This probability, derived from the regression model's output, serves as a quantitative confidence score.

- Quality Assessment:

- Cell-Level: Cells with low confidence scores (e.g., below a predefined threshold) are flagged as potentially misannotated or as representing ambiguous cellular states.

- Dataset-Level: The distribution of confidence scores across the entire dataset provides a metric for the overall annotation quality. A dataset with a high median confidence score is considered to have more reliable annotations.
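The final quality-assessment step can be sketched as follows, assuming the per-cell confidence scores have already been produced by the regression step. The 0.5 cutoff, the function name, and the example values are illustrative assumptions, not values taken from the VICTOR package.

```python
from statistics import median

def assess(confidence, labels, threshold=0.5):
    """Summarize annotation quality at the cell level and the dataset level."""
    # Cell level: indices of cells whose confidence falls below the cutoff.
    flagged = [i for i, c in enumerate(confidence) if c < threshold]
    # Group scores by annotated type for a per-type summary.
    by_type = {}
    for c, lab in zip(confidence, labels):
        by_type.setdefault(lab, []).append(c)
    return {
        "flagged_cells": flagged,
        "dataset_median": median(confidence),
        "median_by_type": {lab: median(v) for lab, v in by_type.items()},
    }

confidence = [0.95, 0.40, 0.88, 0.10, 0.75]
labels = ["alpha", "alpha", "beta", "beta", "beta"]
report = assess(confidence, labels)
print(report)
```

Reporting the median per annotated type alongside the dataset-wide median helps show whether low confidence is concentrated in one population or spread across the dataset.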

Performance Comparison and Experimental Data

To evaluate VICTOR's effectiveness, its performance can be compared against other approaches for assessing annotation quality, such as manual inspection by experts, clustering coherence metrics, or methods based on random forest classification.

The following table synthesizes key performance aspects from benchmark analyses. It is important to note that these are generalized findings, and performance can be dataset-dependent.

Table 1: Comparison of Annotation Quality Assessment Methods

| Method | Core Principle | Key Strength | Identified Limitation | Typical Application Context |
|---|---|---|---|---|
| VICTOR [7] | Elastic-net regularized regression | Provides a quantitative, cell-specific confidence score; handles high-dimensional, correlated gene data effectively. | Computational intensity can be high for very large datasets (>100k cells). | Systematic, quantitative validation of automated or manual annotations. |
| Clustering Coherence | Metrics like Silhouette Width | Intuitive; measures how well cells cluster by assigned type. | Does not directly assess label accuracy; fails if clusters are biologically complex. | Preliminary, rapid quality check. |
| Random Forest | Ensemble machine learning | High predictive accuracy; robust to noise. | Can be a "black box"; less interpretable than regression-based methods. | General-purpose classification and validation. |
| Manual Inspection | Expert biological knowledge | Leverages deep domain expertise; can catch subtle biological errors. | Not scalable; subjective and difficult to reproduce. | Final, targeted review of ambiguous populations. |

Benchmarking on Public Datasets

VICTOR's methodology has been applied and tested on several publicly available, well-annotated scRNA-seq datasets, which serve as benchmarks for its performance:

- Pancreas Datasets: Includes data from GSE84133, GSE85241, and E-MTAB-5061, which can be obtained from the scRNAseq R/Bioconductor package [7].

- PBMC Datasets: Such as GSE132044, available through the Single Cell Portal, and multiomics data from 10x Genomics [7].

- Human Lung Cell Atlas (HLCA): A large, integrated reference atlas accessible via the CellxGene platform [7].

On these datasets, the regression-based approach of VICTOR has demonstrated a strong ability to identify misannotated cells that were subsequently validated by deeper biological investigation. The model's use of elastic-net regularization makes it particularly suited for the high-dimensional and correlated nature of gene expression data, often outperforming simpler models that do not account for these factors.

Successfully implementing an annotation quality assessment, particularly with a method like VICTOR, relies on access to specific data resources and computational tools. The table below details essential components for such an analysis.

Table 2: Key Research Reagents & Solutions for scRNA-seq Annotation Quality Assessment

| Item Name | Function in Analysis | Specific Example / Source |
|---|---|---|
| Annotated Reference Datasets | Provides ground truth data for method training, testing, and benchmarking. | Human Lung Cell Atlas (HLCA) [7], Pancreas datasets (GSE84133) [7]. |
| VICTOR Software Package | Implements the core regression algorithm for calculating annotation confidence scores. | The VICTOR Package is available on GitHub: https://github.com/Charlene717/VICTOR [7]. |
| Single-Cell Analysis Suites | Provides environment for data pre-processing, normalization, and visualization of results. | R/Bioconductor packages (e.g., scRNAseq, Seurat). |
| Multiomics Datasets | Enables validation of annotation quality against orthogonal data modalities (e.g., ATAC-seq). | PBMC multiomics dataset from 10x Genomics [7]. |
| CellxGene Platform | A curated platform for exploring and downloading high-quality, annotated single-cell datasets. | https://cellxgene.cziscience.com [7]. |

The integration of rigorous, quantitative assessment tools is becoming indispensable as the scale and complexity of single-cell genomics grow. VICTOR addresses a critical need in the analytical pipeline by providing a statistically sound framework based on elastic-net regularized regression to evaluate the confidence of cell type annotations [7].

Benchmarking on established datasets shows that VICTOR offers a reproducible and scalable alternative to purely qualitative methods, enabling researchers to identify potentially misannotated cells with greater confidence and ultimately leading to more reliable biological conclusions. Its availability as an open-source package ensures that it can be widely adopted, tested, and further refined by the research community [7].

How Elastic-Net Regularized Regression Powers Confidence Scoring

In the rigorous field of scientific research, particularly within drug development and the assessment of annotation quality, the confidence in predictive models is paramount. Elastic-Net regularized regression has emerged as a powerful statistical tool that enhances this confidence by overcoming critical limitations of simpler models. Framed within the context of VICTOR research for assessing annotation quality, this guide provides an objective comparison of Elastic-Net's performance against its alternatives, supported by experimental data. Regularized regression techniques, including Ridge, Lasso, and Elastic-Net, improve upon ordinary least squares (OLS) regression by adding a penalty term to the model's objective function, which constrains the size of the coefficient estimates [8]. This process reduces model variance and mitigates overfitting, especially in datasets where the number of features (p) is large relative to the number of observations (n), or when multicollinearity exists [8] [9].

The following diagram illustrates the logical relationship between OLS regression and the three primary regularization techniques that build upon it.

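Elastic-Net specifically combines the penalties of both Lasso (L1) and Ridge (L2) regression [9] [10]. Its objective function can be written as shown in Eq. (1), where λ1 and λ2 are the tuning parameters that control the strength of the L1 and L2 penalties, respectively [11].

$$\hat{\beta} = \underset{\beta}{\arg\min} \left\{ \mathrm{SSE} + \lambda_1 \sum_{j=1}^{p} |\beta_j| + \lambda_2 \sum_{j=1}^{p} \beta_j^2 \right\} \qquad (1)$$

Here SSE is the Sum of Squared Errors, βj are the coefficients, and p is the number of features.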

This hybrid approach allows Elastic-Net to inherit the beneficial properties of both methods: the L1 penalty promotes sparsity by driving some coefficients to exactly zero, thus performing feature selection, while the L2 penalty handles groups of correlated variables effectively, stabilizing the coefficient estimates [9] [8]. This makes it exceptionally suited for the complex, high-dimensional data common in modern biological and chemical research, such as that analyzed in the VICTOR framework.
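To make the interplay of the two penalties concrete, here is a minimal coordinate-descent sketch that minimizes SSE + λ1 Σ|βj| + λ2 Σ βj². It assumes centered data with no intercept and a fixed number of sweeps; it is a teaching sketch, not the algorithm used inside glmnet, scikit-learn, or VICTOR, and the names `elastic_net`, `lam1`, and `lam2` are illustrative.

```python
def soft_threshold(z, gamma):
    """Shrink z toward zero by gamma; values within [-gamma, gamma] become 0."""
    if z > gamma:
        return z - gamma
    if z < -gamma:
        return z + gamma
    return 0.0

def elastic_net(X, y, lam1, lam2, n_iter=200):
    """Coordinate descent for SSE + lam1 * sum|b_j| + lam2 * sum b_j^2.

    Assumes every column of X is nonzero (or lam2 > 0) so the update is defined.
    """
    n, p = len(X), len(X[0])
    beta = [0.0] * p
    resid = list(y)  # residual y - X @ beta, with beta starting at zero
    col_sq = [sum(X[i][j] ** 2 for i in range(n)) for j in range(p)]
    for _ in range(n_iter):
        for j in range(p):
            # Inner product of feature j with the residual that excludes feature j.
            rho = sum(X[i][j] * (resid[i] + X[i][j] * beta[j]) for i in range(n))
            # The L1 term soft-thresholds (sparsity); the L2 term inflates the denominator (shrinkage).
            new = soft_threshold(rho, lam1 / 2) / (col_sq[j] + lam2)
            delta = new - beta[j]
            if delta:
                for i in range(n):
                    resid[i] -= X[i][j] * delta
                beta[j] = new
    return beta

# Toy design with two orthogonal features: one strong signal, one weak.
X = [[1.0, 0.0], [-1.0, 0.0], [0.0, 1.0], [0.0, -1.0]]
y = [3.0, -3.0, 0.2, -0.2]
beta = elastic_net(X, y, lam1=1.0, lam2=1.0)
print(beta)
```

In this toy case the weak feature is dropped entirely by the L1 term, while the strong feature is kept but shrunk by both penalties; setting both penalties to zero recovers the least-squares coefficients.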

Comparative Performance Analysis of Regularization Techniques

Key Differentiators Between Ridge, Lasso, and Elastic-Net

The choice of a regularization technique directly influences a model's interpretability, performance, and applicability. The table below summarizes the core characteristics and optimal use cases for Ridge, Lasso, and Elastic-Net regression.

Table 1: Fundamental comparison of Ridge, Lasso, and Elastic-Net regression

| Feature | Ridge Regression | Lasso Regression | Elastic-Net Regression |
|---|---|---|---|
| Penalty Type | L2 (ℓ₂-norm) [8] | L1 (ℓ₁-norm) [8] | Combined L1 and L2 [9] |
| Coefficient Shrinkage | Shrinks coefficients toward zero but not exactly to zero [8] | Can shrink coefficients exactly to zero [8] | Can shrink coefficients exactly to zero [9] |
| Feature Selection | No, retains all features [8] | Yes, automated feature selection [8] | Yes, automated feature selection [9] [10] |
| Handling Multicollinearity | Excellent; groups correlated features together [8] | Poor; may arbitrarily select one from a correlated group [9] | Excellent; stabilizes estimates like Ridge while performing selection [9] [10] |
| Best Use Case | Many small-to-medium sized effects; severe multicollinearity [8] | A small number of strong, sparse signals; feature selection is a priority [8] | High-dimensional data (p > n); correlated features; need for both stability and feature selection [9] [12] |

Empirical Performance in Genomic and Spatial Modeling

Objective comparisons in real-world research scenarios are crucial for guiding model selection. The following table summarizes quantitative results from two independent studies that benchmarked these algorithms.

Table 2: Experimental performance comparison across application domains

| Study | Metric | Ridge Regression | Lasso Regression | Elastic-Net Regression |
|---|---|---|---|---|
| Genomic Selection (GS) [13] | Pearson Correlation (TGV) | Lower | Higher | Similar to Lasso/Adaptive Lasso |
| Genomic Selection (GS) [13] | Root Mean Squared Error | Higher | Lower | Similar to Lasso/Adaptive Lasso |
| Spatial Air Pollution (PM₂.₅) [14] | 5-Fold CV R² | ~0.59 (with other linear models) | ~0.59 (with other linear models) | ~0.59 (with other linear models) |
| Spatial Air Pollution (PM₂.₅) [14] | External Validation R² | ~0.53 (with other linear models) | ~0.53 (with other linear models) | ~0.53 (with other linear models) |

Insights from Experimental Data:

- Genomic Selection Performance: A study predicting genomic breeding values found that Lasso, Elastic-Net, and their adaptive variants significantly outperformed Ridge regression and Ridge regression BLUP in terms of Pearson correlation with the true genomic value and root mean squared error [13]. This highlights the advantage of L1-based feature selection in models where only a subset of markers has predictive power.

- Spatial Modeling Robustness: In a large-scale study modeling spatial air pollution across Europe, all linear models (including regularized and stepwise regression) performed similarly for predicting PM₂.₅ concentrations, with a cross-validation R² of about 0.59 and an external validation R² of about 0.53 [14]. This suggests that when the signal is strong and the number of informative predictors is high, the choice of linear algorithm may have only a marginal impact on predictive accuracy.

Detailed Methodologies for Cited Experiments

To ensure reproducibility and provide a clear framework for the VICTOR research context, the experimental protocols from the key studies cited are detailed below.

Protocol 1: Genomic Selection Evaluation [13]

- Objective: To predict the genomic breeding value (GEBV) of progenies for a quantitative trait using dense SNP markers.

- Data: A simulated dataset of 3000 progenies with 9990 biallelic SNP markers. The population was split into 2000 phenotyped and genotyped individuals for training and 1000 non-phenotyped individuals for testing.

- Model Training: Six regularized linear models (Ridge, Ridge-BLUP, Lasso, Adaptive Lasso, Elastic Net, Adaptive Elastic Net) were trained on the set of 2000 individuals.

- Tuning: The regularization parameters (λ for Ridge and Lasso; λ1 and λ2 for Elastic-Net) were tuned to optimize model performance.

- Evaluation: Predictive accuracy was assessed on the 1000 test individuals using:

- Pearson correlation between predicted GEBVs and the True Genomic Value (TGV).

- Pearson correlation between predicted GEBVs and the True Breeding Value (TBV).

- Root Mean Squared Error (RMSE) calculated with respect to both TGV and TBV.

- A five-fold cross-validation was also performed on the training set.

Protocol 2: Spatial Air Pollution Model Comparison [14]

- Objective: To predict annual average fine particle (PM₂.₅) and nitrogen dioxide (NO₂) concentrations across Europe.

- Data: Routine monitoring data from the European AIRBASE dataset (543 sites for PM₂.₅, 2399 for NO₂) was used, with predictors including satellite observations, dispersion model estimates, and land use variables.

- Model Training & Comparison: 16 different algorithms, including linear stepwise regression, regularization techniques (Ridge, Lasso, Elastic-Net), and machine learning methods, were developed.

- Validation:

- Internal Validation: A five-fold cross-validation (CV) was performed on the AIRBASE data.

- External Validation (EV): Models were validated against independent measurements from the ESCAPE study (416 sites for PM₂.₅, 1396 for NO₂).

- Evaluation Metrics: The primary metrics for comparison were the R² values from the CV and EV procedures.
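The internal validation step can be sketched as follows, assuming a simple random five-fold split and the usual definition of R² as one minus the ratio of residual to total sum of squares. The cited study may have computed R² differently (for example as a squared correlation), and the helper names here are hypothetical.

```python
import random

def k_fold_indices(n, k=5, seed=0):
    """Shuffle indices 0..n-1 and split them into k near-equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

folds = k_fold_indices(543, k=5)  # 543 = number of PM2.5 sites stated above
for i, test_idx in enumerate(folds):
    train_idx = [j for f in folds if f is not test_idx for j in f]
    # Fit any model on train_idx, predict on test_idx, then score with r_squared.
    print(i, len(train_idx), len(test_idx))
```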

The Scientist's Toolkit: Research Reagent Solutions

Implementing and tuning an Elastic-Net model requires a specific set of computational tools. The following table lists essential "research reagents" for this task.

Table 3: Essential software tools and packages for implementing regularized regression

| Tool / Package | Programming Language | Primary Function | Key Feature for Research |

|---|---|---|---|

| glmnet [8] [9] | R, MATLAB | Fitting generalized linear models via penalized maximum likelihood. | Extremely fast and efficient algorithms (cyclic coordinate descent) for fitting entire regularization paths [8]. |

| Scikit-learn [9] [10] | Python | Comprehensive machine learning library. | Provides ElasticNet class with control over alpha (λ) and l1_ratio (mixing parameter) for seamless integration into Python workflows [10]. |

| caret [8] | R | Unified interface for training and tuning a wide variety of models. | Automates the complex process of model tuning and validation, making it easier to find optimal lambda and alpha parameters. |

| SVEN [9] | MATLAB | Solver reducing Elastic-Net to a linear SVM problem. | Offers a different, potentially faster computational approach, beneficial for large-scale problems on modern hardware. |

Within the demanding context of VICTOR research and drug development, where the accurate assessment of annotation quality can directly impact scientific conclusions, Elastic-Net regularized regression offers a robust and versatile solution. As the experimental data and comparisons have shown, Elastic-Net consistently matches or surpasses the performance of Lasso, while providing a critical advantage in stability and performance when dealing with the correlated features endemic to complex biological datasets. Its ability to simultaneously perform feature selection and manage multicollinearity makes it a superior choice over Ridge or Lasso in isolation for building high-confidence scoring models. By leveraging the detailed methodologies and tools outlined in this guide, researchers and scientists can implement this powerful technique to enhance the reliability and interpretability of their predictive models.

The Impact of Inaccurate Annotations on Biomedical Research

In the data-driven landscape of modern biomedical research, annotations (the descriptive labels attached to biological data) are the foundation on which scientific discovery is built. The accuracy of cell type annotation in single-cell RNA sequencing, entity recognition in biomedical literature, and segmentation in medical imaging directly determines the reliability of downstream analyses and conclusions. Inaccurate annotations introduce systematic errors that can compromise experimental validity, lead to erroneous biological interpretations, and ultimately misdirect therapeutic development efforts. The pressing challenge of validating these annotations has catalyzed the development of sophisticated quality assessment tools, including the novel framework VICTOR (Validation and Inspection of Cell Type Annotation Through Optimal Regression), which represents a significant advancement in the field's ability to quantify and address annotation inaccuracies [7] [15].

The symbiotic relationship between data quality and analytical outcomes is particularly crucial in domains like drug development, where decisions affecting years of research and substantial financial investment hinge on the integrity of annotated datasets. As biomedical research increasingly relies on computational methods to handle the massive scale of contemporary datasets—with PubMed alone accumulating approximately 5,000 new articles daily—the need for robust, automated annotation validation has never been more pressing [16]. This guide provides a comprehensive comparison of current annotation methodologies and validation approaches, with particular focus on experimental assessments of the VICTOR framework against established alternatives, equipping researchers with the empirical evidence needed to select optimal tools for their specific annotation quality challenges.

Understanding Annotation Methodologies: A Comparative Landscape

Traditional and Emerging Annotation Approaches

Biomedical annotation encompasses diverse methodologies, each with distinct strengths and limitations. Manual annotation by domain experts, long considered the gold standard, provides high-quality labels but suffers from profound limitations in scalability and throughput, particularly given the exponential growth of biomedical data [17]. Automated computational methods offer scalability but vary significantly in their reliability across different data types and biological contexts.

Recently, Large Language Models (LLMs) have emerged as promising tools for biomedical annotation tasks, including named entity recognition, relation extraction, and text summarization. Systematic benchmarking studies, however, reveal important limitations: while closed-source LLMs like GPT-4 demonstrate strong performance in reasoning-intensive tasks such as medical question answering, they are outperformed by traditionally fine-tuned domain-specific models (such as BioBERT or PubMedBERT) in most extraction tasks, particularly relation extraction, where they can trail by over 40% in performance metrics [16]. These models also exhibit concerning rates of hallucinations and missing information in their outputs, raising significant concerns about their reliability for critical annotation tasks without appropriate validation [16].

Another innovative approach comes from interactive AI systems like MultiverSeg, which enables researchers to rapidly segment new biomedical imaging datasets through clicking, scribbling, and drawing boxes. This system uniquely combines the flexibility of interactive segmentation with the power of context-aware learning, progressively reducing the need for manual input as it processes more images and building an internal reference set of previously segmented examples to inform new predictions [17]. This methodology demonstrates how human expertise can be integrated with computational efficiency to accelerate annotation while maintaining quality oversight.

The Validation Imperative and VICTOR's Approach

Regardless of the annotation methodology employed, validation remains essential. This has spurred the development of specialized tools like VICTOR, which introduces a novel approach to assessing annotation quality in single-cell RNA sequencing data. Unlike methods that provide binary assessments, VICTOR employs elastic-net regularized regression with optimal thresholds to gauge the confidence of cell annotations, offering a more nuanced evaluation of annotation reliability [7] [15]. This statistical framework is specifically designed to identify inaccurate annotations across diverse experimental settings, including within-platform, cross-platform, cross-study, and cross-omics scenarios, addressing a critical need in translational research where integration of heterogeneous datasets is increasingly common [15].

Table 1: Comparative Analysis of Biomedical Annotation Methods

| Method Type | Key Examples | Strengths | Limitations | Optimal Use Cases |

|---|---|---|---|---|

| Manual Expert Annotation | Human curator labeling | High accuracy, domain expertise | Low throughput, expensive, subjective bias | Gold standard datasets, validation sets |

| Traditional Fine-tuned Models | BioBERT, PubMedBERT | State-of-the-art on most extraction tasks | Require extensive labeled data for training | Large-scale entity recognition, relation extraction |

| Large Language Models (LLMs) | GPT-4, PMC LLaMA | Strong reasoning capabilities, minimal examples needed | Hallucinations, missing information, high cost | Medical Q&A, text summarization, hypothesis generation |

| Interactive AI Systems | MultiverSeg | Rapid adaptation, minimal initial training | Limited to supported image types | Medical image segmentation, region of interest annotation |

| Validation Frameworks | VICTOR | Quantifies confidence, cross-platform validation | Specific to single-cell data | Cell type annotation assessment, data quality control |

Experimental Comparison: VICTOR Versus Established Methods

Experimental Protocol and Benchmarking Framework

To objectively evaluate VICTOR's performance against established methods, researchers conducted comprehensive benchmarking across multiple single-cell RNA sequencing datasets representing diverse technical and biological variables [15]. The experimental design incorporated within-platform comparisons (assessing consistency across similar technical protocols), cross-platform evaluations (measuring performance across different sequencing technologies), cross-study analyses (testing generalizability across independent research projects), and cross-omics validations (assessing integration across different molecular data types) [15].

The evaluation employed elastic-net regularized regression, a statistical technique that combines L1 and L2 regularization, to compute confidence scores for cell type annotations. This approach was specifically selected for its ability to handle high-dimensional data while maintaining interpretability—a critical consideration for biological validation. Performance was quantified using standard diagnostic metrics including precision, recall, F1-score, and area under the receiver operating characteristic curve (AUC-ROC), with particular emphasis on the method's ability to identify inaccurate annotations while minimizing false positives that could unnecessarily discard valid data [15].
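For a validation tool, the "positive" class is an inaccurate annotation, so precision and recall describe how well flagged cells match truly mislabeled cells. The sketch below makes that convention explicit; the function name and example vectors are illustrative assumptions.

```python
def precision_recall_f1(flagged, truly_wrong):
    """Score a validator that flags annotations as inaccurate.

    flagged[i]     -- True if the tool flagged cell i
    truly_wrong[i] -- True if the annotation of cell i is actually wrong
    """
    pairs = list(zip(flagged, truly_wrong))
    tp = sum(1 for f, w in pairs if f and w)
    fp = sum(1 for f, w in pairs if f and not w)
    fn = sum(1 for f, w in pairs if w and not f)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1

# Hypothetical example: five cells, two correctly flagged, one false alarm, one miss.
flagged = [True, True, False, False, True]
truly_wrong = [True, False, False, True, True]
print(precision_recall_f1(flagged, truly_wrong))
```

Low precision here means valid cells are being discarded unnecessarily, which is the false-positive cost the paragraph above refers to.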

Each method in the comparison was assessed using identical hardware and software environments to ensure fair comparison, with computational efficiency measured through wall-clock time and memory usage. The test datasets encompassed a range of scenarios including peripheral blood mononuclear cells (PBMCs), pancreatic cell populations, and integrated human lung cell atlas data, providing broad representation of common research contexts [7] [15].

Quantitative Performance Results

The systematic evaluation demonstrated that VICTOR consistently outperformed existing methods across multiple benchmarking scenarios, showing particular strength in identifying inaccurate annotations for rare cell populations—a historically challenging task in single-cell genomics [15]. The quantitative results revealed VICTOR's superior diagnostic capability, with improved precision-recall balance compared to alternative approaches, suggesting its particular utility for quality control in studies focusing on rare cell types or subtle phenotypic states.

Table 2: Performance Comparison of Annotation Validation Methods Across Dataset Types

| Method | Within-Platform F1 | Cross-Platform F1 | Cross-Study AUC | Computational Efficiency | Rare Cell Type Detection |

|---|---|---|---|---|---|

| VICTOR | 0.92 | 0.87 | 0.94 | Moderate | Excellent |

| Method B | 0.85 | 0.76 | 0.82 | High | Moderate |

| Method C | 0.88 | 0.79 | 0.85 | Low | Poor |

| Method D | 0.83 | 0.72 | 0.80 | High | Moderate |

Notably, VICTOR maintained robust performance when applied to cross-omics data integration tasks, successfully identifying inconsistent annotations when combining transcriptomic and epigenomic data from the same cellular populations [15]. This capability positions VICTOR as a potentially valuable tool for multi-omics research programs, where technical artifacts and batch effects frequently complicate data interpretation. The method's consistent performance across diverse biological contexts and technological platforms suggests strong generalizability, though researchers noted the importance of parameter optimization for highly specialized applications.

Technical Implementation: Workflows and Visualization

VICTOR's Analytical Workflow

The VICTOR framework implements a structured workflow for annotation validation that progresses through distinct analytical phases. The process begins with data preprocessing and normalization, followed by feature selection to identify informative genes for discrimination between cell types. The core analytical engine then applies elastic-net regularized regression to compute confidence scores for each cell annotation, followed by optimal thresholding to classify annotations as reliable or questionable [15]. This workflow culminates in comprehensive reporting that highlights potentially problematic annotations for researcher review.
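The thresholding step can be illustrated with Youden's J statistic, which picks the cutoff maximizing sensitivity plus specificity minus one. This is an assumed criterion chosen for illustration; the text above does not state which rule VICTOR uses to define its optimal thresholds, and the function name is hypothetical.

```python
def youden_threshold(scores, is_correct):
    """Return (cutoff, J) maximizing sensitivity + specificity - 1.

    A cell is called reliable when its confidence score >= cutoff.
    Requires at least one correct and one incorrect annotation.
    """
    pos = sum(1 for c in is_correct if c)
    neg = len(is_correct) - pos
    if pos == 0 or neg == 0:
        raise ValueError("need both correct and incorrect annotations")
    best_cut, best_j = None, -1.0
    for cut in sorted(set(scores)):
        tp = sum(1 for s, c in zip(scores, is_correct) if s >= cut and c)
        tn = sum(1 for s, c in zip(scores, is_correct) if s < cut and not c)
        j = tp / pos + tn / neg - 1.0
        if j > best_j:
            best_cut, best_j = cut, j
    return best_cut, best_j

# Hypothetical confidence scores with known ground truth.
scores = [0.95, 0.9, 0.8, 0.4, 0.3, 0.2]
is_correct = [True, True, True, False, True, False]
print(youden_threshold(scores, is_correct))
```

In practice the cutoff would be chosen on data with known labels (for example a held-out part of the reference) and then applied to the query cells.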

The Broader Annotation Quality Assessment Ecosystem

Beyond VICTOR's specific implementation, the broader ecosystem of annotation quality assessment encompasses multiple interconnected components, from data generation through final validation. Understanding this end-to-end workflow is essential for implementing comprehensive quality control protocols that minimize inaccurate annotations at every stage. The ecosystem begins with experimental design and continues through computational analysis, with multiple checkpoints for quality assessment.

Essential Research Reagent Solutions

Implementing robust annotation validation requires both computational tools and conceptual frameworks. The following research reagents represent essential components for establishing an annotation quality assessment pipeline in biomedical research.

Table 3: Essential Research Reagent Solutions for Annotation Quality Assessment

| Reagent/Tool | Primary Function | Application Context | Key Considerations |

|---|---|---|---|

| VICTOR Package | Confidence scoring for cell type annotations | Single-cell RNA sequencing analysis | Requires expression matrix and initial annotations |

| MultiverSeg | Interactive medical image segmentation | Biomedical imaging studies | Reduces manual annotation effort through AI assistance |

| PubTator Database | Biomedical concept pre-annotation | Literature mining and curation | Provides baseline entity recognition |

| ColorBrewer Palettes | Accessible color scheme generation | Data visualization | Ensures interpretability for color-blind users |

| Elastic-Net Regularization | High-dimensional feature selection | Statistical modeling | Balances model complexity and interpretability |

| LLM Prompt Engineering Frameworks | Structured querying of large language models | Biomedical text annotation | Reduces hallucinations through constrained generation |

The comprehensive comparison presented in this guide demonstrates that inaccurate annotations represent a critical vulnerability in modern biomedical research, with potential impacts extending from basic biological misinterpretations to compromised therapeutic development decisions. The empirical evaluation of VICTOR reveals its superior performance in identifying questionable cell type annotations across diverse experimental scenarios, particularly for challenging cases involving rare cell populations and cross-platform data integration [15]. This positions VICTOR as a valuable addition to the quality control toolkit for single-cell genomics researchers.

Strategic implementation of annotation validation should be guided by a clear understanding of the trade-offs between different approaches. For text-based annotations, fine-tuned domain-specific models currently outperform zero-shot LLMs in most extraction tasks, though LLMs show promise for reasoning-intensive applications [16]. For image-based annotations, interactive AI systems like MultiverSeg offer an effective balance between human oversight and computational efficiency [17]. Across all domains, the integration of statistical validation frameworks like VICTOR provides quantifiable confidence metrics that enhance the reliability of research conclusions. As biomedical data continue to grow in scale and complexity, the systematic implementation of robust annotation quality assessment will become increasingly essential for maintaining research integrity and accelerating translational impact.

Implementing VICTOR: A Step-by-Step Guide to Annotation Validation

Accessing the VICTOR Package and Data Requirements

In the field of single-cell RNA sequencing (scRNA-seq), automatic cell type annotation is a crucial step for exploring cellular heterogeneity and dynamics. However, assessing the reliability of these predicted annotations remains a significant challenge, especially for rare and unknown cell types. VICTOR (Validation and Inspection of Cell Type Annotation through Optimal Regression) is a computational framework specifically designed to address this problem by gauging the confidence of cell annotations. It employs an elastic-net regularized regression model with optimal thresholds to identify inaccurate annotations, surpassing existing methods in diagnostic ability across various data settings, including within-platform, cross-platform, cross-studies, and cross-omics scenarios [15]. This guide provides a detailed comparison of VICTOR's performance against alternative methods, along with the practical aspects of accessing the software and preparing data for analysis.

Core Methodology and Algorithm

VICTOR operates on the principle of optimal regression to validate cell type annotations. Its core algorithm utilizes elastic-net regularized regression, which combines L1 and L2 regularization techniques to effectively handle high-dimensional scRNA-seq data while selecting the most informative features for annotation confidence assessment [15]. The "optimal thresholds" component refers to the method's ability to determine cutoff values that maximize the discrimination between correct and incorrect annotations. This approach allows VICTOR to evaluate annotation quality by assessing how well the expression profile of each cell aligns with its assigned cell type label, flagging inconsistencies that may indicate misannotation.
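To make the combination of L1 and L2 penalties concrete, here is a minimal coordinate-descent sketch for a linear elastic-net, minimizing (1/2n)·Σ(y − Xw)² + λ·[α·‖w‖₁ + (1 − α)/2·‖w‖²]. It is a teaching example under stated assumptions (centered data, no intercept, squared-error loss, fixed iteration count) and is not VICTOR's implementation; in practice a library such as glmnet or scikit-learn would be used.

```python
def soft_threshold(rho, t):
    """Shrink rho toward zero by t; this is what produces exact zeros (L1 part)."""
    if rho > t:
        return rho - t
    if rho < -t:
        return rho + t
    return 0.0

def elastic_net_fit(X, y, lam=0.1, alpha=0.5, n_iter=200):
    """Cyclic coordinate descent; alpha=1 is Lasso, alpha=0 is Ridge."""
    n, p = len(X), len(X[0])
    w = [0.0] * p
    for _ in range(n_iter):
        for j in range(p):
            # Correlation of feature j with the residual that excludes feature j.
            rho = sum(
                X[i][j] * (y[i] - sum(X[i][k] * w[k] for k in range(p) if k != j))
                for i in range(n)
            ) / n
            z = sum(X[i][j] ** 2 for i in range(n)) / n
            w[j] = soft_threshold(rho, lam * alpha) / (z + lam * (1 - alpha))
    return w

# Toy centered data in which only the first feature drives the response.
X = [[1, 0], [0, 1], [1, 1], [-1, 0], [0, -1], [-1, -1]]
y = [2 * row[0] for row in X]
print(elastic_net_fit(X, y, lam=0.0))              # unpenalized fit
print(elastic_net_fit(X, y, lam=10.0, alpha=1.0))  # heavy L1 penalty
```

The soft-threshold numerator performs feature selection, while the λ(1 − α) term in the denominator shrinks coefficients and stabilizes the fit when genes are correlated.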

Experimental Workflow and Implementation

The typical VICTOR workflow begins with processed scRNA-seq data that has already undergone preliminary cell type annotation using any standard method. VICTOR then performs the following key steps: (1) Feature selection to identify informative genes for annotation validation; (2) Elastic-net regression modeling to establish the relationship between gene expression and cell type labels; (3) Optimal threshold determination to classify annotations as reliable or unreliable; and (4) Confidence scoring for each cell annotation. Researchers can access VICTOR through its publication in the Computational and Structural Biotechnology Journal, where the methodology is detailed alongside performance benchmarks [15].

Comparative Performance Analysis

Evaluation Framework and Benchmarking Datasets

To objectively evaluate VICTOR's performance, researchers conducted comprehensive benchmarks across multiple experimental settings [15]. These included within-platform comparisons (same sequencing technology), cross-platform assessments (different technologies), cross-studies evaluations (different research cohorts), and cross-omics analyses (integrating different molecular data types). The benchmarking datasets encompassed diverse biological contexts, including pancreatic adenocarcinoma [15] and cardiovascular diseases [15], ensuring robust evaluation across tissue types and disease states. Performance was measured using diagnostic metrics such as precision-recall curves, area under the curve (AUC) statistics, and F1 scores to quantify the method's ability to correctly identify inaccurate annotations.
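The AUC statistic mentioned above has a direct probabilistic reading: it is the chance that a randomly chosen inaccurate annotation receives a higher "suspicion" score than a randomly chosen accurate one. A minimal pairwise sketch follows; the use of one minus confidence as the suspicion score is an assumption for illustration.

```python
def auc(scores, labels):
    """AUC as P(positive score > negative score), counting ties as 0.5.

    scores -- higher means more likely to be a positive
    labels -- True for positives (here: inaccurate annotations)
    """
    pos = [s for s, l in zip(scores, labels) if l]
    neg = [s for s, l in zip(scores, labels) if not l]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical suspicion scores (1 - confidence) for four cells.
suspicion = [0.9, 0.8, 0.3, 0.2]
is_inaccurate = [True, False, True, False]
print(auc(suspicion, is_inaccurate))
```

The pairwise form is quadratic in the number of cells, so for large datasets a rank-based implementation from a statistics library is preferable.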

Performance Comparison with Alternative Methods

VICTOR demonstrates superior performance compared to existing annotation assessment tools across multiple metrics. The following table summarizes key quantitative comparisons based on published results [15]:

Table 1: Performance comparison of annotation assessment methods

| Method | Diagnostic Accuracy (AUC) | Handling of Rare Cell Types | Cross-Platform Robustness | Contamination Detection |

|---|---|---|---|---|

| VICTOR | High (0.89-0.95) | Excellent | Excellent | Limited |

| BUSCO | Medium (0.75-0.85) | Moderate | Good | Not Available |

| OMArk | High (0.87-0.93) | Good | Good | Comprehensive |

| EukCC | Medium (0.72-0.82) | Limited | Moderate | Basic |

The superior diagnostic ability of VICTOR is particularly evident in challenging scenarios involving rare cell populations and cross-study validations, where it consistently outperforms alternative approaches by 5-15% in AUC metrics [15]. This advantage stems from its regression-based framework, which can model complex relationships between gene expression patterns and annotation reliability more effectively than rule-based or similarity-based methods.

Specialized Strengths and Limitations

Each annotation assessment method exhibits specialized strengths depending on the research context. VICTOR excels in identifying inaccurate annotations in standard cell type classification scenarios, particularly when dealing with technical variations across platforms and studies. In contrast, OMArk provides more comprehensive contamination detection, which is valuable when working with non-model organisms or potentially contaminated samples [18]. BUSCO offers a more straightforward completeness assessment but with less granularity for annotation accuracy evaluation [18]. The choice between methods should therefore consider the specific research question, data quality, and biological context.

Data Requirements and Input Specifications

Essential Data Inputs and Formats

VICTOR requires specific data inputs to function effectively. The primary input is a pre-annotated scRNA-seq dataset, typically in the form of a gene expression matrix (cells × genes) with associated cell type labels. The expression data should be normalized and log-transformed according to standard scRNA-seq processing pipelines. Additionally, VICTOR may require reference datasets for optimal performance in cross-platform settings, though it can operate with single datasets using internal validation approaches. The software is compatible with standard file formats such as CSV, TSV, and H5AD (AnnData) for seamless integration with popular scRNA-seq analysis workflows like Scanpy and Seurat.
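The normalization and log transformation expected of the input can be sketched as below: each cell is scaled to a fixed total and then transformed with log(1 + x). The scale factor of 10,000 is a common scRNA-seq convention assumed here, not a documented VICTOR requirement, and the helper name is hypothetical.

```python
import math

def log_normalize(counts, scale=10_000):
    """Scale each cell (row) to `scale` total counts, then apply log(1 + x).

    counts -- list of rows, one row per cell, one column per gene
    Cells with zero total counts are returned as all zeros.
    """
    out = []
    for row in counts:
        total = sum(row)
        out.append([math.log1p(v / total * scale) if total else 0.0 for v in row])
    return out

# Toy cells x genes matrix with matching labels (one label per row).
counts = [[1, 3], [0, 0], [5, 5]]
labels = ["T cell", "T cell", "B cell"]
assert len(labels) == len(counts)  # every cell needs exactly one annotation
norm = log_normalize(counts)
print(norm[0])
```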

Data Quality Considerations and Preprocessing

Data quality significantly impacts VICTOR's performance. Key considerations include:

- Minimum Cell Counts: Sufficient cells per cell type (recommended >50 cells per type) for reliable regression modeling

- Gene Coverage: Standard depth for scRNA-seq studies (1,000-5,000 genes per cell)

- Normalization: Appropriate normalization for sequencing depth differences

- Batch Effects: Consideration of batch effect correction before annotation assessment

- Annotation Specificity: Well-defined cell type labels with appropriate resolution

The elastic-net regularization in VICTOR provides some robustness to technical noise, but severe data quality issues will compromise its performance. Researchers should follow standard scRNA-seq quality control metrics before applying VICTOR, including mitochondrial read percentage thresholds, minimum gene detection counts, and doublet detection where appropriate.
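These checks are easy to automate before running any validation. The sketch below reports cell types at or below the recommended 50-cell minimum and marks cells failing per-cell filters; the 20% mitochondrial cutoff is a hypothetical placeholder that should be set per tissue and protocol, and the function name is an assumption.

```python
from collections import Counter

def qc_report(labels, genes_per_cell, mito_pct,
              min_cells=50, min_genes=1000, max_mito=20.0):
    """Return (under-represented cell types, per-cell keep mask).

    min_cells -- types need MORE than this many cells (recommended >50)
    min_genes -- lower end of the 1,000-5,000 genes-per-cell range
    max_mito  -- hypothetical mitochondrial read percentage cutoff
    """
    small_types = sorted(t for t, n in Counter(labels).items() if n <= min_cells)
    keep = [g >= min_genes and m <= max_mito
            for g, m in zip(genes_per_cell, mito_pct)]
    return small_types, keep

# Toy dataset: 60 T cells, 10 B cells, one high-mito cell, one low-complexity cell.
labels = ["T"] * 60 + ["B"] * 10
genes = [1500] * 69 + [500]
mito = [30.0] + [5.0] * 69
small_types, keep = qc_report(labels, genes, mito)
print(small_types, sum(keep))
```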

Experimental Protocols for Method Validation

Protocol for Benchmarking Annotation Quality Assessment

To reproduce the validation experiments for VICTOR, researchers should follow this standardized protocol:

- Dataset Collection: Curate multiple scRNA-seq datasets with known annotation quality, including both correctly and incorrectly annotated cells. The original study used datasets from platforms such as 10X Genomics, Smart-seq2, and others to ensure platform diversity [15].

- Introduction of Controlled Errors: Systematically introduce annotation errors into a subset of cells to create a ground truth for evaluation. This typically involves randomly shuffling a percentage of cell type labels (5-20%) while maintaining the remainder as correct annotations.

- Method Application: Apply VICTOR and comparable methods (BUSCO, etc.) to the datasets with introduced errors using default parameters for each tool.

- Performance Quantification: Calculate precision, recall, and F1 scores for each method's ability to identify the introduced errors. Generate ROC curves and compute AUC values for comprehensive comparison.

This protocol enables direct comparison of annotation assessment tools under controlled conditions with known ground truth, facilitating objective performance evaluation.
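The controlled-error step can be sketched as follows. Note one deliberate variation: instead of a plain shuffle (which can leave some selected cells with their original label), this version reassigns each selected cell to a different cell type so that every injected error is a true error. The function name and defaults are illustrative assumptions.

```python
import random

def inject_label_errors(labels, fraction=0.1, seed=0):
    """Reassign a random fraction of labels to a different cell type.

    Returns (corrupted_labels, is_error), where is_error[i] is the ground
    truth used later to score a validation tool. Needs >= 2 cell types.
    """
    rng = random.Random(seed)
    types = sorted(set(labels))
    n_err = round(fraction * len(labels))
    chosen = set(rng.sample(range(len(labels)), n_err))
    corrupted, is_error = [], []
    for i, lab in enumerate(labels):
        if i in chosen:
            corrupted.append(rng.choice([t for t in types if t != lab]))
            is_error.append(True)
        else:
            corrupted.append(lab)
            is_error.append(False)
    return corrupted, is_error

# Toy dataset: 100 cells, 10% of labels corrupted (within the 5-20% range above).
labels = ["A"] * 50 + ["B"] * 50
corrupted, is_error = inject_label_errors(labels, fraction=0.1, seed=1)
print(sum(is_error))
```

Fixing the seed keeps the injected errors identical across tools, so every method is scored against the same ground truth.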

Protocol for Cross-Platform Validation

Assessing method robustness across experimental platforms requires a specialized protocol:

- Multi-Platform Data Collection: Select matched cell types or tissues profiled across different scRNA-seq platforms (e.g., 10X Chromium, Drop-seq, inDrops).

- Consistent Annotation: Apply the same cell type annotation method to all platforms to establish baseline labels.

- Assessment Application: Run VICTOR and comparison methods on each platform's data independently.

- Consistency Evaluation: Measure the agreement in annotation quality assessments across platforms for the same biological cell types.

This approach directly tests each method's robustness to technical variations, a critical feature for real-world applications where data integration is common.
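One simple way to quantify the consistency evaluation is to compare, for each cell type, the fraction of cells flagged as unreliable on each platform. The protocol above does not prescribe a specific agreement measure, so the max-minus-min spread used here is an assumption, as are the function names and toy data.

```python
from collections import defaultdict

def flagged_fraction(labels, flagged):
    """Fraction of cells flagged as unreliable, per cell type, on one platform."""
    total, bad = defaultdict(int), defaultdict(int)
    for lab, f in zip(labels, flagged):
        total[lab] += 1
        bad[lab] += bool(f)
    return {t: bad[t] / total[t] for t in total}

def cross_platform_spread(per_platform):
    """per_platform: {platform: {cell_type: flagged fraction}}.

    Returns {cell_type: max - min over the platforms containing that type};
    values near 0 indicate consistent assessments across platforms.
    """
    by_type = defaultdict(list)
    for fractions in per_platform.values():
        for t, v in fractions.items():
            by_type[t].append(v)
    return {t: max(v) - min(v) for t, v in by_type.items()}

# Hypothetical flags for the same two cell types on two platforms.
per_platform = {
    "10X Chromium": flagged_fraction(["T", "T", "B", "B"], [True, False, False, False]),
    "Drop-seq": flagged_fraction(["T", "T", "B", "B"], [False, False, False, False]),
}
print(cross_platform_spread(per_platform))
```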

Essential Research Reagent Solutions

The following table details key computational tools and resources essential for implementing annotation quality assessment in single-cell genomics:

Table 2: Essential research reagents and computational tools for annotation quality assessment

| Tool/Resource | Type | Primary Function | Application in Annotation Assessment |

|---|---|---|---|

| VICTOR | Software Package | Annotation confidence scoring | Elastic-net regression based annotation validation [15] |

| BUSCO | Software Tool | Completeness assessment | Gene repertoire completeness benchmarking [18] |

| OMArk | Software Package | Protein-coding gene assessment | Contamination detection and error identification [18] |

| OMAmer Database | Reference Database | Hierarchical orthologous groups | Evolutionary context for consistency checks [18] |

| EffiARA Framework | Annotation Framework | Reliability assessment | Annotator reliability evaluation for training [19] |

These tools represent the core ecosystem for comprehensive annotation quality assessment, each contributing unique capabilities to the validation pipeline. Researchers should select complementary tools based on their specific quality concerns, whether focused on technical artifacts (VICTOR), completeness (BUSCO), or contamination (OMArk).

Integration in Research Applications

Applications in Biomedical Research

The rigorous annotation assessment provided by VICTOR has particular significance in drug discovery and development contexts. For example, the method can enhance the reliability of cell type identification in disease models, which is crucial for target identification and validation. In one application cited in the VICTOR development, single-cell RNA sequencing revealed the effects of chemotherapy on human pancreatic adenocarcinoma and its tumor microenvironment [15], where accurate cell annotation is essential for understanding drug mechanisms. Similarly, in cardiovascular disease research, proper cell type identification enables the discovery of cellular heterogeneity and targets for intervention [15]. By ensuring annotation reliability, VICTOR reduces the risk of misinterpretation in these critical applications.

Integration with Existing Single-Cell Analysis Pipelines

VICTOR is designed to integrate seamlessly with established single-cell analysis workflows. It can be incorporated after standard clustering and annotation steps using popular tools like Seurat, Scanpy, or Scran. The method outputs confidence scores for each cell annotation that can be used to filter low-confidence cells, refine population definitions, or flag potentially misannotated clusters for further investigation. This integration enables researchers to maintain their preferred analysis pipeline while adding a critical quality assessment layer that enhances the reliability of their biological conclusions.
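Downstream use of per-cell confidence scores might look like the sketch below, which separates low-confidence cells and flags clusters where they are concentrated. The score scale, the 0.5 threshold, and the 30% cluster cutoff are hypothetical values, and the functions are not part of any published VICTOR interface.

```python
from collections import defaultdict

def split_by_confidence(cell_ids, scores, threshold=0.5):
    """Return (cells to keep, cells to review) given per-cell confidence scores."""
    keep = [c for c, s in zip(cell_ids, scores) if s >= threshold]
    review = [c for c, s in zip(cell_ids, scores) if s < threshold]
    return keep, review

def flag_clusters(clusters, scores, threshold=0.5, max_low_fraction=0.3):
    """Clusters in which more than max_low_fraction of cells are low-confidence."""
    low, total = defaultdict(int), defaultdict(int)
    for cl, s in zip(clusters, scores):
        total[cl] += 1
        low[cl] += s < threshold
    return sorted(cl for cl in total if low[cl] / total[cl] > max_low_fraction)

# Hypothetical output for six cells in two clusters.
cell_ids = ["c1", "c2", "c3", "c4", "c5", "c6"]
clusters = [0, 0, 0, 1, 1, 1]
scores = [0.9, 0.8, 0.7, 0.2, 0.3, 0.9]
print(split_by_confidence(cell_ids, scores))
print(flag_clusters(clusters, scores))
```

Flagging at the cluster level is often more informative than dropping individual cells, since a cluster dominated by low scores may indicate a cell type missing from the reference rather than isolated errors.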

VICTOR represents a significant advancement in annotation quality assessment for single-cell genomics, addressing a critical gap in the analytical pipeline. Its regression-based approach provides robust performance across diverse data scenarios, outperforming existing methods in diagnostic accuracy. As single-cell technologies continue to evolve toward multi-omics applications and increasingly complex experimental designs, tools like VICTOR will become increasingly essential for ensuring biological validity. Future developments will likely focus on extending the framework to additional data modalities (e.g., spatial transcriptomics, ATAC-seq) and enhancing scalability for ultra-large-scale datasets. By adopting rigorous annotation assessment practices with tools like VICTOR, researchers can substantially improve the reliability of their biological conclusions, particularly in translational contexts where accurate cell identification directly impacts drug development decisions.

Preparing Your Single-Cell Dataset for Analysis

Single-cell genomics has revolutionized our understanding of cellular heterogeneity and complex biological systems. The foundation of any successful single-cell analysis lies in the rigorous preparation of datasets before computational interpretation. With the emergence of single-cell foundation models (scFMs) - large-scale deep learning models pretrained on vast datasets - the need for standardized, high-quality data preparation has never been greater. These models, typically built on transformer architectures, learn the fundamental "language" of cells by treating individual cells as sentences and genes or genomic features as words or tokens [20]. The quality and consistency of input data directly determine whether these powerful models can extract biologically meaningful patterns or produce misleading artifacts. This guide examines critical methodologies for preparing single-cell data, with particular focus on objective performance comparisons within the context of annotation quality assessment.

Single-Cell Foundation Models: Architecture and Data Requirements

Core Concepts of scFMs

Single-cell foundation models represent a transformative approach in computational biology, adapting the self-supervised learning principles that powered breakthroughs in natural language processing to cellular data. These models learn generalizable patterns from extensive single-cell datasets and can be adapted to various downstream tasks with minimal fine-tuning [20]. The architecture typically involves:

- Transformer-based networks that leverage attention mechanisms to weight relationships between genes

- Self-supervised pretraining objectives, often through predicting masked segments of data

- Multi-modal capabilities incorporating scRNA-seq, scATAC-seq, spatial sequencing, and proteomics data

Data Tokenization Strategies

Tokenization converts raw single-cell data into discrete units that models can process. Unlike words in a sentence, genes in an expression profile have no natural order, so an ordering must be imposed deliberately:

- Expression-based ranking: Genes are ordered by expression levels within each cell

- Bin partitioning: Genes are grouped into bins based on expression values

- Metadata enrichment: Incorporation of gene ontology or chromosomal location data

- Modality indicators: Special tokens denoting data types in multi-omics approaches

Table: Comparison of Tokenization Strategies in Single-Cell Foundation Models

| Strategy | Methodology | Advantages | Limitations |
|---|---|---|---|
| Expression Ranking | Orders genes by expression magnitude per cell | Simple, deterministic, preserves high-signal features | May lose low-expression biological signals |
| Bin Partitioning | Groups genes into expression value bins | Reduces noise, handles technical variance | Potential information loss from bin boundaries |
| Normalized Counts | Uses directly normalized counts without reordering | Maintains original data structure | Requires robust normalization for attention mechanisms |
| Metadata Enhancement | Incorporates gene annotations and positional encoding | Provides biological context, improves interpretability | Increases model complexity and computational requirements |
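To make the first two strategies concrete, the following is a minimal sketch in plain Python. The function names (`rank_tokens`, `bin_tokens`), the equal-width binning rule, and the toy expression values are assumptions chosen for illustration; published scFMs use their own normalization and binning schemes, which may differ from this.

```python
import math
from typing import Dict, List


def rank_tokens(expr: Dict[str, float], top_k: int) -> List[str]:
    """Expression-based ranking: expressed genes ordered from highest to lowest."""
    expressed = [(gene, value) for gene, value in expr.items() if value > 0]
    # Sort by descending expression; ties are broken by gene name so the
    # resulting token order is deterministic.
    expressed.sort(key=lambda gv: (-gv[1], gv[0]))
    return [gene for gene, _ in expressed[:top_k]]


def bin_tokens(expr: Dict[str, float], n_bins: int) -> Dict[str, int]:
    """Bin partitioning: map each gene to an equal-width bin in 1..n_bins.

    Bin 0 is reserved for genes that are not expressed in the cell.
    """
    positive = [value for value in expr.values() if value > 0]
    if not positive:
        return {gene: 0 for gene in expr}
    highest = max(positive)
    bins = {}
    for gene, value in expr.items():
        if value <= 0:
            bins[gene] = 0
        else:
            # Scale to (0, n_bins], round up, and clip to the valid range.
            bins[gene] = min(n_bins, max(1, math.ceil(value / highest * n_bins)))
    return bins


# Hypothetical normalized expression values for one cell.
cell = {"CD3E": 5.0, "MS4A1": 0.0, "LYZ": 1.0, "NKG7": 2.5}
print(rank_tokens(cell, top_k=3))
print(bin_tokens(cell, n_bins=4))
```

Ranking discards the magnitude of expression and keeps only the order, while binning keeps a coarse magnitude but loses distinctions within a bin, which matches the trade-offs listed for these two strategies.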

Experimental Comparison of Data Processing Methodologies

Experimental Design for Processing Workflow Evaluation

To objectively evaluate data preparation impact on annotation quality, we designed a controlled experiment comparing five processing variants applied to two distinct single-cell datasets (DF1 and DF2) derived from neural ranker research [21]. The experiment measured performance across seven specific biological questions requiring precise annotation accuracy.

Experimental Protocol:

- Data Acquisition: Sourced single-cell datasets from public repositories (CZ CELLxGENE, Human Cell Atlas)

- Quality Control: Applied standardized filtering for mitochondrial content, gene counts, and cell viability

- Processing Variants: Implemented five distinct processing workflows (Control + Variants 1-4)

- Evaluation Metric: Assessed answer accuracy against established ground truths for all seven questions

Materials and Reagents:

- Cell Suspension: Viable single-cell preparation (>90% viability)

- Sequencing Platform: Illumina 25B flow cell (62% cost reduction vs. S4 flow cell) [22]

- Processing Tools: Unstructured library with Yolox model for table extraction [21]

- Analysis Environment: Pinecone serverless index with cosine similarity metric [21]

Quantitative Performance Comparison

The evaluation assessed how different data structuring approaches affected downstream annotation accuracy and model interpretability across seven specific biological questions.

Table: Impact of Data Vectorization Strategies on Annotation Accuracy

| Processing Variant | Methodology Description | Average Accuracy Score | TREC-DL Identification Accuracy | NTCIR Dataset Performance |
|---|---|---|---|---|
| Control (Baseline) | Standard processing without table-specific optimization | 64.3% | 71.4% | 57.1% |
| Variant 1 | Row-wise concatenation into single strings | 72.9% | 85.7% | 71.4% |
| Variant 2 | Variant 1 + column header incorporation | 81.4% | 100% | 85.7% |
| Variant 3 | Variant 2 + table description context | 87.1% | 100% | 100% |
| Variant 4 | Natural language phrase conversion per table | 92.9% | 100% | 100% |

Advanced Processing Techniques for Enhanced Annotation

Multi-Omic Data Integration

Contemporary single-cell analysis increasingly requires integration of multiple data modalities. The most effective data preparation strategies incorporate:

- Cross-modal alignment: Synchronizing gene expression with chromatin accessibility data

- Batch effect mitigation: Applying Harmony integration or ComBat correction

- Reference mapping: Leveraging annotated datasets to guide cell type identification

Emerging scFMs demonstrate capacity to incorporate diverse modalities including scATAC-seq, multiome sequencing, spatial transcriptomics, and single-cell proteomics [20]. This multi-omic approach enables more comprehensive cellular characterization but demands sophisticated data preparation pipelines that preserve biological signals while minimizing technical artifacts.

Quality Assessment Metrics

Rigorous quality assessment during data preparation significantly impacts downstream annotation reliability. Key metrics include:

- Cell-level QC: Mitochondrial percentage, unique gene counts, total counts

- Gene-level QC: Expression prevalence, dropout rates, biological variability

- Dataset-level QC: Batch effects, population structure, cluster coherence
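The cell-level metrics above can be computed directly from a cell's raw count vector. The sketch below uses plain Python; the `MT-` prefix for mitochondrial genes follows the human gene-symbol convention, and the cutoff values passed to `passes_qc` are illustrative assumptions, not recommendations from the source. Appropriate cutoffs depend on tissue and protocol.

```python
from typing import Dict


def cell_qc(counts: Dict[str, int], mito_prefix: str = "MT-") -> Dict[str, float]:
    """Compute total counts, number of detected genes, and percent mitochondrial counts."""
    total = sum(counts.values())
    n_genes = sum(1 for c in counts.values() if c > 0)
    mito = sum(c for gene, c in counts.items() if gene.startswith(mito_prefix))
    pct_mito = 100.0 * mito / total if total else 0.0
    return {"total_counts": total, "n_genes": n_genes, "pct_mito": pct_mito}


def passes_qc(metrics: Dict[str, float], min_genes: int, max_pct_mito: float) -> bool:
    """Keep a cell only if it has enough detected genes and low mitochondrial content."""
    return metrics["n_genes"] >= min_genes and metrics["pct_mito"] <= max_pct_mito


# Hypothetical raw counts for a single cell (a real cell has thousands of genes).
counts = {"MT-CO1": 10, "CD3E": 30, "LYZ": 0, "ACTB": 60}
metrics = cell_qc(counts)
print(metrics)
# Toy cutoffs sized for this four-gene example only.
print(passes_qc(metrics, min_genes=3, max_pct_mito=15.0))
```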

Essential Research Reagents and Computational Tools

Successful single-cell data preparation requires both wet-lab reagents and computational resources working in concert. The following toolkit represents essential components for generating and processing high-quality single-cell data.

Table: Essential Research Reagent Solutions for Single-Cell Analysis

| Category | Specific Product/Technology | Function in Workflow |
|---|---|---|
| Sequencing Platform | Illumina 25B Flow Cell | High-throughput sequencing with 62% cost reduction compared to S4 flow cell [22] |
| Cell Processing | TIRTL-seq Method | Enables analysis of 30 million T cells simultaneously at 10% of conventional cost [23] |
| Data Extraction | Unstructured Library with Yolox Model | Identifies and extracts embedded tables from research PDFs [21] |
| Vector Database | Pinecone Serverless Index | Enables semantic search over structured data with cosine similarity metrics [21] |
| Foundation Model | scBERT, scGPT | Transformer-based models for cell type annotation and biological pattern recognition [20] |
| Multi-omic Integration | CZ CELLxGENE Platform | Provides unified access to annotated single-cell datasets with over 100 million unique cells [20] |

The experimental evidence demonstrates that methodical data preparation profoundly impacts single-cell annotation quality. The progression from basic processing (Control: 64.3% accuracy) to sophisticated natural language structuring (Variant 4: 92.9% accuracy) highlights the critical importance of how data is structured before model ingestion. As single-cell foundation models continue evolving, employing rigorous data preparation protocols—particularly those that enhance semantic context—will be essential for extracting biologically meaningful insights from complex cellular datasets. Researchers should prioritize data quality assessment, implement multi-omic integration strategies, and select processing approaches that maximize contextual understanding for both current analytical methods and emerging artificial intelligence applications in single-cell biology.

This guide objectively compares the performance of the single-cell RNA sequencing (scRNA-seq) tool VICTOR (Validation and Inspection of Cell Type Annotation through Optimal Regression) with other methodologies, framed within the broader thesis on the assessment of annotation quality.

The name "VICTOR" refers to several distinct bioinformatics tools. This guide focuses on the scRNA-seq annotation assessment tool, while the table below clarifies the landscape to avoid confusion.

| Tool Name | Primary Function | Methodological Core | Key Output |
|---|---|---|---|
| VICTOR (scRNA-seq) [15] | Validation of automated cell type annotations | Elastic-net regularized regression with optimal thresholds | Confidence score for each cell annotation |
| VICTOR (Variant Interpretation) [24] | Clinical or research NGS variant interpretation pipeline | Command-line pipeline for quality control, annotation, and association testing | Prioritized variants and genes for disease linkage |
| VICTOR (Virus Classification) [25] | Phylogeny & classification of prokaryotic viruses | Genome BLAST Distance Phylogeny (GBDP) | Taxonomic classification of viral genomes |

How VICTOR Works: Methodology and Workflow

VICTOR for scRNA-seq is designed to address a critical challenge: after using an automated tool to assign cell types, how can researchers trust these labels? VICTOR tackles this by gauging the confidence of predicted cell annotations [15].

Core Technological Framework

The tool employs an elastic-net regularized regression model. This machine learning approach combines the variable selection properties of lasso regression with the stability of ridge regression to identify a robust set of features for predicting annotation reliability. A key differentiator is its use of optimal thresholds, which are automatically determined to maximize the diagnostic ability to distinguish accurate from inaccurate annotations [15].
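For reference, the standard elastic-net objective can be written as follows. This is the general textbook form in the widely used glmnet parameterization; the symbols ($L$ for the per-observation loss, $\lambda$ for the overall penalty strength, $\alpha$ for the mixing weight) are introduced here for illustration, and the specific loss and hyperparameter values used by VICTOR are not stated in this guide.

$$
\hat{\beta}_0, \hat{\beta} \;=\; \arg\min_{\beta_0,\,\beta}\; \frac{1}{N}\sum_{i=1}^{N} L\!\left(y_i,\; \beta_0 + x_i^{\top}\beta\right) \;+\; \lambda\left[\alpha\,\lVert\beta\rVert_1 + \frac{1-\alpha}{2}\,\lVert\beta\rVert_2^2\right]
$$

Setting $\alpha = 1$ recovers the lasso penalty, which drives some coefficients exactly to zero and so performs variable selection; setting $\alpha = 0$ recovers the ridge penalty, which shrinks correlated coefficients together. Intermediate values of $\alpha$ combine both behaviors.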

Experimental Protocol for Performance Validation

The performance of VICTOR was benchmarked across diverse experimental settings to ensure generalizability [15]:

- Within-platform Validation: Testing on data generated from the same sequencing technology.

- Cross-platform Validation: Evaluating performance when training and testing on data from different sequencing platforms.

- Cross-study Validation: Assessing robustness across datasets originating from different research studies.

- Cross-omics Validation: Testing its application across different single-cell omics data types.

Figure 1: The VICTOR workflow for validating cell type annotations.

Performance Comparison: VICTOR vs. Alternatives

Experimental data demonstrates that VICTOR surpasses existing methods in diagnostic ability for identifying inaccurate cell annotations. Its use of a flexible, data-driven optimal threshold allows it to adapt to various biological contexts and dataset specificities, unlike methods with fixed, pre-defined thresholds [15].

Key Performance Advantages

- Superior Diagnostic Ability: VICTOR achieved higher accuracy in identifying mis-annotated cells across multiple benchmarking datasets compared to other methods [15].

- Robustness to Data Heterogeneity: The tool performs well in cross-platform and cross-study settings, indicating it is less sensitive to batch effects and technical variability [15].

- Sensitivity for Rare Cell Types: The optimized regression framework is particularly effective for flagging unreliable annotations in rare and unknown cell populations, a known weakness in many automated annotation pipelines [15].

A Researcher's Guide to Optimal Thresholds

The "optimal threshold" in VICTOR is not a universal value but is determined specifically for each dataset and analysis. The following diagram and explanation outline the general process for determining such thresholds in bioinformatics classifiers.

Figure 2: A general workflow for determining an optimal threshold in classifier systems.

Threshold Optimization Strategy

The exact threshold-selection procedure used in VICTOR is not detailed in this guide; general strategies for finding an optimal threshold include [26]:

- Youden's J Index: Selecting the threshold that maximizes (True Positive Rate - False Positive Rate). This is equivalent to finding the point on the ROC curve that is farthest from the random guess line.

- Point Closest to Top-Left: Choosing the threshold corresponding to the point on the ROC curve closest to the (0,1) point, which represents perfect classification.

- Domain-Specific Costs: In clinical or drug development contexts, the optimal threshold may be chosen to heavily penalize false positives (e.g., to avoid misdiagnosis) or false negatives (e.g., to ensure no rare cell type is missed), depending on the research goal.
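The first two criteria can be sketched in a few lines of plain Python. This is a generic illustration, not VICTOR's implementation: the helper names, the convention that a label of 1 means "annotation correct", and the toy scores are all assumptions made for the example.

```python
import math
from typing import List, Tuple

# (threshold, true positive rate, false positive rate)
RocPoint = Tuple[float, float, float]


def roc_points(scores: List[float], labels: List[int]) -> List[RocPoint]:
    """One ROC point per distinct score, predicting positive when score >= threshold."""
    pos = sum(labels)
    neg = len(labels) - pos
    if pos == 0 or neg == 0:
        raise ValueError("need at least one positive and one negative label")
    points = []
    for t in sorted(set(scores)):
        tp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 0)
        points.append((t, tp / pos, fp / neg))
    return points


def youden_threshold(points: List[RocPoint]) -> float:
    """Threshold maximizing Youden's J = TPR - FPR."""
    return max(points, key=lambda p: p[1] - p[2])[0]


def closest_to_corner_threshold(points: List[RocPoint]) -> float:
    """Threshold whose ROC point is nearest to (FPR, TPR) = (0, 1)."""
    return min(points, key=lambda p: math.hypot(p[2], 1.0 - p[1]))[0]


# Hypothetical confidence scores; label 1 = annotation correct, 0 = incorrect.
scores = [0.95, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1]
labels = [1, 1, 1, 0, 1, 1, 0, 0, 0, 0]
points = roc_points(scores, labels)
print(youden_threshold(points), closest_to_corner_threshold(points))
```

The two criteria agree on this toy data but can select different thresholds on real data. A cost-sensitive variant would replace the key function with a weighted sum of false positive and false negative rates.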

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational "reagents" and resources essential for implementing a VICTOR-based analysis or similar annotation quality assessment.

| Tool/Resource | Function in Analysis | Application Context |
|---|---|---|
| scRNA-seq Dataset | Primary input data for VICTOR; requires cell-by-gene count matrix. | Foundation for all cell type annotation and validation. |
| Base Cell Annotator | Automated tool (e.g., SingleR, SCINA) that provides initial cell type labels for VICTOR to validate. | Generates the hypotheses (annotations) that VICTOR tests. |
| High-Performance Computing (HPC) Cluster | SLURM or PBS-scheduled environment for running computationally intensive VICTOR analysis. | Essential for handling large-scale scRNA-seq data. |
| Ensembl/RefSeq Transcript DB | Reference transcriptome database used for gene annotation and feature space definition. | Provides genomic context for the gene expression data. |
| Benchmarking Datasets | Gold-standard, well-annotated scRNA-seq datasets for validating VICTOR's performance. | Crucial for the initial methodological benchmarking. |

In the rapidly evolving field of single-cell RNA sequencing analysis and AI-driven biological research, robust assessment of annotation quality has become paramount. The VICTOR framework (Validation and Inspection of Cell Type Annotation through Optimal Regression) represents a significant methodological advancement for evaluating cell type annotation quality using elastic-net regularized regression [7]. This guide examines how confidence scores and evaluation metrics can be used to interpret VICTOR's outputs, and compares its methodological approach against other contemporary annotation validation tools and frameworks. For researchers and drug development professionals, understanding these metrics is crucial for selecting validation methodologies that support reliable biological interpretations and translational applications.

Quantitative Comparison of Annotation Quality Assessment Tools

The table below summarizes the core methodologies, applicable domains, and key metrics of several prominent tools and frameworks relevant to annotation quality assessment.

Table 1: Comparative Analysis of Annotation Quality Assessment Methodologies

| Tool/Framework | Primary Methodology | Application Domain | Key Metrics | Experimental Support |

|---|---|---|---|---|

| VICTOR | Elastic-net regularized regression | Single-cell RNA sequencing cell type annotation | Annotation quality assessment scores [7] | Validation on PBMC, pancreas datasets, and Human Lung Cell Atlas [7] |