Zero-Shot Learning with Single-Cell Foundation Models: Current State, Challenges, and Future Directions

Abstract

Single-cell foundation models (scFMs), pretrained on millions of single-cell transcriptomes, promise to revolutionize biological discovery by enabling zero-shot learning—applying model knowledge to new data without task-specific training. This article provides a comprehensive overview for researchers and drug development professionals, exploring the foundational concepts of scFMs and zero-shot inference. We examine methodological approaches and applications, from cell type annotation to drug perturbation prediction, and critically address performance challenges revealed by recent rigorous evaluations. The content synthesizes troubleshooting strategies and optimization techniques, while presenting a framework for the validation and comparative benchmarking of these models against traditional methods. By integrating the latest research, this article serves as a guide for the effective application and future development of zero-shot scFMs in biomedical research.

Understanding Single-Cell Foundation Models and the Zero-Shot Paradigm

What Are Single-Cell Foundation Models? Defining the Core Concepts

Single-cell foundation models (scFMs) are large-scale deep learning models pretrained on vast datasets of single-cell genomics data, capable of being adapted to a wide range of downstream biological tasks [1]. Inspired by the success of large language models (LLMs) in natural language processing, these models aim to decipher the 'language' of cells by learning universal patterns from millions of single-cell transcriptomes [1] [2].

In these models, individual cells are treated analogously to sentences, and genes or other genomic features along with their expression values are treated as words or tokens [1]. The premise is that by exposing a model to millions of cells encompassing many tissues and conditions, it can learn fundamental, generalizable principles of cellular biology [1].

Core Architectural Concepts of scFMs

The development of a single-cell foundation model involves several key components, from data assembly to model architecture and pretraining.

- Data Sources for Pretraining: A critical ingredient for any scFM is the compilation of large and diverse datasets. Platforms like CZ CELLxGENE, which provides access to over 100 million unique annotated cells, and public repositories like the Gene Expression Omnibus (GEO) are foundational to this effort [1]. Curated compendia such as the Human Cell Atlas, PanglaoDB, and the Human Ensemble Cell Atlas collate data from multiple sources to create extensive training corpora [1].

- Tokenization: This process converts raw gene expression data into discrete units, or tokens, that the model can process. A key challenge is that gene expression data is not naturally sequential. Common strategies to impose order include:

- Ranking genes within each cell by their expression levels, feeding the ordered list as a "sentence" [1].

- Binning gene expression values into discrete categories and using those as tokens [1] [3].

- Gene tokens are often combined with value embeddings (representing expression level) and positional embeddings (indicating the gene's rank or position) [4].

- Model Architecture: Most scFMs are built on the transformer architecture, which uses attention mechanisms to weight the relationships between any pair of input tokens (genes) [1]. Popular variants include encoder-based models (e.g., scBERT, Geneformer) and decoder-based models (e.g., scGPT).

- Pretraining Strategy: These models are typically trained using self-supervised learning, most commonly masked gene expression prediction [5] [6]. In this task, the model is shown input data with a subset of genes withheld and must predict the expression of these masked genes based on the context of the remaining genes [6]. The logic is that successfully completing this task requires the model to learn the underlying regulatory and functional relationships between genes [6].

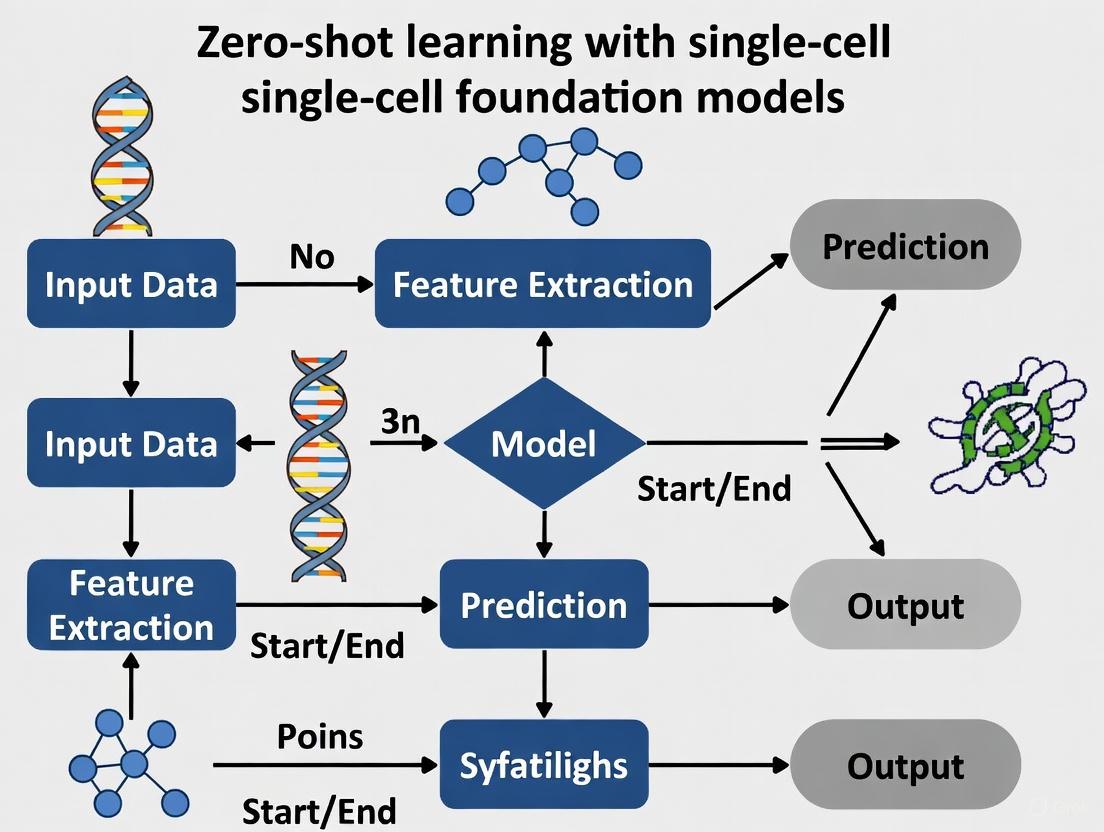

The following diagram illustrates a typical workflow for building and applying a single-cell foundation model.

Current Research Landscape & Zero-Shot Performance

The "zero-shot" capability of a foundation model—its performance on new, unseen data without any task-specific training—is critical for biological discovery settings where labels are unknown [5]. Recent rigorous evaluations have revealed both the promise and limitations of current scFMs in this regard.

Benchmarking Zero-Shot Performance

A key evaluation of two popular models, Geneformer and scGPT, examined their zero-shot performance on tasks like cell type clustering and batch integration across multiple datasets [5] [6]. The findings suggest that, when used zero-shot, these models are often less reliable than simpler baselines.

- Cell Type Clustering: In separating known cell types, both Geneformer and scGPT were found to perform worse than established methods like scVI and Harmony, and sometimes even underperformed the simple approach of selecting Highly Variable Genes (HVG) [5] [6].

- Batch Integration: In the task of integrating data from multiple sources to remove technical "batch effects" while preserving biological variation, Geneformer consistently underperformed relative to scGPT, Harmony, scVI, and HVG across most datasets [5]. scGPT showed more competitive performance, particularly on complex datasets involving both technical and biological batch effects [5].

Table 1: Summary of Model Zero-Shot Performance in Key Tasks (Adapted from Genome Biology, 2025)

| Model | Cell Type Clustering | Batch Integration | Notable Strengths / Weaknesses |
|---|---|---|---|
| Geneformer | Underperformed baselines (HVG, scVI, Harmony) [5] | Consistently ranked last across metrics [5] | Embedding space often failed to retain cell type information; structure driven by batch effects [5] |
| scGPT | Inconsistent; outperformed on one dataset but worse on others [5] | Competitive on complex datasets with biological batch effects [5] | Performance may be influenced by overlap between evaluation and pretraining datasets [5] |
| scVI | Consistently strong performance [5] | Strong performer, especially on technical variation [5] | Established baseline, generative model [5] [4] |
| Harmony | Consistently strong performance [5] | Strong performer, but challenged on some datasets [5] | Established baseline, adjusts PC embeddings [5] [4] |
| HVG (Baseline) | Outperformed Geneformer and scGPT across metrics [5] | Achieved best batch integration scores in some evaluations [5] | Simple feature selection method [5] |

Insights into Performance Limitations

Research points to two main hypotheses for these zero-shot limitations. First, the masked language model pretraining framework itself might not inherently produce useful cell embeddings for these tasks. Second, the models may have failed to learn the pretraining task effectively [5]. For instance, analysis of scGPT's gene expression prediction revealed that, even when using its cell embedding, its predictive ability was only slightly improved and largely limited to highly expressed "housekeeping" genes, questioning whether it learns deeper, context-dependent relationships between genes [6].

Emerging Models and Improvements

Despite these challenges, the field is evolving rapidly. Newer, larger models are being developed, such as CellFM, an 800-million-parameter model trained on 100 million human cells, which reports outperforming existing models in tasks like cell annotation and gene function prediction [3]. Furthermore, research into efficient fine-tuning techniques (training less than 1% of a model's parameters) shows promise in enabling robust zero-shot generalization to unseen cell lines and conditions, such as predicting responses to novel drugs [7].

Essential Protocols for scFM Evaluation

For researchers aiming to evaluate single-cell foundation models, particularly in zero-shot settings, the following protocols outline key methodological steps.

Protocol for Zero-Shot Cell Type Clustering

This protocol assesses the quality of a model's cell embeddings for distinguishing cell types without any further training [5] [4].

- Objective: To evaluate whether a pretrained scFM's embeddings can separate known cell types in a new, unseen dataset.

- Experimental Steps:

- Dataset Selection: Acquire a well-annotated scRNA-seq dataset that was not part of the model's pretraining corpus. The dataset should have a known set of cell type labels. Examples include the Pancreas benchmark dataset or data from the Asian Immune Diversity Atlas (AIDA) [5] [4].

- Embedding Extraction: Pass the gene expression matrix of the new dataset through the pretrained scFM without performing any fine-tuning to obtain a latent cell embedding for each cell.

- Dimensionality Reduction: Apply a standard dimensionality reduction technique (e.g., UMAP, t-SNE) to the cell embeddings for visualization.

- Clustering & Evaluation: Use a clustering algorithm (e.g., Leiden, Louvain) on the full embeddings and compare the resulting clusters to the ground-truth cell type labels.

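- Evaluation Metrics: Measure agreement between clusters and ground-truth labels with the adjusted Rand index (ARI) and cell type separation with the average silhouette width (ASW), often summarized together as the AvgBio score [5].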
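
The clustering step and the metrics above can be sketched in Python as follows. This is a minimal sketch, not the evaluation code of [5]: it assumes the embeddings are already a NumPy array, uses scikit-learn's KMeans (with k set to the number of annotated types) as a stand-in for Leiden or Louvain, and computes AvgBio as the mean of ARI, normalized mutual information (NMI), and a silhouette score rescaled to [0, 1], following the scIB convention. The function name `evaluate_clustering` is hypothetical.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import (adjusted_rand_score, normalized_mutual_info_score,
                             silhouette_score)

def evaluate_clustering(embeddings, cell_types, seed=0):
    """Score how well frozen scFM embeddings separate annotated cell types."""
    emb = np.asarray(embeddings, dtype=float)
    labels = np.asarray(cell_types)
    n_types = len(np.unique(labels))
    # Stand-in for Leiden/Louvain: k-means with k equal to the number of annotated types.
    clusters = KMeans(n_clusters=n_types, n_init=10, random_state=seed).fit_predict(emb)
    ari = adjusted_rand_score(labels, clusters)
    nmi = normalized_mutual_info_score(labels, clusters)
    # Silhouette of the ground-truth labels, rescaled from [-1, 1] to [0, 1].
    asw = (silhouette_score(emb, labels) + 1.0) / 2.0
    return {"ARI": ari, "NMI": nmi, "ASW": asw, "AvgBio": (ari + nmi + asw) / 3.0}
```

Running the same function on HVG, scVI, or Harmony embeddings of the same cells gives the baseline scores to compare against.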

Protocol for Zero-Shot Batch Integration

This protocol evaluates a model's ability to produce embeddings that mix cells from different technical batches while preserving biological variation [5].

- Objective: To quantify the removal of batch effects and conservation of biological variance in a model's embeddings.

- Experimental Steps:

- Dataset Selection: Select a dataset with known, strong batch effects from multiple sources or experimental techniques, such as the Pancreas dataset with five different sources [5].

- Embedding Extraction: Obtain cell embeddings for the dataset using the pretrained scFM in zero-shot mode.

- Visualization: Create UMAP plots colored by both batch and cell type to qualitatively assess integration.

- Evaluation Metrics:

- Batch mixing metrics: Quantify how well cells from different batches are intermixed, for example with a batch average silhouette width (batch ASW) [5].

- Principal Component Regression (PCR) score: Measures the proportion of variance in the embeddings that is explained by the batch variable [5]. A lower batch PCR score indicates better integration.
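
Both metrics can be sketched with NumPy and scikit-learn, assuming arrays of embeddings, batch labels, and cell type labels. The batch ASW here follows the scIB convention (1 - |silhouette| on batch labels within each cell type, so higher means better mixing); reports that use the raw silhouette on batch labels invert that direction. PCR is computed as the variance-weighted R² of regressing each principal component on one-hot batch labels. Function names and the default of 20 components are hypothetical choices, not settings taken from [5].

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.linear_model import LinearRegression
from sklearn.metrics import silhouette_samples

def batch_asw(embeddings, batches, cell_types):
    """scIB-style batch ASW: mean of 1 - |silhouette on batch labels| within each cell type.

    1.0 means batches are indistinguishable within every cell type (good mixing).
    """
    emb = np.asarray(embeddings, dtype=float)
    batches, cell_types = np.asarray(batches), np.asarray(cell_types)
    per_type = []
    for ct in np.unique(cell_types):
        in_type = cell_types == ct
        if len(np.unique(batches[in_type])) < 2:
            continue  # silhouette is undefined when a cell type occurs in only one batch
        s = silhouette_samples(emb[in_type], batches[in_type])
        per_type.append(np.mean(1.0 - np.abs(s)))
    return float(np.mean(per_type))

def pcr_batch(embeddings, batches, n_comps=20):
    """Share of embedding variance explained by batch: variance-weighted R^2 over PCs."""
    emb = np.asarray(embeddings, dtype=float)
    batches = np.asarray(batches)
    n_comps = min(n_comps, *emb.shape)
    pca = PCA(n_components=n_comps).fit(emb)
    pcs = pca.transform(emb)
    # One-hot batch design, dropping one level to avoid collinearity with the intercept.
    design = (batches[:, None] == np.unique(batches)[None, :]).astype(float)[:, 1:]
    r2 = np.array([
        LinearRegression().fit(design, pcs[:, i]).score(design, pcs[:, i])
        for i in range(n_comps)
    ])
    var = pca.explained_variance_
    return float(np.sum(r2 * var) / np.sum(var))
```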

Table 2: Essential Data, Models, and Frameworks for scFM Research

| Resource Name | Type | Primary Function |
|---|---|---|
| CZ CELLxGENE [1] | Data Platform | Provides unified access to over 100 million annotated single cells; a primary source of pretraining data. |
| BioLLM Framework [8] | Software Tool | A unified interface that standardizes APIs for diverse scFMs, enabling seamless model switching and benchmarking. |
| Geneformer [5] [4] | Foundation Model | An encoder-based model pretrained on ~30 million cells using a gene ranking tokenization strategy. |
| scGPT [5] [4] | Foundation Model | A decoder-based model pretrained on ~33 million cells using gene value binning and attention masks. |
| Harmony [5] [4] | Algorithm | A robust baseline method for batch integration, often used for performance comparison. |
| scVI [5] [4] | Generative Model | A robust, probabilistic baseline model for cell embedding and batch correction. |

Single-cell foundation models represent a promising paradigm for analyzing cellular heterogeneity. While they are robust and versatile tools, current evaluations indicate that their zero-shot performance can be inconsistent, and they may be outperformed by simpler, established methods in tasks like cell type clustering and batch integration [5] [4] [6]. This underscores the importance of rigorous zero-shot evaluation in their development and deployment. For researchers, the choice to use a complex scFM versus a simpler alternative should be guided by the specific task, dataset size, need for biological interpretability, and computational resources [4]. As the field matures with larger models like CellFM [3] and standardized evaluation frameworks like BioLLM [8], scFMs are poised to become more reliable and indispensable tools for unlocking deeper insights into cellular function and disease.

Single-cell foundation models (scFMs) represent a paradigm shift in computational biology, leveraging large-scale deep learning to interpret the complex language of cellular biology [1]. These models are trained on vast datasets containing tens of millions of single-cell transcriptomes, enabling a unified framework for analyzing cellular heterogeneity and regulatory networks [1]. The architecture of scFMs is predominantly built upon transformer-based neural networks, which process single-cell data through specialized tokenization methods and self-supervised pretraining objectives [1] [9]. Within the context of zero-shot learning—where models must perform tasks without any further training on the target data—the architectural choices of scFMs become critically important for enabling robust biological discovery [5].

Core Architectural Components of scFMs

Transformer Architectures: The Backbone of scFMs

The transformer architecture serves as the fundamental engine for most single-cell foundation models, providing the capacity to capture intricate, long-range relationships between genes within a cell [1]. These models primarily utilize two architectural variants:

- Encoder-based architectures (e.g., scBERT): Employ bidirectional attention mechanisms that learn from all genes in a cell simultaneously, making them particularly effective for classification tasks and generating cell embeddings [1] [10].

- Decoder-based architectures (e.g., scGPT, cell2sentence): Utilize unidirectional masked self-attention that iteratively predicts masked genes conditioned on known genes, excelling in generative tasks [1] [10].

The self-attention mechanism within transformers allows scFMs to learn which genes in a cell are most informative of the cell's identity or state, and how they co-vary across different cellular contexts [1]. This capability is essential for building models that can generalize to novel biological contexts without task-specific fine-tuning.
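
To make the encoder/decoder distinction concrete, here is a minimal single-head self-attention over one cell's gene tokens in NumPy. In this sketch the only difference between the two variants is the mask: bidirectional attention lets every gene attend to every other gene, while a causal mask restricts each gene to itself and earlier tokens. Real scFMs use multiple heads, learned projections, and sometimes custom masking schemes; the weights here are random placeholders.

```python
import numpy as np

def self_attention(x, w_q, w_k, w_v, causal=False):
    """Single-head scaled dot-product self-attention over a cell's gene tokens.

    x: (n_genes, d_model) token embeddings for one cell.
    causal=False: bidirectional (encoder-style), every gene attends to every gene.
    causal=True: each gene attends only to itself and earlier genes (decoder-style).
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    if causal:
        n = x.shape[0]
        allowed = np.tril(np.ones((n, n), dtype=bool))
        scores = np.where(allowed, scores, -np.inf)
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # each row sums to 1
    return weights @ v, weights
```

Each row of `weights` shows how strongly one gene draws on the others when forming its contextual representation, which is the quantity attention-based interpretation methods inspect.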

Table 1: Comparison of Prominent scFM Architectures

| Model | Architecture Type | Tokenization Approach | Pretraining Data Scale | Key Applications |
|---|---|---|---|---|
| scGPT [1] [9] | Decoder-based | Gene-level with expression binning | 33M+ human cells | Zero-shot annotation, multi-omic integration, perturbation prediction |
| Geneformer [1] [10] | Encoder-based | Gene-level with expression ranking | 27M+ human cells | Cell embedding, network inference |
| scBERT [1] | Encoder-based | Gene-level with expression binning | Millions of cells | Cell type annotation |
| cell2sentence (C2S) [10] | Decoder-based | Natural language tokenization | 57M+ human and mouse cells + biological texts | Cell type prediction, biological interpretation |
| Nicheformer [9] | Graph Transformer | Spatial context tokens | 53M+ spatially resolved cells | Spatial niche modeling, context prediction |

Tokenization Strategies: From Biology to Tokens

Tokenization converts raw gene expression data into discrete units that transformers can process, representing a critical adaptation of natural language processing techniques to biological data [1] [11]. Unlike words in a sentence, genes have no inherent sequential order, necessitating specialized approaches:

- Gene ranking by expression: Genes are ordered within each cell by their expression levels, creating a deterministic sequence from highest to lowest expressed genes [1] [10]. This approach provides a consistent input structure but discards absolute expression magnitudes, which may obscure some biological relationships.

- Expression value binning: Genes are partitioned into discrete bins based on their expression values; the bin index then represents each gene's expression level (for example, as a value embedding) and, in some schemes, determines its position [1] [9].

- Natural language tokenization: Some models like cell2sentence leverage existing language model tokenizers by representing gene sequences as natural language strings [10].
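
The ranking and binning strategies can be sketched as two small NumPy functions. This is illustrative only: `rank_tokens` optionally divides by corpus-wide gene medians before ranking (an assumption modeled on rank-based tokenizers such as Geneformer's), and `bin_values` assigns nonzero values to per-cell quantile bins with zeros kept in bin 0, in the spirit of value-binning tokenizers such as scGPT's. Neither function reproduces either model's exact preprocessing, and the default sizes are hypothetical.

```python
import numpy as np

def rank_tokens(expr, gene_ids, top_n=2048, gene_medians=None):
    """Rank-based tokenization: expressed genes ordered from highest to lowest value."""
    values = np.asarray(expr, dtype=float)
    if gene_medians is not None:
        # Optional scaling by corpus-wide gene medians (assumed nonzero), so that
        # ubiquitously high genes do not always take the top ranks.
        values = values / np.asarray(gene_medians, dtype=float)
    expressed = np.flatnonzero(values > 0)
    order = expressed[np.argsort(-values[expressed], kind="stable")]
    return [gene_ids[i] for i in order[:top_n]]

def bin_values(expr, n_bins=51):
    """Value binning: zeros -> bin 0; nonzero values -> per-cell quantile bins 1..n_bins-1."""
    values = np.asarray(expr, dtype=float)
    bins = np.zeros(values.shape, dtype=int)
    nonzero = values > 0
    if nonzero.any():
        # n_bins quantile edges define n_bins - 1 bins for the nonzero values.
        edges = np.quantile(values[nonzero], np.linspace(0.0, 1.0, n_bins))
        bins[nonzero] = np.digitize(values[nonzero], edges[1:-1]) + 1
    return bins
```

For example, a cell with values [0, 5, 1, 0, 3, 10] over genes g0 to g5 yields the rank sequence g5, g1, g4, g2, and with `n_bins=5` the bin indices [0, 3, 1, 0, 2, 4].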

Each gene is typically represented as a token embedding that combines a gene identifier with its expression value [1]. Special tokens may be added to represent cell-level metadata, experimental batch information, or modality indicators (e.g., for multi-omics data) [1]. Positional encoding schemes are then adapted to represent the relative order or rank of each gene in the cell, providing necessary sequence context to the transformer [1].

Pretraining Objectives and Strategies

scFMs are pretrained using self-supervised learning on massive, diverse collections of single-cell data, enabling them to learn fundamental biological principles that generalize across tasks [1]. The primary pretraining objective is masked gene modeling, where:

- The model is shown input data with a subset of genes withheld (masked) and must predict the expression of these masked genes based on the remaining genes in the cell [1] [6].

- This task is intended to force the model to learn the complex regulatory relationships and co-expression patterns between genes [1].

- Through this process, the model is expected to learn a cellular "syntax": how genes work together to define cell states and functions [1].
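
The masking objective reduces to two pieces: choosing which genes to hide and scoring predictions only at those positions. A minimal NumPy sketch, assuming a dense cells x genes value matrix; the 15% default and the -1.0 placeholder for hidden values are illustrative, and real models typically use a dedicated mask token rather than a sentinel value.

```python
import numpy as np

def mask_genes(values, mask_frac=0.15, mask_value=-1.0, rng=None):
    """Hide a random subset of genes in each cell; returns masked input and boolean mask."""
    rng = np.random.default_rng() if rng is None else rng
    values = np.asarray(values, dtype=float)
    n_cells, n_genes = values.shape
    n_mask = max(1, int(round(mask_frac * n_genes)))
    mask = np.zeros(values.shape, dtype=bool)
    for i in range(n_cells):
        mask[i, rng.choice(n_genes, size=n_mask, replace=False)] = True
    # Sentinel value stands in for a dedicated mask token in this sketch.
    return np.where(mask, mask_value, values), mask

def masked_mse(pred, target, mask):
    """Mean squared error on masked positions only; visible genes are not scored."""
    pred, target = np.asarray(pred, dtype=float), np.asarray(target, dtype=float)
    return float(np.mean((pred[mask] - target[mask]) ** 2))
```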

The pretraining data for scFMs is typically drawn from large-scale resources such as CZ CELLxGENE (containing over 100 million unique cells), the Human Cell Atlas, and other multiorgan atlases that provide broad coverage of cell types, states, and conditions [1]. Effective pretraining requires careful data selection, filtering of cells and genes, and balancing of dataset compositions to capture a wide spectrum of biological variation while mitigating technical noise and batch effects [1].

Experimental Protocols for scFM Development and Benchmarking

Protocol 1: Model Pretraining and Tokenization

Purpose: To train a foundational scFM from large-scale single-cell data using appropriate tokenization and self-supervised learning.

Materials and Reagents:

- Computational Resources: High-performance computing cluster with multiple GPUs (e.g., NVIDIA A100 or H100)

- Software: Python 3.8+, PyTorch or JAX, transformer libraries (Hugging Face Transformers, scGPT codebase)

- Data: Curated single-cell datasets from CZ CELLxGENE, Human Cell Atlas, or GEO/SRA repositories

Procedure:

Data Curation and Quality Control

- Download and harmonize single-cell datasets from public repositories [1]

- Filter cells based on quality metrics (mitochondrial content, number of genes detected)

- Filter genes to include those detected in a minimum number of cells (e.g., >0.1% of cells)

- Perform basic normalization (e.g., library size normalization) without batch correction
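
The curation steps can be sketched on a dense NumPy count matrix as below. The thresholds (20% mitochondrial counts, 200 detected genes, 0.1% of cells), the `MT-` prefix for human mitochondrial genes, and the final log1p are illustrative assumptions rather than recommended settings; Scanpy's `pp` module provides sparse-aware equivalents for real datasets.

```python
import numpy as np

def qc_and_normalize(counts, gene_names, max_mito_frac=0.2, min_genes=200,
                     min_cell_frac=0.001, target_sum=1e4):
    """Filter cells and genes, then library-size normalize and log-transform."""
    counts = np.asarray(counts, dtype=float)
    gene_names = np.asarray(gene_names).astype(str)
    # Cell-level QC: mitochondrial fraction and number of detected genes.
    is_mito = np.char.startswith(gene_names, "MT-")
    totals = counts.sum(axis=1)
    mito_frac = counts[:, is_mito].sum(axis=1) / np.maximum(totals, 1.0)
    n_detected = (counts > 0).sum(axis=1)
    keep_cells = (mito_frac <= max_mito_frac) & (n_detected >= min_genes)
    counts = counts[keep_cells]
    # Gene-level QC: keep genes detected in more than min_cell_frac of retained cells.
    keep_genes = (counts > 0).mean(axis=0) > min_cell_frac
    counts, gene_names = counts[:, keep_genes], gene_names[keep_genes]
    # Library-size normalization to target_sum counts per cell, then log1p.
    # No batch correction is applied, matching the protocol step above.
    lib = np.maximum(counts.sum(axis=1, keepdims=True), 1.0)
    return np.log1p(counts / lib * target_sum), gene_names, keep_cells
```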

Tokenization and Input Representation

- For each cell, rank genes by expression levels from highest to lowest [1] [10]

- Select top N genes (e.g., 2,000-5,000) based on this ranking as the "sentence" for that cell

- Convert each gene to a token embedding combining:

- Gene identifier embedding (learned)

- Expression value embedding (via binning or continuous representation) [1]

- Add special tokens for cell identity, batch information, or modality as needed [1]

- Apply positional encodings based on the rank order of genes
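
The token construction above can be sketched as a sum of three lookup tables: gene identity, binned expression value, and rank position. Summation is one common way to combine them (some models concatenate components or drop the positional term), and the vocabulary size, bin count, maximum length, and embedding width below are hypothetical. Random tables stand in for learned parameters.

```python
import numpy as np

rng = np.random.default_rng(0)
VOCAB_SIZE, N_BINS, MAX_LEN, D_MODEL = 1000, 51, 2048, 64  # hypothetical sizes

# Learned lookup tables in a real model; random here for illustration.
gene_table = rng.normal(0.0, 0.02, size=(VOCAB_SIZE, D_MODEL))
value_table = rng.normal(0.0, 0.02, size=(N_BINS, D_MODEL))
pos_table = rng.normal(0.0, 0.02, size=(MAX_LEN, D_MODEL))

def embed_cell(gene_token_ids, value_bins):
    """Sum gene-identity, expression-value, and rank-position embeddings per token.

    gene_token_ids: gene vocabulary indices ordered by rank (highest expression first).
    value_bins: binned expression level of each of those genes.
    """
    gene_token_ids = np.asarray(gene_token_ids)
    positions = np.arange(len(gene_token_ids))  # rank order -> position index
    return (gene_table[gene_token_ids]
            + value_table[np.asarray(value_bins)]
            + pos_table[positions])
```

Special tokens, such as a cell-level token prepended to the sequence, would simply be extra rows in `gene_table`.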

Model Architecture Configuration

- Initialize transformer architecture (encoder, decoder, or encoder-decoder)

- Set model dimensions (hidden size, number of layers, attention heads) based on computational constraints

- For decoder models: implement causal masking for autoregressive generation

- For encoder models: implement bidirectional attention
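
When choosing dimensions under a compute budget, a back-of-the-envelope parameter count is often enough. The sketch below assumes a standard transformer block with a feed-forward width of 4 x hidden size and ignores biases and layer norms, giving roughly 12·d² parameters per layer plus the gene embedding table. The class and field names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class TransformerConfig:
    vocab_size: int    # number of gene (plus special) tokens
    d_model: int       # hidden size
    n_layers: int
    n_heads: int
    ffn_mult: int = 4  # feed-forward width as a multiple of d_model (assumed)

    def __post_init__(self):
        # Each attention head gets d_model / n_heads dimensions.
        if self.d_model % self.n_heads != 0:
            raise ValueError("d_model must be divisible by n_heads")

    def approx_params(self) -> int:
        """Rough count ignoring biases and layer norms.

        Per layer: 4*d^2 for the Q, K, V, and output projections,
        plus 2*ffn_mult*d^2 for the two feed-forward matrices.
        """
        d = self.d_model
        per_layer = 4 * d * d + 2 * self.ffn_mult * d * d
        return self.n_layers * per_layer + self.vocab_size * d
```

For example, d_model = 512, 12 layers, and a 60,000-token gene vocabulary give 12 × 3,145,728 + 60,000 × 512 = 68,468,736 parameters, about 68M.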

Self-Supervised Pretraining

- Implement masked gene modeling: randomly mask 15-20% of input tokens

- Train model to predict expression values of masked genes using mean squared error or similar loss

- Use AdamW optimizer with learning rate warmup and cosine decay

- Train for sufficient iterations (typically 100,000+ steps) on multiple GPUs
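
The learning-rate schedule described above (linear warmup followed by cosine decay) can be written as a plain function of the step. The peak rate, warmup length, and total steps are placeholders, not values reported for any published scFM.

```python
import math

def lr_at_step(step, peak_lr=1e-4, warmup_steps=10_000, total_steps=100_000, min_lr=0.0):
    """Linear warmup to peak_lr, then cosine decay to min_lr at total_steps."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    progress = min(1.0, (step - warmup_steps) / max(1, total_steps - warmup_steps))
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))
```

In PyTorch, the same curve can be applied by passing `lambda s: lr_at_step(s) / peak_lr` to `torch.optim.lr_scheduler.LambdaLR`, wrapped around a `torch.optim.AdamW` optimizer whose base learning rate is `peak_lr`.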

Model Validation

- Evaluate reconstruction accuracy on held-out validation cells

- Assess whether model captures known biological relationships (e.g., gene-gene correlations)

Protocol 2: Zero-Shot Performance Evaluation

Purpose: To assess the zero-shot capabilities of pretrained scFMs on downstream biological tasks without any fine-tuning.

Materials and Reagents:

- Pretrained Models: scGPT, Geneformer, or custom pretrained scFMs

- Benchmark Datasets: Curated evaluation datasets with known cell type labels (e.g., Tabula Sapiens, Pancreas datasets)

- Baseline Methods: Traditional computational tools (scVI, Harmony) and simple feature selection (Highly Variable Genes)

Procedure:

Embedding Generation

- Process held-out test datasets through the pretrained scFM without any parameter updates

- Extract cell embeddings from the model's final layer or specialized cell token [5]

- For comparison, generate embeddings using baseline methods (scVI, Harmony, HVG)
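
Which output becomes the cell embedding depends on the model: some expose a dedicated cell token, while others are usually summarized by averaging gene-token states. A minimal sketch of both options for one cell, assuming the final-layer states and a padding mask are already available as arrays and that the cell token, if present, sits at position 0; each model's own documentation should be consulted for its recommended layer and pooling.

```python
import numpy as np

def cell_embedding(hidden_states, padding_mask, method="mean", has_cell_token=True):
    """Pool final-layer token states (n_tokens x d) for one cell into a single vector.

    padding_mask: True for real tokens, False for padding.
    method="cls": return the state of the cell token, assumed to sit at position 0.
    method="mean": average the real gene tokens, excluding the cell token if present.
    """
    h = np.asarray(hidden_states, dtype=float)
    keep = np.asarray(padding_mask, dtype=bool).copy()
    if method == "cls":
        if not has_cell_token:
            raise ValueError("method='cls' needs a dedicated cell token")
        return h[0]
    if method != "mean":
        raise ValueError(f"unknown method: {method}")
    if has_cell_token:
        keep[0] = False  # the cell token is not a gene
    return h[keep].mean(axis=0)
```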

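Cell Type Clustering Evaluation

- Cluster the scFM and baseline embeddings and compare the clusters with ground-truth cell type labels using AvgBio, ASW, and ARI [5]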

Batch Integration Assessment

- Evaluate embeddings on datasets with known batch effects

- Quantify batch mixing using batch ASW and principal component regression (PCR) scores [5]

- Visually inspect UMAP/t-SNE plots for batch mixing versus biological preservation

Gene Expression Prediction

- Assess the model's ability to predict held-out gene expression values

- Mask subsets of genes and compare predictions to true values

- Calculate correlation coefficients between predicted and actual expression [6]
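
A sketch of the correlation step, together with a context-free baseline that predicts each gene's median from reference cells. The baseline is included because per-cell correlations across genes partly reflect differences in average gene abundance; this connects to the observation that models often predict near-median expression [6]. Function names are hypothetical.

```python
import numpy as np

def masked_pearson_per_cell(pred, true, mask):
    """Per-cell Pearson r between predicted and observed values on masked genes only."""
    pred, true = np.asarray(pred, dtype=float), np.asarray(true, dtype=float)
    mask = np.asarray(mask, dtype=bool)
    rs = np.full(len(true), np.nan)  # NaN where r is undefined
    for i in range(len(true)):
        p, t = pred[i, mask[i]], true[i, mask[i]]
        if p.size >= 2 and p.std() > 0 and t.std() > 0:
            rs[i] = np.corrcoef(p, t)[0, 1]
    return rs

def median_baseline(reference, n_cells):
    """Context-free predictor: every cell gets each gene's median over reference cells."""
    medians = np.median(np.asarray(reference, dtype=float), axis=0)
    return np.tile(medians, (n_cells, 1))
```

On data where genes differ mainly in mean level, the median baseline alone can reach high per-cell correlations, so a model's score is most informative when reported relative to it.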

Statistical Analysis

- Perform multiple runs with different random seeds

- Use paired statistical tests to compare method performance

- Report confidence intervals for performance metrics
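
The statistical comparison can be sketched with NumPy alone: a sign-flip permutation test on paired differences (one kind of paired test; `scipy.stats.wilcoxon` is a common alternative) and a percentile bootstrap confidence interval for the mean difference. It assumes each method has one score per dataset or random seed, aligned by position; the number of resamples is a placeholder.

```python
import numpy as np

def paired_comparison(scores_a, scores_b, n_resamples=10_000, seed=0):
    """Compare two methods scored on the same datasets or seeds.

    Returns the mean difference (a - b), a 95% percentile bootstrap CI for it,
    and a two-sided p-value from a sign-flip permutation test.
    """
    rng = np.random.default_rng(seed)
    diff = np.asarray(scores_a, dtype=float) - np.asarray(scores_b, dtype=float)
    observed = diff.mean()
    # Bootstrap: resample paired differences with replacement.
    boot = rng.choice(diff, size=(n_resamples, diff.size), replace=True).mean(axis=1)
    ci = tuple(np.percentile(boot, [2.5, 97.5]))
    # Null hypothesis: each difference is equally likely to have either sign.
    signs = rng.choice([-1.0, 1.0], size=(n_resamples, diff.size))
    null = (signs * diff).mean(axis=1)
    p = (np.sum(np.abs(null) >= abs(observed)) + 1) / (n_resamples + 1)
    return observed, ci, p
```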

Table 2: Key Metrics for Zero-Shot Evaluation of scFMs

| Evaluation Dimension | Key Metrics | Ideal Outcome | Current scFM Performance |
|---|---|---|---|
| Cell Type Clustering [5] | AvgBio, ASW, ARI | High scores (>0.8) indicating clear separation of cell types | Mixed: scGPT comparable to baselines on some datasets, Geneformer consistently underperforms |
| Batch Integration [5] | batchASW, PCR | Low batchASW, low PCR indicating minimal batch effects | Moderate: scGPT outperforms baselines on complex biological batches, underperforms on technical batches |
| Gene Expression Prediction [6] | Pearson correlation, MSE | High correlation, low error for context-specific genes | Limited: models often predict median expression; slight improvement for highly expressed "housekeeping" genes |
| Biological Conservation | Gene-gene correlation preservation | Maintenance of known biological relationships in embedding space | Varies by model and dataset |

Table 3: Key Resources for scFM Research and Development

| Resource Category | Specific Tools/Databases | Function/Purpose | Access Information |
|---|---|---|---|
| Data Repositories [1] | CZ CELLxGENE Discover, DISCO, Human Cell Atlas | Provide standardized, curated single-cell datasets for model training and benchmarking | Publicly available web portals with API access |
| Pretrained Models [1] [9] [10] | scGPT, Geneformer, cell2sentence, scPlantFormer | Offer pretrained foundation models for transfer learning and zero-shot evaluation | Hugging Face, GitHub repositories, BioLLM framework |
| Computational Frameworks [9] | BioLLM, scGNN+, scVI, Harmony | Provide standardized benchmarking, automated workflows, and baseline comparisons | Open-source Python packages |
| Evaluation Benchmarks [5] | Pancreas dataset, Tabula Sapiens, Immune cell atlas | Curated datasets with known ground truth for systematic model evaluation | Publicly available with standardized preprocessing |
| Interpretability Tools [10] | Transcoders, sparse autoencoders, circuit analysis | Enable mechanistic interpretation of model decisions and biological insights | Custom implementations building on transcoder frameworks |

Critical Analysis and Future Directions

The architecture of current scFMs shows promise but faces significant challenges in zero-shot settings. Recent evaluations reveal that even prominent models like scGPT and Geneformer underperform simpler methods like Highly Variable Genes (HVG) selection or established tools like Harmony and scVI in cell type clustering and batch integration tasks [5] [6]. This performance gap suggests that the masked gene modeling pretraining objective may not be sufficient for developing robust cellular representations that transfer effectively to downstream tasks without fine-tuning [5].

A key limitation stems from the fundamental difference between natural language and biological systems. While language has inherent sequential structure, gene expression data lacks natural ordering, requiring artificial sequencing through gene ranking [1] [11]. This artificial structure may not optimally capture biological relationships. Additionally, current models struggle with polysemanticity in gene expression, where the same gene may play different roles in different cellular contexts [11].

Future architectural improvements should focus on:

- Multimodal Integration: Incorporating additional data modalities (spatial context, epigenomics, proteomics) to provide richer biological context [1] [9].

- Advanced Interpretability: Applying techniques like transcoders to extract biologically meaningful circuits from model weights [10].

- Geometric Learning: Developing embedding spaces that better reflect the underlying biological manifold structure [11].

- Specialized Pretraining: Designing pretraining objectives that more directly align with downstream zero-shot tasks.

For researchers focusing on zero-shot learning with scFMs, rigorous evaluation using the protocols outlined here is essential before deploying these models in discovery settings where labeled data is unavailable [5]. The field would benefit from standardized benchmarks and evaluation practices that properly assess true biological understanding rather than exploiting statistical artifacts [5] [6].

What is Zero-Shot Learning? The Promise of Annotation-Free Discovery

Zero-shot learning (ZSL) represents a paradigm shift in machine learning, enabling models to recognize and categorize objects or concepts without having seen any labeled examples of those specific categories during training [12]. This approach stands in contrast to traditional supervised learning, which requires vast amounts of annotated data for each class the model needs to identify.

In the context of single-cell biology, ZSL offers transformative potential for uncovering novel biological insights without the bottleneck of manual cell annotation [13]. As single-cell technologies generate increasingly massive datasets, the ability to perform annotation-free discovery becomes crucial for identifying novel cell types, rare disease-associated cells, and complex cellular states that may lack established reference data [1] [13]. This protocol explores the application of ZSL principles through single-cell foundation models (scFMs) to advance biological discovery while highlighting current limitations and evaluation benchmarks.

Theoretical Foundations of Zero-Shot Learning

Core Mechanism and Definitions

ZSL operates by leveraging auxiliary information to bridge the gap between classes seen during training and unseen classes encountered during inference [12] [14]. Instead of learning explicit decision boundaries for every possible class, ZSL models learn to map inputs into a semantic space where relationships between concepts can be measured through similarity metrics.

Key Definitions:

- Seen Classes: Categories for which labeled examples are available during training.

- Unseen Classes: Categories for which no labeled examples are available during training.

- Auxiliary Information: Semantic representations that describe classes (e.g., textual descriptions, attributes, or embeddings) [14].

- Generalized Zero-Shot Learning (GZSL): A more challenging setting where test samples may come from both seen and unseen classes [12] [14].

Technical Approaches in ZSL

Table 1: Comparison of Zero-Shot Learning Technical Approaches

| Approach | Mechanism | Auxiliary Information | Common Applications |
|---|---|---|---|
| Attribute-Based | Learns to recognize class-descriptive attributes (e.g., "has wings," "is furry") and composes them to identify unseen classes [12] [15] | Manually defined attribute vectors | Computer vision, object recognition |
| Embedding-Based | Maps both input features and class labels into a shared semantic space where classification is determined by similarity [12] | Word embeddings (Word2Vec, GloVe, BERT), language model representations | Cross-modal retrieval, image captioning |
| Transfer Learning-Based | Leverages knowledge gained from pre-training on large datasets and adapts it to new tasks without additional labeled examples [12] [16] | Pre-trained model parameters | Natural language processing, single-cell biology |
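
The embedding-based approach can be illustrated with nearest-class assignment by cosine similarity. This sketch assumes cells and class descriptions have already been mapped into a shared space by some encoder (not shown, and hypothetical here). The optional `seen_penalty` implements calibrated stacking, a simple GZSL adjustment that subtracts a constant from seen-class scores so unseen classes are not systematically crowded out.

```python
import numpy as np

def zero_shot_classify(cell_embs, class_embs, class_names, is_seen=None, seen_penalty=0.0):
    """Assign each cell to the class whose semantic embedding is most cosine-similar.

    No labeled cells of the target classes are used; only class embeddings.
    """
    c = np.asarray(cell_embs, dtype=float)
    k = np.asarray(class_embs, dtype=float)
    c = c / np.linalg.norm(c, axis=1, keepdims=True)
    k = k / np.linalg.norm(k, axis=1, keepdims=True)
    sims = c @ k.T  # (n_cells, n_classes) cosine similarities
    if is_seen is not None:
        # Calibrated stacking for generalized ZSL: damp seen-class scores by a constant.
        sims = sims - seen_penalty * np.asarray(is_seen, dtype=float)
    return [class_names[i] for i in sims.argmax(axis=1)], sims
```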

Zero-Shot Learning in Single-Cell Foundation Models

Architecture of Single-Cell Foundation Models

Single-cell foundation models (scFMs) are large-scale deep learning models pre-trained on massive single-cell datasets, typically using transformer architectures [1]. These models aim to learn universal biological principles that can be transferred to various downstream tasks with minimal or no additional training.

The typical scFM processing workflow involves:

- Tokenization: Converting raw gene expression data into discrete tokens, often by ranking genes by expression levels or binning expression values [1]

- Embedding: Transforming tokens into vector representations using gene embeddings, value embeddings, and positional embeddings

- Transformer Processing: Applying self-attention mechanisms to model complex gene-gene interactions and capture biological patterns [1] [17]

- Output Generation: Producing latent representations of cells and genes that encode biological knowledge

Diagram 1: Single-Cell Foundation Model Architecture

Zero-Shot Capabilities of scFMs

In theory, scFMs should enable zero-shot biological discovery by leveraging knowledge acquired during pre-training. Potential applications include:

- Novel cell type identification without reference atlases

- Cross-tissue and cross-species generalization

- Rare disease cell detection without labeled examples

- Cellular perturbation prediction for unseen compounds or genetic manipulations

However, recent evaluations of popular scFMs like Geneformer and scGPT reveal significant limitations in their zero-shot capabilities [5]. When assessed on tasks such as cell type clustering and batch integration without fine-tuning, these models frequently underperform simpler baseline methods like Highly Variable Genes (HVG) selection or established integration tools like Harmony and scVI [5].

Table 2: Zero-Shot Performance of Single-Cell Foundation Models on Benchmark Tasks

| Model | Cell Type Clustering (AvgBIO Score) | Batch Integration (iLISI Score) | Novel Cell Type Detection | Reference |
|---|---|---|---|---|
| scGPT | Variable performance across datasets; outperformed by baselines on most benchmarks | Competitive on complex datasets with biological batch effects; outperformed by baselines on datasets dominated by technical batch effects | Limited evaluation available | [5] |
| Geneformer | Consistently underperforms HVG selection and established baselines | Poor performance; embeddings often dominated by batch effects | Not rigorously evaluated | [5] |
| HVG Baseline | Superior performance across most benchmarking datasets | Best overall performance in full-dimensional metrics | Not applicable | [5] |
| scVI | Strong performance on most datasets | Excellent technical batch correction; challenges with biological variation | Not applicable | [5] |

Protocols for Annotation-Free Discovery in Single-Cell Biology

Mixture Modeling for Multiple-Instance Learning (MMIL)

For scenarios where only patient-level labels are available (e.g., disease status) but individual cell labels are unknown, the MMIL protocol provides a practical approach for annotation-free cell classification [13].

Experimental Workflow:

Diagram 2: MMIL Algorithm Workflow

Step-by-Step Protocol:

Data Preparation

- Collect single-cell data from healthy donors (all cells considered baseline)

- Collect single-cell data from diseased patients (mixture of baseline and disease-associated cells)

- Process data using standard normalization and feature selection techniques

Model Initialization

- Initialize probabilities for each cell belonging to baseline or disease-associated class

- Set parameters: ρ (proportion of baseline cells in patients) and ζ (fraction of patient-derived cells in prediction population)

Expectation-Maximization Iteration

- E-Step: Estimate cell labels using current classifier probabilities

- M-Step: Train classifier using estimated cell labels

- Repeat until convergence of model parameters
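The E-step/M-step loop above can be sketched as follows. This is a simplified hard-EM variant written for illustration, not the published MMIL implementation [13]. It assumes a logistic-regression classifier fit by plain gradient descent in NumPy, treats every healthy-donor cell as baseline, and in each E-step relabels the top (1 - ρ) fraction of patient cells by classifier score as disease-associated. The function names are hypothetical, and the ζ parameter is not modeled.

```python
import numpy as np


def predict_proba(X, w, b):
    """Sigmoid of the linear score, clipped to avoid overflow in exp."""
    z = np.clip(X @ w + b, -30, 30)
    return 1.0 / (1.0 + np.exp(-z))


def fit_logistic(X, y, lr=0.1, n_iter=500):
    """Logistic regression fit by batch gradient descent (stand-in classifier)."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(n_iter):
        grad = predict_proba(X, w, b) - y
        w -= lr * X.T @ grad / len(y)
        b -= lr * grad.mean()
    return w, b


def mmil_hard_em(X_healthy, X_patient, rho, max_rounds=20):
    """Hard-EM sketch: healthy cells stay baseline (0); a fraction rho of
    patient cells is kept baseline and the rest are labelled disease (1)."""
    X = np.vstack([X_healthy, X_patient])
    n_h, n_p = len(X_healthy), len(X_patient)
    n_disease = int(round((1 - rho) * n_p))
    y_patient = np.ones(n_p)  # initialisation: all patient cells provisionally disease
    for _ in range(max_rounds):
        # M-step: train the classifier on the current label estimates
        w, b = fit_logistic(X, np.concatenate([np.zeros(n_h), y_patient]))
        # E-step: relabel patient cells by classifier score, respecting rho
        scores = predict_proba(X_patient, w, b)
        new_y = np.zeros(n_p)
        new_y[np.argsort(-scores)[:n_disease]] = 1.0
        if np.array_equal(new_y, y_patient):
            break  # labels stable: treat as converged
        y_patient = new_y
    return y_patient, scores
```

The hard assignment keeps the sketch short. A faithful implementation would propagate soft probabilities and follow the sensitivity analysis on ρ described in the validation step.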

Validation and Interpretation

- Evaluate using cross-validation against any available gold-standard labels

- Perform sensitivity analysis on parameter ρ

- Interpret selected features for biological relevance

Application Example: MMIL was successfully applied to detect leukemia cells in acute myeloid leukemia (AML) using mass cytometry data, achieving performance approaching that of a hematopathologist despite using only patient-level labels during training [13]. The method also demonstrated strong generalization across different tissues, treatment time points, and identification of minimal residual disease (MRD) cells.

Zero-Shot Evaluation Protocol for scFMs

To rigorously assess the zero-shot capabilities of single-cell foundation models, implement the following evaluation protocol:

Embedding Extraction

- Obtain cell embeddings from pre-trained scFMs without any fine-tuning

- Use default model settings and recommended preprocessing steps

Task-Specific Evaluation

- Cell Type Clustering: Apply clustering algorithms to embeddings and compare to known cell type annotations using metrics like Average BIO Score (AvgBIO) and Average Silhouette Width (ASW)

- Batch Integration: Assess the ability to remove technical artifacts while preserving biological variation, using metrics such as iLISI and principal component regression (PCR)

- Novel Cell Type Detection: Evaluate performance on intentionally held-out cell types
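The clustering metrics above can be computed from an embedding, ground-truth labels, and cluster assignments, as in this minimal NumPy sketch. It assumes AvgBIO is the mean of ARI, NMI, and cell-type ASW rescaled to [0, 1] as (ASW + 1) / 2, following the convention of scGPT-style benchmarks (an assumption here; confirm the exact definition used in [5] before comparing numbers). Cluster labels are taken as given, for example from Leiden clustering. The pairwise-distance silhouette is O(n²) in memory, so it suits only small examples. Library implementations such as those in scikit-learn are preferable in practice.

```python
import numpy as np


def _contingency(a, b):
    """Cross-tabulate two label vectors into a count matrix."""
    _, ai = np.unique(a, return_inverse=True)
    _, bi = np.unique(b, return_inverse=True)
    table = np.zeros((ai.max() + 1, bi.max() + 1))
    np.add.at(table, (ai, bi), 1)
    return table


def _pairs(x):
    return x * (x - 1) / 2.0


def adjusted_rand_index(a, b):
    t = _contingency(a, b)
    sum_ij = _pairs(t).sum()
    sum_a, sum_b = _pairs(t.sum(1)).sum(), _pairs(t.sum(0)).sum()
    expected = sum_a * sum_b / _pairs(t.sum())
    max_index = (sum_a + sum_b) / 2.0
    if max_index == expected:
        return 1.0
    return (sum_ij - expected) / (max_index - expected)


def normalized_mutual_info(a, b):
    """NMI with arithmetic-mean normalisation of the two entropies."""
    p = _contingency(a, b) / len(a)
    pa, pb = p.sum(1), p.sum(0)
    nz = p > 0
    mi = (p[nz] * np.log(p[nz] / np.outer(pa, pb)[nz])).sum()
    h = (-(pa * np.log(pa)).sum() - (pb * np.log(pb)).sum()) / 2.0
    return 1.0 if h == 0 else mi / h


def silhouette(X, labels):
    """Mean silhouette width using Euclidean distances (O(n^2) memory)."""
    X, labels = np.asarray(X, dtype=float), np.asarray(labels)
    d = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(-1))
    widths = np.zeros(len(X))
    for i in range(len(X)):
        same = labels == labels[i]
        if same.sum() < 2:
            continue  # singleton clusters get width 0
        a = d[i, same].sum() / (same.sum() - 1)  # excludes the zero self-distance
        b = min(d[i, labels == l].mean() for l in np.unique(labels) if l != labels[i])
        widths[i] = (b - a) / max(a, b)
    return widths.mean()


def avg_bio(embedding, cell_types, clusters):
    """Assumed AvgBIO: mean of ARI, NMI and rescaled cell-type ASW."""
    asw = (silhouette(embedding, cell_types) + 1) / 2.0
    return (adjusted_rand_index(cell_types, clusters)
            + normalized_mutual_info(cell_types, clusters) + asw) / 3.0
```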

Baseline Comparison

- Compare against simple baselines including HVG selection, scVI, and Harmony

- Use consistent evaluation metrics across all methods

- Perform statistical testing to determine significance of performance differences

Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for Zero-Shot Learning in Single-Cell Biology

| Resource Category | Specific Tools/Platforms | Function and Application |
|---|---|---|
| Pre-trained Models | scGPT, Geneformer, UCE, scFoundation, LangCell, scCello [17] | Provide foundational biological knowledge for transfer to new tasks without extensive retraining |
| Benchmark Datasets | CZ CELLxGENE, Human Cell Atlas, PanglaoDB, Asian Immune Diversity Atlas (AIDA) v2 [1] [17] | Curated single-cell datasets with high-quality annotations for model evaluation and development |
| Evaluation Frameworks | scGraph-OntoRWR, Lowest Common Ancestor Distance (LCAD), AvgBIO Score, iLISI [17] | Specialized metrics for assessing biological relevance and technical performance of zero-shot methods |
| Baseline Methods | Highly Variable Genes (HVG) selection, Harmony, scVI, Seurat [5] [17] | Established computational methods for comparison against novel zero-shot approaches |

Discussion and Future Directions

The promise of annotation-free discovery in single-cell biology through zero-shot learning remains compelling, though current implementations face significant challenges. While methods like MMIL demonstrate practical pathways for cell classification without complete labels [13], the zero-shot performance of large foundation models requires substantial improvement to fulfill their theoretical potential [5].

Critical areas for future development include:

- Improving pretraining objectives to yield more biologically meaningful representations

- Developing better evaluation protocols that capture real-world discovery scenarios

- Addressing domain shift between pretraining data and target applications

- Creating more transparent and interpretable model architectures

As these challenges are addressed, zero-shot learning approaches are poised to transform single-cell research by enabling truly exploratory analysis unconstrained by pre-existing annotations, potentially accelerating discovery of novel cell types, disease mechanisms, and therapeutic targets.

Single-cell foundation models (scFMs) represent a transformative approach in computational biology, leveraging large-scale deep learning architectures pretrained on vast single-cell datasets to enable a wide range of downstream tasks [1]. These models typically employ transformer-based architectures that learn the fundamental "language" of cells by processing gene expression profiles as textual sequences, where individual genes serve as tokens and complete cell profiles form sentences [1]. The pretraining phase is critical for developing models that can generalize across diverse biological contexts and perform effectively in zero-shot learning scenarios—where models must make predictions on new data without task-specific fine-tuning [5].

The performance and generalizability of scFMs are fundamentally constrained by the quality, scale, and diversity of their pretraining data. Large, well-annotated, and standardized datasets allow models to capture the complex biological variation present across tissues, cell types, developmental stages, and disease states [1]. This application note provides a comprehensive overview of three pivotal public data resources—CELLxGENE, GEO, and the Human Cell Atlas—that collectively provide the foundational data infrastructure for developing robust scFMs capable of strong zero-shot performance.

Data Resource Comparative Analysis

Table 1: Key Characteristics of Public Data Sources for scFM Pretraining

| Resource | Primary Content | Data Scale | Access Method | Update Frequency | Embeddings/Models |
|---|---|---|---|---|---|
| CZ CELLxGENE Discover | Standardized single-cell transcriptomics data from healthy human and mouse tissues [18] | 33M+ unique cells; 436 datasets; 2.7K+ cell types [18] | Web portal; Census API (Python/R) [19] | Weekly (latest); Long-term supported (LTS) releases every 6 months [19] | scVI, scGPT, Geneformer, UCE embeddings [20] [19] |
| Human Cell Atlas (HCA) | Multimodal single-cell data from international consortium; tissue-specific biological networks [21] [22] | 30M+ cells (as of 2022); Regular additions of new projects [22] | Data Portal; Managed access for controlled data [22] | Regular monthly updates with new projects and tissues [22] | Spatial transcriptomics; Emerging atlas-specific embeddings |
| NCBI GEO | Heterogeneous omics data from individual studies; microarray and sequencing data | Not quantified here | Web portal; Programmatic access | Continuous submission | Limited standardized embeddings |

Qualitative Assessment of Resource Utility

Table 2: Strategic Application of Data Resources in scFM Development

| Resource | Strengths for scFM Pretraining | Limitations for scFM Pretraining | Optimal Use Cases |
|---|---|---|---|
| CELLxGENE | Standardized processing: Uniform data curation and annotation enables seamless integration [18]. Dedicated embeddings: Precomputed embeddings (scVI, scGPT) facilitate transfer learning [19]. Reproducible access: Versioned Census releases ensure model reproducibility [19]. | Limited modality diversity: Primarily focused on transcriptomics with emerging multimodal support [18]. | Primary pretraining corpus: Ideal for building generalizable foundational models. Benchmarking: Standardized data enables fair model comparisons. |
| Human Cell Atlas | Spatial context: Increasing spatial transcriptomics data provides architectural context [21]. Tissue networks: Organized by biological systems (e.g., Lung Network, Heart Network) [22]. Diversity focus: Explicit emphasis on population diversity in recent initiatives [21]. | Data heterogeneity: Variable processing pipelines can introduce technical artifacts. Access complexity: Managed access requirements for some datasets create barriers [22]. | Specialized scFMs: Tissue-specific or spatially-aware foundation models. Diversity enhancement: Augmenting training data with population variation. |
| NCBI GEO | Extensive repository: Largest collection of diverse omics datasets. Methodological breadth: Captures wide range of experimental protocols and conditions. | Standardization challenges: Heterogeneous processing requires significant preprocessing. Metadata inconsistency: Variable annotation quality complicates data integration. | Data augmentation: Supplementing primary training corpora with specialized datasets. Transfer learning evaluation: Testing model generalization across heterogeneous data. |

Experimental Protocols for Data Utilization in scFM Research

Protocol 1: Constructing a Pretraining Corpus from CELLxGENE Census

Principle: Assemble a high-quality, diverse pretraining dataset from CELLxGENE Census that maximizes biological variation while minimizing technical artifacts [1] [19].

Procedure:

- Census Access: Use the CELLxGENE Census API to access the most recent Long-Term Supported (LTS) release for reproducibility [19].

- Quality Filtering: Apply uniform quality control metrics, retaining cells with 500 to 5,000 detected genes and mitochondrial reads below 20%, to remove low-quality cells and potential artifacts [1].

- Gene Selection: Filter for protein-coding genes expressed in at least 0.1% of cells to focus on biologically relevant features and reduce noise [1].

- Dataset Balancing: Strategically sample cells across tissues, donors, and conditions to prevent bias toward overrepresented populations (e.g., blood cells) [1].

- Metadata Integration: Incorporate standardized metadata (tissue, cell type, development stage, disease status) as conditional inputs or for stratified sampling [18] [19].

- Train-Validation Split: Partition data at the donor or study level to prevent data leakage and ensure realistic evaluation of model generalizability.
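The quality-filtering, gene-selection, and donor-level split steps can be sketched in NumPy as below. This assumes a dense cells × genes count matrix already retrieved from Census (the retrieval call is omitted), plus boolean gene annotations marking mitochondrial and protein-coding genes. The thresholds default to the values listed above. The function names are hypothetical, dataset balancing is not shown, and in practice Scanpy's QC utilities on sparse matrices would typically be used.

```python
import numpy as np


def qc_filter(counts, is_mito, is_protein_coding, min_genes=500, max_genes=5000,
              max_mito_frac=0.20, min_cell_frac=0.001):
    """Return boolean masks of cells and genes that pass the QC thresholds."""
    counts = np.asarray(counts, dtype=float)
    is_mito = np.asarray(is_mito, dtype=bool)
    genes_per_cell = (counts > 0).sum(axis=1)
    totals = counts.sum(axis=1)
    mito = counts[:, is_mito].sum(axis=1)
    mito_frac = np.divide(mito, totals, out=np.zeros_like(totals), where=totals > 0)
    keep_cells = ((genes_per_cell >= min_genes) & (genes_per_cell <= max_genes)
                  & (mito_frac < max_mito_frac))
    kept = counts[keep_cells]
    if kept.shape[0] == 0:
        return keep_cells, np.zeros(counts.shape[1], dtype=bool)
    # fraction of retained cells in which each gene is detected
    frac_expressing = (kept > 0).mean(axis=0)
    keep_genes = np.asarray(is_protein_coding, dtype=bool) & (frac_expressing >= min_cell_frac)
    return keep_cells, keep_genes


def donor_level_split(donor_ids, val_frac=0.2, seed=0):
    """Assign whole donors to train or validation so no donor spans both."""
    donor_ids = np.asarray(donor_ids)
    donors = np.unique(donor_ids)
    rng = np.random.default_rng(seed)
    n_val = max(1, int(round(val_frac * len(donors))))
    val_donors = rng.choice(donors, size=n_val, replace=False)
    is_val = np.isin(donor_ids, val_donors)
    return ~is_val, is_val
```

Splitting by donor rather than by cell keeps every cell from a held-out donor out of training, which is what makes the validation score a test of generalization to new individuals.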

Technical Considerations:

- Batch Effects: Preserve study identifiers as batch labels for potential correction during model training or evaluation [1].

- Reproducibility: Record exact Census version (e.g., "2025-01-30") and all filtering parameters for experimental replication [19].

Protocol 2: Zero-Shot Evaluation of scFMs for Cell Type Annotation

Principle: Evaluate scFM embeddings zero-shot for cell type annotation to assess inherent biological understanding without task-specific fine-tuning [5].

Procedure:

- Benchmark Curation: Compile evaluation datasets encompassing diverse tissues and technologies not seen during pretraining (e.g., Tabula Sapiens, Pancreas, PBMC datasets) [5].

- Embedding Generation: Pass held-out datasets through the pretrained scFM without updating model weights to generate cell embeddings in a zero-shot manner [5].

- Baseline Comparison: Compare against established methods including:

  - Highly Variable Genes (HVG): Standardized feature selection followed by PCA.

  - Harmony: Batch integration method for removing technical variation.

  - scVI: Probabilistic generative model for single-cell data [5].

- Quantitative Metrics: Calculate multiple complementary performance metrics:

  - Average BIO Score: Measures cell type clustering purity and separation.

  - Average Silhouette Width (ASW): Quantifies cluster compactness and distinction [5].

- Qualitative Assessment: Visualize embeddings using UMAP or t-SNE to inspect cell type separation and batch integration.

Critical Interpretation:

- Current scFMs (scGPT, Geneformer) may underperform simpler methods (HVG, scVI) in zero-shot cell type annotation, highlighting limitations in their pretrained biological representations [5].

- Performance varies significantly across tissues and technologies, indicating context-dependent utility [5].

Table 3: Critical Computational Tools for scFM Development and Evaluation

| Resource Category | Specific Tools/Platforms | Primary Function in scFM Research |
|---|---|---|
| Data Repositories | CZ CELLxGENE Census [18] [19] | Provides standardized, analysis-ready single-cell data for model pretraining. |
| | HCA Data Portal [22] | Supplies diverse, multi-tissue single-cell data with spatial context. |
| Model Architectures | scGPT [1] [5] | Transformer-based foundation model for single-cell biology using GPT architecture. |
| | Geneformer [5] | Transformer model trained on transcriptomic data for cellular network inference. |
| Evaluation Frameworks | Zero-shot benchmarking pipeline [5] | Standardized protocol for assessing scFM performance without fine-tuning. |
| Analysis Ecosystems | Scanpy, Seurat | Standard single-cell analysis toolkits for preprocessing and evaluation. |
| | TensorFlow, PyTorch | Deep learning frameworks for model implementation and training. |

Critical Analysis of Current Limitations and Future Directions

Despite their transformative potential, current scFMs face significant challenges in zero-shot learning scenarios. Recent evaluations reveal that proposed foundation models like scGPT and Geneformer may underperform simpler baseline methods (e.g., HVG selection, scVI, Harmony) on tasks including cell type clustering and batch integration when applied zero-shot [5]. This performance gap suggests potential limitations in how effectively these models learn transferable biological principles during pretraining.

Key limitations impacting zero-shot performance include:

- Architectural Constraints: The masked language model pretraining objective may not optimally capture biological relationships essential for zero-shot generalization [5].

- Data Quality Variation: Inconsistencies in data quality and processing across studies introduce confounding technical artifacts that models must disentangle [1].

- Interpretability Challenges: Extracting biologically meaningful insights from the latent representations of scFMs remains nontrivial, complicating model debugging and improvement [1].

Future development should prioritize:

- Improved Pretraining Objectives: Designing tasks that explicitly encourage learning of biological mechanisms rather than technical correlations.

- Multimodal Integration: Incorporating simultaneous training on transcriptomic, epigenetic, proteomic, and spatial data to create more comprehensive cellular representations [1].

- Standardized Evaluation: Establishing unified benchmarks for zero-shot performance assessment across diverse biological tasks [5].

The development of robust single-cell foundation models with strong zero-shot learning capabilities depends critically on strategic utilization of public data resources. CELLxGENE provides the most standardized and accessible pretraining corpus, while the Human Cell Atlas offers valuable spatial and tissue-specific data, and GEO supplies specialized datasets for augmentation. Researchers must carefully consider the tradeoffs between standardization, scale, and diversity when constructing pretraining corpora. Rigorous zero-shot evaluation remains essential for validating true biological understanding rather than dataset-specific memorization. As these data resources continue to expand and evolve, they should help enable the next generation of scFMs capable of genuine biological discovery through zero-shot inference.

Masked Gene Modeling (MGM) has emerged as a predominant self-supervised pretraining task for single-cell foundation models (scFMs). Inspired by masked language modeling in natural language processing, MGM trains models to reconstruct randomly masked portions of a cell's gene expression profile. This task forces the model to learn the underlying biological principles and complex gene-gene relationships that define cellular states, enabling the development of general-purpose representations transferable to diverse downstream analyses in a zero-shot manner [1] [17].

The core premise is that by exposing a model to millions of cells encompassing myriad tissues and conditions, it can learn fundamental, transferable patterns of biology. During pretraining, models develop rich internal representations of cells and genes that can be applied to new datasets without additional task-specific training, which is crucial for exploratory biological research where labels are often unknown or costly to obtain [5] [1].

Key Architectural Components and Implementation

Tokenization and Input Representation

A critical step in adapting transformer architectures to single-cell RNA-seq (scRNA-seq) data is tokenization—converting raw gene expression values into discrete input units. Unlike words in a sentence, genes lack a natural sequential order, necessitating specific strategies to structure the model input.

Common Tokenization Strategies:

- Gene Ranking by Expression: Genes are ordered based on their expression magnitude within each cell, creating a deterministic sequence of the top N genes [1] [17]. This approach is used by models like Geneformer and LangCell [17].

- Expression Value Binning: Continuous expression values are discretized into bins or categories, and the binned values are used as inputs [1]. scGPT, for instance, employs value binning for its value embeddings [17].

- Normalized Counts: Some models, such as scFoundation, forgo gene ordering and directly use normalized counts, projecting the values into an embedding space [17].
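The two most common strategies can be illustrated on a single cell's expression vector. This is a simplified sketch, not any model's actual preprocessing code. `rank_tokenize` orders expressed genes by value, optionally after dividing by per-gene scaling factors; Geneformer, for example, scales by gene-wise nonzero medians computed over its pretraining corpus. `bin_values` applies per-cell quantile binning in the spirit of scGPT's value binning. The default of 51 bins and both helper names are assumptions.

```python
import numpy as np


def rank_tokenize(expr, gene_ids, top_n=2048, gene_scale=None):
    """Rank-value encoding: expressed genes ordered by descending expression."""
    x = np.asarray(expr, dtype=float)
    if gene_scale is not None:
        x = x / np.asarray(gene_scale, dtype=float)  # e.g. per-gene medians
    expressed = np.flatnonzero(x > 0)
    # stable sort keeps the input order for tied values
    order = expressed[np.argsort(-x[expressed], kind="stable")]
    return [gene_ids[i] for i in order[:top_n]]


def bin_values(expr, n_bins=51):
    """Per-cell quantile binning: zeros -> 0, nonzero values -> 1..n_bins-1."""
    x = np.asarray(expr, dtype=float)
    bins = np.zeros(x.shape, dtype=int)
    nonzero = x > 0
    if nonzero.any():
        # n_bins - 1 equal-frequency intervals over this cell's nonzero values
        edges = np.quantile(x[nonzero], np.linspace(0.0, 1.0, n_bins))
        bins[nonzero] = np.digitize(x[nonzero], edges[1:-1]) + 1
    return bins


genes = ["A", "B", "C", "D", "E"]
cell = [0, 5, 1, 0, 3]
print(rank_tokenize(cell, genes))  # ['B', 'E', 'C']
print(bin_values(cell, n_bins=3))  # [0 2 1 0 2]
```

Ranking discards absolute magnitudes but is robust to scale differences between datasets. Binning keeps a coarse notion of magnitude while still normalizing within each cell.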

Table 1: Input Representation in Selected Single-Cell Foundation Models

| Model Name | # Input Genes | Value Embedding | Gene Symbol Embedding | Positional Embedding |
|---|---|---|---|---|
| Geneformer [17] | 2048 ranked genes | Ordering | Lookup Table (512d) | ✓ |
| scGPT [17] | 1200 HVGs | Value binning | Lookup Table (512d) | × |
| scFoundation [17] | ~19,000 genes | Value projection | Lookup Table (768d) | × |
| UCE [17] | 1024 sampled genes | / | Protein Embedding (5120d) | ✓ |

After tokenization, all tokens are converted into embedding vectors, which are processed by the transformer layers. Special tokens, such as those representing cell identity or assay modality, may be prepended to provide additional context [1].

Model Architectures and Pretraining Objectives

Most scFMs are built on the transformer architecture. Two primary variants are employed:

- Encoder-only Models (BERT-like): These models use a bidirectional attention mechanism, meaning all genes in a cell can attend to all other genes simultaneously to reconstruct the masked ones. This architecture is well-suited for tasks focused on generating high-quality cell and gene embeddings for classification and analysis [1]. scBERT is an example of this approach [1].

- Decoder-only Models (GPT-like): These models use a unidirectional or masked self-attention mechanism, where the model predicts the next or a masked gene conditioned only on the preceding, known genes. This architecture is often used for generative tasks [1]. scGPT is a prominent decoder-based model [1] [17].
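In practice, the two variants differ mainly in their attention mask. The sketch below computes scaled dot-product attention weights in NumPy with either no mask (bidirectional, encoder-style) or a lower-triangular mask (causal, decoder-style). It is a generic illustration, and real scFMs may use more specialized masking schemes than either of these.

```python
import numpy as np


def attention_weights(q, k, causal=False):
    """Softmax(QK^T / sqrt(d)) with an optional causal (lower-triangular) mask."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    if causal:
        allowed = np.tril(np.ones(scores.shape, dtype=bool))
        scores = np.where(allowed, scores, -np.inf)  # block attention to later tokens
    scores = scores - scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    return weights / weights.sum(axis=1, keepdims=True)


rng = np.random.default_rng(0)
genes = rng.normal(size=(4, 8))  # 4 gene tokens, 8-dimensional embeddings
bidirectional = attention_weights(genes, genes)
causal = attention_weights(genes, genes, causal=True)
print(np.round(causal, 2))  # upper triangle is zero: token i sees only tokens <= i
```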

The primary pretraining objective is the reconstruction of masked gene expression values. The model is trained to minimize the difference between the predicted and actual expression values for the masked genes, using losses such as Mean Squared Error (MSE) or Cross-Entropy (CE) [17].
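A minimal NumPy sketch of the masking step and a masked MSE loss follows. The 15% masking ratio follows the BERT convention, and the sentinel value of -1 (chosen because expression values are non-negative) is arbitrary. Both are assumptions, since masking ratios and mask tokens differ between models. The model's forward pass is omitted, and a dummy prediction stands in for its output.

```python
import numpy as np


def mask_genes(values, mask_ratio=0.15, mask_value=-1.0, seed=0):
    """Randomly mask a fixed fraction of gene positions in each cell (row)."""
    rng = np.random.default_rng(seed)
    values = np.asarray(values, dtype=float)
    n_cells, n_genes = values.shape
    n_mask = max(1, int(round(mask_ratio * n_genes)))
    mask = np.zeros(values.shape, dtype=bool)
    for i in range(n_cells):
        mask[i, rng.choice(n_genes, size=n_mask, replace=False)] = True
    masked_input = np.where(mask, mask_value, values)  # what the model sees
    return masked_input, mask


def masked_mse(pred, target, mask):
    """Reconstruction loss computed only on the masked positions."""
    err = (np.asarray(pred, dtype=float) - np.asarray(target, dtype=float))[mask]
    return float(np.mean(err ** 2))


expr = np.random.default_rng(1).random((4, 20))  # 4 cells x 20 genes (toy values)
masked_input, mask = mask_genes(expr)
# a real model would predict expr from masked_input; a dummy prediction is used here
dummy_pred = np.zeros_like(expr)
print(mask.sum(axis=1), masked_mse(dummy_pred, expr, mask))
```

Restricting the loss to masked positions is what turns the task into inference of hidden genes from their context, rather than copying visible inputs.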

Quantitative Performance of MGM-Pretrained Models

Evaluating the zero-shot performance of scFMs—where pretrained models are applied directly to new tasks without fine-tuning—is critical for assessing the true generalizable knowledge acquired during pretraining. This is especially important in discovery settings where labels are unknown [5].

Performance on Cell Type Clustering

In zero-shot cell type clustering, embeddings from MGM-pretrained models are used directly for clustering, and the results are compared to known cell type labels.

Table 2: Zero-shot Cell Type Clustering Performance (AvgBIO Score) [5]

| Model / Method | PBMC (12k) | Pancreas | Immune | Tabula Sapiens |
|---|---|---|---|---|
| HVG (Baseline) | 0.65 | 0.62 | 0.69 | 0.66 |
| scVI (Baseline) | 0.63 | 0.65 | 0.66 | 0.64 |
| Harmony (Baseline) | 0.64 | 0.63 | 0.65 | 0.63 |
| scGPT | 0.67 | 0.59 | 0.60 | 0.61 |
| Geneformer | 0.55 | 0.52 | 0.55 | 0.54 |

As shown in Table 2, established baselines like Highly Variable Genes (HVG), scVI, and Harmony often outperform or match the performance of foundation models like scGPT and Geneformer in this zero-shot setting. This suggests that MGM pretraining does not automatically guarantee superior cell type separation without fine-tuning [5].
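Whether gaps like these are statistically meaningful depends heavily on how many datasets are compared. As an illustration, the exact paired sign-flip permutation test below is applied to the HVG and Geneformer AvgBIO values from Table 2. The test is a generic nonparametric choice and not necessarily the one used in [5], and the function name is hypothetical.

```python
import itertools

import numpy as np


def paired_sign_flip_test(scores_a, scores_b):
    """Exact two-sided sign-flip permutation test on paired per-dataset scores."""
    diffs = np.asarray(scores_a, dtype=float) - np.asarray(scores_b, dtype=float)
    observed = abs(diffs.mean())
    flips = list(itertools.product([1.0, -1.0], repeat=len(diffs)))
    # count sign assignments whose |mean difference| is at least as extreme
    n_extreme = sum(abs((diffs * np.array(s)).mean()) >= observed - 1e-12 for s in flips)
    return diffs.mean(), n_extreme / len(flips)


# AvgBIO per dataset (PBMC 12k, Pancreas, Immune, Tabula Sapiens) from Table 2
hvg = [0.65, 0.62, 0.69, 0.66]
geneformer = [0.55, 0.52, 0.55, 0.54]
mean_gap, p_value = paired_sign_flip_test(hvg, geneformer)
print(round(mean_gap, 3), p_value)  # 0.115 0.125
```

With only four datasets, the smallest attainable two-sided p-value is 2/16 = 0.125, so even a gap that is consistent across every dataset cannot reach p < 0.05. More datasets, or resampling within each dataset, are needed to support a formal significance claim.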

Performance on Batch Integration

Batch integration aims to remove technical variation between datasets while preserving biological differences. Performance is measured by how well cells from different batches are mixed (Batch Mixing Score), how much cell type separation is retained (Cell-type ASW), and how much embedding variance is explained by batch (PCR Batch).

Table 3: Zero-shot Batch Integration Performance [5]

| Model / Method | Batch Mixing Score (↑) | Cell-type ASW (↑) | PCR Batch (↓) |
|---|---|---|---|
| HVG (Baseline) | 0.72 | 0.63 | 0.41 |
| scVI (Baseline) | 0.68 | 0.65 | 0.38 |
| Harmony (Baseline) | 0.65 | 0.64 | 0.45 |
| scGPT | 0.63 | 0.59 | 0.49 |
| Geneformer | 0.51 | 0.53 | 0.68 |

In batch integration, HVG selection again demonstrates strong performance. Geneformer's embeddings, in particular, were found to have a higher proportion of variance explained by batch effects than the original data, indicating inadequate batch mixing in a zero-shot context [5].

Experimental Protocol for Zero-Shot Evaluation

This protocol outlines the steps to evaluate the zero-shot capabilities of an MGM-pretrained model on a new target dataset for cell type clustering and batch integration.

Materials and Software Requirements

- Computing Environment: A machine with a GPU (e.g., NVIDIA V100 or A100) is recommended for faster inference, though CPU is feasible.

- Software: Python (>=3.8), PyTorch or TensorFlow, and the specific model's library (e.g., scGPT, Geneformer).

- Target Dataset: A preprocessed scRNA-seq dataset (e.g., in AnnData or Seurat format) with held-out cell type labels and batch information for evaluation.

Step-by-Step Procedure

Model Acquisition and Loading:

- Download the pretrained model weights for the scFM (e.g., from a GitHub repository or model hub).

- Load the model into memory using the corresponding library, ensuring it is in evaluation/inference mode.

Target Data Preprocessing:

- Quality Control: Filter out low-quality cells based on metrics like UMI counts, number of genes detected, and mitochondrial read percentage [23]. Filter out lowly expressed genes.

- Gene Alignment: Map the genes in the target dataset to the gene vocabulary used during the model's pretraining. This may require filtering for a common set of genes or handling missing genes as defined by the model's authors.

- Normalization: Apply the normalization method (e.g., log1p, library size normalization) that is compatible with the loaded scFM.
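The gene alignment step can be sketched as reindexing the count matrix into the model's vocabulary order. Missing genes are handled in a model-specific way. This sketch assumes they are zero-filled and flagged with a mask, and it reports vocabulary coverage so that poorly matched datasets can be caught early. The function name is hypothetical. Depending on the model, matching may need to use Ensembl IDs rather than gene symbols.

```python
import numpy as np


def align_to_vocab(counts, dataset_genes, vocab):
    """Reorder columns of a cells x genes matrix to match a model vocabulary.

    Genes absent from the dataset are zero-filled and marked False in `present`;
    dataset genes absent from the vocabulary are dropped.
    """
    counts = np.asarray(counts, dtype=float)
    col = {g: j for j, g in enumerate(dataset_genes)}
    aligned = np.zeros((counts.shape[0], len(vocab)))
    present = np.zeros(len(vocab), dtype=bool)
    for i, gene in enumerate(vocab):
        j = col.get(gene)
        if j is not None:
            aligned[:, i] = counts[:, j]
            present[i] = True
    coverage = present.mean() if len(vocab) else 0.0
    return aligned, present, coverage


# "GENE_X" is a hypothetical dataset gene that is not in the vocabulary
aligned, present, coverage = align_to_vocab(
    [[3, 0, 7]], dataset_genes=["CD3E", "MS4A1", "GENE_X"], vocab=["MS4A1", "CD3E", "CD14"]
)
print(aligned, present, coverage)
```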

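Zero-Shot Embedding Generation:

- Pass the preprocessed target data through the pretrained model in inference mode, without updating model weights, to extract an embedding for each cell [5].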
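How a single cell embedding is read out of the model differs between scFMs. Some use the output at a dedicated cell-level token, while others average the gene-token outputs. The sketch below shows only the averaging variant, applied to a hypothetical array of per-token outputs with a padding mask. It assumes the forward pass has already been run in inference mode with gradients disabled.

```python
import numpy as np


def mean_pool(token_outputs, attention_mask):
    """Average token embeddings over non-padding positions.

    token_outputs: (n_cells, seq_len, dim) array of per-token model outputs.
    attention_mask: (n_cells, seq_len) array, 1 for real tokens and 0 for padding.
    """
    mask = np.asarray(attention_mask, dtype=float)[:, :, None]
    summed = (np.asarray(token_outputs, dtype=float) * mask).sum(axis=1)
    counts = np.clip(mask.sum(axis=1), 1.0, None)  # avoid dividing by zero
    return summed / counts


outputs = np.arange(12, dtype=float).reshape(2, 3, 2)  # 2 cells, 3 tokens, dim 2
mask = np.array([[1, 1, 0],   # cell 0: last position is padding
                 [1, 1, 1]])
print(mean_pool(outputs, mask))  # [[1. 2.] [8. 9.]] (printed on two lines)
```

Excluding padding matters because cells express different numbers of genes. Averaging over padded positions would make the embedding depend on sequence length rather than on expression.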

Downstream Task Application:

- Cell Type Clustering:

  - Build a k-nearest-neighbor graph on the extracted cell embeddings; use UMAP or t-SNE only for visualization.

  - Cluster the cells using a standard graph-based algorithm such as Leiden or Louvain.

  - Compare the clusters to the held-out ground-truth cell type labels using metrics like Adjusted Rand Index (ARI), Normalized Mutual Information (NMI), or Average BIO Score (AvgBIO) [5].

- Batch Integration Assessment:

  - Visualize the embeddings, coloring cells by batch and by cell type.

  - Quantitatively evaluate using metrics like:

    - Batch Mixing Score: Measures the degree of mixing between different batches.

    - Average Silhouette Width (ASW) for Cell-type: Assesses the preservation of biological variation.

    - Principal Component Regression (PCR) Batch: Quantifies the amount of variance explained by batch effects [5].

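The PCR batch score can be sketched as a variance-weighted R² from regressing each principal component of the embedding on batch. This follows the general idea of the scIB-style metric, but the exact normalization used here is an assumption: each PC is weighted by its share of variance among the computed components. The function name is hypothetical. For a categorical batch label, the regression R² equals the fraction of a PC's variance explained by the batch means, and lower values indicate better batch removal.

```python
import numpy as np


def pcr_batch(embedding, batch, n_comps=20):
    """Variance-weighted R^2 of batch on the top principal components (lower is better)."""
    X = np.asarray(embedding, dtype=float)
    batch = np.asarray(batch)
    X = X - X.mean(axis=0)
    U, S, _ = np.linalg.svd(X, full_matrices=False)
    k = min(n_comps, len(S))
    pcs = U[:, :k] * S[:k]            # PC scores
    pc_var = S[:k] ** 2               # proportional to each PC's variance
    r2 = np.zeros(k)
    for j in range(k):
        y = pcs[:, j]
        ss_total = ((y - y.mean()) ** 2).sum()
        # within-batch sum of squares; R^2 = 1 - SS_within / SS_total
        ss_within = sum(((y[batch == b] - y[batch == b].mean()) ** 2).sum()
                        for b in np.unique(batch))
        r2[j] = 1.0 - ss_within / ss_total if ss_total > 0 else 0.0
    return float((pc_var * r2).sum() / pc_var.sum())


rng = np.random.default_rng(0)
batch = np.array(["a"] * 50 + ["b"] * 50)
mixed = rng.normal(size=(100, 5))
separated = mixed.copy()
separated[:50, 0] += 10.0  # batch "a" shifted along one axis
print(pcr_batch(mixed, batch), pcr_batch(separated, batch))
```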

Benchmarking:

- Compare the performance of the scFM embeddings against established baseline methods, such as using Highly Variable Genes (HVG) directly, or embeddings from scVI and Harmony [5].

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Reagents and Resources for MGM Pretraining and Evaluation

| Category | Item | Description and Function |
|---|---|---|
| Data Resources | CZ CELLxGENE Census [5] [1] | A unified resource providing access to millions of curated and standardized single-cell datasets, serving as a primary source for pretraining data. |
| | Human Cell Atlas [1] | A reference map of all human cells, providing comprehensive data on cell types and states across tissues. |
| | Gene Expression Omnibus (GEO) [1] | A public functional genomics data repository that hosts a vast number of submitted single-cell sequencing studies. |
| Software & Models | scGPT [5] [17] | A transformer-based foundation model pretrained on 33 million human cells using MGM. Supports multiple omics modalities. |
| | Geneformer [5] [17] | A transformer model pretrained on 30 million cells, using a ranked-genes approach for tokenization and MGM. |
| | Seurat / Scanpy [23] | Standard toolkits for single-cell data analysis, used for preprocessing, visualization, and benchmarking. |
| Evaluation Metrics | AvgBIO Score [5] | A composite metric for evaluating cell type clustering quality, combining multiple clustering benchmarks. |
| | Batch Mixing Score [5] | Quantifies how well batches are integrated in the latent space. |
| | Cell-type ASW [5] | Average Silhouette Width; measures the preservation of cell type separation after integration. |

Masked Gene Modeling is a powerful self-supervised paradigm for learning generalizable representations of single-cell biology. However, rigorous zero-shot evaluation reveals that current MGM-pretrained models do not consistently outperform simpler baseline methods on tasks like cell type clustering and batch integration, highlighting a significant challenge for the field [5].

Future work should focus on improving the pretraining objectives and model architectures to learn more transferable and biologically meaningful representations. The development of benchmarks that more directly assess a model's capacity for zero-shot biological discovery, beyond just technical tasks, will be crucial. As models scale and training datasets become larger and more diverse, the promise of scFMs to serve as robust, plug-and-play tools for zero-shot learning in biomedical research remains a central and achievable goal [1] [17].

Practical Applications and Methodological Approaches in Zero-Shot Analysis

Zero-Shot Cell Type Annotation and Novel Cell Type Discovery

Zero-shot learning represents a paradigm shift in the analysis of single-cell RNA sequencing (scRNA-seq) data. In contrast to supervised methods that require extensive labeled datasets for training, zero-shot approaches leverage pre-existing knowledge to annotate cell types and discover novel cellular states without task-specific fine-tuning [5]. This capability is critically important for exploratory biological research where comprehensive cell type labels are unknown or incomplete. The emergence of single-cell foundation models (scFMs), pretrained on millions of cells, promises to unlock this potential by learning universal biological representations transferable to diverse downstream tasks [24] [9].

The zero-shot paradigm is particularly valuable for discovering novel cell types and states that fall outside existing classification schemas. In clinical and drug development contexts, this enables researchers to identify previously uncharacterized cell populations in disease microenvironments or in response to treatment, potentially revealing new therapeutic targets [4]. However, rigorous benchmarking studies have revealed significant limitations in current scFMs, which sometimes underperform simpler methods in zero-shot settings [5] [4]. This application note synthesizes current methodologies, performance benchmarks, and experimental protocols to establish robust practices for zero-shot cell type annotation and novel cell discovery.

Performance Benchmarking of scFMs in Zero-Shot Tasks

Comprehensive evaluations of scFM performance reveal a complex landscape where no single model consistently outperforms others across all tasks. The table below summarizes key findings from recent large-scale benchmarking studies.

Table 1: Zero-Shot Performance of Single-Cell Foundation Models for Cell Type Annotation

| Model | Pretraining Corpus | Key Strengths | Performance Notes | Limitations |
|---|---|---|---|---|
| scGPT | 33 million human cells [9] | Cross-species annotation, multi-omic integration [9] | Inconsistent zero-shot clustering; outperformed by HVGs on some datasets [5] | Embeddings sometimes retain batch effects; variable performance across tissues [5] |
| Geneformer | 27 million human cells [4] | Gene network inference, developmental trajectories [4] | Underperforms HVG, scVI, and Harmony in clustering (AvgBIO score) [5] | Poor batch integration; embeddings often cluster by batch rather than cell type [5] |
| scPlantFormer | 1 million plant cells (Arabidopsis thaliana) [9] | Cross-species annotation (92% accuracy) [9] | Specialized for plant systems; limited evaluation in human contexts | Domain-specific applicability |
| LangCell | Not specified | Gene embedding quality | Competitive on gene-level tasks [4] | Cell-level performance varies [4] |

Table 2: Comparison of Zero-Shot Performance Against Established Baselines

| Method | Category | Cell Type Clustering | Batch Integration | Novelty Detection |
|---|---|---|---|---|
| scGPT (zero-shot) | Foundation Model | Variable across datasets [5] | Moderate (better on complex biological batches) [5] | Limited published evidence |
| Geneformer (zero-shot) | Foundation Model | Consistently outperformed by baselines [5] | Poor (high batch effect retention) [5] | Limited published evidence |
| HVG Selection | Traditional | Robust performance across datasets [5] | Excellent quantitative scores [5] | Limited capability |
| scVI | Generative Model | Strong performance [5] | Excellent for technical variation [5] | Established capability |
| Harmony | Integration Algorithm | Strong performance [5] | Excellent for technical batches [5] | Limited capability |

Notably, a zero-shot evaluation of scGPT and Geneformer revealed that both models were outperformed by simpler methods like highly variable gene (HVG) selection and established integration algorithms such as Harmony and scVI on cell type clustering tasks, as measured by average BIO (AvgBIO) scores [5]. This performance gap highlights the critical need for rigorous benchmarking before deploying scFMs in research pipelines.

Experimental Protocols for Zero-Shot Annotation

Protocol 1: Zero-Shot Cell Type Annotation Using Precomputed Embeddings

Purpose: To annotate cell types in a target scRNA-seq dataset using pre-trained foundation models without fine-tuning.

Materials:

- Target scRNA-seq dataset (count matrix)

- Pretrained scFM (e.g., scGPT, Geneformer)

- Reference cell type markers (e.g., from Cell Ontology)

- Computational environment with appropriate libraries (Python, PyTorch)

Procedure:

- Data Preprocessing:

- Normalize the target dataset using standard scRNA-seq workflows (e.g., SCTransform)

- Filter low-quality cells and genes

- Log-transform expression values

Embedding Generation:

- Load the pretrained model weights

- Pass the preprocessed expression matrix through the model to extract cell embeddings

- Reduce dimensionality using UMAP or t-SNE for visualization

Cell Type Prediction:

- Calculate similarity scores between query cells and reference cell type signatures

- Assign cell type labels based on maximum similarity

- Set confidence thresholds to flag low-probability assignments

Validation:

- Assess clustering quality using metrics like average silhouette width (ASW)

- Manually inspect marker gene expression for assigned types

- Identify populations with ambiguous assignments for further investigation
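The Cell Type Prediction step can be sketched as a nearest-centroid assignment in embedding space with a confidence cutoff. This is a minimal sketch that swaps the protocol's marker-based signatures for a labeled reference set embedded with the same model. The function names and the default cutoff of 0.5 are hypothetical choices, not values from any published tool.

```python
import numpy as np

def l2_normalize(x):
    """Scale each row to unit length so dot products equal cosine similarity."""
    x = np.asarray(x, dtype=float)
    return x / np.maximum(np.linalg.norm(x, axis=1, keepdims=True), 1e-12)

def annotate_by_centroid_similarity(query_emb, ref_emb, ref_labels, min_similarity=0.5):
    """Label each query cell with its most similar reference cell-type centroid."""
    ref_emb = np.asarray(ref_emb, dtype=float)
    ref_labels = np.asarray(ref_labels)
    cell_types = np.unique(ref_labels)
    # Mean embedding (centroid) of each reference cell type
    centroids = np.stack([ref_emb[ref_labels == t].mean(axis=0) for t in cell_types])
    sims = l2_normalize(query_emb) @ l2_normalize(centroids).T  # (n_query, n_types)
    best = sims.argmax(axis=1)
    best_sim = sims[np.arange(len(best)), best]
    labels = cell_types[best].astype(object)
    labels[best_sim < min_similarity] = "unassigned"  # flag low-confidence calls
    return labels, best_sim

rng = np.random.default_rng(0)
# Hypothetical 2D embeddings for two reference cell types
ref = np.vstack([rng.normal([5, 0], 0.1, (20, 2)), rng.normal([0, 5], 0.1, (20, 2))])
ref_labels = ["T cell"] * 20 + ["B cell"] * 20
query = np.array([[4.0, 0.2], [0.1, 3.0], [-3.0, -3.0]])
labels, best_sim = annotate_by_centroid_similarity(query, ref, ref_labels)
```

In real embeddings, cosine similarities between cells are often uniformly high. The cutoff should therefore be calibrated on held-out reference cells rather than fixed in advance.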

Protocol 2: Novel Cell Type Discovery Through Multimodal Similarity Search

Purpose: To identify novel cell populations that lack strong similarity to known reference types.

Materials:

- CellWhisperer framework or similar multimodal tool [25]

- Integrated transcriptome-text embedding model

- Reference atlas with comprehensive coverage (e.g., Human Cell Atlas)

Procedure:

Multimodal Embedding:

- Generate joint embeddings of transcriptomes and textual descriptions using contrastive learning

- Project both query cells and reference populations into shared latent space

Similarity Assessment:

- Compute distances between query cells and all reference cell types

- Identify outlier populations with low similarity to any reference type

- Perform hierarchical clustering to confirm distinctness of putative novel population

Characterization:

- Extract differentially expressed genes for the novel population

- Use natural language queries to explore potential functions (e.g., "cells with high metabolic activity")

- Compare with developmental trajectories or disease states for context

Biological Validation:

- Design experimental validation using protein markers or spatial transcriptomics

- Contextualize findings within relevant biological processes or disease mechanisms
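The Similarity Assessment step can be approximated with a k-nearest-neighbor distance test. A query cell becomes a candidate member of a novel population when it sits farther from the reference than almost all reference cells sit from one another. The sketch below assumes query and reference embeddings already share one latent space, as in the Multimodal Embedding step. `knn_novelty_flags`, k = 10, and the 99th-percentile cutoff are illustrative assumptions. Flagged cells should still be clustered and characterized as described above.

```python
import numpy as np

def pairwise_distances(a, b):
    """Euclidean distances between rows of a and rows of b."""
    d2 = (a ** 2).sum(axis=1)[:, None] + (b ** 2).sum(axis=1)[None, :] - 2 * a @ b.T
    return np.sqrt(np.maximum(d2, 0.0))  # clip tiny negatives caused by rounding

def knn_novelty_flags(query_emb, ref_emb, k=10, quantile=0.99):
    """Flag query cells whose mean distance to their k nearest reference cells is unusually large."""
    ref_d = pairwise_distances(ref_emb, ref_emb)
    np.fill_diagonal(ref_d, np.inf)  # exclude each reference cell from its own neighbors
    ref_knn = np.sort(ref_d, axis=1)[:, :k].mean(axis=1)
    threshold = np.quantile(ref_knn, quantile)  # typical within-reference spread
    query_knn = np.sort(pairwise_distances(query_emb, ref_emb), axis=1)[:, :k].mean(axis=1)
    return query_knn > threshold, query_knn, threshold

rng = np.random.default_rng(0)
# Hypothetical reference: two well-separated cell types in a 2D embedding
ref = np.vstack([rng.normal([0, 0], 1.0, (100, 2)), rng.normal([10, 0], 1.0, (100, 2))])
query = np.array([[0.0, 0.5], [10.0, -0.5], [5.0, 20.0], [-15.0, -15.0]])
is_novel, query_knn, threshold = knn_novelty_flags(query, ref)
```

Using a within-reference quantile as the threshold adapts the cutoff to the embedding's own scale, which differs between models.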

Visualization Workflows for Annotation Results

Effective visualization is essential for interpreting zero-shot annotation results and communicating findings. The following workflows integrate established tools with novel multimodal approaches.

Diagram 1: Zero-Shot Annotation Visualization Workflow

Advanced tools like Vitessce enable integrative visualization of multimodal single-cell data across multiple coordinated views [26]. This framework supports simultaneous exploration of transcriptomics, cell-type annotations, spatially resolved transcripts, and imaging data, facilitating the interpretation of novel cell populations in their biological context.

Table 3: Essential Computational Tools for Zero-Shot Cell Type Annotation

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| CELLxGENE Census | Data Platform | Curated single-cell data for reference and benchmarking [25] | https://cellxgene.cziscience.com/ |
| CellWhisperer | Multimodal AI | Natural language query of transcriptomic data [25] | https://cellwhisperer.bocklab.org |
| Vitessce | Visualization Framework | Interactive visualization of multimodal single-cell data [26] | http://vitessce.io |
| scBubbletree | Visualization Package | Quantitative visualization of scRNA-seq cluster relationships [27] | Bioconductor R package |
| BioLLM | Benchmarking Framework | Standardized interface for evaluating foundation models [9] | Open source |
| Human Cell Atlas | Reference Data | Comprehensive map of human cell types [25] | https://www.humancellatlas.org/ |

Integrated Protocol for Validation and Biological Interpretation

Diagram 2: Multimodal Validation Protocol

Purpose: To validate and biologically contextualize putative novel cell types identified through zero-shot annotation.

Materials:

- Spatial transcriptomics data (e.g., 10x Visium, MERFISH)

- Protein expression data (CITE-seq, CODEX)

- Functional annotation databases (GO, KEGG)

- CellWhisperer or similar multimodal interpretation tool

Procedure:

Spatial Validation:

- Map putative novel cell populations to spatial coordinates

- Assess spatial clustering patterns and neighborhood contexts

- Correlate with histological features in matched tissue sections

Multimodal Correlation:

- Integrate transcriptomic findings with protein expression patterns

- Confirm uniqueness at multiple molecular layers

- Identify surface markers for experimental isolation

Functional Annotation:

- Perform pathway enrichment analysis on marker genes

- Use CellWhisperer's natural language capability to generate biological hypotheses

- Contextualize within relevant disease mechanisms or developmental processes

Expert Integration:

- Combine computational evidence with domain knowledge

- Design targeted experiments to validate functional characteristics

- Propose formal naming and classification through appropriate ontologies
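The pathway enrichment step in Functional Annotation is commonly run as an over-representation test based on the hypergeometric distribution. This is a minimal, standard-library sketch. It assumes the marker genes, one pathway gene set (e.g., from GO or KEGG), and the background of all tested genes are already available as identifiers. The gene names in the example are placeholders.

```python
from math import comb

def hypergeom_enrichment(marker_genes, pathway_genes, background_genes):
    """Return (overlap, p), where p = P(X >= overlap) under the hypergeometric null."""
    background = set(background_genes)
    markers = set(marker_genes) & background  # restrict to genes actually tested
    pathway = set(pathway_genes) & background
    N, K, n = len(background), len(pathway), len(markers)
    overlap = len(markers & pathway)
    # Upper tail of the hypergeometric probability mass function
    tail = sum(comb(K, k) * comb(N - K, n - k) for k in range(overlap, min(K, n) + 1))
    return overlap, tail / comb(N, n)

# Placeholder example: 100 background genes, a 10-gene pathway, 10 marker genes
background = [f"G{i}" for i in range(100)]
pathway = background[:10]
markers = background[:5] + background[50:55]
overlap, p = hypergeom_enrichment(markers, pathway, background)
```

When many pathways are tested, the resulting p-values still need multiple-testing correction (e.g., Benjamini-Hochberg) before any pathway is reported as enriched.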

Zero-shot cell type annotation and novel cell discovery are frontier capabilities in single-cell genomics, with significant potential for biological discovery and therapeutic development. While current foundation models show promise, their performance varies considerably across biological contexts and dataset characteristics. The protocols and benchmarks presented here provide a framework for applying these methods rigorously while acknowledging their current limitations. As the field evolves, continued development of multimodal approaches and biologically informed evaluation metrics will be essential for realizing the full potential of zero-shot learning in cellular taxonomy and discovery.

A fundamental challenge in single-cell genomics is integrating datasets from different studies, technologies, or laboratories to extract meaningful biological insights. Batch effects (non-biological variation introduced by technical differences) can obscure true biological signals and hinder cross-study comparisons. Established integration methods such as Harmony and scVI must be fit to each new collection of datasets. Single-cell foundation models (scFMs), by contrast, offer a potential alternative through their zero-shot capabilities, producing representations without dataset-specific training. This Application Note examines current scFMs and their ability to overcome batch effects without fine-tuning, and provides practical protocols for evaluating and implementing these approaches.

The Batch Effect Challenge in Single-Cell Biology

Batch effects represent a significant obstacle in single-cell research, particularly when integrating data across different experimental conditions, technologies, or donor populations. These technical variations can:

- Obscure true biological differences between cell states and conditions

- Limit statistical power by reducing effective sample sizes

- Introduce false positives in differential expression analysis

- Hinder reproducibility across studies and platforms

The problem is particularly acute in exploratory research where comprehensive labels for supervised fine-tuning are unavailable. In these contexts, models must generate robust representations without task-specific training, making zero-shot performance a critical evaluation metric [5].

Zero-Shot Performance of Single-Cell Foundation Models

Evaluation Framework

Rigorous evaluation of scFMs in zero-shot settings reveals important limitations and strengths. Performance should be assessed using multiple complementary metrics:

- Cell type clustering: Ability to separate known cell types without fine-tuning

- Batch integration: Effectiveness in removing technical variations while preserving biological signals

- Biological relevance: Concordance with established biological knowledge

Benchmarking studies typically compare scFMs against established baselines including Highly Variable Genes (HVG) selection, Harmony, and scVI [5] [17].

Performance Comparison

Recent evaluations demonstrate variable performance across models and datasets:

Table 1: Zero-shot performance comparison across integration methods

| Method | Cell Type Clustering (AvgBIO Score) | Batch Integration (Batch Mixing Score) | Biological Relevance (scGraph-OntoRWR) |
|---|---|---|---|
| HVG Selection | 0.74 | 0.89 | 0.68 |
| Harmony | 0.71 | 0.76 | 0.72 |
| scVI | 0.73 | 0.82 | 0.75 |
| Geneformer | 0.62 | 0.61 | 0.65 |
| scGPT | 0.68 | 0.79 | 0.71 |
| scShift | 0.76 | 0.85 | 0.78 |

Data compiled from multiple benchmarking studies [5] [28] [17].

Notably, simpler methods such as HVG selection can outperform foundation models in some zero-shot scenarios, particularly on batch integration [5]. In contrast, specialized models such as scShift perform strongly at disentangling batch-dependent and batch-independent variation when pretrained on compendia of scRNA-seq atlases [28].
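The HVG baseline is simple enough to reproduce in a few lines, which is part of why it is such a useful reference point. The sketch below is simplified and assumes a raw cells × genes count matrix in NumPy. It ranks genes by the plain variance of log-normalized expression, whereas Scanpy and Seurat use dispersion-based or variance-stabilized criteria. Published benchmarks may also score the selected genes directly rather than their principal components. `hvg_pca_baseline` and its defaults are hypothetical.

```python
import numpy as np

def hvg_pca_baseline(counts, n_top_genes=2000, n_components=50):
    """Log-normalize counts, keep the most variable genes, and embed with PCA."""
    counts = np.asarray(counts, dtype=float)
    lib_size = np.maximum(counts.sum(axis=1, keepdims=True), 1.0)
    log_norm = np.log1p(counts / lib_size * 1e4)  # counts per 10k, then log1p
    n_top = min(n_top_genes, log_norm.shape[1])
    hvg_idx = np.argsort(-log_norm.var(axis=0), kind="stable")[:n_top]
    x = log_norm[:, hvg_idx]
    x = x - x.mean(axis=0)  # center genes before PCA
    u, s, _ = np.linalg.svd(x, full_matrices=False)
    k = min(n_components, s.size)
    return u[:, :k] * s[:k], hvg_idx  # PC scores and selected gene indices

rng = np.random.default_rng(0)
counts = rng.poisson(1.0, size=(100, 500))
counts[:, 0] = 0  # an undetected gene has zero variance and should not be selected
embedding, hvg_idx = hvg_pca_baseline(counts, n_top_genes=100)
```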

Experimental Protocols for Zero-Shot Evaluation

Protocol 1: Evaluating Batch Integration Performance

Objective: Assess model performance in removing batch effects while preserving biological variation.

Materials:

- Processed single-cell dataset with known batch labels and cell type annotations

- Pretrained foundation model (Geneformer, scGPT, scShift, or alternatives)

- Baseline methods (HVG, Harmony, scVI) for comparison

- Computing environment with appropriate libraries (Python, R)

Procedure:

Data Preparation:

- Standardize input data to match model requirements (gene ranking for Geneformer, HVG selection for scGPT)

- Ensure batch labels and cell type annotations are available for evaluation

- Split data by batch origin if evaluating cross-dataset performance

Embedding Generation:

- Generate cell embeddings using the foundation model in zero-shot mode

- No fine-tuning or parameter optimization should be performed

- Extract cell-level embeddings from each model's standard output (e.g., the <cls> token embedding in scGPT or mean-pooled gene embeddings in Geneformer)

Quantitative Assessment:

- Calculate batch mixing metrics (e.g., PCR score, LISI)

- Evaluate cell type separation (e.g., ASW, AvgBIO score)

- Compute biological relevance metrics (e.g., scGraph-OntoRWR)

Visualization:

- Generate 2D visualizations (UMAP, t-SNE) of embeddings

- Color by batch labels to assess integration

- Color by cell type to assess biological preservation
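The Data Preparation step refers to gene ranking for Geneformer. Geneformer represents each cell as a rank-ordered list of genes. Expression is normalized by library size and by each gene's expression across the pretraining corpus, which pushes ubiquitously high genes down the ranking. The sketch below illustrates that idea for a single cell and is not the Geneformer tokenizer. The `gene_medians` values are assumed to come from the model's own precomputed resources. The 2,048-gene cap mirrors the original model's input length, and newer releases may differ.

```python
import numpy as np

def rank_value_encode(counts, gene_ids, gene_medians, max_len=2048):
    """Return one cell's gene IDs ordered from highest to lowest normalized expression."""
    counts = np.asarray(counts, dtype=float)
    # Library-size normalization (the 10,000 scale factor does not change the ranking)
    norm = counts / counts.sum() * 10_000
    # Divide by each gene's corpus-wide median so ubiquitously high genes rank lower
    scaled = norm / np.asarray(gene_medians, dtype=float)
    expressed = np.flatnonzero(counts > 0)  # undetected genes are not tokenized
    order = expressed[np.argsort(-scaled[expressed], kind="stable")]
    return [gene_ids[i] for i in order[:max_len]]

# Hypothetical cell with four genes; gene D has a low corpus median, so it ranks first
tokens = rank_value_encode([10, 0, 5, 1], ["A", "B", "C", "D"], [10.0, 1.0, 1.0, 0.1])
```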

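Expected Outcomes: A well-performing foundation model would match the baselines on batch mixing while maintaining or improving preservation of biological signal. Published zero-shot benchmarks indicate that current models often fall short of this, so results should be interpreted relative to the HVG, Harmony, and scVI baselines [5] [17].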
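The Quantitative Assessment step can be made concrete by computing the AvgBIO score. As we understand its definition in the scGPT paper and its reuse in zero-shot benchmarks, AvgBIO averages NMI, ARI, and cell-type ASW. The NumPy-only sketch below assumes cluster assignments have already been derived from the embeddings (for example by Leiden clustering, not shown). It normalizes NMI by the arithmetic mean of the entropies and rescales ASW from [-1, 1] to [0, 1], as scIB does. For real analyses, the scIB package or scikit-learn's metric functions are the safer choice.

```python
import numpy as np

def _contingency(a, b):
    """Count matrix of co-occurring labels between two labelings."""
    _, ai = np.unique(np.asarray(a), return_inverse=True)
    _, bi = np.unique(np.asarray(b), return_inverse=True)
    table = np.zeros((ai.max() + 1, bi.max() + 1))
    np.add.at(table, (ai, bi), 1)
    return table

def _pairs(x):
    return x * (x - 1) / 2

def ari(a, b):
    """Adjusted Rand index computed from pair counts."""
    t = _contingency(a, b)
    sum_ij = _pairs(t).sum()
    sum_a, sum_b = _pairs(t.sum(axis=1)).sum(), _pairs(t.sum(axis=0)).sum()
    expected = sum_a * sum_b / _pairs(t.sum())
    max_index = (sum_a + sum_b) / 2
    return (sum_ij - expected) / (max_index - expected)

def nmi(a, b):
    """Normalized mutual information (arithmetic-mean normalization)."""
    p = _contingency(a, b) / len(a)
    pa, pb = p.sum(axis=1), p.sum(axis=0)
    nz = p > 0
    mi = (p[nz] * np.log(p[nz] / np.outer(pa, pb)[nz])).sum()
    ha, hb = -(pa * np.log(pa)).sum(), -(pb * np.log(pb)).sum()
    return mi / ((ha + hb) / 2) if ha + hb > 0 else 1.0

def silhouette(x, labels):
    """Mean silhouette width with Euclidean distances (O(n^2) memory)."""
    x, labels = np.asarray(x, dtype=float), np.asarray(labels)
    d = np.sqrt(((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=-1))
    classes = np.unique(labels)
    scores = np.zeros(len(x))
    for i in range(len(x)):
        same = labels == labels[i]
        same[i] = False
        if not same.any():
            continue  # singleton cluster: silhouette defined as 0
        a_i = d[i, same].mean()
        b_i = min(d[i, labels == c].mean() for c in classes if c != labels[i])
        scores[i] = (b_i - a_i) / max(a_i, b_i)
    return scores.mean()

def avg_bio(embedding, cell_types, clusters):
    """Average of NMI, ARI, and cell-type ASW rescaled to [0, 1]."""
    asw = (silhouette(embedding, cell_types) + 1) / 2
    return (nmi(clusters, cell_types) + ari(clusters, cell_types) + asw) / 3
```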

Protocol 2: Cross-Dataset Biological State Transfer

Objective: Evaluate model capability to identify consistent biological states across independent datasets.

Materials:

- Multiple datasets with shared biological conditions (e.g., disease states)

- Pretrained scShift model or equivalent

- Reference biological annotations for validation

Procedure:

Model Setup:

- Use scShift's dual-encoder architecture to model batch-dependent and batch-independent variation

- Configure L0 sparsity regularization on the dataset-label encoding

- Apply independence regularization between centralized latent variables and dataset labels

Embedding Extraction:

- Extract biological embeddings (batch-dependent components)

- Extract unperturbed embeddings (batch-independent components)

- Process new datasets without additional training

Cross-Dataset Comparison:

- Project biological states from different datasets into shared space

- Identify conserved biological patterns across batches

- Validate with known biological ground truth

Downstream Analysis:

- Construct classifiers for biological states using embeddings

- Identify state-specific gene expression patterns

- Characterize cellular interactions and potential therapeutic targets

Expected Outcomes: Successful models will identify consistent biological states (e.g., disease signatures) across technically diverse datasets without fine-tuning [28].
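The state classifier in the Downstream Analysis step is most informative when trained on one dataset and evaluated on another. That setup directly tests whether the embedding carries the biological state across batches. The sketch below assumes cell embeddings and binary state labels (e.g., control vs. disease) are already available. To stay self-contained, it fits a plain logistic regression by gradient descent in NumPy, whereas scikit-learn's `LogisticRegression` would be the usual choice. The simulated embeddings and all names are hypothetical.

```python
import numpy as np

def train_logreg(x, y, lr=0.1, epochs=500, l2=1e-3):
    """Binary logistic regression fitted by full-batch gradient descent."""
    w, b = np.zeros(x.shape[1]), 0.0
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(x @ w + b)))  # predicted P(state = 1)
        w -= lr * (x.T @ (p - y) / len(y) + l2 * w)  # log-loss gradient plus L2 penalty
        b -= lr * (p - y).mean()
    return w, b

def predict(x, w, b):
    return (x @ w + b > 0).astype(int)

rng = np.random.default_rng(1)

def simulate_dataset(offset, n=50, dim=4):
    """Hypothetical embeddings: n control and n disease cells; disease shifts dimension 0."""
    control = rng.normal(0.0, 1.0, (n, dim))
    disease = rng.normal(0.0, 1.0, (n, dim))
    disease[:, 0] += 4.0
    x = np.vstack([control, disease]) + offset  # offset mimics a residual batch effect
    y = np.concatenate([np.zeros(n), np.ones(n)])
    return x, y

x_train, y_train = simulate_dataset(offset=0.0)  # dataset used for training
x_test, y_test = simulate_dataset(offset=0.2)    # independent dataset, no retraining
w, b = train_logreg(x_train, y_train)
held_out_accuracy = (predict(x_test, w, b) == y_test).mean()
```

With several datasets, repeating this with each dataset held out in turn (leave-one-dataset-out) shows whether state separation depends on any single batch.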

Research Reagent Solutions

Table 2: Essential computational tools for zero-shot batch integration

| Tool Name | Type | Primary Function | Implementation Requirements |
|---|---|---|---|
| Geneformer | Foundation Model | Cell embedding via transformer architecture | Python, 40M parameters, 30M pretraining cells [17] |
| scGPT | Foundation Model | Multi-task learning on single-cell data | Python, 50M parameters, 33M pretraining cells [5] [17] |
| scShift | Specialized Framework | Disentangling batch and biological variations | Python, variational inference framework [28] |
| Harmony | Integration Algorithm | Batch effect correction | R/Python, linear integration approach [5] |
| scVI | Generative Model | Probabilistic modeling of scRNA-seq | Python, deep generative modeling [5] |
| CELLxGENE | Data Platform | Curated single-cell data repository | Web access or local installation [1] |

Implementation Workflows

Workflow 1: Zero-Shot Dataset Integration

Workflow 2: Biological State Identification Across Batches