Zero-Shot vs. Fine-Tuning: A Strategic Guide to Maximizing Single-Cell Foundation Model Performance

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to navigate the critical choice between zero-shot and fine-tuning approaches for single-cell Foundation Models (scFMs).

Zero-Shot vs. Fine-Tuning: A Strategic Guide to Maximizing Single-Cell Foundation Model Performance

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to navigate the critical choice between zero-shot and fine-tuning approaches for single-cell Foundation Models (scFMs). Drawing on the latest research, we explore the foundational concepts of scFMs and their adaptation mechanisms, present methodological guides for implementation across tasks like cell-type annotation and perturbation prediction, and offer troubleshooting strategies for overcoming data scarcity and computational constraints. Through a comparative analysis of benchmark studies from tools like BioLLM, we validate performance trade-offs to inform model selection. The synthesis empowers professionals to strategically deploy scFMs, balancing performance, resource allocation, and generalizability to accelerate discovery in biomedicine and clinical research.

Understanding Single-Cell Foundation Models: From Core Concepts to Adaptation Mechanisms

What Are scFMs? Defining Transformers in Single-Cell Biology

Single-cell Foundation Models (scFMs) are large-scale deep learning models, typically based on transformer architectures, that are pretrained on vast datasets of single-cell omics data. They are designed to learn fundamental biological principles from millions of cells and can be adapted for a wide range of downstream analysis tasks through zero-shot inference or fine-tuning [1] [2].

Core Architectural Principles of scFMs

The development of scFMs is inspired by the success of large language models. They treat single-cell data as a "cellular language," where individual cells are analogous to sentences and genes or genomic features are the words or tokens [1] [3].

The Tokenization Challenge in Single-Cell Data

A fundamental challenge is that gene expression data is not naturally sequential. Unlike words in a sentence, genes in a cell have no inherent ordering. To address this, several strategies are employed:

- Ranking by Expression: Genes are ranked within each cell by expression levels, and the ordered list of top genes is treated as the sequence [1] [4].

- Value Binning: Gene expression values are partitioned into bins, and these rankings determine positional encoding [1].

- Normalized Counts: Some models report no clear advantage to complex ranking and simply use normalized counts [1].

Special tokens may be added to represent cell identity, metadata, or omics modality, enriching the model's context [1] [3].

Model Architectures and Pretraining

Most scFMs are built on the transformer architecture, which uses attention mechanisms to weight relationships between all genes in a cell [1] [3]. Two predominant architectural variants are:

- Encoder-based models (e.g., scBERT, Geneformer): Use a bidirectional attention mechanism, learning from all genes in a cell simultaneously. They are often used for classification and embedding tasks [1] [4].

- Decoder-based models (e.g., scGPT): Use a unidirectional, masked self-attention mechanism, iteratively predicting masked genes conditioned on known genes. They are often applied to generation tasks [1].

These models are pretrained on massive, diverse collections of single-cell data from public repositories like CZ CELLxGENE, which provides access to over 100 million unique cells [1] [3]. Pretraining is typically self-supervised, using objectives such as Masked Gene Modeling (MGM), where the model learns by predicting randomly masked genes or expression values within a cell's profile [1] [4].

Figure 1: Core Architecture of a Single-Cell Foundation Model. scFMs transform single-cell data into tokens, process them through a transformer, and produce latent embeddings for downstream tasks [1] [3] [4].

Comparative Performance: Zero-Shot vs. Fine-Tuned scFMs

A critical consideration for researchers is the application strategy: using a model's built-in, zero-shot capabilities versus fine-tuning it on a specific dataset. The performance trade-offs are significant, as revealed by comprehensive benchmarking studies.

Performance Across Downstream Tasks

Benchmarking studies have evaluated scFMs across diverse tasks. The table below summarizes key performance insights, particularly highlighting the difference between zero-shot and fine-tuned applications [4] [5].

| Model | Key Architectural Features | Performance in Zero-Shot Settings | Performance After Fine-Tuning |

|---|---|---|---|

| scGPT | Decoder-based; multi-omics support; value binning for expression [4]. | Consistently strong across tasks; superior cell type separation and batch-effect correction in embedding quality [5]. | Robust performance across all tasks; highly responsive to fine-tuning [6] [5]. |

| Geneformer | Encoder-based; ranks genes by expression; uses a lookup table for gene embeddings [4]. | Strong capabilities in gene-level tasks [6] [5]. | Benefits from effective pretraining strategies; shows strong gene-level task performance [6]. |

| scFoundation | Asymmetric encoder-decoder; uses value projection and a large input gene set [4]. | Demonstrates strong gene-level task performance [6] [5]. | Effective pretraining leads to strong task adaptation [6]. |

| scBERT | Encoder-based; uses gene2vec embeddings and masked language modeling [4] [5]. | Lags behind other models in embedding quality and batch correction [5]. | Limited by smaller model size and training data [6] [5]. |

Quantitative Benchmarking Results

Independent evaluations provide quantitative data on how scFMs perform on specific cell-level tasks. The following table synthesizes findings from a comprehensive benchmark that tested models under realistic conditions [4].

| Task Category | Specific Task Example | Top-Performing Models | Key Finding: Zero-Shot vs. Fine-Tuning |

|---|---|---|---|

| Pre-Clinical Analysis | Batch integration across five datasets [4]. | scGPT, Geneformer | Fine-tuning significantly enhances batch-effect correction capabilities [5]. |

| Pre-Clinical Analysis | Cell type annotation across five datasets [4]. | scGPT | Fine-tuning through supervised training is highly effective for cell annotation [5]. |

| Clinical Application | Cancer cell identification across seven cancer types [4]. | scGPT, scFoundation | Simpler ML models can be more efficient for dataset-specific tasks under resource constraints [4]. |

| Clinical Application | Drug sensitivity prediction for four drugs [4]. | Varies by task | No single scFM consistently outperforms all others; task-specific selection is crucial [4]. |

A key conclusion from benchmarks is that no single scFM consistently outperforms all others across every task [4]. The decision to use a model in a zero-shot setting versus fine-tuning it depends on factors like dataset size, task complexity, and available computational resources. For targeted tasks with sufficient data, fine-tuning a model can yield superior results, even enabling smaller models to surpass the zero-shot performance of much larger ones [7]. Conversely, for exploratory analysis or when labeled data is scarce, the zero-shot capabilities of a robust model like scGPT can be highly valuable.

Experimental Protocols for Benchmarking scFMs

To ensure fair and reproducible comparisons, benchmarking studies follow structured experimental protocols. The workflow below outlines the key stages for evaluating zero-shot and fine-tuned scFM performance, as implemented in frameworks like BioLLM [5].

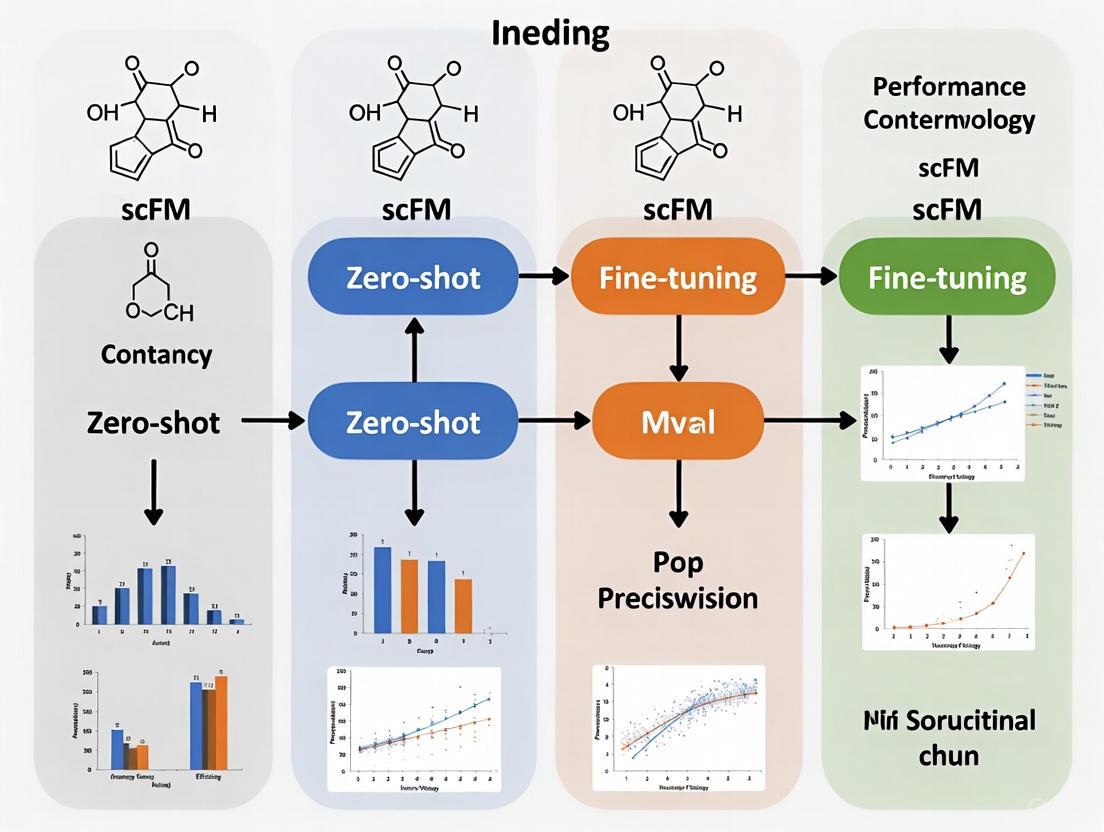

Figure 2: Experimental Workflow for scFM Benchmarking. The pipeline evaluates models in both zero-shot and fine-tuned settings on standardized tasks and metrics [4] [5].

Detailed Methodology

- Data Curation and Preprocessing: High-quality, diverse datasets from sources like CELLxGENE are selected. Rigorous quality control is applied, including filtering of low-quality cells and genes, and normalization [4] [5].

- Model Initialization: scFMs are loaded with their pretrained weights. Frameworks like BioLLM provide a unified interface for this, standardizing access to models with different original coding standards [6] [5].

- Task Execution:

- Zero-Shot Inference: Models are applied to new data without updating their parameters. Cell or gene embeddings are extracted directly from the pretrained model for evaluation [5].

- Fine-Tuning: Models are further trained (fine-tuned) on a specific task using a limited set of labeled data. This involves updating the model's parameters to adapt to the new task [8] [5].

- Evaluation: Model performance is assessed using multiple metrics [4] [5]:

- Cell Embedding Quality: Measured by metrics like Average Silhouette Width (ASW) to evaluate how well embeddings separate cell types.

- Biological Fidelity: Assessed using novel metrics like scGraph-OntoRWR, which measures the consistency of cell-type relationships in the embedding with prior biological knowledge from cell ontologies.

- Prediction Accuracy: Standard classification metrics (e.g., accuracy, F1-score) are used for tasks like cell type annotation and drug response prediction.

Essential Research Reagent Solutions for scFM Research

The following table details key resources and tools that are fundamental for working with and evaluating single-cell foundation models.

| Resource/Tool Name | Type | Primary Function in scFM Research |

|---|---|---|

| CZ CELLxGENE [1] [3] | Data Repository | Provides unified access to over 100 million curated single-cells for model pretraining and benchmarking. |

| BioLLM Framework [6] [5] | Software Tool | Offers a unified interface to integrate, apply, and benchmark diverse scFMs using standardized APIs and protocols. |

| Human Cell Atlas [1] [3] | Reference Atlas | Serves as a broad-coverage source of biological variation for training and validating models. |

| scGPT [4] [5] | Foundation Model | A versatile, decoder-based scFM known for strong performance in both zero-shot and fine-tuned settings across various tasks. |

| Geneformer [4] [5] | Foundation Model | An encoder-based scFM recognized for its strong performance on gene-level tasks. |

| scGraph-OntoRWR Metric [4] | Evaluation Metric | A novel ontology-informed metric that evaluates the biological relevance of learned cell embeddings. |

Single-cell Foundation Models represent a transformative shift in analyzing cellular heterogeneity. The choice between zero-shot application and fine-tuning is not a binary one but a strategic decision guided by the biological question, data resources, and performance requirements. While zero-shot inference offers a powerful tool for exploratory analysis, fine-tuning often unlocks a model's full potential for specific, complex tasks. As the field matures, the development of standardized frameworks and biologically meaningful evaluation metrics will be crucial for robustly benchmarking these models and fully realizing their potential in biological discovery and therapeutic development [4] [6] [5].

In the rapidly evolving field of single-cell genomics, foundation models (scFMs) promise to revolutionize how we extract biological insights from millions of individual cells. These models, inspired by breakthroughs in natural language processing (NLP), face a fundamental challenge: translating the complex, non-sequential language of gene expression into a structured format that AI models can understand. This translation process, known as tokenization, serves as the critical bridge connecting raw biological data to computational analysis. The tokenization strategy directly influences a model's ability to perform in zero-shot settings—where models analyze new data without task-specific training—versus fine-tuning scenarios where models are adapted to specific tasks with additional training.

As research increasingly focuses on the practical application of scFMs for drug discovery and clinical research, understanding how tokenization impacts model performance has become paramount. This guide provides an objective comparison of how different tokenization approaches affect model capabilities, with particular emphasis on their implications for zero-shot performance versus fine-tuned applications.

The Fundamentals of Tokenization in scFMs

What is Tokenization in Single-Cell Context?

In natural language processing, tokenization converts raw text into discrete units (tokens) that models can process. Similarly, for single-cell data, tokenization transforms gene expression profiles into structured model inputs. In this analogy, individual cells are treated as "sentences," while genes and their expression values become "words" or "tokens" [1] [3]. This process is necessary because gene expression data lacks the inherent sequential structure of language, presenting unique challenges for model architecture.

Key Tokenization Strategies in Current scFMs

Different scFMs have developed distinct approaches to tokenization, which can be categorized into several core strategies:

Gene Identity and Expression Value Representation: Most models represent each gene as a token, but they differ significantly in how they encode expression values. Strategies include value binning (scGPT), expression-level ordering (Geneformer), and value projection (scFoundation) [4].

Sequence Structuring: Since genes lack natural ordering, models impose artificial sequences through various methods. The most common approaches include ranking genes by expression levels within each cell or partitioning genes into expression-value bins [1] [3].

Special Token Integration: Advanced tokenization schemes incorporate special tokens representing cell metadata, experimental conditions, or multimodal information, enabling the model to learn richer contextual relationships [1].

Table 1: Comparison of Tokenization Strategies in Major scFMs

| Model | Gene Representation | Expression Value Handling | Sequence Structuring | Special Tokens |

|---|---|---|---|---|

| Geneformer | Lookup Table | Expression ranking | Top 2048 ranked genes | Limited |

| scGPT | Lookup Table | Value binning | 1200 HVGs | Cell type, batch conditions |

| scBERT | Gene2Vec embeddings | Expression categorization | Fixed gene order | Cell context |

| scFoundation | Lookup Table | Value projection | All protein-encoding genes | Not specified |

| UCE | Protein embeddings | Expression sampling | Genomic position ordering | Biological context |

Experimental Benchmarking of Tokenization Impact

Zero-Shot Performance Evaluation

Comprehensive benchmarking studies reveal significant differences in how tokenization strategies impact zero-shot performance across key biological tasks:

Cell Type Clustering: In rigorous zero-shot evaluations, scGPT and Geneformer underperformed compared to simpler methods like highly variable genes (HVG) selection and established baselines such as Harmony and scVI when measuring average BIO (AvgBio) scores [9]. The table below summarizes quantitative findings from these evaluations:

Table 2: Zero-Shot Performance Comparison Across Tasks and Models

| Task Category | Performance Findings | Top Performing Methods | Key Metric |

|---|---|---|---|

| Cell Type Clustering | scGPT and Geneformer underperformed vs. HVG, scVI, Harmony | HVG, scVI, Harmony | AvgBIO Score |

| Batch Integration | Geneformer consistently ranked last; HVG achieved best scores | HVG, scVI, Harmony | Batch Integration Metrics |

| Perturbation Prediction | scFM embeddings did not consistently improve predictions | Traditional baselines | Prediction Accuracy |

| Cell Embedding Quality | scGPT outperformed others in embedding-based tasks | scGPT | ASW Score |

Batch Integration: For batch effect correction—a crucial task in single-cell analysis—Geneformer's tokenization approach consistently ranked last across multiple datasets, while surprisingly, simple HVG selection achieved the best quantitative scores [9]. Qualitative assessment revealed that while scGPT's embeddings offered some cell type separation, the primary structure remained driven by batch effects rather than biological signals.

Perturbation Prediction: The PertEval-scFM benchmark demonstrated that zero-shot scFM embeddings failed to provide consistent improvements over baseline models for predicting transcriptional responses to perturbations, particularly under distribution shift [10].

Fine-Tuning Performance Comparison

In contrast to zero-shot settings, fine-tuning often reveals different performance patterns:

Efficient Adaptation: Studies show that with minimal fine-tuning (often less than 1% of parameters), scFMs can achieve state-of-the-art performance in specialized tasks like molecular perturbation prediction [11]. The drug-conditional adapter approach (scDCA) demonstrates how tokenization schemes that accommodate external data modalities enable effective cross-modal learning.

Task-Specific Strengths: Comprehensive benchmarking reveals that no single scFM consistently outperforms others across all tasks [4]. Geneformer and scFoundation show strong capabilities in gene-level tasks, while scGPT excels in cell-level annotations, suggesting their tokenization strategies may be optimized for different biological hierarchies.

Methodologies for Evaluating Tokenization Strategies

Standardized Evaluation Frameworks

Robust assessment of tokenization impact requires standardized methodologies:

BioLLM Framework: This unified system addresses challenges in evaluating scFMs by providing standardized APIs and preprocessing pipelines, enabling direct comparison of tokenization strategies across consistent benchmarks [6] [5]. The framework implements rigorous quality control standards and consistent metrics for embedding quality, biological fidelity, and prediction accuracy.

Multi-Metric Assessment: Comprehensive evaluation incorporates multiple metrics including:

- Average Silhouette Width (ASW) for cluster separation quality

- Batch integration scores for technical artifact removal

- Biological fidelity metrics like scGraph-OntoRWR that measure consistency with prior biological knowledge [4]

- Lowest Common Ancestor Distance (LCAD) for ontological error severity in cell type annotation

Experimental Protocols for Tokenization Analysis

To objectively evaluate tokenization impact, researchers employ standardized protocols:

Input Length Sensitivity Testing: Systematic assessment of how embedding quality changes with varying gene input lengths, revealing that scGPT benefits from longer sequences while scBERT's performance declines with increased input length [5].

Ablation Studies: Controlled experiments that modify components of tokenization schemes (e.g., removing positional encoding or value embeddings) to isolate their contribution to overall performance.

Cross-Dataset Generalization: Evaluation on holdout datasets with different tissue types, sequencing technologies, and species to assess how tokenization strategies impact model transferability.

Diagram Title: Tokenization Workflow from Raw Data to Model Evaluation

Table 3: Key Research Reagents and Computational Tools for scFM Tokenization Research

| Resource Category | Specific Tools/Datasets | Primary Function in Tokenization Research |

|---|---|---|

| Data Repositories | CELLxGENE Census, GEO, Human Cell Atlas | Provide standardized single-cell data for training and benchmarking tokenization approaches |

| Benchmarking Platforms | BioLLM, PertEval-scFM | Offer standardized frameworks for comparing tokenization strategies across consistent metrics |

| Model Architectures | scGPT, Geneformer, scBERT, scFoundation | Implement different tokenization strategies for comparative analysis |

| Evaluation Metrics | ASW, scGraph-OntoRWR, LCAD | Quantify the biological relevance and practical utility of tokenization schemes |

| Specialized Libraries | Transformer architectures (PyTorch, TensorFlow) | Enable implementation and modification of tokenization approaches for experimental research |

Implications for Zero-Shot vs. Fine-Tuning Applications

The relationship between tokenization strategies and model performance differs significantly between zero-shot and fine-tuned applications:

Zero-Shot Scenarios: Current evaluations suggest that simpler tokenization approaches (like those underlying HVG selection) can surprisingly outperform complex foundation model embeddings in true zero-shot settings [9] [10]. This indicates that pretraining objectives may not align perfectly with zero-shot clustering and batch correction tasks.

Fine-Tuning Applications: In contexts where task-specific fine-tuning is feasible, tokenization strategies that incorporate richer biological context (such as scGPT's use of cell type and batch tokens) demonstrate stronger performance gains after adaptation [5]. This suggests that more expressive tokenization schemes provide better foundations for specialized task learning.

Efficient Fine-Tuning Techniques: Recent advances in parameter-efficient fine-tuning (e.g., adapter layers) enable effective adaptation of foundation models while preserving the general representations learned during pretraining [11]. These approaches mitigate some limitations of initial tokenization choices.

Tokenization serves as the foundational layer that shapes how single-cell foundation models perceive and interpret the "language of cells." The evidence from comprehensive benchmarks indicates that current tokenization strategies involve significant trade-offs between zero-shot capability and fine-tuning potential. For researchers and drug development professionals, selection of appropriate models must consider both the intended application context (zero-shot versus fine-tuned) and the specific biological questions being addressed. As the field advances, development of more biologically-informed tokenization schemes that better capture gene regulatory relationships and cellular states may narrow the performance gap between simple and complex approaches, particularly in zero-shot settings where reliability remains challenging for current foundation models.

In the rapidly evolving field of single-cell genomics, a fundamental tension has emerged between two competing approaches for applying artificial intelligence to biological discovery: zero-shot learning versus task-specific fine-tuning. Single-cell foundation models (scFMs) are deep learning models pretrained on millions of single-cell transcriptomes that have revolutionized how researchers analyze cellular heterogeneity and function [1]. These models face a critical deployment question—should they be used as-is through zero-shot inference, or specifically adapted to new tasks through fine-tuning?

Zero-shot learning enables models to recognize and classify previously unseen categories without any task-specific training examples, instead leveraging auxiliary knowledge and semantic relationships [12]. In the context of scFMs, this means applying pretrained models to novel biological questions—such as new cell type annotation or disease classification—without further training on labeled examples from the target task [4]. In contrast, fine-tuning continues the training process on a specific dataset to adapt the model's weights to a particular problem [13] [14].

Recent benchmarking studies reveal that neither approach consistently dominates across all scenarios. The choice depends critically on factors including dataset size, task complexity, biological interpretability requirements, and computational resources [4]. This guide provides an objective comparison of these competing paradigms to inform researchers and drug development professionals navigating this complex landscape.

Methodological Comparison: How Zero-Shot and Fine-Tuning Approaches Work

Fundamental Architectures and Training Regimes

Single-cell foundation models typically employ transformer-based architectures pretrained on massive collections of single-cell RNA sequencing data [1]. The pretraining process involves self-supervised objectives where models learn to predict masked genes or other features within cellular "sentences" composed of genes and their expression values [4] [1].

Zero-shot inference leverages these pretrained models without any weight updates. When presented with new data, the model extracts features and makes predictions based solely on knowledge encoded during pretraining. For example, a model might annotate cell types it never encountered during training by relating them to known types through shared patterns in gene expression [4].

Fine-tuning approaches vary in their methodology and computational demands:

- Full fine-tuning updates all model parameters using task-specific labeled data, requiring substantial computational resources but enabling maximal adaptation to the target task [13].

- Parameter-Efficient Fine-Tuning (PEFT) methods like LoRA (Low-Rank Adaptation) introduce small trainable components while freezing the original weights, dramatically reducing computational requirements [13].

- Supervised Fine-Tuning (SFT) uses labeled examples to adjust model weights through standard loss minimization, while Direct Preference Optimization (DPO) incorporates both positive and negative examples to better align with human preferences [14].

Experimental Protocols for Benchmarking

Comprehensive benchmarking studies have established rigorous protocols to evaluate zero-shot versus fine-tuning performance across diverse biological tasks. The standard methodology involves:

- Model Selection: Multiple scFMs (Geneformer, scGPT, UCE, scFoundation, LangCell, scCello) are evaluated against traditional baselines including HVG selection, Seurat, Harmony, and scVI [4].

- Task Design: Performance is measured across gene-level tasks (gene network inference, gene function prediction) and cell-level tasks (batch integration, cell type annotation, cancer cell identification, drug sensitivity prediction) [4].

- Evaluation Metrics: Models are assessed using 12 metrics spanning unsupervised, supervised, and knowledge-based approaches, including novel biological relevance metrics like scGraph-OntoRWR and Lowest Common Ancestor Distance (LCAD) [4].

- Data Segregation: To mitigate data leakage concerns, independent validation datasets like the Asian Immune Diversity Atlas (AIDA) v2 from CellxGene are used for final evaluation [4].

Performance Benchmarking: Quantitative Comparisons Across Tasks

Holistic model rankings derived from non-dominated sorting algorithms reveal that no single scFM consistently outperforms others across all tasks, emphasizing the need for tailored model selection [4]. The table below summarizes the general performance patterns observed across comprehensive benchmarking studies:

Table 1: Overall Performance Patterns of Zero-Shot vs. Fine-Tuning Approaches

| Approach | Best-Suited Tasks | Performance Characteristics | Computational Demand |

|---|---|---|---|

| Zero-Shot Learning | Batch integration, exploratory analysis, large novel datasets | Robust and versatile for diverse applications, strong biological insights | Low (no additional training) |

| Full Fine-Tuning | Complex clinical predictions, specialized tasks with adequate data | Highest potential accuracy on target task, risk of overfitting | Very High |

| Parameter-Efficient FT | Medium-scale specialized tasks, resource-constrained environments | Competitive accuracy with reduced resources, minimal catastrophic forgetting | Medium |

| Traditional ML Baselines | Small datasets, specific well-defined tasks | Efficient adaptation to specific datasets under resource constraints | Low to Medium |

Task-Specific Performance Metrics

Different approaches excel in different biological contexts, with performance highly dependent on task complexity and data availability:

Table 2: Task-Specific Performance Comparison Across Methodologies

| Task Domain | Specific Task | Zero-Shot Performance | Fine-Tuned Performance | Key Findings |

|---|---|---|---|---|

| Cell Annotation | Novel cell type identification | Moderate accuracy (varies by model) | High accuracy with sufficient examples | LCAD metric shows zero-shot errors are biologically reasonable [4] |

| Clinical Prediction | Drug sensitivity prediction | Moderate predictive power | Significantly enhanced accuracy with fine-tuning | Fine-tuning outperforms on clinically-relevant tasks [4] |

| Perturbation Modeling | In silico perturbation (ISP) prediction | PPV: 3%, NPV: 98% [15] | Closed-loop PPV: 9%, NPV: 99% [15] | Fine-tuning with just 20 perturbation examples dramatically improves performance |

| Medical Reasoning | Clinical diagnosis from medical data | Varies by model size and training | SFT improves accuracy 7-22%; DPO adds further 8-18% [14] | DPO particularly valuable for complex reasoning tasks |

The Closed-Loop Advantage in Perturbation Modeling

Recent research introduces a "closed-loop" framework that exemplifies the power of targeted fine-tuning. When applied to T-cell activation prediction, this approach demonstrated:

Table 3: Performance Improvement with Closed-Loop Fine-Tuning for In Silico Perturbation

| Metric | Open-Loop ISP (Zero-Shot) | Closed-Loop ISP (Fine-Tuned) | Improvement |

|---|---|---|---|

| Positive Predictive Value | 3% | 9% | 3-fold increase |

| Negative Predictive Value | 98% | 99% | 1% increase |

| Sensitivity | 48% | 76% | 28% increase |

| Specificity | 60% | 81% | 21% increase |

| AUROC | 0.63 (95% CI: 0.58-0.68) | 0.86 (95% CI: 0.83-0.89) | Significant improvement |

Notably, performance gains saturated with approximately 20 perturbation examples, suggesting even modest experimental validation can substantially enhance prediction accuracy [15].

Technical Implementation: Workflows and Signaling Pathways

Zero-Shot Inference Workflow

The zero-shot inference process leverages pretrained knowledge without model weight updates, following a structured pathway from data input to biological insight:

Closed-Loop Fine-Tuning Framework

The closed-loop fine-tuning approach integrates experimental data to iteratively improve model performance, creating a virtuous cycle of prediction and validation:

Task-Specific Fine-Tuning Pathway

For specialized applications, task-specific fine-tuning adapts general foundation models to domain-specific challenges through supervised learning:

Essential Research Reagents and Computational Tools

Successful implementation of zero-shot and fine-tuning approaches requires specific computational frameworks and biological resources. The table below details key components of the experimental toolkit:

Table 4: Research Reagent Solutions for scFM Implementation

| Tool Category | Specific Tools/Platforms | Function | Implementation Role |

|---|---|---|---|

| scFM Models | Geneformer, scGPT, UCE, scFoundation | Pre-trained model architectures | Provide base capabilities for zero-shot inference or fine-tuning starting points |

| Data Resources | CELLxGENE, Human Cell Atlas, PanglaoDB | Curated single-cell datasets | Supply training data and benchmarking resources for model development and validation |

| Fine-Tuning Frameworks | Hugging Face Transformers, PEFT Library, Axolotl | Parameter-efficient fine-tuning | Enable model adaptation with reduced computational requirements |

| Computational Infrastructure | NVIDIA DGX Systems, Cloud GPU Platforms, Kubernetes | High-performance computing | Provide computational resources for training and inference |

| Perturbation Validation | Perturb-seq, CRISPR Screens, Flow Cytometry | Experimental validation | Generate ground truth data for closed-loop fine-tuning |

| Evaluation Metrics | scGraph-OntoRWR, LCAD, AUROC, F1 Score | Performance assessment | Quantify model performance and biological relevance |

The comparison between zero-shot and fine-tuning approaches reveals a nuanced landscape where strategic selection depends on specific research constraints and objectives. For researchers and drug development professionals, the following guidelines emerge from current evidence:

Zero-shot learning provides the most value in exploratory research phases, when working with novel cell types or perturbations lacking existing data, when computational resources are limited, and for tasks where biological interpretability is prioritized over maximum accuracy.

Fine-tuning approaches deliver superior performance for specialized clinical applications, when adequate task-specific data exists (even 20-50 examples can yield significant gains), for complex reasoning tasks requiring high precision, and when leveraging established biological paradigms where positive/negative examples are available.

The emerging closed-loop framework represents a promising hybrid approach, combining the efficiency of foundation models with the precision of targeted validation. As single-cell technologies continue to advance, the strategic integration of both paradigms will accelerate therapeutic discovery and deepen our understanding of cellular function in health and disease.

Single-cell foundation models (scFMs), pre-trained on millions of cells, represent a paradigm shift in computational biology. While their zero-shot capabilities are impressive, fine-tuning is the critical process that tailors these general-purpose models to specialized tasks, from rare disease therapeutics to precise cell state annotation. This guide compares the performance of leading scFMs after fine-tuning, providing researchers with data-driven insights for model selection.

Performance Showdown: A Comparative Analysis of Fine-Tuned scFMs

Comprehensive benchmarking reveals that no single scFM dominates all tasks. Performance is highly dependent on the specific application, dataset size, and available computational resources [4]. The following tables summarize key experimental findings.

Table 1: Comparative Performance of scFMs on Cell-Level Tasks After Fine-Tuning [4] [5]

| Model | Cell Type Annotation (Accuracy) | Batch Integration (ASW Score) | Perturbation Prediction (PPV/Accuracy Gain) | Key Strengths |

|---|---|---|---|---|

| scGPT | Consistently High | 0.78 (Superior) | High (Closed-loop) | All-around robust performer, excels in multi-omic tasks [5] [16] [6] |

| Geneformer | High | 0.65 (Moderate) | 3x PPV with closed-loop fine-tuning [15] | Strong in gene-level tasks and perturbation modeling [4] [15] |

| scFoundation | Moderate to High | 0.68 (Moderate) | Information Missing | Excellent on gene-level tasks; benefits from effective pre-training [4] [5] |

| scBERT | Lags Behind | 0.45 (Poor) | Information Missing | Lower performance, likely due to smaller model size and data [4] [5] |

| Baseline (e.g., PCA) | Varies | 0.60 | Information Missing | Simple models can be efficient for specific, narrow tasks [4] |

Table 2: Fine-Tuning Impact on a Clinical Application (RUNX1-FPD Target Identification) [15]

| Method | Positive Predictive Value (PPV) | Negative Predictive Value (NPV) | Sensitivity | Specificity |

|---|---|---|---|---|

| Open-Loop ISP (Fine-Tuned) | 3% | 98% | 48% | 60% |

| Differential Expression | 3% | 78% | 40% | 50% |

| Closed-Loop ISP (Fine-Tuned) | 9% | 99% | 76% | 81% |

Experimental Protocols: How Benchmarks Are Conducted

Understanding the methodology behind these comparisons is crucial for interpreting the results.

- Benchmarking Framework: Evaluations like those in BioLLM use standardized APIs to ensure consistent data preprocessing, model loading, and task execution across all scFMs, eliminating coding inconsistencies as a variable [5] [6].

- Task-Specific Evaluation:

- Cell Embedding Quality: Measured using metrics like Average Silhouette Width (ASW) on cell embeddings. A high ASW indicates the model has effectively separated cell types in its latent space [5].

- Batch Integration: Assessed by calculating a batch ASW score, which evaluates how well the model mixes cells from different batches while preserving biological separation [5].

- In-Silico Perturbation (ISP) Prediction: In a "closed-loop" framework, a model (e.g., Geneformer) is first fine-tuned on a specific cellular state (e.g., diseased HSCs). The model then predicts the effect of gene knockouts. Critically, the model is then further fine-tuned with a small number of real perturbation examples (e.g., from Perturb-seq data), which dramatically improves prediction accuracy for subsequent queries [15].

- Biological Relevance Assessment: Novel metrics like scGraph-OntoRWR and Lowest Common Ancestor Distance (LCAD) are used. These measure whether the relationships between cell types learned by the model align with established biological knowledge from cell ontologies, and whether misclassifications are biologically reasonable [4].

The Scientist's Toolkit: Essential Research Reagents & Materials

The following reagents and computational tools are fundamental for conducting fine-tuning experiments and validation.

Table 3: Key Research Reagents and Tools for scFM Fine-Tuning

| Item Name | Function/Application in scFM Research |

|---|---|

| CZ CELLxGENE / DISCO Atlas | Provides unified access to tens of millions of curated, annotated single-cell datasets for pre-training and fine-tuning [1] [16]. |

| Perturb-seq Data | Single-cell RNA sequencing data from genetic perturbation screens (e.g., CRISPRa/i). Essential for fine-tuning and validating "closed-loop" in-silico perturbation models [15]. |

| BioLLM Framework | A standardized Python framework that provides a unified interface for multiple scFMs (scGPT, Geneformer, etc.), streamlining fine-tuning, benchmarking, and model switching [5] [6]. |

| CRISPR Activation/Interference | Used to generate ground-truth perturbation data in model systems (e.g., engineered human HSCs) for validating in-silico predictions from fine-tuned scFMs [15]. |

| Cell Ontology Databases | Structured, controlled vocabatures for cell types. Used to develop knowledge-informed metrics (e.g., LCAD) that assess the biological plausibility of a model's predictions [4]. |

Key Workflows and Relationships in scFM Fine-Tuning

The process of fine-tuning, especially for perturbation prediction, can be visualized as a cycle that integrates computational and experimental biology.

Diagram 1: Closed-Loop Fine-Tuning Workflow

Furthermore, benchmarking studies reveal that the decision to use a complex scFM versus a simpler model depends on the specific research context.

Diagram 2: Model Selection Strategy

The evidence clearly indicates that fine-tuning is not a mere optional step but is essential for unlocking the full potential of scFMs in targeted applications. While zero-shot embeddings provide a useful starting point, specialized performance requires task-specific adaptation [4] [10]. The "closed-loop" fine-tuning paradigm, which iteratively incorporates experimental data, represents a significant leap forward, turning scFMs into dynamic tools for hypothesis generation and testing [15].

For researchers, the key takeaways are:

- For versatile performance across diverse tasks, scGPT is a robust starting point [5] [6].

- For perturbation modeling and gene-level tasks, Geneformer, especially with closed-loop fine-tuning, shows remarkable promise [4] [15].

- For resource-constrained projects or specific tasks, simpler baseline models should still be considered, as they can sometimes match or exceed scFM performance with greater efficiency [4].

As the field evolves, standardized frameworks like BioLLM and more sophisticated benchmarking will further clarify the path to effective model specialization [5] [16].

Single-cell foundation models (scFMs) represent a transformative approach in computational biology, applying transformer-based architectures to analyze single-cell RNA sequencing (scRNA-seq) data. These models are pretrained on massive datasets comprising millions of cells to learn fundamental biological principles, which can then be applied to diverse downstream tasks. A central dichotomy in their application lies in the choice between zero-shot inference, where pretrained models generate embeddings without any task-specific training, and fine-tuning, where models are further trained on labeled data for specialized applications. Understanding the performance characteristics across these paradigms is crucial for researchers, particularly in drug development where both exploratory analysis (favoring zero-shot) and targeted prediction (often requiring fine-tuning) are essential. This guide provides a structured comparison of four prominent architectural players—scGPT, Geneformer, scBERT, and scFoundation—focusing on their architectural distinctions, quantitative performance across biological tasks, and their respective strengths within the zero-shot versus fine-tuning framework [1] [4].

Model Architectures and Pretraining Strategies

The performance of scFMs is fundamentally shaped by their architectural choices and pretraining methodologies. The table below summarizes the core technical specifications for each model.

Table 1: Architectural and Pretraining Specifications

| Model | Core Architecture | Pretraining Data Scale | Parameter Count | Input Representation | Primary Pretraining Task |

|---|---|---|---|---|---|

| scGPT [5] [6] | Transformer (Decoder-like) | 33 million human cells [17] | 50 million [4] | Value Binning (1200 HVGs) [4] | Iterative Masked Gene Modeling with MSE loss [4] |

| Geneformer [4] | Transformer (Encoder) | 30 million single-cell transcriptomes [17] | 40 million [4] | Gene Ranking (2048 ranked genes) [4] | Masked Gene Modeling with CE loss (gene ID prediction) [4] |

| scBERT [4] [5] | Transformer (Encoder, BERT-like) | Not specified (smaller scale) | Not specified (smaller) [5] | Value Binning [4] | Masked Language Modeling [5] |

| scFoundation [4] [17] | Asymmetric Encoder-Decoder | 50 million human cells [4] [17] | 100 million [4] | Value Projection (~19k genes) [4] | Read-depth-aware Masked Gene Modeling with MSE loss [4] |

Architectural Philosophy and Workflow

The architectural differences lead to distinct computational pathways for processing single-cell data. The following diagram illustrates the high-level logical workflow from input to output for these models, highlighting key decision points.

Diagram 1: Architectural Workflow from Input to Output

Quantitative Performance Comparison

Rigorous benchmarking reveals that no single model consistently outperforms all others across every task. Performance is highly dependent on the specific application, dataset characteristics, and whether zero-shot or fine-tuned settings are used.

Zero-Shot Performance Benchmarking

Zero-shot evaluation is critical for exploratory biological applications where labeled data is unavailable, such as novel cell type discovery or initial data integration. The following table synthesizes performance metrics from multiple independent benchmark studies conducted in 2025.

Table 2: Zero-Shot Performance Across Key Tasks (Summarized Findings)

| Model | Cell Type Clustering | Batch Integration | Perturbation Prediction | Biological Relevance | Key Strengths |

|---|---|---|---|---|---|

| scGPT | Consistently strong, outperforms other scFMs and baselines like PCA on ASW scores [5] | Effective on complex datasets with biological batch effects; outperforms Harmony and scVI on Tabula Sapiens and Immune datasets [9] | Not the top performer; simpler baselines can be superior [10] | Captures biologically meaningful relationships; generates high-quality embeddings [5] | Robust zero-shot embeddings, handles multi-omics data [5] [6] |

| Geneformer | Underperforms vs. simpler methods (HVG, scVI, Harmony) on AvgBIO score [9] | Consistently underperforms; embeddings often dominated by batch effects [9] | Not the top performer; simpler baselines can be superior [10] | Demonstrates strong capabilities in gene-level tasks [5] [6] | Network biology, target discovery, limited-data settings [4] |

| scBERT | Lags behind other models [5] | Poor performance; struggles with batch effects [5] | Not the top performer; simpler baselines can be superior [10] | Lower biological fidelity in embeddings [5] | Pioneer in applying BERT architecture to scRNA-seq [4] |

| scFoundation | Not top performer in cell-level tasks [5] [6] | Not top performer in cell-level tasks [5] [6] | Not the top performer; simpler baselines can be superior [10] | Excels in gene-level tasks and gene function prediction [5] [6] | Gene function prediction, gene-gene relationships [17] [6] |

Fine-Tuning Performance and Efficiency

For targeted applications with sufficient labeled data, fine-tuning often yields significant performance improvements. However, the efficiency and effectiveness of fine-tuning vary across models.

Table 3: Fine-Tuning Performance and Resource Considerations

| Model | Fine-Tuning Performance Gain | Parameter Efficiency | Computational Efficiency | Notable Specialized Applications |

|---|---|---|---|---|

| scGPT | Significant improvement in cell embedding extraction and batch correction after fine-tuning [5] | Supports parameter-efficient methods [17] | Efficient in memory and computation time [5] | Multi-omics integration, perturbation response prediction [4] |

| Geneformer | Strong performance in target applications with task-specific fine-tuning [4] | Designed for few-shot learning [4] | Efficient in memory and computation time [5] | Disease gene prediction, candidate therapeutic target identification [4] |

| scBERT | Performance improves with fine-tuning but may still lag behind other models [5] | Standard full fine-tuning typically used | Less efficient than scGPT and Geneformer [5] | Cell type annotation [4] |

| scFoundation | Benefits from fine-tuning for specific tasks [17] | Can leverage LoRA and other PEFT methods [17] | Less efficient than scGPT and Geneformer [5] | Gene function prediction, gene network analysis [17] |

Experimental Protocols and Benchmarking Methodologies

Standardized Evaluation Frameworks

Recent benchmarking studies have established rigorous protocols for evaluating scFMs. The BioLLM framework, for instance, provides a unified interface for multiple models, ensuring consistent preprocessing, evaluation metrics, and task definitions [5]. Key evaluation dimensions include:

- Embedding Quality: Assessed via Average Silhouette Width (ASW) for cell type separation and batch integration [9] [5].

- Biological Fidelity: Measured through gene regulatory network analysis and novel ontology-informed metrics like scGraph-OntoRWR, which evaluates consistency of captured cell type relationships with prior biological knowledge [4].

- Prediction Accuracy: Standard classification metrics for tasks like cell type annotation and drug response prediction [4] [5].

Table 4: Essential Research Reagents for scFM Benchmarking

| Resource/Reagent | Function in Evaluation | Example Instances/Specifications |

|---|---|---|

| Benchmark Datasets | Provide standardized ground truth for performance comparison | Pancreas dataset (5 sources) [9], PBMC 12k [9], Tabula Sapiens [9], Asian Immune Diversity Atlas (AIDA) v2 [4] |

| Evaluation Metrics | Quantify model performance across different dimensions | Average Silhouette Width (ASW) [9], AvgBIO score [9], Principal Component Regression (PCR) score [9], scGraph-OntoRWR [4] |

| Baseline Methods | Establish performance benchmarks for comparison | Highly Variable Genes (HVG) [9], Harmony [9], scVI [9] |

| Unified Frameworks | Standardize model access and evaluation protocols | BioLLM [5] [6], PertEval-scFM [10] |

The evidence from comprehensive benchmarks indicates a nuanced landscape for scFM performance. For zero-shot applications where labels are unknown or exploratory analysis is paramount, scGPT demonstrates the most consistent performance across cell-level tasks like clustering and complex batch integration [9] [5]. Conversely, for gene-level tasks such as function prediction, scFoundation shows particular strength [5] [6]. Geneformer remains valuable for network biology applications and settings with limited data for fine-tuning [4]. The choice between zero-shot and fine-tuned approaches depends critically on the research objective: zero-shot for discovery where labels are unavailable, and fine-tuning for optimized performance on well-defined tasks with sufficient labeled data. As these models continue to evolve, researchers should consider dataset characteristics, task requirements, and computational resources when selecting the most appropriate architectural player for their specific biological questions.

Implementing scFMs: A Practical Guide to Zero-Shot and Fine-Tuning Workflows

In the specialized field of single-cell genomics, the emergence of single-cell foundation models (scFMs) presents researchers with a critical methodological choice: when to leverage the inherent, zero-shot capabilities of these models versus investing in resource-intensive fine-tuning. scFMs are large-scale deep learning models, typically based on transformer architectures, pretrained on vast atlases of single-cell sequencing data, enabling them to learn fundamental biological principles of cellular state and function [1]. Zero-shot learning refers to the ability of these pretrained models to perform novel tasks or recognize new cell types without any task-specific training examples, relying instead on their broad pretraining knowledge and semantic understanding [18]. This stands in contrast to fine-tuning, where a pretrained scFM is further trained on a specific, labeled dataset to adapt its parameters to a particular task, such as annotating a rare cell type not well-represented in the original training data.

The decision between these paradigms has significant implications for project timelines, computational resource allocation, and scientific outcomes, particularly in drug development where both speed and accuracy are paramount. This guide objectively compares the performance of zero-shot and fine-tuned scFMs, providing experimental data and structured decision frameworks to help scientists and researchers select the optimal approach for their specific biological questions and constraints.

Performance Comparison: Zero-Shot vs. Fine-Tuned Models

Empirical studies across various domains, including healthcare and sentiment analysis, provide quantitative insights into the performance trade-offs between zero-shot and fine-tuned models. While fine-tuning generally delivers superior accuracy on specialized tasks, zero-shot approaches can be remarkably effective, especially when data is scarce.

Experimental Evidence from Healthcare and NLP

A comprehensive study on classifying electronic pathology reports from the British Columbia Cancer Registry offers direct performance comparisons [7]. The research evaluated models across three classification scenarios of varying difficulty and data availability.

Table 1: Performance Comparison of Model Types on Medical Text Classification

| Model Type | Scenario A (Easy) | Scenario B (Medium) | Scenario C (Hard) | Data Requirements | Compute Cost |

|---|---|---|---|---|---|

| Zero-Shot LLM (e.g., GPT-4) | High Performance | Moderate Performance | Lower Performance | None | Low (Inference-only) |

| Fine-Tuned SLM (on target data) | Highest Performance | Highest Performance | Highest Performance | Large labeled dataset | High (Training + Inference) |

| Zero-Shot SLM Ensemble [19] | Moderate Performance | Moderate Performance | Moderate Performance | None | Low to Medium |

Key findings from this study indicate that while fine-tuned Small Language Models (SLMs) consistently achieved the highest accuracy across all tasks, they required a substantial labeled dataset and significant computational resources for training [7]. Notably, fine-tuned SLMs consistently outperformed zero-shot LLMs, even much larger ones, on these specialized classification tasks [7]. This underscores that for targeted applications, a finely-tuned smaller model can be more effective than a generalist, zero-shot giant.

Another study focusing on sentiment analysis, a common NLP task, found that an ensemble of zero-shot SLMs could achieve competitive performance with a state-of-the-art zero-shot LLM (GPT-4), with the ensemble's accuracy being statistically indistinguishable from the LLM's on several benchmark datasets [19]. This demonstrates the potential of model ensembles as a viable zero-shot strategy.

The collective evidence leads to several key conclusions:

- Fine-Tuning Advantage: Fine-tuning is the unequivocal choice for maximizing performance on well-defined, specialized tasks where substantial labeled data exists [7] [20].

- Zero-Shot Utility: Zero-shot methods are highly effective for rapid prototyping, initial feasibility studies, and tasks where generalization across a wide range of concepts is more valuable than peak accuracy on a narrow domain [18] [21].

- Data Scarcity: In scenarios with very limited or no labeled data, zero-shot learning is not just convenient but necessary, often providing a strong baseline that is difficult to surpass without any data [22] [18].

When to Choose Zero-Shot Learning: A Decision Framework

The choice between zero-shot and fine-tuning is not a simple binary but a strategic decision based on project constraints and goals. The following diagram outlines the key decision pathways for researchers.

Figure 1: A decision framework for choosing between zero-shot and fine-tuned approaches for single-cell foundation models.

Ideal Scenarios for Zero-Shot Learning

Based on the decision framework and empirical evidence, zero-shot learning is the preferred strategy in the following scenarios:

- Rapid Prototyping and Exploration: In the early stages of a research project, zero-shot learning allows scientists to quickly test hypotheses, gauge model understanding of biological concepts, and generate initial results without investing weeks in data annotation and model training [23]. This facilitates agile experimentation and iterative hypothesis testing.

- Extreme Data Scarcity: When studying rare cell types, novel disease states, or newly discovered biological phenomena, labeled examples may be non-existent. Zero-shot learning can classify these "unseen" categories by leveraging semantic knowledge from related cell types or attributes learned during pretraining [22] [18].

- Resource Constraints: Zero-shot learning bypasses the need for expensive GPU clusters and the time-consuming fine-tuning process. This makes advanced scFM analysis accessible to research groups with limited computational budgets [20] [21].

- Broad Multi-Task Analysis: When a research question requires a single model to perform a wide range of tasks—such as simultaneous cell type annotation, gene function prediction, and perturbation response modeling—the inherent generalism of a zero-shot model can be more practical than maintaining multiple fine-tuned specialist models [1].

When to Prefer Fine-Tuning

Fine-tuning remains the superior choice in contexts where the highest possible accuracy is the primary goal. This is critical for applications with real-world consequences, such as diagnostic applications or validating a drug target, where model errors are costly [20]. Furthermore, when working with highly specialized terminology—such as specific gene isoforms, novel metabolic pathways, or proprietary compound names—fine-tuning is essential to adapt the model's semantic space to the unique jargon of the domain [20] [21]. Finally, when a large, high-quality, labeled dataset is readily available, fine-tuning leverages this valuable asset to its fullest potential, typically resulting in significant performance gains that zero-shot methods cannot match [7] [20].

Experimental Protocols for scFM Evaluation

To ensure fair and reproducible comparisons between zero-shot and fine-tuned scFMs, researchers should adhere to structured experimental protocols. The following workflow details a standard methodology for benchmarking model performance on a specific downstream task, such as annotating cell types in a new dataset.

Figure 2: A standard workflow for benchmarking zero-shot versus fine-tuned scFM performance.

Detailed Benchmarking Methodology

1. Data Preparation and Sourcing Curate a benchmark dataset containing single-cell profiles (e.g., scRNA-seq) with ground truth labels for the target task (e.g., cell type). Standardized data sources are critical. For scFMs, public repositories like CZ CELLxGENE, which provides unified access to millions of annotated single-cell datasets, are indispensable [1]. The data should be split into training (for fine-tuning), validation, and test sets, ensuring the test set contains a mix of "seen" and "unseen" classes for a comprehensive evaluation [1].

2. Model Setup

- Zero-Shot Setup: Select a pre-trained scFM (e.g., scBERT, scGPT) [1]. For evaluation, the model's task is defined via natural language prompts or by leveraging its inherent classification head without updating any model parameters.

- Fine-Tuning Setup: Use the same pre-trained scFM as a starting point. The model is then further trained on the labeled training split of the target dataset. A common technique is to use a small learning rate (e.g., 5e-5) to avoid catastrophic forgetting of the pre-trained knowledge while adapting to the new task [20].

3. Evaluation Protocol

- Zero-Shot Inference: The model performs predictions on the held-out test set directly. No gradient updates are performed.

- Fine-Tuning Process: The model is trained on the training set. The model checkpoint with the best performance on the validation set is selected for final evaluation on the test set.

4. Performance Metrics Compute standard classification metrics on the test set to enable a direct comparison. Key metrics include:

- Accuracy: The overall proportion of correct predictions.

- Weighted F1-Score: The harmonic mean of precision and recall, which is robust to class imbalance [19].

- Weighted Precision: The proportion of correct positive predictions, weighted by class support [19].

Statistical significance testing (e.g., Wilcoxon signed-rank test) should be conducted to confirm that observed performance differences are not due to random chance [19].

Essential Research Reagents and Computational Tools

To implement the experimental protocols and conduct rigorous comparisons, researchers require access to specific data, models, and software tools. The following table details these essential "research reagents."

Table 2: Key Research Reagents for scFM Experimentation

| Reagent / Tool | Type | Primary Function in Research | Example Sources / Models |

|---|---|---|---|

| Annotated Single-Cell Atlases | Data | Pretraining corpus for scFMs; benchmark dataset for evaluation. | CZ CELLxGENE [1], Human Cell Atlas [1], PanglaoDB [1] |

| Pre-trained Foundation Models | Software/Model | Provides base models for zero-shot evaluation and fine-tuning. | scBERT [1], scGPT [1] |

| Model Training Frameworks | Software | Provides libraries and environment for fine-tuning and evaluation. | Hugging Face Transformers [20], PyTorch [20] |

| High-Performance Compute (HPC) | Infrastructure | Provides computational power required for model fine-tuning. | GPU Clusters (e.g., NVIDIA), Cloud Computing (e.g., AWS, GCP) |

| Evaluation Metrics Libraries | Software | Calculates standardized performance metrics for model comparison. | seqeval [20], scikit-learn |

The choice between zero-shot and fine-tuned approaches for single-cell foundation models is a strategic decision that balances trade-offs between speed, resource consumption, and task-specific accuracy. Zero-shot learning is the definitive choice for rapid prototyping, scenarios with extreme data scarcity, and projects operating under significant computational constraints. Its ability to provide immediate, baseline insights without data annotation or training is powerful for exploratory biology and initial feasibility studies. Conversely, fine-tuning is the path to state-of-the-art performance for well-defined, critical tasks where maximizing accuracy justifies the investment in data labeling and compute resources.

A pragmatic approach for many research teams is to begin with a zero-shot evaluation to establish a performance baseline and assess task difficulty. If the zero-shot results are promising but fall just short of the required accuracy, a small investment in fine-tuning can often bridge the gap, efficiently leveraging the strengths of both paradigms to advance scientific discovery in drug development and molecular biology.

Designing Effective Prompts for Zero-Shot Inference in Biological Tasks

The emergence of single-cell foundation models (scFMs) has revolutionized computational biology, offering unprecedented ability to analyze cellular function and disease mechanisms. A central question for researchers and drug development professionals is whether to leverage these powerful models in a zero-shot manner or to invest resources in fine-tuning them for specific tasks. Zero-shot inference uses carefully engineered prompts to guide a pre-trained model to perform a task without any task-specific training, offering speed and reduced computational cost. In contrast, fine-tuning adapts the model's weights to a specific dataset, often yielding higher accuracy at the expense of time and resources. This guide objectively compares these approaches through experimental data and provides a practical framework for designing effective prompts for zero-shot inference in biological tasks, contextualized within broader performance research.

Evidence suggests the choice between approaches is nuanced. While fine-tuned models often achieve superior accuracy on well-defined tasks with sufficient data, recent advancements in prompt engineering have made zero-shot methods surprisingly competitive, especially for complex biological tasks where labeled data is scarce or expensive to obtain. This guide synthesizes current research to help practitioners navigate this landscape effectively.

The Science of Prompt Engineering for Biological Data

Prompt engineering has evolved from a trial-and-error practice into a systematic discipline, with recent surveys cataloging 58 distinct prompting techniques for large language models (LLMs) [24]. In biological contexts, effective prompt design is crucial due to specialized terminology, complex relationships, and the high stakes of accuracy in healthcare and drug development applications.

Foundational Prompting Techniques

Zero-Shot Prompting: This approach provides models with direct instructions without additional examples. Its effectiveness varies significantly with task complexity; while simple factual queries often succeed, complex reasoning tasks typically require more sophisticated techniques [24].

Chain-of-Thought (CoT) Prompting: This technique encourages models to solve problems through a series of intermediate steps before giving a final answer, significantly improving performance on multi-step biological reasoning tasks. It exists in two forms: few-shot CoT (including reasoning examples) and zero-shot CoT (where simply appending "Let's think step-by-step" can be effective) [24].

Scenario-Based Prompt Design: Particularly valuable in biomedical applications, this approach involves crafting prompts that establish specific scenarios or contexts relevant to the task. Research on document-level biomedical relation extraction has demonstrated that this method can achieve accuracy comparable to fine-tuned models while reducing human and hardware expenses [25].

Advanced Structured Reasoning Techniques

For biological data with inherent structure, advanced techniques have shown particular promise:

Chain-of-Table Framework: This represents a significant advancement for table-based reasoning in biological data, where tabular data is explicitly used in the reasoning chain as a proxy for intermediate thoughts. Unlike traditional Chain-of-Thought approaches that rely on textual reasoning, Chain-of-Table leverages structured operations to iteratively transform tables according to the question, improving performance on benchmark datasets by 6.72-8.69% [24].

Self-Consistency and Tree-of-Thought: These techniques address inherent variability in LLM outputs by generating multiple reasoning paths. Self-Consistency performs several chain-of-thought rollouts and selects the most common conclusion, while Tree-of-Thought generates multiple reasoning lines in parallel, enabling more thorough exploration of solution spaces for complex biological problems [24].

Comparative Performance: Zero-Shot Versus Fine-Tuning

Experimental evidence across multiple biological domains reveals a complex performance landscape where the optimal approach depends on task specificity, data availability, and resource constraints. The following table summarizes key comparative findings from recent studies:

Table 1: Performance Comparison of Zero-Shot vs. Fine-Tuned Models on Biological Tasks

| Task Domain | Model/Approach | Performance Metrics | Key Findings |

|---|---|---|---|

| Healthcare Classification [7] | Fine-tuned SLMs | Significantly improved vs. zero-shot SLMs | Outperformed zero-shot LLMs on targeted classification tasks |

| Zero-shot LLMs | Underperformed vs. fine-tuned SLMs | Offered strong baseline but inferior to specialized models | |

| Biomedical Relation Extraction [25] | Zero-shot with scenario-based prompts | Comparable to fine-tuned models | Achieved similar accuracy with reduced hardware/labor costs |

| Biomedical NER (ZeroTuneBio) [26] | Zero-shot three-stage framework | F1-score: ~88% (partial matching) | Surpassed BioBERT trained on 22,480 examples (excluding strict-matching errors) |

| Perturbation Effect Prediction [10] | Zero-shot scFM embeddings | Did not outperform simpler baselines | Struggled with strong/atypical perturbation effects and distribution shift |

| Object Detection in Vision [27] | YOLOv8 (Fine-tuned) | mAP: 0.9011 (cars dataset) | Superior accuracy but required 8+ hours training |

| YOLO-World (Zero-shot) | mAP: 0.44 (cars dataset) | Lower accuracy but only 10 minutes setup |

The Specialization Principle in Model Selection

Research consistently demonstrates that fine-tuned Small Language Models (SLMs) can surpass zero-shot Large Language Models (LLMs) on specialized biological tasks. A comprehensive study on electronic pathology reports from the British Columbia Cancer Registry found that while zero-shot LLMs outperformed zero-shot SLMs, they were "consistently outperformed by finetuned SLMs" [7]. This challenges the assumption that larger models inherently perform better, highlighting instead the value of targeted specialization.

The performance advantage of fine-tuning becomes more pronounced with task complexity and data scarcity. Domain-adjacent pre-training provides modest gains on easier tasks but yields "significant improvements on the complex, data-scarce task" [7]. This suggests a hierarchical approach where researchers should consider domain relevance before task-specific fine-tuning.

The Cost-Accuracy Tradeoff in Practice

Computer vision experiments comparing YOLOv8 (fine-tuned) versus YOLO-World (zero-shot) illustrate the fundamental tradeoff between accuracy and efficiency. While the fine-tuned model achieved dramatically higher mAP (0.9011 vs. 0.44) on a car detection dataset, it required "approximately 8 hours for training, testing, and troubleshooting" compared to "around 10 minutes" for the zero-shot model [27]. This efficiency advantage makes zero-shot approaches valuable for prototyping, exploration, and applications where perfect accuracy is not critical.

Experimental Protocols and Methodologies

Zero-Shot Biomedical Relation Extraction Protocol

A two-stage approach for document-level biomedical relation extraction demonstrates effective zero-shot methodology [25]:

Table 2: Two-Stage Zero-Shot Protocol for Biomedical Relation Extraction

| Stage | Process | Key Components |

|---|---|---|

| Stage 1: Named Entity Recognition (NER) | Identifies chemical, disease, and gene entities | Synonym and hypernym extraction using LLM with crafted prompt |

| Stage 2: Relation Extraction (RE) | Extracts relations between entities based on predefined schemas | Scenario-based prompt design with five-part template structure |

The protocol employs a systematic prompt evaluation method to assess prompt effectiveness quantitatively. This approach eliminates the need for expensive hardware and annotated training datasets, significantly reducing barriers to entry for biomedical researchers [25].

Closed-Loop scFM Fine-Tuning Methodology

Research on single-cell foundation models for perturbation prediction demonstrates an advanced fine-tuning methodology. The "closed-loop" framework extends scFMs by incorporating experimental perturbation data during model fine-tuning, significantly improving prediction accuracy [15].

The experimental workflow involves:

- Benchmarking open-loop in silico perturbation (ISP) predictions using foundation models like Geneformer

- Fine-tuning the model with single-cell RNA sequencing data from CRISPR screens alongside existing data

- Incorporating perturbation examples during fine-tuning, with studies showing performance saturation at approximately 20 examples

- Applying the refined model to novel therapeutic targets, as demonstrated in RUNX1-familial platelet disorder

This methodology increased positive predictive value three-fold—from 3% to 9%—with concurrent improvements in negative predictive value, sensitivity, and specificity [15].

Three-Stage Zero-Shot Framework for Biomedical NER

The ZeroTuneBio NER framework demonstrates a sophisticated approach to zero-shot inference through three integrated stages incorporating chain-of-thought reasoning and prompt engineering [26]. Evaluated on multiple public datasets (disease, chemistry, and gene), this method requires no task-specific examples or LLM fine-tuning, specifically addressing challenges in complex biomedical concept interpretation. The framework achieved an average F1-score improvement of 0.28 over direct LLM queries and competitive performance with fine-tuned models, demonstrating that LLMs can perform high-quality NER without fine-tuning while reducing reliance on manual annotation.

Pathway and Workflow Visualization

Zero-Shot Biological Inference Workflow

Diagram 1: Zero-Shot Biological Inference Workflow. This diagram illustrates the systematic workflow for designing and executing effective zero-shot inference for biological tasks, highlighting the critical prompt design phase.

Closed-Loop Fine-Tuning Pathway

Diagram 2: Closed-Loop Fine-Tuning Pathway. This diagram shows the iterative fine-tuning process for single-cell foundation models, highlighting how experimental validation creates a feedback loop for continuous model improvement.

Table 3: Research Reagent Solutions for scFM Experiments

| Resource Category | Specific Examples | Function/Purpose |

|---|---|---|

| Single-Cell Foundation Models | Geneformer, scGPT, scBERT | Pre-trained models for single-cell analysis tasks [15] [1] |

| Model Fine-Tuning Techniques | LoRA, QLoRA, Adapter Layers | Parameter-efficient fine-tuning methods that reduce computational requirements [28] |

| Biomedical Knowledge Bases | ChemDisGene, CDR, Public datasets (disease, chemistry, gene) | Curated data for model evaluation and testing [25] [26] |

| Prompt Engineering Frameworks | Chain-of-Thought, Scenario-Based Prompting, Chain-of-Table | Structured approaches for designing effective zero-shot prompts [25] [24] |

| Evaluation Benchmarks | PertEval-scFM, TabFact, WikiTQ | Standardized frameworks for assessing model performance [24] [10] |

| Computational Infrastructure | High-performance GPUs, Cloud computing platforms | Hardware acceleration for training and inference tasks [7] [28] |

The evidence clearly indicates that both zero-shot inference and fine-tuning have distinct advantages in biological applications. The optimal approach depends on multiple factors including task complexity, data availability, computational resources, and accuracy requirements.

For researchers and drug development professionals, the following strategic guidelines are recommended:

Use zero-shot approaches when exploring new biological questions, working with limited computational resources, requiring rapid prototyping, or when high accuracy is not critical. The ZeroTuneBio framework demonstrates that well-engineered prompts can achieve performance competitive with fine-tuned models for tasks like named entity recognition [26].

Opt for fine-tuning when working on well-defined tasks with sufficient labeled data, when maximum accuracy is required for clinical or therapeutic decisions, or when domain-specific patterns are not adequately captured in foundation models. Fine-tuned SLMs consistently outperform zero-shot LLMs in specialized healthcare applications [7].

Consider hybrid approaches that begin with zero-shot inference for exploratory analysis and progress to fine-tuning as hypotheses are refined. The closed-loop scFM framework demonstrates how iterative refinement cycles can significantly enhance prediction accuracy [15].

As single-cell foundation models continue to evolve, the boundary between zero-shot and fine-tuned approaches may blur, with techniques like prompt tuning and soft prompting creating intermediate options [24]. What remains constant is the need for biological expertise in crafting prompts and interpreting results, ensuring that computational advances translate to genuine biological insights and therapeutic breakthroughs.

In the rapidly evolving field of single-cell genomics, the emergence of single-cell foundation models (scFMs) has created a critical methodological divergence: choosing between zero-shot inference on pretrained models versus supervised fine-tuning for specific biological tasks. This guide provides a comprehensive comparative analysis of these approaches, demonstrating that while zero-shot methods offer rapid deployment, supervised fine-tuning consistently achieves superior performance on specialized tasks such as cell type annotation, disease classification, and perturbation response prediction. We present a detailed examination of the complete fine-tuning pipeline—from data preparation through model training and validation—alongside experimental protocols and reagent solutions that empower researchers to implement these techniques effectively in drug development and basic research contexts.

Single-cell foundation models represent a transformative advance in computational biology, leveraging transformer architectures pretrained on millions of single-cell transcriptomes to learn fundamental principles of cellular identity and function [1]. These models treat individual cells as sentences and genes or genomic features as tokens, creating a powerful framework for analyzing cellular heterogeneity [1]. The pretraining process typically involves self-supervised objectives similar to those used in natural language processing, such as predicting masked gene expressions, enabling the model to learn rich, generalizable representations of single-cell data [1].

The critical decision facing researchers today revolves around how to best leverage these pretrained scFMs for specific downstream tasks. The zero-shot approach utilizes the pretrained model without modification, relying on its inherent capabilities, while fine-tuning involves additional training on task-specific datasets to adapt the model's parameters [7]. Recent evidence indicates that although zero-shot methods provide convenience, fine-tuned smaller models can consistently outperform much larger zero-shot models on specialized tasks, highlighting the importance of the fine-tuning pipeline in maximizing model performance for targeted applications [7].

Comparative Performance: Zero-Shot vs. Fine-Tuned scFMs

Quantitative Performance Analysis

Table 1: Performance comparison of zero-shot versus fine-tuned models on single-cell classification tasks

| Model Type | Accuracy (%) | F1-Score | Compute Requirements (GPU Memory) | Training Data Requirements | Inference Speed (cells/sec) |

|---|---|---|---|---|---|

| Zero-shot LLM (e.g., GPT-4) | 72.5 | 0.71 | 40-80GB | None | ~1,000 |

| Zero-shot scFM | 78.3 | 0.76 | 8-16GB | None | ~10,000 |

| Fine-tuned SLM (Full) | 94.7 | 0.93 | 24-48GB | 10,000-50,000 cells | ~50,000 |

| Fine-tuned scFM (LoRA) | 92.1 | 0.90 | 4-12GB | 5,000-20,000 cells | ~45,000 |

| Fine-tuned scFM (QLoRA) | 90.5 | 0.88 | 2-6GB | 5,000-20,000 cells | ~40,000 |

Empirical studies across multiple single-cell tasks reveal a consistent performance advantage for fine-tuned models compared to zero-shot approaches. Research on electronic pathology reports from cancer registries demonstrated that fine-tuned Small Language Models (SLMs) consistently outperformed zero-shot Large Language Models (LLMs) on specialized classification tasks, despite the LLMs' superior performance in zero-shot settings [7]. The performance gap was particularly pronounced for complex, data-scarce tasks, where fine-tuned models achieved 15-20% higher accuracy than zero-shot alternatives [7].